This is the official PyTorch implementation of our ICLR 2022 paper DAB-DETR.

Authors: Shilong Liu, Feng Li, Hao Zhang, Xiao Yang, Xianbiao Qi, Hang Su, Jun Zhu, Lei Zhang

[2022/4/14] We release the .pptx file of our comparison figure of DETR-like models for those who want to draw model architecture figures in their papers.

[2022/4/12] We fix a bug in `datasets/coco_eval.py`: the parameter `useCats` of `CocoEvaluator` should be `True` by default.

[2022/4/9] Our code is available!

[2022/3/9] We build a repo, Awesome Detection Transformer, that collects papers on transformers for detection and segmentation. Check it out!

[2022/3/8] Our new work DINO sets a new record of 63.3 AP on the MS-COCO leaderboard.

[2022/3/8] Our new work DN-DETR has been accepted to CVPR 2022!

[2022/1/21] Our work has been accepted to ICLR 2022.

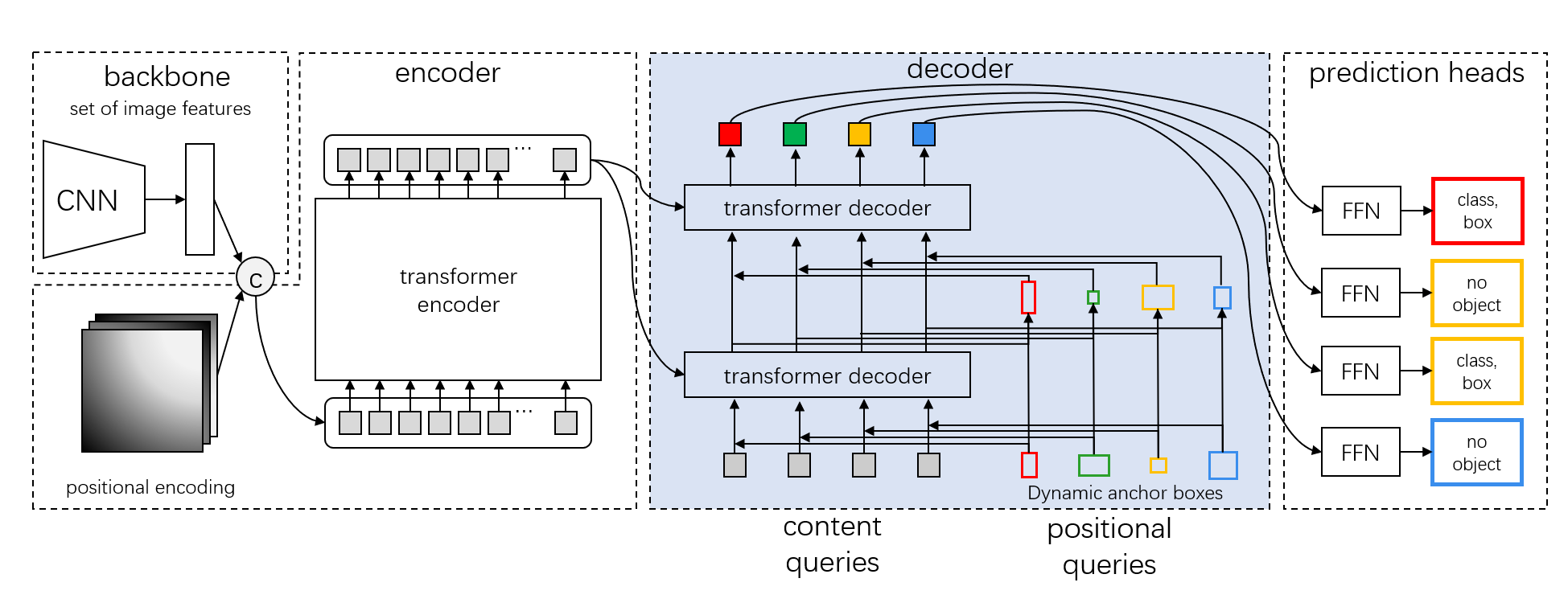

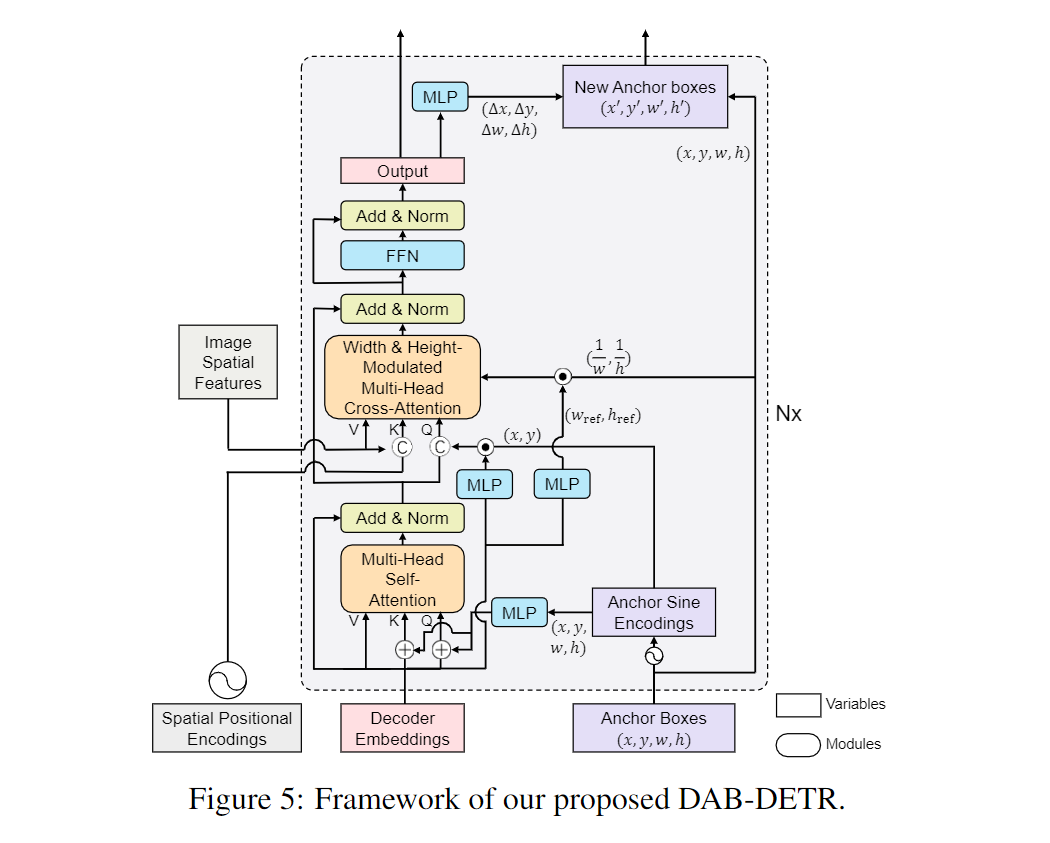

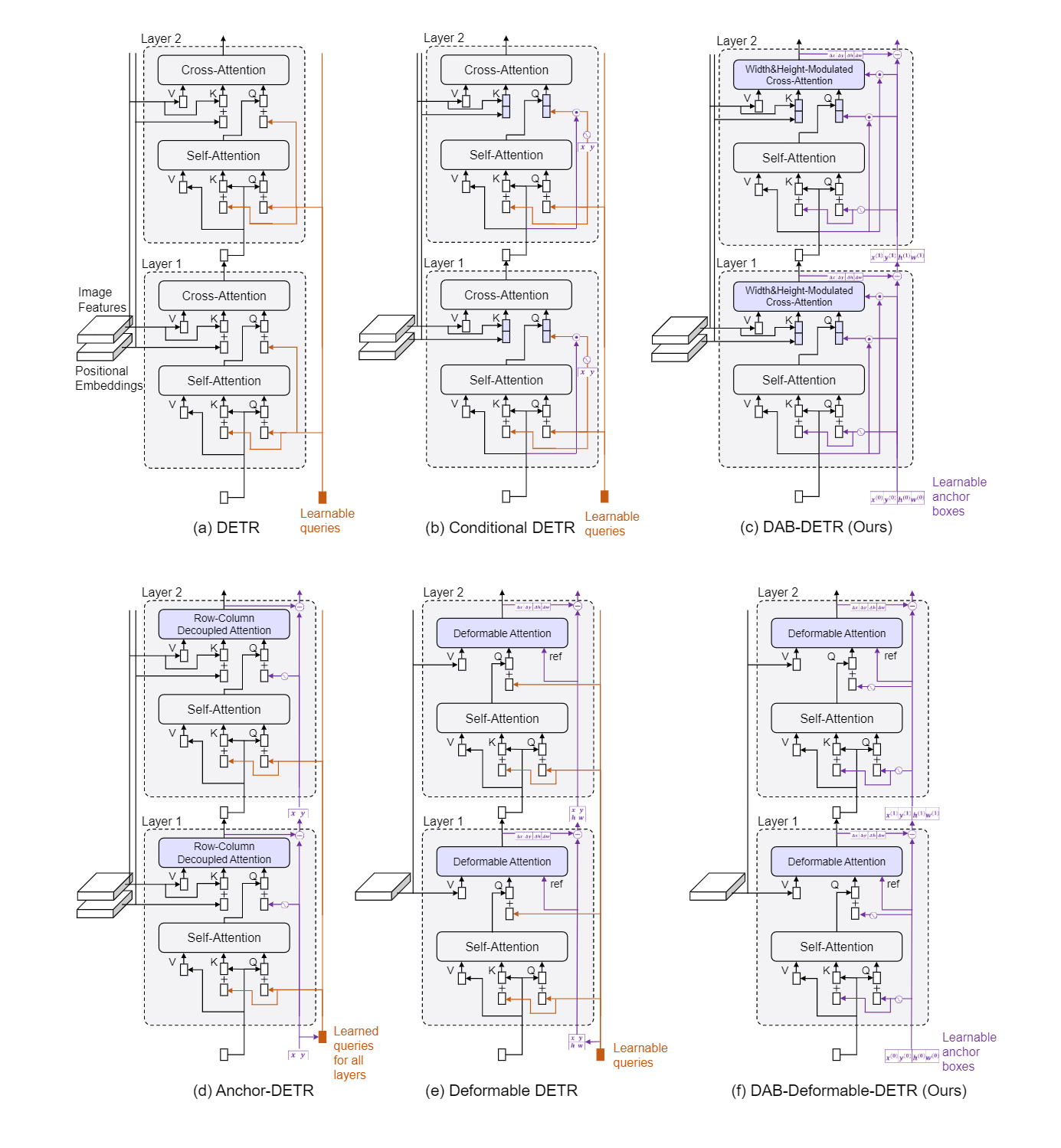

We present in this paper a novel query formulation using dynamic anchor boxes for DETR (DEtection TRansformer) and offer a deeper understanding of the role of queries in DETR. This new formulation directly uses box coordinates as queries in Transformer decoders and dynamically updates them layer by layer. Using box coordinates not only helps use explicit positional priors to improve the query-to-feature similarity and eliminate the slow training convergence issue in DETR, but also allows us to modulate the positional attention map using the box width and height information. Such a design makes it clear that queries in DETR can be implemented as performing soft ROI pooling layer by layer in a cascade manner. As a result, it achieves the best performance on the MS-COCO benchmark among DETR-like detection models under the same setting, e.g., 45.7% AP using ResNet50-DC5 as the backbone trained for 50 epochs. We also conducted extensive experiments to confirm our analysis and verify the effectiveness of our methods.
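To make the formulation above more concrete, here is a minimal pure-Python sketch of two of its ingredients: the layer-by-layer anchor update and the width/height-modulated positional attention. It is an illustration, not this repository's code. It assumes a Deformable-DETR-style refinement (each decoder layer predicts an offset in inverse-sigmoid space) and DETR-style sinusoidal encodings of normalized coordinates; the reference width and height `ref_wh`, which the model would predict from the content query, are simply passed in. All function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Anchor = Tuple[float, float, float, float]  # (x, y, w, h), normalized to [0, 1]


def inverse_sigmoid(p: float, eps: float = 1e-5) -> float:
    """Logit of p, clamped so that p = 0 or p = 1 stays finite."""
    p = min(max(p, eps), 1.0 - eps)
    return math.log(p / (1.0 - p))


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def refine_anchor(anchor: Anchor, delta: Sequence[float]) -> Anchor:
    """One decoder layer's update: add predicted offsets in logit space, squash back to [0, 1]."""
    x, y, w, h = (sigmoid(inverse_sigmoid(a) + d) for a, d in zip(anchor, delta))
    return (x, y, w, h)


def sine_embed(coord: float, dim: int = 64, temperature: float = 10000.0) -> List[float]:
    """DETR-style sinusoidal encoding of one normalized coordinate (dim must be even)."""
    angle = coord * 2.0 * math.pi
    embed: List[float] = []
    for i in range(dim // 2):
        freq = temperature ** (2.0 * i / dim)
        embed.extend((math.sin(angle / freq), math.cos(angle / freq)))
    return embed


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(u * v for u, v in zip(a, b))


def modulated_pos_logit(anchor: Anchor, key_xy: Tuple[float, float],
                        ref_wh: Tuple[float, float], dim: int = 64) -> float:
    """Positional attention logit between one anchor query and one key position.

    The x term is scaled by w_ref / w and the y term by h_ref / h, so a wider
    (taller) anchor gives a flatter attention map along x (y).
    """
    xa, ya, w, h = anchor
    xk, yk = key_xy
    w_ref, h_ref = ref_wh
    x_term = dot(sine_embed(xk, dim), sine_embed(xa, dim)) * w_ref / w
    y_term = dot(sine_embed(yk, dim), sine_embed(ya, dim)) * h_ref / h
    return (x_term + y_term) / math.sqrt(2 * dim)


if __name__ == "__main__":
    anchor = (0.5, 0.5, 0.2, 0.3)
    anchor = refine_anchor(anchor, (0.1, -0.1, 0.0, 0.2))  # one layer-wise update
    print("refined anchor:", tuple(round(v, 3) for v in anchor))
    for xk in (0.5, 0.6, 0.9):
        print(xk, round(modulated_pos_logit(anchor, (xk, anchor[1]), (0.2, 0.3)), 3))
```

Because `sin(a)sin(b) + cos(a)cos(b) = cos(a - b)`, each dot product is largest when the key position coincides with the anchor center, and dividing by the anchor's `w` or `h` shrinks the logits of larger boxes, so after a softmax they spread attention over a wider region.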

We provide our models with an R50 backbone, including both DAB-DETR and DAB-Deformable-DETR (see Appendix C of our paper for more details).

| | name | backbone | box AP | Log/Config/Checkpoint | Where in Our Paper |
|---|---|---|---|---|---|
| 0 | DAB-DETR-R50 | R50 | 42.2 | Google Drive / Tsinghua Cloud | Table 2 |
| 1 | DAB-DETR-R50(3 pat)<sup>1</sup> | R50 | 42.6 | Google Drive / Tsinghua Cloud | Table 2 |
| 2 | DAB-DETR-R50-DC5 | R50 | 44.5 | Google Drive / Tsinghua Cloud | Table 2 |
| 3 | DAB-DETR-R50-DC5-fixxy<sup>2</sup> | R50 | 44.7 | Google Drive / Tsinghua Cloud | Table 8, Appendix H |
| 4 | DAB-DETR-R50-DC5(3 pat) | R50 | 45.7 | Google Drive / Tsinghua Cloud | Table 2 |
| 5 | DAB-Deformable-DETR (Deformable Encoder Only)<sup>3</sup> | R50 | 46.9 | | Baseline for DN-DETR |
| 6 | DAB-Deformable-DETR-R50<sup>4</sup> | R50 | 48.1 | Google Drive / Tsinghua Cloud | Extended results for Table 5, Appendix C |

Notes:

- 1: The models marked (3 pat) are trained with multiple pattern embeddings (refer to Anchor DETR or our paper for more details).

- 2: The term "fixxy" means we randomly initialize the anchors and do not update their parameters during training (see Appendix H of our paper for more details).

- 3: DAB-Deformable-DETR (Deformable Encoder Only) is a multi-scale version of our DAB-DETR. See DN-DETR for more details.

- 4: The result here is better than the number in our paper, as we use different loss coefficients during training. Refer to our config file for more details.
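The two training variants in notes 1 and 2 can be sketched as follows. This is a minimal illustration, not the repository's code: it assumes the Anchor DETR convention in which each of the `num_patterns` pattern embeddings is shared by all anchors (so the decoder receives `num_patterns × num_anchors` queries), and that "fixxy" samples anchor centers uniformly in [0, 1] once and then keeps them fixed. `Query`, `expand_with_patterns`, and `random_fixed_xy` are hypothetical names, and the default width/height is an arbitrary placeholder.

```python
import random
from dataclasses import dataclass
from typing import List, Tuple

Anchor = Tuple[float, float, float, float]  # (x, y, w, h), normalized to [0, 1]


@dataclass(frozen=True)
class Query:
    pattern_id: int  # index of the pattern embedding that supplies the content part
    anchor: Anchor   # positional part of the query


def expand_with_patterns(anchors: List[Anchor], num_patterns: int) -> List[Query]:
    """Share each pattern embedding across all anchors (Anchor-DETR-style, assumed)."""
    return [Query(p, a) for p in range(num_patterns) for a in anchors]


def random_fixed_xy(num_anchors: int, wh: Tuple[float, float] = (0.1, 0.1),
                    seed: int = 0) -> List[Anchor]:
    """Hypothetical 'fixxy'-style init: centers drawn uniformly once, never updated.

    The tuples are immutable here; in a real model the (x, y) parameters would
    instead be excluded from gradient updates. `wh` is a placeholder value.
    """
    rng = random.Random(seed)
    return [(rng.random(), rng.random(), *wh) for _ in range(num_anchors)]


if __name__ == "__main__":
    anchors = random_fixed_xy(300)
    queries = expand_with_patterns(anchors, num_patterns=3)
    print(len(queries), "queries")
```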

We use the great DETR project as our codebase, so DAB-DETR needs no extra dependencies. For DAB-Deformable-DETR, you need to compile the deformable attention operator manually.

We tested our models with Python 3.7.3, PyTorch 1.9.0, and CUDA 11.1. Other versions may work as well.
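To compare your setup with the versions above, a small script like the following prints what is installed next to the tested versions. It is a convenience sketch, not part of the repository; it only assumes that PyTorch, if present, is importable as `torch`.

```python
import importlib.util
import platform
from typing import Dict, Optional

# Versions listed in this README as tested.
TESTED = {"python": "3.7.3", "torch": "1.9.0", "cuda": "11.1"}


def environment_report() -> Dict[str, Optional[str]]:
    """Collect local Python / PyTorch / CUDA versions; None means not found."""
    report: Dict[str, Optional[str]] = {
        "python": platform.python_version(), "torch": None, "cuda": None}
    if importlib.util.find_spec("torch") is not None:
        import torch
        report["torch"] = torch.__version__
        report["cuda"] = torch.version.cuda  # None for CPU-only builds
    return report


if __name__ == "__main__":
    for name, found in environment_report().items():
        print(f"{name}: found {found}, tested with {TESTED[name]}")
```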

- Clone this repo

```sh
git clone https://github.com/IDEA-opensource/DAB-DETR.git
cd DAB-DETR
```

- Install PyTorch and torchvision

Follow the instructions at https://pytorch.org/get-started/locally/.

```sh
# an example:
conda install -c pytorch pytorch torchvision
```

- Install other needed packages

```sh
pip install -r requirements.txt
```

- Compile the CUDA operators

```sh
cd models/dab_deformable_detr/ops
python setup.py build install
# unit test (all checks should print True)
python test.py
cd ../../..
```

Please download the COCO 2017 dataset and organize it as follows:

```
COCODIR/
  ├── train2017/
  ├── val2017/
  └── annotations/
        ├── instances_train2017.json
        └── instances_val2017.json
```
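Before launching training, a quick check like the one below can catch a misplaced folder. It is a hypothetical helper (not part of the repository) that only tests for the paths listed in the COCO directory tree.

```python
from pathlib import Path
from typing import List

# Layout expected under COCODIR.
EXPECTED = [
    "train2017",
    "val2017",
    "annotations/instances_train2017.json",
    "annotations/instances_val2017.json",
]


def missing_coco_entries(coco_dir: str) -> List[str]:
    """Return the expected entries that do not exist under coco_dir."""
    root = Path(coco_dir)
    return [rel for rel in EXPECTED if not (root / rel).exists()]


if __name__ == "__main__":
    import sys
    missing = missing_coco_entries(sys.argv[1] if len(sys.argv) > 1 else ".")
    print("layout OK" if not missing else f"missing: {missing}")
```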

We use the standard DAB-DETR-R50 and DAB-Deformable-DETR-R50 as examples for training and evaluation.

Download our DAB-DETR-R50 checkpoint from this link and run the command below. You should get a final AP of about 42.2.

For DAB-Deformable-DETR (download here), the expected final AP is 48.1.

```sh
# replace /path/to/your/COCODIR with your COCO path
# and /path/to/our/checkpoint with your checkpoint path

# for dab_detr: 42.2 AP
python main.py -m dab_detr \
  --output_dir logs/DABDETR/R50 \
  --batch_size 1 \
  --coco_path /path/to/your/COCODIR \
  --resume /path/to/our/checkpoint \
  --eval

# for dab_deformable_detr: 48.1 AP
python main.py -m dab_deformable_detr \
  --output_dir logs/dab_deformable_detr/R50 \
  --batch_size 2 \
  --coco_path /path/to/your/COCODIR \
  --resume /path/to/our/checkpoint \
  --transformer_activation relu \
  --eval
```

Similarly, you can train our model in a single process:

```sh
# for dab_detr
# replace /path/to/your/COCODIR with your COCO path
python main.py -m dab_detr \
  --output_dir logs/DABDETR/R50 \
  --batch_size 1 \
  --epochs 50 \
  --lr_drop 40 \
  --coco_path /path/to/your/COCODIR
```

However, as training is time-consuming, we suggest training the model on multiple devices.
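The `--epochs 50 --lr_drop 40` pair describes a step learning-rate schedule. Assuming the DETR-style behavior this codebase builds on (a `StepLR` that multiplies the rate by `gamma` every `lr_drop` epochs, with `gamma = 0.1` being PyTorch's default and an assumption here), the rate per epoch can be sketched as below. The base rate `1e-4` is also an assumption (DETR's default); check `main.py` for the actual value.

```python
def step_lr(base_lr: float, epoch: int, lr_drop: int, gamma: float = 0.1) -> float:
    """Learning rate for a 0-indexed epoch under an assumed step schedule.

    The rate is multiplied by `gamma` once every `lr_drop` epochs.
    """
    return base_lr * gamma ** (epoch // lr_drop)


if __name__ == "__main__":
    base_lr = 1e-4  # assumed base learning rate (DETR's default)
    for epoch in (0, 39, 40, 49):
        print(f"epoch {epoch:2d}: lr = {step_lr(base_lr, epoch, lr_drop=40):.1e}")
```

With 50 epochs and `lr_drop 40`, the rate is decayed exactly once, for the last 10 epochs.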

If you plan to train the models on a cluster with Slurm, here is an example command for training:

```sh
# for dab_detr: 42.2 AP
python run_with_submitit.py \
  --timeout 3000 \
  --job_name DABDETR \
  --coco_path /path/to/your/COCODIR \
  -m dab_detr \
  --job_dir logs/DABDETR/R50_%j \
  --batch_size 2 \
  --ngpus 8 \
  --nodes 1 \
  --epochs 50 \
  --lr_drop 40

# for dab_deformable_detr: 48.1 AP
python run_with_submitit.py \
  --timeout 3000 \
  --job_name dab_deformable_detr \
  --coco_path /path/to/your/COCODIR \
  -m dab_deformable_detr \
  --transformer_activation relu \
  --job_dir logs/dab_deformable_detr/R50_%j \
  --batch_size 2 \
  --ngpus 8 \
  --nodes 1 \
  --epochs 50 \
  --lr_drop 40
```

The final AP should be similar to ours (42.2 for DAB-DETR and 48.1 for DAB-Deformable-DETR). Our configs and logs (see the model zoo) can also be used as references.
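To compare a finished run with the numbers above, you can read the final box AP from the run's log. This sketch assumes the DETR-style `log.txt` written to the output directory: one JSON object per line, with the COCOeval summary stored under `test_coco_eval_bbox` and its first entry being AP@[.5:.95] as a fraction. Check a log from the model zoo before relying on these key names; `final_box_ap` is a hypothetical helper.

```python
import json
from typing import Iterable, Optional, Tuple


def final_box_ap(lines: Iterable[str]) -> Optional[Tuple[int, float]]:
    """Return (epoch, box AP in %) from the last record carrying COCO bbox stats.

    Assumes one JSON object per line, with stats under "test_coco_eval_bbox"
    (element 0 = AP@[.5:.95], stored as a fraction). Returns None if absent.
    """
    result = None
    for line in lines:
        line = line.strip()
        if not line:
            continue
        record = json.loads(line)
        stats = record.get("test_coco_eval_bbox")
        if stats:
            result = (record.get("epoch"), 100.0 * stats[0])
    return result


def final_box_ap_from_file(path: str) -> Optional[Tuple[int, float]]:
    with open(path) as f:
        return final_box_ap(f)


if __name__ == "__main__":
    import sys
    print(final_box_ap_from_file(sys.argv[1]))  # e.g. logs/DABDETR/R50/log.txt
```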

Notes:

- The results are sensitive to the batch size. We use 16 (2 images per GPU × 8 GPUs) by default.
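Since the commands pass `--batch_size 2` together with 8 GPUs to reach the default of 16, `--batch_size` appears to be the per-GPU value. Under that assumption, a quick sanity check of the total batch size for a planned launch might look like this (hypothetical helper, not part of the repository):

```python
def effective_batch_size(per_gpu: int, gpus_per_node: int, nodes: int = 1) -> int:
    """Total images per optimizer step across all processes."""
    return per_gpu * gpus_per_node * nodes


DEFAULT_TOTAL = 16  # 2 images per GPU x 8 GPUs, as stated in the note

if __name__ == "__main__":
    total = effective_batch_size(per_gpu=2, gpus_per_node=8, nodes=1)
    if total != DEFAULT_TOTAL:
        print(f"warning: total batch size {total} differs from the default {DEFAULT_TOTAL}")
    else:
        print("matches the default total batch size")
```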

Or run with multiple processes on a single node:

```sh
# for dab_detr: 42.2 AP
python -m torch.distributed.launch --nproc_per_node=8 \
  main.py -m dab_detr \
  --output_dir logs/DABDETR/R50 \
  --batch_size 2 \
  --epochs 50 \
  --lr_drop 40 \
  --coco_path /path/to/your/COCODIR

# for dab_deformable_detr: 48.1 AP
python -m torch.distributed.launch --nproc_per_node=8 \
  main.py -m dab_deformable_detr \
  --output_dir logs/dab_deformable_detr/R50 \
  --batch_size 2 \
  --epochs 50 \
  --lr_drop 40 \
  --transformer_activation relu \
  --coco_path /path/to/your/COCODIR
```

The source file can be found here.

DINO: DETR with Improved DeNoising Anchor Boxes for End-to-End Object Detection.

Hao Zhang*, Feng Li*, Shilong Liu*, Lei Zhang, Hang Su, Jun Zhu, Lionel M. Ni, Heung-Yeung Shum

arXiv 2022.

[paper] [code]

DN-DETR: Accelerate DETR Training by Introducing Query DeNoising.

Feng Li*, Hao Zhang*, Shilong Liu, Jian Guo, Lionel M. Ni, Lei Zhang.

IEEE Conference on Computer Vision and Pattern Recognition (CVPR) 2022.

[paper] [code]

DAB-DETR is released under the Apache 2.0 license. Please see the LICENSE file for more information.

Copyright (c) IDEA. All rights reserved.

Licensed under the Apache License, Version 2.0 (the "License"); you may not use these files except in compliance with the License. You may obtain a copy of the License at http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the License for the specific language governing permissions and limitations under the License.

```bibtex
@inproceedings{
liu2022dabdetr,
title={{DAB}-{DETR}: Dynamic Anchor Boxes are Better Queries for {DETR}},
author={Shilong Liu and Feng Li and Hao Zhang and Xiao Yang and Xianbiao Qi and Hang Su and Jun Zhu and Lei Zhang},
booktitle={International Conference on Learning Representations},
year={2022},
url={https://openreview.net/forum?id=oMI9PjOb9Jl}
}
```