This project combines YOLOv2 (reference) and Seq-NMS (reference) to realise real-time video detection. [Tutorial developed for Ubuntu]

The command lines shown under each step of this README are meant to be run in an Ubuntu terminal.

1. Open a terminal.

2. Create and activate a virtual environment with Python 2.7:

conda create --name EnvExample python=2.7

conda activate EnvExample
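Before continuing, it can help to confirm that the activated environment really provides Python 2.7. A minimal sketch, assuming a bash shell; `require_python` and the optional `PYTHON` override are hypothetical helpers, not part of this repository:

```bash
# Hypothetical helper (not part of seq_nms_yolo): succeed only if the
# interpreter named by $PYTHON (default: "python") has the wanted major.minor.
require_python() {
  local want="$1" py="${PYTHON:-python}" have
  # This one-liner runs unchanged under both Python 2 and Python 3.
  have="$("$py" -c 'import sys; print("%d.%d" % sys.version_info[:2])')" || return 1
  if [ "$have" = "$want" ]; then
    echo "$py is Python $have"
  else
    echo "expected Python $want, but $py is Python $have" >&2
    return 1
  fi
}
```

After conda activate EnvExample, running `require_python 2.7` should print `python is Python 2.7`; any other version makes it return a non-zero status.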

3. Clone the GitHub repository into the folder where you want the project:

git clone https://github.com/carlosjimenezmwb/seq_nms_yolo.git

4. Go into the project folder:

cd seq_nms_yolo

5. Build the project using the command:

make

6. Download the YOLOv2 weights (yolo.weights) and the tiny YOLOv2 weights (yolov2-tiny.weights):

wget https://pjreddie.com/media/files/yolo.weights

wget https://pjreddie.com/media/files/yolov2-tiny.weights
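The weights files are large, so re-running this step after a partial setup can waste time. A minimal sketch, assuming bash and GNU wget, that downloads each file only when it is not already present; `fetch_weights` is a hypothetical helper (plain wget's `-nc`/`--no-clobber` option gives similar skip-if-present behaviour):

```bash
# Hypothetical helper: download each URL into the current directory unless a
# non-empty file with the same name already exists there.
fetch_weights() {
  local url file
  for url in "$@"; do
    file="${url##*/}"                 # last path component, e.g. yolo.weights
    if [ -s "$file" ]; then
      echo "skipping $file (already present)"
    else
      # -O overwrites an empty leftover file instead of creating "$file.1".
      wget -O "$file" "$url" || return 1
    fi
  done
}
```

Usage, from the project root: `fetch_weights https://pjreddie.com/media/files/yolo.weights https://pjreddie.com/media/files/yolov2-tiny.weights`. An interrupted download leaves a non-empty partial file that this check would skip, so delete such a file before re-running.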

7. You must have the following libraries installed (with the indicated versions, where given):

- cv2

  pip install opencv-python==4.2.0.32

- matplotlib

  pip install matplotlib

- scipy

  pip install scipy

- pillow

  conda install -c anaconda pillow

- tensorflow

  conda install tensorflow=1.15

- tf_object_detection

  conda install -c conda-forge tf_object_detection
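Because the package names above differ from the names you import (opencv-python provides `cv2`, pillow provides `PIL`), a quick import check can confirm the installation. A minimal sketch, assuming bash and assuming that tf_object_detection installs a module named `object_detection` (not verified here); `check_modules` is a hypothetical helper:

```bash
# Hypothetical helper: try to import each named module with $PYTHON
# (default: "python") and report the ones that fail.
check_modules() {
  local py="${PYTHON:-python}" mod missing=0
  for mod in "$@"; do
    if "$py" -c "import $mod" >/dev/null 2>&1; then
      echo "ok      $mod"
    else
      echo "MISSING $mod"
      missing=$((missing + 1))
    fi
  done
  # Succeed (status 0) only when every module imported.
  [ "$missing" -eq 0 ]
}
```

Usage, inside the activated environment: `check_modules cv2 matplotlib scipy PIL tensorflow object_detection`.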

8. Copy the video file you want to use, for example 'input.mp4', into the video folder (/seq_nms_yolo/video).

9. Go to the directory /seq_nms_yolo/video and run video2img.py, then get_pkllist.py:

python video2img.py -i input.mp4

python get_pkllist.py

10. Export the CUDA 10.1 paths:

export PATH=/usr/local/cuda-10.1/bin${PATH:+:${PATH}}

export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:/usr/local/cuda-10.1/lib64

export LIBRARY_PATH=$LIBRARY_PATH:/usr/local/cuda-10.1/lib64
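The `${PATH:+:${PATH}}` expansion in the PATH export adds the separating colon only when PATH is non-empty. The LD_LIBRARY_PATH and LIBRARY_PATH exports leave a leading colon when those variables start out empty, and an empty entry can be treated as the current directory (glibc does this for LD_LIBRARY_PATH). A minimal bash sketch that applies the same guard to all three variables and also avoids adding a directory twice; `path_prepend`, `path_append` and `cuda_dir` are hypothetical names:

```bash
# Hypothetical helpers: add a directory to a colon-separated variable
# (PATH, LD_LIBRARY_PATH, ...) unless it is already listed, without
# creating an empty entry when the variable is unset or empty.
path_prepend() {
  local var="$1" dir="$2" cur
  cur="${!var:-}"                      # bash indirect expansion: value of $var
  case ":$cur:" in
    *":$dir:"*) ;;                     # already present, leave unchanged
    *) export "$var=$dir${cur:+:$cur}" ;;
  esac
}

path_append() {
  local var="$1" dir="$2" cur
  cur="${!var:-}"
  case ":$cur:" in
    *":$dir:"*) ;;
    *) export "$var=${cur:+$cur:}$dir" ;;
  esac
}

# Same directories as the CUDA 10.1 exports, without empty entries or duplicates.
cuda_dir=/usr/local/cuda-10.1
path_prepend PATH "$cuda_dir/bin"
path_append LD_LIBRARY_PATH "$cuda_dir/lib64"
path_append LIBRARY_PATH "$cuda_dir/lib64"
```

Because the helpers skip directories that are already listed, sourcing this snippet from a shell startup file more than once leaves the variables unchanged.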

11. Return to the root folder and run yolo_seqnms.py to generate the output images in video/output:

python yolo_seqnms.py

12. If you want to reconstruct a video from these output images, go to the video folder and run img2video.py:

python img2video.py -i output

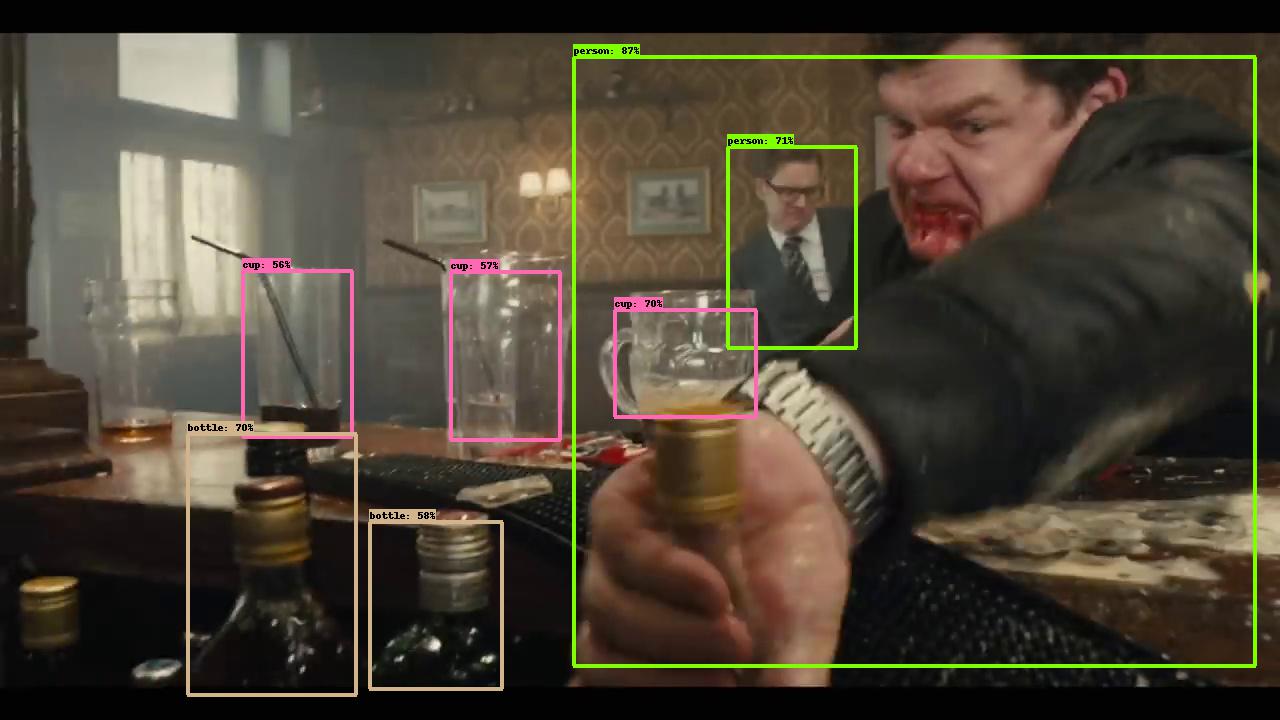

You will then find the detection results in video/output.
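Once the setup is done, the per-video commands can be chained. A minimal bash sketch, assuming it is run from the project root with the environment activated and the CUDA paths exported; `run_pipeline` is a hypothetical wrapper that only calls the scripts listed in the steps, in the same order:

```bash
# Hypothetical wrapper around the documented per-video commands.
# Run from the seq_nms_yolo root; the argument is a file inside video/.
run_pipeline() {
  local input="$1"
  if [ -z "$input" ]; then
    echo "usage: run_pipeline VIDEO_FILE" >&2
    return 2
  fi
  if [ ! -f "video/$input" ]; then
    echo "video/$input not found; copy the video into the video folder first" >&2
    return 1
  fi
  # Extract frames and build the pickle list (both scripts run inside video/).
  ( cd video && python video2img.py -i "$input" && python get_pkllist.py ) || return 1
  # YOLO + Seq-NMS detection from the root folder; images go to video/output.
  python yolo_seqnms.py || return 1
  # Optional: rebuild a video from the images in video/output.
  ( cd video && python img2video.py -i output ) || return 1
}
```

Usage: `run_pipeline input.mp4`. The subshells keep each `cd video` local, so the detection script always starts from the root folder.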

This project reuses a lot of code from darknet, Seq-NMS and models.