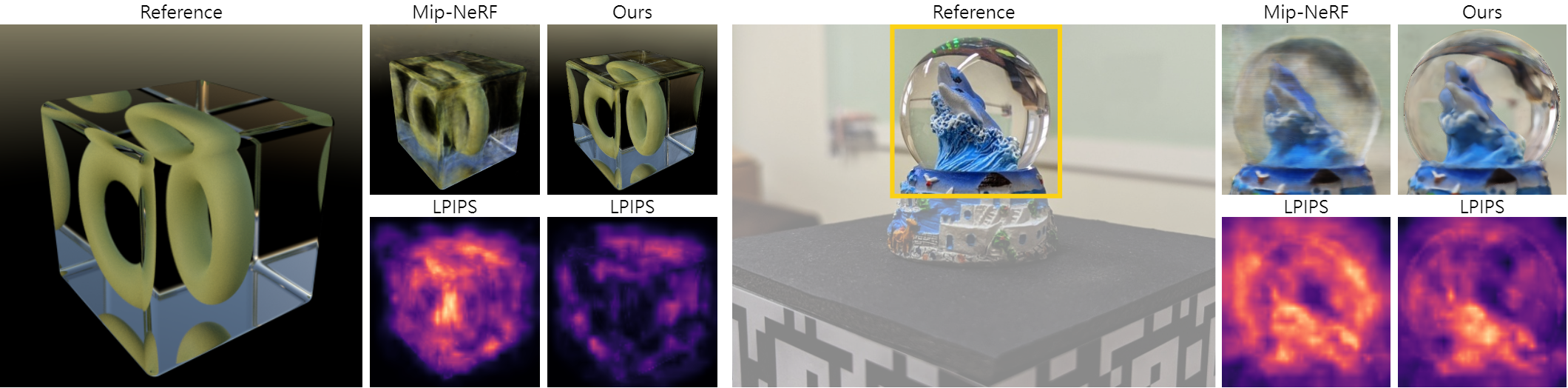

This is an official implementation of Sampling Neural Radiance Fields for Refractive Objects.

Jen-I Pan, Jheng-Wei Su, Kai-Wen Hsiao, Ting-Yu Yen, Hung-Kuo Chu

SIGGRAPH Asia 2022 Technical Communications

The implementation is based on JaxNeRF and mip-NeRF.

The code was tested on an RTX 3090 with CUDA 11.1 on Ubuntu 16.04. Use Anaconda to set up the environment:

conda create --name rnerf python=3.8; conda activate rnerf

pip install -r requirements.txt

pip install --upgrade jaxlib==0.1.72+cuda111 -f https://storage.googleapis.com/jax-releases/jax_cuda_releases.html

Note that the versions of jax/jaxlib/flax/optax and CUDA must be compatible. Please refer to https://github.com/google/jax#pip-installation-gpu-cuda and https://storage.googleapis.com/jax-releases/jax_cuda_releases.html for more details.
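Before debugging a version mismatch, it can help to see which versions are actually installed in the active environment. The following is a minimal sketch using only the Python standard library; the helper name installed_versions is an assumption for illustration and is not part of this repository. It only reports versions and does not decide whether they are compatible.

```python
from importlib import metadata

# Packages whose versions must agree with each other and with the CUDA toolkit.
PACKAGES = ("jax", "jaxlib", "flax", "optax")


def installed_versions(packages=PACKAGES):
    """Return {package name: version string, or None if not installed}."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


if __name__ == "__main__":
    for name, version in installed_versions().items():
        print(f"{name:8s} {version or 'not installed'}")
```

Compare the printed versions against the compatibility notes linked above.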

Then, install the local pysdf under sdf/.

cd sdf

pip install .

Download the data from Google Drive and unzip it to a location of your choice. Take the following directory structure as an example:

${DATA_DIR}

|-- synthetic

|-- nerf

|-- ship_skydome-bkgd_no-partial-reflect_cycles

|-- ${SCENE}

|-- real

|-- dolphin

|-- ${SCENE}

Note that Ball, Pen, and Glass are data provided by Eikonal Fields for Refractive Novel-View Synthesis, SIGGRAPH 2022 (Conference Proceedings), and we include our preprocessed results.

Download the pretrained models from Google Drive and unzip them to a location of your choice. Take the following directory structure as an example:

${TRAIN_DIR}

|-- refractive-nerf-jax

|-- ship_skydome-bkgd_no-partial-reflect_cycles

|-- radiance_pe-bkgd_bg-smooth-l2-1.0-ps-128

|-- dolphin

|-- ${SCENE}

|-- ${EXPERIMENT}

For synthetic scenes, run the following command:

sh train_nerf.sh

For real scenes, run the following command:

sh train_opencv.sh

Note: change DATA_DIR and TRAIN_DIR to your own paths, corresponding to ${DATA_DIR} and ${TRAIN_DIR} in the Data and Pretrained Models sections. Moreover, the argument stage always starts with radiance_*.

The default hyperparameters are specified by ${SCENE}.gin and ${SCENE}.yaml under configs/.
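Since each scene is expected to have both a .gin and a .yaml file under configs/, a quick check before launching a run can catch a missing file early. This is a hypothetical sketch and not part of the repository; the function name missing_configs and its parameters are assumptions.

```python
from pathlib import Path


def missing_configs(scene, config_dir="configs"):
    """Return the expected config files for `scene` that are absent."""
    expected = [Path(config_dir) / f"{scene}{ext}" for ext in (".gin", ".yaml")]
    return [path for path in expected if not path.is_file()]
```

An empty list means both files were found; otherwise the returned paths name the files to create.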

If you encounter OOM errors, please uncomment the following lines in train.py or eval.py:

os.environ['TF_FORCE_GPU_ALLOW_GROWTH'] = 'true'

os.environ["XLA_PYTHON_CLIENT_PREALLOCATE"] = "false"

os.environ["XLA_PYTHON_CLIENT_ALLOCATOR"] = "platform"
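Environment variables like these are generally read when the GPU backend starts, so the safe order is to set them before importing jax. The sketch below wraps the three settings above in a small helper; the helper name apply_memory_settings is an assumption, and using setdefault (so that values already exported in the shell win) is a design choice of this sketch rather than the behavior of train.py or eval.py.

```python
import os

# Variable names and values are the ones listed above.
MEMORY_SETTINGS = {
    "TF_FORCE_GPU_ALLOW_GROWTH": "true",
    "XLA_PYTHON_CLIENT_PREALLOCATE": "false",
    "XLA_PYTHON_CLIENT_ALLOCATOR": "platform",
}


def apply_memory_settings(env=os.environ):
    """Set each variable unless it already has a value; return the result."""
    for key, value in MEMORY_SETTINGS.items():
        env.setdefault(key, value)
    return {key: env[key] for key in MEMORY_SETTINGS}


# Call this before `import jax` so the settings are in place at startup.
apply_memory_settings()
```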

For synthetic scenes, run the following command:

sh eval_nerf.sh

For real scenes, run the following command:

sh eval_opencv.sh

Note: change DATA_DIR and TRAIN_DIR to your own paths, corresponding to ${DATA_DIR} and ${TRAIN_DIR} in the Data and Pretrained Models sections. Moreover, the arguments stage and gin_param should use the same config name under configs/.

Use Anaconda to set up the environment:

cd calib

conda create --name camcalib python=3.6; conda activate camcalib

pip install -r requirements.txt

Then, set your own image directory (root), visualization and visual hull parameters in calib/cfg.py. Take the following directory structure as an example:

${YOUR_OWN_IMG_DIR}

|-- 000000.jpg

|-- mask_000000.png

|-- *.jpg

|-- mask_*.png

|-- ...
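The layout above pairs every image NNNNNN.jpg with a mask named mask_NNNNNN.png. A small check like the following can confirm that no mask is missing before calibration. It is a sketch under that naming assumption; the function name images_without_masks is hypothetical and not part of calib/.

```python
from pathlib import Path


def images_without_masks(root):
    """Return names of *.jpg images in `root` lacking a mask_<stem>.png file."""
    root = Path(root)
    missing = []
    for image in sorted(root.glob("*.jpg")):
        if not (root / f"mask_{image.stem}.png").is_file():
            missing.append(image.name)
    return missing
```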

1. Calibrate camera parameters

   - [Option 1] AprilTag + OpenCV

     - Download the tags from here, and generate the calibration patterns with create_apriltag in calib_camera_with_apriltag.py.

     - Run the following command. To resize the images by half, uncomment the line resize_images in calib_camera_with_apriltag.py.

       python calib_camera_with_apriltag.py

   - [Option 2] COLMAP

2. Visualize camera poses

   - [Option 1] AprilTag + OpenCV

     python vis_camera_pose_with_opencv.py

   - [Option 2] COLMAP

     python vis_camera_pose_with_llff.py

3. Reconstruct a proxy geometry

   - Run the following command. You can then go back to Step 2 to visualize both the camera poses and the proxy geometry.

     python make_visual_hull.py

   - You will find calib.pkl, calib.json, and mesh.obj under ${YOUR_OWN_IMG_DIR}. Organize your own TRAIN/VALID/TEST splits by replacing calib.json with <train/val/test>_transforms.json and moving the images to the corresponding directories (file_path in calib.json may need to be changed). Take the following directory structure as an example:

     ${SCENE}

     |-- train

         |-- 000000.png

         |-- ...

     |-- val

     |-- test

     |-- train_transforms.json

     |-- ...
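Splitting calib.json into per-split transform files can be scripted. The sketch below assumes that calib.json follows the common NeRF transforms layout, with a top-level frames list whose entries carry a file_path field; that layout, the ./<split>/<name> path format, the hold-out rule, and the function names are all assumptions for illustration, so check them against your own calib.json before use.

```python
import copy
import json
from pathlib import Path


def split_transforms(meta, holdout_every=8):
    """Split meta["frames"] into train/val/test dictionaries.

    Every `holdout_every`-th frame goes to test, the frame after it to val,
    and the rest to train. Other top-level keys are copied unchanged.
    """
    splits = {"train": [], "val": [], "test": []}
    for index, frame in enumerate(meta["frames"]):
        frame = copy.deepcopy(frame)  # leave the input untouched
        remainder = index % holdout_every
        if remainder == 0:
            name = "test"
        elif remainder == 1:
            name = "val"
        else:
            name = "train"
        # Assumed path format: point file_path at the split directory.
        frame["file_path"] = f"./{name}/{Path(frame['file_path']).name}"
        splits[name].append(frame)
    shared = {key: value for key, value in meta.items() if key != "frames"}
    return {name: {**shared, "frames": frames} for name, frames in splits.items()}


def write_splits(calib_path, scene_dir, holdout_every=8):
    """Write <split>_transforms.json files into `scene_dir`."""
    meta = json.loads(Path(calib_path).read_text())
    for name, split in split_transforms(meta, holdout_every).items():
        out = Path(scene_dir) / f"{name}_transforms.json"
        out.write_text(json.dumps(split, indent=2))
```

The images themselves still need to be moved into the train, val, and test directories to match the rewritten paths.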

You may remove noisy components from the proxy geometry in Blender and export the result as an OBJ file named mesh.obj. Run the following command, and you will find mesh.pkl. Moreover, prepare a config for the scene under configs/ if one does not exist.

For synthetic scenes, specify the bounding box of the voxel grid with extent:

cd ..

sh voxelize_nerf.sh

For real scenes, specify the bounding box of the voxel grid with min_point and max_point:

cd ..

sh voxelize_opencv.sh
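To make the two ways of describing the bounding box concrete, here is a small sketch of how either form could map to voxel positions. It is not the repository's voxelization code. In particular, it assumes that extent describes the cube from -extent to +extent on every axis, centered at the origin; the function names and the resolution parameter are likewise hypothetical.

```python
def grid_bounds(extent=None, min_point=None, max_point=None):
    """Return (min_point, max_point) of an axis-aligned box.

    Assumption: `extent` means the cube [-extent, extent]^3 at the origin.
    """
    if extent is not None:
        return (-extent,) * 3, (extent,) * 3
    return tuple(min_point), tuple(max_point)


def voxel_centers_1d(lo, hi, resolution):
    """Centers of `resolution` equal cells spanning [lo, hi] along one axis."""
    size = (hi - lo) / resolution
    return [lo + (i + 0.5) * size for i in range(resolution)]


# Example: a 4-cell grid along x for a box given by min_point and max_point.
lo, hi = grid_bounds(min_point=(0.0, 0.0, 0.0), max_point=(1.0, 2.0, 3.0))
x_centers = voxel_centers_1d(lo[0], hi[0], 4)
```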

Use Anaconda to set up the environment:

cd metric

conda create --name metric python=3.8; conda activate metric

pip install -r requirements.txt

To evaluate PSNR, SSIM, and LPIPS, run the following command:

python summary.py

Note: for real scenes, run python render_mask.py first to generate the object cropping region. Change the code within the comment block Config in summary.py and render_mask.py.
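For reference, PSNR for pixel values in [0, max_value] is 10 * log10(max_value^2 / MSE). The sketch below computes it in plain Python and shows how restricting the comparison to a rectangular crop changes which pixels are counted. It is an illustration only and not the code in summary.py; the function names and the rectangle parameters are assumptions.

```python
import math


def psnr(pred, target, max_value=1.0):
    """PSNR in dB between two equally sized sequences of pixel values."""
    if len(pred) != len(target) or not pred:
        raise ValueError("inputs must be non-empty and the same length")
    mse = sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)
    if mse == 0:
        return math.inf  # identical inputs
    return 10.0 * math.log10(max_value ** 2 / mse)


def crop(image, top, left, height, width):
    """Flatten the pixels of a 2D list `image` inside the given rectangle."""
    return [v for row in image[top:top + height] for v in row[left:left + width]]
```

Calling psnr on crop(pred, ...) and crop(target, ...) with the same rectangle scores only the object region.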

If you find our code/models useful, please consider citing our paper:

@inproceedings{pan2022sample,

author = {Pan, Jen-I and Su, Jheng-Wei and Hsiao, Kai-Wen and Yen, Ting-Yu and Chu, Hung-Kuo},

title = {Sampling Neural Radiance Fields for Refractive Objects},

year = {2022},

isbn = {9781450394659},

publisher = {Association for Computing Machinery},

address = {New York, NY, USA},

url = {https://doi.org/10.1145/3550340.3564234},

doi = {10.1145/3550340.3564234},

booktitle = {SIGGRAPH Asia 2022 Technical Communications},

articleno = {5},

numpages = {4},

keywords = {eikonal rendering, neural radiance fields},

location = {Daegu, Republic of Korea},

series = {SA '22 Technical Communications}

}

The code base contains JaxNeRF, mip-NeRF, pysdf, and LLFF. The synthetic scenes are rendered from Ship, DeerGlobe, and StarLamp by Blender, and three of the real scenes are from eikonalfield.