Next word predictor with R

This repo hosts the code for my next word predictor app, built as my capstone project for the Data Science Specialization by Johns Hopkins University. It uses R for analysis and cleaning, and Python for modeling and deployment.

Click here for more information about the corpora used to build this model, and click here for an overview about the project.

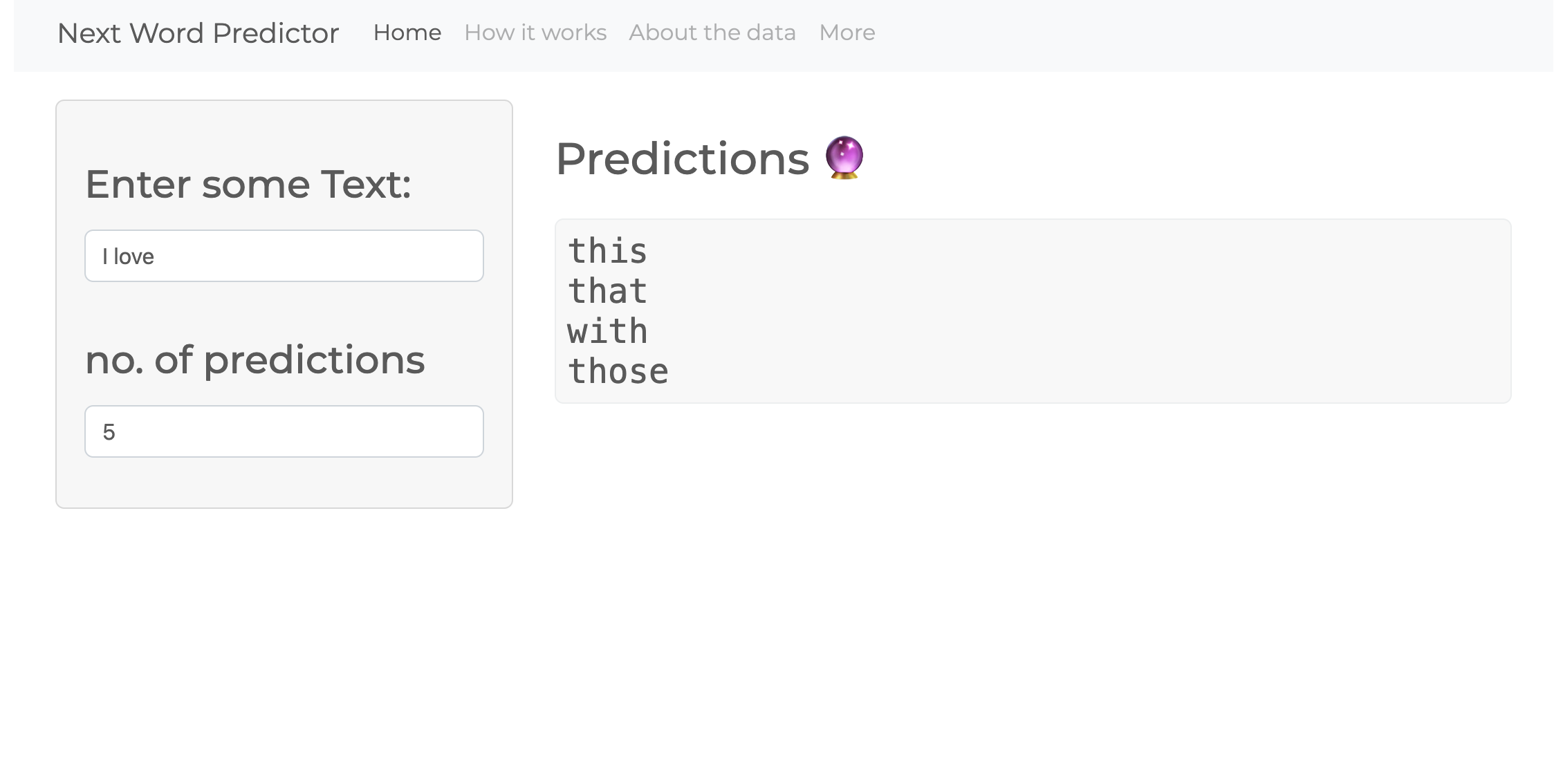

The app

Data Analysis

Dataset information

| file_name | size | line_count | word_count | max_line |
|---|---|---|---|---|
| blogs.txt | 200.4 MB | 899,288 | 37,334,690 | 140 |
| news.txt | 196.3 MB | 1,010,242 | 34,372,720 | 11,384 |
| twitter.txt | 159.4 MB | 2,360,148 | 30,374,206 | 40,833 |
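As a rough illustration of how the columns in the table above could be computed, here is a minimal Python sketch. It is not the code used for the report. The function name `file_stats` is hypothetical, words are assumed to be whitespace-separated tokens, and `max_line` is assumed to mean the length of the longest line in characters, which the table itself does not state.

```python
import os


def file_stats(path, encoding="utf-8"):
    """Summarize one text file: size, line count, word count, longest line.

    Assumptions (not confirmed by the README): words are split on
    whitespace, and max_line is measured in characters.
    """
    line_count = 0
    word_count = 0
    max_line = 0
    # Stream the file line by line so large corpora never sit fully in memory.
    with open(path, encoding=encoding, errors="replace") as handle:
        for line in handle:
            text = line.rstrip("\n")
            line_count += 1
            word_count += len(text.split())
            max_line = max(max_line, len(text))
    return {
        "file_name": os.path.basename(path),
        "size_mb": os.path.getsize(path) / 1024 ** 2,
        "line_count": line_count,
        "word_count": word_count,
        "max_line": max_line,
    }
```

Calling `file_stats("blogs.txt")` on a local copy of a corpus file would return one dictionary per file, which can then be collected into a table like the one above.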

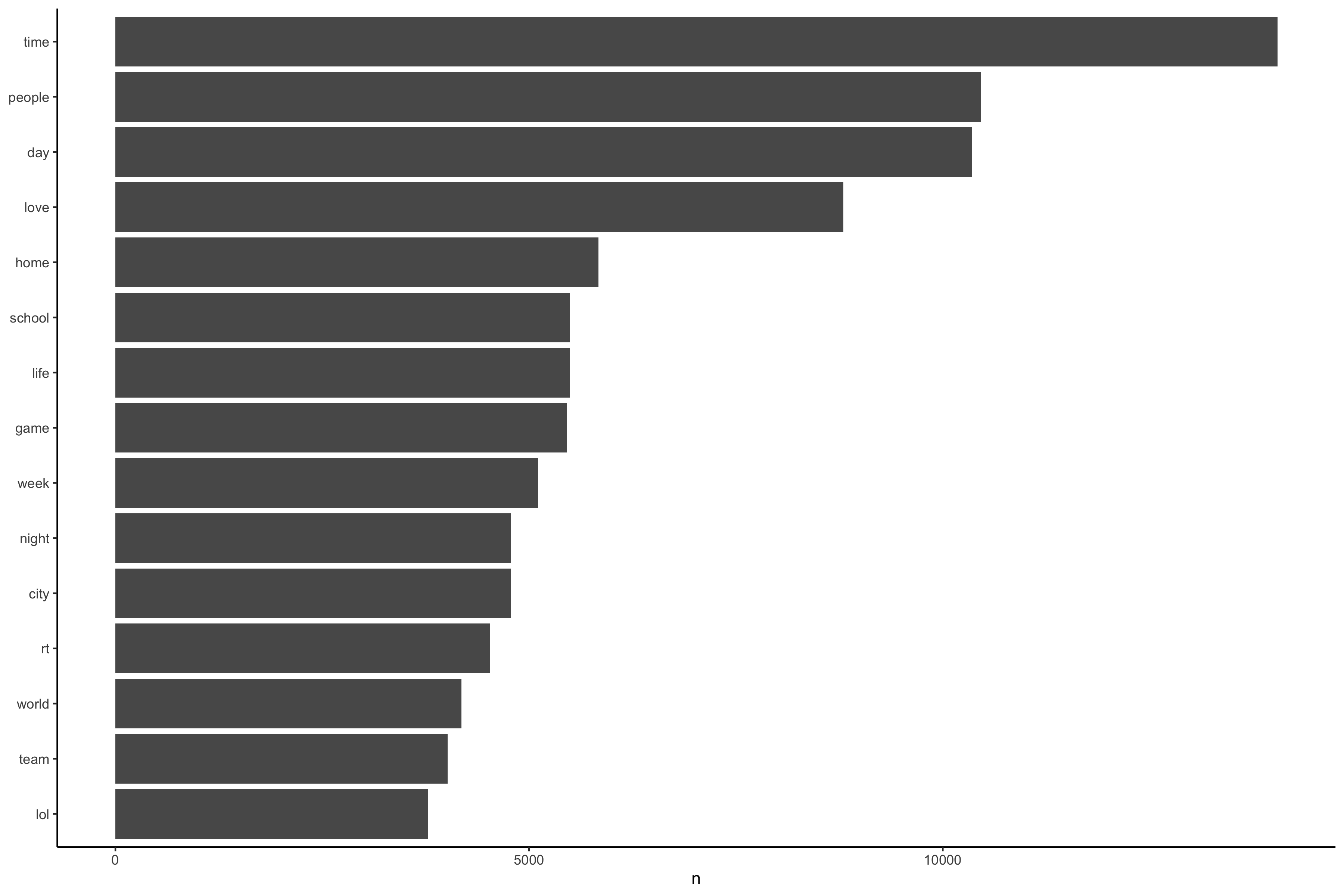

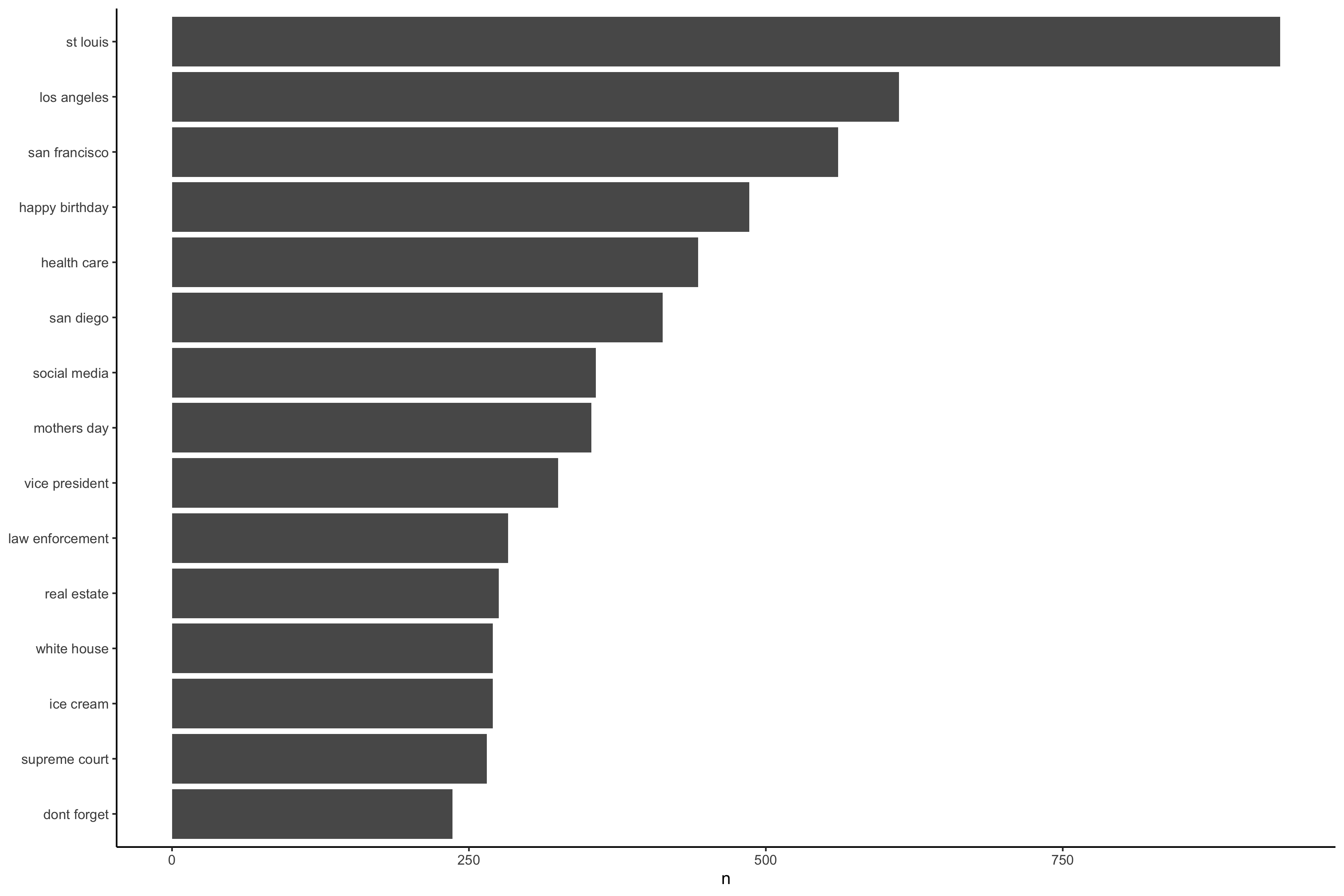

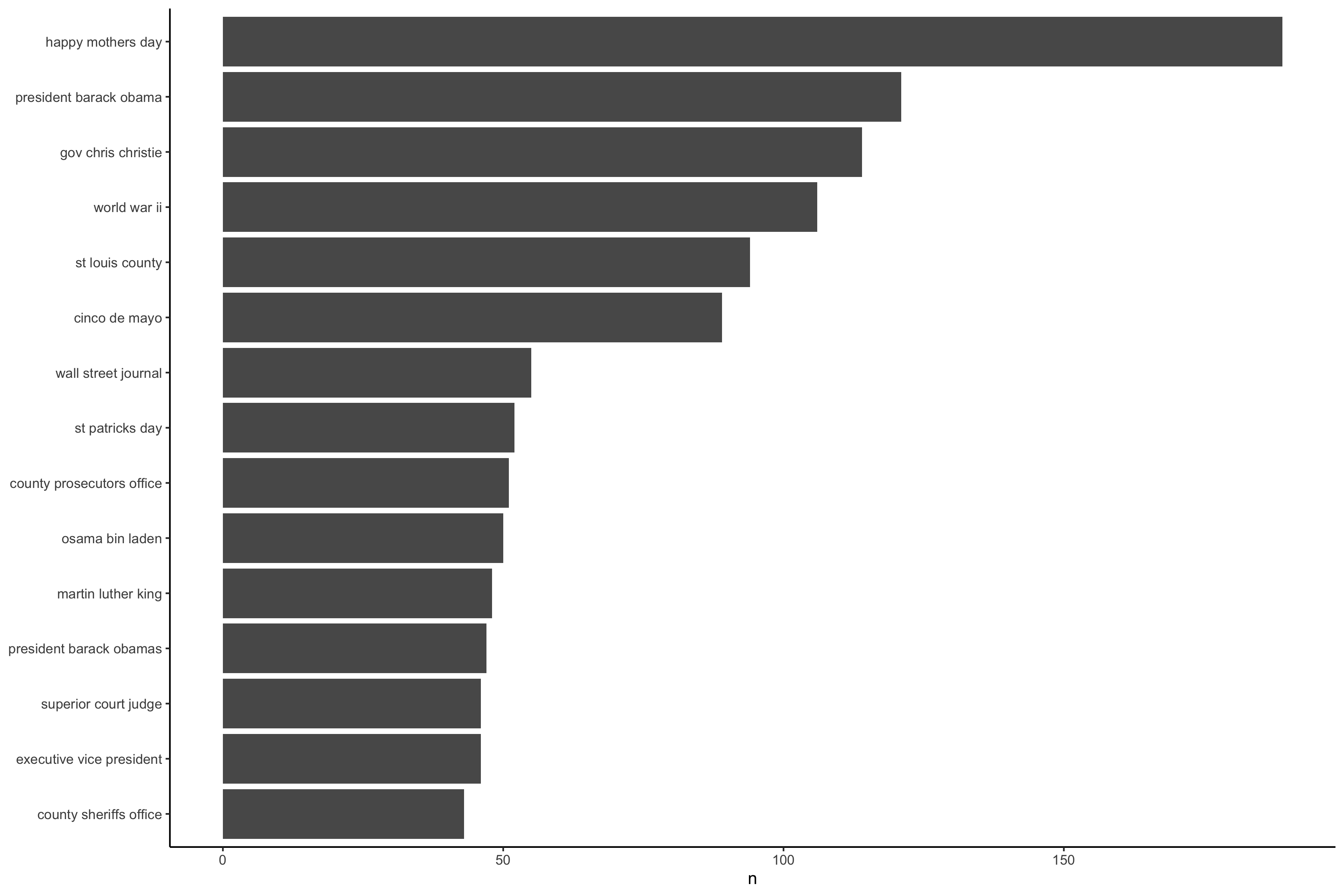

N-grams bar charts

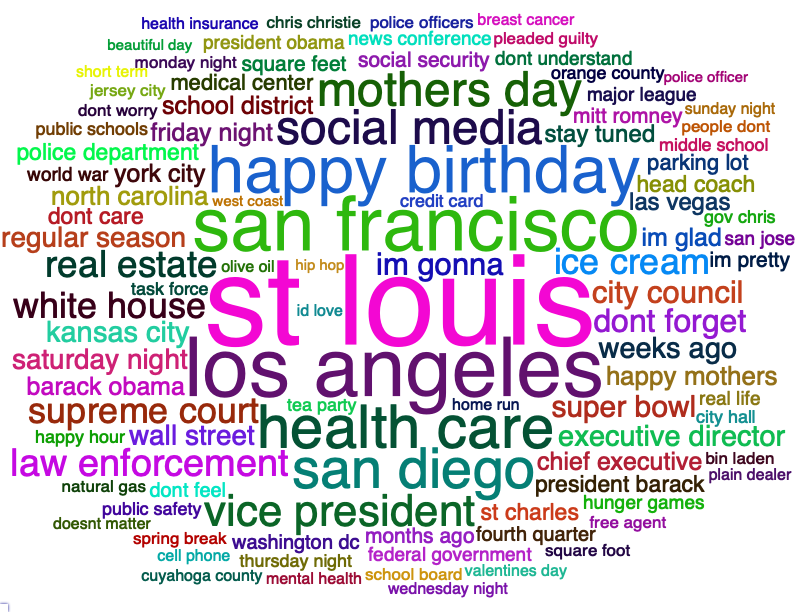

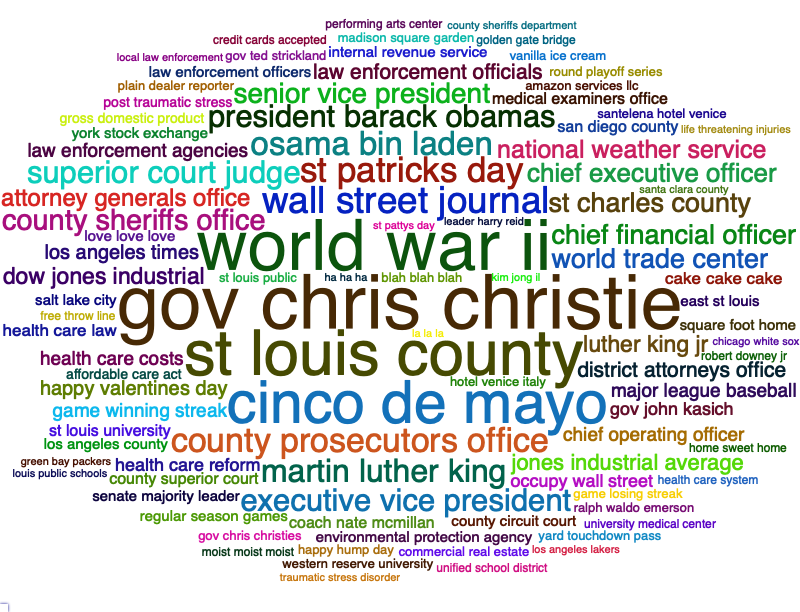

N-grams word clouds

View the full report here

You can find all the n-grams in the data folder.

Model Building

The initial approach was to store the n-grams in tibbles and filter them for the matching strings. That approach was very expensive, and the Shiny app was not able to run. The second approach used the markovchain package to build Markov models from the n-grams. The goal was to use back-off for the model, but because of the limits of Shiny's free tier, I was only able to use a small subset of the unigrams, about 100 MB (the initial model was 6 GB), and the bigram Markov model was ~40 GB.
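To make the back-off idea concrete, here is a minimal Python sketch. It is not the markovchain-based model from this repo. It stores plain n-gram counts in dictionaries and uses a simple back-off without discounting or smoothing: it tries the longest available context first and falls back to shorter ones when nothing matches. The function names, the whitespace tokenization, and the default `max_order=3` are all assumptions made for illustration.

```python
from collections import Counter, defaultdict


def build_ngram_tables(sentences, max_order=3):
    """Map each context (a tuple of 0 to max_order-1 preceding words)
    to a Counter of the words that followed it."""
    tables = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.lower().split()  # assumed tokenization
        for i, word in enumerate(tokens):
            for order in range(max_order):
                if i - order < 0:
                    break  # not enough preceding words for this context size
                context = tuple(tokens[i - order:i])
                tables[context][word] += 1
    return tables


def predict_next(tables, text, max_order=3, top_k=3):
    """Return up to top_k candidate next words, backing off from the
    longest context to the empty (unigram) context."""
    tokens = text.lower().split()
    for order in range(max_order - 1, -1, -1):
        if order > len(tokens):
            continue  # the input is shorter than this context size
        context = tuple(tokens[len(tokens) - order:])
        candidates = tables.get(context)
        if candidates:
            return [word for word, _ in candidates.most_common(top_k)]
    return []


sentences = [
    "i love new york",
    "i love new shoes",
    "i love new york city",
    "we love pizza",
]
tables = build_ngram_tables(sentences)
print(predict_next(tables, "i love new"))  # matched on the two-word context
print(predict_next(tables, "they love"))   # backs off to the one-word context
```

Tables like these grow quickly with the corpus, which is the same memory pressure described above. One common mitigation, not necessarily the one used here, is to keep only the few most frequent continuations per context before deploying.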

One way to improve this model is to use an LSTM or a transformer, which can give much more accurate predictions.

View the initial approach here

View the markov approach here