Failed to run benchmark scripts in Android

liute62 opened this issue · 20 comments

Hi there,

I'm new here. Following the tutorial, I ran:

benchmarking/run_bench.py -b specifications/models/caffe2/shufflenet/shufflenet.json --platforms android

After a long compilation, all the compile and link tasks finished in the build_android folder of my pytorch repo. But then it threw an error:

cmake unknown rule to install xxxx

It looks like the caffe2_benchmark executable was generated but failed to be copied to the install folder, so I copied it there manually, namely to:

/home/new/.aibench/git/exec/caffe2/android/2019/4/5/fefa6d305ea3e820afe64cec015d2f6746d9ca88

Then I modified repo_driver.py to skip compiling again and just run the function _runBenchmarkSuites.

But it failed:

    In file included from ../third_party/zstd/lib/common/pool.h:20:0,
                     from ../third_party/zstd/lib/common/pool.c:14:
    ../third_party/zstd/lib/common/zstd_internal.h:382:37: error: unknown type name ‘ZSTD_dictMode_e’; did you mean ‘FSE_decode_t’?
         ZSTD_dictMode_e dictMode,
         ^~~~~~~~~~~~~~~
         FSE_decode_t

Questions:

- Any suggestions on how to run the tutorial correctly?

- How can I avoid the long compilation every time I run

benchmarking/run_bench.py -b specifications/models/caffe2/shufflenet/shufflenet.json --platforms android

thanks!

Hi @liute62, thanks for using FAI-PEP!

To answer your questions: (1) I haven't encountered this error in the third_party code myself. This zstd comes from the pytorch repo https://github.com/pytorch/pytorch/tree/master/third_party, so I would suggest pulling the latest version of the repo from GitHub. (2) Once you have built caffe2_benchmark, you can copy it somewhere on your laptop and change the lines here https://github.com/facebook/FAI-PEP/blob/master/specifications/frameworks/caffe2/android/build.sh. In particular, you can comment out lines 5 and 6 and change line 7 to something like cp YOUR_BUILT_CAFFE2_BENCHMARK $2. This way, the build step still runs, but it just uses your prebuilt binary instead of compiling again.
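The edited build.sh boils down to one step: copy an already-built caffe2_benchmark to the destination path that the harness passes in as $2. As a rough illustration, the same step could be written as the Python helper below. The helper name install_prebuilt_binary is hypothetical and not part of FAI-PEP, and it assumes $2 is a file path, as the cp command implies.

```python
import os
import shutil
import stat


def install_prebuilt_binary(prebuilt_path, dest_path):
    """Copy a prebuilt benchmark binary to dest_path instead of rebuilding.

    Hypothetical helper (not part of FAI-PEP) mirroring the edited build.sh
    line "cp YOUR_BUILT_CAFFE2_BENCHMARK $2".
    """
    if not os.path.isfile(prebuilt_path):
        raise FileNotFoundError(f"prebuilt binary not found: {prebuilt_path}")
    # Create the destination directory if it does not exist yet.
    dest_dir = os.path.dirname(os.path.abspath(dest_path))
    os.makedirs(dest_dir, exist_ok=True)
    # copy2 keeps the file metadata (timestamps, permission bits).
    shutil.copy2(prebuilt_path, dest_path)
    # Make sure the copy is executable by its owner.
    mode = os.stat(dest_path).st_mode
    os.chmod(dest_path, mode | stat.S_IXUSR)
    return dest_path
```

For example, install_prebuilt_binary("/home/new/Documents/git/pytorch/build_android/bin/caffe2_benchmark", dest) copies the binary built earlier in this thread to whatever destination the harness chooses.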

One more thing: according to the wiki, I think the command you want is benchmarking/run_bench.py -b specifications/models/caffe2/shufflenet/shufflenet.json, i.e., without --platforms android.

@liute62, can you please share the entire log somewhere?

To speed up the build, you can try an incremental build by specifying --platforms android/interactive.

Another way to speed up the build is to run it on a more powerful host with more cores. The number of parallel threads is capped at the number of cores in the system.
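The capping rule in the last sentence can be illustrated with a tiny sketch. The function parallel_jobs and its requested parameter are hypothetical and not taken from the PyTorch or FAI-PEP build scripts.

```python
import os


def parallel_jobs(requested=None):
    """Return how many parallel build jobs to run.

    Hypothetical illustration of "the number of parallel threads is capped
    at the number of cores"; not the actual build script logic.
    """
    cores = os.cpu_count() or 1  # cpu_count() may return None
    if requested is None:
        return cores
    # Never exceed the core count, and always run at least one job.
    return max(1, min(requested, cores))
```

So a 4-core laptop gets at most 4 jobs no matter how many are requested, while a 32-core host can run up to 32, which is why a bigger machine shortens the build.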

Hi @hl475 and @sf-wind

Thanks for the quick response! I modified build.sh and it worked! Running benchmarking/run_bench.py -b specifications/models/caffe2/shufflenet/shufflenet.json, I got lots of result output, for example:

    NET latency: value median 146049.00000 MAD: 2139.00000
    ID_0_Conv_gpu_0/conv3_0 latency: value median 6958.12500 MAD: 188.93500
    ID_100_Conv_gpu_0/gconv3_9 latency: value median 5317.45000 MAD: 198.00000
    ID_101_SpatialBN_gpu_0/gconv3_9_bn latency: value median 132.60650 MAD: 3.69650
    ID_102_Conv_gpu_0/gconv1_19 latency: value median 1441.87500 MAD: 79.97000
    ID_103_SpatialBN_gpu_0/gconv1_19_bn latency: value median 123.35950 MAD: 5.56950
    ID_104_Sum_gpu_0/block9 latency: value median 127.13200 MAD: 16.63550
    ID_105_Relu_gpu_0/block9 latency: value median 46.07390 MAD: 2.52530
    ID_106_Conv_gpu_0/gconv1_20 latency: value median 1130.52000 MAD: 52.89000
    ID_107_SpatialBN_gpu_0/gconv1_20_bn latency: value median 121.87600 MAD: 5.15750
    ID_108_Relu_gpu_0/gconv1_20_bn latency: value median 42.08370 MAD: 2.70845
    ID_109_ChannelShuffle_gpu_0/shuffle_10 latency: value median 144.16900 MAD: 4.03600
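To collect the median and MAD values from output like this for further analysis, a small regex-based parser is enough. This is a sketch that assumes every line follows the "<name> latency: value median <x> MAD: <y>" shape shown above; parse_latency_lines is a hypothetical name, not a FAI-PEP function.

```python
import re
from typing import Dict, Tuple

# Matches lines such as:
#   NET latency: value median 146049.00000 MAD: 2139.00000
#   ID_0_Conv_gpu_0/conv3_0 latency: value median 6958.12500 MAD: 188.93500
LATENCY_LINE = re.compile(
    r"^(?P<name>\S+) latency: value median (?P<median>[-+0-9.eE]+)"
    r" MAD: (?P<mad>[-+0-9.eE]+)\s*$"
)


def parse_latency_lines(text: str) -> Dict[str, Tuple[float, float]]:
    """Map each entry name to (median, MAD). Non-matching lines are skipped.

    Hypothetical helper; the line format is taken from the output above.
    """
    results = {}
    for line in text.splitlines():
        match = LATENCY_LINE.match(line.strip())
        if match:
            results[match.group("name")] = (
                float(match.group("median")),
                float(match.group("mad")),
            )
    return results
```

Sorting the result with sorted(results.items(), key=lambda kv: kv[1][0], reverse=True) lists the slowest entries first; dropping the "NET" key leaves only the per-operator latencies.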

But then it failed with:

ERROR 19:45:31 utilities.py: 163: Post Connection failed HTTPConnectionPool(host='127.0.0.1', port=8000): Max retries exceeded with url: /benchmark/store-result (Caused by NewConnectionError('<urllib3.connection.HTTPConnection object at 0x7f1b39761da0>: Failed to establish a new connection: [Errno 111] Connection refused',))

INFO 19:45:31 utilities.py: 170: wait 64 seconds. Retrying...

- Do you have any idea how to set up the server? Is it a data visualization platform?

- How should I interpret the output above? For example:

NET latency: value median 146049.00000 MAD: 2139.00000

My understanding is that 146049 (ms?) is the median latency of a single run of the network. What is MAD here? Also, how are the results obtained? Does it run entirely on the CPU, or does it already use libraries such as OpenCL or the mobile GPU?

- Does the benchmark also include any memory consumption measurements?

- For battery usage, I see that an extra hardware device is needed. Have you tried measuring battery usage with software methods?

thanks!

Regarding the HTTPConnectionPool error, I had the same problem last night when I tried the wiki as well. To make it work, I changed one line of the config.txt file from the wiki to "--remote_reporter": null.
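The same config change can be made programmatically. This sketch assumes config.txt holds a single JSON object (the "--remote_reporter": null line suggests so) and that you pass its path in yourself, for example ~/.aibench/git/config.txt, the directory that appears in the logs in this thread. Both the file format and the helper name disable_remote_reporter are assumptions, not documented FAI-PEP behavior.

```python
import json


def disable_remote_reporter(config_path):
    """Set "--remote_reporter" to null in a JSON config file.

    Hypothetical helper; assumes the file contains one JSON object.
    Returns the previous value so it can be restored later.
    """
    with open(config_path) as f:
        config = json.load(f)
    previous = config.get("--remote_reporter")
    config["--remote_reporter"] = None  # written out as JSON null
    with open(config_path, "w") as f:
        json.dump(config, f, indent=2)
    return previous
```

Keeping the returned value makes it easy to switch the remote reporter back on once a server is running.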

- You can follow this page to set up the server; it also covers data visualization.

- (1) According to the json file, the median value is the median NET latency over 50 iterations.

(2) MAD stands for median absolute deviation.

(3) Once you have fixed remote_reporter in config.txt, run the command again. The output log will contain a line like INFO 00:48:28 local_reporter.py: 67: Writing file for SM-G950U-7.0-24 (988837435645343543) SOME_PATH_ON_YOUR_LAPTOP. You can find all the results in that path.

(4) This is not controlled by FAI-PEP; it depends on the framework you are using. In this case, you had better check with PyTorch.

- I don't think we do that. cc @sf-wind to confirm.

- You can find more info on this page; this is the approach we currently use to measure battery usage.
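The median and MAD in (1) and (2) can be reproduced from raw per-iteration latencies with a short sketch. It uses the plain, unscaled definition MAD = median(|x_i - median(x)|); whether FAI-PEP applies any scaling factor is not stated in this thread, so treat median_and_mad (a hypothetical helper) as illustrative.

```python
from statistics import median
from typing import Sequence, Tuple


def median_and_mad(samples: Sequence[float]) -> Tuple[float, float]:
    """Return (median, median absolute deviation) of latency samples.

    Hypothetical helper using the unscaled MAD:
    MAD = median(|x_i - median(x)|).
    """
    if not samples:
        raise ValueError("need at least one sample")
    center = median(samples)
    mad = median(abs(x - center) for x in samples)
    return center, mad
```

For example, median_and_mad([10, 12, 11, 50, 13]) returns (12, 1): the single outlier of 50 barely affects either value, which is what makes median and MAD robust summaries for noisy on-device timings. With the shufflenet json you would pass the 50 per-iteration latencies.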

For 3: no, we don't measure memory consumption. It is not difficult to add, though: it requires instrumenting the framework code, since PEP only runs on the host system. We use a separate mechanism internally to get the memory consumption.

@hl475, why do we have a default remote reporter specified in config.txt? Was it added by @ZhizhenQin for the remote lab? In that case, it only affects the lab, not the client.

Haha, I added [2]. I feel the right behavior is to move it to FAI-PEP/benchmarking/run_bench.py, line 54 in b674c39.

Sure, will do.

Hi @hl475 and @sf-wind

Thanks for the kind response!

I just setup the server and figured out you guys have solved the HTTP connection errors before, nice job!

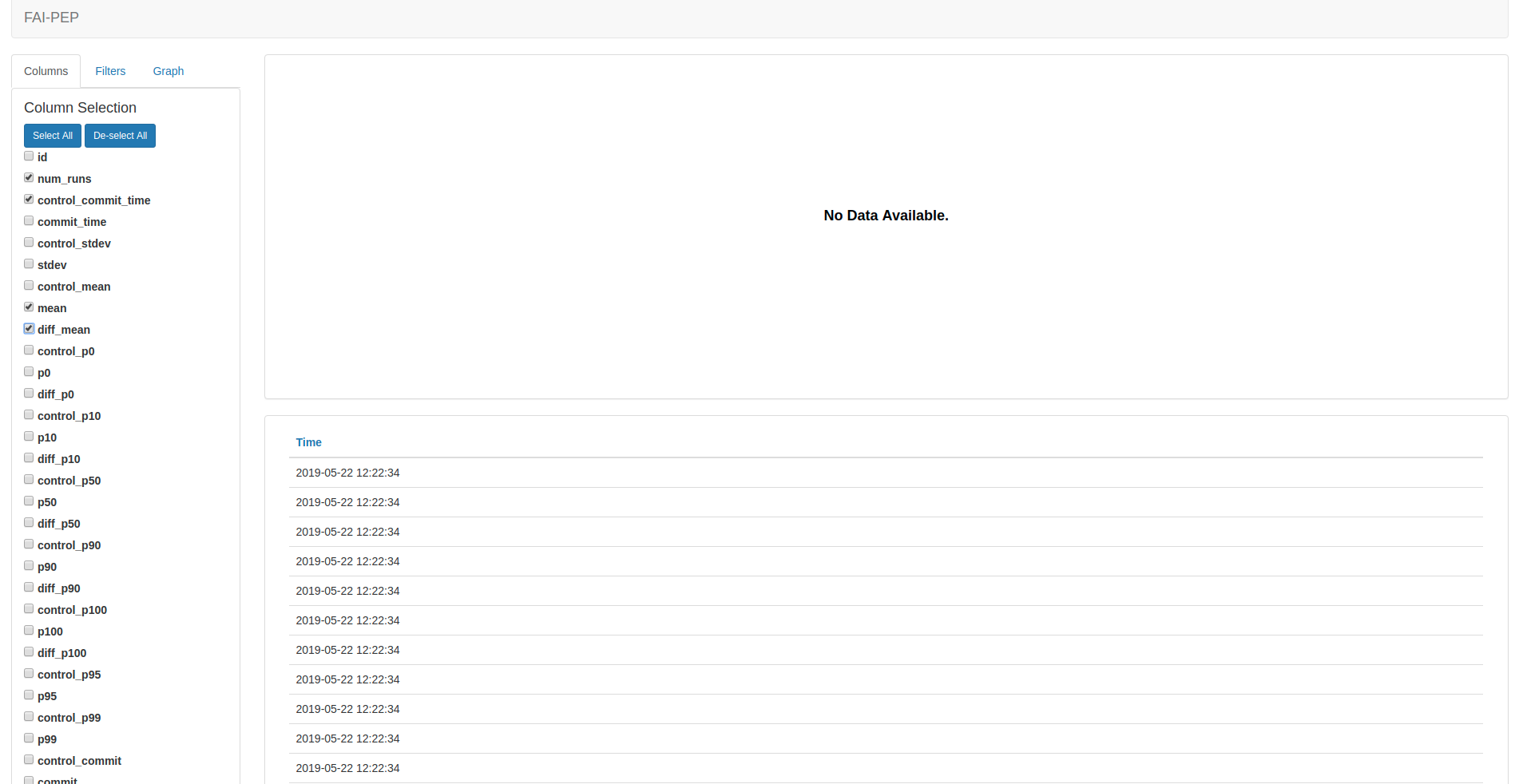

Btw, after I enter the data visualization platform, I have this screen:

My questions:

- It says no data is available, but my Django database contains many benchmark result entries. How can I display them?

- I noticed that even after a single run of:

benchmarking/run_bench.py -b specifications/models/caffe2/shufflenet/shufflenet.json

I got many entries with the same timestamp in the time table on the website, like:

Is this the expected behavior of the output and the data visualization platform?

thanks!

- I believe you need to add some filters, especially the one called user_identifier. By default, it doesn't show everything.

- It does output a lot of entries. You can select some fields under columns, and then you will see them displayed.

- So what does user_identifier refer to here? How can I find out my user_identifier? Is there any documentation for the filter conditions?

- I tried selecting random fields under columns, and also selecting all of them, but the screen still shows "no data available" after I press the submit button. Are there any specific rules for that?

That is strange. Are you using the remote lab flow described at https://github.com/facebook/FAI-PEP/tree/master/ailab?

I would expect that if you select some fields to display, they appear in the table section. Can you post a screenshot?

I didn't set up nginx and uWSGI, but I think that running the system locally with Django is still enough.

Step 1:

After setting up the database and running the migrations, start the server:

(venv) (base) new@tower2:~/Documents/git/FAI-PEP/ailab$ python manage.py runserver

Django version 2.2.1, using settings 'ailab.settings'

Starting development server at http://127.0.0.1:8000/

Step 2:

(venv) (base) new@tower2:~/Documents/git/FAI-PEP/benchmarking$ python run_bench.py --lab --claimer_id 1

INFO 15:10:02 lab_driver.py: 129: Running <class 'run_lab.RunLab'> with raw_args ['--app_id', None, '--token', None, '--root_model_dir', '/home/new/.aibench/git/root_model_dir', '--logger_level', 'info', '--benchmark_table', 'benchmark_benchmarkinfo', '--cache_config', '/home/new/.aibench/git/cache_config.txt', '--commit', 'master', '--commit_file', '/home/new/.aibench/git/processed_commit', '--exec_dir', '/home/new/.aibench/git/exec', '--file_storage', 'django', '--framework', 'caffe2', '--local_reporter', '/home/new/.aibench/git/reporter', '--model_cache', '/home/new/.aibench/git/model_cache', '--platform', 'android', '--remote_reporter', 'http://127.0.0.1:8000/benchmark/store-result|oss', '--remote_repository', 'origin', '--repo', 'git', '--repo_dir', '/home/new/Documents/git/pytorch', '--result_db', 'django', '--screen_reporter', '', '--server_addr', 'http://127.0.0.1:8000', '--status_file', '/home/new/.aibench/git/status', '--timeout', '300', '--claimer_id', '1']

[{"kind": "PBDM00-8.1.0-27", "hash": "35661df3"}]

Step 3:

Open http://127.0.0.1:8000/benchmark/visualize in a browser.

Did you submit any test?


After launching the Django server, I just ran:

benchmarking/run_bench.py -b specifications/models/caffe2/shufflenet/shufflenet.json

I also noticed that the benchmark results were saved to the Django model multiple times.

So what counts as a test here? Are extra steps needed?

Hi @liute62, how do you submit your job remotely? Are you using

python run_bench.py -b <benchmark_file> --remote --devices <devices> --server_addr <server_name>

as described here?

In particular, this <server_name> has to match the one you use when starting the lab:

python run_bench.py --lab --claimer_id <claimer_id> --server_addr <server_name> --remote_reporter "<server_name>/benchmark/store-result|oss" --platform android

as described here.
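One way to avoid a mismatch is to derive both command lines from a single server address. The sketch below uses hypothetical helpers (lab_command, submit_command) that only assemble the flags quoted in this thread (--lab, --claimer_id, --server_addr, --remote_reporter, --platform, -b, --remote, --devices); they do not call any FAI-PEP API.

```python
import shlex
from typing import List

# Hypothetical helpers: they only build the command lines quoted above so
# that the lab and the submitting client share one server address.


def lab_command(server: str, claimer_id: int, platform: str = "android") -> List[str]:
    return [
        "python", "run_bench.py", "--lab",
        "--claimer_id", str(claimer_id),
        "--server_addr", server,
        "--remote_reporter", f"{server}/benchmark/store-result|oss",
        "--platform", platform,
    ]


def submit_command(server: str, benchmark_file: str, devices: str) -> List[str]:
    return [
        "python", "run_bench.py",
        "-b", benchmark_file,
        "--remote",
        "--devices", devices,
        "--server_addr", server,
    ]


def as_shell(args: List[str]) -> str:
    # Quote each argument; the "|" in the reporter URL needs quoting in a shell.
    return " ".join(shlex.quote(a) for a in args)


if __name__ == "__main__":
    SERVER = "http://127.0.0.1:8000"
    print(as_shell(lab_command(SERVER, 1)))
    print(as_shell(submit_command(SERVER, "<benchmark_file>", "<devices>")))
```

The argument lists can also be passed directly to subprocess.run, which avoids shell quoting altogether.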

@hl475 @sf-wind Thanks for the clarification!

I have written down the details below as a worked example to accompany the tutorial, for others' reference.

Note that I didn't set up nginx and uWSGI.

Run the steps in the order 1), 2), 3):

1) Terminal 1:

(venv) (base) new@tower2:~/Documents/git/FAI-PEP/ailab$ python manage.py runserver

Django version 2.2.1, using settings 'ailab.settings'

Starting development server at http://127.0.0.1:8000/

Quit the server with CONTROL-C.

2) Terminal 2:

(venv) (base) new@tower2:~/Documents/git/FAI-PEP/benchmarking$ python run_bench.py --lab --claimer_id 1 --server_addr http://127.0.0.1:8000 --remote_reporter "http://127.0.0.1:8000/benchmark/store-result|oss" --platform android

INFO 14:49:09 lab_driver.py: 129: Running <class 'run_lab.RunLab'> with raw_args ['--app_id', None, '--token', None, '--root_model_dir', '/home/new/.aibench/git/root_model_dir', '--logger_level', 'info', '--benchmark_table', 'benchmark_benchmarkinfo', '--cache_config', '/home/new/.aibench/git/cache_config.txt', '--commit', 'master', '--commit_file', '/home/new/.aibench/git/processed_commit', '--exec_dir', '/home/new/.aibench/git/exec', '--file_storage', 'django', '--framework', 'caffe2', '--local_reporter', '/home/new/.aibench/git/reporter', '--model_cache', '/home/new/.aibench/git/model_cache', '--remote_repository', 'origin', '--repo', 'git', '--repo_dir', '/home/new/Documents/git/pytorch', '--result_db', 'django', '--screen_reporter', '', '--status_file', '/home/new/.aibench/git/status', '--timeout', '300', '--claimer_id', '1', '--server_addr', 'http://127.0.0.1:8000', '--remote_reporter', 'http://127.0.0.1:8000/benchmark/store-result|oss', '--platform', 'android']

[{"kind": "PBDM00-8.1.0-27", "hash": "35661df3"}]

3) Terminal 3:

(venv) (base) new@tower2:~/Documents/git/FAI-PEP/benchmarking$ python run_bench.py -b ../specifications/models/caffe2/shufflenet/shufflenet.json --remote --devices PBDM00-8.1.0-27 --server_addr http://127.0.0.1:8000

INFO 14:51:50 build_program.py: 41: + cp /home/new/Documents/git/pytorch/build_android/bin/caffe2_benchmark /tmp/tmpmvdlhx_n/program

INFO 14:51:50 upload_download_files_django.py: 21: Uploading /tmp/tmpmvdlhx_n/program to http://127.0.0.1:8000/upload/

INFO 14:51:50 upload_download_files_django.py: 31: File has been uploaded to http://127.0.0.1:8000/media/documents/2019/05/24/program_e5ieX2H

INFO 14:51:50 run_remote.py: 170: program: http://127.0.0.1:8000/media/documents/2019/05/24/program_e5ieX2H

Result URL => http://127.0.0.1:8000/benchmark/visualize?sort=-p10&selection_form=%5B%7B%22name%22%3A+%22columns%22%2C+%22value%22%3A+%22identifier%22%7D%2C+%7B%22name%22%3A+%22columns%22%2C+%22value%22%3A+%22metric%22%7D%2C+%7B%22name%22%3A+%22columns%22%2C+%22value%22%3A+%22net_name%22%7D%2C+%7B%22name%22%3A+%22columns%22%2C+%22value%22%3A+%22p10%22%7D%2C+%7B%22name%22%3A+%22columns%22%2C+%22value%22%3A+%22p50%22%7D%2C+%7B%22name%22%3A+%22columns%22%2C+%22value%22%3A+%22p90%22%7D%2C+%7B%22name%22%3A+%22columns%22%2C+%22value%22%3A+%22platform%22%7D%2C+%7B%22name%22%3A+%22columns%22%2C+%22value%22%3A+%22time%22%7D%2C+%7B%22name%22%3A+%22columns%22%2C+%22value%22%3A+%22type%22%7D%2C+%7B%22name%22%3A+%22columns%22%2C+%22value%22%3A+%22user_identifier%22%7D%2C+%7B%22name%22%3A+%22graph-type-dropdown%22%2C+%22value%22%3A+%22bar-graph%22%7D%2C+%7B%22name%22%3A+%22rank-column-dropdown%22%2C+%22value%22%3A+%22p10%22%7D%5D&filters=%7B%22condition%22%3A+%22AND%22%2C+%22rules%22%3A+%5B%7B%22id%22%3A+%22user_identifier%22%2C+%22field%22%3A+%22user_identifier%22%2C+%22type%22%3A+%22string%22%2C+%22input%22%3A+%22text%22%2C+%22operator%22%3A+%22equal%22%2C+%22value%22%3A+%22591648383115815%22%7D%5D%2C+%22valid%22%3A+true%7D

Job status for PBDM00-8.1.0-27 is changed to QUEUE

Job status for PBDM00-8.1.0-27 is changed to RUNNING

Job status for PBDM00-8.1.0-27 is changed to DONE

ID:0 NET latency: 91662.5

4) Visualization

http://127.0.0.1:8000/benchmark/visualize doesn't show anything by itself, but if you open the Result URL instead, you get:

5) More

- It would be great to attach each layer's latency to a built-in network graph visualization.

- It would be great to have Caffe support for older projects built on Caffe.

Right, you need to click the Result URL to see the result; it just has some filters preset. In http://127.0.0.1:8000/benchmark/visualize, you can set the same filters to see the values.
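The Result URL is just /benchmark/visualize with the filters pre-filled as URL-encoded JSON in the sort, selection_form and filters query parameters, which also shows where a run's user_identifier ends up. The sketch below rebuilds and decodes such a URL. The parameter layout is copied from the Result URL printed in step 3) and is an observation, not a documented API; visualize_url and user_identifier_from_url are hypothetical names.

```python
import json
from urllib.parse import parse_qs, urlencode, urlparse

# Columns preset in the Result URL printed by run_bench.py in this thread.
DEFAULT_COLUMNS = (
    "identifier", "metric", "net_name", "p10", "p50", "p90",
    "platform", "time", "type", "user_identifier",
)


def visualize_url(server, user_identifier, columns=DEFAULT_COLUMNS, sort="-p10"):
    """Build a /benchmark/visualize URL filtered on one user_identifier.

    Hypothetical helper; the query layout mirrors the observed Result URL.
    """
    selection = [{"name": "columns", "value": c} for c in columns]
    selection += [
        {"name": "graph-type-dropdown", "value": "bar-graph"},
        {"name": "rank-column-dropdown", "value": "p10"},
    ]
    filters = {
        "condition": "AND",
        "rules": [{
            "id": "user_identifier",
            "field": "user_identifier",
            "type": "string",
            "input": "text",
            "operator": "equal",
            "value": str(user_identifier),
        }],
        "valid": True,
    }
    # urlencode quotes with quote_plus, so spaces become "+" as in the Result URL.
    query = urlencode({
        "sort": sort,
        "selection_form": json.dumps(selection),
        "filters": json.dumps(filters),
    })
    return f"{server}/benchmark/visualize?{query}"


def user_identifier_from_url(url):
    """Return the user_identifier filter value in a visualize URL, or None."""
    params = parse_qs(urlparse(url).query)
    for raw in params.get("filters", []):
        for rule in json.loads(raw).get("rules", []):
            if rule.get("field") == "user_identifier":
                return rule.get("value")
    return None
```

Pasting a printed Result URL into user_identifier_from_url gives the value to enter in the user_identifier filter on the plain visualize page.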

For the operator latency, you can adjust the filters to remove the NET entry; the plot then shows the operator latencies in a clearer format.