Currently, the configs and code in the official Stable Diffusion repository are incomplete.

This repo therefore aims to reproduce SD on different generation tasks. I highly recommend reading the configs to understand each function.

- Task1 Unconditional Image Synthesis

- Task2 Class-conditional Image Synthesis

- Task3 Inpainting

- Task4 Super-resolution

- Task5 Text-to-Image

- Task6 Layout-to-Image Synthesis

- Task7 Semantic Image Synthesis

- Task8 Image-to-Image

- Task9 Depth-to-Image

If you find it useful, please cite their original paper.

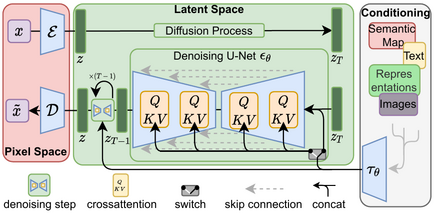

High-Resolution Image Synthesis with Latent Diffusion Models

Robin Rombach, Andreas Blattmann, Dominik Lorenz, Patrick Esser, Björn Ommer

A suitable conda environment named ldm can be created and activated with:

conda env create -f environment.yaml

conda activate ldm

A general list of all available checkpoints is available via our model zoo. If you use any of these models in your work, we are always happy to receive a citation.

Training on your own dataset can be beneficial to get better tokens and hence better images for your domain. These are the steps to follow to make this work:

- install the repo with `conda env create -f environment.yaml`, `conda activate ldm` and `pip install -e .`

- put your .jpg files in a folder `your_folder`

- create 2 text files `xx_train.txt` and `xx_test.txt` that point to the files in your training and test set respectively (for example `find $(pwd)/your_folder -name "*.jpg" > train.txt`)

- adapt `configs/custom_vqgan.yaml` to point to these 2 files

- run `python main.py --base configs/custom_vqgan.yaml -t True --gpus 0,1` to train on two GPUs. Use `--gpus 0,` (with a trailing comma) to train on a single GPU.
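If `find` is not available (for example on Windows), the two list files can also be produced from Python. The following is a minimal sketch that assumes a random held-out split. `write_split_lists`, `test_fraction` and `seed` are hypothetical names and defaults, not part of the repo.

```python
import random
from pathlib import Path


def write_split_lists(image_dir, train_txt, test_txt, test_fraction=0.1, seed=0):
    """Write absolute paths of all .jpg files under image_dir into two list files.

    Hypothetical helper: test_fraction and seed are illustrative defaults,
    not values taken from this repo's configs.
    """
    # Recursive search, similar to `find $(pwd)/your_folder -name "*.jpg"`
    paths = sorted(str(p.resolve()) for p in Path(image_dir).rglob("*.jpg"))
    # Shuffle with a fixed seed so the split is reproducible
    rng = random.Random(seed)
    rng.shuffle(paths)
    n_test = int(len(paths) * test_fraction)
    test, train = paths[:n_test], paths[n_test:]
    # One absolute path per line
    Path(train_txt).write_text("".join(p + "\n" for p in train))
    Path(test_txt).write_text("".join(p + "\n" for p in test))
    return len(train), len(test)
```

For example, `write_split_lists("your_folder", "xx_train.txt", "xx_test.txt")` writes both files to the current directory and returns the number of training and test images.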

For downloading the CelebA-HQ and FFHQ datasets, proceed as described in the taming-transformers repository.

The LSUN datasets can be conveniently downloaded via the script available here.

We performed a custom split into training and validation images, and provide the corresponding filenames at https://ommer-lab.com/files/lsun.zip. After downloading, extract them to `./data/lsun`. The beds/cats/churches subsets should also be placed/symlinked at `./data/lsun/bedrooms`/`./data/lsun/cats`/`./data/lsun/churches`, respectively.
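The symlinking step can be scripted. This sketch assumes the subsets were already downloaded to folders of your choice. `link_lsun_subsets` is a hypothetical helper, and the source paths you pass in are placeholders for your own download locations.

```python
from pathlib import Path

SUBSETS = ("bedrooms", "cats", "churches")


def link_lsun_subsets(sources, root="./data/lsun"):
    """Symlink already-downloaded LSUN image folders into root/<subset>.

    sources maps a subset name to the folder where its images currently live
    (hypothetical paths; use your own download locations).
    """
    root = Path(root)
    root.mkdir(parents=True, exist_ok=True)
    for subset, src in sources.items():
        if subset not in SUBSETS:
            raise ValueError(f"unknown subset {subset!r}; expected one of {SUBSETS}")
        dst = root / subset
        if dst.exists() or dst.is_symlink():
            continue  # leave an existing folder or link untouched
        dst.symlink_to(Path(src).resolve(), target_is_directory=True)
    # Report which expected subset folders are now present
    return sorted(p.name for p in root.iterdir() if p.name in SUBSETS)
```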

The code will try to download (through Academic Torrents) and prepare ImageNet the first time it is used. However, since ImageNet is quite large, this requires a lot of disk space and time. If you already have ImageNet on your disk, you can speed things up by putting the data into `${XDG_CACHE}/autoencoders/data/ILSVRC2012_{split}/data/` (which defaults to `~/.cache/autoencoders/data/ILSVRC2012_{split}/data/`), where `{split}` is one of `train`/`validation`. It should have the following structure:

```
${XDG_CACHE}/autoencoders/data/ILSVRC2012_{split}/data/
├── n01440764
│   ├── n01440764_10026.JPEG
│   ├── n01440764_10027.JPEG
│   ├── ...
├── n01443537
│   ├── n01443537_10007.JPEG
│   ├── n01443537_10014.JPEG
│   ├── ...
├── ...
```

If you haven't extracted the data, you can also place `ILSVRC2012_img_train.tar`/`ILSVRC2012_img_val.tar` (or symlinks to them) into `${XDG_CACHE}/autoencoders/data/ILSVRC2012_train/`/`${XDG_CACHE}/autoencoders/data/ILSVRC2012_validation/`, respectively. They will then be extracted into the above structure without downloading them again. Note that this will only happen if neither a folder `${XDG_CACHE}/autoencoders/data/ILSVRC2012_{split}/data/` nor a file `${XDG_CACHE}/autoencoders/data/ILSVRC2012_{split}/.ready` exists. Remove them if you want to force running the dataset preparation again.
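To check in advance whether the preparation step will run, the rule above can be written as a small check. This sketch follows the description literally: it reads an environment variable named `XDG_CACHE` and falls back to `~/.cache`. The variable that the data-loading code actually reads may differ, so treat the lookup and the helper names (`imagenet_split_root`, `preparation_will_run`) as assumptions.

```python
import os
from pathlib import Path


def imagenet_split_root(split, cache_dir=None):
    """Return ${XDG_CACHE}/autoencoders/data/ILSVRC2012_{split}.

    Assumption: the cache root comes from XDG_CACHE with a ~/.cache fallback,
    mirroring this README; the real code may read a different variable.
    """
    if split not in ("train", "validation"):
        raise ValueError(f"split must be 'train' or 'validation', got {split!r}")
    root = cache_dir or os.environ.get("XDG_CACHE", os.path.expanduser("~/.cache"))
    return Path(root) / "autoencoders" / "data" / f"ILSVRC2012_{split}"


def preparation_will_run(split, cache_dir=None):
    """Preparation only runs if neither data/ nor .ready exists for the split."""
    root = imagenet_split_root(split, cache_dir)
    return not ((root / "data").exists() or (root / ".ready").exists())
```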

Logs and checkpoints for trained models are saved to logs/<START_DATE_AND_TIME>_<config_spec>.

Configs for training a KL-regularized autoencoder on ImageNet are provided at configs/autoencoder.

Training can be started by running

CUDA_VISIBLE_DEVICES=<GPU_ID> python main.py --base configs/autoencoder/<config_spec>.yaml -t --gpus 0,

where config_spec is one of {autoencoder_kl_8x8x64 (f=32, d=64), autoencoder_kl_16x16x16 (f=16, d=16), autoencoder_kl_32x32x4 (f=8, d=4), autoencoder_kl_64x64x3 (f=4, d=3)}.
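The config names encode the latent shape. The sketch below assumes 256x256 training crops (an assumption; check the size fields in the configs). Under that assumption, a downsampling factor f maps each side to 256/f, and d sets the number of latent channels.

```python
def latent_shape(image_size, f, d):
    """Latent (height, width, channels) for a square image_size x image_size input."""
    if image_size % f != 0:
        raise ValueError(f"image_size {image_size} is not divisible by f={f}")
    side = image_size // f
    return side, side, d


# (f, d) pairs from the list above; the 256x256 input size is an assumption.
CONFIGS = {
    "autoencoder_kl_8x8x64": (32, 64),
    "autoencoder_kl_16x16x16": (16, 16),
    "autoencoder_kl_32x32x4": (8, 4),
    "autoencoder_kl_64x64x3": (4, 3),
}

for name, (f, d) in CONFIGS.items():
    h, w, c = latent_shape(256, f, d)
    ratio = (256 * 256 * 3) / (h * w * c)  # RGB values per latent value
    print(f"{name}: latent {h}x{w}x{c}, {ratio:.0f}x fewer values than RGB")
```

The f=8, 16 and 32 variants each store 4096 values per image, 48x fewer than 256x256x3 RGB. They trade spatial resolution for channels. The f=4, d=3 variant keeps 12288 values, 16x fewer.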

For training VQ-regularized models, see the taming-transformers repository.

In configs/latent-diffusion/ we provide configs for training LDMs on the LSUN-, CelebA-HQ, FFHQ and ImageNet datasets.

Training can be started by running

CUDA_VISIBLE_DEVICES=<GPU_ID> python main.py --base configs/latent-diffusion/<config_spec>.yaml -t --gpus 0,

where `<config_spec>` is one of {celebahq-ldm-vq-4 (f=4, VQ-reg. autoencoder, spatial size 64x64x3), ffhq-ldm-vq-4 (f=4, VQ-reg. autoencoder, spatial size 64x64x3), lsun_bedrooms-ldm-vq-4 (f=4, VQ-reg. autoencoder, spatial size 64x64x3), lsun_churches-ldm-vq-4 (f=8, KL-reg. autoencoder, spatial size 32x32x4), cin-ldm-vq-8 (f=8, VQ-reg. autoencoder, spatial size 32x32x4)}.

We also provide a script for sampling from unconditional LDMs (e.g. LSUN, FFHQ, ...). Start it via

CUDA_VISIBLE_DEVICES=<GPU_ID> python scripts/sample_diffusion.py -r pre_trained_models/ldm/<model_spec>/model.ckpt -l <logdir> -n <\#samples> --batch_size <batch_size> -c <\#ddim steps> -e <\#eta>
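`-c` sets the number of DDIM steps, i.e. how many of the diffusion model's training timesteps the sampler actually visits. The following is a minimal sketch of uniformly spaced DDIM timesteps, assuming 1000 training timesteps. The repo's own scheduling helper may space or offset the steps slightly differently, and `uniform_ddim_timesteps` is a hypothetical name.

```python
def uniform_ddim_timesteps(num_train_steps=1000, num_ddim_steps=200):
    """Evenly spaced subset of the training timesteps, in increasing order."""
    if not 0 < num_ddim_steps <= num_train_steps:
        raise ValueError("need 0 < num_ddim_steps <= num_train_steps")
    # i * T // S yields exactly S distinct, increasing timesteps in [0, T)
    return [i * num_train_steps // num_ddim_steps for i in range(num_ddim_steps)]


# The sampler walks the list from the noisiest timestep down to 0
sampling_order = list(reversed(uniform_ddim_timesteps(1000, 200)))
```

Fewer steps means a larger stride between visited timesteps, which makes sampling faster but coarser.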

CUDA_VISIBLE_DEVICES=<GPU_ID> python main.py --base configs/latent-diffusion/<config_spec>.yaml -t --gpus 0,

class2img.py

Available via a notebook.

python scripts/generate_llama_mask/gen_mask_dataset.py --config scripts/generate_llama_mask/data_gen_configs/random_medium_512.yaml --indir latent-diffusion/data/ --outdir /opt/data/private/latent-diffusion/data/x_inpaint --ext jpg

python scripts/generate_llama_mask/generate_csv.py --llama_masked_outdir /opt/data/private/latent-diffusion/data/INPAINTING/captain_inpaint/ --csv_out_path data/INPAINTING/x.csv

python main.py --base configs/latent-diffusion/inpainting_example_overfit.yaml -t True --gpus 0,1, -x xxx

Download the pre-trained weights

wget -O models/ldm/inpainting_big/last.ckpt https://heibox.uni-heidelberg.de/f/4d9ac7ea40c64582b7c9/?dl=1

and sample with

python scripts/inpaint.py --indir data/inpainting_examples/ --outdir outputs/inpainting_results

`indir` should contain images `*.png` and masks `<image_fname>_mask.png` like the examples provided in `data/inpainting_examples`.
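The expected layout pairs each mask with an image of the same base name. The sketch below implements that pairing rule and can be used to check a folder before running `scripts/inpaint.py`. `find_inpainting_pairs` is a hypothetical helper, not the script's own code.

```python
from pathlib import Path


def find_inpainting_pairs(indir):
    """Pair every <name>_mask.png in indir with its image <name>.png."""
    pairs = []
    for mask in sorted(Path(indir).glob("*_mask.png")):
        # Strip the "_mask.png" suffix to recover the image file name
        image = mask.with_name(mask.name[: -len("_mask.png")] + ".png")
        if not image.is_file():
            raise FileNotFoundError(f"mask {mask.name} has no matching image {image.name}")
        pairs.append((image, mask))
    return pairs
```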

https://colab.research.google.com/drive/1xqzUi2iXQXDqXBHQGP9Mqt2YrYW6cx-J?usp=sharing

CUDA_VISIBLE_DEVICES=<GPU_ID> python main.py --base configs/latent-diffusion/<config_spec>.yaml -t --gpus 0,

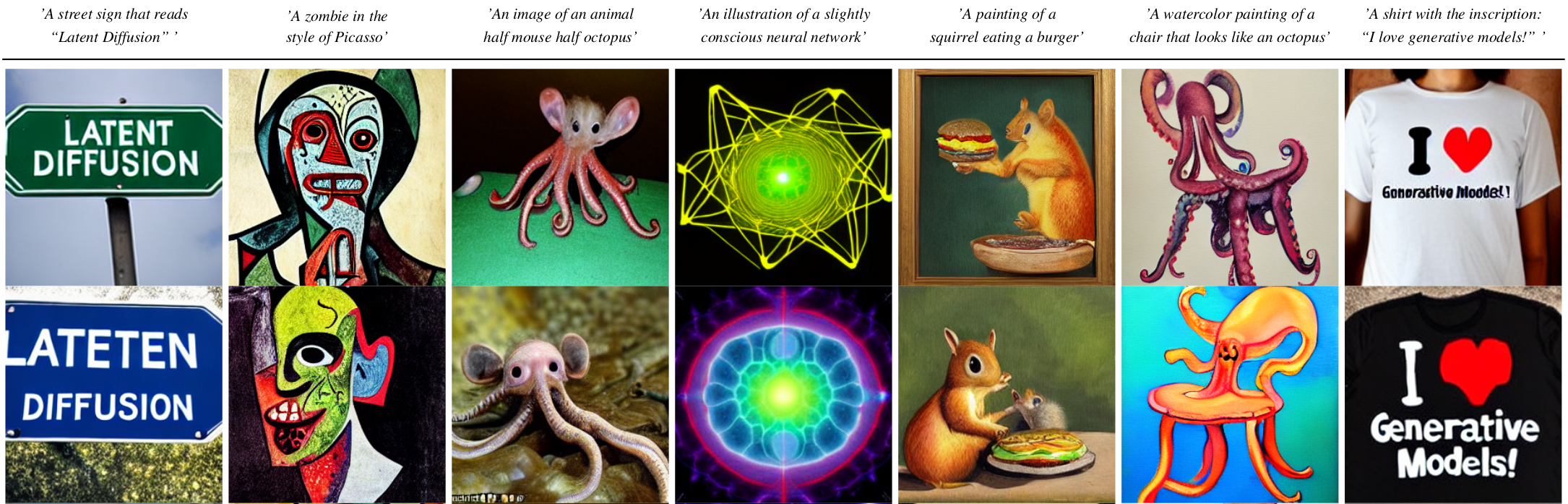

Download the pre-trained weights (5.7GB)

mkdir -p models/ldm/text2img-large/

wget -O models/ldm/text2img-large/model.ckpt https://ommer-lab.com/files/latent-diffusion/nitro/txt2img-f8-large/model.ckpt

and sample with

python scripts/txt2img.py --prompt "a virus monster is playing guitar, oil on canvas" --ddim_eta 0.0 --n_samples 4 --n_iter 4 --scale 5.0 --ddim_steps 50

This will save each sample individually as well as a grid of size n_iter x n_samples at the specified output location (default: outputs/txt2img-samples).

Quality, sampling speed and diversity are best controlled via the scale, ddim_steps and ddim_eta arguments.

As a rule of thumb, higher values of scale produce better samples at the cost of a reduced output diversity.

Furthermore, increasing ddim_steps generally also gives higher quality samples, but returns are diminishing for values > 250.

Fast sampling (i.e. low values of ddim_steps) while retaining good quality can be achieved by using --ddim_eta 0.0.

Faster sampling (i.e. even lower values of ddim_steps) while retaining good quality can be achieved by using --ddim_eta 0.0 and --plms (see Pseudo Numerical Methods for Diffusion Models on Manifolds).
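The standard formulas make the effect of `--scale` and `--ddim_eta` concrete. The sketch below uses plain Python lists in place of tensors:

- Classifier-free guidance extrapolates from the unconditional noise prediction towards the conditional one.
- The per-step DDIM noise level is the DDIM-paper sigma scaled by eta, so eta = 0 gives deterministic sampling.

The function names are illustrative, not the repo's API.

```python
import math


def classifier_free_guidance(eps_uncond, eps_cond, scale):
    """eps = eps_uncond + scale * (eps_cond - eps_uncond), elementwise."""
    if len(eps_uncond) != len(eps_cond):
        raise ValueError("predictions must have the same length")
    return [u + scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]


def ddim_sigma(alpha_bar_t, alpha_bar_prev, eta):
    """Std. dev. of the fresh noise added in one DDIM step (0 when eta = 0)."""
    if not 0.0 < alpha_bar_t < alpha_bar_prev <= 1.0:
        raise ValueError("expected 0 < alpha_bar_t < alpha_bar_prev <= 1")
    variance = (1.0 - alpha_bar_prev) / (1.0 - alpha_bar_t) * (1.0 - alpha_bar_t / alpha_bar_prev)
    return eta * math.sqrt(variance)
```

With `scale=1.0` the guided prediction equals the conditional one. Larger values push further away from the unconditional prediction, which matches the quality/diversity trade-off described above.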

For certain inputs, simply running the model in a convolutional fashion on larger features than it was trained on can sometimes produce interesting results. To try it out, tune the H and W arguments (which will be integer-divided by 8 in order to calculate the corresponding latent size), e.g. run

python scripts/txt2img.py --prompt "a sunset behind a mountain range, vector image" --ddim_eta 1.0 --n_samples 1 --n_iter 1 --H 384 --W 1024 --scale 5.0

to create a sample of size 384x1024. Note, however, that controllability is reduced compared to the 256x256 setting.

The example below was generated using the above command.
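Because H and W are integer-divided by 8, sizes that are not multiples of 8 are silently rounded down. The sketch below assumes a 4-channel latent and a downsampling factor of 8; both are assumptions for illustration, and the helper names are hypothetical.

```python
def txt2img_latent_shape(height, width, channels=4, factor=8):
    """Latent shape (C, H // 8, W // 8) used for sampling."""
    return channels, height // factor, width // factor


def decoded_size(height, width, factor=8):
    """Pixel size after decoding; any remainder below a multiple of 8 is lost."""
    _, h, w = txt2img_latent_shape(height, width, factor=factor)
    return h * factor, w * factor
```

For example, `--H 384 --W 1024` gives a 4x48x128 latent. A request for 500x500 would decode to 496x496.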

COCO format

CUDA_VISIBLE_DEVICES=<GPU_ID> python main.py --base configs/latent-diffusion/<config_spec>.yaml -t --gpus 0,

python layout2img.py

CUDA_VISIBLE_DEVICES=<GPU_ID> python main.py --base configs/latent-diffusion/<config_spec>.yaml -t --gpus 0,

python mask2img.py

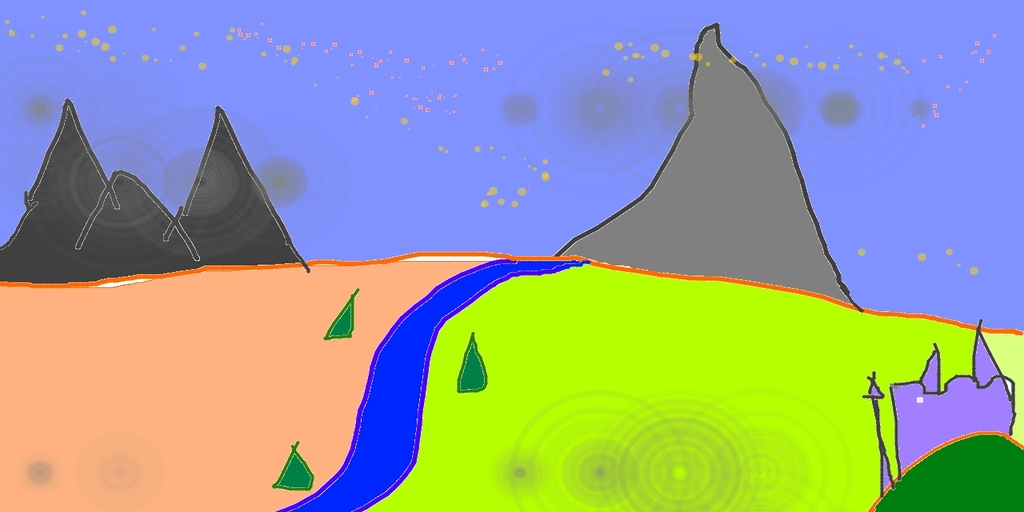

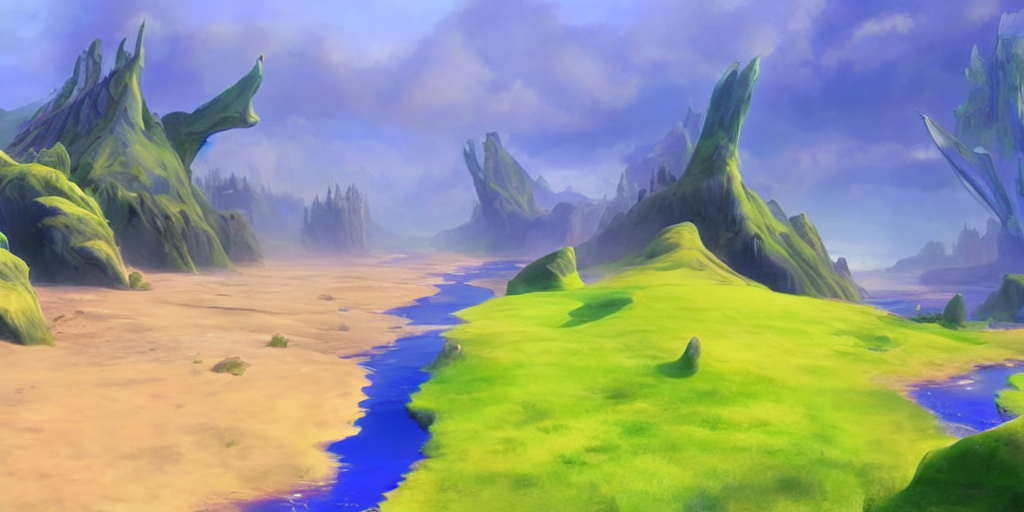

By using a diffusion-denoising mechanism as first proposed by SDEdit, the model can be used for different tasks such as text-guided image-to-image translation and upscaling. Similar to the txt2img sampling script, we provide a script to perform image modification with Stable Diffusion.

The following describes an example where a rough sketch made in Pinta is converted into a detailed artwork.

python scripts/img2img.py --prompt "A fantasy landscape, trending on artstation" --init-img <path-to-img.jpg> --strength 0.8

Here, strength is a value between 0.0 and 1.0 that controls the amount of noise added to the input image. Values that approach 1.0 allow for lots of variations but will also produce images that are not semantically consistent with the input. See the following example.
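The following sketch shows how strength is commonly turned into a noise level in SDEdit-style image-to-image sampling. It assumes that strength selects `int(strength * ddim_steps)` of the DDIM steps, with 50 steps by default here (also an assumption). The input latent is diffused forward to that step and then denoised from there. `img2img_schedule` and `add_noise` are hypothetical names.

```python
import math
import random


def img2img_schedule(strength, ddim_steps=50):
    """Number of DDIM steps the input is noised by (and later denoised over)."""
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be in [0.0, 1.0]")
    return int(strength * ddim_steps)


def add_noise(x0, alpha_bar, rng):
    """Forward diffusion x_t = sqrt(a) * x0 + sqrt(1 - a) * eps on a flat list."""
    if not 0.0 <= alpha_bar <= 1.0:
        raise ValueError("alpha_bar must be in [0.0, 1.0]")
    a, b = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    return [a * x + b * rng.gauss(0.0, 1.0) for x in x0]
```

With `--strength 0.8` and 50 steps, 40 steps are noised and then denoised, so little of the input's detail constrains the result. A smaller strength keeps the output closer to the input.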

Input

Outputs

This procedure can, for example, also be used to upscale samples from the base model.

- Inference code and model weights to run our retrieval-augmented diffusion models are now available. See this section.

- Thanks to Katherine Crowson, classifier-free guidance received a ~2x speedup and the PLMS sampler is available. See also this PR.

- Our 1.45B latent diffusion LAION model was integrated into Huggingface Spaces 🤗 using Gradio. Try out the Web Demo:

- More pre-trained LDMs are available:

  - A 1.45B model trained on the LAION-400M database.

  - A class-conditional model on ImageNet, achieving a FID of 3.6 when using classifier-free guidance. Available via a colab notebook.

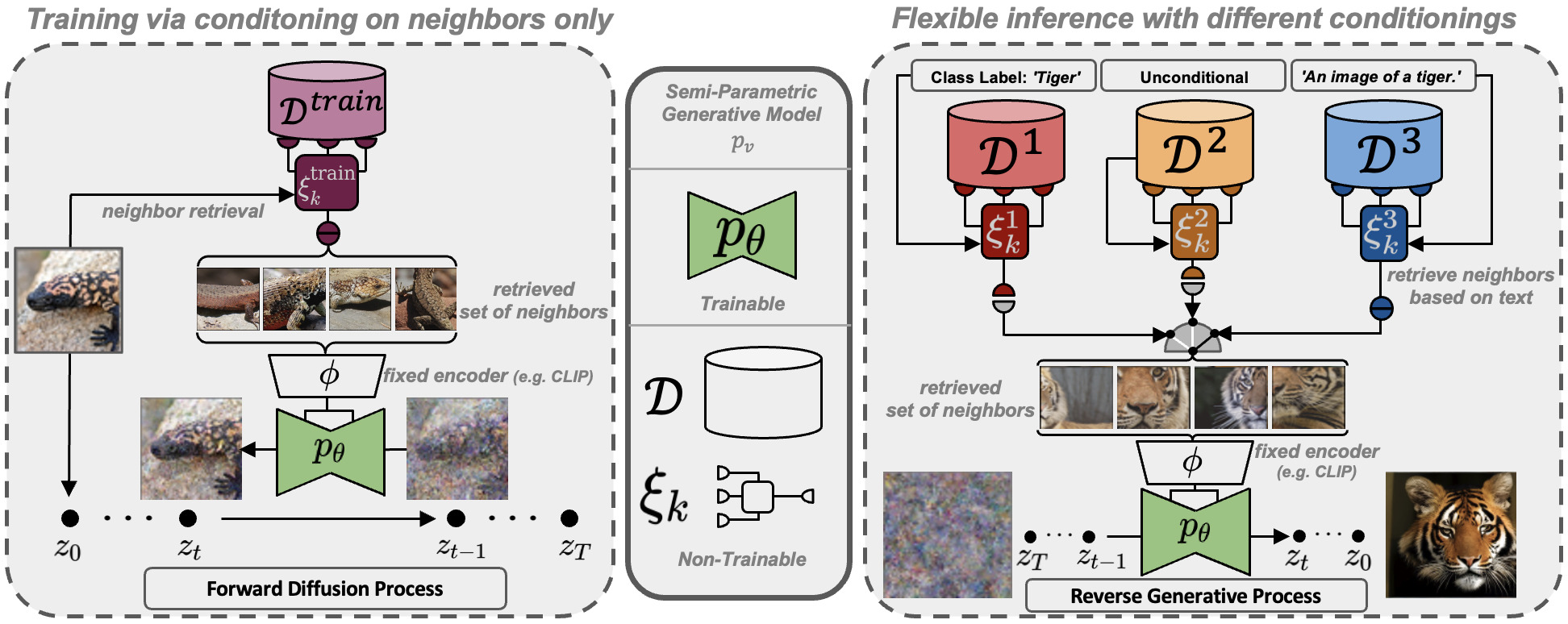

We include inference code to run our retrieval-augmented diffusion models (RDMs) as described in https://arxiv.org/abs/2204.11824.


To get started, install the additionally required Python packages into your ldm environment:

pip install transformers==4.19.2 scann kornia==0.6.4 torchmetrics==0.6.0

pip install git+https://github.com/arogozhnikov/einops.git

and download the trained weights (preliminary checkpoints):

mkdir -p pre_trained_models/rdm/rdm768x768/

wget -O pre_trained_models/rdm/rdm768x768/model.ckpt https://ommer-lab.com/files/rdm/model.ckpt

As these models are conditioned on a set of CLIP image embeddings, our RDMs support different inference modes, which are described in the following.

Since CLIP offers a shared image/text feature space, and RDMs learn to cover a neighborhood of a given example during training, we can directly take a CLIP text embedding of a given prompt and condition on it. Run this mode via

python scripts/knn2img.py --prompt "a happy bear reading a newspaper, oil on canvas"

To run an RDM conditioned on a text prompt and, additionally, on images retrieved for this prompt, you will also need to download the corresponding retrieval database. We provide two distinct databases extracted from the OpenImages and ArtBench datasets. Interchanging the databases results in different capabilities of the model as visualized below, although the learned weights are the same in both cases.

Download the retrieval-databases which contain the retrieval-datasets (Openimages (~11GB) and ArtBench (~82MB)) compressed into CLIP image embeddings:

mkdir -p data/rdm/retrieval_databases

wget -O data/rdm/retrieval_databases/artbench.zip https://ommer-lab.com/files/rdm/artbench_databases.zip

wget -O data/rdm/retrieval_databases/openimages.zip https://ommer-lab.com/files/rdm/openimages_database.zip

unzip data/rdm/retrieval_databases/artbench.zip -d data/rdm/retrieval_databases/

unzip data/rdm/retrieval_databases/openimages.zip -d data/rdm/retrieval_databases/

We also provide trained ScaNN search indices for ArtBench. Download and extract them via

mkdir -p data/rdm/searchers

wget -O data/rdm/searchers/artbench.zip https://ommer-lab.com/files/rdm/artbench_searchers.zip

unzip data/rdm/searchers/artbench.zip -d data/rdm/searchers

Since the index for OpenImages is large (~21 GB), we provide a script to create and save it for use during sampling. Note, however, that sampling with the OpenImages database will not be possible without this index. Run the script via

python scripts/train_searcher.py

Retrieval-based text-guided sampling with visual nearest neighbors can be started via

python scripts/knn2img.py --prompt "a happy pineapple" --use_neighbors --knn <number_of_neighbors>

Note that the maximum supported number of neighbors is 20.
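Conceptually, neighbor retrieval ranks the database's CLIP embeddings by similarity to the query embedding and keeps the top k. The sketch below does this by brute force with cosine similarity. The repo uses ScaNN indices for this step, which are approximate and far faster, so the sketch only illustrates the idea. `cosine_knn` is a hypothetical name.

```python
import math

MAX_NEIGHBORS = 20  # limit stated above


def _normalize(v):
    norm = math.sqrt(sum(x * x for x in v))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in v]


def cosine_knn(query, database, k):
    """Indices of the k database embeddings most similar to query (exact search)."""
    if not 1 <= k <= MAX_NEIGHBORS:
        raise ValueError(f"k must be between 1 and {MAX_NEIGHBORS}")
    q = _normalize(query)
    scores = [sum(a * b for a, b in zip(q, _normalize(e))) for e in database]
    # Highest cosine similarity first
    order = sorted(range(len(database)), key=lambda i: scores[i], reverse=True)
    return order[:k]
```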

The database can be changed via the cmd parameter --database which can be [openimages, artbench-art_nouveau, artbench-baroque, artbench-expressionism, artbench-impressionism, artbench-post_impressionism, artbench-realism, artbench-renaissance, artbench-romanticism, artbench-surrealism, artbench-ukiyo_e].

To use --database openimages, the script above (scripts/train_searcher.py) must be run first.

Due to their relatively small size, the ArtBench databases are best suited for creating more abstract concepts and do not work well for detailed text control.

- better models

- more resolutions

- image-to-image retrieval

All models were trained until convergence (no further substantial improvement in rFID).

| Model | rFID vs val | train steps | PSNR | PSIM | Link | Comments |
|---|---|---|---|---|---|---|
| f=4, VQ (Z=8192, d=3) | 0.58 | 533066 | 27.43 +/- 4.26 | 0.53 +/- 0.21 | https://ommer-lab.com/files/latent-diffusion/vq-f4.zip | |
| f=4, VQ (Z=8192, d=3) | 1.06 | 658131 | 25.21 +/- 4.17 | 0.72 +/- 0.26 | https://heibox.uni-heidelberg.de/f/9c6681f64bb94338a069/?dl=1 | no attention |
| f=8, VQ (Z=16384, d=4) | 1.14 | 971043 | 23.07 +/- 3.99 | 1.17 +/- 0.36 | https://ommer-lab.com/files/latent-diffusion/vq-f8.zip | |
| f=8, VQ (Z=256, d=4) | 1.49 | 1608649 | 22.35 +/- 3.81 | 1.26 +/- 0.37 | https://ommer-lab.com/files/latent-diffusion/vq-f8-n256.zip | |
| f=16, VQ (Z=16384, d=8) | 5.15 | 1101166 | 20.83 +/- 3.61 | 1.73 +/- 0.43 | https://heibox.uni-heidelberg.de/f/0e42b04e2e904890a9b6/?dl=1 | |
| f=4, KL | 0.27 | 176991 | 27.53 +/- 4.54 | 0.55 +/- 0.24 | https://ommer-lab.com/files/latent-diffusion/kl-f4.zip | |
| f=8, KL | 0.90 | 246803 | 24.19 +/- 4.19 | 1.02 +/- 0.35 | https://ommer-lab.com/files/latent-diffusion/kl-f8.zip | |
| f=16, KL (d=16) | 0.87 | 442998 | 24.08 +/- 4.22 | 1.07 +/- 0.36 | https://ommer-lab.com/files/latent-diffusion/kl-f16.zip | |
| f=32, KL (d=64) | 2.04 | 406763 | 22.27 +/- 3.93 | 1.41 +/- 0.40 | https://ommer-lab.com/files/latent-diffusion/kl-f32.zip | |

Running the following script downloads and extracts all available pretrained autoencoding models.

bash scripts/download_first_stages.sh

The first stage models can then be found in `models/first_stage_models/<model_spec>`.

| Dataset | Task | Model | FID | IS | Prec | Recall | Link | Comments |
|---|---|---|---|---|---|---|---|---|
| CelebA-HQ | Unconditional Image Synthesis | LDM-VQ-4 (200 DDIM steps, eta=0) | 5.11 (5.11) | 3.29 | 0.72 | 0.49 | https://ommer-lab.com/files/latent-diffusion/celeba.zip | |
| FFHQ | Unconditional Image Synthesis | LDM-VQ-4 (200 DDIM steps, eta=1) | 4.98 (4.98) | 4.50 (4.50) | 0.73 | 0.50 | https://ommer-lab.com/files/latent-diffusion/ffhq.zip | |
| LSUN-Churches | Unconditional Image Synthesis | LDM-KL-8 (400 DDIM steps, eta=0) | 4.02 (4.02) | 2.72 | 0.64 | 0.52 | https://ommer-lab.com/files/latent-diffusion/lsun_churches.zip | |
| LSUN-Bedrooms | Unconditional Image Synthesis | LDM-VQ-4 (200 DDIM steps, eta=1) | 2.95 (3.0) | 2.22 (2.23) | 0.66 | 0.48 | https://ommer-lab.com/files/latent-diffusion/lsun_bedrooms.zip | |
| ImageNet | Class-conditional Image Synthesis | LDM-VQ-8 (200 DDIM steps, eta=1) | 7.77(7.76)* /15.82** | 201.56(209.52)* /78.82** | 0.84* / 0.65** | 0.35* / 0.63** | https://ommer-lab.com/files/latent-diffusion/cin.zip | *: w/ guiding, classifier_scale 10 **: w/o guiding, scores in bracket calculated with script provided by ADM |
| Conceptual Captions | Text-conditional Image Synthesis | LDM-VQ-f4 (100 DDIM steps, eta=0) | 16.79 | 13.89 | N/A | N/A | https://ommer-lab.com/files/latent-diffusion/text2img.zip | finetuned from LAION |
| OpenImages | Super-resolution | LDM-VQ-4 | N/A | N/A | N/A | N/A | https://ommer-lab.com/files/latent-diffusion/sr_bsr.zip | BSR image degradation |
| OpenImages | Layout-to-Image Synthesis | LDM-VQ-4 (200 DDIM steps, eta=0) | 32.02 | 15.92 | N/A | N/A | https://ommer-lab.com/files/latent-diffusion/layout2img_model.zip | |
| Landscapes | Semantic Image Synthesis | LDM-VQ-4 | N/A | N/A | N/A | N/A | https://ommer-lab.com/files/latent-diffusion/semantic_synthesis256.zip | |
| Landscapes | Semantic Image Synthesis | LDM-VQ-4 | N/A | N/A | N/A | N/A | https://ommer-lab.com/files/latent-diffusion/semantic_synthesis.zip | finetuned on resolution 512x512 |

The LDMs listed above can jointly be downloaded and extracted via

bash scripts/download_models.sh

The models can then be found in `models/ldm/<model_spec>`.

- In the meantime, you can play with our colab notebook https://colab.research.google.com/drive/1xqzUi2iXQXDqXBHQGP9Mqt2YrYW6cx-J?usp=sharing

- My codebase for the diffusion models builds heavily on OpenAI's ADM codebase and https://github.com/lucidrains/denoising-diffusion-pytorch. Thanks for open-sourcing!

- The implementation of the transformer encoder is from x-transformers by lucidrains.

```
@misc{rombach2021highresolution,
  title={High-Resolution Image Synthesis with Latent Diffusion Models},
  author={Robin Rombach and Andreas Blattmann and Dominik Lorenz and Patrick Esser and Björn Ommer},
  year={2021},
  eprint={2112.10752},
  archivePrefix={arXiv},
  primaryClass={cs.CV}
}

@misc{https://doi.org/10.48550/arxiv.2204.11824,
  doi = {10.48550/ARXIV.2204.11824},
  url = {https://arxiv.org/abs/2204.11824},
  author = {Blattmann, Andreas and Rombach, Robin and Oktay, Kaan and Ommer, Björn},
  keywords = {Computer Vision and Pattern Recognition (cs.CV), FOS: Computer and information sciences, FOS: Computer and information sciences},
  title = {Retrieval-Augmented Diffusion Models},
  publisher = {arXiv},
  year = {2022},
  copyright = {arXiv.org perpetual, non-exclusive license}
}
```