Attention mask?

pcuenca opened this issue · 1 comments

Like in Stable Diffusion, no attention mask appears to be used for input tokens:

open-muse/muse/pipeline_muse.py

Lines 93 to 101 in 2a03657

But according to third-party analysis, omitting the mask appears to have been a mistake all along. Do we have any insight into whether attention masks would improve prompt-image alignment?
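For anyone less familiar with the distinction, here is a minimal pure-Python sketch of what the mask changes. It is not the open-muse code: `PAD_ID`, `attention_mask`, and `softmax_weights` are hypothetical names, the token ids are made up, and the attention scores are set to zero just to make the effect easy to see. It also assumes the prompt has at least one non-PAD token.

```python
import math

PAD_ID = 0  # hypothetical padding token id, chosen for illustration


def attention_mask(token_ids, pad_id=PAD_ID):
    """Return 1 for real tokens and 0 for padding positions."""
    return [0 if t == pad_id else 1 for t in token_ids]


def softmax_weights(scores, mask=None):
    """Softmax over key positions; masked-out positions get zero weight."""
    if mask is not None:
        # Setting a score to -inf makes exp(score) exactly 0.0.
        scores = [s if m else float("-inf") for s, m in zip(scores, mask)]
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# A prompt with 3 real tokens padded to length 6 (ids are made up).
token_ids = [101, 7, 42, PAD_ID, PAD_ID, PAD_ID]
scores = [0.0] * len(token_ids)  # uniform scores, for illustration only

mask = attention_mask(token_ids)
unmasked = softmax_weights(scores)      # PAD positions share the attention
masked = softmax_weights(scores, mask)  # PAD positions receive none
```

With uniform scores, half of the unmasked attention weight lands on PAD positions, while the masked variant spreads all of it over the three real tokens.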

These authors reckon it's better to train on unmasked text embeddings (even though that risks learning from PAD token embeddings):

huggingface/diffusers#1890 (comment)

As for inference: the user needs to be able to match whichever approach was used during training, masked or unmasked.
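One way to make that matching hard to get wrong is to store the choice alongside the checkpoint and build the text-encoder inputs from it in both training and inference. A rough sketch, where `TextConditioningConfig` and `encoder_inputs` are hypothetical names and not part of open-muse or diffusers:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TextConditioningConfig:
    """Hypothetical settings that would be saved with a checkpoint."""
    use_attention_mask: bool
    pad_token_id: int = 0  # assumed padding id, for illustration


def encoder_inputs(token_ids, config):
    """Build text-encoder inputs the same way for training and inference."""
    inputs = {"input_ids": list(token_ids)}
    if config.use_attention_mask:
        # 1 for real tokens, 0 for padding.
        inputs["attention_mask"] = [
            int(t != config.pad_token_id) for t in token_ids
        ]
    return inputs


# A model trained without masking keeps doing so at inference time.
trained_without_mask = TextConditioningConfig(use_attention_mask=False)
trained_with_mask = TextConditioningConfig(use_attention_mask=True)

prompt_ids = [5, 6, 0, 0]  # made-up ids, padded with 0
unmasked_inputs = encoder_inputs(prompt_ids, trained_without_mask)
masked_inputs = encoder_inputs(prompt_ids, trained_with_mask)
```

The point is only that the flag lives in one place, so the pipeline cannot silently disagree with the training script.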

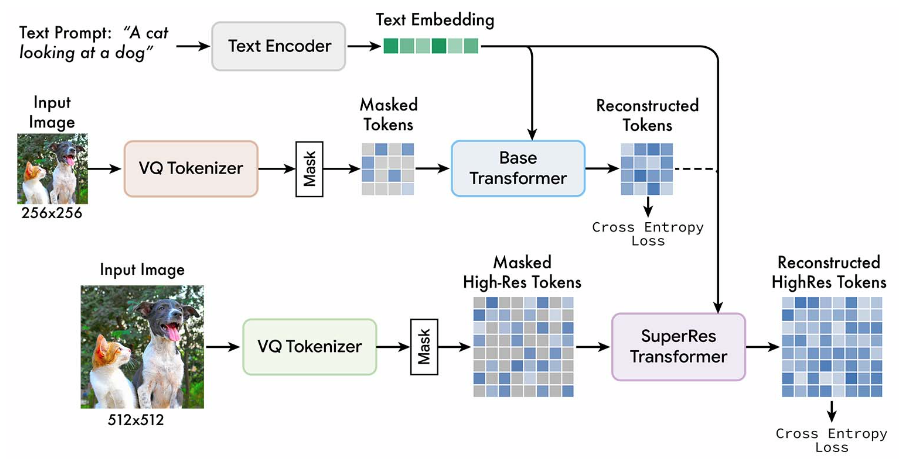

I thought Muse was a bit wackier, though. It actually masks vision tokens:

https://github.com/lucidrains/muse-maskgit-pytorch/
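To be clear about what "masks vision tokens" means here: that is a different kind of masking from a text attention mask. A random subset of the image token ids is replaced with a special mask id, and the model is trained to predict the originals. Below is a minimal sketch of that idea only. It is not taken from the linked repository: `MASK_ID`, `mask_image_tokens`, and the fixed `ratio` are assumptions for illustration (in practice the ratio would be sampled per example).

```python
import random

MASK_ID = -1  # hypothetical id standing in for a [MASK] image token


def mask_image_tokens(tokens, ratio, rng, mask_id=MASK_ID):
    """Replace a random fraction of image tokens with mask_id.

    Returns the masked sequence and the sorted masked positions,
    which are the positions a model would be asked to predict.
    """
    # Mask at least one token and never more than the sequence length.
    n_mask = min(len(tokens), max(1, round(ratio * len(tokens))))
    positions = set(rng.sample(range(len(tokens)), n_mask))
    masked = [mask_id if i in positions else t for i, t in enumerate(tokens)]
    return masked, sorted(positions)


rng = random.Random(0)  # seeded so the sketch is reproducible
image_tokens = [11, 12, 13, 14, 15, 16, 17, 18]  # made-up codebook ids
masked_tokens, masked_positions = mask_image_tokens(image_tokens, 0.5, rng)
```

Text padding masks tell attention which prompt positions to ignore. Vision token masking defines the prediction targets, so the two are independent choices.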