This repo contains PyTorch model definitions, pre-trained weights, and inference/sampling code for our paper exploring fast training of diffusion models with transformers. You can find more visualizations on our project page.

PixArt-α Community: Join our PixArt-α Discord channels for discussions. Coders are welcome to contribute.

PixArt-α: Fast Training of Diffusion Transformer for Photorealistic Text-to-Image Synthesis

Junsong Chen*, Jincheng Yu*, Chongjian Ge*, Lewei Yao*, Enze Xie†, Yue Wu, Zhongdao Wang, James Kwok, Ping Luo, Huchuan Lu, Zhenguo Li

Huawei Noah’s Ark Lab, Dalian University of Technology, HKU, HKUST

- (🔥 New) Nov. 30, 2023. 💥 PixArt collaborates with the LCM team to build the fastest training & inference text-to-image generation system. The training code, inference code, weights, and demo are all released; we hope you enjoy them. Refer to the docs for more details. We have also updated the codebase for a better user experience and fixed some bugs in the newest version.

- ✅ Nov. 27, 2023. 💥 PixArt-α Community: Join our PixArt-α Discord channels for discussions. Coders are welcome to contribute.

- ✅ Nov. 21, 2023. 💥 SA-Solver official code first released.

- ✅ Nov. 19, 2023. Release PixArt + DreamBooth training scripts.

- ✅ Nov. 16, 2023. Diffusers now supports random-resolution and batch-image generation. Besides, running PixArt under 8GB of GPU VRAM is now available in 🧨 diffusers.

- ✅ Nov. 10, 2023. Support DALL-E 3 Consistency Decoder in 🧨 diffusers.

- ✅ Nov. 06, 2023. Release pretrained weights with 🧨 diffusers integration, Hugging Face demo, and Google Colab example.

- ✅ Nov. 03, 2023. Release the LLaVA-captioning inference code.

- ✅ Oct. 27, 2023. Release the training & feature extraction code.

- ✅ Oct. 20, 2023. Collaborate with the Hugging Face & Diffusers teams to co-release the code and weights. (Please stay tuned.)

- ✅ Oct. 15, 2023. Release the inference code.

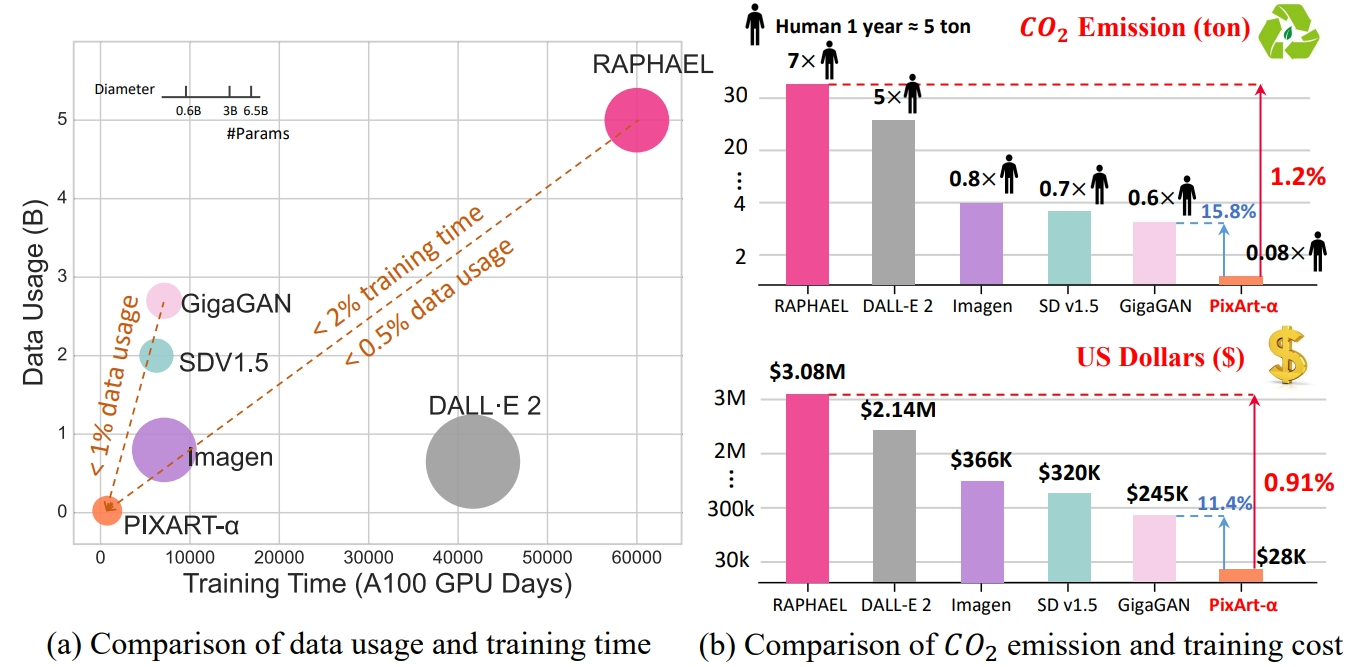

TL;DR: PixArt-α is a Transformer-based T2I diffusion model whose image generation quality is competitive with state-of-the-art image generators (e.g., Imagen, SDXL, and even Midjourney), and its training speed markedly surpasses existing large-scale T2I models; e.g., PixArt-α takes only 10.8% of Stable Diffusion v1.5's training time (675 vs. 6,250 A100 GPU days).


The most advanced text-to-image (T2I) models require significant training costs (e.g., millions of GPU hours), seriously hindering fundamental innovation in the AIGC community while increasing CO2 emissions. This paper introduces PixArt-α, a Transformer-based T2I diffusion model whose image generation quality is competitive with state-of-the-art image generators (e.g., Imagen, SDXL, and even Midjourney), reaching near-commercial application standards. Additionally, it supports high-resolution image synthesis up to 1024px resolution with low training cost. To achieve this goal, three core designs are proposed: (1) Training strategy decomposition: we devise three distinct training steps that separately optimize pixel dependency, text-image alignment, and image aesthetic quality; (2) Efficient T2I Transformer: we incorporate cross-attention modules into Diffusion Transformer (DiT) to inject text conditions and streamline the computation-intensive class-condition branch; (3) High-informative data: we emphasize the significance of concept density in text-image pairs and leverage a large Vision-Language model to auto-label dense pseudo-captions to assist text-image alignment learning. As a result, PixArt-α's training speed markedly surpasses existing large-scale T2I models; e.g., PixArt-α takes only 10.8% of Stable Diffusion v1.5's training time (675 vs. 6,250 A100 GPU days), saving nearly $300,000 ($26,000 vs. $320,000) and reducing CO2 emissions by 90%. Moreover, compared with a larger SOTA model, RAPHAEL, our training cost is merely 1%. Extensive experiments demonstrate that PixArt-α excels in image quality, artistry, and semantic control. We hope PixArt-α will provide new insights to the AIGC community and startups to accelerate building their own high-quality yet low-cost generative models from scratch.

| Method | Type | #Params | #Images | FID-30K ↓ | A100 GPU days |
|---|---|---|---|---|---|
| DALL·E | Diff | 12.0B | 250M | 27.50 | |
| GLIDE | Diff | 5.0B | 250M | 12.24 | |
| LDM | Diff | 1.4B | 400M | 12.64 | |
| DALL·E 2 | Diff | 6.5B | 650M | 10.39 | 41,66 |
| SDv1.5 | Diff | 0.9B | 2000M | 9.62 | 6,250 |
| GigaGAN | GAN | 0.9B | 2700M | 9.09 | 4,783 |
| Imagen | Diff | 3.0B | 860M | 7.27 | 7,132 |
| RAPHAEL | Diff | 3.0B | 5000M+ | 6.61 | 60,000 |
| PixArt-α | Diff | 0.6B | 25M | 7.32 (zero-shot) | 753 |
| PixArt-α | Diff | 0.6B | 25M | 5.51 (COCO FT) | 753 |
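The A100 GPU-days column can be turned into relative training cost with a simple ratio. This is a small illustrative sketch (the names `BASELINE_GPU_DAYS`, `PIXART_GPU_DAYS` and `relative_cost` are ours, not from the repo). The table lists 753 GPU-days for PixArt-α, while the TL;DR quotes 675; plugging either into the same ratio gives 12.0% or 10.8% of SDv1.5's 6,250 GPU-days.

```python
# A100 GPU-days copied from the comparison table above. Rows whose entry is
# empty or incomplete (DALL·E, GLIDE, LDM, DALL·E 2) are left out.
BASELINE_GPU_DAYS = {
    "SDv1.5": 6_250,
    "GigaGAN": 4_783,
    "Imagen": 7_132,
    "RAPHAEL": 60_000,
}
PIXART_GPU_DAYS = 753  # PixArt-α row of the table


def relative_cost(ours: float, baseline: float) -> float:
    """Training cost of `ours` as a percentage of `baseline`."""
    return 100.0 * ours / baseline


if __name__ == "__main__":
    for name, days in BASELINE_GPU_DAYS.items():
        pct = relative_cost(PIXART_GPU_DAYS, days)
        print(f"PixArt-α needs {pct:.1f}% of {name}'s A100 GPU-days")
```

Against RAPHAEL the ratio comes out a little above 1%, in line with the "merely 1%" stated in the abstract.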

- More samples

- PixArt + Dreambooth

- PixArt + ControlNet

- Python >= 3.9 (we recommend Anaconda or Miniconda)

- PyTorch >= 1.13.0+cu11.7
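To confirm an environment meets these minimums before installing the rest, a small check like the one below can help. It is a sketch, not part of the repo: the helper names are hypothetical, it reads the installed `torch` version through `importlib.metadata` (so PyTorch does not need to be importable), and it uses Python 3.9 as the minimum to match the `conda create -n pixart python==3.9.0` command.

```python
import sys
from importlib import metadata
from typing import Tuple

# Minimum versions: PyTorch from the requirement list above; Python 3.9 matches
# the `conda create -n pixart python==3.9.0` command (adjust if needed).
MIN_PYTHON = "3.9"
MIN_TORCH = "1.13.0"


def version_tuple(version: str) -> Tuple[int, ...]:
    """Parse '2.0.0+cu117' into (2, 0, 0) and '2.1.0rc1' into (2, 1, 0)."""
    public = version.split("+", 1)[0]  # drop local build tags such as +cu117
    parts = []
    for piece in public.split("."):
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break  # stop at pre-release markers like 'rc1' or 'a0'
            digits += ch
        if not digits:
            break
        parts.append(int(digits))
    return tuple(parts)


def meets_minimum(installed: str, minimum: str) -> bool:
    """Compare versions component-wise, padding the shorter one with zeros."""
    a, b = version_tuple(installed), version_tuple(minimum)
    width = max(len(a), len(b))
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a >= b


if __name__ == "__main__":
    py = ".".join(str(n) for n in sys.version_info[:3])
    print(f"Python {py}: {'OK' if meets_minimum(py, MIN_PYTHON) else 'too old'}")
    try:
        torch_version = metadata.version("torch")
    except metadata.PackageNotFoundError:
        print("PyTorch: not installed")
    else:
        status = "OK" if meets_minimum(torch_version, MIN_TORCH) else "too old"
        print(f"PyTorch {torch_version}: {status}")
```

The hand-rolled parser only handles plain `X.Y.Z` versions with optional suffixes; for anything more exotic, `packaging.version.Version` is the more robust choice.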

conda create -n pixart python==3.9.0

conda activate pixart

cd path/to/pixart

pip install torch==2.0.0+cu117 torchvision==0.15.1+cu117 torchaudio==2.0.1 --index-url https://download.pytorch.org/whl/cu117

pip install -r requirements.txt

All models will be downloaded automatically. You can also download them manually from the links in the table below.

| Model | #Params | URL | Download in OpenXLab |
|---|---|---|---|
| T5 | 4.3B | T5 | T5 |
| VAE | 80M | VAE | VAE |
| PixArt-α-SAM-256 | 0.6B | 256 | 256 |
| PixArt-α-512 | 0.6B | 512 or diffusers version | 512 |
| PixArt-α-1024 | 0.6B | 1024 or diffusers version | 1024 |

You can also find all models in OpenXLab_PixArt-alpha.

Here we take the SAM dataset training config as an example, but you can also prepare your own dataset following the same method.

You ONLY need to change the config file in config and the dataloader in dataset.

python -m torch.distributed.launch --nproc_per_node=2 --master_port=12345 train_scripts/train.py configs/pixart_config/PixArt_xl2_img256_SAM.py --work-dir output/train_SAM_256

The directory structure for the SAM dataset is:

cd ./data

SA1B

├──images/ (images are saved here)

│ ├──sa_xxxxx.jpg

│ ├──sa_xxxxx.jpg

│ ├──......

├──captions/ (corresponding captions are saved here, same name as images)

│ ├──sa_xxxxx.txt

│ ├──sa_xxxxx.txt

├──partition/ (all image names are stored in txt files, one image name per line)

│ ├──part0.txt

│ ├──part1.txt

│ ├──......

├──caption_feature_wmask/ (run tools/extract_caption_feature.py to generate caption T5 features, same name as images except .npz extension)

│ ├──sa_xxxxx.npz

│ ├──sa_xxxxx.npz

│ ├──......

├──img_vae_feature/ (run tools/extract_img_vae_feature.py to generate image VAE features, same name as images except .npy extension)

│ ├──train_vae_256/

│ │ ├──noflip/

│ │ │ ├──sa_xxxxx.npy

│ │ │ ├──sa_xxxxx.npy

│ │ │ ├──......
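Before launching training, it can save time to check that every image listed in a partition file has its caption and pre-extracted features. The function below is a hypothetical helper, not part of the repo; it assumes the SA1B layout shown above (images as `.jpg`, captions as `.txt`, T5 features as `.npz`, VAE features as `.npy`, all sharing the image's base name) and the `train_vae_256/noflip` subfolder.

```python
from pathlib import Path
from typing import Dict, List


def find_incomplete_samples(
    root,
    partition: str = "part0.txt",
    vae_subdir: str = "train_vae_256/noflip",
    check_features: bool = True,
) -> Dict[str, List[str]]:
    """Return {image_stem: [missing relative paths]} for one partition file.

    Assumes the SA1B layout: images/*.jpg, captions/*.txt,
    caption_feature_wmask/*.npz and img_vae_feature/<vae_subdir>/*.npy,
    all sharing the image's base name.
    """
    root = Path(root)
    lines = (root / "partition" / partition).read_text().splitlines()
    names = [line.strip() for line in lines if line.strip()]

    missing: Dict[str, List[str]] = {}
    for name in names:
        stem = Path(name).stem  # works whether or not the line carries ".jpg"
        expected = [
            root / "images" / f"{stem}.jpg",
            root / "captions" / f"{stem}.txt",
        ]
        if check_features:  # feature folders exist only after running the extraction tools
            expected += [
                root / "caption_feature_wmask" / f"{stem}.npz",
                root / "img_vae_feature" / vae_subdir / f"{stem}.npy",
            ]
        absent = [p.relative_to(root).as_posix() for p in expected if not p.exists()]
        if absent:
            missing[stem] = absent
    return missing
```

For example, `find_incomplete_samples("data/SA1B", check_features=False)` checks only images and captions, which is useful before the feature-extraction step has been run.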

Here we provide data_toy for a better understanding:

cd ./data

git lfs install

git clone https://huggingface.co/datasets/PixArt-alpha/data_toy

Then, here is an example of the partition/part0.txt file.
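When preparing your own dataset, partition files can be generated from the `images/` folder. The sketch below is hypothetical (not a repo tool): it writes `partition/part{k}.txt` with one image file name per line, and it assumes names are stored with their extension (e.g. `sa_xxxxx.jpg`); compare against the toy data and adjust if your dataloader expects bare names.

```python
from pathlib import Path
from typing import List

IMAGE_SUFFIXES = (".jpg", ".jpeg", ".png")


def write_partitions(root, num_parts: int = 1) -> List[Path]:
    """Split the images under <root>/images into <root>/partition/part{k}.txt.

    Each output line is one image file name, e.g. "sa_xxxxx.jpg" (assumed format).
    """
    if num_parts < 1:
        raise ValueError("num_parts must be >= 1")
    root = Path(root)
    names = sorted(
        p.name for p in (root / "images").iterdir()
        if p.is_file() and p.suffix.lower() in IMAGE_SUFFIXES
    )
    out_dir = root / "partition"
    out_dir.mkdir(parents=True, exist_ok=True)

    written = []
    for k in range(num_parts):
        chunk = names[k::num_parts]  # round-robin split keeps part sizes within one of each other
        path = out_dir / f"part{k}.txt"
        path.write_text("".join(f"{name}\n" for name in chunk))
        written.append(path)
    return written
```

Sorting the names first makes the output deterministic, so re-running the script on the same folder produces identical partition files.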

Besides, for JSON-file-guided training, here is a toy JSON file for better understanding.

Thanks to @kopyl, you can reproduce the full fine-tuning training flow on the Pokemon dataset from Hugging Face with these notebooks:

- Train with notebooks/train.ipynb.

- Convert to Diffusers with notebooks/convert-checkpoint-to-diffusers.ipynb.

- Run inference with the checkpoint converted in step 2 using notebooks/infer.ipynb.

Follow the PixArt + DreamBooth training guidance.

Follow the PixArt + LCM training guidance.

Inference requires at least 23GB of GPU memory with this repo, versus 11GB or 8GB with 🧨 diffusers.
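A back-of-envelope estimate of weight memory helps explain these numbers. This sketch uses only the parameter counts from the model table (T5 4.3B, VAE 80M, PixArt-α 0.6B) and the bytes per parameter of each precision; it ignores activations, attention buffers, the CUDA context and allocator overhead, so actual peak usage is higher. It shows that the T5 text encoder holds most of the weights, which is why offloading modules to the CPU (as `pipe.enable_model_cpu_offload()` in the diffusers example does) lowers peak GPU usage.

```python
# Bytes needed to store one parameter in each precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}

# Parameter counts from the model table: T5 4.3B, VAE 80M, PixArt-α 0.6B.
PARAM_COUNTS = {"T5": 4.3e9, "VAE": 80e6, "PixArt-α": 0.6e9}


def weight_gb(num_params: float, dtype: str = "fp16") -> float:
    """Memory for the weights alone, in decimal GB (1 GB = 1e9 bytes).

    Ignores activations, attention buffers, the CUDA context and allocator
    overhead, so real peak usage is higher.
    """
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


if __name__ == "__main__":
    for dtype in ("fp32", "fp16"):
        per_model = {name: weight_gb(n, dtype) for name, n in PARAM_COUNTS.items()}
        total = sum(per_model.values())
        details = ", ".join(f"{k} {v:.2f} GB" for k, v in per_model.items())
        print(f"{dtype}: {details} (total {total:.2f} GB)")
```

In fp16 the T5 weights alone come to about 8.6 GB, compared with about 1.2 GB for the 0.6B-parameter diffusion transformer.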

Currently supported:

1. Quick start with Gradio

To get started, first install the required dependencies. Make sure you've downloaded the models to the output/pretrained_models folder, and then run on your local machine:

DEMO_PORT=12345 python scripts/app.py

As an alternative, a sample Dockerfile is provided to build a runtime container that starts the Gradio app.

docker build . -t pixart

docker run --gpus all -it -p 12345:12345 -v <path_to_models>:/workspace/output/pretrained_models pixart

Let's have a look at a simple example at http://your-server-ip:12345.

Make sure you have the updated versions of the following libraries:

pip install -U transformers accelerate diffusers

And then:

import torch

from diffusers import PixArtAlphaPipeline, ConsistencyDecoderVAE, AutoencoderKL

# You can replace the checkpoint id with "PixArt-alpha/PixArt-XL-2-512x512" too.

pipe = PixArtAlphaPipeline.from_pretrained("PixArt-alpha/PixArt-XL-2-1024-MS", torch_dtype=torch.float16, use_safetensors=True)

# To use the DALL-E 3 Consistency Decoder, uncomment:

# pipe.vae = ConsistencyDecoderVAE.from_pretrained("openai/consistency-decoder", torch_dtype=torch.float16)

# To use the SA-Solver sampler, uncomment:

# from diffusion.sa_solver_diffusers import SASolverScheduler

# pipe.scheduler = SASolverScheduler.from_config(pipe.scheduler.config, algorithm_type='data_prediction')

# Enable memory optimizations.

pipe.enable_model_cpu_offload()

prompt = "A small cactus with a happy face in the Sahara desert."

image = pipe(prompt).images[0]

Check out the documentation for more information about the SA-Solver sampler.

This integration allows running the pipeline with a batch size of 4 under 11 GB of GPU VRAM. Check out the documentation to learn more.
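When generating many prompts, one way to stay within a given batch size is to feed the pipeline fixed-size chunks. The helper below is a hypothetical sketch: it does not reduce memory by itself, it only caps how many prompts go into each call. It assumes `pipe` behaves like the diffusers pipeline in the example above, accepting `prompt=<list of str>` plus keyword arguments and returning an object with an `.images` list.

```python
from typing import Any, Callable, List, Sequence


def generate_in_batches(
    pipe: Callable[..., Any],
    prompts: Sequence[str],
    batch_size: int = 4,
    **pipe_kwargs: Any,
) -> List[Any]:
    """Call `pipe` on at most `batch_size` prompts at a time and collect the images.

    `pipe` is expected to behave like a diffusers pipeline: it accepts
    `prompt=<list of str>` plus keyword arguments and returns an object with
    an `.images` list.
    """
    if batch_size < 1:
        raise ValueError("batch_size must be >= 1")
    images: List[Any] = []
    for start in range(0, len(prompts), batch_size):
        chunk = list(prompts[start:start + batch_size])
        output = pipe(prompt=chunk, **pipe_kwargs)
        images.extend(output.images)
    return images
```

With the `pipe` built in the diffusers example above, a call could look like `images = generate_in_batches(pipe, prompts, batch_size=4, num_inference_steps=20)`; extra keyword arguments are forwarded unchanged. If you also pass `num_images_per_prompt`, each call produces that many images per prompt, so memory use grows accordingly.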

Running with under 8 GB of GPU VRAM is now supported; please refer to the documentation for more information.

To get started, first install the required dependencies, then run on your local machine:

# diffusers version

DEMO_PORT=12345 python scripts/app.py

Let's have a look at a simple example at http://your-server-ip:12345.

You can also try it for free on Google Colab.

To convert a .pth checkpoint into the diffusers format:

python tools/convert_pixart_alpha_to_diffusers.py --image_size your_img_size --orig_ckpt_path path/to/pth --dump_path path/to/diffusers --only_transformer=True

Thanks to the code base of LLaVA-Lightning-MPT, we can caption the LAION and SAM datasets with the following launch command:

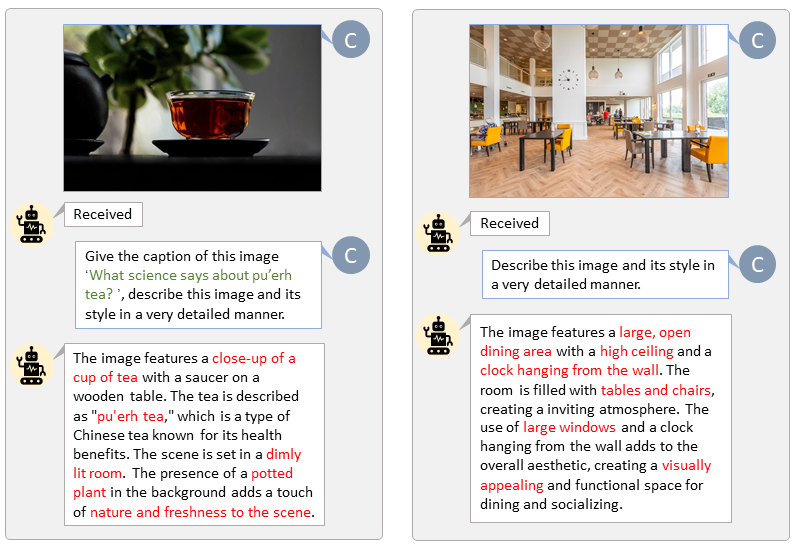

python tools/VLM_caption_lightning.py --output output/dir/ --data-root data/root/path --index path/to/data.json

We present auto-labeling with custom prompts for LAION (left) and SAM (right). The words highlighted in green represent the original caption in LAION, while those marked in red indicate the detailed captions labeled by LLaVA.

Preparing T5 text features and VAE image features in advance speeds up training and saves GPU memory.

python tools/extract_features.py

- Inference code

- Training code

- T5 & VAE feature extraction code

- LLaVA captioning code

- Model zoo

- Diffusers version & Hugging Face demo

- Google Colab example

- DALL-E 3 VAE integration

- Inference under 8GB GPU VRAM with diffusers

- DreamBooth training code

- SA-Solver code

- PixArt-α-LCM will be released soon

- PixArt-α-LCM-LoRA will be released soon

- SAM-LLaVA caption dataset

- ControlNet code

@misc{chen2023pixartalpha,

title={PixArt-$\alpha$: Fast Training of Diffusion Transformer for Photorealistic Text-to-Image Synthesis},

author={Junsong Chen and Jincheng Yu and Chongjian Ge and Lewei Yao and Enze Xie and Yue Wu and Zhongdao Wang and James Kwok and Ping Luo and Huchuan Lu and Zhenguo Li},

year={2023},

eprint={2310.00426},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

- Thanks to Diffusers for their wonderful technical support and awesome collaboration!

- Thanks to Hugging Face for sponsoring the nice demo!

- Thanks to DiT for their wonderful work and codebase!