The official repository of the paper SyncTalk: The Devil is in the Synchronization for Talking Head Synthesis

Paper | Project Page | Code

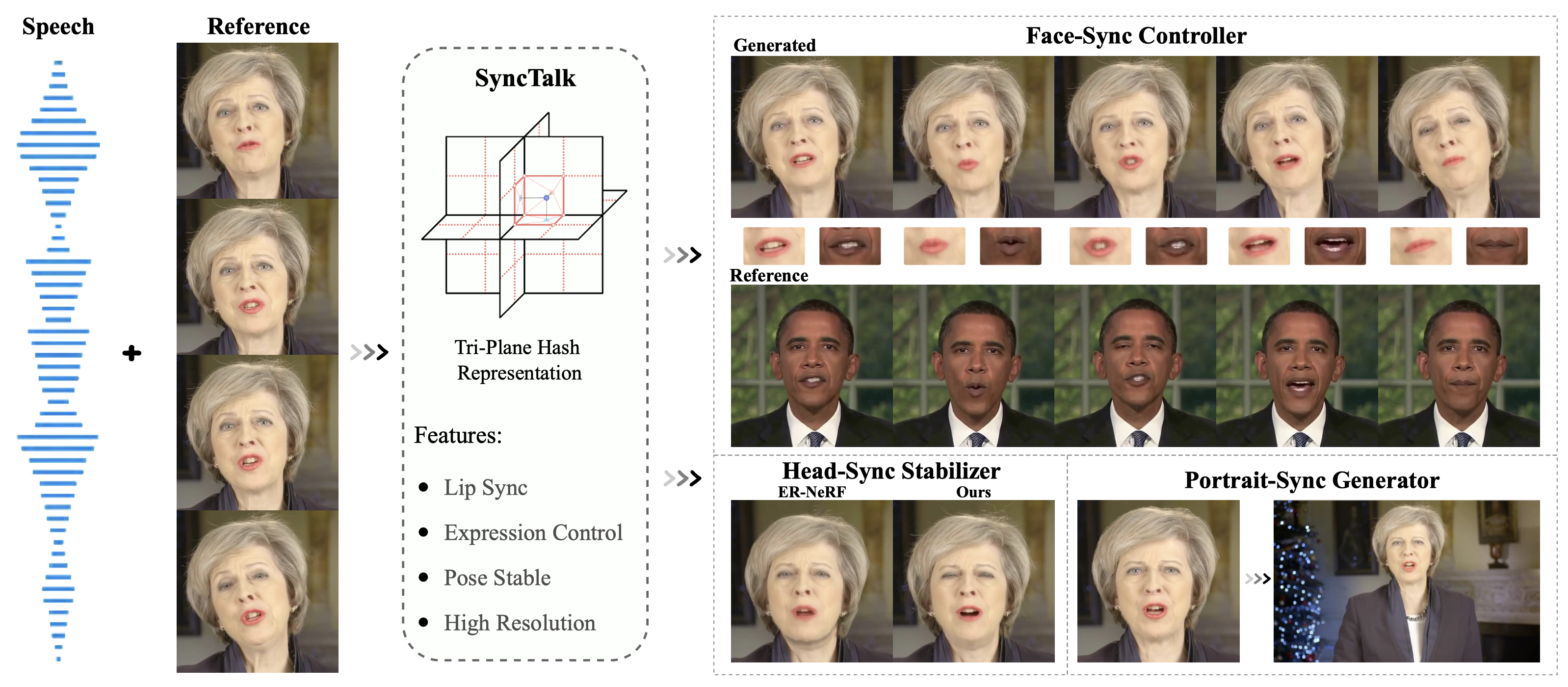

The proposed SyncTalk synthesizes synchronized talking head videos, employing tri-plane hash representations to maintain subject identity. It can generate synchronized lip movements, facial expressions, and stable head poses, and restores hair details to create high-resolution videos.

Tested on Ubuntu 18.04, PyTorch 1.12.1, and CUDA 11.3.
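Once the environment has been set up, a quick check that the installed packages match the tested versions can be sketched as follows. This is a minimal sketch, not part of the repository: the helper names `installed_version` and `check_versions` are hypothetical, and the expected versions are simply copied from the `pip install torch==...` command in this README.

```python
from importlib import metadata

# Versions copied from the install command in this README (not read from the repo).
EXPECTED = {
    "torch": "1.12.1",
    "torchvision": "0.13.1",
    "torchaudio": "0.12.1",
}


def installed_version(package):
    """Return the installed version string of `package`, or None if it is absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def check_versions(expected):
    """Map each package name to a tuple (expected, installed, matches)."""
    report = {}
    for name, want in expected.items():
        have = installed_version(name)
        # Wheels from the cu113 index carry a local suffix such as "+cu113",
        # so only the part before "+" is compared.
        ok = have is not None and have.split("+")[0] == want
        report[name] = (want, have, ok)
    return report


if __name__ == "__main__":
    for name, (want, have, ok) in check_versions(EXPECTED).items():
        status = "OK" if ok else "MISMATCH"
        print(f"{name}: expected {want}, found {have} ({status})")
```

A mismatch does not necessarily mean the code will fail; it only indicates that the environment differs from the one the authors tested.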

git clone https://github.com/ZiqiaoPeng/SyncTalk.git

cd SyncTalk

conda create -n synctalk python==3.8.8

conda activate synctalk

pip install torch==1.12.1+cu113 torchvision==0.13.1+cu113 torchaudio==0.12.1 --extra-index-url https://download.pytorch.org/whl/cu113

pip install -r requirements.txt

pip install --no-index --no-cache-dir pytorch3d -f https://dl.fbaipublicfiles.com/pytorch3d/packaging/wheels/py38_cu113_pyt1121/download.html

pip install ./freqencoder

pip install ./shencoder

pip install ./gridencoder

pip install ./raymarching

If you encounter problems installing PyTorch3D, you can use the following command to install it:

python ./scripts/install_pytorch3d.py

Please place May.zip in the data folder and trial_may.zip in the model folder, then unzip them.
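If a command-line `unzip` is not available, the extraction step can be sketched with Python's standard `zipfile` module. The helper name `unzip_into` is hypothetical, and the sketch assumes each archive contains a single top-level folder (`May` and `trial_may` respectively), so that `data/May` and `model/trial_may` exist afterwards as the commands in this README expect.

```python
import zipfile
from pathlib import Path


def unzip_into(archive, dest):
    """Extract `archive` into `dest` and return the sorted top-level entry names."""
    archive, dest = Path(archive), Path(dest)
    dest.mkdir(parents=True, exist_ok=True)
    with zipfile.ZipFile(archive) as zf:
        zf.extractall(dest)
        # The first path component of each member shows what landed in `dest`.
        top_level = {name.split("/")[0] for name in zf.namelist()}
    return sorted(top_level)


# Usage, run from the repository root after downloading both archives:
#   unzip_into("data/May.zip", "data")
#   unzip_into("model/trial_may.zip", "model")
```

The returned list makes it easy to confirm that the expected folder name appears before running `main.py`.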

python main.py data/May --workspace model/trial_may -O --test --asr_model ave

python main.py data/May --workspace model/trial_may -O --test --asr_model ave --portrait

“ave” refers to our Audio Visual Encoder. “portrait” pastes the generated face back onto the original image, which yields higher quality. If it runs correctly, you will get the following results.

| Setting | PSNR | LPIPS | LMD |
|---|---|---|---|
| SyncTalk (w/o Portrait) | 32.201 | 0.0394 | 2.822 |
| SyncTalk (Portrait) | 37.644 | 0.0117 | 2.825 |

This is for a single subject; the paper reports the average results for multiple subjects.
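For readers unfamiliar with the first metric in the table, PSNR is defined as 10·log10(MAX² / MSE), where MSE is the mean squared pixel error and MAX is the largest possible pixel value. The following is a minimal illustration of that standard formula on flat sequences of 8-bit pixel values. It is not the repository's evaluation code, and the function name `psnr` and the default `max_value=255.0` are assumptions made for the example.

```python
import math


def psnr(reference, generated, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equal-length pixel sequences."""
    if len(reference) != len(generated) or len(reference) == 0:
        raise ValueError("inputs must be non-empty and have the same length")
    # Mean squared error over all pixel values.
    mse = sum((r - g) ** 2 for r, g in zip(reference, generated)) / len(reference)
    if mse == 0:
        # Identical inputs: PSNR is unbounded.
        return math.inf
    return 10.0 * math.log10(max_value ** 2 / mse)
```

Higher PSNR means the generated frame is closer to the reference, which is consistent with the Portrait setting scoring higher in the table.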

python main.py data/May --workspace model/trial_may -O --test --test_train --asr_model ave --portrait --aud ./demo/test.wav

Please use files with the “.wav” extension for inference. The inference results will be saved in “model/trial_may/results/”.
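Before passing a file to `--aud`, it can help to confirm that it really is a readable WAV file rather than another format with a renamed extension. The sketch below uses Python's standard `wave` module, which handles uncompressed PCM WAV only and raises `wave.Error` for anything else. The helper name `describe_wav` is hypothetical, and the sample rate or channel count the model expects is not stated in this README, so the sketch only reports the values.

```python
import wave


def describe_wav(path):
    """Return basic properties of a PCM WAV file; raises wave.Error if unreadable."""
    with wave.open(str(path), "rb") as wf:
        frames = wf.getnframes()
        rate = wf.getframerate()
        return {
            "channels": wf.getnchannels(),
            "sample_width_bytes": wf.getsampwidth(),
            "sample_rate": rate,
            "duration_s": frames / rate,
        }


# Usage, run from the repository root:
#   print(describe_wav("./demo/test.wav"))
```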

# by default, we load data from disk on the fly.

# we can also preload all data to CPU/GPU for faster training, but this is very memory-hungry for large datasets.

# `--preload 0`: load from disk (default, slower).

# `--preload 1`: load to CPU (slightly slower)

# `--preload 2`: load to GPU (fast)

python main.py data/May --workspace model/trial_may -O --iters 60000 --asr_model ave

python main.py data/May --workspace model/trial_may -O --iters 100000 --finetune_lips --patch_size 64 --asr_model ave

# or you can use the script to train

sh ./scripts/train_may.sh

python main.py data/May --workspace model/trial_may -O --test --asr_model ave --portrait

- Release Training Code.

- Release Pre-trained Model.

- Release Google Colab.

- Release Preprocessing Code.

@InProceedings{peng2023synctalk,

title = {SyncTalk: The Devil is in the Synchronization for Talking Head Synthesis},

author = {Ziqiao Peng and Wentao Hu and Yue Shi and Xiangyu Zhu and Xiaomei Zhang and Jun He and Hongyan Liu and Zhaoxin Fan},

booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

month = {June},

year = {2024},

}

This code builds heavily on ER-NeRF, and also draws on RAD-NeRF, GeneFace, DFRF, AD-NeRF, and Deep3DFaceRecon_pytorch.

Thanks for these great projects.