- Minimal overhead - written on Asp.Net Core, on dotnet 8. One of the fastest web-servers in the business.

- Enables advanced Azure APIm scenarios, such as passing both a Subscription Key and a JWT from libraries like PromptFlow that don't support this out of the box.

- PII Stripping logging to Cosmos DB
  - Powered by graemefoster/aicentral.logging.piistripping

- Lightweight out-of-the-box token metrics surfaced through Open Telemetry
  - Does not buffer or block streaming
  - Use for PTU Chargeback scenarios
  - Gain quick insights into who's using what, how much, and how often
  - Standard Open Telemetry format to surface dashboards in your monitoring solution of choice

- Prompt and usage logging to Azure Monitor
  - Works for streaming endpoints as well as non-streaming

- Intelligent Routing
  - Endpoint selector that favours endpoints reporting higher available capacity
  - Random endpoint selector
  - Prioritised endpoint selector with fallback
  - Lowest-latency endpoint selector

- Can proxy asynchronous requests such as Azure OpenAI DALLE2 Image Generation across fleets of servers

- Custom consumer OAuth2 authorisation

- Can mint time-bound and consumer-bound JWTs, making it easy to run events like hackathons without blowing your budget

- Circuit breakers and backoff-retry over downstream AI services

- Local token rate limiting
  - By consumer / by endpoint
  - By number of tokens (including streaming by estimated token count)

- Local request rate limiting
  - By consumer / by endpoint

- Bulkhead support for buffering requests to backend

- Distributed token rate limiting (using Redis)
  - Powered by an extension: graemefoster/aicentral.ratelimiting.distributedredis

- AI Search Vectorization endpoint
  - Powered by an extension: graemefoster/aicentral.azureaisearchvectorizer

- Broadcast messages to DAPR
  - Powered by an extension: graemefoster/AICentral.Dapr.Broadcast
  - Simplifies using any DAPR Pub/Sub component for post-processing LLM requests

- Extensibility model makes it easy to build your own plugins
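To make the capacity-favouring endpoint selector from the list above more concrete, here is a minimal sketch of one way such a selector could work: a weighted random pick, where each endpoint's weight is the spare capacity it last reported. This is an illustration only and not AI Central's implementation; the endpoint names, the capacity figures, and the `pick_endpoint` helper are all hypothetical.

```python
import random


def pick_endpoint(capacity, rng=None):
    """Pick an endpoint name, favouring endpoints with more reported capacity.

    `capacity` maps a (hypothetical) endpoint name to the spare capacity it
    last reported, for example remaining tokens in the current window.
    """
    rng = rng or random.Random()
    names = list(capacity)
    if not names:
        raise ValueError("no endpoints configured")
    # Negative readings are treated as "no capacity".
    weights = [max(capacity[name], 0) for name in names]
    if sum(weights) == 0:
        # Nothing reports spare capacity: fall back to a uniform random pick.
        return rng.choice(names)
    # random.choices draws proportionally to the supplied weights.
    return rng.choices(names, weights=weights, k=1)[0]


# Illustrative figures only: the east endpoint reports nine times the capacity.
reported = {"openai-east": 9000, "openai-west": 1000}
rng = random.Random(0)
picks = [pick_endpoint(reported, rng) for _ in range(1000)]
print(picks.count("openai-east"), picks.count("openai-west"))
```

A weighted pick spreads load in proportion to capacity instead of sending everything to the single best endpoint, which avoids stampeding one backend when its reported capacity is slightly stale.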

To make it easy to get up and running, we are creating QuickStart configurations. Simply pull the docker container, set a few environment variables, and you're away.

| Quickstart | Features |
|---|---|
| APImProxyWithCosmosLogging | Run in front of Azure APIm AI Gateway for easy PromptFlow integration and PII-stripped logging. |

See Configuration for more details.

The Azure OpenAI SDK retries by default. Because AI Central already retries for you, you can turn this off in the client by passing `new Azure.AI.OpenAI.OpenAIClientOptions() { RetryPolicy = new RetryPolicy(0) }` when you create an `OpenAIClient`.

-

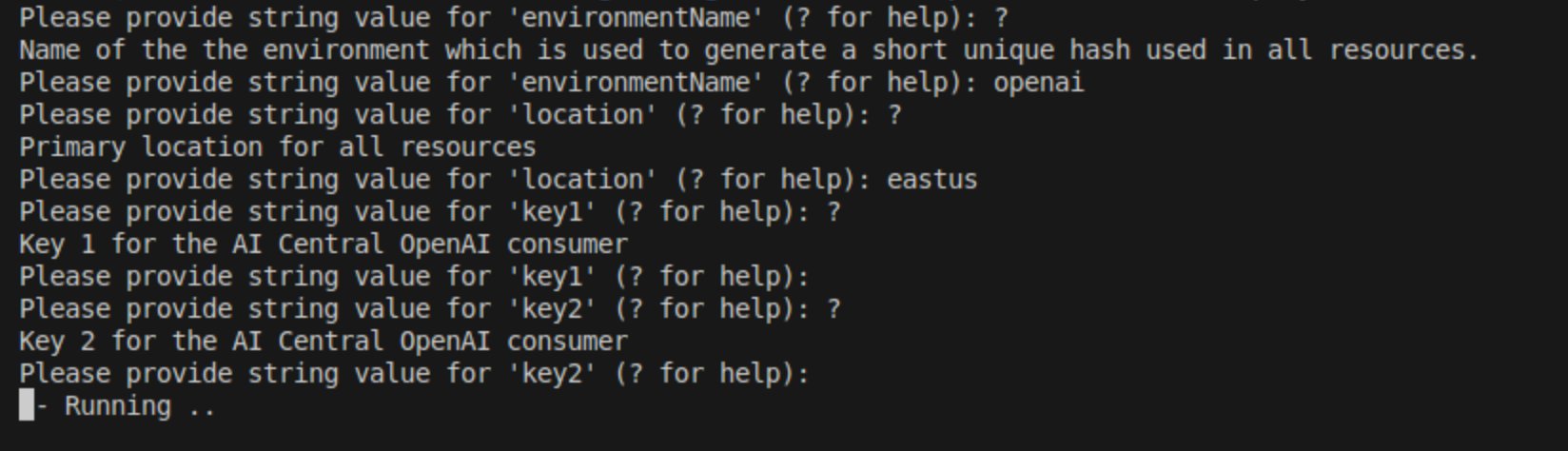

Install Azure CLI if you haven't done so already.

-

Install the Bicep CLI by running the following command in your terminal:

az bicep install- Compile your Bicep file to an ARM template with the following command:

az bicep build --file ./infra/main.bicepThis will create a file named main.json in the same directory as your main.bicep file.

- Deploy the generated ARM template using the Azure CLI. You'll need to login to your Azure account and select the subscription where you want to deploy the resources:

az login

az account set --subscription "your-subscription-id"

az deployment sub create --template-file ./infra/main.json --location "your-location"Replace "your-subscription-id" with your actual Azure subscription ID and "your-location" with the location where you want to deploy the resources (e.g., "westus2").

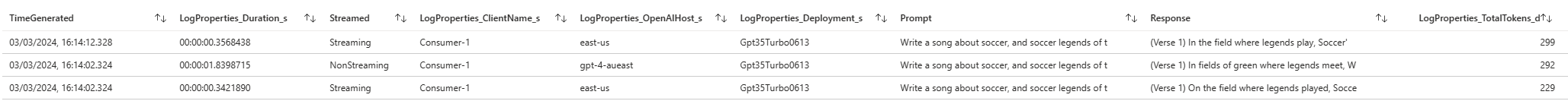

To test the deployment, retrieve the URL of the web app and update the following curl command:

    curl -X POST \
      -H "Content-Type: application/json" \
      -H "api-key: {your-customer-key}" \
      -d '{
        "messages": [
          {"role": "system", "content": "You are a helpful assistant."},
          {"role": "user", "content": "what is .net core"}
        ]
      }' \
      "https://{your-web-url}/openai/deployments/Gpt35Turbo0613/chat/completions?api-version=2024-02-01"

You can also check that everything works by running some code of your choice, for example this code with the OpenAI Python SDK:

    import json

    import httpx
    from openai import AzureOpenAI

    api_key = "<your-customer-key>"


    def event_hook(req: httpx.Request) -> None:
        # Print the outgoing request headers so you can see what reaches the proxy.
        print(json.dumps(dict(req.headers), indent=2))


    client = AzureOpenAI(
        # If you deployed to an Azure Web App, the host is app-[a]-[b].azurewebsites.net
        azure_endpoint="https://app-[a]-[b].azurewebsites.net",
        api_key=api_key,
        api_version="2023-05-15",
        http_client=httpx.Client(event_hooks={"request": [event_hook]}),
    )

    response = client.chat.completions.create(
        model="Gpt35Turbo0613",
        messages=[
            {"role": "system", "content": "You are a helpful assistant."},
            {"role": "user", "content": "What is the first letter of the alphabet?"},
        ],
    )

    print(response)

Note: remember to delete the created resources when you are finished. You can list the deployments in a resource group with:

    az deployment group list --resource-group "your-resource-group-name" --query "[].{Name:name, Timestamp:properties.timestamp, State:properties.provisioningState}" --output table
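If you would rather smoke-test the proxy without installing an SDK, the curl call shown earlier can be reproduced with the Python standard library. The sketch below only builds the request and does not send it; the host `my-proxy.example.com` and the `build_chat_request` helper are placeholders made up for illustration, while the path, headers, and body follow the curl example.

```python
import json
import urllib.request


def build_chat_request(base_url, api_key, deployment, api_version, question):
    """Build (but do not send) a chat-completions request for the proxy."""
    url = (
        f"{base_url.rstrip('/')}/openai/deployments/{deployment}"
        f"/chat/completions?api-version={api_version}"
    )
    body = {
        "messages": [
            {"role": "system", "content": "You are a helpful assistant."},
            {"role": "user", "content": question},
        ]
    }
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "api-key": api_key},
        method="POST",
    )


request = build_chat_request(
    "https://my-proxy.example.com",  # placeholder: use your web app URL
    "<your-customer-key>",           # placeholder: use your consumer key
    "Gpt35Turbo0613",
    "2024-02-01",
    "what is .net core",
)
print(request.get_method(), request.full_url)
# To actually send it: urllib.request.urlopen(request, timeout=30)
```

Keeping request construction separate from sending makes it easy to inspect the URL and headers before any traffic reaches the proxy.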

This sample produces an AI Central proxy that:

- Listens on a hostname of your choosing

- Proxies directly through to a back-end Open AI server

- Can be accessed using standard SDKs

Run the container in Docker, referencing a local configuration file:

    docker run -p 8080:8080 -v .\appsettings.Development.json:/app/appsettings.Development.json -e ASPNETCORE_ENVIRONMENT=Development graemefoster/aicentral:latest

Create a new project and bootstrap the AICentral NuGet package:

    dotnet new web -o MyAICentral
    cd MyAICentral
    dotnet add package AICentral
    #dotnet add package AICentral.Logging.AzureMonitor

Minimal API to configure AI Central:

    var builder = WebApplication.CreateBuilder(args);

    builder.Services.AddAICentral(builder.Configuration);

    // Build the app before registering AI Central on it
    var app = builder.Build();

    app.UseAICentral(
        builder.Configuration,
        // if using the logging extension
        additionalComponentAssemblies: [ typeof(AzureMonitorLoggerFactory).Assembly ]
    );

    app.Run();

Configuration for this sample:

    {
      "AICentral": {
        "Endpoints": [
          {
            "Type": "AzureOpenAIEndpoint",
            "Name": "openai-1",
            "Properties": {
              "LanguageEndpoint": "https://<my-ai>.openai.azure.com",
              "AuthenticationType": "Entra"
            }
          }
        ],
        "AuthProviders": [
          {
            "Type": "Entra",
            "Name": "aad-role-auth",
            "Properties": {
              "Entra": {
                "ClientId": "<my-client-id>",
                "TenantId": "<my-tenant-id>",
                "Instance": "https://login.microsoftonline.com/"
              },
              "Requirements": {
                "Roles": ["required-roles"]
              }
            }
          }
        ],
        "EndpointSelectors": [
          {
            "Type": "SingleEndpoint",
            "Name": "default",
            "Properties": {
              "Endpoint": "openai-1"
            }
          }
        ],
        "Pipelines": [
          {
            "Name": "AzureOpenAIPipeline",
            "Host": "mypipeline.mydomain.com",
            "AuthProvider": "aad-role-auth",
            "EndpointSelector": "default"
          }
        ]
      }
    }
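The configuration wires its parts together by name: pipelines refer to an auth provider and an endpoint selector, and selectors refer to endpoints. A typo in any of those names is easy to make, so a small pre-flight check can help. The following is a minimal sketch, not part of AI Central; `find_dangling_references` is a hypothetical helper, and it only knows the keys that appear in the examples in this document.

```python
import json


def find_dangling_references(config):
    """Return a list of problems where one config entry names a missing one."""
    root = config["AICentral"]
    endpoints = {e["Name"] for e in root.get("Endpoints", [])}
    selectors = {s["Name"] for s in root.get("EndpointSelectors", [])}
    auth_providers = {a["Name"] for a in root.get("AuthProviders", [])}
    steps = {g["Name"] for g in root.get("GenericSteps", [])}
    problems = []

    for selector in root.get("EndpointSelectors", []):
        props = selector.get("Properties", {})
        referenced = []
        if "Endpoint" in props:
            referenced.append(props["Endpoint"])
        referenced += props.get("PriorityEndpoints", [])
        referenced += props.get("FallbackEndpoints", [])
        for name in referenced:
            if name not in endpoints:
                problems.append(
                    f"selector {selector['Name']!r}: unknown endpoint {name!r}"
                )

    for pipeline in root.get("Pipelines", []):
        label = pipeline["Name"]
        if pipeline.get("EndpointSelector") not in selectors:
            problems.append(f"pipeline {label!r}: unknown endpoint selector")
        if "AuthProvider" in pipeline and pipeline["AuthProvider"] not in auth_providers:
            problems.append(f"pipeline {label!r}: unknown auth provider")
        for step in pipeline.get("Steps", []):
            if step not in steps:
                problems.append(f"pipeline {label!r}: unknown step {step!r}")
    return problems


# A trimmed copy of the sample configuration above.
SAMPLE = json.loads("""
{
  "AICentral": {
    "Endpoints": [{"Type": "AzureOpenAIEndpoint", "Name": "openai-1"}],
    "AuthProviders": [{"Type": "Entra", "Name": "aad-role-auth"}],
    "EndpointSelectors": [
      {"Type": "SingleEndpoint", "Name": "default",
       "Properties": {"Endpoint": "openai-1"}}
    ],
    "Pipelines": [
      {"Name": "AzureOpenAIPipeline", "AuthProvider": "aad-role-auth",
       "EndpointSelector": "default"}
    ]
  }
}
""")
print(find_dangling_references(SAMPLE))
```

An empty list means every name resolves. To check a real file, load it with `json.load` and pass the resulting dictionary to the same function.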

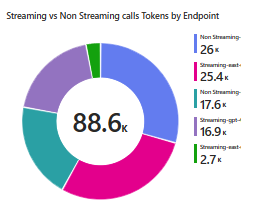

Out of the box AI Central emits Open Telemetry metrics with the following dimensions:

- Consumer

- Endpoint

- Pipeline

- Prompt Tokens

- Response Tokens including streaming

These dimensions let you build insightful dashboards using your monitoring tool of choice.

AI Central also allows fine-grained logging. We ship an extension that logs to Azure Monitor, but it's easy to build your own.

See advanced-otel for dashboard inspiration!
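As an illustration of what these dimensions make possible, the sketch below totals prompt and response tokens per consumer and turns them into a share of overall usage, which is the core of a chargeback report. It assumes the metrics have already been exported into plain records; the record shape, field names, and sample figures are hypothetical and do not reflect AI Central's actual metric names.

```python
from collections import defaultdict


def tokens_by_consumer(records):
    """Sum prompt and response tokens for each consumer."""
    totals = defaultdict(lambda: {"prompt_tokens": 0, "response_tokens": 0})
    for record in records:
        entry = totals[record["consumer"]]
        entry["prompt_tokens"] += record["prompt_tokens"]
        entry["response_tokens"] += record["response_tokens"]
    return dict(totals)


def chargeback_shares(records):
    """Return each consumer's fraction of all tokens used."""
    totals = tokens_by_consumer(records)
    used = {
        consumer: entry["prompt_tokens"] + entry["response_tokens"]
        for consumer, entry in totals.items()
    }
    grand_total = sum(used.values())
    if grand_total == 0:
        return {consumer: 0.0 for consumer in used}
    return {consumer: count / grand_total for consumer, count in used.items()}


# Made-up sample data, one record per proxied call.
records = [
    {"consumer": "team-a", "endpoint": "openai-1", "pipeline": "AzureOpenAIPipeline",
     "prompt_tokens": 120, "response_tokens": 80},
    {"consumer": "team-b", "endpoint": "openai-1", "pipeline": "AzureOpenAIPipeline",
     "prompt_tokens": 30, "response_tokens": 20},
    {"consumer": "team-a", "endpoint": "openai-1", "pipeline": "AzureOpenAIPipeline",
     "prompt_tokens": 200, "response_tokens": 50},
]
print(tokens_by_consumer(records))
print(chargeback_shares(records))
```

In practice the same grouping would usually be done as a query in the monitoring backend; the Python version just shows the arithmetic.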

This pipeline will:

- Present an Azure OpenAI endpoint and an Open AI endpoint downstream as a single upstream endpoint
  - Maps the incoming deployment name "Gpt35Turbo0613" to the downstream Azure OpenAI deployment "MyGptModel"
  - Maps incoming Azure OpenAI deployments to Open AI models

- Present it as an Azure OpenAI style endpoint

- Protect the front-end by requiring an AAD token issued for your own AAD application

- Put a local Asp.Net Core rate-limiting policy over the endpoint

- Add logging to Azure Monitor
  - Logs quota, client caller information, and in this case the prompt but not the response
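The model mapping and priority-with-fallback behaviour described above can be pictured with a short sketch. It is a simplified illustration under stated assumptions, not AI Central's routing code: the `route` function, the `EndpointUnavailable` exception, and the `send` callback are hypothetical, and a real proxy would also apply retries and circuit breaking.

```python
class EndpointUnavailable(Exception):
    """Raised by the (hypothetical) send callback to trigger a fallback."""


def route(deployment, priority, fallback, send):
    """Try priority endpoints first, then fallbacks, mapping the model name.

    Each endpoint is a dict with a "name" and a "model_mappings" dict that
    translates the incoming deployment name to the downstream model name.
    """
    last_error = None
    for endpoint in list(priority) + list(fallback):
        model = endpoint["model_mappings"].get(deployment)
        if model is None:
            continue  # this endpoint does not serve the requested deployment
        try:
            return send(endpoint["name"], model)
        except EndpointUnavailable as error:
            last_error = error  # remember the failure and try the next one
    raise EndpointUnavailable(f"no endpoint could serve {deployment!r}") from last_error


priority = [
    {"name": "openai-priority", "model_mappings": {"Gpt35Turbo0613": "MyGptModel"}},
]
fallback = [
    {"name": "openai-fallback",
     "model_mappings": {"Gpt35Turbo0613": "gpt-3.5-turbo",
                        "Ada002Embedding": "text-embedding-ada-002"}},
]


def fake_send(endpoint_name, model):
    # Simulate the priority endpoint being down so the fallback is used.
    if endpoint_name == "openai-priority":
        raise EndpointUnavailable(endpoint_name)
    return endpoint_name, model


print(route("Gpt35Turbo0613", priority, fallback, fake_send))
```

The caller always asks for "Gpt35Turbo0613"; which downstream model name is used depends only on the endpoint that ends up serving the request.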

Configuration for this pipeline:

    {
      "AICentral": {
        "Endpoints": [
          {
            "Type": "AzureOpenAIEndpoint",
            "Name": "openai-priority",
            "Properties": {
              "LanguageEndpoint": "https://<my-ai>.openai.azure.com",
              "AuthenticationType": "Entra|EntraPassThrough|ApiKey",
              "ModelMappings": {
                "Gpt35Turbo0613": "MyGptModel"
              }
            }
          },
          {
            "Type": "OpenAIEndpoint",
            "Name": "openai-fallback",
            "Properties": {
              "LanguageEndpoint": "https://api.openai.com",
              "ModelMappings": {
                "Gpt35Turbo0613": "gpt-3.5-turbo",
                "Ada002Embedding": "text-embedding-ada-002"
              },
              "ApiKey": "<my-api-key>",
              "Organization": "<optional-organisation>"
            }
          }
        ],
        "AuthProviders": [
          {
            "Type": "Entra",
            "Name": "simple-aad",
            "Properties": {
              "Entra": {
                "ClientId": "<my-client-id>",
                "TenantId": "<my-tenant-id>",
                "Instance": "https://login.microsoftonline.com/",
                "Audience": "<custom-audience>"
              },
              "Requirements": {
                "Roles": ["required-roles"]
              }
            }
          }
        ],
        "EndpointSelectors": [
          {
            "Type": "Prioritised",
            "Name": "my-endpoint-selector",
            "Properties": {
              "PriorityEndpoints": ["openai-priority"],
              "FallbackEndpoints": ["openai-fallback"]
            }
          }
        ],
        "GenericSteps": [
          {
            "Type": "AspNetCoreFixedWindowRateLimiting",
            "Name": "window-rate-limiter",
            "Properties": {
              "LimitType": "PerConsumer|PerAICentralEndpoint",
              "MetricType": "Requests",
              "Options": {
                "Window": "00:00:10",
                "PermitLimit": 100
              }
            }
          },
          {
            "Type": "AzureMonitorLogger",
            "Name": "azure-monitor-logger",
            "Properties": {
              "WorkspaceId": "<workspace-id>",
              "Key": "<key>",
              "LogPrompt": true,
              "LogResponse": false,
              "LogClient": true
            }
          }
        ],
        "Pipelines": [
          {
            "Name": "MyPipeline",
            "Host": "prioritypipeline.mydomain.com",
            "EndpointSelector": "my-endpoint-selector",
            "AuthProvider": "simple-aad",
            "Steps": [
              "window-rate-limiter",
              "azure-monitor-logger"
            ],
            "OpenTelemetryConfig": {
              "AddClientNameTag": true,
              "Transmit": true
            }
          }
        ]
      }
    }
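To show what the "Window" and "PermitLimit" options in the rate-limiting step mean, here is a minimal sketch of a fixed-window limiter keyed per consumer. It is a conceptual illustration and not the ASP.NET Core limiter that AI Central uses; the `FixedWindowLimiter` class and the consumer names are hypothetical, and the real limiter may differ in detail, for example in how windows are started.

```python
import time


class FixedWindowLimiter:
    """Allow at most `permit_limit` requests per key in each fixed window."""

    def __init__(self, permit_limit, window_seconds, clock=time.monotonic):
        self.permit_limit = permit_limit
        self.window_seconds = window_seconds
        self.clock = clock
        self._windows = {}  # key -> (window_start, requests_in_window)

    def try_acquire(self, key):
        now = self.clock()
        start, count = self._windows.get(key, (now, 0))
        if now - start >= self.window_seconds:
            # The previous window has expired: start a fresh one.
            start, count = now, 0
        if count >= self.permit_limit:
            self._windows[key] = (start, count)
            return False  # over the limit; a proxy would typically answer 429
        self._windows[key] = (start, count + 1)
        return True


# Mirrors "Window": "00:00:10" and "PermitLimit": 100 from the configuration.
limiter = FixedWindowLimiter(permit_limit=100, window_seconds=10)
allowed = sum(limiter.try_acquire("consumer-a") for _ in range(150))
print(allowed)
```

Keying the window by consumer corresponds to a per-consumer limit type; using a single shared key would instead limit the endpoint as a whole.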