Hardware-accelerated simultaneous localization and mapping (SLAM) using stereo visual inertial odometry (SVIO).

Learn how to use this package by watching our on-demand webinar: Pinpoint, 250 fps, ROS 2 Localization with vSLAM on Jetson

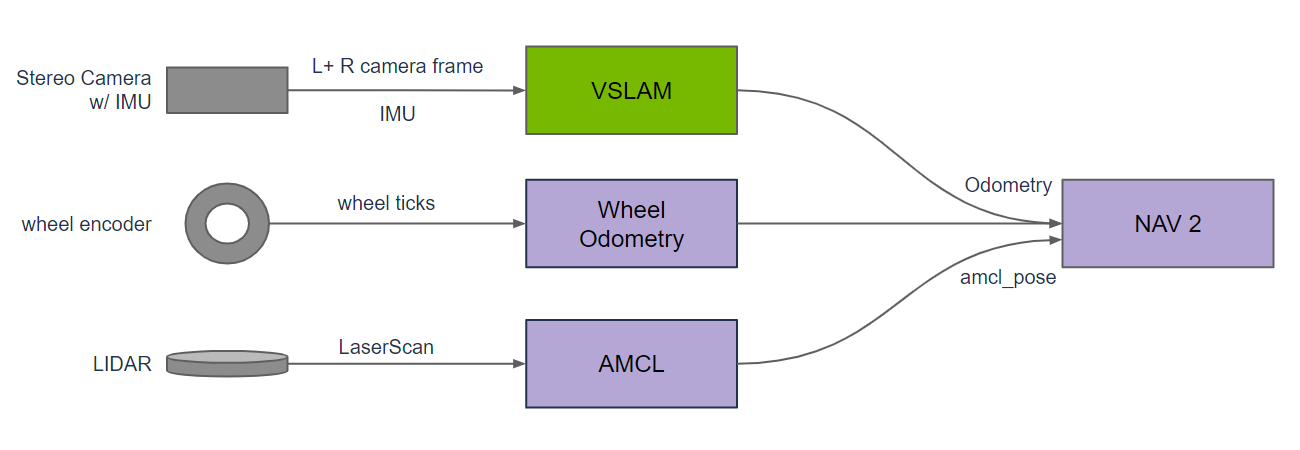

Isaac ROS Visual SLAM provides a high-performance, best-in-class ROS 2 package for VSLAM (visual simultaneous localization and mapping). This package uses a stereo camera with an IMU to estimate odometry as an input to navigation. It is GPU accelerated to provide real-time, low-latency results in a robotics application. VSLAM provides an additional odometry source for ground-based mobile robots and can be the primary odometry source for drones.

VSLAM provides a method for visually estimating the position of a robot relative to its start position, known as VO (visual odometry). This is particularly useful in environments where GPS is not available (such as indoors) or is intermittent (such as urban locations with structures blocking line of sight to GPS satellites). This method is designed to use left and right stereo camera frames and an IMU (inertial measurement unit) as input. It finds matching key points in the left and right images of each input stereo pair; using the baseline between the left and right cameras, it can estimate the distance to each key point. Using two consecutive input stereo image pairs, VSLAM can track the 3D motion of key points between the two pairs to estimate the 3D motion of the camera, which is then used to compute odometry as an output to navigation. Compared to the classic approach to VSLAM, this method uses GPU acceleration to find and match more key points in real time, with fine tuning to minimize overall reprojection error.
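The baseline-to-distance step described above can be illustrated with the standard pinhole relation for a rectified stereo pair, `Z = f * B / d`. The following is a minimal sketch, not the cuVSLAM implementation; the function names, the camera parameters, and the assumption of a rectified pair with matching rows are all hypothetical and chosen for illustration only.

```python
from typing import Tuple


def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth of a matched key point for a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    return focal_px * baseline_m / disparity_px


def triangulate(
    focal_px: float,
    cx: float,
    cy: float,
    baseline_m: float,
    u_left: float,
    v_left: float,
    u_right: float,
) -> Tuple[float, float, float]:
    """Back-project a left/right key point match into the left camera frame.

    Assumes (hypothetically) an ideal pinhole model with principal point
    (cx, cy) and a match that lies on the same image row in both views.
    """
    disparity = u_left - u_right  # horizontal shift between the two views, in pixels
    z = depth_from_disparity(focal_px, baseline_m, disparity)
    x = (u_left - cx) * z / focal_px
    y = (v_left - cy) * z / focal_px
    return (x, y, z)
```

For example, with an assumed focal length of 500 px and a 0.1 m baseline, a key point with a 10 px disparity lies about 5 m away. Because depth is inversely proportional to disparity, distant key points (small disparity) are more sensitive to matching error than nearby ones.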

Key points depend on distinctive features in the left and right camera images that can be repeatedly detected despite changes in size, orientation, perspective, lighting, and image noise. In some instances, the number of key points may be limited or entirely absent; for example, if the camera field of view contains only a large solid-colored wall, no key points may be detected. If there are insufficient key points, this module uses motion measured by the IMU to provide an estimate for odometry. This method, known as VIO (visual-inertial odometry), improves estimation performance when there is a lack of distinctive features in the scene to track motion visually.
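The fallback idea above can be sketched as a simple switch between a visual estimate and IMU dead reckoning. This is only an illustration under stated assumptions: the `MIN_KEYPOINTS` threshold, the function names, and the use of gravity-compensated acceleration already expressed in the world frame are hypothetical, and the sketch does not reflect how cuVSLAM combines visual and inertial data internally.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]

# Hypothetical threshold below which visual tracking is treated as unreliable.
MIN_KEYPOINTS = 20


def select_motion_source(num_keypoints: int, min_keypoints: int = MIN_KEYPOINTS) -> str:
    """Choose the visual estimate when enough key points are tracked, else the IMU."""
    return "visual" if num_keypoints >= min_keypoints else "inertial"


def integrate_imu(position: Vec3, velocity: Vec3, accel: Vec3, dt: float) -> Tuple[Vec3, Vec3]:
    """Propagate position and velocity over dt with constant acceleration.

    Assumes accel is gravity-compensated and expressed in the world frame.
    """
    new_position = tuple(
        p + v * dt + 0.5 * a * dt * dt for p, v, a in zip(position, velocity, accel)
    )
    new_velocity = tuple(v + a * dt for v, a in zip(velocity, accel))
    return new_position, new_velocity
```

Dead reckoning of this kind accumulates drift quickly, which is why it is useful for bridging short gaps in visual tracking rather than as a standalone odometry source.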

SLAM (simultaneous localization and mapping) is built on top of VIO, creating a map of key points that can be used to determine whether an area has been seen before. When VSLAM determines that an area has been seen before, it reduces uncertainty in the map estimate; this is known as loop closure. VSLAM uses a statistical approach to loop closure that is more compute-efficient, providing a real-time solution and improving convergence in loop closure.

There are multiple methods for estimating odometry as an input to navigation. None of these methods is perfect; each has limitations because of systematic flaws in the sensor providing measured observations, such as missing LIDAR returns absorbed by black surfaces, inaccurate wheel ticks when the wheel slips on the ground, or a lack of distinctive features in a scene limiting key points in a camera image. A practical approach to tracking odometry is to use multiple sensors with diverse methods so that systematic issues with one method can be compensated for by another. With three separate estimates of odometry, failures in a single method can be detected, allowing the multiple methods to be fused into a single, higher-quality result. VSLAM provides a vision- and IMU-based solution to estimating odometry that is different from the common practice of using LIDAR and wheel odometry. VSLAM can even be used to improve diversity, with multiple stereo cameras positioned in different directions to provide multiple, concurrent visual estimates of odometry.
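To make the three-estimate argument concrete, the sketch below flags the source that disagrees with the median and averages the rest. It is a simplified illustration, not a method provided by this package: the function name, the scalar displacement inputs, and the tolerance value are assumptions, and a production system would more likely fuse full poses with a filter that accounts for covariance.

```python
from statistics import median
from typing import Dict, List, Tuple


def fuse_odometry(estimates: Dict[str, float], tolerance: float) -> Tuple[float, List[str]]:
    """Fuse per-source displacement estimates (meters) over the same time interval.

    A source is flagged as an outlier when it differs from the median of all
    sources by more than `tolerance`. The fused value is the mean of the rest.
    """
    if not estimates:
        raise ValueError("at least one estimate is required")
    center = median(estimates.values())
    outliers = sorted(
        name for name, value in estimates.items() if abs(value - center) > tolerance
    )
    inliers = [value for name, value in estimates.items() if name not in outliers]
    if not inliers:
        # Possible with an even number of sources; fall back to the median.
        return center, outliers
    return sum(inliers) / len(inliers), outliers


# Hypothetical readings: the wheel estimate is inflated by slip.
fused, rejected = fuse_odometry({"vslam": 1.00, "lidar": 1.02, "wheel": 1.60}, tolerance=0.1)
```

With only two sources a disagreement can be detected but not attributed; a third independent source is what allows the faulty one to be identified by majority.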

To learn more about VSLAM, refer to the cuVSLAM SLAM documentation.

VSLAM is a best-in-class package with the lowest translation and rotational error as measured on KITTI Visual Odometry / SLAM Evaluation 2012 for real-time applications.

| Method | Runtime | Translation | Rotation | Platform |
|---|---|---|---|---|
| VSLAM | 0.007 s | 0.94% | 0.0019 deg/m | Jetson AGX Xavier aarch64 |
| ORB-SLAM2 | 0.06 s | 1.15% | 0.0027 deg/m | 2 cores @ >3.5 GHz x86_64 |

In addition to results from standard benchmarks, we test loop closure for VSLAM on sequences of over 1000 meters, with coverage for indoor and outdoor scenes.

| Sample Graph | Input Size | AGX Orin | Orin NX | Orin Nano 8GB | x86_64 w/ RTX 4060 Ti |
|---|---|---|---|---|---|
| Visual SLAM Node | 720p | 228 fps, 40 ms | 127 fps, 74 ms | 113 fps, 65 ms | 456 fps, 37 ms |

Please visit the Isaac ROS Documentation to learn how to use this repository.

Update 2023-10-18: Improved stability.