Why does the receptive field formula differ from the calculation?

Alexander-Serov opened this issue · 6 comments

Describe the bug

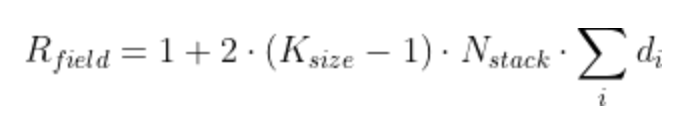

The README provides the following receptive field calculation formula:

https://user-images.githubusercontent.com/12395799/106308730-6d4c5180-6261-11eb-82e9-a12a1958058d.png

where one sees (k[n]-1) under the sum.

However, the current code (v3.3.1) uses k[n] in the expression (line 248 in 455a035).

Is this a bug, or am I missing something?

After all, the two versions of the summed term differ by a factor of k/(k-1), which does matter for applications. For kernel_size = 3 (the default), that is a factor of 1.5.
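To make the size of the discrepancy concrete, here is a minimal sketch comparing the two variants of the summed term. The helper name `summed_term` and the weighting of each kernel term by its dilation are assumptions for illustration, not taken from the library code.

```python
def summed_term(kernel_sizes, dilations, subtract_one):
    """Sum over layers of (k[n] - 1) * d[n] or k[n] * d[n].

    Hypothetical helper: the dilation weighting is an assumption
    made for this illustration.
    """
    offset = 1 if subtract_one else 0
    return sum((k - offset) * d for k, d in zip(kernel_sizes, dilations))


dilations = [1, 2, 4, 8]
kernel_sizes = [3] * len(dilations)  # kernel_size = 3 in every layer

readme_term = summed_term(kernel_sizes, dilations, subtract_one=True)
code_term = summed_term(kernel_sizes, dilations, subtract_one=False)

# With a uniform kernel size k, the ratio reduces to k / (k - 1).
ratio = code_term / readme_term
print(readme_term, code_term, ratio)
```

With a uniform kernel size, the dilations cancel out of the ratio, so the factor depends only on k.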

Btw, there seems to be a square bracket out of place in the formula.

Paste a snippet

Irrelevant.

Dependencies

Irrelevant.

@Alexander-Serov yeah there's something wrong here. I'll try to investigate that more.

Thanks for raising this problem! The fix has been merged to master: bb92425.

A new version 3.4.0 was released.

I found this formula empirically by trying a lot of combinations of different parameters. I'm pretty sure it is correct when using the TCN, but theoretically the 2 is not justified. It seems, however, that the 2 in the formula might just be a matter of notation and parameterization. For example, it's possible that if we want to specify dilations 1-2-4-8, we should only give 1-2-4 to TensorFlow. One should inspect the Conv1D code to check.
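One way to check a formula like this without relying on the library is to trace which input time steps a single output step can depend on. The sketch below does this for a stack of causal dilated convolutions. The function name `traced_receptive_field` and the `convs_per_dilation` parameter are hypothetical, and the assumption that each dilation level applies a fixed number of causal convolutions has not been verified against the TCN or Conv1D code.

```python
def traced_receptive_field(kernel_size, dilations, convs_per_dilation=1):
    """Trace how far back in time one output step can reach.

    Assumption: each causal convolution with dilation d lets an output
    at time t read inputs at t, t - d, ..., t - (kernel_size - 1) * d.
    """
    offsets = {0}  # backward offsets reachable from the output step
    for d in dilations:
        for _ in range(convs_per_dilation):
            offsets = {o + j * d for o in offsets for j in range(kernel_size)}
    return max(offsets) + 1  # count the current step as well


dilations = [1, 2, 4, 8]
one_conv = traced_receptive_field(3, dilations, convs_per_dilation=1)
two_convs = traced_receptive_field(3, dilations, convs_per_dilation=2)

# Closed forms for comparison: 1 + c * (k - 1) * sum(d) with c = 1 or 2.
assert one_conv == 1 + 1 * (3 - 1) * sum(dilations)
assert two_convs == 1 + 2 * (3 - 1) * sum(dilations)
print(one_conv, two_convs)
```

Under these assumptions, a factor of 2 appears exactly when every dilation level contains two convolutions. Whether that matches the actual layer layout would still need to be confirmed in the code.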

I added a note in the README for later work.