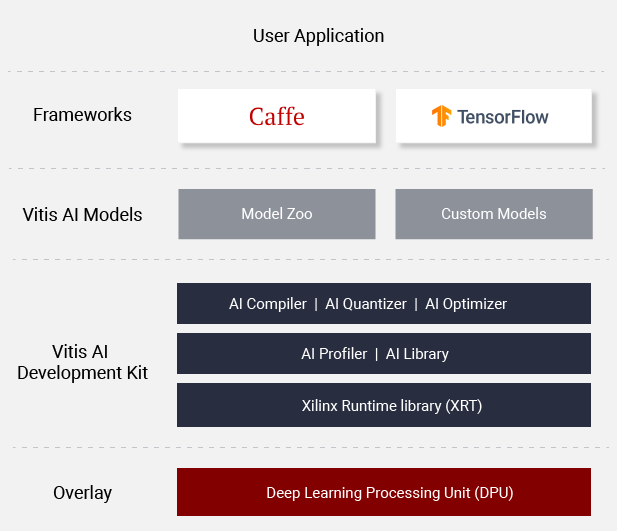

Vitis AI is Xilinx’s development stack for AI inference on Xilinx hardware platforms, including both edge devices and Alveo cards. It consists of optimized IP, tools, libraries, models, and example designs. It is designed with high efficiency and ease of use in mind, unleashing the full potential of AI acceleration on Xilinx FPGAs and ACAPs.

Vitis AI is composed of the following key components:

- AI Model Zoo - A comprehensive set of pre-optimized models that are ready to deploy on Xilinx devices.

- AI Optimizer - An optional model optimizer that can prune a model by up to 90%. It is available separately under a commercial license.

- AI Quantizer - A powerful quantizer that supports model quantization, calibration, and fine-tuning.

- AI Compiler - Compiles the quantized model into a highly efficient instruction set and data flow.

- AI Profiler - Performs an in-depth analysis of the efficiency and utilization of an AI inference implementation.

- AI Library - Offers high-level yet optimized C++ APIs for AI applications from edge to cloud.

- DPU - Efficient and scalable IP cores that can be customized to meet the needs of many different applications.
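The idea behind quantization and calibration can be illustrated with a minimal sketch. It assumes a symmetric, signed 8-bit fixed-point format whose scale is a power of two, with the scale chosen during calibration from the largest absolute value seen in sample data. The names `calibrate_fix_pos`, `quantize`, and `dequantize` are hypothetical and are not part of the Vitis AI Quantizer API; the actual tool's calibration and fine-tuning are more involved.

```python
import math
from typing import List

# Hypothetical helpers illustrating power-of-two fixed-point quantization.
# These are NOT Vitis AI Quantizer APIs; they only sketch the general idea.


def calibrate_fix_pos(samples: List[float], bit_width: int = 8) -> int:
    """Return the largest fix_pos such that max|x| * 2**fix_pos fits in the signed range."""
    qmax = 2 ** (bit_width - 1) - 1
    max_abs = max(abs(x) for x in samples)
    if max_abs == 0.0:
        # All-zero calibration data: any position works; use bit_width - 1 (assumption).
        return bit_width - 1
    return math.floor(math.log2(qmax / max_abs))


def quantize(x: float, fix_pos: int, bit_width: int = 8) -> int:
    """Scale by 2**fix_pos, round to nearest (Python's round, ties to even), then clip."""
    qmin = -(2 ** (bit_width - 1))
    qmax = 2 ** (bit_width - 1) - 1
    q = round(x * 2.0 ** fix_pos)
    return max(qmin, min(qmax, q))


def dequantize(q: int, fix_pos: int) -> float:
    """Map an integer code back to a real value."""
    return q / 2.0 ** fix_pos


if __name__ == "__main__":
    activations = [0.5, -1.9, 1.2, 0.03]  # made-up calibration samples
    fp = calibrate_fix_pos(activations)
    for a in activations:
        q = quantize(a, fp)
        print(f"{a:+.4f} -> {q:+4d} -> {dequantize(q, fp):+.5f}")
```

With these made-up samples the calibrated position is 6 (a step of 1/64), so every in-range value is recovered to within half a step, while values outside the calibrated range saturate at the int8 limits of -128 and 127.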

Learn More: Vitis AI Overview

- Release Notes

- Alveo U50 support with DPUv3E, a throughput optimized CNN overlay

- TensorFlow 1.15 support

- VART (Vitis AI Runtime) with a unified API and samples for Zynq, Zynq UltraScale+ (ZU+), and Alveo

- Vitis AI Library is now fully open source

- Whole Application Acceleration example on Alveo

Two options are available for installing the containers with the Vitis AI tools and resources.

- Pre-built containers on Docker Hub: xilinx/vitis-ai

- Build containers locally with Docker recipes: Docker Recipes

- Install Docker, if it is not already installed on your machine.

- Clone the Vitis-AI repository to obtain the examples, reference code, and scripts:

```bash
git clone https://github.com/Xilinx/Vitis-AI
cd Vitis-AI
```

- Run the CPU image from Docker Hub:

```bash
./docker_run.sh xilinx/vitis-ai
```

or

- Build the CPU image locally and run it:

```bash
cd docker
./docker_build_cpu.sh
# After the build finishes
cd ..
./docker_run.sh xilinx/vitis-ai-cpu:latest
```

or

- Build the GPU image locally and run it:

```bash
cd docker
./docker_build_gpu.sh
# After the build finishes
cd ..
./docker_run.sh xilinx/vitis-ai-gpu:latest
```

Get started with examples

Vitis AI offers a unified set of high-level C++/Python programming APIs to run AI applications across edge-to-cloud platforms, including DPUv1 and DPUv3 for Alveo, and DPUv2 for Zynq UltraScale+ MPSoC and Zynq-7000. This makes it easy to port AI applications from cloud to edge and vice versa.

Seven samples in VART Samples are available to help you become familiar with the unified programming APIs.

| ID | Example Name | Models | Framework | Notes |
|---|---|---|---|---|
| 1 | resnet50 | ResNet50 | Caffe | Image classification with VART C++ APIs. |
| 2 | resnet50_mt_py | ResNet50 | TensorFlow | Multi-threading image classification with VART Python APIs. |
| 3 | inception_v1_mt_py | Inception-v1 | TensorFlow | Multi-threading image classification with VART Python APIs. |
| 4 | pose_detection | SSD, Pose detection | Caffe | Pose detection with VART C++ APIs. |
| 5 | video_analysis | SSD | Caffe | Traffic detection with VART C++ APIs. |
| 6 | adas_detection | YOLO-v3 | Caffe | ADAS detection with VART C++ APIs. |
| 7 | segmentation | FPN | Caffe | Semantic segmentation with VART C++ APIs. |

For more information, refer to the Vitis AI User Guide.