
Deepchecks is a Python package for comprehensively validating your machine learning models and data with minimal effort. This includes checks related to various types of issues, such as model performance, data integrity, distribution mismatches, and more.

This README refers to the Tabular version of deepchecks.

- Check out the Deepchecks for Computer Vision & Images subpackage for more details about deepchecks for CV, currently in beta release.

- Check out the Deepchecks for NLP subpackage for more details about deepchecks for NLP, currently in beta release.

pip install deepchecks -U --user

Note: Vision & NLP Install

To install deepchecks together with the Computer Vision Submodule that is currently in beta release, replace deepchecks with "deepchecks[vision]" as follows:

pip install "deepchecks[vision]" -U --user

To install deepchecks together with the NLP Submodule that is currently in beta release, replace deepchecks with "deepchecks[nlp]" as follows:

pip install "deepchecks[nlp]" -U --user

conda install -c conda-forge deepchecks

Head over to one of the following quickstart tutorials to have deepchecks running in your environment in less than 5 minutes:

- Train-Test Validation Quickstart (loans data)

- Data Integrity Quickstart (avocado sales data)

- Model Evaluation Quickstart (wine quality data)

Recommended - download the code and run it locally on the built-in dataset and (optional) model, or replace them with your own.

Play with some of the existing checks in our Interactive Checks Demo, and see how they work on various datasets with custom corruptions injected.

A Suite runs a collection of Checks with optional Conditions added to them.

Example for running a suite on given datasets and with a supported model:

from deepchecks.tabular.suites import model_evaluation

suite = model_evaluation()

result = suite.run(train_dataset=train_dataset, test_dataset=test_dataset, model=model)

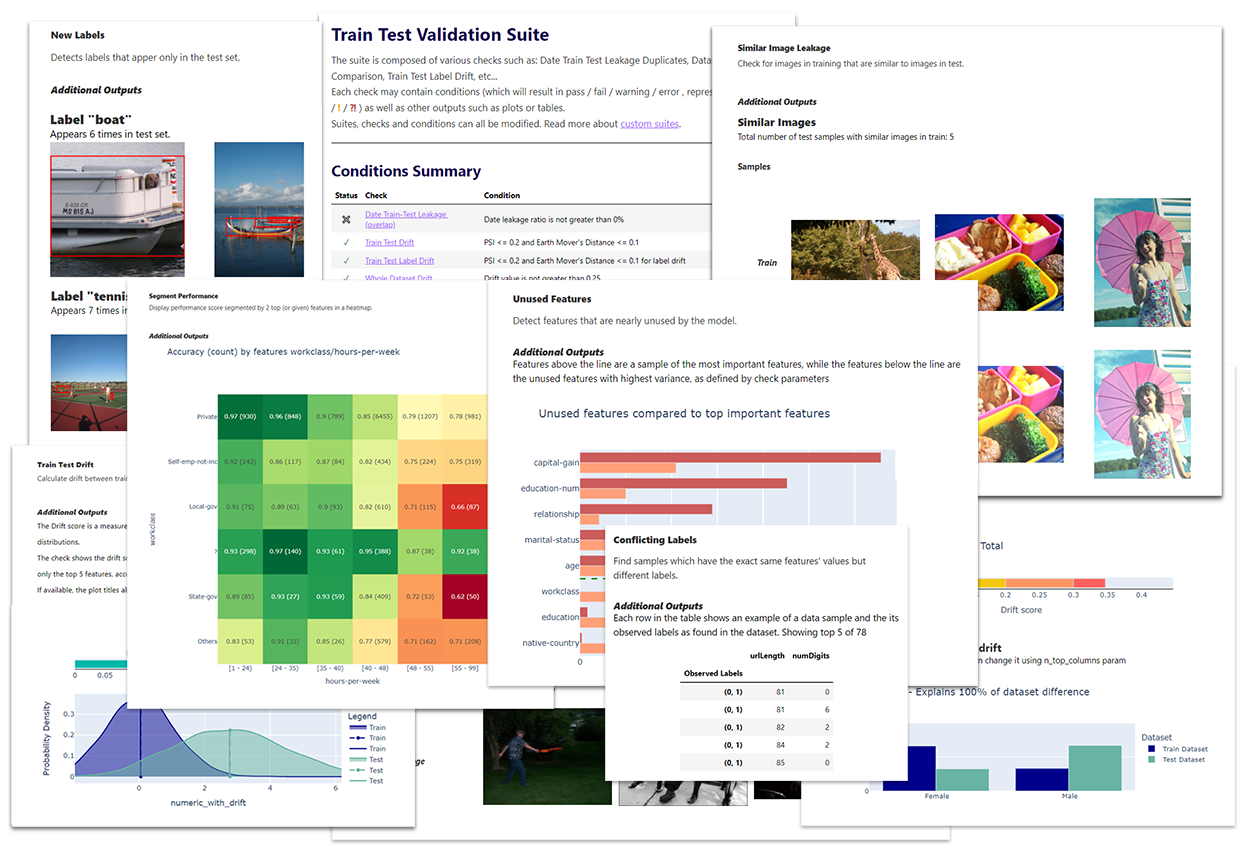

result.save_as_html() # replace this with result.show() or result.show_in_window() to see results inline or in windowWhich will result in a report that looks like this:

Note:

- Results can be displayed in various ways, exported to an HTML report, saved as JSON, or integrated with other tools (e.g. wandb).

- Other suites that run only on the data (data_integrity, train_test_validation) don't require a model as part of the input.

See the full code tutorials here.

In the following section you can see an example of how the output of a single check without a condition may look.

To run a specific single check, import it and run it with the required (check-dependent) input parameters. More details about the existing checks and the parameters they can receive can be found in our API Reference.

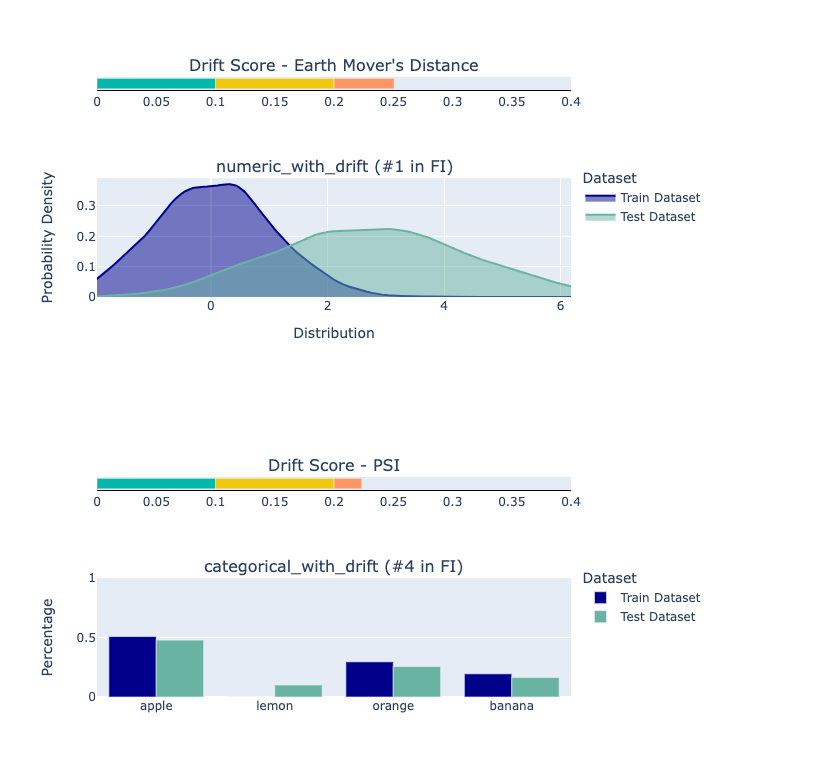

from deepchecks.tabular.checks import FeatureDrift

import pandas as pd

train_df = pd.read_csv('train_data.csv')

test_df = pd.read_csv('test_data.csv')

# Initialize and run desired check

FeatureDrift().run(train_df, test_df)Will produce output of the type:

The drift score measures the difference between two distributions; in this check, the train and test distributions.

The check shows the drift score and distributions for the top 5 features, sorted by feature importance. If available, the plot titles also show the feature importance (FI) rank.
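To make the idea of a drift score concrete, here is a minimal standard-library sketch of one common way to compare two samples of a numeric feature: the two-sample Kolmogorov-Smirnov statistic, i.e. the largest gap between the two empirical CDFs. This is an illustration only. The function name `ks_drift_score` and the sample values are hypothetical, and the drift measures deepchecks actually applies to each feature type are described in its API Reference and may differ.

```python
from bisect import bisect_right


def ks_drift_score(train_values, test_values):
    """Illustrative drift score: max gap between the two empirical CDFs.

    Returns a value in [0, 1]; 0 means the samples have identical
    empirical distributions, 1 means they do not overlap at all.
    """
    train_sorted = sorted(train_values)
    test_sorted = sorted(test_values)
    if not train_sorted or not test_sorted:
        raise ValueError("both samples must be non-empty")

    max_gap = 0.0
    # The CDFs only change at observed values, so checking those is enough.
    for x in set(train_sorted) | set(test_sorted):
        train_cdf = bisect_right(train_sorted, x) / len(train_sorted)
        test_cdf = bisect_right(test_sorted, x) / len(test_sorted)
        max_gap = max(max_gap, abs(train_cdf - test_cdf))
    return max_gap


# Hypothetical feature values, for illustration only.
train_feature = [1.0, 2.0, 3.0, 4.0, 5.0]
same_feature = [1.0, 2.0, 3.0, 4.0, 5.0]
shifted_feature = [11.0, 12.0, 13.0, 14.0, 15.0]

no_drift = ks_drift_score(train_feature, same_feature)
full_drift = ks_drift_score(train_feature, shifted_feature)
print(no_drift, full_drift)
```

A score near 0 suggests the test data resembles the training data for that feature, while a score near 1 suggests a substantial shift.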

Use deepchecks while you're in the research phase and want to validate your data, find potential methodological problems, or validate and evaluate your model.

See more about typical usage scenarios and the built-in suites in the docs.

Each check enables you to inspect a specific aspect of your data and models. They are the basic building block of the deepchecks package, covering all kinds of common issues, such as:

- Weak Segments Performance

- Feature Drift

- Date Train Test Leakage Overlap

- Conflicting Labels

and many more checks.

Each check can have two types of results:

- A visual result meant for display (e.g. a figure or a table).

- A return value that can be used for validating the expected check results (validations are typically done by adding a "condition" to the check, as explained below).

A condition is a function that can be added to a Check, which returns a pass ✓, fail ✖ or warning ! result, intended for validating the Check's return value. An example for adding a condition would be:

from deepchecks.tabular.checks import BoostingOverfit

BoostingOverfit().add_condition_test_score_percent_decline_not_greater_than(threshold=0.05)

This returns a check failure at run time if there is a difference of more than 5% between the best score achieved on the test set during the boosting iterations and the score achieved in the last iteration (the model's "original" score on the test set).
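The arithmetic behind a condition like this can be sketched in plain Python. The snippet below is not the deepchecks implementation: the helper names `percent_decline` and `decline_not_greater_than` and the score lists are hypothetical, and it assumes positive scores where higher is better. It only shows how a relative decline from the best test score to the last one is compared against a 5% threshold.

```python
def percent_decline(test_scores):
    """Relative drop from the best test score to the final-iteration score."""
    if not test_scores:
        raise ValueError("test_scores must be non-empty")
    best = max(test_scores)
    last = test_scores[-1]
    if best <= 0:
        raise ValueError("this sketch assumes positive scores")
    return (best - last) / best


def decline_not_greater_than(test_scores, threshold=0.05):
    """True (pass) when the decline stays within the threshold."""
    return percent_decline(test_scores) <= threshold


# Hypothetical test-set scores recorded at successive boosting iterations.
overfit_scores = [0.70, 0.80, 0.85, 0.78]  # drops about 8% from its best
stable_scores = [0.70, 0.80, 0.85, 0.84]   # drops about 1% from its best

print(decline_not_greater_than(overfit_scores))  # condition fails
print(decline_not_greater_than(stable_scores))   # condition passes
```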

A Suite is an ordered collection of checks that can have conditions added to them. The Suite enables displaying a concluding report for all of the Checks that ran.

See the list of predefined existing suites for tabular data to learn more about the suites you can work with directly and also to see a code example demonstrating how to build your own custom suite.

The existing suites include default conditions added for most of the checks. You can edit the preconfigured suites or build a suite of your own with a collection of checks and optional conditions.
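The relationship between checks, conditions, and suites can be pictured with a toy model in plain Python. Everything in this sketch (`ToyCheck`, `ToySuite`, `null_ratio`, `duplicate_ratio`, the sample rows and thresholds) is hypothetical and written only to illustrate the concepts; it does not mirror the deepchecks classes or their internals.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, List, Tuple


@dataclass
class ToyCheck:
    """A check computes a value; optional conditions validate that value."""
    name: str
    compute: Callable[[List[dict]], Any]
    conditions: List[Tuple[str, Callable[[Any], bool]]] = field(default_factory=list)

    def add_condition(self, description, predicate):
        self.conditions.append((description, predicate))
        return self  # returning self allows chaining

    def run(self, rows):
        value = self.compute(rows)
        outcomes = {desc: pred(value) for desc, pred in self.conditions}
        return {"check": self.name, "value": value, "conditions": outcomes}


@dataclass
class ToySuite:
    """A suite is an ordered collection of checks run on the same data."""
    name: str
    checks: List[ToyCheck]

    def run(self, rows):
        return [check.run(rows) for check in self.checks]


def null_ratio(rows):
    cells = [value for row in rows for value in row.values()]
    return sum(value is None for value in cells) / len(cells)


def duplicate_ratio(rows):
    unique_rows = {tuple(sorted(row.items())) for row in rows}
    return 1 - len(unique_rows) / len(rows)


# Hypothetical data: one missing price and one exact duplicate row.
rows = [
    {"price": 1.5, "region": "west"},
    {"price": None, "region": "east"},
    {"price": 1.5, "region": "west"},
    {"price": 2.0, "region": "east"},
]

suite = ToySuite(
    name="toy data integrity",
    checks=[
        ToyCheck("null ratio", null_ratio).add_condition(
            "null ratio <= 20%", lambda v: v <= 0.2),
        ToyCheck("duplicate ratio", duplicate_ratio).add_condition(
            "duplicate ratio <= 10%", lambda v: v <= 0.1),
    ],
)

report = suite.run(rows)
for entry in report:
    print(entry)
```

In this toy run the first condition passes and the second fails, which is the kind of per-check summary a concluding suite report aggregates.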

- The deepchecks package installed

- JupyterLab or Jupyter Notebook or any Python IDE

Depending on your phase and what you wish to validate, you'll need a subset of the following:

- Raw data (before pre-processing such as OHE, string processing, etc.), with optional labels

- The model's training data with labels

- Test data (which the model isn't exposed to) with labels

- A supported model (e.g. scikit-learn models, XGBoost, any model implementing the predict method in the required format)

The package currently supports tabular data and is in:

- beta release for the Computer Vision subpackage.

- beta release for the NLP subpackage.

- https://docs.deepchecks.com/ - HTML documentation (latest release)

- https://docs.deepchecks.com/dev - HTML documentation (dev version - git main branch)

- Join our Slack Community to connect with the maintainers and follow users and interesting discussions

- Post a GitHub Issue to suggest improvements, open an issue, or share feedback.

Thanks goes to these wonderful people (emoji key):

This project follows the all-contributors specification. Contributions of any kind are welcome!