This repository contains the Python implementation of star-convex object detection for 2D and 3D images, as described in the papers:

- Uwe Schmidt, Martin Weigert, Coleman Broaddus, and Gene Myers.
  Cell Detection with Star-convex Polygons.
  International Conference on Medical Image Computing and Computer-Assisted Intervention (MICCAI), Granada, Spain, September 2018.

- Martin Weigert, Uwe Schmidt, Robert Haase, Ko Sugawara, and Gene Myers.
  Star-convex Polyhedra for 3D Object Detection and Segmentation in Microscopy.
  The IEEE Winter Conference on Applications of Computer Vision (WACV), Snowmass Village, Colorado, March 2020.

Please cite the paper(s) if you are using this code in your research.

The following figure illustrates the general approach for 2D images. The training data consists of corresponding pairs of input (i.e. raw) images and fully annotated label images (i.e. every pixel is labeled with a unique object id or 0 for background). A model is trained to densely predict the distances (r) to the object boundary along a fixed set of rays and object probabilities (d), which together produce an overcomplete set of candidate polygons for a given input image. The final result is obtained via non-maximum suppression (NMS) of these candidates.

The approach for 3D volumes is similar to the one described for 2D, using pairs of input and fully annotated label volumes as training data.
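To make the 2D geometry concrete, the following plain-Python sketch turns the ray distances predicted at one pixel into a polygon and then greedily suppresses overlapping candidates. It is only an illustration and not the package's implementation: the helper names (`rays_to_polygon`, `greedy_nms`), the equally spaced ray angles, the pixel-grid IoU as overlap measure, and the 0.4 threshold are all assumptions.

```python
import math


def rays_to_polygon(center, dists):
    """Vertices (y, x) of the polygon spanned by distances along equally spaced rays."""
    cy, cx = center
    n = len(dists)
    return [
        (cy + d * math.sin(2 * math.pi * k / n), cx + d * math.cos(2 * math.pi * k / n))
        for k, d in enumerate(dists)
    ]


def point_in_polygon(y, x, verts):
    """Even-odd rule: count polygon edges crossed by a horizontal ray from (y, x)."""
    inside = False
    n = len(verts)
    for i in range(n):
        y0, x0 = verts[i]
        y1, x1 = verts[(i + 1) % n]
        if (y0 > y) != (y1 > y):  # edge spans the scanline, so y1 != y0
            x_cross = x0 + (y - y0) * (x1 - x0) / (y1 - y0)
            if x < x_cross:
                inside = not inside
    return inside


def rasterize(verts, shape):
    """Set of pixels whose centers fall inside the polygon."""
    return {
        (y, x)
        for y in range(shape[0])
        for x in range(shape[1])
        if point_in_polygon(y + 0.5, x + 0.5, verts)
    }


def greedy_nms(candidates, shape, iou_thresh=0.4):
    """Keep candidates in order of decreasing probability unless they overlap a kept one.

    candidates: list of (probability, center, dists) tuples.
    """
    kept = []
    for prob, center, dists in sorted(candidates, key=lambda c: -c[0]):
        pix = rasterize(rays_to_polygon(center, dists), shape)
        overlaps = any(
            len(pix & other) / len(pix | other) > iou_thresh
            for _, other in kept
            if pix | other
        )
        if not overlaps:
            kept.append((prob, pix))
    return kept


# Two almost identical candidates and one far away: the weaker duplicate is dropped.
candidates = [
    (0.9, (10.0, 10.0), [5.0] * 8),
    (0.8, (10.0, 11.0), [5.0] * 8),
    (0.7, (30.0, 30.0), [4.0] * 8),
]
kept = greedy_nms(candidates, shape=(40, 40))
print([prob for prob, _ in kept])
```

Rasterizing every candidate is slow and only meant to keep the example dependency-free.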

If you want to know more about the concepts and practical applications of StarDist, please have a look at the following webinar that was given at NEUBIAS Academy @Home 2020:

This package is compatible with Python 3.6 - 3.10.

If you only want to use a StarDist plugin for a GUI-based software, please read this.

- Please first install TensorFlow (either TensorFlow 1 or 2) by following the official instructions. For GPU support, it is very important to install the specific versions of CUDA and cuDNN that are compatible with the respective version of TensorFlow. (If you need help and can use conda, take a look at this.)

- StarDist can then be installed with pip:

  - If you installed TensorFlow 2 (version 2.x.x): pip install stardist

  - If you installed TensorFlow 1 (version 1.x.x): pip install "stardist[tf1]"

- Depending on your Python installation, you may need to use pip3 instead of pip.

- You can find out which version of TensorFlow is installed via pip show tensorflow.

- We provide pre-compiled binaries ("wheels") that should work for most Linux, Windows, and macOS platforms. If you're having problems, please see the troubleshooting section below.

- (Optional) You need to install gputools if you want to use OpenCL-based computations on the GPU to speed up training.

- (Optional) You might experience improved performance during training if you additionally install the Multi-Label Anisotropic 3D Euclidean Distance Transform (MLAEDT-3D).
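As an alternative to pip show tensorflow, the installed TensorFlow version can also be queried from Python. The sketch below uses only the standard library (`importlib.metadata`, available from Python 3.8) and maps the major version to the pip commands listed above. The helper names are hypothetical, and it assumes TensorFlow was installed under the distribution name `tensorflow`; variants such as `tensorflow-macos` would have to be queried by their own name.

```python
from importlib import metadata  # standard library since Python 3.8


def installed_version(package):
    """Return the installed version string of a package, or None if it is missing."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


def suggest_install_command(tf_version):
    """Map a TensorFlow version string to the matching pip command from this README."""
    if tf_version is None:
        return None  # TensorFlow has to be installed first
    major = int(tf_version.split(".")[0])
    if major == 1:
        return 'pip install "stardist[tf1]"'
    if major == 2:
        return "pip install stardist"
    return None  # other major versions are not covered by the instructions above


tf_version = installed_version("tensorflow")
print("TensorFlow:", tf_version)
print("Suggested command:", suggest_install_command(tf_version))
```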

We provide example workflows for 2D and 3D via Jupyter notebooks that illustrate how this package can be used.

Currently we provide some pretrained models in 2D that might already be suitable for your images:

| key | Modality (Staining) | Image format | Example Image | Description |
|---|---|---|---|---|
| 2D_versatile_fluo, 2D_paper_dsb2018 | Fluorescence (nuclear marker) | 2D single channel | | Versatile (fluorescent nuclei) and DSB 2018 (from StarDist 2D paper) that were both trained on a subset of the DSB 2018 nuclei segmentation challenge dataset. |
| 2D_versatile_he | Brightfield (H&E) | 2D RGB | | Versatile (H&E nuclei) that was trained on images from the MoNuSeg 2018 training data and the TNBC dataset from Naylor et al. (2018). |

You can access these pretrained models from stardist.models.StarDist2D

from stardist.models import StarDist2D

# prints a list of available models

StarDist2D.from_pretrained()

# creates a pretrained model

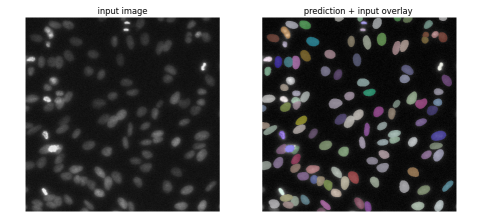

model = StarDist2D.from_pretrained('2D_versatile_fluo')And then try it out with a test image:

    from stardist.data import test_image_nuclei_2d
    from stardist.plot import render_label
    from csbdeep.utils import normalize
    import matplotlib.pyplot as plt

    img = test_image_nuclei_2d()

    labels, _ = model.predict_instances(normalize(img))

    plt.subplot(1,2,1)
    plt.imshow(img, cmap="gray")
    plt.axis("off")
    plt.title("input image")

    plt.subplot(1,2,2)
    plt.imshow(render_label(labels, img=img))
    plt.axis("off")
    plt.title("prediction + input overlay")

To train a StarDist model you will need some ground-truth annotations: for every raw training image there has to be a corresponding label image where all pixels of a cell region are labeled with a distinct integer (and background pixels are labeled with 0). To create such annotations in 2D, there are several options, among them being Fiji, Labkit, or QuPath. In 3D, there are fewer options: Labkit and Paintera (the latter being very sophisticated but having a steeper learning curve).

Although each of these provides decent annotation tools, we currently recommend using Labkit (for 2D or 3D images) or QuPath (for 2D):

- Install Fiji and the Labkit plugin

- Open the (2D or 3D) image and start Labkit via Plugins > Labkit > Open Current Image With Labkit

- Successively add a new label and annotate a single cell instance with the brush tool until all cells are labeled. (Always disable "allow overlapping labels" or, in older versions of Labkit, enable the "override" option.)

- Export the label image via Save Labeling... and File format > TIF Image

Additional tips:

- The Labkit viewer uses BigDataViewer and its keybindings (e.g. s for contrast options, CTRL+Shift+mouse-wheel for zoom-in/out etc.)

- For 3D images (XYZ) it is best to first convert them to an (XYT) timeseries (via Re-Order Hyperstack and swapping z and t) and then use [ and ] in Labkit to walk through the slices.
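Before training, it can help to verify that an exported label image meets the requirements stated above: integer values, 0 for background, and the same spatial shape as the raw image. The following NumPy sketch shows one way to do that. `check_label_image` is a hypothetical helper, not part of StarDist, and it assumes that any extra axes of the raw image (e.g. RGB channels) come last.

```python
import numpy as np


def check_label_image(raw, labels):
    """Return the number of annotated objects, or raise if the pair looks inconsistent."""
    raw = np.asarray(raw)
    labels = np.asarray(labels)
    if not np.issubdtype(labels.dtype, np.integer):
        raise ValueError("label image must have an integer dtype")
    # Assumption: extra raw axes (such as color channels) are trailing axes.
    if raw.shape[:labels.ndim] != labels.shape:
        raise ValueError(f"shape mismatch: raw {raw.shape} vs. labels {labels.shape}")
    if labels.size and labels.min() < 0:
        raise ValueError("labels must be non-negative (0 is background)")
    # Every distinct positive integer is one object instance.
    return len(np.unique(labels[labels > 0]))


raw = np.zeros((4, 5), dtype=np.float32)
labels = np.array(
    [
        [0, 1, 1, 0, 0],
        [0, 1, 1, 0, 2],
        [0, 0, 0, 0, 2],
        [3, 0, 0, 0, 0],
    ],
    dtype=np.uint16,
)
n_objects = check_label_image(raw, labels)
print(n_objects)
```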

- Install QuPath

- Create a new project (File -> Project... -> Create project) and add your raw images

- Annotate nuclei/objects

- Run this script to export the annotations (save the script and drag it onto QuPath, then execute it with Run for project). The script will create a ground_truth folder within your QuPath project that includes both the images and masks subfolders, which can then be used directly with StarDist.

To see how this could be done, have a look at the following example QuPath project (data courtesy of Romain Guiet, EPFL).
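A folder layout like the ground_truth directory described above (with images and masks subfolders) can be paired up with a few lines of standard-library Python before loading the files. This is a sketch under a loud assumption: it presumes that each raw image and its mask share the same file name, which is not stated in this README. `pair_images_and_masks` is a hypothetical helper.

```python
from pathlib import Path


def pair_images_and_masks(ground_truth_dir):
    """Return sorted (image_path, mask_path) pairs from <dir>/images and <dir>/masks.

    Assumption: an image and its mask have identical file names.
    """
    root = Path(ground_truth_dir)
    images = {p.name: p for p in (root / "images").iterdir() if p.is_file()}
    masks = {p.name: p for p in (root / "masks").iterdir() if p.is_file()}
    unmatched = sorted(set(images) ^ set(masks))
    if unmatched:
        raise ValueError(f"files without a counterpart: {unmatched}")
    return [(images[name], masks[name]) for name in sorted(images)]
```

Calling `pair_images_and_masks("path/to/project/ground_truth")` (a placeholder path) would then give the list of file pairs to read with an image library of your choice.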

StarDist also supports multi-class prediction, i.e. each found object instance can additionally be classified into a fixed number of discrete object classes (e.g. cell types):

Please see the multi-class example notebook if you're interested in this.

StarDist contains the stardist.matching submodule that provides functions to compute common instance segmentation metrics between ground-truth label masks and predictions (not necessarily from StarDist). Currently available metrics are

- tp, fp, fn

- precision, recall, accuracy, f1

- panoptic_quality

- mean_true_score, mean_matched_score

which are computed by matching ground-truth/prediction objects if their IoU exceeds a threshold (by default 50%). See the documentation of stardist.matching.matching for a detailed explanation.
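The matching idea can be illustrated with a small NumPy sketch that computes the IoU between every ground-truth and every predicted object and counts pairs above the threshold. This is a simplified illustration, not the code in stardist.matching: the helper names are hypothetical, and the shortcut of counting thresholded pairs directly is only valid for thresholds of at least 0.5, where an object can exceed the threshold with at most one partner.

```python
import numpy as np


def iou_matrix(y_true, y_pred):
    """IoU between every ground-truth object (rows) and predicted object (columns)."""
    true_ids = np.unique(y_true[y_true > 0])
    pred_ids = np.unique(y_pred[y_pred > 0])
    iou = np.zeros((len(true_ids), len(pred_ids)))
    for i, t in enumerate(true_ids):
        mask_t = y_true == t
        for j, p in enumerate(pred_ids):
            mask_p = y_pred == p
            intersection = np.logical_and(mask_t, mask_p).sum()
            union = np.logical_or(mask_t, mask_p).sum()  # never 0: both masks are non-empty
            iou[i, j] = intersection / union
    return iou


def count_matches(y_true, y_pred, thresh=0.5):
    """Return (tp, fp, fn) for label images, matching objects whose IoU exceeds thresh."""
    if thresh < 0.5:
        raise ValueError("this shortcut needs thresh >= 0.5 so that matches are unique")
    iou = iou_matrix(y_true, y_pred)
    tp = int((iou > thresh).sum())
    fp = iou.shape[1] - tp  # predictions without a ground-truth partner
    fn = iou.shape[0] - tp  # ground-truth objects that were missed
    return tp, fp, fn


y_true = np.array([[1, 1, 0, 0], [1, 1, 0, 2], [0, 0, 0, 2]])
y_pred = np.array([[1, 1, 0, 0], [1, 0, 0, 0], [0, 0, 3, 3]])
print(iou_matrix(y_true, y_pred))
print(count_matches(y_true, y_pred))
```

In this toy example, the first object is matched (IoU 0.75), while the second pair only reaches an IoU of 1/3 and therefore counts as one false positive and one false negative.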

Here is an example of how to use stardist.matching.matching:

    # create some example ground-truth and dummy prediction data
    from stardist.data import test_image_nuclei_2d
    from scipy.ndimage import rotate
    _, y_true = test_image_nuclei_2d(return_mask=True)
    y_pred = rotate(y_true, 2, order=0, reshape=False)

    # compute metrics between ground-truth and prediction
    from stardist.matching import matching
    metrics = matching(y_true, y_pred)
    print(metrics)

This prints:

    Matching(criterion='iou', thresh=0.5, fp=88, tp=37, fn=88, precision=0.296,
             recall=0.296, accuracy=0.1737, f1=0.296, n_true=125, n_pred=125,
             mean_true_score=0.19490, mean_matched_score=0.65847, panoptic_quality=0.19490)
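The scores in the output above follow from the three counts tp, fp, and fn. The sketch below uses the standard definitions of precision, recall, accuracy, F1, and panoptic quality; that stardist.matching uses exactly these formulas is an assumption here, although they do reproduce the numbers shown. `scores_from_counts` is a hypothetical helper.

```python
def scores_from_counts(tp, fp, fn, mean_matched_score=None):
    """Derive common detection scores from true-positive, false-positive, false-negative counts."""

    def ratio(numerator, denominator):
        return numerator / denominator if denominator > 0 else 0.0

    scores = {
        "precision": ratio(tp, tp + fp),
        "recall": ratio(tp, tp + fn),
        "accuracy": ratio(tp, tp + fp + fn),
        "f1": ratio(2 * tp, 2 * tp + fp + fn),
    }
    if mean_matched_score is not None:
        # Panoptic quality: summed IoU of matched pairs over tp + fp/2 + fn/2.
        scores["panoptic_quality"] = ratio(mean_matched_score * tp, tp + fp / 2 + fn / 2)
    return scores


scores = scores_from_counts(tp=37, fp=88, fn=88, mean_matched_score=0.65847)
print({name: round(value, 4) for name, value in scores.items()})
```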

If you want to compare a list of images you can use stardist.matching.matching_dataset:

    from stardist.matching import matching_dataset
    metrics = matching_dataset([y_true, y_true], [y_pred, y_pred])
    print(metrics)

This prints:

    DatasetMatching(criterion='iou', thresh=0.5, fp=176, tp=74, fn=176, precision=0.296,
                    recall=0.296, accuracy=0.1737, f1=0.296, n_true=250, n_pred=250,
                    mean_true_score=0.19490, mean_matched_score=0.6584, panoptic_quality=0.1949, by_image=False)
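In the dataset output above, the counts are the sums over both images (tp=74 is twice 37), which suggests that the counts are pooled before the scores are computed. The sketch below contrasts such pooling with averaging per-image scores, using precision as the example. The helper names are hypothetical, and the averaging variant is shown for contrast only; this README does not describe how stardist.matching.matching_dataset behaves with by_image=True.

```python
def pooled_precision(per_image_counts):
    """Sum tp and fp over all images first, then compute a single precision value."""
    tp = sum(c["tp"] for c in per_image_counts)
    fp = sum(c["fp"] for c in per_image_counts)
    return tp / (tp + fp) if tp + fp > 0 else 0.0


def mean_precision(per_image_counts):
    """Compute precision per image, then average (every image weighs the same)."""
    values = [
        c["tp"] / (c["tp"] + c["fp"]) if c["tp"] + c["fp"] > 0 else 0.0
        for c in per_image_counts
    ]
    return sum(values) / len(values)


# Two identical images, as in the example above: both variants agree.
same = [{"tp": 37, "fp": 88}, {"tp": 37, "fp": 88}]
print(round(pooled_precision(same), 3), round(mean_precision(same), 3))

# Images with very different object counts: the two variants diverge.
mixed = [{"tp": 9, "fp": 1}, {"tp": 1, "fp": 3}]
print(round(pooled_precision(mixed), 3), round(mean_precision(mixed), 3))
```

Pooling gives images with many objects more influence, whereas averaging treats every image equally.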

- Please first take a look at the frequently asked questions (FAQ).

- If you need further help, please go to the image.sc forum and try to find out if the issue you're having has already been discussed or solved by other people. If not, feel free to create a new topic there and make sure to use the tag stardist (we are monitoring all questions with this tag). When opening a new topic, please provide a clear and concise description to understand and ideally reproduce the issue you're having (e.g. including a code snippet, Python script, or Jupyter notebook).

- If you have a technical question related to the source code or believe you have found a bug, feel free to open an issue, but please check first if someone already created a similar issue.

If pip install stardist fails, it could be because there are no compatible wheels (.whl) for your platform (see list). In this case, pip tries to compile a C++ extension that our Python package relies on (see below). While this often works on Linux out of the box, it will likely fail on Windows and macOS without installing a suitable compiler. (Note that you can enforce compilation by installing via pip install stardist --no-binary stardist; pip expects a plain package name here, since colons are reserved for the special values :all: and :none:.)

Installation without using wheels requires Python 3.6 (or newer) and a working C++ compiler. We have only tested GCC (macOS, Linux), Clang (macOS), and Visual Studio (Windows 10). Please open an issue if you have problems that are not resolved by the information below.
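When it is unclear whether a wheel exists for your setup, it helps to know exactly which interpreter and platform pip is installing for. The following standard-library sketch collects that information. `describe_environment` is a hypothetical helper, and the 3.6 minimum is taken from the requirement stated above.

```python
import platform
import sys
import sysconfig


def describe_environment():
    """Collect the interpreter and platform details that determine wheel compatibility."""
    return {
        "python": "{}.{}".format(*sys.version_info[:2]),
        "platform": sysconfig.get_platform(),  # e.g. a string naming OS and architecture
        "machine": platform.machine(),  # CPU architecture as reported by the OS
        "meets_minimum": sys.version_info >= (3, 6),
    }


for key, value in describe_environment().items():
    print(f"{key}: {value}")
```

Including this output when asking for help makes installation problems easier to reproduce.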

If available, the C++ code will make use of OpenMP to exploit multiple CPU cores for substantially reduced runtime on modern CPUs. This can be important to prevent slow model training.

The default C/C++ compiler Clang that comes with the macOS command line tools (installed via xcode-select --install) does not support OpenMP out of the box, but it can be added. Alternatively, a suitable compiler can be installed from conda-forge. Please see this detailed guide for more information on both strategies (although written for scikit-image, it also applies here).

A third alternative (and what we did until StarDist 0.8.1) is to install the OpenMP-enabled GCC compiler via Homebrew with brew install gcc (e.g. installing gcc-10/g++-10 or newer). After that, you can build the package like this (adjust compiler names/paths as necessary):

CC=gcc-10 CXX=g++-10 pip install stardist

If you use conda on macOS and after import stardist see errors similar to Symbol not found: _GOMP_loop_nonmonotonic_dynamic_next, please see this issue for a temporary workaround.

As of StarDist 0.8.2, we provide arm64 wheels that should work with macOS on Apple Silicon (M1 chip or newer).

We recommend setting up an arm64 conda environment with GPU-accelerated TensorFlow following Apple's instructions (ensure you are using macOS 12 Monterey or newer) using conda-forge miniforge3 or mambaforge. Then install stardist using pip.

    conda create -y -n stardist-env python=3.9
    conda activate stardist-env
    conda install -c apple tensorflow-deps
    pip install tensorflow-macos tensorflow-metal
    pip install stardist

Please install the Build Tools for Visual Studio 2019 (or newer) from Microsoft to compile extensions for Python 3.6+ (see this for further information). During installation, make sure to select the C++ build tools. Note that the compiler comes with OpenMP support.

We currently provide an ImageJ/Fiji plugin that can be used to run pretrained StarDist models on 2D or 2D+time images. Installation and usage instructions can be found at the plugin page.

We made a plugin for the Python-based multi-dimensional image viewer napari. It directly uses the StarDist Python package and works for 2D and 3D images. Please see the code repository for further details.

Inspired by the Fiji plugin, Pete Bankhead made a custom implementation of StarDist 2D for QuPath to use pretrained models. Please see this page for documentation and installation instructions.

Based on the Fiji plugin, Deborah Schmidt made a StarDist 2D plugin for Icy to use pretrained models. Please see the code repository for further details.

Stefan Helfrich has modified the Fiji plugin to be compatible with KNIME. Please see this page for further details.

    @inproceedings{schmidt2018,
      author    = {Uwe Schmidt and Martin Weigert and Coleman Broaddus and Gene Myers},
      title     = {Cell Detection with Star-Convex Polygons},
      booktitle = {Medical Image Computing and Computer Assisted Intervention - {MICCAI} 2018 - 21st International Conference, Granada, Spain, September 16-20, 2018, Proceedings, Part {II}},
      pages     = {265--273},
      year      = {2018},
      doi       = {10.1007/978-3-030-00934-2_30}
    }

    @inproceedings{weigert2020,
      author    = {Martin Weigert and Uwe Schmidt and Robert Haase and Ko Sugawara and Gene Myers},
      title     = {Star-convex Polyhedra for 3D Object Detection and Segmentation in Microscopy},
      booktitle = {The IEEE Winter Conference on Applications of Computer Vision (WACV)},
      month     = {March},
      year      = {2020},
      doi       = {10.1109/WACV45572.2020.9093435}
    }