
SOFA (Singing-Oriented Forced Aligner) is a forced alignment tool designed specifically for singing voice.

It has the following advantages:

- Easy to install

Note: SOFA is still in beta and may contain bugs; alignment quality is not guaranteed. If you run into problems or have suggestions for improvement, please feel free to raise an issue.

- Use `git clone` to download the code from this repository

- Install conda

- Create a conda environment, requiring Python version `3.8`: run `conda create -n SOFA python=3.8 -y`, then `conda activate SOFA`

- Go to the pytorch official website to install torch

- (Optional, to improve wav file reading speed) Go to the pytorch official website to install torchaudio

- Install other Python libraries: `pip install -r requirements.txt`

-

Download the model file. You can find the trained models in the releases of this repository or in the pretrained model sharing category of the discussion section, with the file extension

.ckpt. -

Place the dictionary file in the

/dictionaryfolder. The default dictionary isopencpop-extension.txt -

Prepare the data for forced alignment and place it in a folder (by default in the

/segmentsfolder), with the following format- segments - singer1 - segment1.lab - segment1.wav - segment2.lab - segment2.wav - ... - singer2 - segment1.lab - segment1.wav - ...Ensure that the

.wavfiles and their corresponding.labfiles are in the same folder.The

.labfile is the transcription for the.wavfile with the same name. The file extension for the transcription can be changed using the--in_formatparameter.After the transcription is converted into a phoneme sequence by the

g2pmodule, it is fed into the model for alignment.For example, when using the

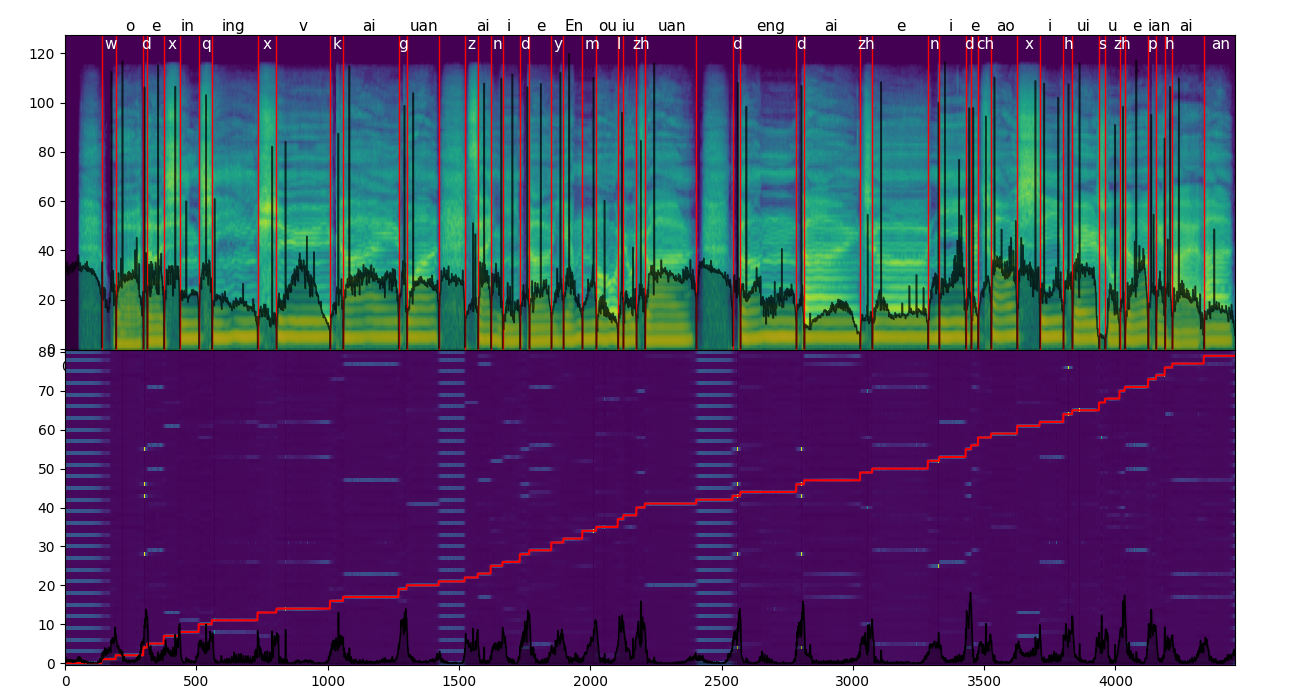

DictionaryG2Pmodule and theopencpop-extensiondictionary by default, if the content of the transcription is:gan shou ting zai wo fa duan de zhi jian, theg2pmodule will convert it based on the dictionary into the phoneme sequenceg an sh ou t ing z ai w o f a duan d e zh ir j ian. For how to use otherg2pmodules, see g2p module usage instructions. -

Command-line inference

Use

python infer.pyto perform inference.Parameters that need to be specified:

--ckpt: (must be specified) The path to the model weights;--folder: The folder where the data to be aligned is stored (default issegments);--in_format: The file extension of the transcription (default islab);--out_formats: The annotation format of the inferred files, multiple formats can be specified, separated by commas (default isTextGrid,htk).--dictionary: The dictionary file (default isdictionary/opencpop-extension.txt);

python infer.py --ckpt checkpoint_path --folder segments_path --dictionary dictionary_path -out_formats output_format1,output_format2...

-

Retrieve the Final Annotation

The final annotation is saved in a folder, the name of which is the annotation format you have chosen. This folder is located in the same directory as the wav files used for inference.
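Before running inference, it can help to confirm that every `.wav` file has a transcription next to it, as the data preparation step requires. The following is a minimal sketch and not part of SOFA itself; the function name `find_unpaired_wavs` is hypothetical, and it assumes only the layout described above (a transcription with the same name as the audio file, in the same folder).

```python
from pathlib import Path


def find_unpaired_wavs(folder, in_format="lab"):
    """Return the .wav files under `folder` that lack a same-named transcription."""
    root = Path(folder)
    missing = []
    for wav in sorted(root.rglob("*.wav")):
        # The transcription is expected in the same folder, with the same stem.
        if not wav.with_suffix("." + in_format).is_file():
            missing.append(wav)
    return missing


if __name__ == "__main__":
    # "segments" mirrors the default folder name mentioned above.
    for wav in find_unpaired_wavs("segments"):
        print(f"missing transcription for {wav}")
```

If a different transcription extension is passed to `--in_format`, the same value can be given as `in_format` here.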

- Using a custom g2p instead of a dictionary

- Matching mode: you can activate it by specifying `-m` during inference. It finds the most probable contiguous sequence segment within the given phoneme sequence, rather than having to use all the phonemes.
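For context on what any g2p module has to produce, the dictionary lookup described in the data preparation step can be sketched in a few lines. This is an illustration only, not SOFA's `DictionaryG2P` implementation: the function names are hypothetical, the tab-separated `word<TAB>phonemes` dictionary format is an assumption, and the sketch ignores any silence or padding tokens a real module may add.

```python
def load_dictionary(lines):
    """Parse 'word<TAB>ph1 ph2 ...' lines into a dict (the format is an assumption)."""
    table = {}
    for line in lines:
        line = line.strip()
        if not line:
            continue
        word, phonemes = line.split("\t", 1)
        table[word] = phonemes.split()
    return table


def dictionary_g2p(text, table):
    """Convert a space-separated transcription into a flat list of phonemes."""
    phonemes = []
    for word in text.split():
        if word not in table:
            raise KeyError(f"'{word}' is not in the dictionary")
        phonemes.extend(table[word])
    return phonemes


# Toy entries for illustration only, not the full opencpop-extension dictionary.
TOY_DICTIONARY = ["gan\tg an", "shou\tsh ou", "ting\tt ing", "zai\tz ai"]

if __name__ == "__main__":
    table = load_dictionary(TOY_DICTIONARY)
    print(" ".join(dictionary_g2p("gan shou ting zai", table)))
    # prints: g an sh ou t ing z ai
```

A custom g2p would replace the lookup in `dictionary_g2p` with its own conversion rule while still returning a phoneme sequence for the model to align.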

- Follow the steps above for setting up the environment. It is recommended to install torchaudio for faster binarization speed;

- Place the training data in the `data` folder in the following format:

  - data
    - full_label
      - singer1
        - wavs
          - audio1.wav
          - audio2.wav
          - ...
        - transcriptions.csv
      - singer2
        - wavs
          - ...
        - transcriptions.csv
    - weak_label
      - singer3
        - wavs
          - ...
        - transcriptions.csv
      - singer4
        - wavs
          - ...
        - transcriptions.csv
    - no_label
      - audio1.wav
      - audio2.wav
      - ...

  Regarding the format of `transcriptions.csv`, see: qiuqiao#5

  Where:

  `transcriptions.csv` only needs to have the correct relative path to the `wavs` folder;

  The `transcriptions.csv` in `weak_label` does not need to have a `ph_dur` column;

- Modify `binarize_config.yaml` as needed, then execute `python binarize.py`;

- Download the pre-trained model you need from releases, modify `train_config.yaml` as needed, then execute `python train.py -p path_to_your_pretrained_model`;

- For training visualization: `tensorboard --logdir=ckpt/`.
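The training data layout above can be checked before running `binarize.py`. The sketch below is not part of SOFA; the function names are hypothetical, and it assumes that `transcriptions.csv` in `full_label` carries a `ph_dur` column (inferred from the statement that `weak_label` does not need one). It does not validate any other columns.

```python
import csv
from pathlib import Path


def check_singer_folder(singer_dir, require_ph_dur):
    """Return a list of problems found in one singer folder."""
    singer_dir = Path(singer_dir)
    problems = []
    if not (singer_dir / "wavs").is_dir():
        problems.append("missing wavs folder")
    csv_path = singer_dir / "transcriptions.csv"
    if not csv_path.is_file():
        problems.append("missing transcriptions.csv")
        return problems
    with open(csv_path, newline="", encoding="utf-8") as f:
        header = next(csv.reader(f), [])
    # Assumption: only full_label data needs phoneme durations.
    if require_ph_dur and "ph_dur" not in header:
        problems.append("transcriptions.csv has no ph_dur column")
    return problems


def check_dataset(data_dir="data"):
    """Map 'label_type/singer' to its problems; singers without problems are omitted."""
    report = {}
    for label_type, require_ph_dur in (("full_label", True), ("weak_label", False)):
        label_dir = Path(data_dir) / label_type
        if not label_dir.is_dir():
            continue
        for singer in sorted(p for p in label_dir.iterdir() if p.is_dir()):
            problems = check_singer_folder(singer, require_ph_dur)
            if problems:
                report[f"{label_type}/{singer.name}"] = problems
    return report


if __name__ == "__main__":
    for singer, problems in check_dataset("data").items():
        print(singer, "->", "; ".join(problems))
```

An empty report means every singer folder under `full_label` and `weak_label` matches the layout; the `no_label` folder holds only audio and is not checked.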