🎯 Distilling datasets in an optimization-free manner.

🎯 The distillation process is architecture-free, which overcomes the cross-architecture problem.

🎯 Distilling large-scale datasets (ImageNet-1K) efficiently.

🎯 The distilled datasets are high-quality and versatile.

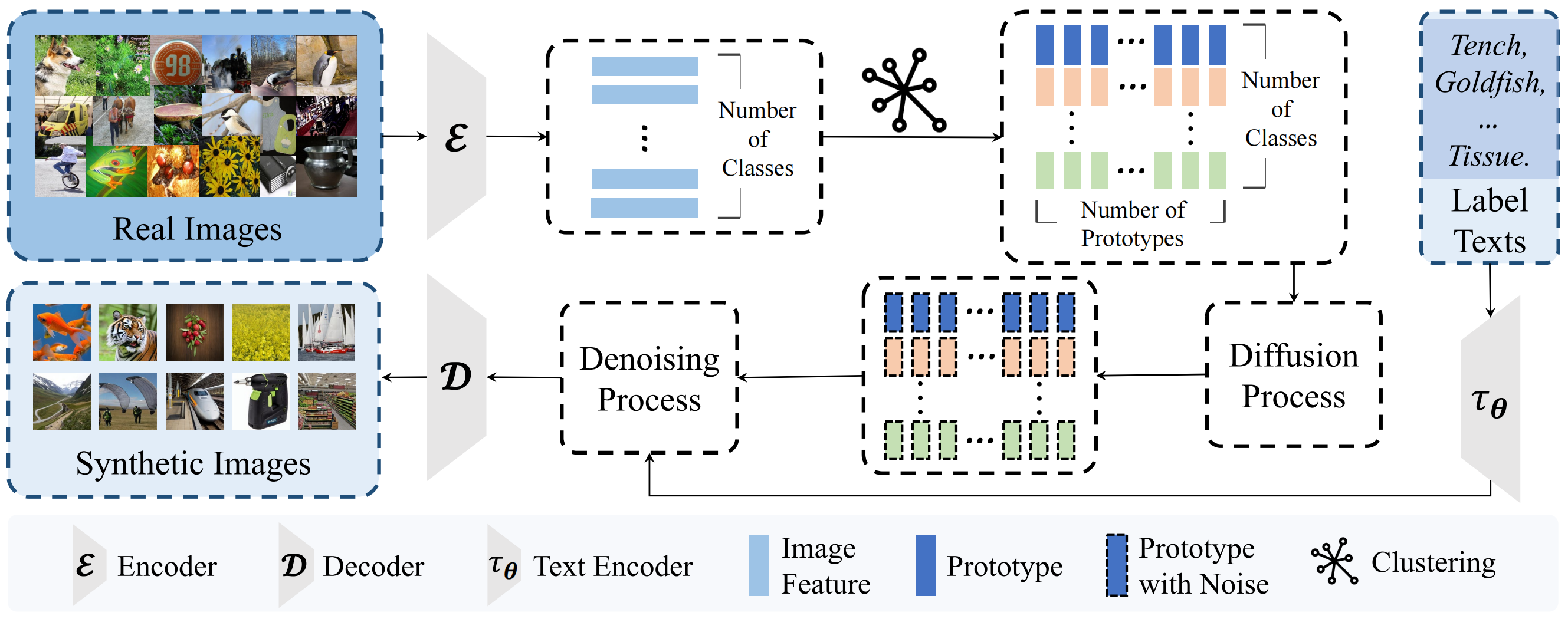

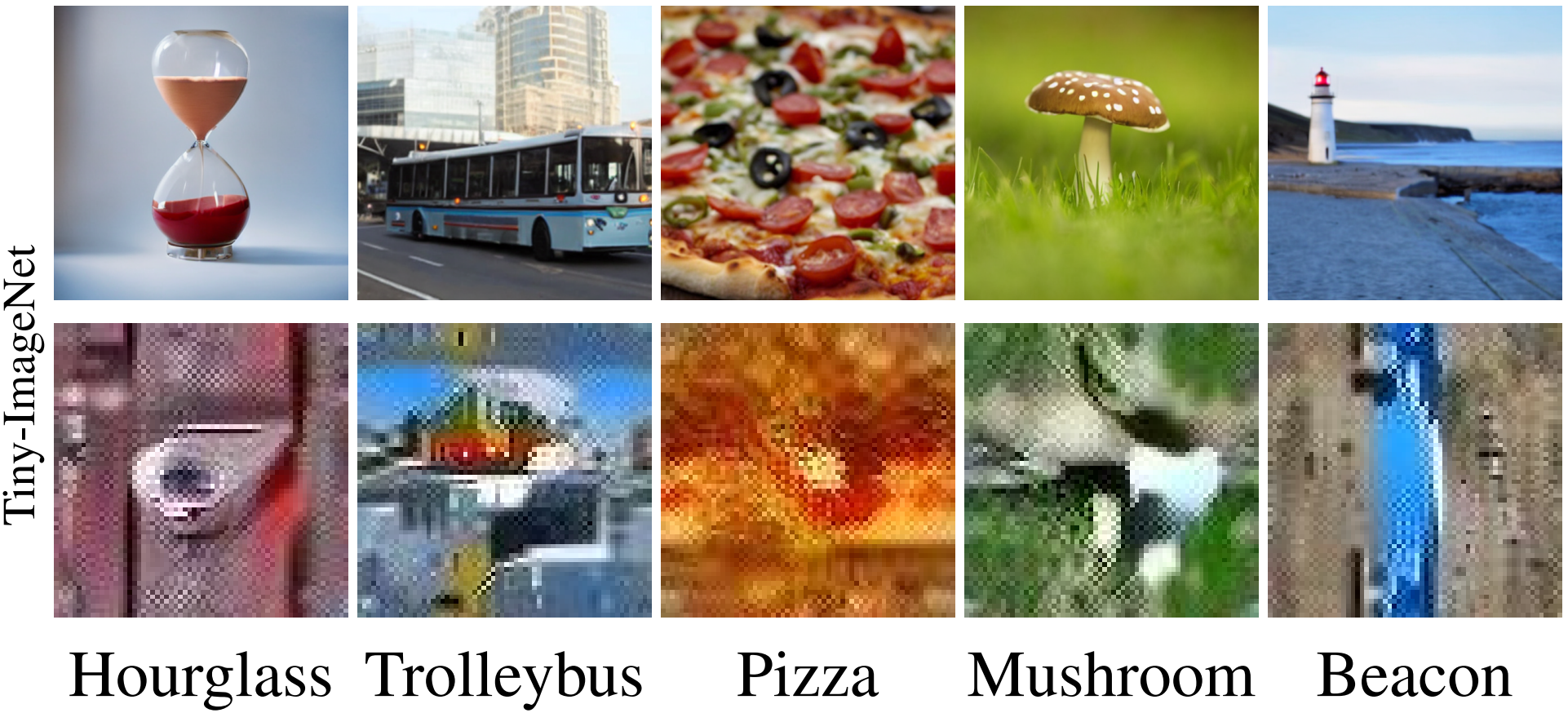

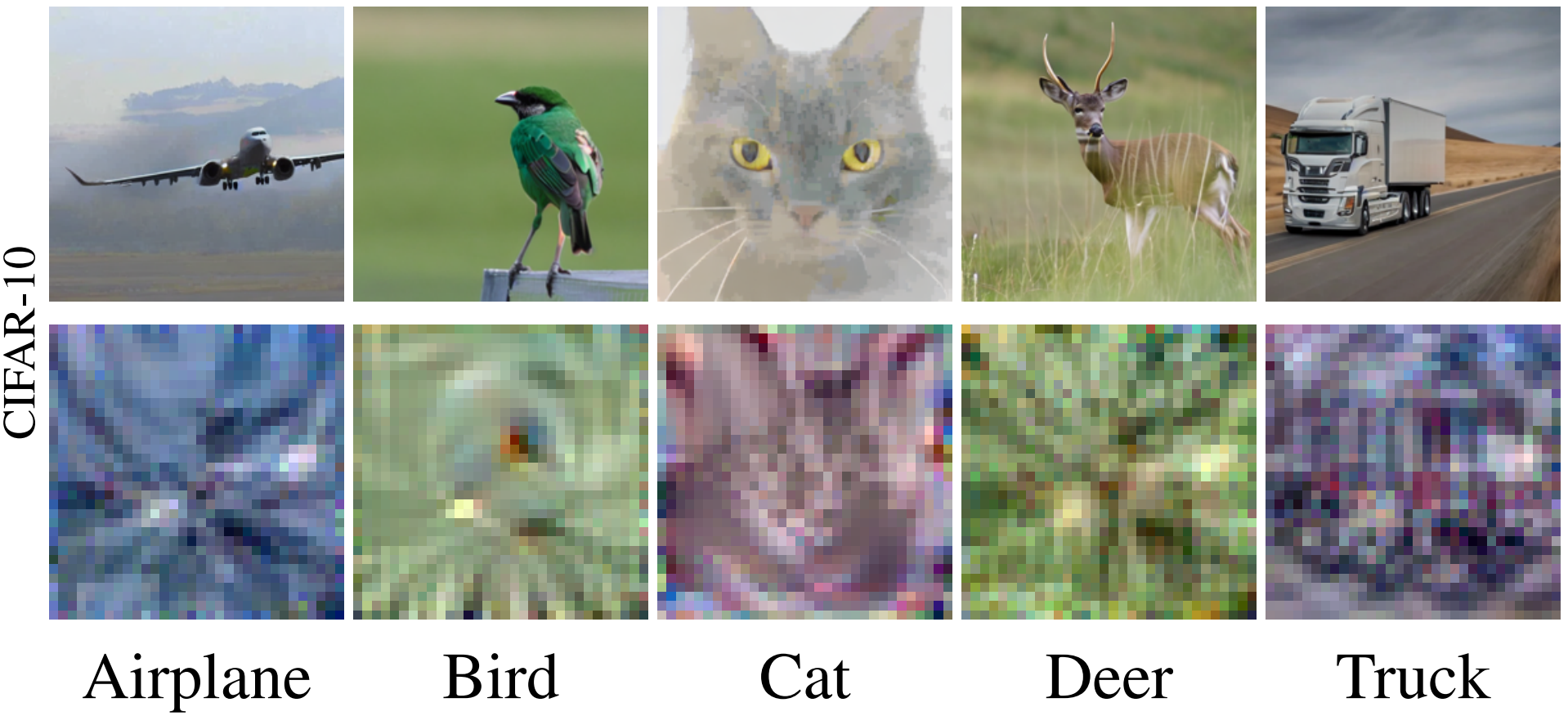

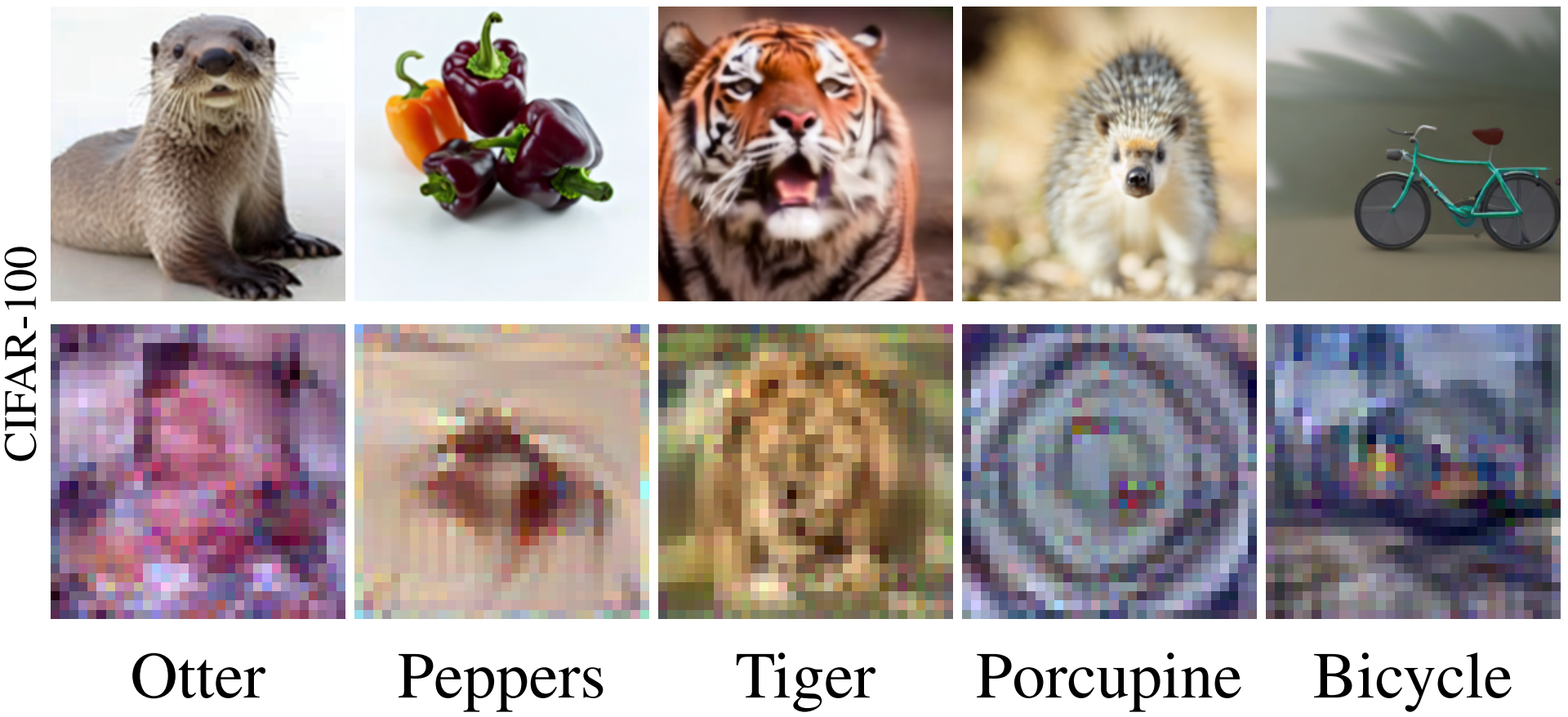

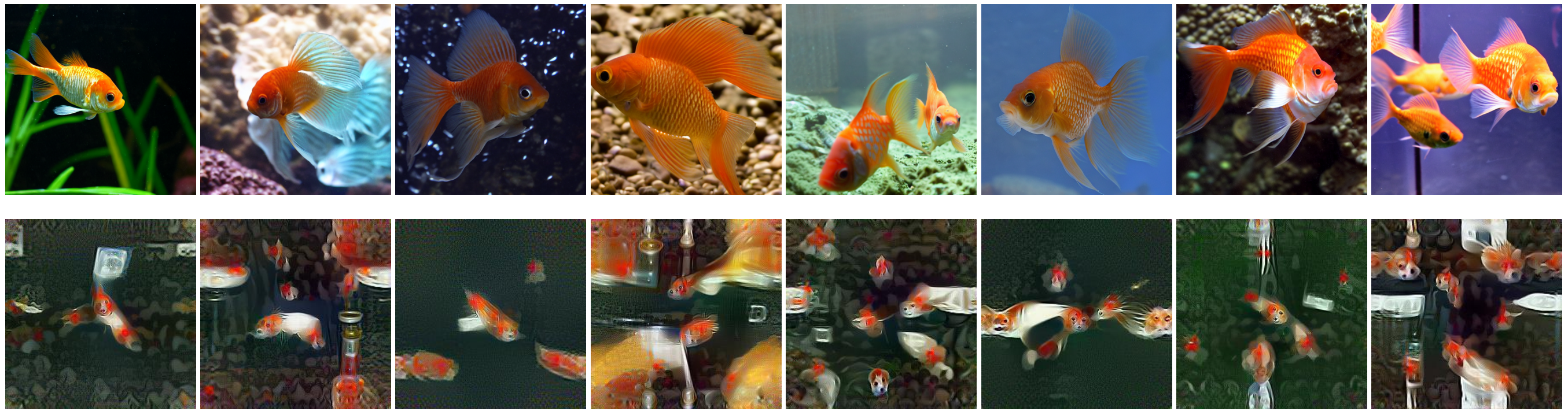

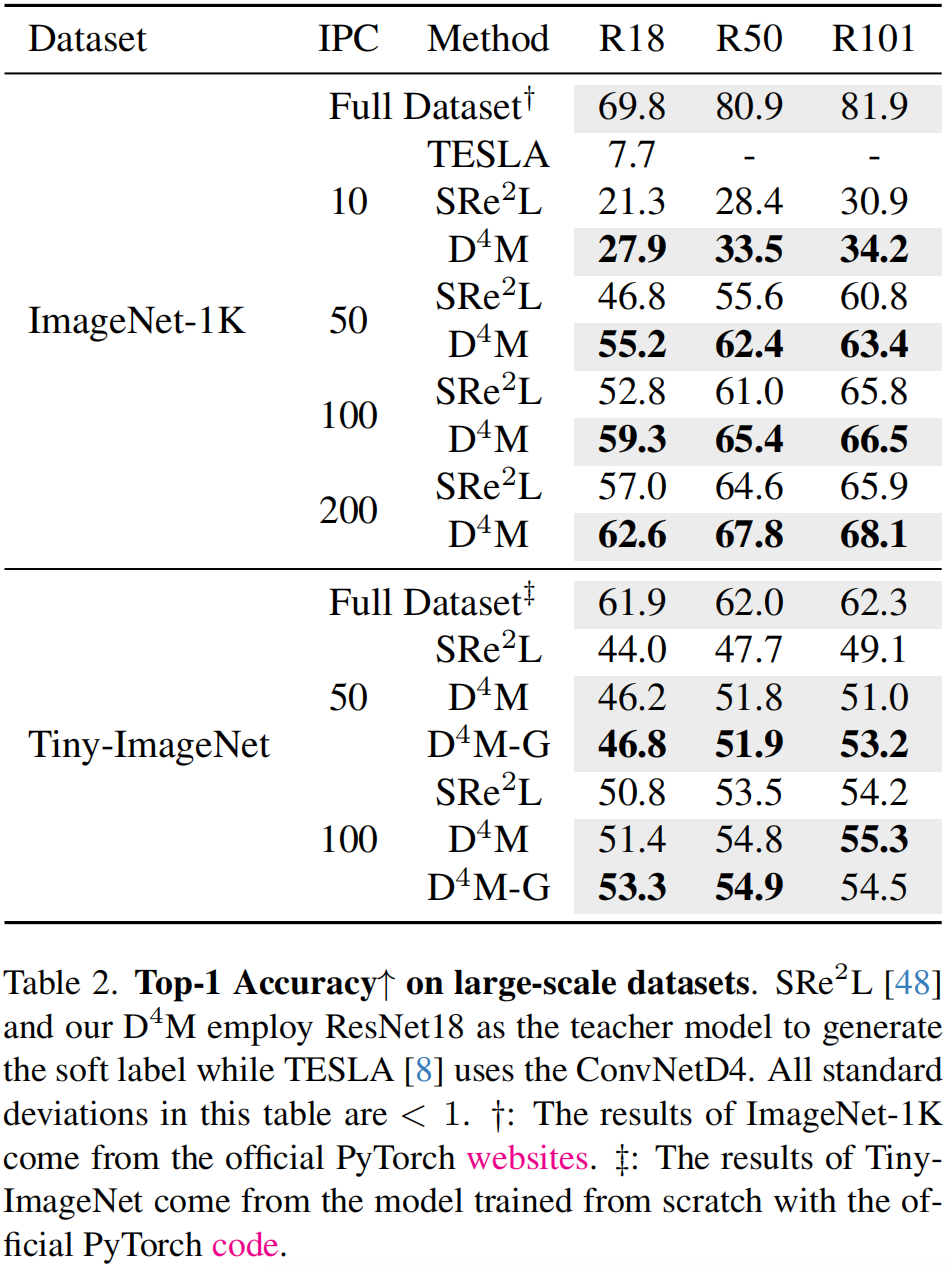

Dataset distillation offers a lightweight synthetic dataset for fast network training with promising test accuracy. We advocate for designing an economical dataset distillation framework that is independent of the matching architectures. Based on empirical observations, we argue that constraining the consistency of the real and synthetic image spaces will enhance cross-architecture generalization. Motivated by this, we introduce Dataset Distillation via Disentangled Diffusion Model (D4M), an efficient framework for dataset distillation. Compared to architecture-dependent methods, D4M employs a latent diffusion model to guarantee consistency and incorporates label information into category prototypes. The distilled datasets are versatile, eliminating the need to repeatedly generate distinct datasets for different architectures. Through comprehensive experiments, D4M demonstrates superior performance and robust generalization, surpassing the SOTA methods in most aspects.
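To make the idea of category prototypes more concrete, here is a minimal sketch that clusters the latent vectors of each class and treats the cluster centers as that class's prototypes. The use of plain k-means, the function names (`kmeans`, `class_prototypes`), and the toy 2-D latents are all assumptions made for illustration; this is not the repository's implementation, and real latents would come from the latent diffusion model's encoder.

```python
import random
from typing import Dict, List, Sequence

Vector = List[float]


def _sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def _mean(points: Sequence[Vector]) -> Vector:
    """Coordinate-wise mean of a non-empty list of vectors."""
    n = len(points)
    return [sum(col) / n for col in zip(*points)]


def kmeans(points: Sequence[Vector], k: int, iters: int = 20, seed: int = 0) -> List[Vector]:
    """Plain Lloyd's k-means; returns k centroids (hypothetical helper)."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(list(points), k)]
    for _ in range(iters):
        clusters: List[List[Vector]] = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: _sq_dist(p, centroids[i]))
            clusters[nearest].append(list(p))
        # Keep the previous centroid if a cluster ends up empty.
        centroids = [_mean(c) if c else centroids[i] for i, c in enumerate(clusters)]
    return centroids


def class_prototypes(latents_by_class: Dict[str, List[Vector]], ipc: int) -> Dict[str, List[Vector]]:
    """Compute `ipc` prototypes per class label (ipc = images per class)."""
    return {label: kmeans(vecs, ipc) for label, vecs in latents_by_class.items()}


if __name__ == "__main__":
    toy = {"class_0": [[0.0, 0.0], [0.0, 1.0], [10.0, 10.0], [10.0, 11.0]]}
    print(class_prototypes(toy, ipc=2))
```

Because the label is attached to each group of prototypes, a synthesis stage can condition on both the prototype and its class, which is the role the abstract assigns to label information.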

- Python >= 3.9

- PyTorch >= 1.12.1

- Torchvision >= 0.13.1
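If you want to confirm these minimum versions before running anything, a small check like the one below may help. It is only a sketch: the helper names (`parse_version`, `meets`, `check_environment`) are made up for this example, and it assumes the packages are installed under the distribution names `torch` and `torchvision`.

```python
import re
import sys
from importlib import metadata
from typing import Dict, Optional, Tuple

# Minimum versions copied from the requirements list above.
MINIMUMS = {"torch": "1.12.1", "torchvision": "0.13.1"}


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn '2.1.0+cu118' into (2, 1, 0), ignoring any suffix."""
    match = re.match(r"\d+(?:\.\d+)*", text)
    if match is None:
        raise ValueError(f"cannot parse version: {text!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def meets(installed: str, minimum: str) -> bool:
    """True if the installed version is at least the minimum."""
    return parse_version(installed) >= parse_version(minimum)


def check_environment() -> Dict[str, Optional[bool]]:
    """Map each requirement to True/False, or None if it is not installed."""
    report: Dict[str, Optional[bool]] = {"python": sys.version_info >= (3, 9)}
    for name, minimum in MINIMUMS.items():
        try:
            report[name] = meets(metadata.version(name), minimum)
        except metadata.PackageNotFoundError:
            report[name] = None
    return report


if __name__ == "__main__":
    for name, ok in check_environment().items():
        status = "missing" if ok is None else ("ok" if ok else "too old")
        print(f"{name}: {status}")
```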

You can install the Diffusers library, or upgrade it to the latest version, by following this page.

Step 1:

Copy the pipeline scripts (the latent-generation pipeline and the image-synthesis pipeline) into the Diffusers library at diffusers/src/diffusers/pipelines/stable_diffusion.

Step 2: Modify Diffusers source code according to scripts/README.md.
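Step 1 can also be scripted. The sketch below copies every `.py` file from a directory you choose into the `stable_diffusion` pipelines folder of a Diffusers source checkout. The function name `copy_pipeline_scripts` and both path arguments are hypothetical; point them at wherever the pipeline scripts and your Diffusers clone actually live. Step 2 (the source modifications described in scripts/README.md) still has to be done by hand.

```python
import shutil
from pathlib import Path
from typing import List

# Target path inside a Diffusers source checkout, as given in Step 1.
TARGET_SUBDIR = Path("src") / "diffusers" / "pipelines" / "stable_diffusion"


def copy_pipeline_scripts(scripts_dir: Path, diffusers_root: Path) -> List[Path]:
    """Copy all *.py files from scripts_dir into the Diffusers pipeline folder.

    scripts_dir:    directory holding the pipeline scripts (assumed location).
    diffusers_root: root of the cloned Diffusers repository.
    """
    target = Path(diffusers_root) / TARGET_SUBDIR
    if not target.is_dir():
        raise FileNotFoundError(f"{target} not found; is this a Diffusers source checkout?")
    copied = []
    for script in sorted(Path(scripts_dir).glob("*.py")):
        copied.append(Path(shutil.copy2(script, target / script.name)))
    return copied
```

Note that this only works with a source checkout of Diffusers; a wheel installed from PyPI does not have the `src/` layout.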

cd distillation

sh gen_prototype_imgnt.sh

cd distillation

sh gen_syn_image_imgnt.sh

If you do not need the JSON prototype files for exploration, you can combine the generation and synthesis processes into one and skip the I/O steps.
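One way to picture the combined process is sketched below: the prototypes are handed from the generation stage to the synthesis stage in memory, and the JSON file is written only when a path is given. The `distill` function and its two callable parameters are hypothetical placeholders, not names from this repository; you would wrap the actual generation and synthesis code in them.

```python
import json
from pathlib import Path
from typing import Any, Callable, Dict, List, Optional

Prototypes = Dict[str, List[List[float]]]


def distill(
    generate_prototypes: Callable[[], Prototypes],
    synthesize_images: Callable[[Prototypes], Any],
    dump_path: Optional[Path] = None,
) -> Any:
    """Run both stages back to back, passing prototypes in memory."""
    prototypes = generate_prototypes()
    if dump_path is not None:
        # Optional: keep the JSON only when you want to inspect the prototypes.
        Path(dump_path).write_text(json.dumps(prototypes))
    return synthesize_images(prototypes)


if __name__ == "__main__":
    # Stand-in stages so the sketch runs without a diffusion model.
    def fake_generate() -> Prototypes:
        return {"class_0": [[0.1, 0.2], [0.3, 0.4]]}

    def fake_synthesize(protos: Prototypes) -> List[str]:
        return [f"{label}_{i}" for label, vecs in protos.items() for i in range(len(vecs))]

    print(distill(fake_generate, fake_synthesize))
```

Keeping `dump_path` optional preserves the two-script workflow for anyone who still wants to explore the prototype files.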

cd matching

sh matching.sh

cd validate

sh train_FKD.sh

For more qualitative results, please see the supplementary material in our paper.

Our code is developed based on the following codebases, thanks for sharing!

- Squeeze, Recover and Relabel: Dataset Condensation at ImageNet Scale From A New Perspective

- FKD: A Fast Knowledge Distillation Framework for Visual Recognition

If you find this work helpful, please cite:

@InProceedings{Su_2024_CVPR,

author = {Su, Duo and Hou, Junjie and Gao, Weizhi and Tian, Yingjie and Tang, Bowen},

title = {D{\textasciicircum}4M: Dataset Distillation via Disentangled Diffusion Model},

booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

month = {June},

year = {2024},

pages = {5809-5818}

}