😊 EasyAnimate is an end-to-end solution for generating high-resolution and long videos. We can train transformer based diffusion generators, train VAEs for processing long videos, and preprocess metadata.

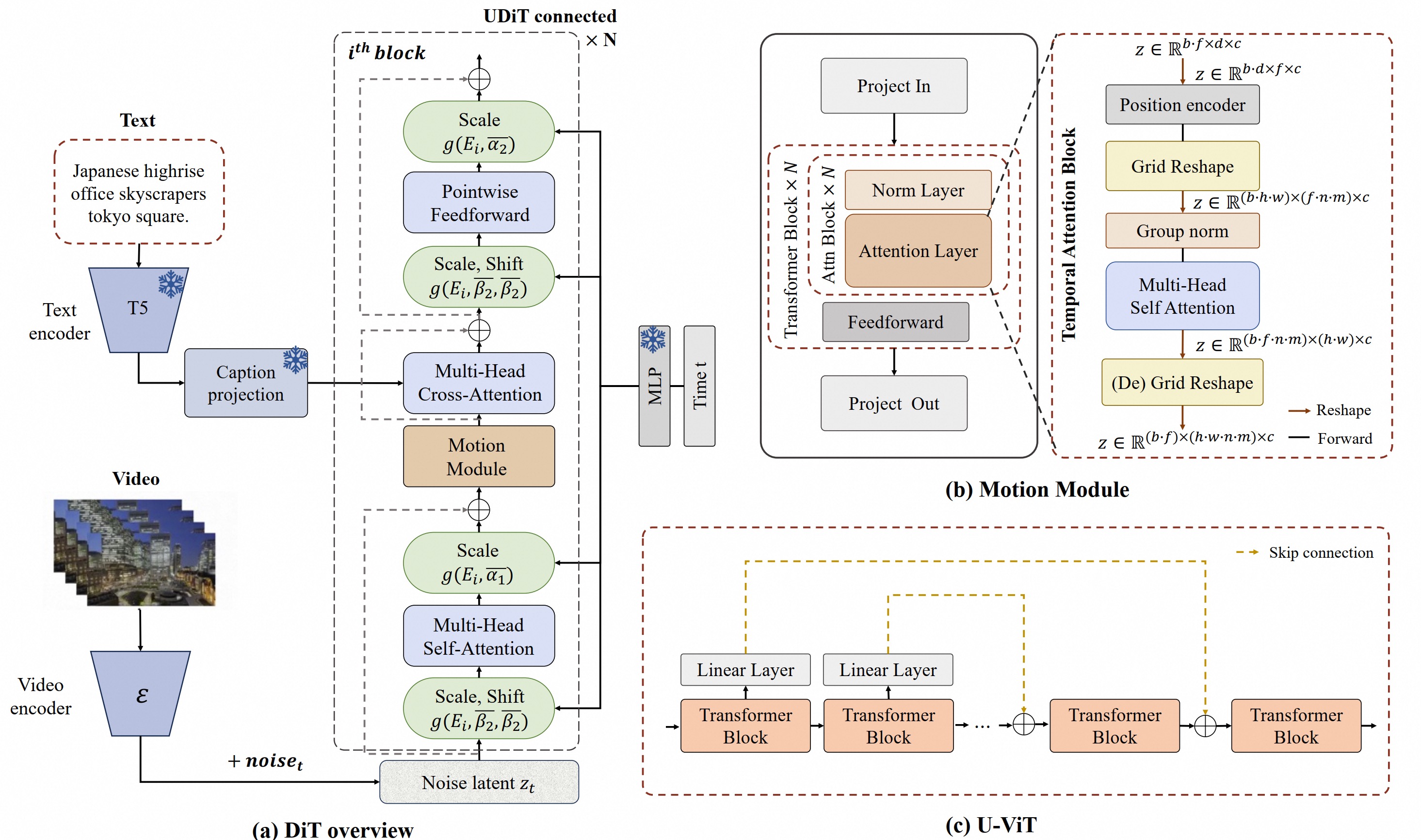

😊 Based on Sora like structure and DIT, we use transformer as a diffuser for video generation. We built easyanimate based on motion module, u-vit and slice-vae. In the future, we will try more training programs to improve the effect.

😊 Welcome!

English | 简体中文

- Table of Contents

- Introduction

- Quick Start

- How to use

- Model zoo

- Algorithm Detailed

- TODO List

- Contact Us

- Reference

- License

EasyAnimate is a pipeline based on the transformer architecture that can be used to generate AI photos and videos, train baseline models and Lora models for the Diffusion Transformer. We support making predictions directly from the pre-trained EasyAnimate model to generate videos of about different resolutions, 6 seconds with 24 fps (1 ~ 144 frames, in the future, we will support longer videos). Users are also supported to train their own baseline models and Lora models to perform certain style transformations.

We will support quick pull-ups from different platforms, refer to Quick Start.

What's New:

- ModelScope-Sora "Data Directors" creative sprint has been annouced using EasyAnimate as the training backbone to investigate the influence of data preprocessing. Please visit the competition's official website for more information. [ 2024.06.17 ]

- Updated to v2, supports a maximum of 144 frames (768x768, 6s, 24fps) for generation. [ 2024.05.26 ]

- Create Code! Support for Windows and Linux Now. [ 2024.04.12 ]

Function:

These are our generated results GALLERY (Click the image below to see the video):

Our UI interface is as follows:

DSW has free GPU time, which can be applied once by a user and is valid for 3 months after applying.

Aliyun provide free GPU time in Freetier, get it and use in Aliyun PAI-DSW to start EasyAnimate within 5min!

If you are using docker, please make sure that the graphics card driver and CUDA environment have been installed correctly in your machine.

Then execute the following commands in this way:

EasyAnimateV2:

# pull image

docker pull mybigpai-public-registry.cn-beijing.cr.aliyuncs.com/easycv/torch_cuda:easyanimate

# enter image

docker run -it -p 7860:7860 --network host --gpus all --security-opt seccomp:unconfined --shm-size 200g mybigpai-public-registry.cn-beijing.cr.aliyuncs.com/easycv/torch_cuda:easyanimate

# clone code

git clone https://github.com/aigc-apps/EasyAnimate.git

# enter EasyAnimate's dir

cd EasyAnimate

# download weights

mkdir models/Diffusion_Transformer

mkdir models/Motion_Module

mkdir models/Personalized_Model

wget https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/easyanimate/Diffusion_Transformer/EasyAnimateV2-XL-2-512x512.tar -O models/Diffusion_Transformer/EasyAnimateV2-XL-2-512x512.tar

cd models/Diffusion_Transformer/

tar -xvf EasyAnimateV2-XL-2-512x512.tar

cd ../../

(Obsolete) EasyAnimateV1:

# pull image

docker pull mybigpai-public-registry.cn-beijing.cr.aliyuncs.com/easycv/torch_cuda:easyanimate

# enter image

docker run -it -p 7860:7860 --network host --gpus all --security-opt seccomp:unconfined --shm-size 200g mybigpai-public-registry.cn-beijing.cr.aliyuncs.com/easycv/torch_cuda:easyanimate

# clone code

git clone https://github.com/aigc-apps/EasyAnimate.git

# enter EasyAnimate's dir

cd EasyAnimate

# download weights

mkdir models/Diffusion_Transformer

mkdir models/Motion_Module

mkdir models/Personalized_Model

wget https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/easyanimate/Motion_Module/easyanimate_v1_mm.safetensors -O models/Motion_Module/easyanimate_v1_mm.safetensors

wget https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/easyanimate/Personalized_Model/easyanimate_portrait.safetensors -O models/Personalized_Model/easyanimate_portrait.safetensors

wget https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/easyanimate/Personalized_Model/easyanimate_portrait_lora.safetensors -O models/Personalized_Model/easyanimate_portrait_lora.safetensors

wget https://pai-aigc-photog.oss-cn-hangzhou.aliyuncs.com/easyanimate/Diffusion_Transformer/PixArt-XL-2-512x512.tar -O models/Diffusion_Transformer/PixArt-XL-2-512x512.tar

cd models/Diffusion_Transformer/

tar -xvf PixArt-XL-2-512x512.tar

cd ../../

We have verified EasyAnimate execution on the following environment:

The detailed of Linux:

- OS: Ubuntu 20.04, CentOS

- python: py3.10 & py3.11

- pytorch: torch2.2.0

- CUDA: 11.8

- CUDNN: 8+

- GPU: Nvidia-A10 24G & Nvidia-A100 40G & Nvidia-A100 80G

We need about 60GB available on disk (for saving weights), please check!

We'd better place the weights along the specified path:

EasyAnimateV2:

📦 models/

├── 📂 Diffusion_Transformer/

│ └── 📂 EasyAnimateV2-XL-2-512x512/

├── 📂 Personalized_Model/

│ └── your trained trainformer model / your trained lora model (for UI load)

(Obsolete) EasyAnimateV1:

📦 models/

├── 📂 Diffusion_Transformer/

│ └── 📂 PixArt-XL-2-512x512/

├── 📂 Motion_Module/

│ └── 📄 easyanimate_v1_mm.safetensors

├── 📂 Personalized_Model/

│ ├── 📄 easyanimate_portrait.safetensors

│ └── 📄 easyanimate_portrait_lora.safetensors

- Step 1: Download the corresponding weights and place them in the models folder.

- Step 2: Modify prompt, neg_prompt, guidance_scale, and seed in the predict_t2v.py file.

- Step 3: Run the predict_t2v.py file, wait for the generated results, and save the results in the samples/easyanimate-videos folder.

- Step 4: If you want to combine other backbones you have trained with Lora, modify the predict_t2v.py and Lora_path in predict_t2v.py depending on the situation.

- Step 1: Download the corresponding weights and place them in the models folder.

- Step 2: Run the app.py file to enter the graph page.

- Step 3: Select the generated model based on the page, fill in prompt, neg_prompt, guidance_scale, and seed, click on generate, wait for the generated result, and save the result in the samples folder.

A complete EasyAnimate training pipeline should include data preprocessing, Video VAE training, and Video DiT training. Among these, Video VAE training is optional because we have already provided a pre-trained Video VAE.

We have provided a simple demo of training the Lora model through image data, which can be found in the wiki for details.

A complete data preprocessing link for long video segmentation, cleaning, and description can refer to README in the video captions section.

If you want to train a text to image and video generation model. You need to arrange the dataset in this format.

📦 project/

├── 📂 datasets/

│ ├── 📂 internal_datasets/

│ ├── 📂 videos/

│ │ ├── 📄 00000001.mp4

│ │ ├── 📄 00000001.jpg

│ │ └── 📄 .....

│ └── 📄 json_of_internal_datasets.json

The json_of_internal_datasets.json is a standard JSON file. The file_path in the json can to be set as relative path, as shown in below:

[

{

"file_path": "videos/00000001.mp4",

"text": "A group of young men in suits and sunglasses are walking down a city street.",

"type": "video"

},

{

"file_path": "train/00000001.jpg",

"text": "A group of young men in suits and sunglasses are walking down a city street.",

"type": "image"

},

.....

]You can also set the path as absolute path as follow:

[

{

"file_path": "/mnt/data/videos/00000001.mp4",

"text": "A group of young men in suits and sunglasses are walking down a city street.",

"type": "video"

},

{

"file_path": "/mnt/data/train/00000001.jpg",

"text": "A group of young men in suits and sunglasses are walking down a city street.",

"type": "image"

},

.....

]Video VAE training is an optional option as we have already provided pre trained Video VAEs. If you want to train video vae, you can refer to README in the video vae section.

If the data format is relative path during data preprocessing, please set scripts/train_t2iv.sh as follow.

export DATASET_NAME="datasets/internal_datasets/"

export DATASET_META_NAME="datasets/internal_datasets/json_of_internal_datasets.json"

If the data format is absolute path during data preprocessing, please set scripts/train_t2iv.sh as follow.

export DATASET_NAME=""

export DATASET_META_NAME="/mnt/data/json_of_internal_datasets.json"

Then, we run scripts/train_t2iv.sh.

sh scripts/train_t2iv.sh(Obsolete) EasyAnimateV1:

If you want to train EasyAnimateV1. Please switch to the git branch v1.EasyAnimateV2:

| Name | Type | Storage Space | Url | Hugging Face | Description |

|---|---|---|---|---|---|

| EasyAnimateV2-XL-2-512x512.tar | EasyAnimateV2 | 16.2GB | Download | 🤗Link | EasyAnimateV2 official weights for 512x512 resolution. Training with 144 frames and fps 24 |

| EasyAnimateV2-XL-2-768x768.tar | EasyAnimateV2 | 16.2GB | Download | 🤗Link | EasyAnimateV2 official weights for 768x768 resolution. Training with 144 frames and fps 24 |

| easyanimatev2_minimalism_lora.safetensors | Lora of Pixart | 485.1MB | Download | - | A lora training with a specifial type images. Images can be downloaded from Url. |

(Obsolete) EasyAnimateV1:

| Name | Type | Storage Space | Url | Description |

|---|---|---|---|---|

| easyanimate_v1_mm.safetensors | Motion Module | 4.1GB | download | Training with 80 frames and fps 12 |

| Name | Type | Storage Space | Url | Description |

|---|---|---|---|---|

| PixArt-XL-2-512x512.tar | Pixart | 11.4GB | download | Pixart-Alpha official weights |

| easyanimate_portrait.safetensors | Checkpoint of Pixart | 2.3GB | download | Training with internal portrait datasets |

| easyanimate_portrait_lora.safetensors | Lora of Pixart | 654.0MB | download | Training with internal portrait datasets |

Video Cut

For long video cut, EasyAnimate utilizes PySceneDetect to identify scene changes within the video and performs scene cutting based on certain threshold values to ensure consistency in the themes of the video segments. After cutting, we only keep segments with lengths ranging from 3 to 10 seconds for model training.

Video Cleaning and Description

Following SVD's data preparation process, EasyAnimate provides a simple yet effective data processing pipeline for high-quality data filtering and labeling. It also supports distributed processing to accelerate the speed of data preprocessing. The overall process is as follows:

- Duration filtering: Analyze the basic information of the video to filter out low-quality videos that are short in duration or low in resolution.

- Aesthetic filtering: Filter out videos with poor content (blurry, dim, etc.) by calculating the average aesthetic score of uniformly distributed 4 frames.

- Text filtering: Use easyocr to calculate the text proportion of middle frames to filter out videos with a large proportion of text.

- Motion filtering: Calculate interframe optical flow differences to filter out videos that move too slowly or too quickly.

- Text description: Recaption video frames using videochat2 and vila. PAI is also developing a higher quality video recaption model, which will be released for use as soon as possible.

We have adopted PixArt-alpha as the base model and modified the VAE and DiT model structures on this basis to better support video generation. The overall structure of EasyAnimate is as follows:

The diagram below outlines the pipeline of EasyAnimate. It includes the Text Encoder, Video VAE (video encoder and decoder), and Diffusion Transformer (DiT). The T5 Encoder is used as the text encoder. Other components are detailed in the sections below.

To introduce feature information along the temporal axis, EasyAnimate incorporates the Motion Module to achieve the expansion from 2D images to 3D videos. For better generation effects, it jointly finetunes the Backbone together with the Motion Module, thereby achieving image generation and video generation within a single Pipeline.

Additionally, referencing U-ViT, it introduces a skip connection structure into EasyAnimate to further optimize deeper features by incorporating shallow features. A fully connected layer is also zero-initialized for each skip connection structure, allowing it to be applied as a plug-in module to previously trained and well-performing DiTs.

Moreover, it proposes Slice VAE, which addresses the memory difficulties encountered by MagViT when dealing with long and large videos, while also achieving greater compression in the temporal dimension during video encoding and decoding stages compared to MagViT.

For more details, please refer to arxiv.

- Support model with larger resolution.

- Support video inpaint model.

- Use Dingding to search group 77450006752 or Scan to join

- You need to scan the image to join the WeChat group or if it is expired, add this student as a friend first to invite you.

- magvit: https://github.com/google-research/magvit

- PixArt: https://github.com/PixArt-alpha/PixArt-alpha

- Open-Sora-Plan: https://github.com/PKU-YuanGroup/Open-Sora-Plan

- Open-Sora: https://github.com/hpcaitech/Open-Sora

- Animatediff: https://github.com/guoyww/AnimateDiff

This project is licensed under the Apache License (Version 2.0).