This is not an official Google product

This repo includes 4 demos from my Google Next talk and Google I/O talk on the Cloud ML APIs. To run the demos, follow the instructions below.

- `cd` into `vision-api-firebase`
- Create a project in the Firebase console and install the Firebase CLI
- Run `firebase login` via the CLI and then `firebase init functions` to initialize the Firebase SDK for Cloud Functions. When prompted, don't overwrite `functions/package.json` or `functions/index.js`.
- In your Cloud console for the same project, enable the Vision API

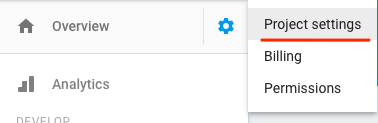

- Generate a service account for your project by navigating to the "Project settings" tab in your Firebase console and then selecting "Service Accounts". Click "Generate New Private Key" and save the file to your `functions/` directory in a file called `keyfile.json`.
- In `functions/index.js`, replace both instances of `your-firebase-project-id` with the ID of your Firebase project
- Deploy your Cloud Function by running `firebase deploy --only functions`
- From the Authentication tab in your Firebase console, enable Twitter authentication (you can use whichever auth provider you'd like; I chose Twitter).
- Run the frontend locally by running `firebase serve` from the `vision-api-firebase/` directory of this project. Navigate to `localhost:5000` to try uploading a photo. After uploading a photo, check your Functions logs and then your Firebase Database to confirm the function executed correctly.
- Deploy the frontend by running `firebase deploy --only hosting`. For future deploys you can run `firebase deploy` to deploy Functions and Hosting simultaneously.
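If the deployed function fails to authenticate, a mismatch between `keyfile.json` and the project ID in `functions/index.js` is one possible cause. The following is a minimal Python sketch for checking that, not part of this repo. The function name `keyfile_problems` is hypothetical, and the field list is an assumption based on the fields service account keyfiles normally contain.

```python
import json

# Fields that Google service account keyfiles normally contain (assumed here).
REQUIRED_FIELDS = ("type", "project_id", "private_key", "client_email")


def keyfile_problems(key, expected_project_id):
    """Return a list of human-readable problems found in a parsed keyfile."""
    problems = [
        f"missing field: {name}" for name in REQUIRED_FIELDS if not key.get(name)
    ]
    if key.get("type") and key["type"] != "service_account":
        problems.append(f"unexpected type: {key['type']}")
    if key.get("project_id") and key["project_id"] != expected_project_id:
        problems.append(
            f"project_id is {key['project_id']}, expected {expected_project_id}"
        )
    return problems


if __name__ == "__main__":
    # Placeholder from functions/index.js; replace with your real project ID.
    project_id = "your-firebase-project-id"
    try:
        with open("functions/keyfile.json") as f:
            key = json.load(f)
    except (OSError, ValueError) as err:
        print(f"could not read functions/keyfile.json: {err}")
    else:
        for line in keyfile_problems(key, project_id) or ["keyfile looks consistent"]:
            print(line)
```

Run it from the `vision-api-firebase/` directory. It only inspects the file locally and does not contact Firebase or confirm that the Vision API is enabled.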

- `cd` into `speech/`
- Make sure you have SoX installed. On a Mac: `brew install sox --with-flac`
- Run the script: `bash request.sh`
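Before running `request.sh`, it can help to confirm that SoX is actually on your `PATH`. Here is a minimal Python sketch, not part of this repo. The function name `find_tool` is hypothetical, and the check only confirms that the executable exists, not that it was built with FLAC support.

```python
import shutil


def find_tool(name):
    """Return the full path to an executable on PATH, or None if it is missing."""
    return shutil.which(name)


if __name__ == "__main__":
    path = find_tool("sox")
    if path is None:
        print("sox not found on PATH; install it before running request.sh")
    else:
        print(f"sox found at {path}")
```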

- `cd` into `natural-language/`
- Generate Twitter Streaming API credentials and copy them to `local.json`
- Create a Google Cloud project, generate a JSON keyfile, and add the filepath to `local.json`
- Create a BigQuery dataset and table, add them to `local.json`
- Generate an API key and add it to `local.json`
- Change line 37 to filter tweets on whichever terms you'd like
- Run the script: `node twitter.js`
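Several of the steps above fill in values in `local.json`, so it is easy to leave one blank. The sketch below is a hypothetical Python helper, not part of this repo, that lists any keys whose value is still empty. It assumes `local.json` holds a JSON object and makes no assumption about the key names.

```python
import json


def find_empty_values(config, prefix=""):
    """Recursively collect dotted key paths whose value is None or an empty string."""
    missing = []
    for key, value in config.items():
        path = f"{prefix}{key}"
        if isinstance(value, dict):
            missing.extend(find_empty_values(value, prefix=f"{path}."))
        elif value is None or value == "":
            missing.append(path)
    return missing


if __name__ == "__main__":
    try:
        with open("local.json") as f:
            config = json.load(f)
    except (OSError, ValueError) as err:
        print(f"could not read local.json: {err}")
    else:
        empty = find_empty_values(config)
        if empty:
            print("still empty in local.json: " + ", ".join(empty))
        else:
            print("no empty values found in local.json")
```

Run it from the `natural-language/` directory before `node twitter.js`. It only detects blank values; it cannot tell whether a credential is valid.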

- `cd` into `vision-speech-nl-translate`
- Make sure you've set up your `GOOGLE_APPLICATION_CREDENTIALS` with a Cloud project that has the Vision, Speech, NL, and Translation APIs enabled
- Run the script: `python textify.py`
- Note: if you're running it with image OCR, copy an image file to your local directory
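A common setup problem is a `GOOGLE_APPLICATION_CREDENTIALS` variable that is unset or points to a file that no longer exists. The following is a minimal Python sketch for checking that before running `textify.py`. It is not part of this repo, the function name `credentials_problem` is hypothetical, and it does not verify that the four APIs are enabled on the project.

```python
import os


def credentials_problem(env=None):
    """Describe what is wrong with the credentials setup, or return None if it looks fine."""
    env = os.environ if env is None else env
    path = env.get("GOOGLE_APPLICATION_CREDENTIALS")
    if not path:
        return "GOOGLE_APPLICATION_CREDENTIALS is not set"
    if not os.path.isfile(path):
        return f"GOOGLE_APPLICATION_CREDENTIALS points to a missing file: {path}"
    return None


if __name__ == "__main__":
    problem = credentials_problem()
    print(problem if problem else "credentials file found")
```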