BOVText: A Large-Scale, Bilingual Open World Dataset for Video Text Spotting

Updated on December 10, 2021 (released the full dataset: 2,021 videos)

Updated on June 06, 2021 (Added evaluation metric)

Released on May 26, 2021

YouTube Demo | Homepage | Paper

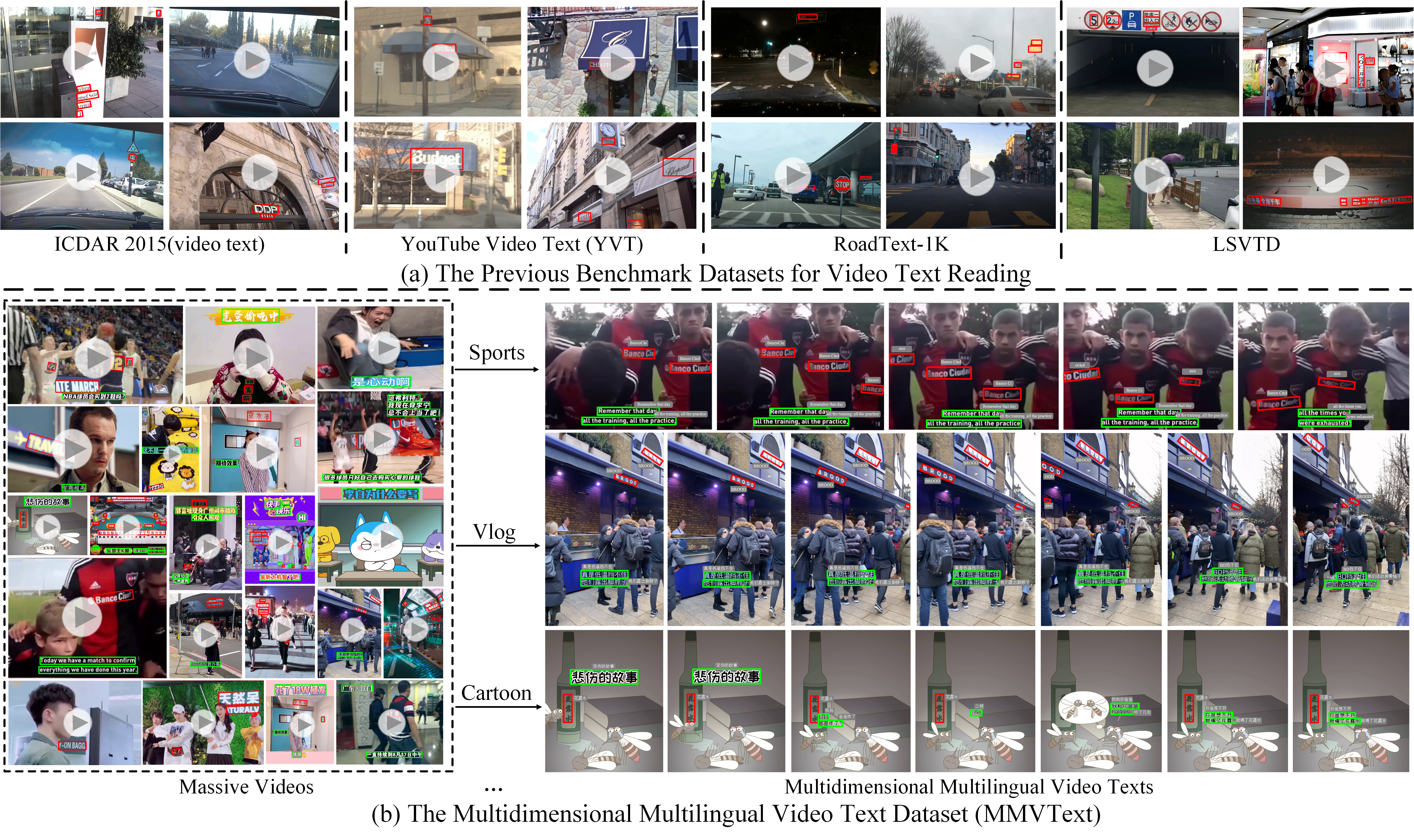

We create a new large-scale benchmark dataset named Bilingual, Open World Video Text (BOVText), the first large-scale, multilingual benchmark for video text spotting across a wide variety of scenarios. All data are collected from Kuaishou and YouTube.

BOVText has three main features:

- Large-Scale: we provide 2,000+ videos with more than 1,750,000 frames, four times larger than the largest existing dataset for text in videos.

- Open Scenario: BOVText covers 30+ open categories spanning a wide range of scenarios, e.g., life vlogs, sports news, autonomous driving, and cartoons. In addition, caption text and scene text are tagged separately because they carry different meanings in a video: caption text mainly conveys the video's theme, while scene text describes the scene itself.

- Bilingual: BOVText provides bilingual (Chinese and English) text annotations to promote communication across cultures.

BOVText supports four tasks, but mainly focuses on two of them (video text tracking and end-to-end video text spotting):

- Text detection in video frames.

- Text recognition in video frames.

- Video text tracking.

- End-to-end text spotting in videos.

MOTP (Multiple Object Tracking Precision) [1], MOTA (Multiple Object Tracking Accuracy) [2], and IDF1 [3,4] are the three main metrics used to evaluate Task 1 (text tracking) on BOVText. In particular, we use the publicly available py-motmetrics library (https://github.com/cheind/py-motmetrics) to compute these metrics.
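For reference, the formulas behind these metrics can be sketched as below. This is a minimal illustration that starts from counts already accumulated over all frames; it is not the official evaluation script, which leaves the frame-by-frame matching to py-motmetrics, and the `TrackingCounts` class and the function names are hypothetical. MOTA subtracts misses, false positives and ID switches (relative to the number of ground-truth boxes) from 1, so it becomes negative whenever those errors outnumber the ground truth, which explains the negative MOTA values in the results table.

```python
from dataclasses import dataclass


@dataclass
class TrackingCounts:
    """Totals accumulated over every frame of every test video (hypothetical container)."""
    num_gt: int           # ground-truth boxes, summed over all frames
    false_positives: int  # predicted boxes matched to no ground truth
    misses: int           # ground-truth boxes matched to no prediction
    id_switches: int      # matches whose predicted track ID changed
    idtp: int             # identity true positives (after global ID assignment)
    idfp: int             # identity false positives
    idfn: int             # identity false negatives


def mota(c: TrackingCounts) -> float:
    # CLEAR MOT: 1 - (FN + FP + IDSW) / GT; unbounded below, so it can be negative.
    return 1.0 - (c.misses + c.false_positives + c.id_switches) / c.num_gt


def motp(matched_ious: list[float]) -> float:
    # Mean overlap (IoU) over all matched prediction/ground-truth pairs.
    return sum(matched_ious) / len(matched_ious)


def id_scores(c: TrackingCounts) -> tuple[float, float, float]:
    # Identity precision, recall and F1 (Ristani et al.).
    idp = c.idtp / (c.idtp + c.idfp)
    idr = c.idtp / (c.idtp + c.idfn)
    idf1 = 2 * c.idtp / (2 * c.idtp + c.idfp + c.idfn)
    return idp, idr, idf1


if __name__ == "__main__":
    counts = TrackingCounts(num_gt=100, false_positives=90, misses=30,
                            id_switches=5, idtp=60, idfp=40, idfn=40)
    print(mota(counts))  # -0.25: 125 errors against 100 ground-truth boxes
    print(id_scores(counts))
```

Here MOTP is written as the mean IoU of matched pairs; py-motmetrics itself accumulates a distance for each match, so check which convention a reported number follows.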

Word recognition evaluation is case-insensitive and accent-insensitive. The transcriptions "###" and "#1" are special: they mark text regions that are unreadable. Such regions are not taken into account during evaluation: a method is not penalised for failing to detect these words, and a method that does detect them gets no better score.
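One way these rules could be implemented is sketched below. It is an assumption about how case- and accent-insensitive comparison might be done (Unicode NFKD decomposition, dropping combining marks, then case folding), not the code of the official script; NFKD also folds compatibility forms such as full-width Latin letters, which may or may not match the official behaviour. The names `normalize`, `words_match`, `is_dont_care` and `split_ground_truth` are hypothetical. Predictions overlapping an ignored region would then be discarded instead of counted as false positives.

```python
import unicodedata

# Transcriptions that mark unreadable text regions ("don't care").
DONT_CARE = {"###", "#1"}


def normalize(word: str) -> str:
    """Case- and accent-insensitive form of a transcription (assumed scheme)."""
    # NFKD splits "é" into "e" + a combining accent; it also folds
    # compatibility forms such as full-width letters.
    decomposed = unicodedata.normalize("NFKD", word)
    without_marks = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    return without_marks.casefold()


def words_match(predicted: str, ground_truth: str) -> bool:
    return normalize(predicted) == normalize(ground_truth)


def is_dont_care(transcription: str) -> bool:
    return transcription in DONT_CARE


def split_ground_truth(words: list[dict]) -> tuple[list[dict], list[dict]]:
    """Separate scored ground-truth words from ignored (unreadable) regions."""
    scored = [w for w in words if not is_dont_care(w["transcription"])]
    ignored = [w for w in words if is_dont_care(w["transcription"])]
    return scored, ignored


print(words_match("Café", "CAFE"))  # True
```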

The objective of the video text tracking task is to locate the words in each video with affine bounding boxes. The task requires that words are both localised correctly in every frame and tracked correctly over the video sequence. Please output one JSON file per video, organised as follows:

Output

.
├── Cls10_Program_Cls10_Program_video11.json
├── Cls10_Program_Cls10_Program_video12.json
├── Cls10_Program_Cls10_Program_video13.json
├── Cls10_Program_Cls10_Program_video14.json
├── Cls10_Program_Cls10_Program_video15.json
├── Cls10_Program_Cls10_Program_video16.json
├── Cls11_Movie_Cls11_Movie_video17.json
├── Cls11_Movie_Cls11_Movie_video18.json
├── Cls11_Movie_Cls11_Movie_video19.json
├── Cls11_Movie_Cls11_Movie_video20.json
├── Cls11_Movie_Cls11_Movie_video21.json
└── ...
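A minimal sketch for producing this layout is shown below. It assumes, from the example file names only, that each file is named after the category folder followed by the video name (e.g. Cls10_Program + Cls10_Program_video11), and that each file mirrors the ground-truth JSON structure described further below. `output_name` and `write_video_predictions` are hypothetical helpers, not part of the released evaluation code.

```python
import json
from pathlib import Path


def output_name(category_dir: str, video_name: str) -> str:
    # Assumed convention, inferred only from the example listing:
    # "Cls10_Program" + "_" + "Cls10_Program_video11" -> "Cls10_Program_Cls10_Program_video11.json"
    return f"{category_dir}_{video_name}.json"


def write_video_predictions(output_dir: str, category_dir: str, video_name: str,
                            frames: dict) -> Path:
    """Write the predictions for one video to its own JSON file.

    `frames` maps each frame key (use the same keys as the ground-truth file)
    to a list of words, each a dict with "points" (x1, y1, ..., x4, y4),
    "tracking ID" and "transcription".
    """
    out_dir = Path(output_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    path = out_dir / output_name(category_dir, video_name)
    with path.open("w", encoding="utf-8") as f:
        # ensure_ascii=False keeps Chinese transcriptions readable in the file.
        json.dump(frames, f, ensure_ascii=False)
    return path


if __name__ == "__main__":
    frames = {"frame_1": [{"points": [10, 10, 90, 10, 90, 30, 10, 30],
                           "tracking ID": "1", "transcription": "你好"}]}
    print(write_video_predictions("./output", "Cls10_Program",
                                  "Cls10_Program_video11", frames))
```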

Then change into Evaluation_Protocol/Task1_VideoTextTracking and run the following script:

python evaluation.py --groundtruths ./Test/Annotation --tests ./output
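Localising a word correctly in a frame amounts to matching a predicted quadrilateral to a ground-truth one by overlap. The sketch below computes the IoU of two `points` quadrilaterals using Sutherland-Hodgman polygon clipping and the shoelace formula. It is an illustration only: the official evaluation.py may compare axis-aligned boxes through py-motmetrics instead, its IoU threshold is not stated here, and `to_vertices`, `signed_area`, `clip` and `quad_iou` are hypothetical names.

```python
def signed_area(poly):
    # Shoelace formula: positive when the vertices have positive orientation.
    n = len(poly)
    return sum(poly[i][0] * poly[(i + 1) % n][1] - poly[(i + 1) % n][0] * poly[i][1]
               for i in range(n)) / 2.0


def to_vertices(points):
    """[x1, y1, ..., x4, y4] -> list of (x, y) vertices with positive orientation."""
    poly = list(zip(points[0::2], points[1::2]))
    return poly if signed_area(poly) > 0 else poly[::-1]


def clip(subject, clipper):
    """Sutherland-Hodgman: part of `subject` inside the convex, positively oriented `clipper`."""
    def inside(p, a, b):
        # p lies to the left of (or on) the directed edge a -> b.
        return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0]) >= 0

    def crossing(p, q, a, b):
        # Intersection of segment p-q with the infinite line through a and b.
        den = (p[0] - q[0]) * (a[1] - b[1]) - (p[1] - q[1]) * (a[0] - b[0])
        t = ((p[0] - a[0]) * (a[1] - b[1]) - (p[1] - a[1]) * (a[0] - b[0])) / den
        return (p[0] + t * (q[0] - p[0]), p[1] + t * (q[1] - p[1]))

    output = subject
    for i in range(len(clipper)):
        a, b = clipper[i], clipper[(i + 1) % len(clipper)]
        inputs, output = output, []
        for j in range(len(inputs)):
            cur, prev = inputs[j], inputs[j - 1]
            if inside(cur, a, b):
                if not inside(prev, a, b):
                    output.append(crossing(prev, cur, a, b))
                output.append(cur)
            elif inside(prev, a, b):
                output.append(crossing(prev, cur, a, b))
        if not output:
            break  # no overlap left to clip
    return output


def quad_iou(points_a, points_b):
    """Intersection over union of two quadrilaterals given as 8-number lists."""
    a, b = to_vertices(points_a), to_vertices(points_b)
    inter_poly = clip(a, b)
    inter = abs(signed_area(inter_poly)) if len(inter_poly) >= 3 else 0.0
    union = abs(signed_area(a)) + abs(signed_area(b)) - inter
    return inter / union if union > 0 else 0.0
```

Sutherland-Hodgman requires the clipping polygon to be convex, which holds for affine (parallelogram) boxes but not for arbitrary self-intersecting quadrilaterals.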

For end-to-end video text spotting, please output the JSON files in the same way as for task 3 (video text tracking).

Then change into Evaluation_Protocol/Task2_VideoTextSpotting and run the following script:

python evaluation.py --groundtruths ./Test/Annotation --tests ./output

We create a single JSON file for each video in the dataset to store its ground truth in a structured format. Within the file, frames are keyed following the naming convention gt_[frame_id], where frame_id is the index of the frame in the video.

Each gt_[frame_id] maps to a list in which each element corresponds to one word in the frame and gives its bounding-box coordinates, transcription, text category (title, caption, or scene text), language, tracking ID, and the complete transcription of its whole trajectory, in the following format:

{
  "frame_1": [
    {
      "points": [x1, y1, x2, y2, x3, y3, x4, y4],
      "tracking ID": "1",
      "transcription": "###",
      "category": title/caption/scene text,
      "language": Chinese/English,
      "ID_transcription": complete words for the whole trajectory
    },
    …
    {
      "points": [x1, y1, x2, y2, x3, y3, x4, y4],
      "tracking ID": "#",
      "transcription": "###",
      "category": title/caption/scene text,
      "language": Chinese/English,
      "ID_transcription": complete words for the whole trajectory
    }
  ],
  "frame_2": [
    {
      "points": [x1, y1, x2, y2, x3, y3, x4, y4],
      "tracking ID": "1",
      "transcription": "###",
      "category": title/caption/scene text,
      "language": Chinese/English,
      "ID_transcription": complete words for the whole trajectory
    },
    …
    {
      "points": [x1, y1, x2, y2, x3, y3, x4, y4],
      "tracking ID": "#",
      "transcription": "###",
      "category": title/caption/scene text,
      "language": Chinese/English,
      "ID_transcription": complete words for the whole trajectory
    }
  ],
  ……
}
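A minimal Python sketch for reading this format and grouping words into trajectories is shown below. It assumes frame keys end in an underscore followed by the frame index (as in "frame_1" above or gt_[frame_id]); `frame_index`, `group_trajectories`, `quad_to_xywh` and `load_annotation` are hypothetical helpers. `quad_to_xywh` converts the quadrilateral `points` into the axis-aligned (x, y, width, height) rectangles that py-motmetrics' `iou_matrix` expects, which discards any rotation.

```python
import json
from collections import defaultdict

DONT_CARE = {"###", "#1"}  # unreadable regions, ignored during evaluation


def frame_index(key: str) -> int:
    # Hypothetical helper: takes the number after the last "_",
    # e.g. "frame_12" -> 12 or "gt_12" -> 12.
    return int(key.rsplit("_", 1)[-1])


def group_trajectories(annotation: dict) -> dict:
    """Map each tracking ID to its (frame index, points, transcription) entries, in frame order."""
    tracks = defaultdict(list)
    for key in sorted(annotation, key=frame_index):
        for word in annotation[key]:
            if word["transcription"] in DONT_CARE:
                continue  # skip unreadable regions
            tracks[word["tracking ID"]].append(
                (frame_index(key), word["points"], word["transcription"]))
    return dict(tracks)


def quad_to_xywh(points: list) -> tuple:
    # Axis-aligned bounding rectangle (x, y, width, height) of the quadrilateral.
    xs, ys = points[0::2], points[1::2]
    return min(xs), min(ys), max(xs) - min(xs), max(ys) - min(ys)


def load_annotation(path: str) -> dict:
    with open(path, encoding="utf-8") as f:
        return json.load(f)
```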

- The BOVText dataset is available for non-commercial research purposes only.

- Please download the agreement and read it carefully.

- Please ask your supervisor/advisor to sign the agreement appropriately and then send the scanned version (example) to Weijia Wu (weijiawu98@gmail.com).

- After verifying your request, we will contact you with the dataset download link.

Important announcement: we expanded the dataset from 1,850 videos to 2,021 videos, which causes performance differences between the arXiv paper and the NeurIPS version. Where results differ, please refer to the latest arXiv paper.


All values are in %. "Tracking" columns report text tracking performance; "Spotting" columns report end-to-end video text spotting performance.

| Method | Tracking MOTA | Tracking MOTP | Tracking IDP | Tracking IDR | Tracking IDF1 | Spotting MOTA | Spotting MOTP | Spotting IDP | Spotting IDR | Spotting IDF1 | Published at |
|---|---|---|---|---|---|---|---|---|---|---|---|
| EAST+CRNN | -21.6 | 75.8 | 29.9 | 26.5 | 28.1 | -79.3 | 76.3 | 6.8 | 6.9 | 6.8 | - |
| TransVTSpotter | 68.2 | 82.1 | 71.0 | 59.7 | 64.7 | -1.4 | 82.0 | 43.6 | 38.4 | 40.8 | - |

The authors will remain active in the video text field and will maintain the dataset until at least 2023. The maintenance plan is as follows:

- Merging and releasing the whole dataset after further review (by around November 2021).

- Updating the evaluation guidance and script code for the four tasks (detection, tracking, recognition, and spotting) (by around November 2021).

- Hosting a competition based on this work for promotion and publicity (by around March 2022).

More video-and-language tasks will be supported on this dataset:

- Text-based Video Retrieval [5] (by around March 2022)

- Text-based Video Caption [6] (by around September 2023)

- Text-based VQA [7][8] (TBD)

- update evaluation metric

- update data and annotation link

- update evaluation guidance

- update Baseline(TransVTSpotter)

- ...

@article{wu2021opentext,
  title={A Bilingual, OpenWorld Video Text Dataset and End-to-end Video Text Spotter with Transformer},
  author={Weijia Wu and Debing Zhang and Yuanqiang Cai and Sibo Wang and Jiahong Li and Zhuang Li and Yejun Tang and Hong Zhou},
  journal={35th Conference on Neural Information Processing Systems (NeurIPS 2021) Track on Datasets and Benchmarks},
  year={2021}
}

Affiliations: Zhejiang University, MMU of Kuaishou Technology

Authors: Weijia Wu (Zhejiang University), Debing Zhang (Kuaishou Technology), Zhuang Li (lizhuang05@kuaishou.com), Jiahong Li (lijiahong@kuaishou.com)

Suggestions and opinions on this dataset (both positive and negative) are very welcome. Please contact the authors by email at weijiawu96@gmail.com.

The project is released under the CC BY 4.0 license (see the LICENSE file).

It is for research use only; commercial use is not allowed.

Some of the videos were downloaded from the internet and may be subject to copyright. We do not own the copyright of those videos and provide them for non-commercial research purposes only. Although we tried to identify videos licensed under a Creative Commons Attribution license, we make no representations or warranties regarding the license status of each video, and you should verify the license of each video yourself.

Except where otherwise noted, content on this site is licensed under a Creative Commons Attribution 4.0 License.

[1] Dendorfer, P., Rezatofighi, H., Milan, A., Shi, J., Cremers, D., Reid, I., Roth, S., Schindler, K., & Leal-Taixe, L. (2019). CVPR19 Tracking and Detection Challenge: How crowded can it get? arXiv preprint arXiv:1906.04567.

[2] Bernardin, K. & Stiefelhagen, R. Evaluating Multiple Object Tracking Performance: The CLEAR MOT Metrics. EURASIP Journal on Image and Video Processing, 2008(1):1-10, 2008.

[3] Ristani, E., Solera, F., Zou, R., Cucchiara, R. & Tomasi, C. Performance Measures and a Data Set for Multi-Target, Multi-Camera Tracking. In ECCV workshop on Benchmarking Multi-Target Tracking, 2016.

[4] Li, Y., Huang, C. & Nevatia, R. Learning to associate: HybridBoosted multi-target tracker for crowded scene. In Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition, 2009.

[5] Anand Mishra, Karteek Alahari, and CV Jawahar. Image retrieval using textual cues. In Proceedings of the IEEE International Conference on Computer Vision, pages 3040–3047, 2013.

[6] Oleksii Sidorov, Ronghang Hu, Marcus Rohrbach, and Amanpreet Singh. TextCaps: a dataset for image captioning with reading comprehension. In European Conference on Computer Vision, pages 742–758. Springer, 2020.

[7] Minesh Mathew, Dimosthenis Karatzas, C. V. Jawahar, "DocVQA: A Dataset for VQA on Document Images", arXiv:2007.00398 [cs.CV], WACV 2021

[8] Minesh Mathew, Ruben Tito, Dimosthenis Karatzas, R. Manmatha, C.V. Jawahar, "Document Visual Question Answering Challenge 2020", arXiv:2008.08899 [cs.CV], DAS 2020