Learning Situation Hyper-Graphs for Video Question Answering [CVPR 2023]

Aisha Urooj Khan, Hilde Kuehne, Bo Wu, Kim Chheu, Walid Bousselhum, Chuang Gan, Niels Da Vitoria Lobo, Mubarak Shah

Official PyTorch implementation and pre-trained models for Learning Situation Hyper-Graphs for Video Question Answering (coming soon).

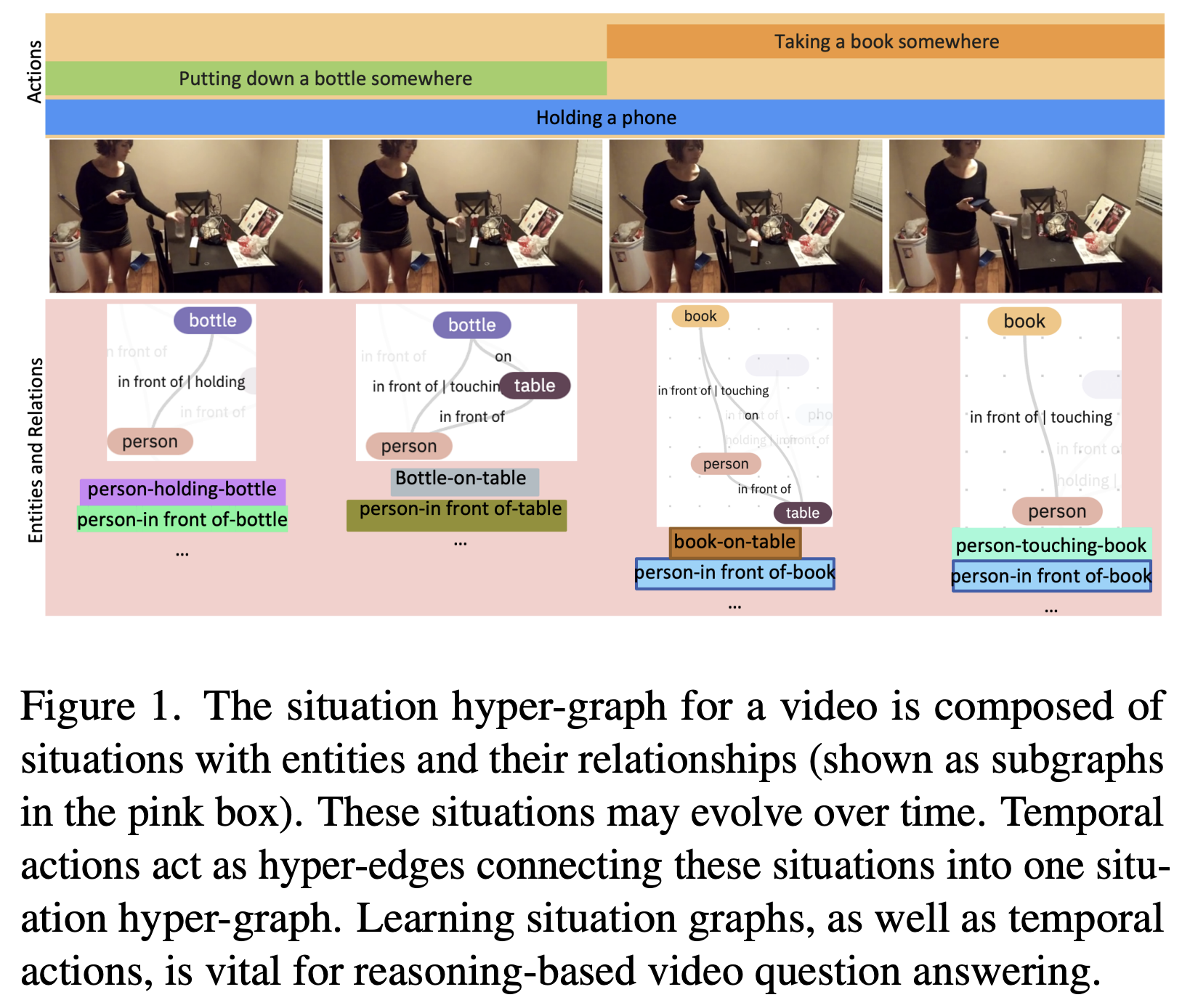

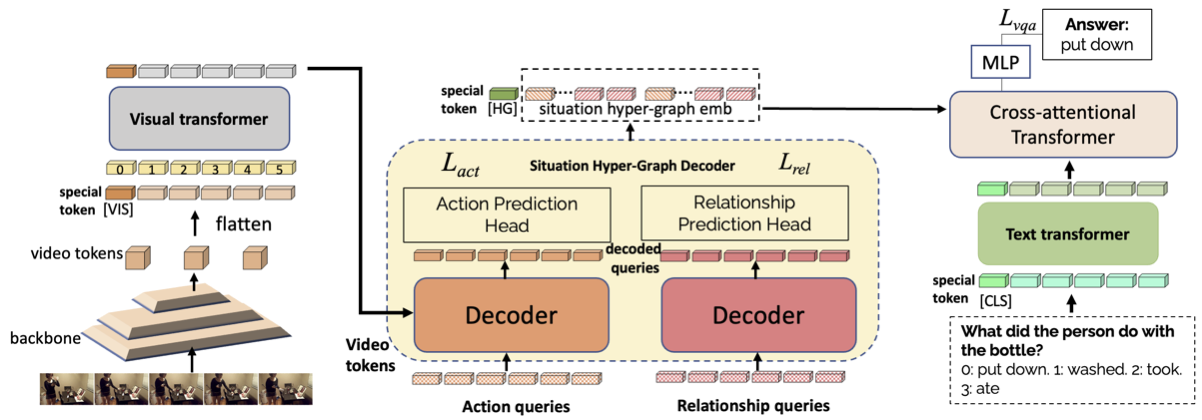

Answering questions about complex situations in videos requires not only capturing the presence of actors, objects, and their relations but also the evolution of these relationships over time. A situation hyper-graph is a representation that describes situations as scene sub-graphs for video frames and hyper-edges for connected sub-graphs, and has been proposed to capture all such information in a compact structured form. In this work, we propose an architecture for Video Question Answering (VQA) that answers questions about video content by predicting situation hyper-graphs, coined Situation Hyper-Graph based Video Question Answering (SHG-VQA). To this end, we train a situation hyper-graph decoder to implicitly identify graph representations with actions and object/human-object relationships from the input video clip, and to use cross-attention between the predicted situation hyper-graphs and the question embedding to predict the correct answer. The proposed method is trained end to end and optimized by a VQA loss with the cross-entropy function and a Hungarian matching loss for the situation graph prediction. The effectiveness of the proposed architecture is extensively evaluated on two challenging benchmarks: AGQA and STAR. Our results show that learning the underlying situation hyper-graphs helps the system significantly improve its performance on novel challenges in the video question-answering task.
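The training objective described above combines a cross-entropy VQA loss with a set-matching loss on the predicted graph. The snippet below is a minimal, dependency-free sketch of that idea, not the released implementation. It matches predicted slots to ground-truth labels by exhaustive search, which reaches the same optimum as the Hungarian algorithm on tiny sets but does not scale. The function names, the `weight` parameter, and the choice to ignore unmatched slots are assumptions made for illustration.

```python
import math
from itertools import permutations


def nll(probs, label):
    """Cross-entropy of one predicted distribution against one class index."""
    # Clamp to avoid log(0) for zero-probability predictions.
    return -math.log(max(probs[label], 1e-12))


def set_matching_loss(pred_probs, gt_labels):
    """Find the lowest-cost one-to-one assignment of ground-truth labels to slots.

    pred_probs: list of N class distributions, one per predicted slot.
    gt_labels:  list of M <= N ground-truth class indices (order is irrelevant).
    Returns (mean matched cost, tuple where entry i is the slot matched to label i).
    Assumption: unmatched slots contribute no loss in this sketch.
    """
    n, m = len(pred_probs), len(gt_labels)
    assert m <= n, "need at least as many slots as ground-truth elements"
    best_cost, best_assign = math.inf, ()
    # Exhaustive search stands in for the Hungarian algorithm here.
    for slots in permutations(range(n), m):
        cost = sum(nll(pred_probs[s], g) for s, g in zip(slots, gt_labels))
        if cost < best_cost:
            best_cost, best_assign = cost, slots
    return best_cost / max(m, 1), best_assign


def total_loss(answer_probs, answer, pred_probs, gt_labels, weight=1.0):
    """VQA cross-entropy plus a weighted matching loss (weight is hypothetical)."""
    match_loss, _ = set_matching_loss(pred_probs, gt_labels)
    return nll(answer_probs, answer) + weight * match_loss


# Three predicted slots over three classes, two ground-truth labels.
pred_probs = [[0.1, 0.8, 0.1], [0.7, 0.2, 0.1], [0.2, 0.2, 0.6]]
gt_labels = [0, 1]
loss, assignment = set_matching_loss(pred_probs, gt_labels)
print(assignment, round(loss, 4))
print(round(total_loss([0.25, 0.75], 1, pred_probs, gt_labels), 4))
```

For realistic set sizes, a polynomial-time solver such as `scipy.optimize.linear_sum_assignment` would replace the exhaustive loop, with the same cost matrix as input.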

We report results on two reasoning-based video question answering datasets: AGQA and STAR.

Instructions to preprocess AGQA and STAR (to be added)

Download the train and val splits for the balanced version of AGQA 2.0 used in our experiments from here.

If this work and/or its findings are useful for your research, please cite our paper.

For questions, please contact 'aishaurooj@gmail.com'.