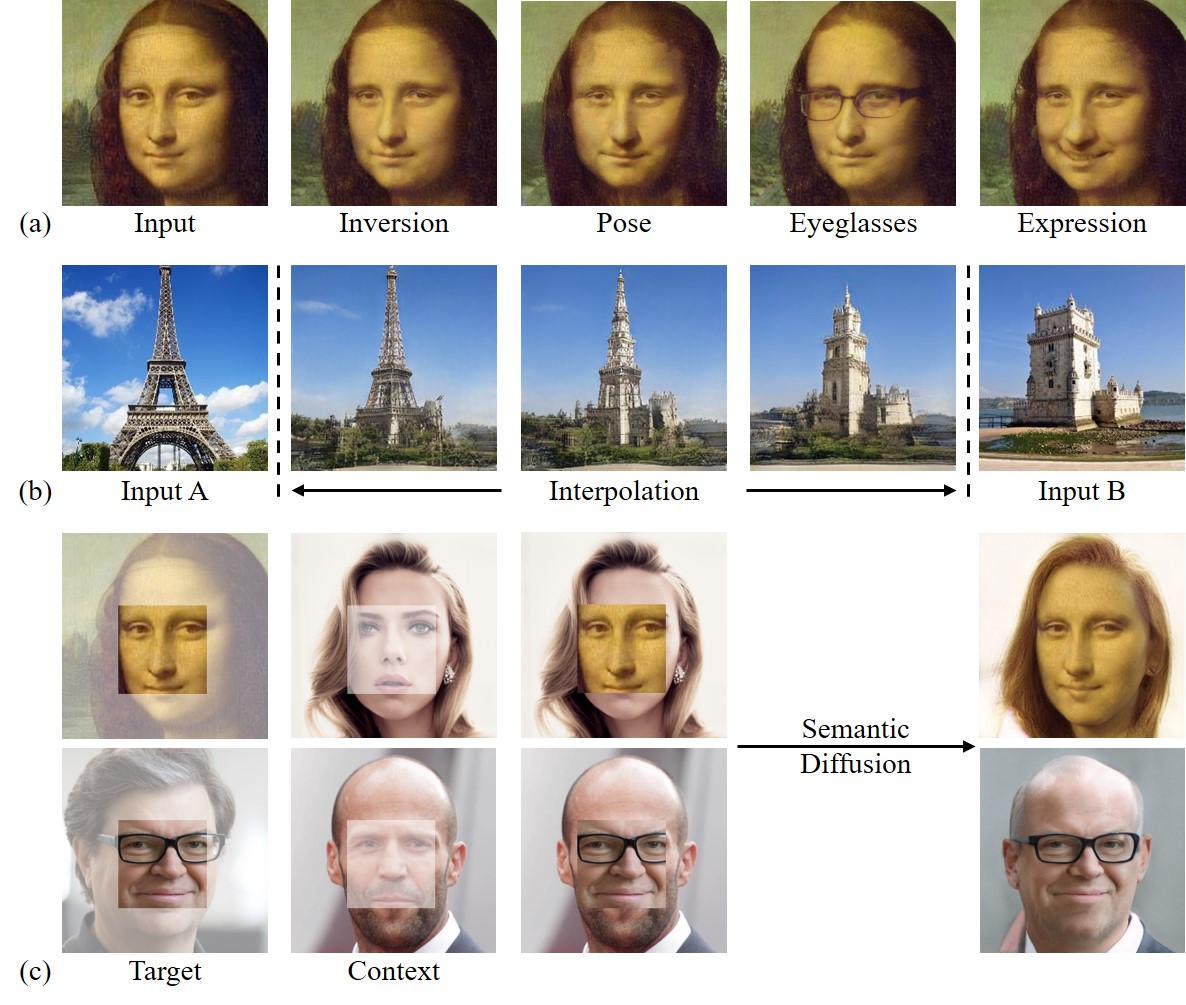

Figure: Real image editing using the proposed In-Domain GAN inversion with a fixed GAN generator.

In-Domain GAN Inversion for Real Image Editing

Jiapeng Zhu*, Yujun Shen*, Deli Zhao, Bolei Zhou

European Conference on Computer Vision (ECCV) 2020

In this repository, we propose an in-domain GAN inversion method, which not only faithfully reconstructs the input image but also ensures that the inverted code is semantically meaningful for editing. The in-domain GAN inversion consists of two steps:

- Training domain-guided encoder.

- Performing domain-regularized optimization.
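The two steps above can be sketched on a toy problem. This is only an illustration, not the code in this repository: `generator` and `encoder` are hypothetical affine stand-ins for the fixed GAN generator and the domain-guided encoder, the objective uses squared errors without the perceptual term, and the weight `lam_dom` and learning rate are assumed values. The encoder supplies the initial code, and the optimization then refines it while a regularizer keeps the code close to what the encoder predicts for the reconstruction.

```python
from typing import List

Vector = List[float]


def generator(z: Vector) -> Vector:
    """Hypothetical stand-in for the fixed GAN generator G."""
    return [2.0 * v + 1.0 for v in z]


def encoder(x: Vector) -> Vector:
    """Hypothetical stand-in for the domain-guided encoder E.

    It is a deliberately imperfect inverse of `generator`.
    """
    return [0.9 * (v - 1.0) / 2.0 for v in x]


def objective(z: Vector, x: Vector, lam_dom: float) -> float:
    """Reconstruction error plus the domain regularizer |z - E(G(z))|^2."""
    x_rec = generator(z)
    rec = sum((a - b) ** 2 for a, b in zip(x, x_rec))
    z_back = encoder(x_rec)
    dom = sum((a - b) ** 2 for a, b in zip(z, z_back))
    return rec + lam_dom * dom


def invert(x: Vector, num_iters: int = 100, lr: float = 0.05,
           lam_dom: float = 2.0, eps: float = 1e-5) -> Vector:
    """Start from the encoder output, then refine with gradient descent."""
    z = encoder(x)  # step 1: the encoder provides the starting code
    for _ in range(num_iters):  # step 2: regularized optimization
        grad = []
        for i in range(len(z)):
            # Central finite difference, used here instead of autodiff.
            z_hi = z[:i] + [z[i] + eps] + z[i + 1:]
            z_lo = z[:i] + [z[i] - eps] + z[i + 1:]
            diff = objective(z_hi, x, lam_dom) - objective(z_lo, x, lam_dom)
            grad.append(diff / (2.0 * eps))
        z = [v - lr * g for v, g in zip(z, grad)]
    return z
```

Calling `invert([3.0, -1.0])` returns a code whose objective value is lower than that of the encoder output alone, which is the role the optimization step plays after the encoder.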

NEWS: Please also find this repo, which is friendly to PyTorch users!

[Paper] [Project Page] [Demo]

Please download the pre-trained models from the following links. Each model contains the GAN generator and discriminator, as well as the proposed domain-guided encoder.

| Path | Description |
|---|---|
| face_256x256 | In-domain GAN trained with FFHQ dataset. |
| tower_256x256 | In-domain GAN trained with LSUN Tower dataset. |
| bedroom_256x256 | In-domain GAN trained with LSUN Bedroom dataset. |

MODEL_PATH='styleganinv_face_256.pkl'

IMAGE_LIST='examples/test.list'

python invert.py $MODEL_PATH $IMAGE_LIST

NOTE: We find that 100 iterations are good enough for inverting an image, which takes about 8s (on P40). But users can always use more iterations (much slower) for a more precise reconstruction.
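To invert your own images, you need a list file to pass as `$IMAGE_LIST`. The helper below is a minimal sketch that assumes the list file is plain text with one image path per line; check `examples/test.list` to confirm the expected format. The function name `write_image_list` and the set of file extensions are illustrative and not part of this repository.

```python
import os
from typing import List

# Assumed set of image extensions; adjust to your data.
IMAGE_EXTENSIONS = (".png", ".jpg", ".jpeg")


def write_image_list(image_dir: str, list_path: str) -> List[str]:
    """Write one image path per line to `list_path` and return the paths."""
    paths = sorted(
        os.path.join(image_dir, name)
        for name in os.listdir(image_dir)
        if name.lower().endswith(IMAGE_EXTENSIONS)
    )
    with open(list_path, "w") as f:
        for path in paths:
            f.write(path + "\n")
    return paths


# Hypothetical usage:
# write_image_list("my_images", "examples/my_images.list")
```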

MODEL_PATH='styleganinv_face_256.pkl'

TARGET_LIST='examples/target.list'

CONTEXT_LIST='examples/context.list'

python diffuse.py $MODEL_PATH $TARGET_LIST $CONTEXT_LIST

NOTE: The diffusion process is highly similar to image inversion. The main difference is that only the target patch is used to compute loss for masked optimization.
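The idea of computing the loss on the target patch only can be illustrated with a masked mean squared error. This is a simplified sketch on nested lists, not the loss used by `diffuse.py`: the function name `masked_mse` and the binary mask layout are assumptions made for illustration.

```python
from typing import List

Image = List[List[float]]
Mask = List[List[int]]


def masked_mse(output: Image, target: Image, mask: Mask) -> float:
    """Mean squared error over pixels where mask == 1 (the target patch)."""
    total = 0.0
    count = 0
    for out_row, tgt_row, mask_row in zip(output, target, mask):
        for o, t, m in zip(out_row, tgt_row, mask_row):
            if m:
                total += (o - t) ** 2
                count += 1
    # Return 0.0 for an empty mask instead of dividing by zero.
    return total / count if count else 0.0
```

Pixels outside the mask contribute nothing to the loss, so the optimization is free to change them to fit the surrounding context.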

SRC_DIR='results/inversion/test'

DST_DIR='results/inversion/test'

python interpolate.py $MODEL_PATH $SRC_DIR $DST_DIR

IMAGE_DIR='results/inversion/test'

BOUNDARY='boundaries/expression.npy'

python manipulate.py $MODEL_PATH $IMAGE_DIR $BOUNDARY

NOTE: Boundaries are obtained using InterFaceGAN.
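Both the interpolation and manipulation commands above operate on inverted latent codes. The sketch below shows the usual form of each operation on plain Python lists: linear interpolation between two codes, and a shift along a boundary normal in the InterFaceGAN style. The function names and arguments are illustrative, and the scripts in this repository may differ in detail.

```python
from typing import List

Vector = List[float]


def interpolate(src: Vector, dst: Vector, num_steps: int) -> List[Vector]:
    """Linearly interpolate from `src` to `dst`, endpoints included."""
    if num_steps < 2:
        raise ValueError("num_steps must be at least 2")
    codes = []
    for k in range(num_steps):
        t = k / (num_steps - 1)
        codes.append([(1.0 - t) * s + t * d for s, d in zip(src, dst)])
    return codes


def manipulate(code: Vector, boundary: Vector, distance: float) -> Vector:
    """Move `code` along the boundary normal by `distance`."""
    return [c + distance * b for c, b in zip(code, boundary)]
```

Each resulting code would then be passed through the generator to obtain the interpolated or edited image.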

STYLE_DIR='results/inversion/test'

CONTENT_DIR='results/inversion/test'

python mix_style.py $MODEL_PATH $STYLE_DIR $CONTENT_DIR

The GAN model used in this work is StyleGAN. Beyond the original repository, we make the following changes:

- Change repeated $w$ for all layers to different $w$s (Lines 428-435 in file training/networks_stylegan.py).

- Add the domain-guided encoder in file training/networks_encoder.py.

- Add losses for training the domain-guided encoder in file training/loss_encoder.py.

- Add schedule for training the domain-guided encoder in file training/training_loop_encoder.py.

- Add a perceptual model (VGG16) for computing perceptual loss in file perceptual_model.py.

- Add training script for the domain-guided encoder in file train_encoder.py.
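The first change in the list above can be pictured as follows. In the original StyleGAN, a single $w$ is repeated for every synthesis layer, whereas here each layer may receive its own $w$. The sketch below illustrates only the data layout with plain lists. The helper names are hypothetical, and the layer count follows the standard StyleGAN design of two style inputs per resolution from 4x4 upward.

```python
import math
from typing import List

Vector = List[float]


def num_style_layers(resolution: int) -> int:
    """Number of style inputs in a StyleGAN synthesis network."""
    return 2 * int(math.log2(resolution)) - 2


def repeat_w(w: Vector, num_layers: int) -> List[Vector]:
    """Original behavior: the same w is copied to every layer."""
    return [list(w) for _ in range(num_layers)]


# With layer-wise codes, the per-layer list is the quantity being
# inverted and edited, so individual layers can differ.
layers = num_style_layers(256)
ws = repeat_w([0.0, 0.0], layers)  # start from a repeated code
ws[0] = [1.0, -1.0]                # change only the first layer
```

For the 256x256 models listed above, this gives 14 layer-wise codes per image.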

python train.py

Modify train_encoder.py as follows:

- Add training and test dataset path to Data_dir in train_encoder.py.

- Add generator path to Decoder_pkl in train_encoder.py.

python train_encoder.py

@inproceedings{zhu2020indomain,
  title     = {In-domain GAN Inversion for Real Image Editing},
  author    = {Zhu, Jiapeng and Shen, Yujun and Zhao, Deli and Zhou, Bolei},
  booktitle = {Proceedings of European Conference on Computer Vision (ECCV)},
  year      = {2020}
}