This repository contains the implementation of the following paper:

Removing Diffraction Image Artifacts in Under-Display Camera via Dynamic Skip Connection Network

Ruicheng Feng, Chongyi Li, Huaijin Chen, Shuai Li, Chen Change Loy, Jinwei Gu

Computer Vision and Pattern Recognition (CVPR), 2021

[Paper] [Project Page]

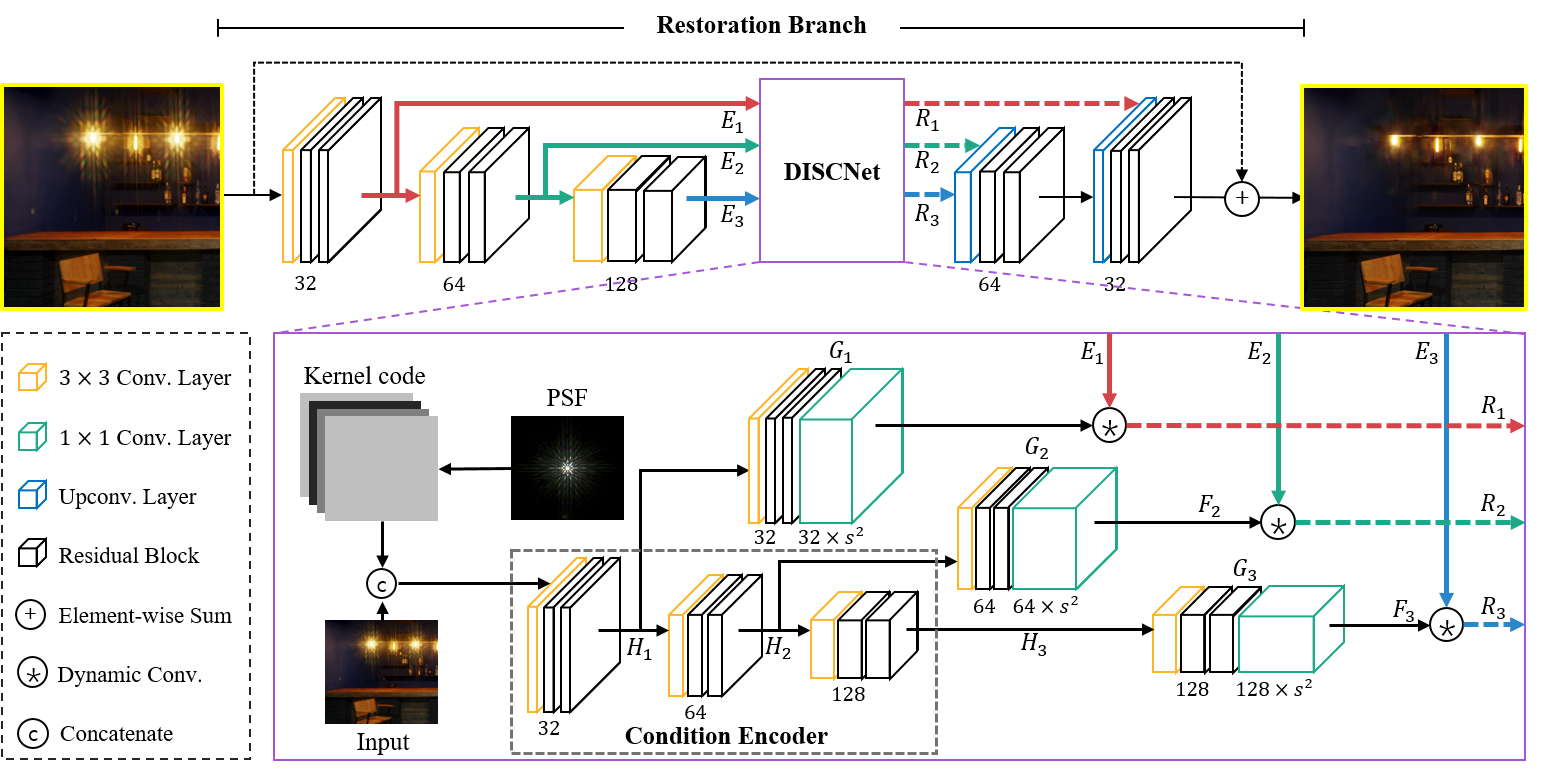

Overview of our proposed DISCNet. The main restoration branch consists of an encoder and a decoder, with feature maps propagated and transformed by DISCNet through skip connections. DISCNet applies multi-scale dynamic convolutions using generated filters conditioned on the PSF kernel code and spatial information from the input images.

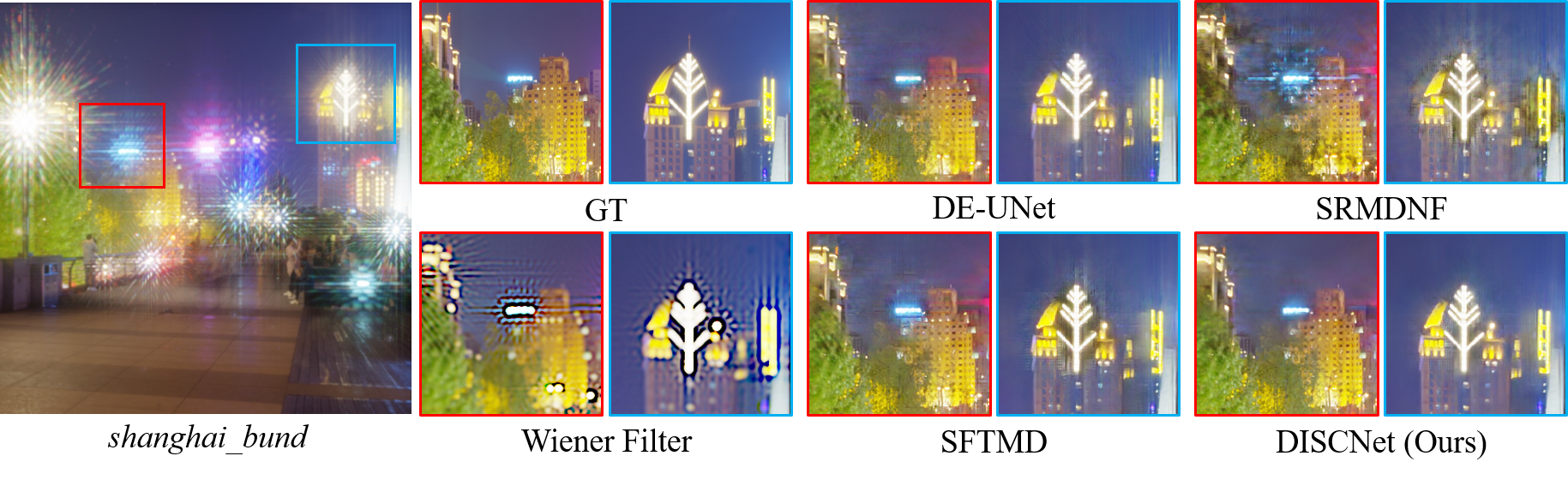

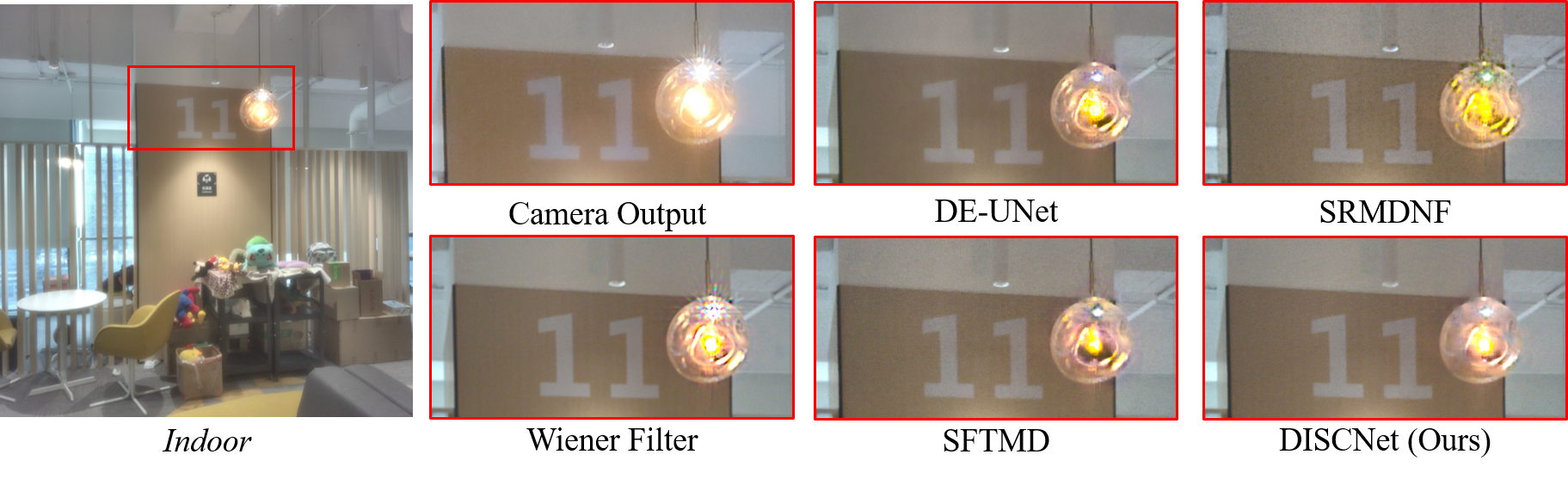

Figure: Removing diffraction artifacts from Under-Display Camera (UDC) images using the proposed DISCNet. The major degradations caused by light diffraction are a combination of flare, blur, and haze.

- Python >= 3.7 (Anaconda or Miniconda is recommended)

- PyTorch >= 1.3

- NVIDIA GPU + CUDA
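
The prerequisites above can be checked before installing anything else. The following is a minimal sketch, not part of the repository: the helper names `parse_version`, `meets_minimum`, and `check_environment` are hypothetical, and the version thresholds are taken from the list above.

```python
import re
import sys


def parse_version(text):
    """Return the leading numeric components of a version string as a tuple.

    Local build tags after "+" (for example "+cu117") are ignored.
    """
    core = text.split("+", 1)[0]
    parts = []
    for piece in core.split("."):
        match = re.match(r"\d+", piece)
        if match is None:
            break
        parts.append(int(match.group()))
    return tuple(parts)


def meets_minimum(found, minimum):
    """True if the version string `found` is at least `minimum`."""
    return parse_version(found) >= parse_version(minimum)


def check_environment():
    """Collect readable problems; an empty list means every check passed."""
    problems = []
    python_version = "{}.{}".format(*sys.version_info[:2])
    if not meets_minimum(python_version, "3.7"):
        problems.append("Python >= 3.7 required, found " + python_version)
    try:
        import torch  # imported lazily so a missing install is reported, not raised
    except ImportError:
        problems.append("PyTorch is not installed")
    else:
        if not meets_minimum(torch.__version__, "1.3"):
            problems.append("PyTorch >= 1.3 required, found " + torch.__version__)
        if not torch.cuda.is_available():
            problems.append("No CUDA device is visible to PyTorch")
    return problems


if __name__ == "__main__":
    for line in check_environment() or ["Environment looks OK"]:
        print(line)
```

Running the script prints one line per unmet requirement, or a single confirmation line if nothing is missing.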

- Clone repo

git clone https://github.com/jnjaby/DISCNet.git

- Install dependencies

cd DISCNet
pip install -r requirements.txt

- Install BasicSR

Compile BasicSR without CUDA extensions for DCN (remember to modify the CUDA paths in make.sh and make sure that your GCC version is gcc >= 5).

sh ./make.sh

You can use the provided download script. The script considerably reduces your workload by automatically downloading all the requested files, retrying each file on error, and unzipping the files.

Alternatively, you can download the data directly from Google Drive, unzip it, and put it into ./datasets.

Please refer to Datasets.md for pre-processing and more details.
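
To illustrate the download behaviour described above (retry each file on error, then unzip), here is a minimal sketch. It is not the repository's download script: `fetch_with_retry`, `unzip_bytes`, and `download_all` are hypothetical names, and `fetch` stands in for whatever callable actually retrieves an archive and returns its bytes.

```python
import io
import time
import zipfile


def fetch_with_retry(fetch, name, retries=3, delay=0.0):
    """Call fetch(name) until it succeeds or `retries` attempts are used up."""
    last_error = None
    for _ in range(retries):
        try:
            return fetch(name)
        except OSError as error:  # network and file errors derive from OSError
            last_error = error
            time.sleep(delay)
    message = "giving up on {} after {} attempts".format(name, retries)
    raise RuntimeError(message) from last_error


def unzip_bytes(payload, target_dir):
    """Extract an in-memory zip archive into target_dir; return member names."""
    with zipfile.ZipFile(io.BytesIO(payload)) as archive:
        archive.extractall(target_dir)
        return archive.namelist()


def download_all(fetch, names, target_dir="./datasets", retries=3):
    """Fetch every archive in `names` with retries and unpack each one."""
    extracted = {}
    for name in names:
        payload = fetch_with_retry(fetch, name, retries=retries)
        extracted[name] = unzip_bytes(payload, target_dir)
    return extracted
```

Passing the fetcher in as an argument keeps the retry and unzip logic independent of where the files are hosted, which also makes it easy to exercise with a fake fetcher.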

We provide quick test code with the pretrained model. The test commands assume single-GPU testing. Please see TrainTest.md if you prefer using Slurm.

- Download pretrained models from Google Drive.

python scripts/download_pretrained_models.py --method=DISCNet

- Modify the paths to the datasets and the pretrained model in the following YAML configuration files.

./options/test/DISCNet_test_synthetic_data.yml
./options/test/DISCNet_test_real_data.yml

- Run test code for synthetic data.

python -u basicsr/test.py -opt "options/test/DISCNet_test_synthetic_data.yml" --launcher="none"

Check out the results in ./results.

- Run test code for real data.

Feed the real data to the pre-trained network.

python -u basicsr/test.py -opt "options/test/DISCNet_test_real_data.yml" --launcher="none"

The results are in ./results (pre-ISP versions, saved as .png and .npy).

(Optional) If you wish to further improve the visual quality, apply the post-processing pipeline.

cd datasets
python data_scripts/post_process.py \
--data_path="../results/DISCNet_test_real_data/visualization/ZTE_real_rot_5" \
--ref_path="datasets/real_data" \
--save_path="../results/post_images"
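
Because the test steps depend on hand-edited paths in the YAML files, it can help to confirm that those paths exist before launching a run. The sketch below is one possible check using only the standard library. It handles simple `key: value` lines only, and the key names in the usage example are assumptions for illustration, so substitute the keys that actually appear in your configuration files.

```python
import os
import re

# Matches simple "key: value" lines and ignores a trailing "# comment".
_LINE = re.compile(r"^\s*([A-Za-z_]\w*)\s*:\s*(.*?)\s*(?:#.*)?$")


def find_configured_paths(yaml_text, keys):
    """Return (key, value) pairs for lines whose key is listed in `keys`."""
    found = []
    for line in yaml_text.splitlines():
        match = _LINE.match(line)
        if match and match.group(1) in keys:
            value = match.group(2).strip("'\"")
            if value not in ("", "~", "null"):  # skip empty and null entries
                found.append((match.group(1), value))
    return found


def missing_paths(yaml_text, keys):
    """Return the configured (key, value) pairs whose path does not exist."""
    pairs = find_configured_paths(yaml_text, keys)
    return [(key, value) for key, value in pairs if not os.path.exists(value)]


if __name__ == "__main__":
    # Hypothetical key names; replace them with the ones in your YAML file.
    keys = ("dataroot_gt", "dataroot_lq", "pretrain_model_g")
    with open("options/test/DISCNet_test_synthetic_data.yml") as handle:
        for key, value in missing_paths(handle.read(), keys):
            print("missing: {} -> {}".format(key, value))
```

A regular expression is used instead of a YAML parser only to keep the sketch dependency-free. It will not understand nested or multi-line values.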

All logging files produced during training, e.g., log messages, checkpoints, and snapshots, are saved to the ./experiments and ./tb_logger directories.

- Prepare datasets. Please refer to Dataset Preparation.

- Modify config files.

./options/train/DISCNet_train.yml

- Run training code (Slurm training). Please check out TrainTest.md and use single-GPU training, distributed training, or Slurm training, as you prefer.

srun -p [partition] --mpi=pmi2 --job-name=DISCNet --gres=gpu:2 --ntasks=2 --ntasks-per-node=2 --cpus-per-task=2 --kill-on-bad-exit=1 \
python -u basicsr/train.py -opt "options/train/DISCNet_train.yml" --launcher="slurm"
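
If you launch the Slurm command with different GPU counts, assembling it programmatically can avoid mismatched flags. The sketch below reuses only the flags from the command above. `build_srun_command` is a hypothetical helper, and it assumes one task per GPU on a single node, mirroring the 2-GPU example. Adjust it if your cluster is set up differently.

```python
import shlex


def build_srun_command(partition, num_gpus=2, cpus_per_task=2,
                       config="options/train/DISCNet_train.yml"):
    """Assemble the srun invocation as an argument list.

    Assumption: one task per GPU, all on one node, as in the 2-GPU example.
    """
    return [
        "srun", "-p", partition, "--mpi=pmi2", "--job-name=DISCNet",
        "--gres=gpu:{}".format(num_gpus),
        "--ntasks={}".format(num_gpus),
        "--ntasks-per-node={}".format(num_gpus),
        "--cpus-per-task={}".format(cpus_per_task),
        "--kill-on-bad-exit=1",
        "python", "-u", "basicsr/train.py", "-opt", config, "--launcher=slurm",
    ]


if __name__ == "__main__":
    # "my_partition" is a placeholder for your cluster's partition name.
    command = build_srun_command("my_partition", num_gpus=4)
    print(" ".join(shlex.quote(arg) for arg in command))
```

The argument list can be passed directly to `subprocess.run`, or printed as shown and pasted into a shell.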

If you find our repo useful for your research, please consider citing our paper:

@InProceedings{Feng_2021_CVPR,
  author = {Feng, Ruicheng and Li, Chongyi and Chen, Huaijin and Li, Shuai and Loy, Chen Change and Gu, Jinwei},
  title = {Removing Diffraction Image Artifacts in Under-Display Camera via Dynamic Skip Connection Network},
  booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},
  month = {June},
  year = {2021},
  pages = {662-671}
}

This project is open-sourced under the MIT license. The code framework is mainly modified from BasicSR. Please refer to the original repo for more usage information and documentation.

If you have any questions, please feel free to contact us via ruicheng002@ntu.edu.sg.