Extending the functionality of MLLMs by integrating an additional region-level vision encoder.

- Clone this repository and navigate to the PVIT folder

git clone https://github.com/THUNLP-MT/PVIT.git

cd PVIT

- Install the package

conda create -n pvit python=3.9.6

conda activate pvit

pip install -r requirements.txt

- Install RegionCLIP

git clone https://github.com/microsoft/RegionCLIP.git

pip install -e RegionCLIP

See the RegionCLIP repository for more details.

To get the PVIT weights, please first download the weights of LLaMA and RegionCLIP. For RegionCLIP, please download regionclip_pretrained-cc_rn50x4.pth.

Then download the PVIT checkpoints. Please put all the weights in the folder model_weights and merge the PVIT weights with the LLaMA weights using the following command.

BASE_MODEL=model_weights/llama-7b TARGET_MODEL=model_weights/pvit DELTA=model_weights/pvit-delta ./scripts/delta_apply.sh

We provide the prompts and few-shot examples used when querying ChatGPT in both task-specific instruction data generation and general instruction data generation (Figure 3 (b) and Figure 3 (c) in our paper).

The data_generation/task-specific folder includes the seeds, prompts and examples for single-turn and multi-turn conversation generation. Single-turn conversation generation covers five types of tasks: small object recognition, object relationship-based reasoning, optical character recognition (OCR), object attribute-based reasoning, and same-category object discrimination.

The data_generation/general folder includes seeds, prompts and examples used in general instruction data generation.
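
As a rough illustration of how a prompt, few-shot examples, and a new seed might be combined into a chat-style request, here is a minimal sketch. The function name `build_messages`, the `seed`/`output` field names, and the placeholder strings are assumptions made for this example; the actual file layout in data_generation may differ.

```python
from typing import Dict, List


def build_messages(
    system_prompt: str,
    examples: List[Dict[str, str]],
    seed: str,
) -> List[Dict[str, str]]:
    """Assemble a chat-style message list: prompt, few-shot pairs, then the new seed."""
    messages = [{"role": "system", "content": system_prompt}]
    for example in examples:
        # Each few-shot example is treated as a (seed, expected generation) pair.
        messages.append({"role": "user", "content": example["seed"]})
        messages.append({"role": "assistant", "content": example["output"]})
    # The final user turn carries the seed we want new data for.
    messages.append({"role": "user", "content": seed})
    return messages


# Hypothetical placeholder content, not taken from the repository.
demo = build_messages(
    system_prompt="Generate a question and answer about the marked region.",
    examples=[
        {"seed": "region: <r1>, label: cup", "output": "Q: What is in <r1>? A: A cup."}
    ],
    seed="region: <r2>, label: street sign",
)
print(len(demo))
```

The resulting list follows the common system/user/assistant message convention, so it could be passed to a chat completion client of your choice.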

To run our demo, you need to prepare the PVIT checkpoints locally. Please follow the instructions above to download and merge the checkpoints.
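
For readers unfamiliar with delta checkpoints, the sketch below shows the general idea of merging, assuming the additive convention used by projects such as Vicuna and LLaVA (target = base + delta). Whether scripts/delta_apply.sh does exactly this is an assumption; the parameter names and the use of plain Python lists instead of tensors are purely illustrative.

```python
from typing import Dict, List

Weights = Dict[str, List[float]]


def apply_delta(base: Weights, delta: Weights) -> Weights:
    """Return target weights where target[name] = base[name] + delta[name]."""
    target: Weights = {}
    for name, delta_values in delta.items():
        if name not in base:
            # Parameters that exist only in the delta (for example, newly
            # added layers) are copied over unchanged.
            target[name] = list(delta_values)
            continue
        base_values = base[name]
        if len(base_values) != len(delta_values):
            raise ValueError(f"shape mismatch for {name}")
        target[name] = [b + d for b, d in zip(base_values, delta_values)]
    return target


# Hypothetical parameter names and values, for illustration only.
base = {"layer.weight": [1.0, 2.0]}
delta = {"layer.weight": [0.5, -1.0], "region_proj.weight": [0.25]}
merged = apply_delta(base, delta)
print(merged)
```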

To run the demo, please first launch a web server with the following command.

MODEL_PATH=model_weights/pvit CONTROLLER_PORT=39996 WORKER_PORT=40004 ./scripts/model_up.sh

Run the following command to run a Streamlit demo locally. The port of MODEL_ADDR should be consistent with WORKER_PORT.

MODEL_ADDR=http://0.0.0.0:40004 ./scripts/run_demo.sh

Run the following command to do CLI inference locally. The port of MODEL_ADDR should be consistent with WORKER_PORT.

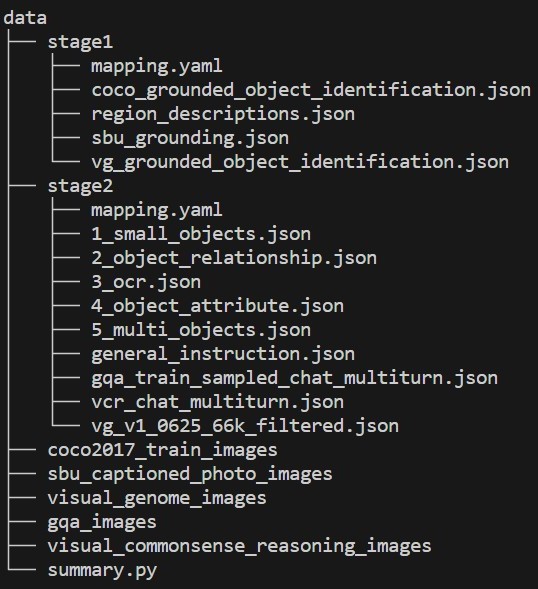

MODEL_ADDR=http://0.0.0.0:40004 ./scripts/run_cli.sh

You can download the stage1 and stage2 training data on Hugging Face. You also need to download the images of the COCO2017 Train, SBU Captioned Photo, Visual Genome, GQA and Visual Commonsense Reasoning datasets. Please put the stage1 and stage2 data and the downloaded images in the folder data. You can modify image_paths in data/stage1/mapping.yaml and data/stage2/mapping.yaml to change the path of the downloaded images.

Our model is trained in two stages. In stage 1, we initialize the model with the pre-trained LLaVA and train only the linear projection layer that is responsible for transforming the region features. In stage 2, we keep only the parameters of the image encoder and the region encoder frozen and fine-tune the rest of the model.
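
The freezing schedule described above can be summarized in a small sketch. The component names (`image_encoder`, `region_encoder`, `region_projection`, `rest_of_model`) are hypothetical labels chosen for this example and are not the module names used in the PVIT code.

```python
from typing import Dict

# Hypothetical component labels; the real module names in PVIT may differ.
COMPONENTS = ("image_encoder", "region_encoder", "region_projection", "rest_of_model")


def trainable_components(stage: int) -> Dict[str, bool]:
    """Map each component to True if it is trained in the given stage."""
    if stage == 1:
        # Stage 1: only the linear projection for region features is trained.
        return {name: name == "region_projection" for name in COMPONENTS}
    if stage == 2:
        # Stage 2: only the two encoders stay frozen; everything else is tuned.
        frozen = {"image_encoder", "region_encoder"}
        return {name: name not in frozen for name in COMPONENTS}
    raise ValueError(f"unknown stage: {stage}")


stage1_flags = trainable_components(1)
stage2_flags = trainable_components(2)
print(stage1_flags)
print(stage2_flags)
```

In a PyTorch training script, flags like these would typically be applied by setting `requires_grad` on the parameters of each component.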

To train PVIT, please download the pretrained LLaVA checkpoints and put them in the folder model_weights.

The following commands are for stage 1 training.

export MODEL_PATH="model_weights/llava-lightning-7b-v1"

export REGION_CLIP_PATH="model_weights/regionclip_pretrained-cc_rn50x4.pth"

export DATA_PATH="data/stage1"

export OUTPUT_DIR="checkpoints/stage1_ckpt"

export PORT=25001

./scripts/train_stage1.sh

The following commands are for stage 2 training.

export MODEL_PATH="checkpoints/stage1_ckpt"

export REGION_CLIP_PATH="model_weights/regionclip_pretrained-cc_rn50x4.pth"

export DATA_PATH="data/stage2"

export OUTPUT_DIR="checkpoints/stage2_ckpt"

export PORT=25001

./scripts/train_stage2.sh

We propose the FineEval dataset for human evaluation. See the folder fine_eval for the dataset and model outputs. The files in the folder are as follows.

- images: Image files of the FineEval dataset.

- instructions.jsonl: Questions of the FineEval dataset.

- pvit.jsonl: The results of the PVIT (ours) model.

- llava.jsonl: The results of the LLaVA model.

- shikra.jsonl: The results of the Shikra model.

- gpt4roi.jsonl: The results of the GPT4RoI model.
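
The .jsonl files listed above store one JSON object per line. A minimal reader might look like the sketch below; the helper name `load_jsonl` and the `id`/`text` fields in the demo are made up for illustration, since the actual record schema is not described here.

```python
import json
import tempfile
from pathlib import Path
from typing import Any, Dict, List


def load_jsonl(path: Path) -> List[Dict[str, Any]]:
    """Read a JSON Lines file into a list of dictionaries, skipping blank lines."""
    records = []
    with path.open(encoding="utf-8") as handle:
        for line in handle:
            line = line.strip()
            if line:
                records.append(json.loads(line))
    return records


# Self-contained demo using a temporary file with made-up fields.
with tempfile.TemporaryDirectory() as tmp:
    sample = Path(tmp) / "sample.jsonl"
    sample.write_text(
        '{"id": 1, "text": "a"}\n\n{"id": 2, "text": "b"}\n', encoding="utf-8"
    )
    records = load_jsonl(sample)

print(len(records))
```

For the real data, a call such as `load_jsonl(Path("fine_eval/instructions.jsonl"))` should work from the repository root, after which you can inspect the keys of the first record to learn the schema.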

To run PVIT on the FineEval dataset, launch a web server as described in the demo instructions and run the following command. The port of MODEL_ADDR should be consistent with WORKER_PORT.

MODEL_ADDR=http://0.0.0.0:40004 ./scripts/run_fine_eval.sh

If you find PVIT useful for your research and applications, please cite using this BibTeX:

@misc{chen2023positionenhanced,

title={Position-Enhanced Visual Instruction Tuning for Multimodal Large Language Models},

author={Chi Chen and Ruoyu Qin and Fuwen Luo and Xiaoyue Mi and Peng Li and Maosong Sun and Yang Liu},

year={2023},

eprint={2308.13437},

archivePrefix={arXiv},

primaryClass={cs.CV}

}

- LLaVA: the codebase we built upon, which has the amazing multi-modal capabilities!

- Vicuna: the codebase LLaVA built upon, and the base model Vicuna-13B that has the amazing language capabilities!

- RegionCLIP: Our prompt encoder.

- Instruction Tuning with GPT-4

- LLaVA: Large Language and Vision Assistant

- LLaVA-Med: Training a Large Language-and-Vision Assistant for Biomedicine in One Day

- Otter: In-Context Multi-Modal Instruction Tuning

- Shikra: Unleashing Multimodal LLM’s Referential Dialogue Magic

- GPT4RoI: Instruction Tuning Large Language Model on Region-of-Interest