Phidias: A Generative Model for Creating 3D Content from Text, Image, and 3D Conditions with Reference-Augmented Diffusion

Project page | Paper | Video

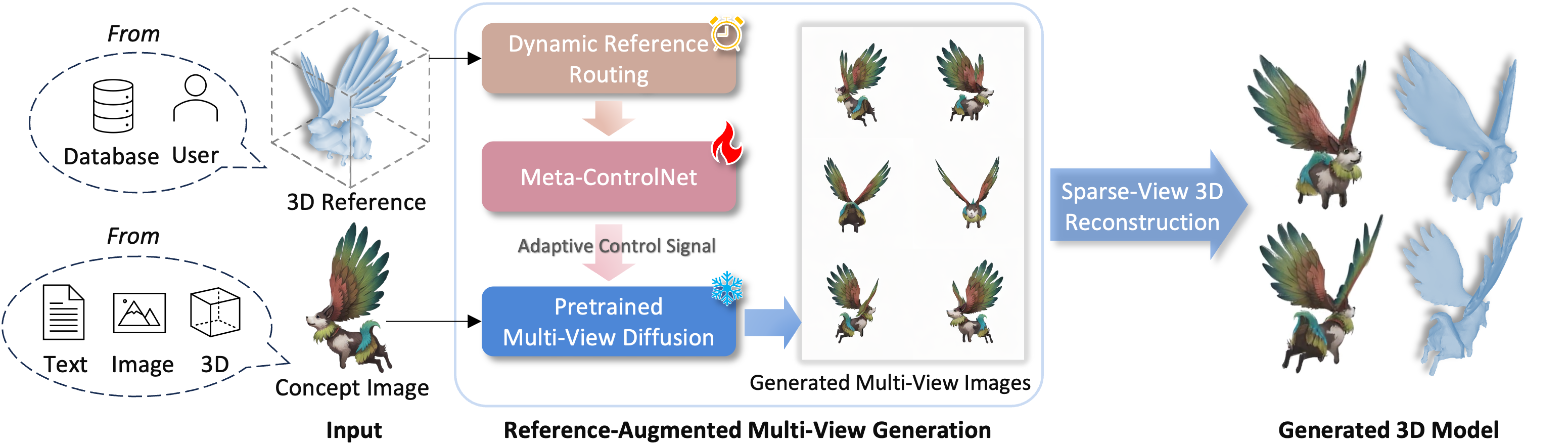

In 3D modeling, designers often use an existing 3D model as a reference when creating new ones. This practice inspired Phidias, a novel generative model that uses diffusion for reference-augmented 3D generation. Given an image, our method uses a retrieved or user-provided 3D reference model to guide the generation process, improving generation quality, generalization ability, and controllability. Our model integrates three key components: 1) a meta-ControlNet that dynamically modulates the conditioning strength, 2) dynamic reference routing that mitigates misalignment between the input image and the 3D reference, and 3) self-reference augmentations that enable self-supervised training with a progressive curriculum. Collectively, these designs result in a clear improvement over existing methods. Phidias establishes a unified framework for 3D generation from text, image, and 3D conditions, with versatile applications.

If you find this work helpful for your research, please cite:

@article{wang2024phidias,
  title={Phidias: A Generative Model for Creating 3D Content from Text, Image, and 3D Conditions with Reference-Augmented Diffusion},
  author={Zhenwei Wang and Tengfei Wang and Zexin He and Gerhard Hancke and Ziwei Liu and Rynson W.H. Lau},
  eprint={2409.11406},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  year={2024},
  url={https://arxiv.org/abs/2409.11406},
}