Jiayi Liu, Ali Mahdavi-Amiri, Manolis Savva

Presented at ICCV 2023

Project | Arxiv | Paper | Data (alternative link for data: OneDrive)

We recommend using miniconda to manage system dependencies. The environment was tested on Ubuntu 20.04.4 LTS with a single GPU (at least 12 GB of memory is required).

Create an environment from the environment.yml file.

conda env create -f environment.yml

conda activate paris

Then install the torch bindings for tiny-cuda-nn:

pip install ninja git+https://github.com/NVlabs/tiny-cuda-nn/#subdirectory=bindings/torch
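After installation, it can help to confirm that the key packages are visible from the activated environment. The snippet below is a minimal sketch and not part of the PARIS codebase: `missing_modules` is a hypothetical helper, and it assumes that PyTorch is importable as `torch` and that the tiny-cuda-nn bindings install under the module name `tinycudann`.

```python
import importlib.util


def missing_modules(names):
    """Return the module names that cannot be found in the current environment."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    # Assumed module names: "torch" for PyTorch, "tinycudann" for the tiny-cuda-nn bindings.
    missing = missing_modules(["torch", "tinycudann"])
    if missing:
        print("Missing modules:", ", ".join(missing))
    else:
        print("All required modules were found.")
```

The check only looks the modules up without importing them, so it does not verify that the CUDA build itself works.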

We release both the synthetic and the real data shown in the paper here. Once downloaded, place the data and load folders directly under the project directory.

If you find it slow to download the data from our server, please try this OneDrive link instead.

PARIS
├── data                # for GT motion and meshes
│   ├── sapien          # synthetic data
│   │   ├── [category]
│   ├── realscan        # real scan data
│   │   ├── [category]
├── load                # for input RGB images
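To check that the downloaded folders ended up in the right place, a short script along these lines could be run from the project directory. It is a sketch rather than a tool shipped with the repository: `missing_dirs` and `EXPECTED_DIRS` are hypothetical names, and the folder list is taken only from the layout above.

```python
from pathlib import Path

# Sub-folders listed in the layout above, relative to the project directory.
EXPECTED_DIRS = ["data/sapien", "data/realscan", "load"]


def missing_dirs(project_root):
    """Return the expected folders that do not exist under project_root."""
    root = Path(project_root)
    return [d for d in EXPECTED_DIRS if not (root / d).is_dir()]


if __name__ == "__main__":
    # Assumes the script is run from the project directory.
    missing = missing_dirs(".")
    print("Missing folders:", missing if missing else "none")
```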

Our synthetic data is preprocessed from the PartNet-Mobility dataset. If you would like to try more examples, you can use preprocess.py to generate the two input states by articulating one part and to save the meshes. You can then render the multi-view images from <state>.obj and use them as training data.

Download the pretrained models from here and put the folder under the project directory.

You can render the intermediate states of the object using the --predict mode:

python launch.py --predict \
    --config pretrain/storage/config/parsed.yaml \
    --resume pretrain/storage/ckpt/last.ckpt \
    dataset.n_interp=3  # the number of interpolated states to show

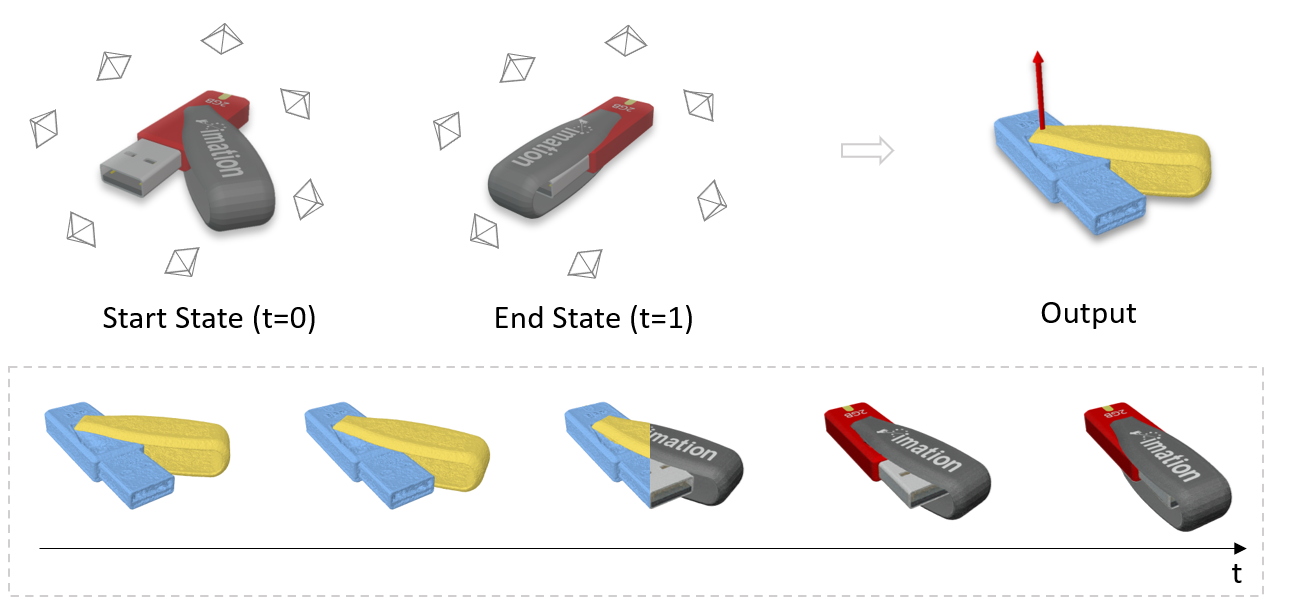

This will give you a image grid under exp/sapien/storage folder as below. It shows the interpolation from state t=0 to t=1 with ground truth at both ends.

Got errors running the command above?

If you are not on a local machine, you might encounter the following error from opencv and open3d:

ImportError: libGL.so.1: cannot open shared object file: No such file or directory

You can obtain the missing files by installing libglfw3-dev:

apt update && apt install -y libglfw3-dev
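To see whether the OpenGL library is visible before or after installing the package, a quick lookup with the Python standard library can be used. This is only a sketch: `find_libgl` is a hypothetical helper, and `ctypes.util.find_library` uses its own search heuristics, so its result is a hint rather than a guarantee that opencv and open3d will import.

```python
import ctypes.util


def find_libgl():
    """Return the name or path of the OpenGL library if one is found, else None."""
    return ctypes.util.find_library("GL")


if __name__ == "__main__":
    lib = find_libgl()
    if lib is None:
        print("libGL was not found; opencv and open3d imports may fail.")
    else:
        print("Found OpenGL library:", lib)
```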

To run the pretrained model in --test mode:

python launch.py --test \
    --config pretrain/storage/config/parsed.yaml \
    --resume pretrain/storage/ckpt/last.ckpt

Run python launch.py --train to train the model from scratch. For example, to train on the storage object above, run the following command:

python launch.py --train \
    --config configs/prismatic.yaml \
    source=sapien/storage/45135

For objects with a revolute joint, run with --config configs/revolute.yaml.

If the motion type is not given, it can also be estimated by running with --config configs/se3.yaml for ~5k steps. Once the motion type is known, we recommend switching back to the specialized configuration to estimate the remaining motion parameters with better performance. Please check out our paper for details.
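The choice of configuration described above can be summarized in a small helper that assembles the training command. This is a sketch for illustration and not part of the codebase: `build_train_command` and the `CONFIGS` mapping are hypothetical names, and only the flags and config paths mentioned in this README are used.

```python
# Config files named in this README, keyed by motion type.
CONFIGS = {
    "prismatic": "configs/prismatic.yaml",
    "revolute": "configs/revolute.yaml",
    "unknown": "configs/se3.yaml",  # motion type not given
}


def build_train_command(source, motion_type="unknown"):
    """Return the launch.py training command as an argument list."""
    if motion_type not in CONFIGS:
        raise ValueError(f"unsupported motion type: {motion_type!r}")
    return [
        "python", "launch.py", "--train",
        "--config", CONFIGS[motion_type],
        f"source={source}",
    ]


if __name__ == "__main__":
    print(" ".join(build_train_command("sapien/storage/45135", "prismatic")))
```

The returned list can be passed to `subprocess.run` from the project directory, or joined into a single string and pasted into a shell.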

@inproceedings{jiayi2023paris,
    title={PARIS: Part-level Reconstruction and Motion Analysis for Articulated Objects},
    author={Liu, Jiayi and Mahdavi-Amiri, Ali and Savva, Manolis},
    booktitle={Proceedings of the IEEE/CVF International Conference on Computer Vision},
    pages={352--363},
    year={2023}
}

The NeRF implementation is powered by tiny-cuda-nn and nerfacc. The codebase is partially borrowed from instant-nsr-pl. Please check out their great work for more details!