PyTorch implementation of FlowNet by Dosovitskiy et al.

This repository is a PyTorch implementation of FlowNet, by Alexey Dosovitskiy et al. See Torch implementation here

This code is mainly inspired by the official imagenet example. It has not been tested on multiple GPUs, but it should work just as in the original code.

The code provides a training example using the Flying Chairs dataset, with data augmentation. An implementation for the Scene Flow datasets may be added in the future.

Two neural network models are currently provided, each with a batch normalization (BN) variant:

- FlowNetS

- FlowNetSBN

- FlowNetC

- FlowNetCBN

Thanks to Kaixhin, you can download a pretrained version of FlowNetS (from caffe, not from pytorch) here. This folder also contains networks trained from scratch.

Feed the downloaded network file directly to the script; you don't need to uncompress it, even if your desktop environment suggests doing so.

These networks expect a BGR input in the range [-0.5, 0.5] (compared to RGB in pytorch). However, BGR order is not very important.
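As a rough illustration of that input convention, here is a minimal NumPy sketch that turns an 8-bit RGB image into a channels-first BGR array in [-0.5, 0.5]. The helper name `to_flownet_input` is hypothetical, and the plain divide-by-255 scaling is an assumption; the repository's own loaders may normalize differently.

```python
import numpy as np

def to_flownet_input(rgb_uint8):
    """Hypothetical helper: HxWx3 uint8 RGB -> 3xHxW float32 BGR in [-0.5, 0.5]."""
    img = rgb_uint8.astype(np.float32) / 255.0 - 0.5   # [0, 255] -> [-0.5, 0.5]
    img = img[:, :, ::-1]                               # RGB -> BGR (reverse the channel axis)
    return np.ascontiguousarray(img.transpose(2, 0, 1))  # HxWxC -> CxHxW

rgb = np.zeros((4, 6, 3), dtype=np.uint8)
rgb[..., 0] = 255                                       # pure red test image
x = to_flownet_input(rgb)
print(x.shape, float(x.min()), float(x.max()))
```

In the result, the red channel ends up last, which is what BGR order means here.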

pytorch >= 0.4.1

tensorboard-pytorch

tensorboardX >= 1.4

spatial-correlation-sampler>=0.0.8

imageio

argparse

First, you need to download the Flying Chairs dataset. It is about 64GB, and we recommend you put it on an SSD drive.

Default hyperparameters provided in main.py are the same as in the caffe training scripts.

- Example usage for FlowNetS :

python main.py /path/to/flying_chairs/ -b8 -j8 -a flownets

We recommend you set j (number of data threads) to a high value if you use data augmentation, so that data loading does not slow down training.

For further help you can type

python main.py -h

Tensorboard-pytorch is used for logging. To visualize results, simply type

tensorboard --logdir=/path/to/checkpoints

Models can be downloaded here in the pytorch folder.

Models were trained with default options unless specified. Color warping was not used.

| Arch | learning rate | batch size | epoch size | filename | validation EPE |
|---|---|---|---|---|---|
| FlowNetS | 1e-4 | 8 | 2700 | flownets_EPE1.951.pth.tar | 1.951 |
| FlowNetS BN | 1e-3 | 32 | 695 | flownets_bn_EPE2.459.pth.tar | 2.459 |
| FlowNetC | 1e-4 | 8 | 2700 | flownetc_EPE1.766.pth.tar | 1.766 |

Note: FlowNetS BN took longer to train and got worse results. It is strongly advised not to use it for the Flying Chairs dataset.

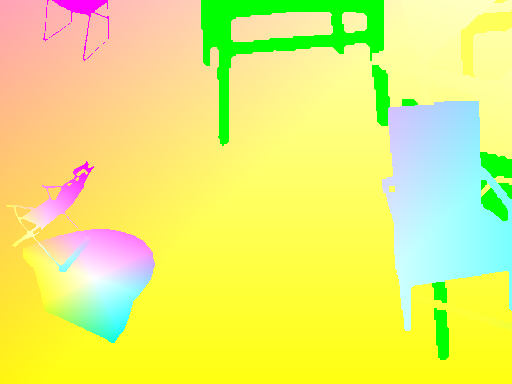

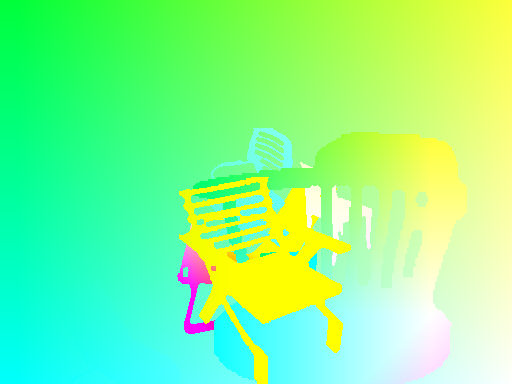

Predictions are made by FlowNetS.

Exact code for Optical Flow -> Color map can be found here

| Input | prediction | GroundTruth |
|---|---|---|

In order to have coherent transformations between inputs and target, we must define new transformations that take both input and target, as a new random variable is defined each time a random transformation is called.
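To make the idea concrete, here is a minimal sketch of such a co-transform. It uses a random crop as the example because the flow values need no correction there; the class name `CoRandomCrop` and its interface are hypothetical, not necessarily those used in this repository. The point is that the random offset is drawn once and reused for both images and the flow map.

```python
import numpy as np

class CoRandomCrop:
    """Hypothetical co-transform: crops the inputs and the target with ONE shared random offset."""

    def __init__(self, size, rng=None):
        self.th, self.tw = size
        self.rng = rng if rng is not None else np.random.default_rng()

    def __call__(self, inputs, target):
        h, w = target.shape[:2]
        # Draw the random offset a single time so images and flow stay aligned.
        y0 = int(self.rng.integers(0, h - self.th + 1))
        x0 = int(self.rng.integers(0, w - self.tw + 1))

        def crop(a):
            return a[y0:y0 + self.th, x0:x0 + self.tw]

        return [crop(img) for img in inputs], crop(target)

# Toy data: pixel (i, j) of img1 stores 3*(10*i + j), the flow stores 2*(10*i + j).
img1 = np.arange(8 * 10 * 3).reshape(8, 10, 3)
img2 = img1 + 1
flow = np.arange(8 * 10 * 2).reshape(8, 10, 2)

co_crop = CoRandomCrop((4, 5), np.random.default_rng(0))
(c1, c2), cflow = co_crop([img1, img2], flow)
print(c1.shape, c2.shape, cflow.shape)
```

Calling two independent single-argument random crops instead would draw two different offsets, which is exactly the incoherence described above.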

To allow data augmentation, we have considered rotations and translations of the inputs and their effect on the target flow map. Here is a set of things to take care of in order to achieve proper data augmentation:

If you apply a transformation to img1, you have to apply the very same transformation to the flow map, to get coherent origin points for the flow.

Given a translation (tx,ty) applied on img2, we will have

flow[:,:,0] += tx

flow[:,:,1] += ty

A scale applied to both img1 and img2 with a zoom parameter alpha multiplies the flow by the same amount

flow *= alpha
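The translation and scale rules above can be sketched with NumPy as follows. The function names are hypothetical, and only the flow values are updated; shifting or resampling the images themselves (and resizing the flow map when zooming) is not shown.

```python
import numpy as np

def translate_img2(flow, tx, ty):
    """Flow update when img2 alone is shifted by (tx, ty) pixels."""
    out = flow.astype(np.float32)   # astype returns a copy, so the input is untouched
    out[:, :, 0] += tx              # x component
    out[:, :, 1] += ty              # y component
    return out

def scale_both(flow, alpha):
    """Flow update when img1 and img2 are both zoomed by the same factor alpha."""
    return flow.astype(np.float32) * alpha

flow = np.ones((2, 3, 2), dtype=np.float32)
shifted = translate_img2(flow, tx=2.0, ty=-1.0)
zoomed = scale_both(flow, alpha=1.5)
print(shifted[0, 0], zoomed[0, 0])
```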

A rotation applied to both images by an angle theta also rotates the flow vectors (flow[i,j]) by the same angle

\for_all i,j flow[i,j] = rotate(flow[i,j], theta)

rotate: x,y,theta -> (x*cos(theta)-y*sin(theta), x*sin(theta)+y*cos(theta))
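As a sketch of this step, the snippet below rotates every flow vector with the standard 2D rotation matrix, (x, y) -> (x*cos(theta) - y*sin(theta), x*sin(theta) + y*cos(theta)). The function name is hypothetical, theta is assumed to be in radians, and whether a positive angle looks clockwise on screen depends on the image axis orientation. The flow map must also be resampled with the same rotation as the images, which is not shown here.

```python
import numpy as np

def rotate_flow_vectors(flow, theta):
    """Rotate each (x, y) vector of an HxWx2 flow map by theta radians."""
    c, s = np.cos(theta), np.sin(theta)
    fx, fy = flow[:, :, 0], flow[:, :, 1]
    return np.stack([fx * c - fy * s, fx * s + fy * c], axis=-1)

flow = np.zeros((2, 2, 2))
flow[:, :, 0] = 1.0                      # every vector points along +x
rotated = rotate_flow_vectors(flow, np.pi / 2)
print(np.round(rotated[0, 0], 6))        # a quarter turn maps +x onto +y
```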

When the rotation is applied to img2 only, we consider the angle theta small enough to linearize cos(theta) to 1 and sin(theta) to theta.

x flow map ( flow[:,:,0] ) will get a shift proportional to the distance from the center horizontal axis, i-h/2

y flow map ( flow[:,:,1] ) will get a shift proportional to the distance from the center vertical axis, j-w/2

With i the row index, j the column index, and theta counted positive from the x axis toward the y axis:

\for_all i,j flow[i,j] += -theta*(i-h/2), theta*(j-w/2)
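The small-angle shift can be sketched as below, under stated assumptions: img2 alone is rotated about the image center, i indexes rows and j indexes columns, theta is in radians and counted positive from the x axis toward the y axis. Under that convention the x shift is -theta*(i-h/2) and the y shift is theta*(j-w/2); both signs flip for the opposite rotation direction. The function name is hypothetical.

```python
import numpy as np

def small_rotation_shift(h, w, theta):
    """Position-dependent flow shift for a small rotation of img2 about the image center."""
    i, j = np.meshgrid(np.arange(h), np.arange(w), indexing="ij")  # i: rows, j: columns
    dx = -theta * (i - h / 2)   # x shift grows with the vertical offset from the center
    dy = theta * (j - w / 2)    # y shift grows with the horizontal offset from the center
    return np.stack([dx, dy], axis=-1)

h, w, theta = 4, 6, 0.01
shift = small_rotation_shift(h, w, theta)

# Compare with the exact displacement (R - I) applied to the centered coordinates.
i, j = np.meshgrid(np.arange(h), np.arange(w), indexing="ij")
x, y = j - w / 2, i - h / 2
exact = np.stack([x * np.cos(theta) - y * np.sin(theta) - x,
                  x * np.sin(theta) + y * np.cos(theta) - y], axis=-1)
print(float(np.abs(shift - exact).max()))
```

The printed value is the worst-case linearization error on this small grid, which stays tiny for small angles and grows with both theta and the image size.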