Barbershop: GAN-based Image Compositing using Segmentation Masks

Peihao Zhu, Rameen Abdal, John Femiani, Peter Wonka

arXiv | BibTeX | Project Page | Video

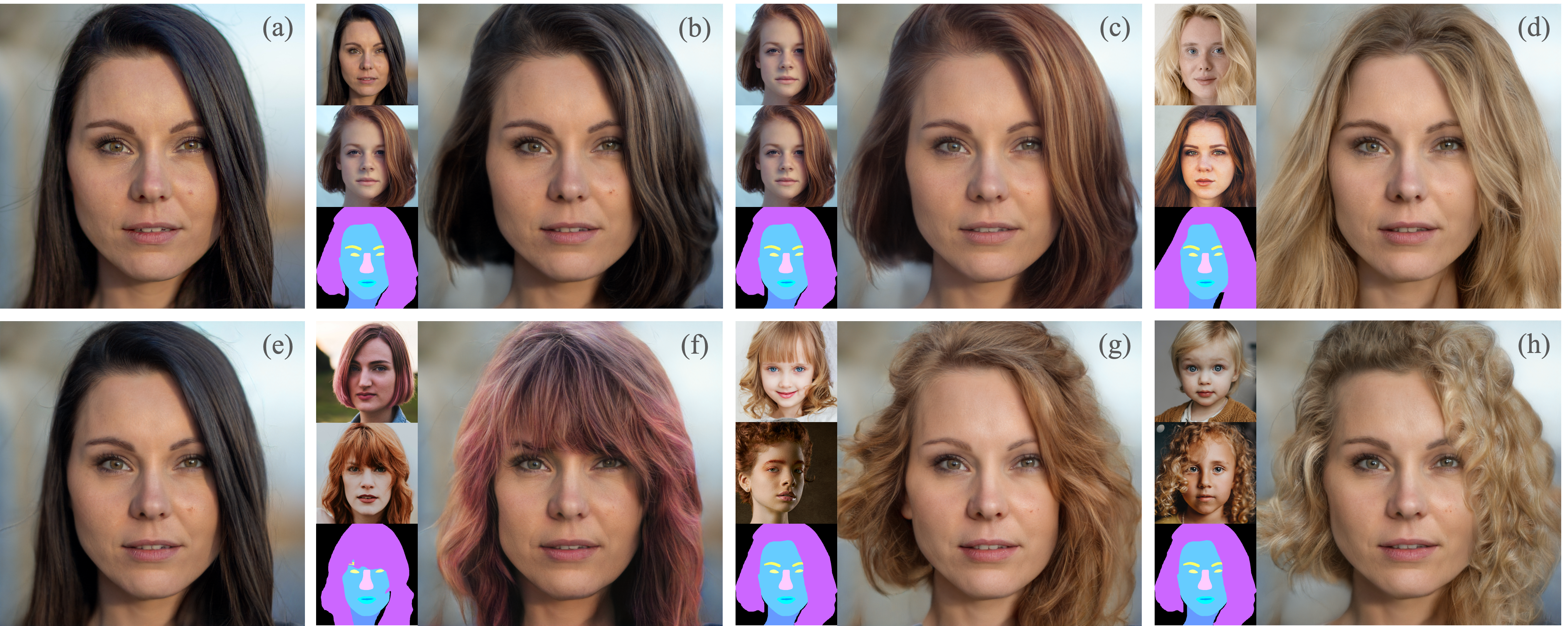

Abstract: Seamlessly blending features from multiple images is extremely challenging because of complex relationships in lighting, geometry, and partial occlusion which cause coupling between different parts of the image. Even though recent work on GANs enables synthesis of realistic hair or faces, it remains difficult to combine them into a single, coherent, and plausible image rather than a disjointed set of image patches. We present a novel solution to image blending, particularly for the problem of hairstyle transfer, based on GAN-inversion. We propose a novel latent space for image blending which is better at preserving detail and encoding spatial information, and propose a new GAN-embedding algorithm which is able to slightly modify images to conform to a common segmentation mask. Our novel representation enables the transfer of the visual properties from multiple reference images including specific details such as moles and wrinkles, and because we do image blending in a latent space we are able to synthesize images that are coherent. Our approach avoids blending artifacts present in other approaches and finds a globally consistent image. Our results demonstrate a significant improvement over the current state of the art in a user study, with users preferring our blending solution over 95 percent of the time.

This is the official implementation of Barbershop. The repository is still being updated, so please run git pull to get the latest version.

2021/12/27 Add dilation and erosion parameters to smooth the boundary.

2021/12/24 Important Update: Add semantic mask inpainting module to solve the occlusion problem. Please git pull the latest version.

2021/12/18 Add a rough version of the project.

2021/06/02 Add project page.

- Clone the repository:

git clone https://github.com/ZPdesu/Barbershop.git

cd Barbershop

- Dependencies:

We recommend running this repository using Anaconda. All dependencies for defining the environment are provided in environment/environment.yaml.

Please download the II2S images and put them in the input/face folder.
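Before running the example commands in this README, it can help to confirm that the images they name are actually in place. The following is a minimal sketch, assuming that main.py looks up the file names passed to --im_path1, --im_path2, and --im_path3 inside input/face; the helper name `missing_images` is hypothetical and not part of this repository.

```python
from pathlib import Path


def missing_images(names, folder="input/face"):
    """Return the entries of `names` that are not regular files in `folder`.

    Hypothetical helper for illustration; it is not part of the Barbershop code.
    """
    root = Path(folder)
    return [name for name in names if not (root / name).is_file()]
```

For example, calling `missing_images(["90.png", "15.png", "117.png"])` from the repository root returns an empty list when all three example images are present.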

To preprocess your own images, put the raw images in the unprocessed folder and run:

python align_face.py

Produce realistic results:

python main.py --im_path1 90.png --im_path2 15.png --im_path3 117.png --sign realistic --smooth 5

Produce results faithful to the masks:

python main.py --im_path1 90.png --im_path2 15.png --im_path3 117.png --sign fidelity --smooth 5
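The two commands above differ only in the value of --sign, so both modes can be run on the same image triple from a small script. The sketch below only assembles the command line using the flags shown in this README; the helper names `build_command` and `run_both_modes` are hypothetical, and the check that restricts --sign to "realistic" and "fidelity" reflects the two values shown here rather than anything verified against main.py.

```python
import subprocess
import sys

# The two --sign values shown in this README.
SIGNS = ("realistic", "fidelity")


def build_command(im_path1, im_path2, im_path3, sign, smooth=5):
    """Return the argument list for one main.py run (hypothetical helper)."""
    if sign not in SIGNS:
        raise ValueError(f"sign must be one of {SIGNS}, got {sign!r}")
    return [
        sys.executable, "main.py",
        "--im_path1", im_path1,
        "--im_path2", im_path2,
        "--im_path3", im_path3,
        "--sign", sign,
        "--smooth", str(smooth),
    ]


def run_both_modes(im_path1, im_path2, im_path3, smooth=5):
    """Run main.py once per mode; call this from the repository root."""
    for sign in SIGNS:
        cmd = build_command(im_path1, im_path2, im_path3, sign, smooth)
        # check=True stops at the first run that exits with an error.
        subprocess.run(cmd, check=True)
```

Calling `run_both_modes("90.png", "15.png", "117.png")` is then equivalent to running the two commands above one after the other.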

A Dockerfile is also provided, so you can run this code in Docker:

docker build -t barbershop .

docker run --name barbershop --gpus all --rm -it -v <absolute_local_path>:/workspace/ barbershop --im_path1 90.png --im_path2 117.png --im_path3 15.png --sign fidelity --smooth 5

- add a detailed readme

- update mask inpainting code

- integrate image encoder

- add preprocessing step

- ...

This code borrows heavily from II2S.

@misc{zhu2021barbershop,
      title={Barbershop: GAN-based Image Compositing using Segmentation Masks},
      author={Peihao Zhu and Rameen Abdal and John Femiani and Peter Wonka},
      year={2021},
      eprint={2106.01505},
      archivePrefix={arXiv},
      primaryClass={cs.CV}
}