This is the official repository of our paper Cross3DVG: Cross-Dataset 3D Visual Grounding on Different RGB-D Scans (3DV 2024) by Taiki Miyanishi, Daichi Azuma, Shuhei Kurita, and Motoaki Kawanabe.

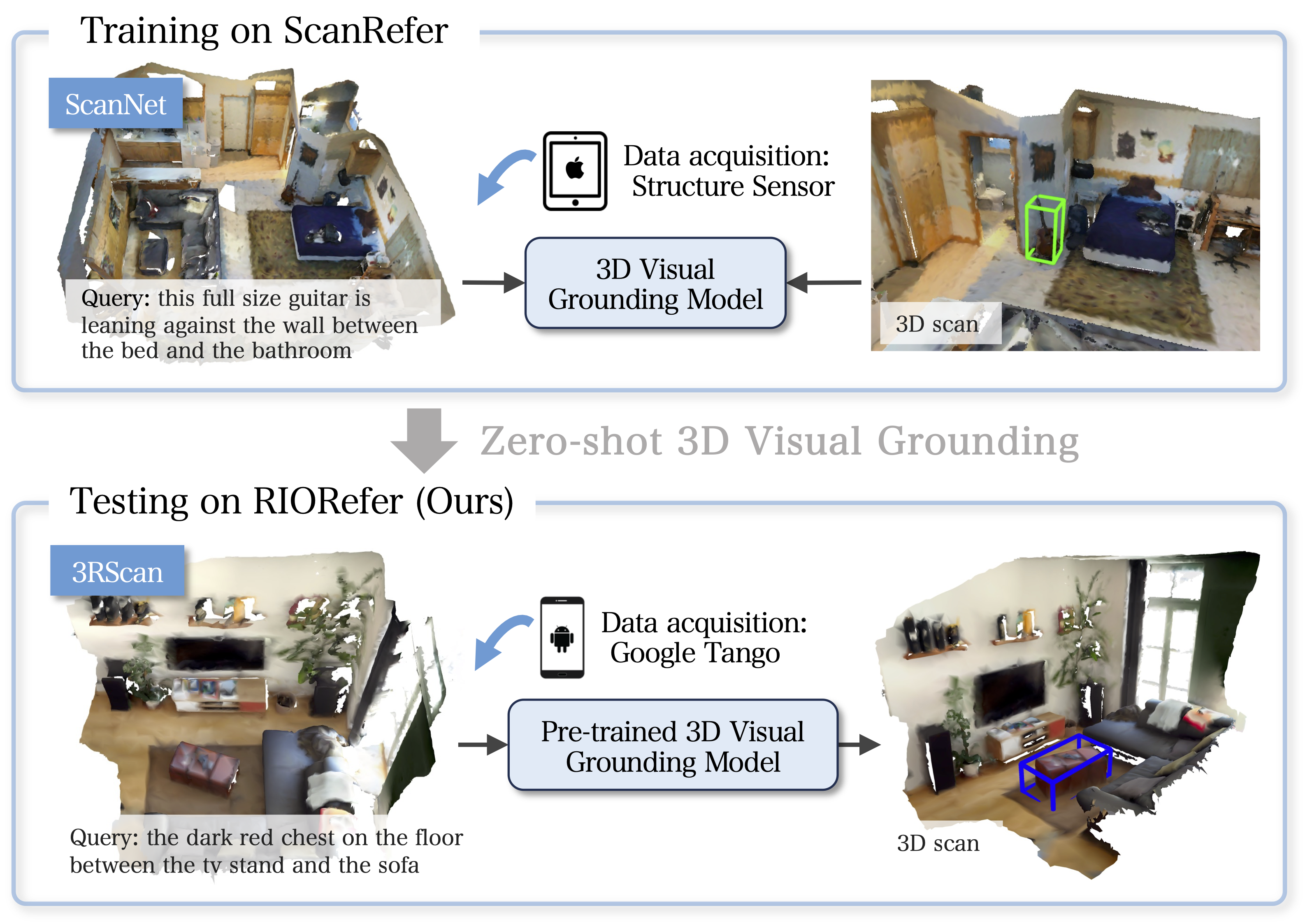

We present a novel task for cross-dataset visual grounding in 3D scenes (Cross3DVG), which overcomes limitations of existing 3D visual grounding models, specifically their restricted 3D resources and consequent tendencies of overfitting a specific 3D dataset. We created RIORefer, a large-scale 3D visual grounding dataset, to facilitate Cross3DVG. It includes more than 63k diverse descriptions of 3D objects within 1,380 indoor RGB-D scans from 3RScan, with human annotations. After training the Cross3DVG model using the source 3D visual grounding dataset, we evaluate it without target labels using the target dataset with, e.g., different sensors, 3D reconstruction methods, and language annotators. Comprehensive experiments are conducted using established visual grounding models and with CLIP-based multi-view 2D and 3D integration designed to bridge gaps among 3D datasets. For Cross3DVG tasks, (i) cross-dataset 3D visual grounding exhibits significantly worse performance than learning and evaluation with a single dataset because of the 3D data and language variants across datasets. Moreover, (ii) better object detector and localization modules and fusing 3D data and multi-view CLIP-based image features can alleviate this lower performance. Our Cross3DVG task can provide a benchmark for developing robust 3D visual grounding models to handle diverse 3D scenes while leveraging deep language understanding.

    # create and activate the conda environment
    conda create -n cross3dvg python=3.10
    conda activate cross3dvg

    # install PyTorch
    pip install torch==1.13.1+cu116 torchvision==0.14.1+cu116 torchaudio==0.13.1 --extra-index-url https://download.pytorch.org/whl/cu116

    # install the necessary packages from requirements.txt
    pip install -r requirements.txt

    # install PointNet++
    cd lib/pointnet2
    python setup.py install

This code has been tested with Python 3.10, PyTorch 1.13.1, and CUDA 11.6 on Ubuntu 20.04. We recommend using Miniconda.
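
After installation, it can help to confirm that the installed packages match the tested versions. The following is a minimal sketch, not part of this repository: `TESTED_VERSIONS` and `check_versions` are hypothetical names, and the check only compares version strings using the standard library.

```python
from importlib import metadata

# Versions taken from the install command in this README; adjust if your setup differs.
TESTED_VERSIONS = {"torch": "1.13.1", "torchvision": "0.14.1", "torchaudio": "0.13.1"}


def check_versions(expected):
    """Return {package: (installed_version_or_None, matches_expected)}."""
    report = {}
    for name, wanted in expected.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            installed = None
        # Local build tags such as "+cu116" are ignored in the comparison.
        ok = installed is not None and installed.split("+")[0] == wanted
        report[name] = (installed, ok)
    return report


if __name__ == "__main__":
    for name, (installed, ok) in check_versions(TESTED_VERSIONS).items():
        status = "ok" if ok else "differs or missing"
        print(f"{name}: installed={installed} -> {status}")
```

This does not verify GPU access; calling `torch.cuda.is_available()` in the activated environment is a separate check.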

- Please refer to ScanRefer for data preprocessing details.

- Download the ScanNet v2 dataset and the extracted ScanNet frames, and place both of them in `data/scannet/`. The raw dataset files should be organized as follows:

      Cross3DVG
      ├── data
      │   ├── scannet
      │   │   ├── scans
      │   │   │   ├── [scene_id]
      │   │   │   │   ├── [scene_id]_vh_clean_2.ply
      │   │   │   │   ├── [scene_id]_vh_clean_2.0.010000.segs.json
      │   │   │   │   ├── [scene_id].aggregation.json
      │   │   │   │   ├── [scene_id].txt
      │   │   ├── frames_square
      │   │   │   ├── [scene_id]
      │   │   │   │   ├── color
      │   │   │   │   ├── pose

- Pre-process the ScanNet data. A folder named `scannet200_data/` will be generated under `data/scannet/` after running the following commands:

      cd data/scannet/
      python prepare_scannet200.py

- Download the GloVe embeddings (~990MB) and place them in `data/`.

- Download the 3RScan dataset and place it in `data/rio/scans`:

      Cross3DVG
      ├── data
      │   ├── rio
      │   │   ├── scans
      │   │   │   ├── [scan_id]
      │   │   │   │   ├── mesh.refined.v2.obj
      │   │   │   │   ├── mesh.refined.mtl
      │   │   │   │   ├── mesh.refined_0.png
      │   │   │   │   ├── sequence
      │   │   │   │   ├── labels.instances.annotated.v2.ply
      │   │   │   │   ├── mesh.refined.0.010000.segs.v2.json
      │   │   │   │   ├── semseg.v2.json

- Pre-process the 3RScan data. A folder named `rio200_data/` will be generated under `data/rio/` after running the following commands:

      cd data/rio/
      python prepare_rio200.py

- Download the ScanRefer dataset (train/val). The raw dataset files should be organized as follows:

      Cross3DVG
      ├── dataset
      │   ├── scanrefer
      │   │   ├── metadata
      │   │   │   ├── ScanRefer_filtered_train.json
      │   │   │   ├── ScanRefer_filtered_val.json

- Pre-process the ScanRefer data. Folders named `text_feature/` and `image_feature/` are generated under `data/scanrefer/` after running the following commands:

      python scripts/compute_text_clip_features.py --encode_scannet_all --encode_scanrefer --use_norm
      python scripts/compute_image_clip_features.py --encode_scanrefer --use_norm

- Download the RIORefer dataset (train/val). The raw dataset files should be organized as follows:

      Cross3DVG
      ├── dataset
      │   ├── riorefer
      │   │   ├── metadata
      │   │   │   ├── RIORefer_train.json
      │   │   │   ├── RIORefer_val.json

- Pre-process the RIORefer data. Folders named `text_feature/` and `image_feature/` are generated under `data/riorefer/` after running the following commands:

      python scripts/compute_text_clip_features.py --encode_rio_all --encode_riorefer --use_norm
      python scripts/compute_image_clip_features.py --encode_riorefer --use_norm
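
Before running the pre-processing scripts, it may be worth checking that the downloaded scans match the directory layouts listed above. The sketch below is not part of this repository: `missing_files` and the two template lists are hypothetical names, the file names are copied from the trees in this README, and the check only tests for existence, not file validity.

```python
from pathlib import Path

# Per-scene entries copied from the ScanNet tree in this README.
SCANNET_SCENE_FILES = [
    "{id}_vh_clean_2.ply",
    "{id}_vh_clean_2.0.010000.segs.json",
    "{id}.aggregation.json",
    "{id}.txt",
]
# Per-scan entries copied from the 3RScan tree in this README.
RIO_SCAN_FILES = [
    "mesh.refined.v2.obj",
    "mesh.refined.mtl",
    "mesh.refined_0.png",
    "sequence",
    "labels.instances.annotated.v2.ply",
    "mesh.refined.0.010000.segs.v2.json",
    "semseg.v2.json",
]


def missing_files(scans_dir, templates):
    """Map each scan folder under scans_dir to the expected entries it lacks."""
    missing = {}
    for scan in sorted(Path(scans_dir).iterdir()):
        if not scan.is_dir():
            continue
        names = [t.format(id=scan.name) for t in templates]
        absent = [n for n in names if not (scan / n).exists()]
        if absent:
            missing[scan.name] = absent
    return missing


if __name__ == "__main__":
    checks = [("data/scannet/scans", SCANNET_SCENE_FILES),
              ("data/rio/scans", RIO_SCAN_FILES)]
    for scans_dir, templates in checks:
        if Path(scans_dir).is_dir():
            print(scans_dir, missing_files(scans_dir, templates) or "complete")
        else:
            print(scans_dir, "not found")
```

Run it from the repository root; an empty result for a directory means every scan folder contains the listed entries.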

To train the Cross3DVG model with multiview CLIP-based image features, run the command:

    python scripts/train.py --dataset {scanrefer/riorefer} --coslr --use_normal --use_text_clip --use_image_clip --use_text_clip_norm --use_image_clip_norm --match_joint_score

To see more training options, run `python scripts/train.py -h`.
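
The `{scanrefer/riorefer}` placeholder means that one of the two dataset names is passed to `--dataset`. As a minimal sketch, the two concrete commands could be assembled as follows; `build_train_command` is a hypothetical helper, not a function in this repository, and it uses only the flags shown above.

```python
import shlex

# Flags copied from the training command in this README.
TRAIN_FLAGS = [
    "--coslr", "--use_normal", "--use_text_clip", "--use_image_clip",
    "--use_text_clip_norm", "--use_image_clip_norm", "--match_joint_score",
]
DATASETS = ("scanrefer", "riorefer")


def build_train_command(dataset):
    """Return the argv list for training on one source dataset."""
    if dataset not in DATASETS:
        raise ValueError(f"dataset must be one of {DATASETS}, got {dataset!r}")
    return ["python", "scripts/train.py", "--dataset", dataset, *TRAIN_FLAGS]


if __name__ == "__main__":
    for dataset in DATASETS:
        # Print rather than execute; pass the list to subprocess.run to launch.
        print(shlex.join(build_train_command(dataset)))
```

Building the command as a list keeps the dataset name validated in one place, which is convenient when training on both source datasets in turn.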

To evaluate the trained models, please find the folder under outputs/ and run the command:

    python scripts/eval.py --output outputs --folder <folder_name> --dataset {scanrefer/riorefer} --no_nms --force --reference --repeat 5

The training information is saved in the `info.json` file located within the `outputs/<folder_name>/` directory.
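
To find the `<folder_name>` of the latest run and inspect its `info.json`, something like the following sketch may be useful. It is not part of this repository: `latest_run` and `load_info` are hypothetical helpers, "latest" is assumed to mean the most recently modified `info.json`, and no assumption is made about which keys the file contains.

```python
import json
from pathlib import Path


def latest_run(outputs_dir="outputs"):
    """Return the run folder whose info.json was modified most recently."""
    runs = [p for p in Path(outputs_dir).iterdir() if (p / "info.json").is_file()]
    if not runs:
        raise FileNotFoundError(f"no run with info.json under {outputs_dir}")
    return max(runs, key=lambda p: (p / "info.json").stat().st_mtime)


def load_info(run_dir):
    """Load the training information stored in <run_dir>/info.json."""
    with open(Path(run_dir) / "info.json") as f:
        return json.load(f)


if __name__ == "__main__":
    if Path("outputs").is_dir():
        try:
            run = latest_run("outputs")
            info = load_info(run)
            # Only the top-level structure is shown; the contents depend on the run.
            print(run.name, sorted(info) if isinstance(info, dict) else type(info))
        except FileNotFoundError as err:
            print(err)
    else:
        print("outputs/ not found")
```

The printed folder name can then be passed to `--folder` in the evaluation command.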

Performance (ScanRefer -> RIORefer):

| Split | IoU threshold | Unique | Multiple | Overall |
|---|---|---|---|---|
| Val | 0.25 | 37.37 | 18.52 | 24.78 (36.56) |
| Val | 0.5 | 19.77 | 10.15 | 13.34 (20.21) |

Values in parentheses denote the performance of RIORefer -> RIORefer (training and evaluation on the same dataset).
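
The table reports results at IoU thresholds of 0.25 and 0.5. As an illustration of how such a thresholded score is commonly computed, here is a minimal sketch. It assumes axis-aligned boxes given as `(xmin, ymin, zmin, xmax, ymax, zmax)` and counts a prediction as correct when its IoU with the ground-truth box is at least the threshold; the function names are hypothetical, and the repository's `scripts/eval.py` may use a different box format or comparison.

```python
def box_volume(box):
    """Volume of an axis-aligned box (xmin, ymin, zmin, xmax, ymax, zmax)."""
    return (box[3] - box[0]) * (box[4] - box[1]) * (box[5] - box[2])


def box_iou_3d(a, b):
    """Intersection over union of two axis-aligned 3D boxes."""
    inter = 1.0
    for axis in range(3):
        overlap = min(a[axis + 3], b[axis + 3]) - max(a[axis], b[axis])
        if overlap <= 0:
            return 0.0  # the boxes do not overlap along this axis
        inter *= overlap
    return inter / (box_volume(a) + box_volume(b) - inter)


def accuracy_at_iou(pred_boxes, gt_boxes, threshold):
    """Percentage of predictions whose IoU with the ground truth meets the threshold."""
    if len(pred_boxes) != len(gt_boxes) or not gt_boxes:
        raise ValueError("need equally many predicted and ground-truth boxes")
    hits = sum(box_iou_3d(p, g) >= threshold for p, g in zip(pred_boxes, gt_boxes))
    return 100.0 * hits / len(gt_boxes)


if __name__ == "__main__":
    gt = (0, 0, 0, 2, 2, 2)
    shifted = (1, 0, 0, 3, 2, 2)  # overlaps half of gt along x
    print(box_iou_3d(gt, shifted))
    print(accuracy_at_iou([shifted, gt], [gt, gt], 0.5))
```

Under this definition, a higher threshold can only keep or lower the score, which is consistent with the 0.5 row being lower than the 0.25 row.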

Performance (RIORefer -> ScanRefer):

| Split | IoU threshold | Unique | Multiple | Overall |
|---|---|---|---|---|
| Val | 0.25 | 47.90 | 24.25 | 33.34 (48.74) |
| Val | 0.5 | 26.03 | 15.40 | 19.48 (32.69) |

Values in parentheses denote the performance of ScanRefer -> ScanRefer (training and evaluation on the same dataset).
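
The size of the cross-dataset gap can be read off the "Overall" columns of both tables by comparing each cross-dataset score with the within-dataset score in parentheses. The sketch below does this arithmetic; the numbers are copied from this README, while `RESULTS` and `gap` are hypothetical names introduced for illustration.

```python
# (setting, IoU threshold) -> (cross-dataset overall, within-dataset overall)
RESULTS = {
    ("ScanRefer -> RIORefer", 0.25): (24.78, 36.56),
    ("ScanRefer -> RIORefer", 0.5): (13.34, 20.21),
    ("RIORefer -> ScanRefer", 0.25): (33.34, 48.74),
    ("RIORefer -> ScanRefer", 0.5): (19.48, 32.69),
}


def gap(cross, within):
    """Return (absolute drop in points, relative drop in percent)."""
    drop = within - cross
    return round(drop, 2), round(100.0 * drop / within, 1)


if __name__ == "__main__":
    for (setting, iou), (cross, within) in RESULTS.items():
        absolute, relative = gap(cross, within)
        print(f"{setting} @ IoU {iou}: -{absolute} points ({relative}% relative)")
```

In every setting the cross-dataset score is roughly a third or more below the within-dataset score, which quantifies the performance drop described in the overview.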

If you find our work helpful for your research, please consider citing our paper.

    @inproceedings{miyanishi_2024_3DV,
      title={Cross3DVG: Cross-Dataset 3D Visual Grounding on Different RGB-D Scans},
      author={Miyanishi, Taiki and Azuma, Daichi and Kurita, Shuhei and Kawanabe, Motoaki},
      booktitle={The 10th International Conference on 3D Vision},
      year={2024}
    }

We would like to thank the authors of the following codebase.