Muhammad Maaz*, Hanoona Rasheed*, Salman Khan and Fahad Khan

* Equally contributing first authors

Demo | Paper | Demo Clips | Offline Demo | Training | Video Instruction Data | Quantitative Evaluation | Qualitative Analysis

- Sep-30: Our VideoInstruct100K dataset can be downloaded from HuggingFace/VideoInstruct100K. 🔥🔥

- Jul-15: Our quantitative evaluation benchmark for Video-based Conversational Models now has its own dedicated website: https://mbzuai-oryx.github.io/Video-ChatGPT. 🔥🔥

- Jun-28: Updated GitHub readme featuring benchmark comparisons of Video-ChatGPT against recent models: Video Chat, Video LLaMA, and LLaMA Adapter. Amid these advanced conversational models, Video-ChatGPT continues to deliver state-of-the-art performance. 🔥🔥

- Jun-08: Released the training code, offline demo, instructional data and technical report. All the resources including models, datasets and extracted features are available here. 🔥🔥

- May-21: Video-ChatGPT demo released.

🔥🔥 You can try our demo using the provided examples or by uploading your own videos HERE. 🔥🔥

🔥🔥 Or click the image to try the demo! 🔥🔥

You can access all the videos we demonstrate here.

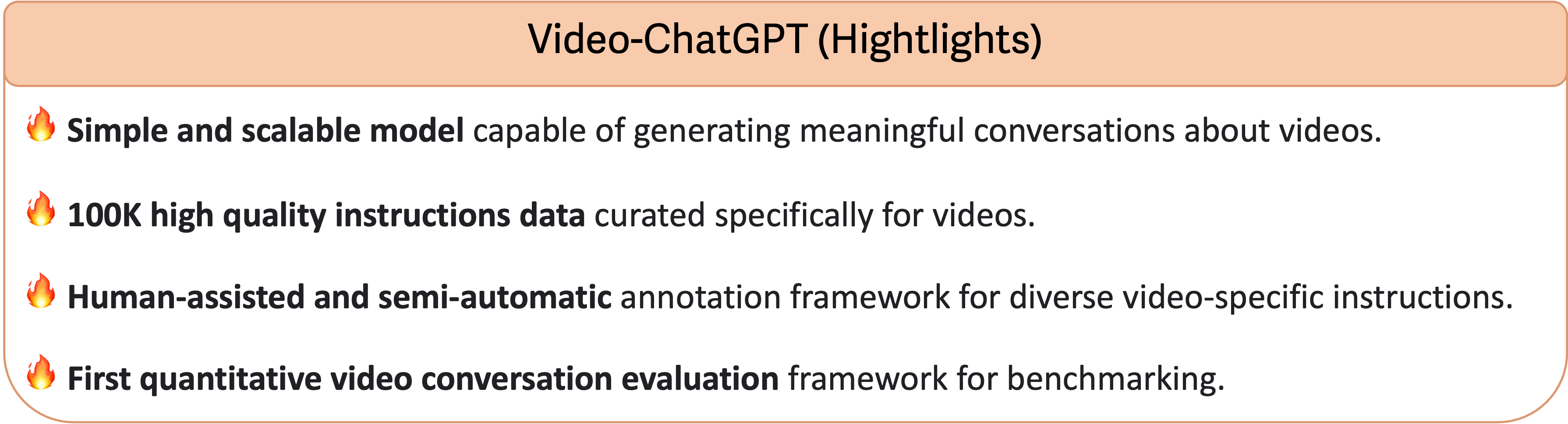

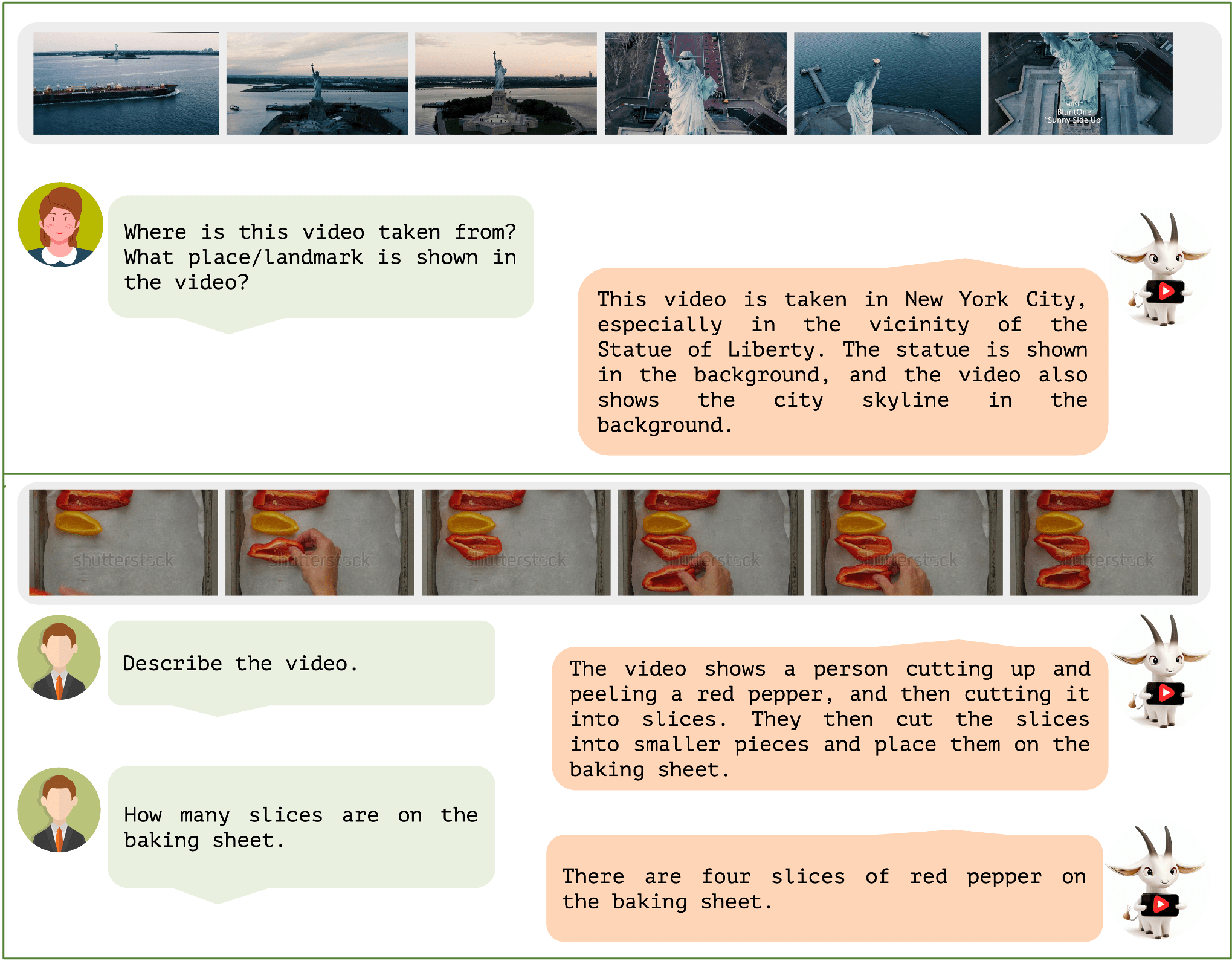

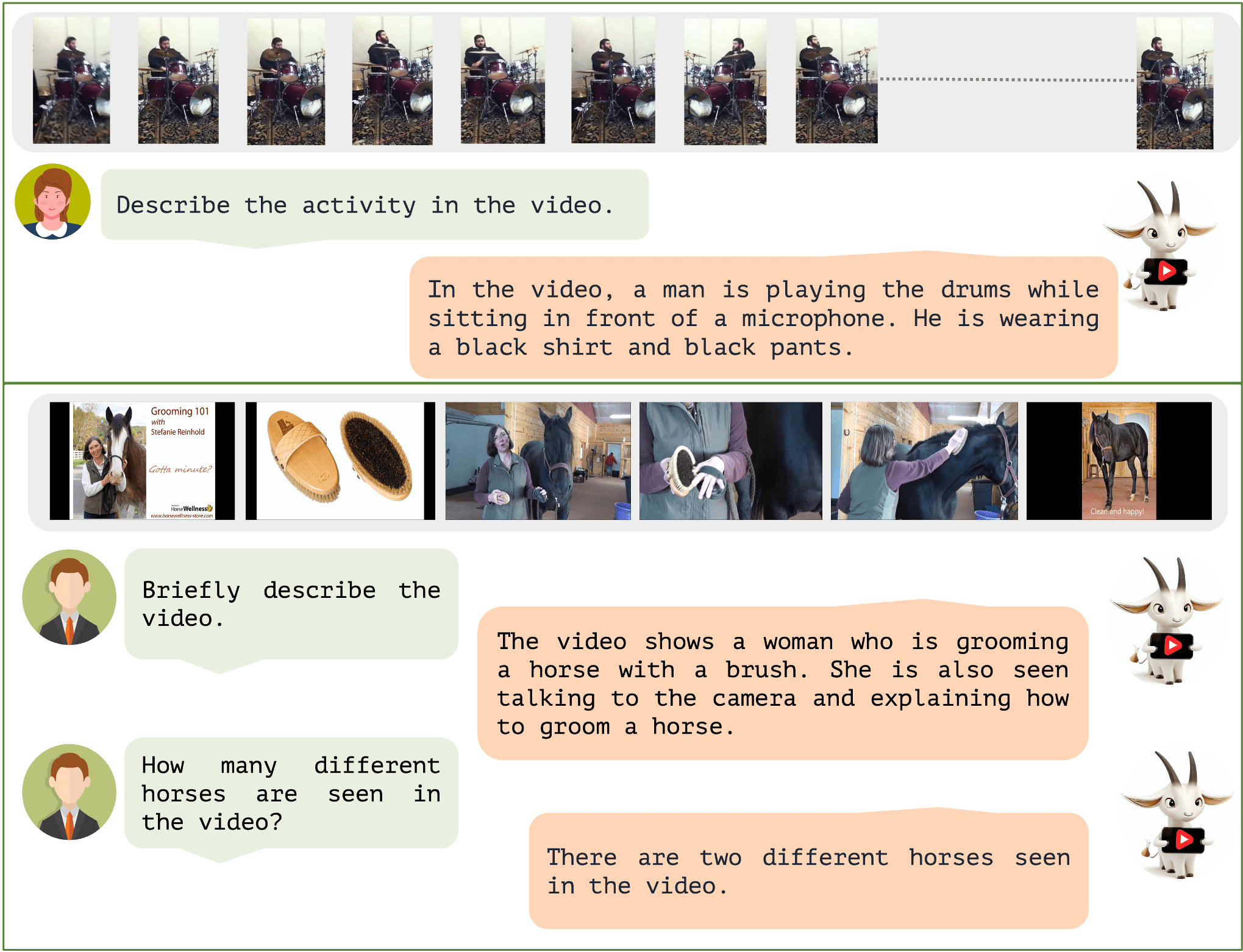

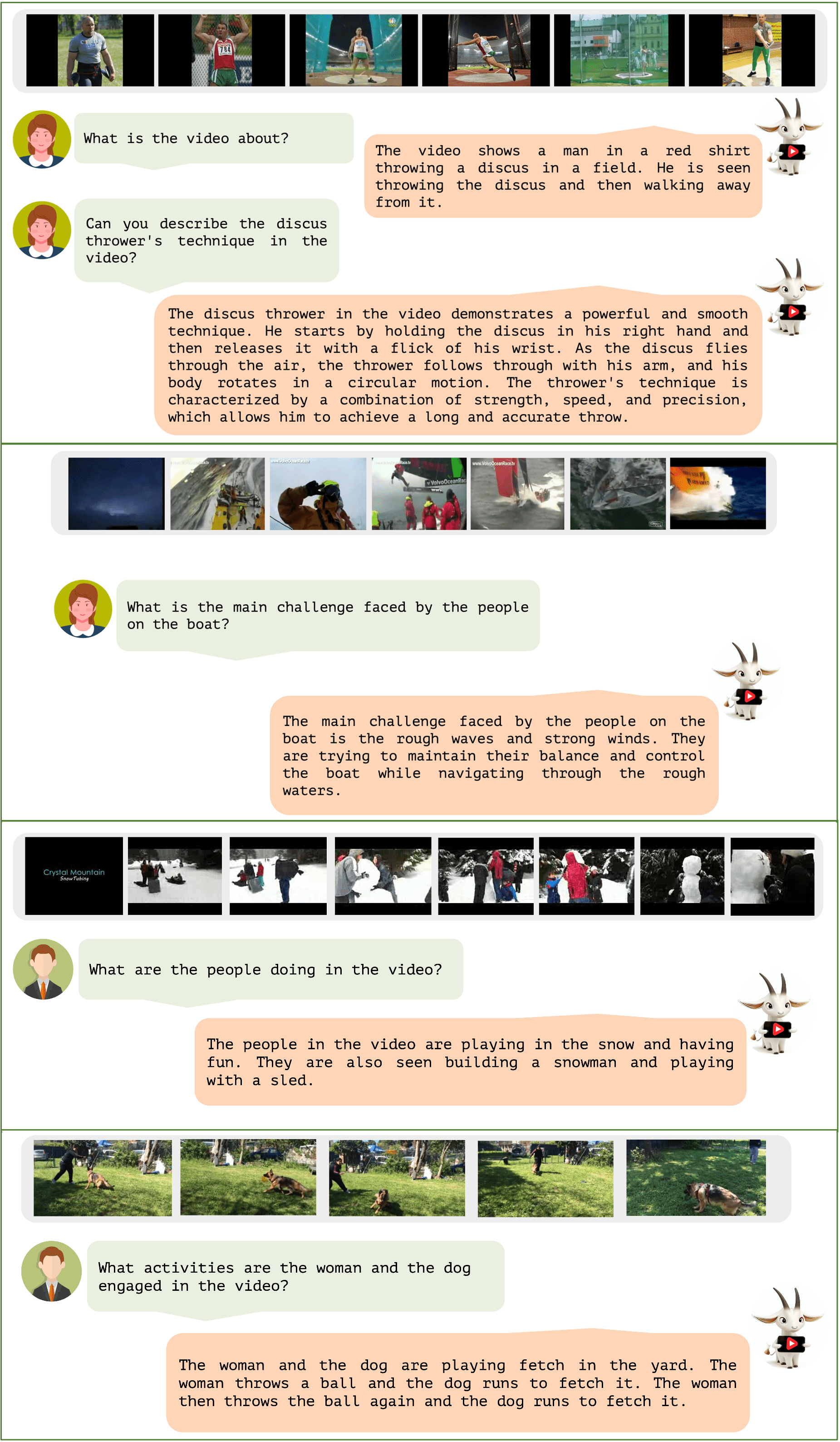

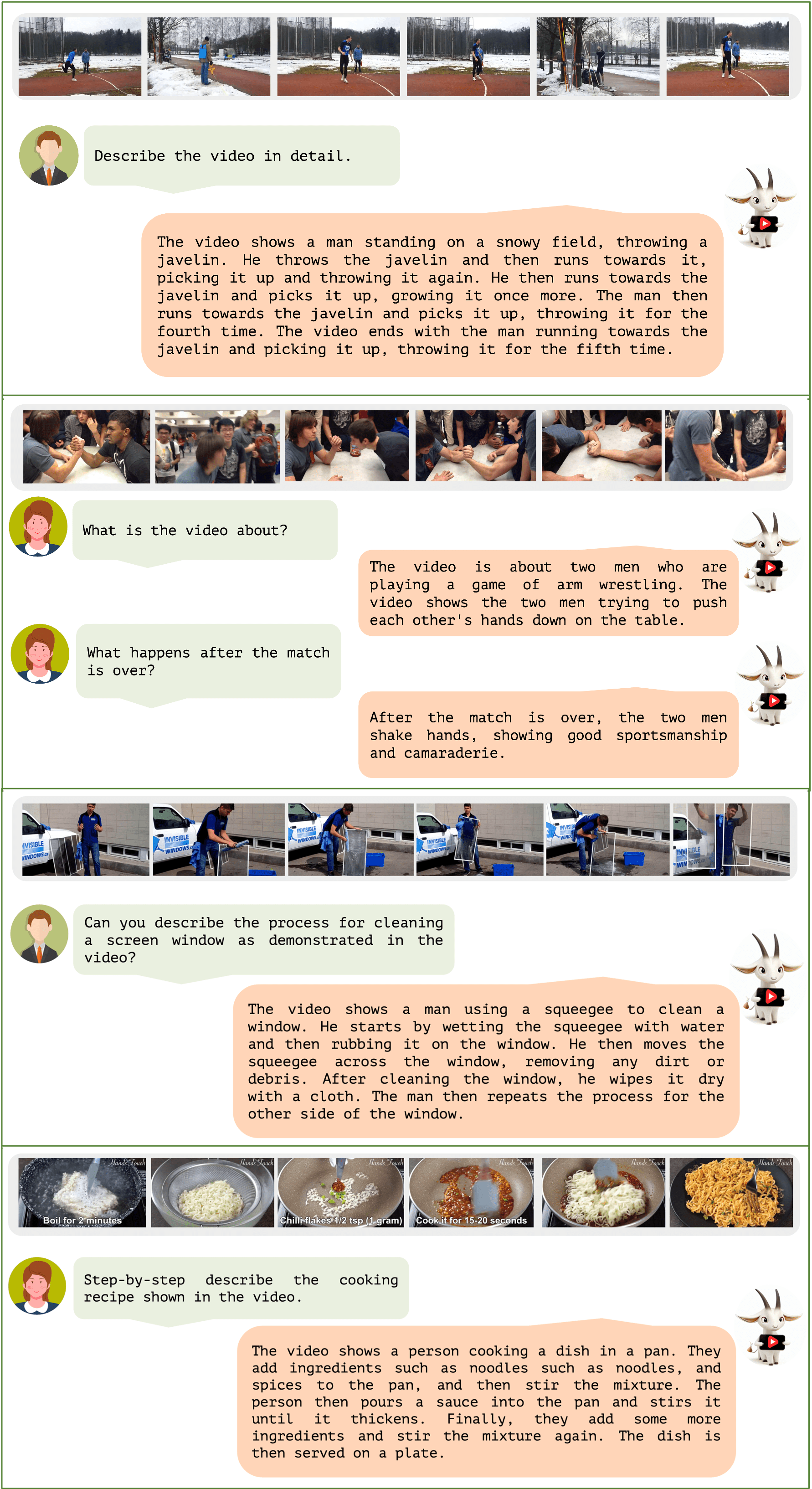

Video-ChatGPT is a video conversation model capable of generating meaningful conversation about videos. It combines the capabilities of LLMs with a pretrained visual encoder adapted for spatiotemporal video representation.
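The overview above does not spell out how an image encoder is adapted for spatiotemporal video representation. As a minimal sketch, assuming per-frame patch embeddings are average-pooled along the spatial axis (one token per frame) and along the temporal axis (one token per patch position) and then concatenated, the idea might look like the following. The function names and the toy input are hypothetical and are not taken from the repository.

```python
from typing import List

Vector = List[float]


def mean_vectors(vectors: List[Vector]) -> Vector:
    """Element-wise mean of equally sized vectors."""
    count = len(vectors)
    return [sum(values) / count for values in zip(*vectors)]


def spatiotemporal_features(frames: List[List[Vector]]) -> List[Vector]:
    """Pool frame-level patch features into one video-level token sequence.

    frames[t][n] is the D-dimensional embedding of patch n in frame t
    (T frames, N patches per frame). The result holds T temporal tokens
    followed by N spatial tokens, each of dimension D.
    This pooling scheme is an assumption made for illustration.
    """
    num_patches = len(frames[0])
    # Temporal tokens: average over the patches inside each frame (T vectors).
    temporal = [mean_vectors(frame) for frame in frames]
    # Spatial tokens: average each patch position over all frames (N vectors).
    spatial = [
        mean_vectors([frame[n] for frame in frames]) for n in range(num_patches)
    ]
    return temporal + spatial


# Toy input: T=2 frames, N=3 patches, D=2 features (values are made up).
frames = [
    [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]],
    [[7.0, 8.0], [9.0, 10.0], [11.0, 12.0]],
]
tokens = spatiotemporal_features(frames)
print(len(tokens))  # T + N tokens
```

In a real pipeline the resulting tokens would typically be projected into the language model's embedding space; that step is omitted here.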

- We introduce 100K high-quality video-instruction pairs, together with a novel, scalable annotation framework that generates a diverse range of video-specific instruction sets.

- We develop the first quantitative video conversation evaluation framework for benchmarking video conversation models.

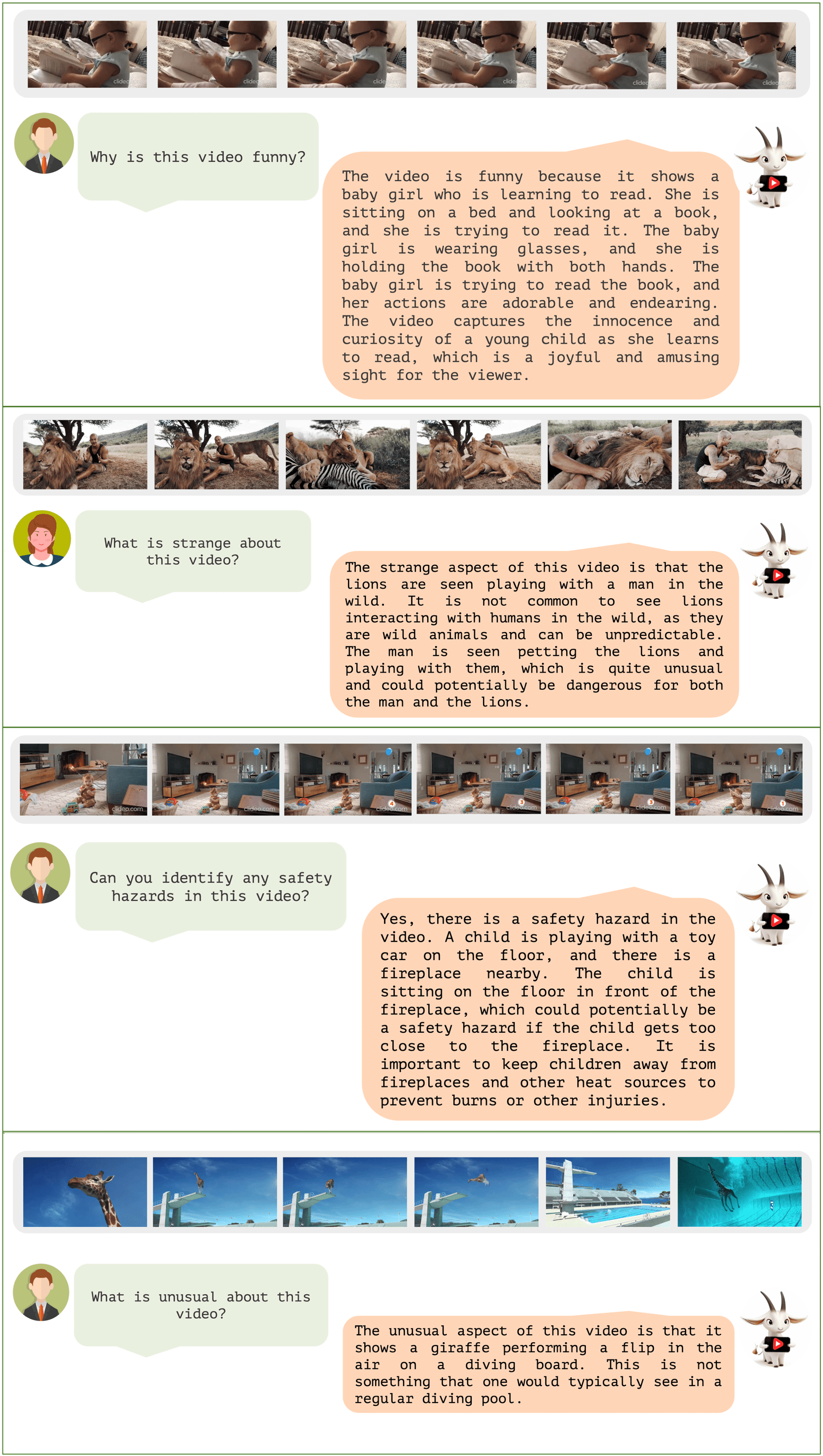

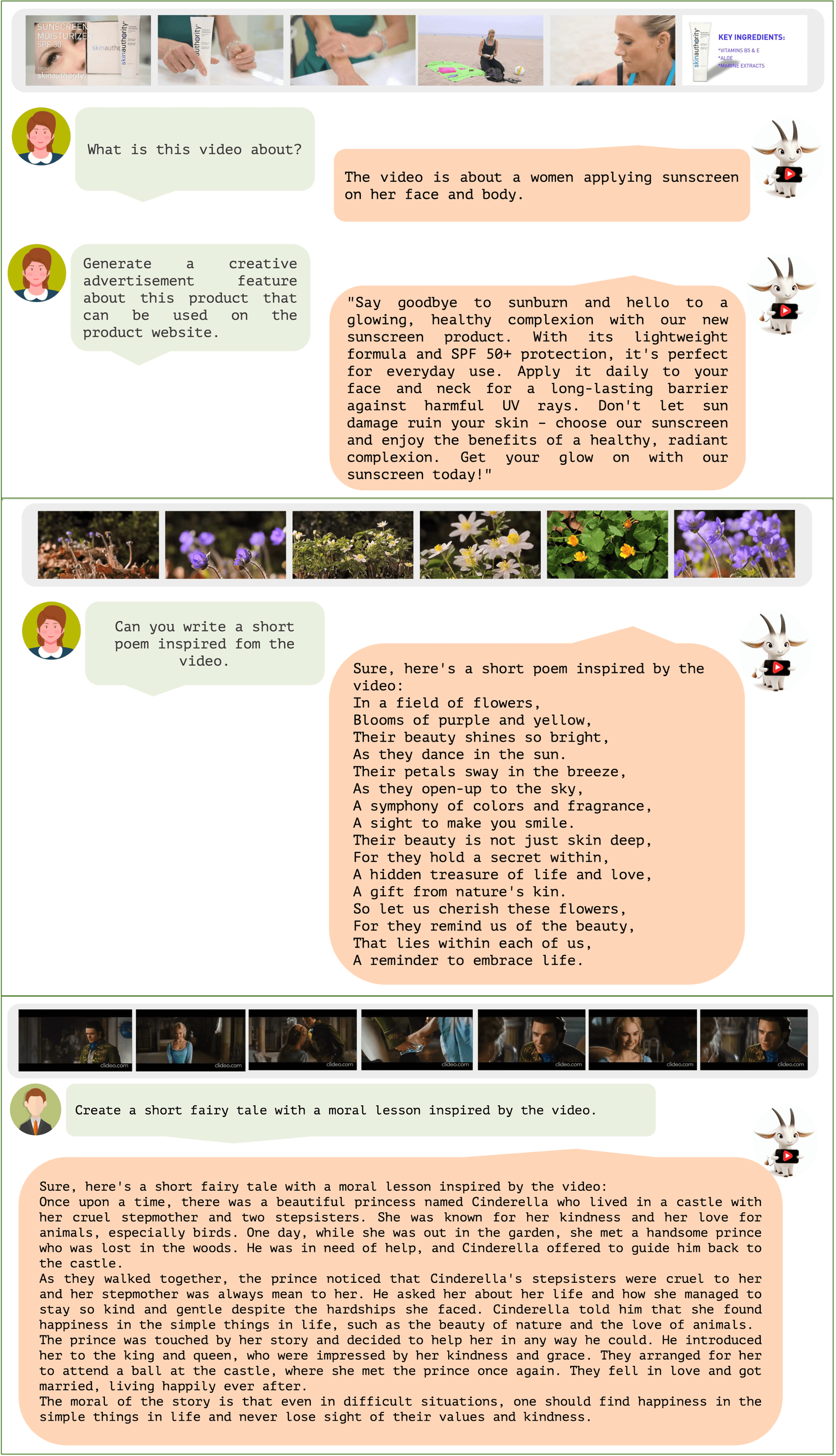

- A unique multimodal (vision-language) capability that combines video understanding and language generation, comprehensively evaluated through quantitative and qualitative comparisons on video reasoning, creativity, spatial and temporal understanding, and action recognition tasks.

We recommend setting up a conda environment for the project:

conda create --name=video_chatgpt python=3.10

conda activate video_chatgpt

git clone https://github.com/mbzuai-oryx/Video-ChatGPT.git

cd Video-ChatGPT

pip install -r requirements.txt

export PYTHONPATH="./:$PYTHONPATH"

Additionally, install FlashAttention for training:

pip install ninja

git clone https://github.com/HazyResearch/flash-attention.git

cd flash-attention

git checkout v1.0.7

python setup.py install

To run the demo offline, please refer to the instructions in offline_demo.md.
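Before moving on to the demo or training, it may help to confirm that the environment created above is the one actually in use. The following is a small, optional sketch rather than part of the project: `check_environment` is a hypothetical helper, and the importable module name `flash_attn` is an assumption about how the FlashAttention build exposes itself.

```python
import importlib.util
import sys
from typing import Dict, Iterable, Tuple


def check_environment(
    min_python: Tuple[int, int] = (3, 10),
    modules: Iterable[str] = ("flash_attn",),  # assumed module name
) -> Dict[str, bool]:
    """Report whether the interpreter version and the given modules are available."""
    report = {"python": sys.version_info[:2] >= tuple(min_python)}
    for name in modules:
        # find_spec returns None when a top-level module cannot be located.
        report[name] = importlib.util.find_spec(name) is not None
    return report


for item, ok in check_environment().items():
    print(f"{item}: {'ok' if ok else 'missing'}")
```

FlashAttention is only needed for training, so a `missing` result for it does not necessarily prevent running the demo.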

For training instructions, check out train_video_chatgpt.md.

We are releasing the dataset of 100,000 high-quality video instruction pairs that was used to train our Video-ChatGPT model. You can download the dataset from here. More details on our human-assisted and semi-automatic annotation framework for generating the data are available at VideoInstructionDataset.md.
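To give a rough idea of how video instruction pairs might be handled once downloaded, here is a minimal sketch that groups question-answer pairs by video. The field names `video_id`, `q`, and `a` and the toy records are assumptions made for illustration only; refer to VideoInstructionDataset.md for the actual schema.

```python
import json
from collections import defaultdict
from typing import Dict, List, Tuple

# Toy records invented for illustration; the field names are assumptions.
SAMPLE_JSON = """
[
  {"video_id": "video_001", "q": "What is the person doing?", "a": "They are slicing vegetables."},
  {"video_id": "video_001", "q": "Where does the video take place?", "a": "In a kitchen."},
  {"video_id": "video_002", "q": "What happens at the end?", "a": "The dog catches the ball."}
]
"""


def group_by_video(records: List[dict]) -> Dict[str, List[Tuple[str, str]]]:
    """Collect (question, answer) pairs under the video they refer to."""
    grouped = defaultdict(list)
    for record in records:
        grouped[record["video_id"]].append((record["q"], record["a"]))
    return dict(grouped)


records = json.loads(SAMPLE_JSON)
pairs_by_video = group_by_video(records)
for video_id, pairs in pairs_by_video.items():
    print(video_id, len(pairs))
```

Grouping by video keeps all instructions for one clip together, which is convenient when the video features only need to be loaded once per clip.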

Our paper introduces a new Quantitative Evaluation Framework for Video-based Conversational Models. To explore our benchmarks and understand the framework in greater detail, please visit our dedicated website: https://mbzuai-oryx.github.io/Video-ChatGPT.

For detailed instructions on performing quantitative evaluation, please refer to QuantitativeEvaluation.md.

Video-based Generative Performance Benchmarking and Zero-Shot Question-Answer Evaluation tables are provided for a detailed performance overview.

| Model | MSVD-QA Accuracy | MSVD-QA Score | MSRVTT-QA Accuracy | MSRVTT-QA Score | TGIF-QA Accuracy | TGIF-QA Score | ActivityNet-QA Accuracy | ActivityNet-QA Score |
|---|---|---|---|---|---|---|---|---|
| FrozenBiLM | 32.2 | - | 16.8 | - | 41.0 | - | 24.7 | - |
| Video Chat | 56.3 | 2.8 | 45.0 | 2.5 | 34.4 | 2.3 | 26.5 | 2.2 |
| LLaMA Adapter | 54.9 | 3.1 | 43.8 | 2.7 | - | - | 34.2 | 2.7 |
| Video LLaMA | 51.6 | 2.5 | 29.6 | 1.8 | - | - | 12.4 | 1.1 |
| Video-ChatGPT | 64.9 | 3.3 | 49.3 | 2.8 | 51.4 | 3.0 | 35.2 | 2.7 |
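The table reports an accuracy and a score for each dataset. As a hedged sketch of how such a pair of numbers could be aggregated, assume each evaluated answer has already been judged with a correct/incorrect flag and a numeric score. The `summarize_judgments` helper and the toy judgments are hypothetical; the project's actual procedure is described in QuantitativeEvaluation.md.

```python
from typing import Iterable, Tuple


def summarize_judgments(judgments: Iterable[Tuple[bool, float]]) -> Tuple[float, float]:
    """Return (accuracy in percent, mean score) over per-answer judgments.

    Each judgment is (is_correct, score). How the judgments are produced
    is outside the scope of this sketch.
    """
    items = list(judgments)
    if not items:
        raise ValueError("at least one judgment is required")
    accuracy = 100.0 * sum(1 for correct, _ in items if correct) / len(items)
    mean_score = sum(score for _, score in items) / len(items)
    return round(accuracy, 1), round(mean_score, 1)


# Toy judgments invented for illustration.
toy_judgments = [(True, 4), (True, 5), (False, 1), (True, 2)]
accuracy, score = summarize_judgments(toy_judgments)
print(f"Accuracy: {accuracy}  Score: {score}")
```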

| Evaluation Aspect | Video Chat | LLaMA Adapter | Video LLaMA | Video-ChatGPT |
|---|---|---|---|---|
| Correctness of Information | 2.23 | 2.03 | 1.96 | 2.40 |
| Detail Orientation | 2.50 | 2.32 | 2.18 | 2.52 |
| Contextual Understanding | 2.53 | 2.30 | 2.16 | 2.62 |
| Temporal Understanding | 1.94 | 1.98 | 1.82 | 1.98 |
| Consistency | 2.24 | 2.15 | 1.79 | 2.37 |

A Comprehensive Evaluation of Video-ChatGPT's Performance across Multiple Tasks.

- LLaMA: A great attempt towards open and efficient LLMs!

- Vicuna: Has amazing language capabilities!

- LLaVA: Our architecture is inspired by LLaVA.

- Thanks to our colleagues at MBZUAI for their essential contribution to the video annotation task, including Salman Khan, Fahad Khan, Abdelrahman Shaker, Shahina Kunhimon, Muhammad Uzair, Sanoojan Baliah, Malitha Gunawardhana, Akhtar Munir, Vishal Thengane, Vignagajan Vigneswaran, Jiale Cao, Nian Liu, Muhammad Ali, Gayal Kurrupu, Roba Al Majzoub, Jameel Hassan, Hanan Ghani, Muzammal Naseer, Akshay Dudhane, Jean Lahoud, Awais Rauf, Sahal Shaji, Bokang Jia, without whom this project would not have been possible.

If you're using Video-ChatGPT in your research or applications, please cite using this BibTeX:

@article{Maaz2023VideoChatGPT,
  title={Video-ChatGPT: Towards Detailed Video Understanding via Large Vision and Language Models},
  author={Muhammad Maaz and Hanoona Rasheed and Salman Khan and Fahad Khan},
  journal={ArXiv 2306.05424},
  year={2023}
}

This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

Looking forward to your feedback, contributions, and stars! 🌟 Please raise any issues or questions here.