Authors:

Semantically search through a database of videos using generated summaries.

Take a look at our poster!

- System Overview

- Video Summarization Overview

- Example output

- User Interface

- Set Up

- Training Captioning Network

- Plan

- Data Sets

- Citations

The video below shows exactly how the entire system works end to end.

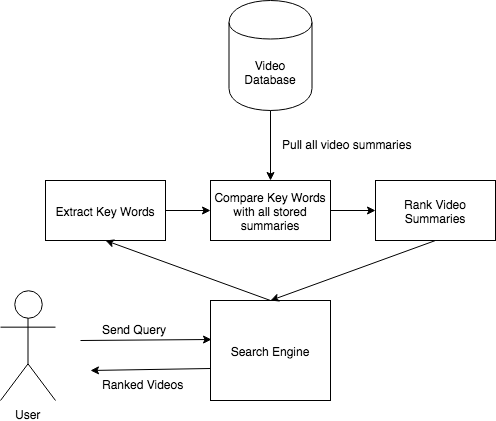

The diagram below gives an overview of the overall system architecture, including the user-facing system.

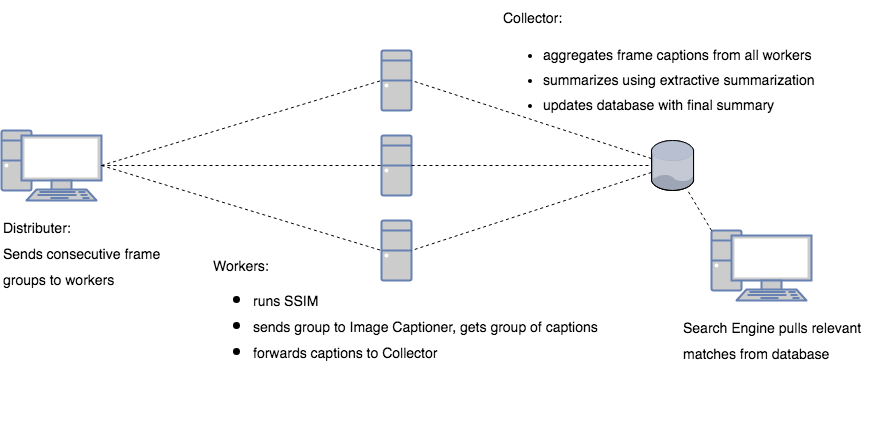

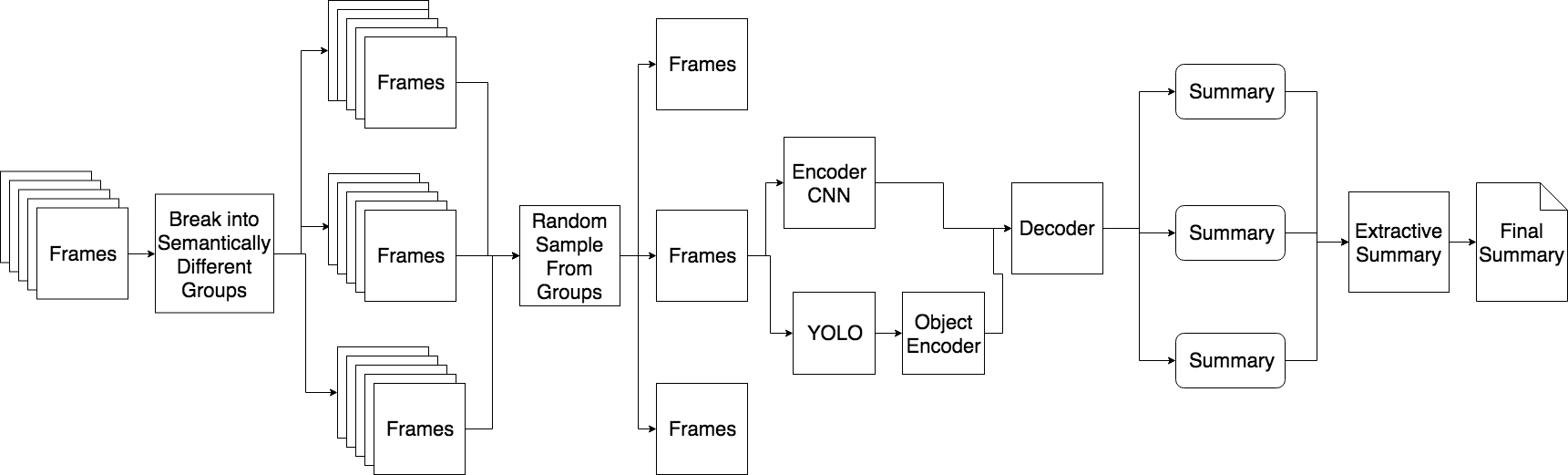

The backend and video summarization system were distributed to handle large videos. The architecture is shown in the image below.

In this project, we attempted to solve video summarization using image captioning. The architecture and motivation are explained in this section.

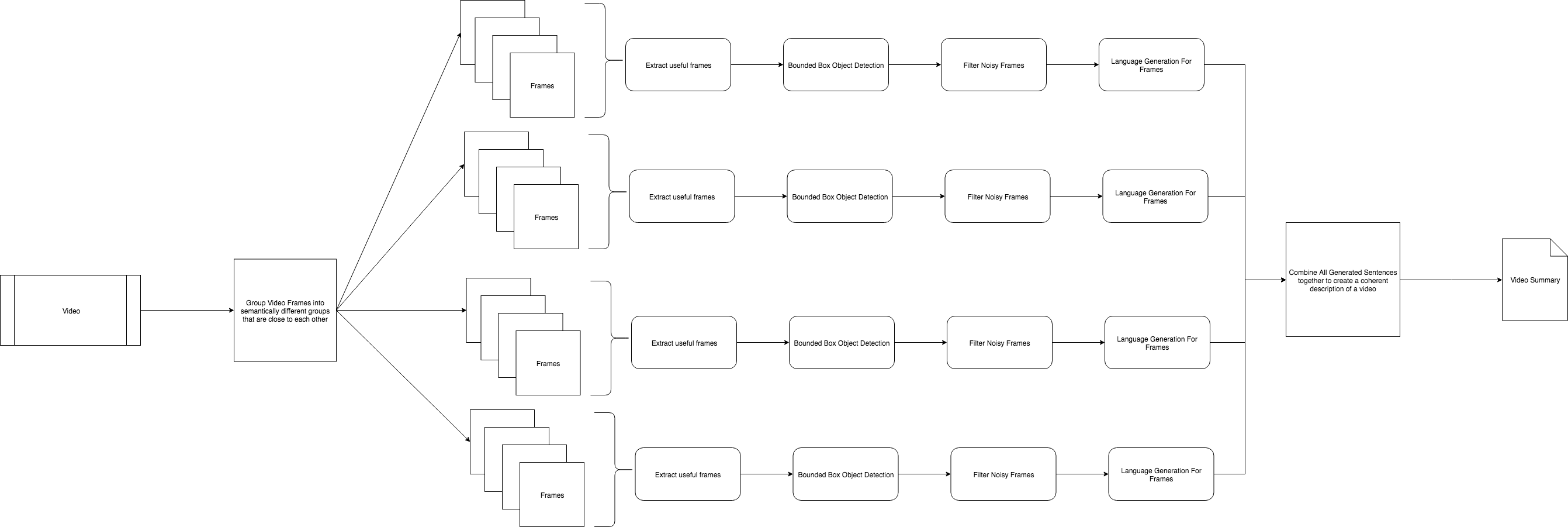

Below is the initial architecture of the video summarization network used to generate video summaries.

We converted this into the following network for the final project:

Here are the steps, with explanations:

- Break the frames apart into semantically different groups.
  - We use SSIM (structural similarity index measure) to determine whether two frames are similar, with a defined threshold for the comparison.
  - Any sequence of frames within that threshold belongs to the same group.
- Randomly sample from each group.
  - Since each group consists of semantically similar frames, we reduce redundancy in the frame captions by selecting a very small subset (1-5 frames) from each group.
- Feed each selected frame to an image captioning network to determine what happens in the frame.
  - This uses an encoder-decoder model for captioning images, as described in Object2Text.
  - Model description:
    - Encoder
      - EncoderCNN: uses a pretrained ResNet-152 to encode the frame's features into a feature vector.
      - YoloEncoder: performs bounding-box object detection on the frame to determine the objects and their bounding boxes. It uses an RNN (an LSTM in this model) to encode the sequence of objects and their names, and uses the result to create a second encoded feature vector.
    - Decoder: combines the two feature vectors from the EncoderCNN and the YoloEncoder into a new feature vector, and uses that vector as the input that starts language generation for the frame caption.
  - Training:
    - Dataset: COCO.
    - Bounding boxes: during training, we use TinyYOLO for faster training and to let the network learn from a less reliable detector; the more reliable version is used during testing.
- Use extractive summarization to select unique phrases from all the selected frame captions, creating a reasonable description of what occurred in the video.
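The grouping and sampling steps can be sketched in plain Python. This is a minimal, hypothetical sketch, not the project's code, and it rests on several assumptions. Frames are grayscale and flattened into lists of pixel intensities in [0, 255]. SSIM is computed over the whole frame as a single window, whereas standard SSIM (for example scikit-image's `structural_similarity`) averages over local windows. Each frame is compared with the frame immediately before it. The 0.9 threshold and the names `global_ssim`, `group_frames` and `sample_groups` are illustrative.

```python
import random
from typing import List, Optional, Sequence

# Assumption: a frame is a grayscale image flattened into pixel intensities in [0, 255].
Frame = Sequence[float]


def global_ssim(x: Frame, y: Frame, data_range: float = 255.0) -> float:
    """SSIM computed over the whole frame as one window (a simplification)."""
    n = len(x)
    mu_x = sum(x) / n
    mu_y = sum(y) / n
    var_x = sum((a - mu_x) ** 2 for a in x) / n
    var_y = sum((b - mu_y) ** 2 for b in y) / n
    cov_xy = sum((a - mu_x) * (b - mu_y) for a, b in zip(x, y)) / n
    # Stabilizing constants from the SSIM definition (K1 = 0.01, K2 = 0.03).
    c1 = (0.01 * data_range) ** 2
    c2 = (0.03 * data_range) ** 2
    return ((2 * mu_x * mu_y + c1) * (2 * cov_xy + c2)) / (
        (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    )


def group_frames(frames: List[Frame], threshold: float = 0.9) -> List[List[int]]:
    """Split consecutive frames into groups of frame indices.

    A frame joins the current group if its SSIM with the previous frame is at
    least `threshold`; otherwise it starts a new group.
    """
    if not frames:
        return []
    groups: List[List[int]] = [[0]]
    for i in range(1, len(frames)):
        if global_ssim(frames[i - 1], frames[i]) >= threshold:
            groups[-1].append(i)
        else:
            groups.append([i])
    return groups


def sample_groups(groups: List[List[int]], k: int = 1,
                  seed: Optional[int] = None) -> List[List[int]]:
    """Randomly pick up to k frame indices from each group (the README uses 1-5)."""
    rng = random.Random(seed)
    return [sorted(rng.sample(group, min(k, len(group)))) for group in groups]


if __name__ == "__main__":
    dark = [10.0, 20.0, 10.0, 20.0]
    bright = [200.0, 250.0, 250.0, 200.0]
    groups = group_frames([dark, dark, bright, bright])
    print(groups)  # [[0, 1], [2, 3]]
    print(sample_groups(groups, k=1, seed=0))  # one index per group
```

Comparing only adjacent frames keeps the cost linear in the number of frames. The trade-off is that a slow, gradual change can drift a long way within a single group. Comparing against the first frame of the current group would be an alternative.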
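The README does not say which extractive summarization method was used for the final step. The sketch below substitutes a deliberately simple stand-in: a greedy redundancy filter. It keeps each caption, in order, unless its word set overlaps too much (by Jaccard similarity) with a caption already kept. The 0.6 threshold and the function names are hypothetical.

```python
from typing import List, Set


def caption_tokens(caption: str) -> Set[str]:
    """Lowercased set of words, dropping punctuation tokens such as the trailing ' .'."""
    return {word for word in caption.lower().split() if word.isalnum()}


def jaccard(a: Set[str], b: Set[str]) -> float:
    """Jaccard similarity |a & b| / |a | b| of two word sets."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def extractive_summary(captions: List[str], max_overlap: float = 0.6) -> List[str]:
    """Keep captions, in order, whose overlap with every kept caption is below max_overlap."""
    kept: List[str] = []
    kept_tokens: List[Set[str]] = []
    for caption in captions:
        tokens = caption_tokens(caption)
        if all(jaccard(tokens, other) < max_overlap for other in kept_tokens):
            kept.append(caption)
            kept_tokens.append(tokens)
    return kept


if __name__ == "__main__":
    captions = [
        'a man riding a bike down a street next to a large truck .',
        'a man riding a bike down a street next to a traffic light .',
        'a green truck with a lot of cars on it',
        'a green truck with a lot of cars on the road .',
        'a city bus driving down a street next to a traffic light .',
    ]
    for line in extractive_summary(captions):
        print(line)  # keeps the first, third and fifth captions
```

Applied to the five captions from the Dhaka traffic example output, this filter keeps the first, third and fifth captions. The second caption overlaps the first with a Jaccard similarity of 8/12, and the fourth overlaps the third with 8/11, so both exceed the threshold and are dropped.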

The next section shows example output:

Given a minute-long video of traffic in Dhaka, Bangladesh, the system produces the following output:

(

'a man riding a bike down a street next to a large truck .',

'a man riding a bike down a street next to a traffic light .',

'a green truck with a lot of cars on it',

'a green truck with a lot of cars on the road .',

'a city bus driving down a street next to a traffic light .'

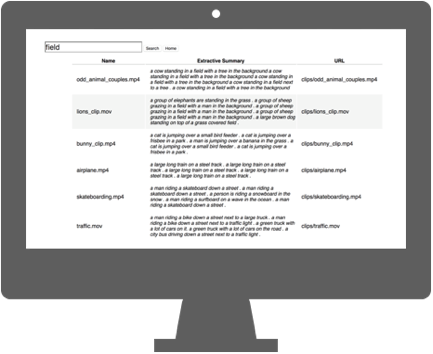

)To use our search engine we built a Flask based application similar to google to search through our database.

This page features the main search functionality, with a simple design similar to Google's.

This page shows all the results for a given query. Every video in our database is returned, sorted by relevance. We use TF-IDF scoring to rank each summary against the query.
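The ranking step can be sketched as follows. The README says only that TF-IDF scoring is used, so the exact variant here is an assumption. Each summary's score is the sum, over the query terms, of length-normalized term frequency times a smoothed IDF, `ln((1 + N) / (1 + df)) + 1`, which is the smoothing scikit-learn's `TfidfVectorizer` applies by default. This is a simple sum rather than cosine similarity between vectors. The function names are hypothetical.

```python
import math
from collections import Counter
from typing import Dict, List, Tuple


def tokenize(text: str) -> List[str]:
    """Lowercase and split on whitespace, dropping punctuation-only tokens."""
    return [word for word in text.lower().split() if word.isalnum()]


def rank_summaries(query: str, summaries: Dict[str, str]) -> List[Tuple[str, float]]:
    """Return every (video_id, score) pair, sorted from most to least relevant."""
    docs = {video_id: tokenize(text) for video_id, text in summaries.items()}
    n_docs = len(docs)
    # Document frequency: number of summaries containing each term.
    df = Counter(term for tokens in docs.values() for term in set(tokens))
    query_terms = set(tokenize(query))
    scores: Dict[str, float] = {}
    for video_id, tokens in docs.items():
        tf = Counter(tokens)
        score = 0.0
        for term in query_terms:
            if tf[term]:
                # Term frequency normalized by summary length, times a smoothed IDF.
                idf = math.log((1 + n_docs) / (1 + df[term])) + 1
                score += (tf[term] / len(tokens)) * idf
        scores[video_id] = score
    # Every video is returned, including those that score 0.
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    summaries = {
        "dhaka": "a man riding a bike down a street next to a large truck .",
        "kitchen": "a woman cutting vegetables in a kitchen .",
        "park": "a dog running in a park .",
    }
    for video_id, score in rank_summaries("bike truck", summaries):
        print(video_id, round(score, 3))
```

Normalizing by summary length stops long summaries from outranking short ones just because they contain more words. The IDF factor down-weights words such as "a" that appear in almost every summary.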

To set up the Python code, create a Python 3 environment with the following commands:

# create a virtual environment

$ python3 -m venv env

# activate environment

$ source env/bin/activate

# install all requirements

$ pip install -r requirements.txt

# install data files

$ python dataloader.py

If you add a new package, you will have to update requirements.txt with the following command:

# add new packages

$ pip freeze > requirements.txt

To deactivate the virtual environment:

# deactivate the virtual env

$ deactivate

To prepare the COCO images for training the captioning network, resize the training and validation images:

python VideoSearchEngine/ImageCaptioningNoYolo/resize.py --image_dir data/coco/train2014/

python VideoSearchEngine/ImageCaptioningNoYolo/resize.py --image_dir data/coco/val2014/ --output_dir data/val_resized2014

Broadly defined, our project attempts video search through video summarization. To do this, we propose the following objectives and action plan:

- Break videos down into semantically different groups of frames

- Recognize objects in an image (i.e. a frame)

- Convert a frame to text

- Merge summaries of all frames of a video into one large overall summary

- Build a search engine to query videos via summary.

Lots of labeled data for text generation of video summaries.

Paper describing how the data was collected, along with performance results.

The location of the video dataset: Source

Consists of labeled images for image captioning

Consists of action videos that can be used to test summaries.

The "MED Summaries" is a new dataset for evaluation of dynamic video summaries. It contains annotations of 160 videos: a validation set of 60 videos and a test set of 100 videos. There are 10 event categories in the test set.

- Microsoft Research Paper on Video Summarization

- YOLO Paper for bounding box object detection

- Using YOLO for image captioning

- Unsupervised Video Summarization with Adversarial Networks

- Long-term Recurrent Convolutional Networks

- Coherent Multi-Sentence Video Description with Variable Level of Detail