Finetune ALL LLMs with ALL Adapters on ALL Platforms!

| Model | LoRA | QLoRA | AdaLoRA | Prefix Tuning | P-Tuning | Prompt Tuning |
|---|---|---|---|---|---|---|
| Bloom | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ |
| LLaMA | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ |
| LLaMA2 | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ |
| ChatGLM | ✅ | ✅ | ✅ | ☑️ | ☑️ | ☑️ |
| ChatGLM2 | ✅ | ✅ | ✅ | ☑️ | ☑️ | ☑️ |
| Qwen | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ |
| Baichuan | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ |

You can finetune LLMs on:

- Windows

- Linux

- Mac M1/2

You can handle train/test data with:

- Terminal

- File

- Database

You can do various tasks:

- CausalLM (default)

- SequenceClassification

P.S. Unfortunately, SuperAdapters does not support QLoRA on Mac; please use LoRA or AdaLoRA instead.
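A launcher script could apply this rule by falling back from QLoRA when it detects macOS. The sketch below is only an illustration: `choose_adapter` is a hypothetical helper, not part of SuperAdapters, and it assumes that `platform.system()` returning `"Darwin"` is a good enough test for a Mac. The choice of `lora` as the fallback is also an assumption; `adalora` would satisfy the note equally well.

```python
import platform
from typing import Optional


def choose_adapter(requested: str, system: Optional[str] = None) -> str:
    """Return an adapter name that is usable on the given platform.

    `system` defaults to the current platform; pass it explicitly to test
    other platforms. Hypothetical helper, not part of SuperAdapters.
    """
    system = system or platform.system()
    # QLoRA is reported as unsupported on Mac, so fall back to plain LoRA.
    if system == "Darwin" and requested == "qlora":
        return "lora"
    return requested


if __name__ == "__main__":
    print(choose_adapter("qlora"))
```

The returned name could then be passed to `finetune.py` through `--adapter`.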

CentOS:

yum install -y xz-devel

Ubuntu:

apt-get install -y liblzma-dev

macOS:

brew install xz

If you want to use the GPU on Mac, please read How to use GPU on Mac

pip install --pre torch torchvision torchaudio --extra-index-url https://download.pytorch.org/whl/nightly/cpu
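After installing PyTorch, it can be useful to confirm which device is actually available. The following is a minimal sketch, not SuperAdapters code: `pick_device` is a hypothetical helper that prefers CUDA, then Apple's Metal backend (`mps`), then the CPU. It assumes a PyTorch version that exposes `torch.backends.mps`, and it falls back to `"cpu"` when PyTorch is missing or too old.

```python
def pick_device() -> str:
    """Return "cuda", "mps", or "cpu" depending on what PyTorch reports."""
    try:
        import torch
    except ImportError:
        # PyTorch is not installed; nothing but the CPU can be used.
        return "cpu"

    if torch.cuda.is_available():
        return "cuda"

    # torch.backends.mps only exists in newer PyTorch releases.
    mps = getattr(torch.backends, "mps", None)
    if mps is not None and mps.is_available():
        return "mps"

    return "cpu"


if __name__ == "__main__":
    print(pick_device())
```

On an Apple Silicon Mac with a suitable PyTorch build, this would be expected to report `mps`.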

pip install -r requirements.txt

python finetune.py --model_type chatglm --data "data/train/" --model_path "LLMs/chatglm/chatglm-6b/" --adapter "lora" --output_dir "output/chatglm"

python inference.py --model_type chatglm --instruction "Who are you?" --model_path "LLMs/chatglm/chatglm-6b/" --adapter_weights "output/chatglm" --max_new_tokens 32

python finetune.py --model_type llama --data "data/train/" --model_path "LLMs/open-llama/open-llama-3b/" --adapter "lora" --output_dir "output/llama"

python inference.py --model_type llama --instruction "Who are you?" --model_path "LLMs/open-llama/open-llama-3b" --adapter_weights "output/llama" --max_new_tokens 32

python finetune.py --model_type bloom --data "data/train/" --model_path "LLMs/bloom/bloomz-560m" --adapter "lora" --output_dir "output/bloom"

python inference.py --model_type bloom --instruction "Who are you?" --model_path "LLMs/bloom/bloomz-560m" --adapter_weights "output/bloom" --max_new_tokens 32

python finetune.py --model_type qwen --data "data/train/" --model_path "LLMs/Qwen/Qwen-7b-chat" --adapter "lora" --output_dir "output/Qwen"

python inference.py --model_type qwen --instruction "Who are you?" --model_path "LLMs/Qwen/Qwen-7b-chat" --adapter_weights "output/Qwen" --max_new_tokens 32

python finetune.py --model_type baichuan --data "data/train/" --model_path "LLMs/baichuan/baichuan-7b" --adapter "lora" --output_dir "output/baichuan"

python inference.py --model_type baichuan --instruction "Who are you?" --model_path "LLMs/baichuan/baichuan-7b" --adapter_weights "output/baichuan" --max_new_tokens 32

You need to specify task_type ('classify') and labels.
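In the classification commands that follow, `--labels` is written as a JSON-style list inside one quoted string. How SuperAdapters parses this value internally is not shown here, so the sketch below is an assumption: `parse_labels` is a hypothetical helper that decodes the string with `json.loads`, validates it, and builds a label-to-index mapping of the kind a sequence classification head typically needs.

```python
import json
from typing import Dict, List


def parse_labels(raw: str) -> List[str]:
    """Decode a --labels style string such as '["0", "1"]' into a list."""
    labels = json.loads(raw)
    if not isinstance(labels, list) or not all(isinstance(x, str) for x in labels):
        raise ValueError("labels must be a JSON list of strings")
    if len(set(labels)) != len(labels):
        raise ValueError("labels must be unique")
    return labels


def build_label2id(labels: List[str]) -> Dict[str, int]:
    """Map each label to its position in the list."""
    return {label: index for index, label in enumerate(labels)}


if __name__ == "__main__":
    print(build_label2id(parse_labels('["0", "1"]')))
```

Quoting the whole list in single quotes on the command line keeps the shell from stripping the inner double quotes.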

python finetune.py --model_type llama --data "data/train/alpaca_tiny_classify.json" --model_path "LLMs/open-llama/open-llama-3b" --adapter "lora" --output_dir "output/llama" --task_type classify --labels '["0", "1"]' --disable_wandb

python inference.py --model_type llama --data "data/train/alpaca_tiny_classify.json" --model_path "LLMs/open-llama/open-llama-3b" --adapter_weights "output/llama" --task_type classify --labels '["0", "1"]' --disable_wandb

- You need to install MySQL and put the database config into the system environment variables.

E.g.

export LLM_DB_HOST='127.0.0.1'

export LLM_DB_PORT=3306

export LLM_DB_USERNAME='YOURUSERNAME'

export LLM_DB_PASSWORD='YOURPASSWORD'

export LLM_DB_NAME='YOURDBNAME'
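The variables exported above can be collected into a single connection config before opening a MySQL connection. This is a minimal sketch rather than the project's own loader: `load_db_config` and the keys of the returned dictionary are hypothetical, and the default port of 3306 is an assumption taken from the example values.

```python
import os
from typing import Dict, Mapping, Union

REQUIRED_KEYS = ("LLM_DB_HOST", "LLM_DB_USERNAME", "LLM_DB_PASSWORD", "LLM_DB_NAME")


def load_db_config(env: Mapping[str, str] = os.environ) -> Dict[str, Union[str, int]]:
    """Build a connection config from the LLM_DB_* environment variables."""
    missing = [key for key in REQUIRED_KEYS if not env.get(key)]
    if missing:
        raise RuntimeError("missing environment variables: " + ", ".join(missing))
    return {
        "host": env["LLM_DB_HOST"],
        # Environment values are strings, so the port is converted explicitly.
        "port": int(env.get("LLM_DB_PORT", "3306")),
        "user": env["LLM_DB_USERNAME"],
        "password": env["LLM_DB_PASSWORD"],
        "database": env["LLM_DB_NAME"],
    }


if __name__ == "__main__":
    example_env = {
        "LLM_DB_HOST": "127.0.0.1",
        "LLM_DB_PORT": "3306",
        "LLM_DB_USERNAME": "YOURUSERNAME",
        "LLM_DB_PASSWORD": "YOURPASSWORD",
        "LLM_DB_NAME": "YOURDBNAME",
    }
    print(load_db_config(example_env))
```

Failing early on a missing variable gives a clearer error than a connection failure later in the run.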

- create the necessary tables

source xxxx.sql

- db_iteration: [train/test] The record's set name.

- db_type: [test] Whether the record is "train" or "test".

- db_test_iteration: [test] The record's test set name.

- finetune (use chatglm for example)

python finetune.py --model_type chatglm --fromdb --db_iteration xxxxxx --model_path "LLMs/chatglm/chatglm-6b/" --adapter "lora" --output_dir "output/chatglm" --disable_wandb

- eval

python inference.py --model_type chatglm --fromdb --db_iteration xxxxxx --db_type 'test' --db_test_iteration yyyyyyy --model_path "LLMs/chatglm/chatglm-6b/" --adapter_weights "output/chatglm" --max_new_tokens 6

usage: finetune.py [-h] [--data DATA] [--model_type {llama,chatglm,chatglm2,bloom}] [--task_type {seq2seq,classify}] [--labels LABELS] [--model_path MODEL_PATH] [--output_dir OUTPUT_DIR] [--disable_wandb]

[--adapter {lora,adalora,prompt,p_tuning,prefix}] [--lora_r LORA_R] [--lora_alpha LORA_ALPHA] [--lora_dropout LORA_DROPOUT]

[--lora_target_modules LORA_TARGET_MODULES [LORA_TARGET_MODULES ...]] [--adalora_init_r ADALORA_INIT_R] [--adalora_tinit ADALORA_TINIT] [--adalora_tfinal ADALORA_TFINAL]

[--adalora_delta_t ADALORA_DELTA_T] [--num_virtual_tokens NUM_VIRTUAL_TOKENS] [--mapping_hidden_dim MAPPING_HIDDEN_DIM] [--epochs EPOCHS] [--learning_rate LEARNING_RATE]

[--cutoff_len CUTOFF_LEN] [--val_set_size VAL_SET_SIZE] [--group_by_length] [--logging_steps LOGGING_STEPS] [--load_8bit] [--add_eos_token]

[--resume_from_checkpoint [RESUME_FROM_CHECKPOINT]] [--per_gpu_train_batch_size PER_GPU_TRAIN_BATCH_SIZE] [--gradient_accumulation_steps GRADIENT_ACCUMULATION_STEPS] [--fromdb]

[--db_iteration DB_ITERATION]

Finetune for all.

optional arguments:

-h, --help show this help message and exit

--data DATA the data used for instructing tuning

--model_type {llama,chatglm,chatglm2,bloom,qwen}

--task_type {seq2seq,classify}

--labels LABELS Labels to classify, only used when task_type is classify

--model_path MODEL_PATH

--output_dir OUTPUT_DIR

The DIR to save the model

--disable_wandb Disable report to wandb

--adapter {lora,adalora,prompt,p_tuning,prefix}

--lora_r LORA_R

--lora_alpha LORA_ALPHA

--lora_dropout LORA_DROPOUT

--lora_target_modules LORA_TARGET_MODULES [LORA_TARGET_MODULES ...]

the module to be injected, e.g. q_proj/v_proj/k_proj/o_proj for llama, query_key_value for bloom&GLM

--adalora_init_r ADALORA_INIT_R

--adalora_tinit ADALORA_TINIT

number of warmup steps for AdaLoRA wherein no pruning is performed

--adalora_tfinal ADALORA_TFINAL

fix the resulting budget distribution and fine-tune the model for tfinal steps when using AdaLoRA

--adalora_delta_t ADALORA_DELTA_T

interval of steps for AdaLoRA to update rank

--num_virtual_tokens NUM_VIRTUAL_TOKENS

--mapping_hidden_dim MAPPING_HIDDEN_DIM

--epochs EPOCHS

--learning_rate LEARNING_RATE

--cutoff_len CUTOFF_LEN

--val_set_size VAL_SET_SIZE

--group_by_length

--logging_steps LOGGING_STEPS

--load_8bit

--add_eos_token

--resume_from_checkpoint [RESUME_FROM_CHECKPOINT]

resume from the specified or the latest checkpoint, e.g. `--resume_from_checkpoint [path]` or `--resume_from_checkpoint`

--per_gpu_train_batch_size PER_GPU_TRAIN_BATCH_SIZE

Batch size per GPU/CPU for training.

--gradient_accumulation_steps GRADIENT_ACCUMULATION_STEPS

--fromdb

--db_iteration DB_ITERATION

The record's set name.

usage: inference.py [-h] [--instruction INSTRUCTION] [--input INPUT] [--data DATA] [--model_type {llama,chatglm,chatglm2,bloom}] [--task_type {seq2seq,classify}] [--labels LABELS] [--model_path MODEL_PATH]

[--adapter_weights ADAPTER_WEIGHTS] [--load_8bit] [--temperature TEMPERATURE] [--top_p TOP_P] [--top_k TOP_K] [--max_new_tokens MAX_NEW_TOKENS] [--fromdb] [--db_type DB_TYPE]

[--db_iteration DB_ITERATION] [--db_test_iteration DB_TEST_ITERATION]

Inference for all.

optional arguments:

-h, --help show this help message and exit

--debug Debug Mode to output detail info

--instruction INSTRUCTION

--input INPUT

--data DATA The DIR of test data

--model_type {llama,chatglm,chatglm2,bloom,qwen}

--task_type {seq2seq,classify}

--labels LABELS Labels to classify, only used when task_type is classify

--model_path MODEL_PATH

--adapter_weights ADAPTER_WEIGHTS

The DIR of adapter weights

--load_8bit

--temperature TEMPERATURE

temperature higher, LLM is more creative

--top_p TOP_P

--top_k TOP_K

--max_new_tokens MAX_NEW_TOKENS

--fromdb

--db_type DB_TYPE The record is whether 'train' or 'test'.

--db_iteration DB_ITERATION

The record's set name.

--db_test_iteration DB_TEST_ITERATION

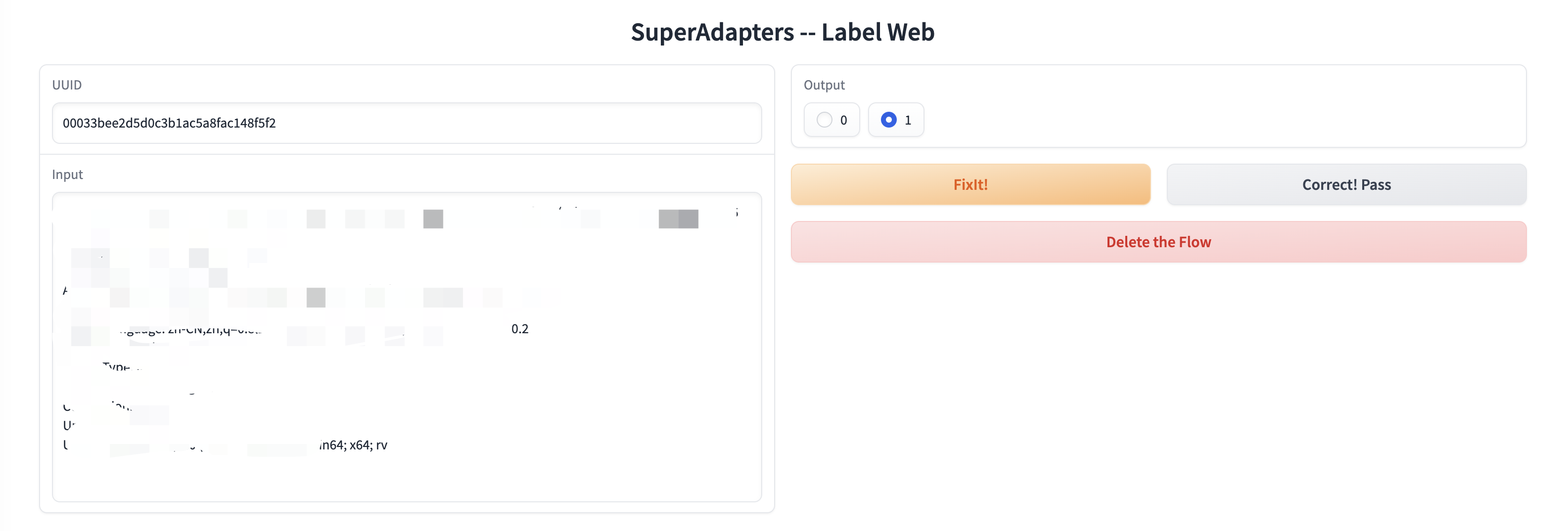

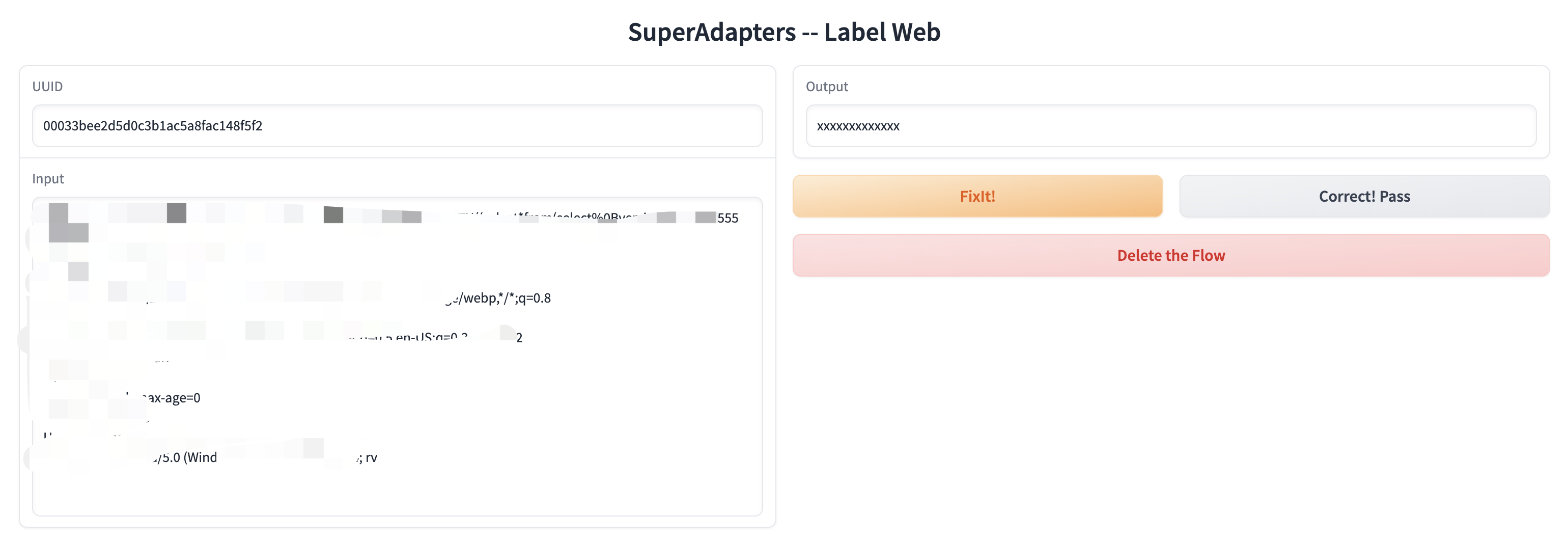

The record's test set name.python web/label.pypython web/label.py --type chat