This is an unofficial PyTorch implementation of DeepLab v2 [1] with a ResNet-101 backbone. The COCO-Stuff dataset [2] and the PASCAL VOC dataset [3] are supported. The initial weights (.caffemodel) officially provided by the authors can be converted and used without building the Caffe API. DeepLab v3/v3+ models with the identical backbone are also included (although not tested). torch.hub is supported.

Pretrained models are provided for each training set. Note that the 2D interpolation methods differ from the original, which leads to slightly better results.

| Train set | Eval set | CRF? | Code | Pixel Accuracy | Mean Accuracy | Mean IoU | FreqW IoU |
|---|---|---|---|---|---|---|---|
| 10k train † (Model) | 10k val † | | Original [2] | 65.1 | 45.5 | 34.4 | 50.4 |
| 10k train † | 10k val † | | Ours | 65.8 | 45.7 | 34.8 | 51.2 |
| 10k train † | 10k val † | ✓ | Ours | 67.1 | 46.4 | 35.6 | 52.5 |
| 164k train (Model) | 10k val | | Ours | 68.4 | 55.6 | 44.2 | 55.1 |
| 164k train | 10k val | ✓ | Ours | 69.2 | 55.9 | 45.0 | 55.9 |
| 164k train | 164k val | | Ours | 66.8 | 51.2 | 39.1 | 51.5 |
| 164k train | 164k val | ✓ | Ours | 67.6 | 51.5 | 39.7 | 52.3 |

† Images and labels are pre-warped to square-shape 513x513

| Train set | Eval set | CRF? | Code | Pixel Accuracy | Mean Accuracy | Mean IoU | FreqW IoU |
|---|---|---|---|---|---|---|---|
| trainaug (Model) | val | | Original [3] | - | - | 76.35 | - |
| trainaug | val | | Ours | 94.64 | 86.50 | 76.65 | 90.41 |
| trainaug | val | ✓ | Original [3] | - | - | 77.69 | - |
| trainaug | val | ✓ | Ours | 95.04 | 86.64 | 77.93 | 91.06 |

- Python 2.7+/3.6+

- Anaconda environment

Then set up the environment from conda_env.yaml. Please modify the CUDA option as needed (default: cudatoolkit=10.0).

$ conda env create -f configs/conda_env.yaml

$ conda activate deeplab-pytorch

Setup instructions are provided in each link.

- Run the script below to download caffemodel pre-trained on ImageNet and 91-class COCO (1GB+).

$ bash scripts/setup_caffemodels.sh

- Convert the caffemodel to a PyTorch-compatible format. No need to build the official Caffe API!

# This generates "deeplabv1_resnet101-coco.pth" from "init.caffemodel"

$ python convert.py --dataset coco

Please see ./scripts/train_eval.sh for example usage.

Usage: main.py train [OPTIONS]

Training DeepLab by v2 protocol

Options:

-c, --config-path FILENAME Dataset configuration file in YAML [required]

--cuda / --cpu Enable CUDA if available [default: --cuda]

--help Show this message and exit.

To monitor the loss, learning rate, and GPU usage:

$ tensorboard --logdir data/logs

Common settings:

- Model: DeepLab v2 with ResNet-101 backbone. Dilation rates of ASPP are (6, 12, 18, 24). Output stride is 8.

- Multi-GPU: All the GPUs visible to the process are used. Please specify the scope with `CUDA_VISIBLE_DEVICES=`.

- Multi-scale loss: Loss is defined as a sum of responses from multi-scale inputs (1x, 0.75x, 0.5x) and element-wise max across the scales. The unlabeled class is ignored in the loss computation.

- Gradient accumulation: The mini-batch of 10 samples is not processed at once due to the high GPU memory occupancy. Instead, gradients of small batches of 5 samples are accumulated for 2 iterations, and the weight update is performed at the end (`batch_size * iter_size = 10`). GPU memory usage is approx. 11.2 GB with the default setting (tested on a single Titan X). You can reduce it with a smaller `batch_size`.

- Learning rate: Stochastic gradient descent (SGD) is used with momentum of 0.9 and initial learning rate of 2.5e-4. Polynomial learning rate decay is employed; the learning rate is multiplied by `(1-iter/iter_max)**power` at every 10 iterations.

- Monitoring: Moving average loss (`average_loss` in Caffe) can be monitored in TensorBoard.
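The gradient accumulation and learning rate settings above can be sketched in plain Python. This is a minimal illustration, not the repository's training loop: the toy one-parameter model, the helper names `poly_lr`, `grad_mse`, and `accumulated_grad`, and the value `power=0.9` are assumptions introduced here. Only the small-batch size of 5, the 2 accumulation steps, the base rate 2.5e-4, and the 10-iteration refresh come from the list.

```python
def poly_lr(base_lr, iteration, iter_max, power=0.9, step=10):
    """Polynomial decay whose value is refreshed only every `step` iterations.

    power=0.9 is an assumed value; the settings above do not state it.
    """
    held = iteration - iteration % step  # last iteration at which the lr was refreshed
    return base_lr * (1 - held / iter_max) ** power


def grad_mse(w, batch):
    """Gradient w.r.t. w of the mean squared error of y = w * x over a batch."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)


def accumulated_grad(w, samples, batch_size=5, iter_size=2):
    """Accumulate gradients of `iter_size` small batches before one update.

    Each small-batch gradient is divided by iter_size so that the sum matches
    the gradient of a single batch of batch_size * iter_size samples.
    """
    total = 0.0
    for k in range(iter_size):
        small = samples[k * batch_size:(k + 1) * batch_size]
        total += grad_mse(w, small) / iter_size
    return total


# Toy data: 10 samples of y = 3x, so batch_size * iter_size = 10.
samples = [(float(x), 3.0 * x) for x in range(1, 11)]
w = 0.0
for iteration in range(20):
    lr = poly_lr(2.5e-4, iteration, iter_max=20)
    w -= lr * accumulated_grad(w, samples)  # one weight update per 2 small batches
```

Dividing each small-batch gradient by `iter_size` is one common way to keep the accumulated update equal to that of the full mini-batch, so memory usage drops while the effective batch size stays at 10.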

COCO-Stuff 164k:

- #Iterations: 100k weight updates.

- #Classes: The label indices range from 0 to 181 and the model outputs a 182-dim categorical distribution, but only 171 classes are supervised with COCO-Stuff. Index 255 is an unlabeled class to be ignored.

- Preprocessing: (1) Input images are randomly re-scaled by factors ranging from 0.5 to 1.5, (2) padded if needed, and (3) randomly cropped to 321x321.
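The role of index 255 in the class settings above can be illustrated with a per-pixel cross-entropy that skips unlabeled pixels. The sketch below works on plain Python lists and is not the repository's loss code; the function name `cross_entropy_ignore` and the toy 3-class logits are made up for illustration. In PyTorch the same effect is usually obtained with the `ignore_index` argument of `torch.nn.CrossEntropyLoss`.

```python
import math

IGNORE_INDEX = 255  # unlabeled pixels carry this value in the label map


def cross_entropy_ignore(logits, labels, ignore_index=IGNORE_INDEX):
    """Mean cross-entropy over labeled pixels only.

    logits: list of per-pixel score lists (one score per class)
    labels: list of per-pixel class indices
    """
    total, count = 0.0, 0
    for scores, label in zip(logits, labels):
        if label == ignore_index:
            continue  # unlabeled pixel: contributes nothing to the loss
        peak = max(scores)  # subtract the max for numerical stability
        log_sum = peak + math.log(sum(math.exp(s - peak) for s in scores))
        total += log_sum - scores[label]
        count += 1
    return total / count if count else 0.0


logits = [[2.0, 0.5, 0.1], [0.2, 1.5, 0.3], [9.0, 9.0, 9.0]]
labels = [0, 1, 255]  # the third pixel is unlabeled
loss = cross_entropy_ignore(logits, labels)
```

Whatever the model predicts for the third pixel, `loss` depends only on the first two.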

COCO-Stuff 10k:

- #Iterations: 20k weight updates.

- #Classes: Same as the 164k version above.

- Preprocessing: (1) Input images are initially warped to 513x513 squares, (2) randomly re-scaled by factors ranging from 0.5 to 1.5, (3) padded if needed, and (4) randomly cropped to 321x321 so that the input size is fixed during training.

PASCAL VOC 2012:

- #Iterations: 20k weight updates.

- #Classes: 20 foreground objects + 1 background. Index 255 is an unlabeled class to be ignored.

- Preprocessing: (1) Input images are randomly re-scaled by factors ranging from 0.5 to 1.5, (2) padded if needed, and (3) randomly cropped to 321x321.
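The rescale, pad, and crop steps in the preprocessing settings above can be sketched as pure geometry. This is a hedged illustration: the function name `sample_augmentation` and its return format are hypothetical, and only sizes and offsets are computed, not pixel data.

```python
import random

CROP_SIZE = 321


def sample_augmentation(height, width, rng, crop_size=CROP_SIZE,
                        scale_range=(0.5, 1.5)):
    """Return the geometry of one random rescale / pad / crop.

    Applying the result to the image and its label map is left out.
    """
    scale = rng.uniform(*scale_range)
    new_h, new_w = round(height * scale), round(width * scale)
    # Pad so that both sides are at least crop_size.
    pad_h, pad_w = max(crop_size - new_h, 0), max(crop_size - new_w, 0)
    full_h, full_w = new_h + pad_h, new_w + pad_w
    # Random top-left corner of the crop window (randint is inclusive).
    top = rng.randint(0, full_h - crop_size)
    left = rng.randint(0, full_w - crop_size)
    return {"scale": scale, "scaled": (new_h, new_w), "pad": (pad_h, pad_w),
            "crop": (top, left, top + crop_size, left + crop_size)}


rng = random.Random(0)
# A 513x513 input corresponds to the pre-warped COCO-Stuff 10k case.
geometry = sample_augmentation(513, 513, rng)
```

Because padding guarantees a minimum side of 321, the crop window always fits, which is what keeps the input size fixed during training.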

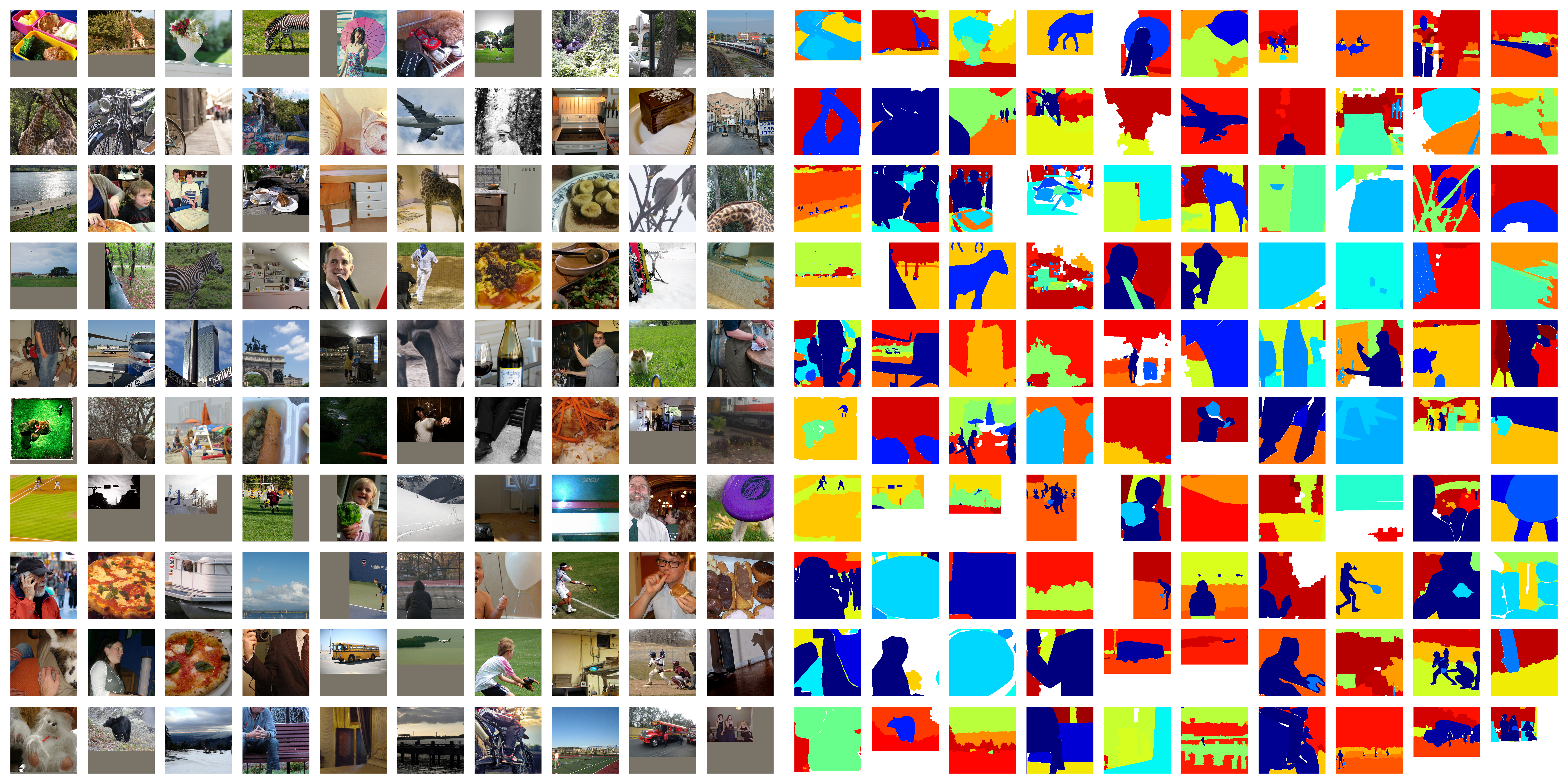

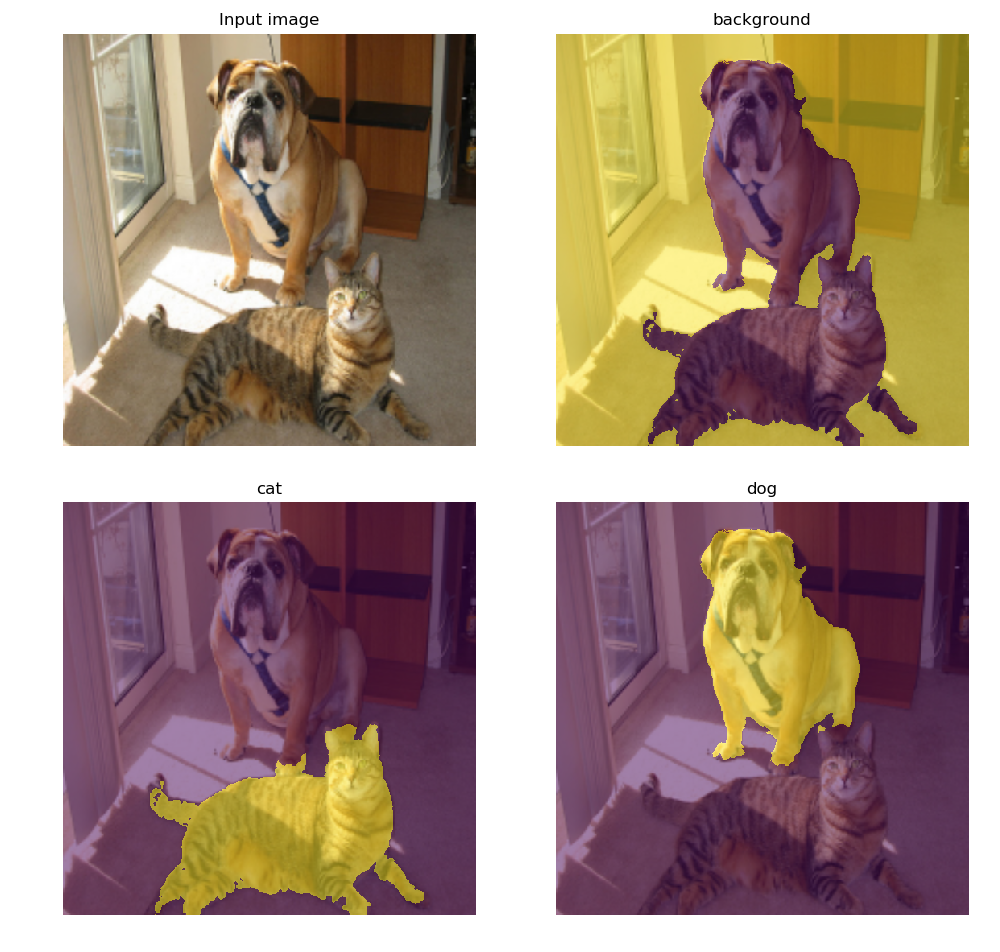

Processed image vs. label examples in COCO-Stuff:

To compute scores in:

- Pixel accuracy

- Mean accuracy

- Mean IoU

- Frequency weighted IoU

Usage: main.py test [OPTIONS]

Evaluation on validation set

Options:

-c, --config-path FILENAME Dataset configuration file in YAML [required]

-m, --model-path PATH PyTorch model to be loaded [required]

--cuda / --cpu Enable CUDA if available [default: --cuda]

--help Show this message and exit.
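For reference, the four scores can be derived from a confusion matrix. The sketch below assumes the common confusion-matrix definitions of these metrics and is not taken from the repository; the function names are hypothetical, and skipping classes that never occur is one convention among several.

```python
def confusion_matrix(gt, pred, n_classes, ignore_index=255):
    """hist[i][j] = number of pixels of class i that were predicted as class j."""
    hist = [[0] * n_classes for _ in range(n_classes)]
    for g, p in zip(gt, pred):
        if g != ignore_index:  # unlabeled pixels are not evaluated
            hist[g][p] += 1
    return hist


def scores(hist):
    """Compute the four segmentation scores from a confusion matrix."""
    n = len(hist)
    total = sum(sum(row) for row in hist)
    gt_count = [sum(hist[i]) for i in range(n)]
    pred_count = [sum(hist[j][i] for j in range(n)) for i in range(n)]
    correct = [hist[i][i] for i in range(n)]
    # Per-class accuracy, only for classes present in the ground truth.
    acc = [correct[i] / gt_count[i] for i in range(n) if gt_count[i] > 0]
    # Per-class IoU, only for classes with a non-empty union.
    iou = {}
    for i in range(n):
        union = gt_count[i] + pred_count[i] - correct[i]
        if union > 0:
            iou[i] = correct[i] / union
    return {
        "Pixel Accuracy": sum(correct) / total,
        "Mean Accuracy": sum(acc) / len(acc),
        "Mean IoU": sum(iou.values()) / len(iou),
        "FreqW IoU": sum(gt_count[i] / total * iou[i] for i in iou),
    }


gt = [0, 0, 1, 1, 255, 2]
pred = [0, 1, 1, 1, 0, 2]
result = scores(confusion_matrix(gt, pred, n_classes=3))
```

Frequency weighted IoU weights each class IoU by its share of ground-truth pixels, so frequent classes dominate it, whereas mean IoU treats all classes equally.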

To perform CRF post-processing:

Usage: main.py crf [OPTIONS]

CRF post-processing on pre-computed logits

Options:

-c, --config-path FILENAME Dataset configuration file in YAML [required]

-j, --n-jobs INTEGER Number of parallel jobs [default: # cores]

--help Show this message and exit.

| COCO-Stuff 164k | COCO-Stuff 10k | PASCAL VOC 2012 | Pretrained COCO |
|---|---|---|---|
| | | | |

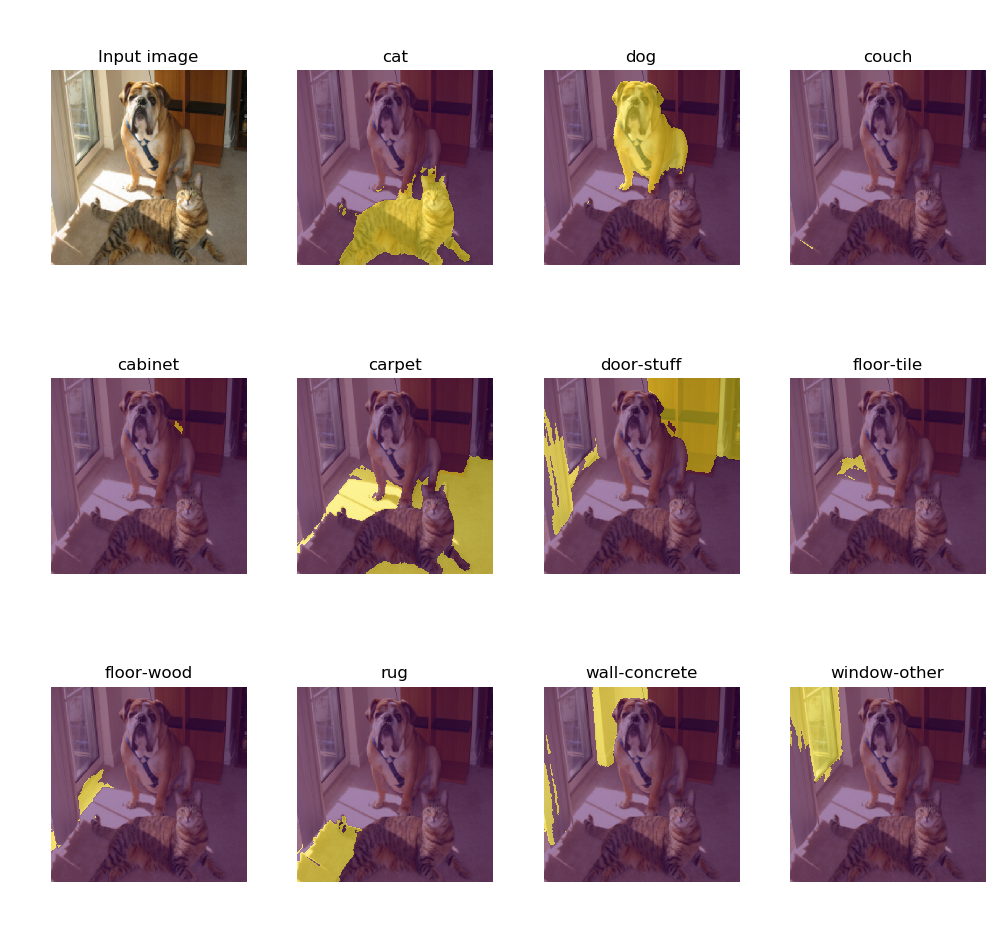

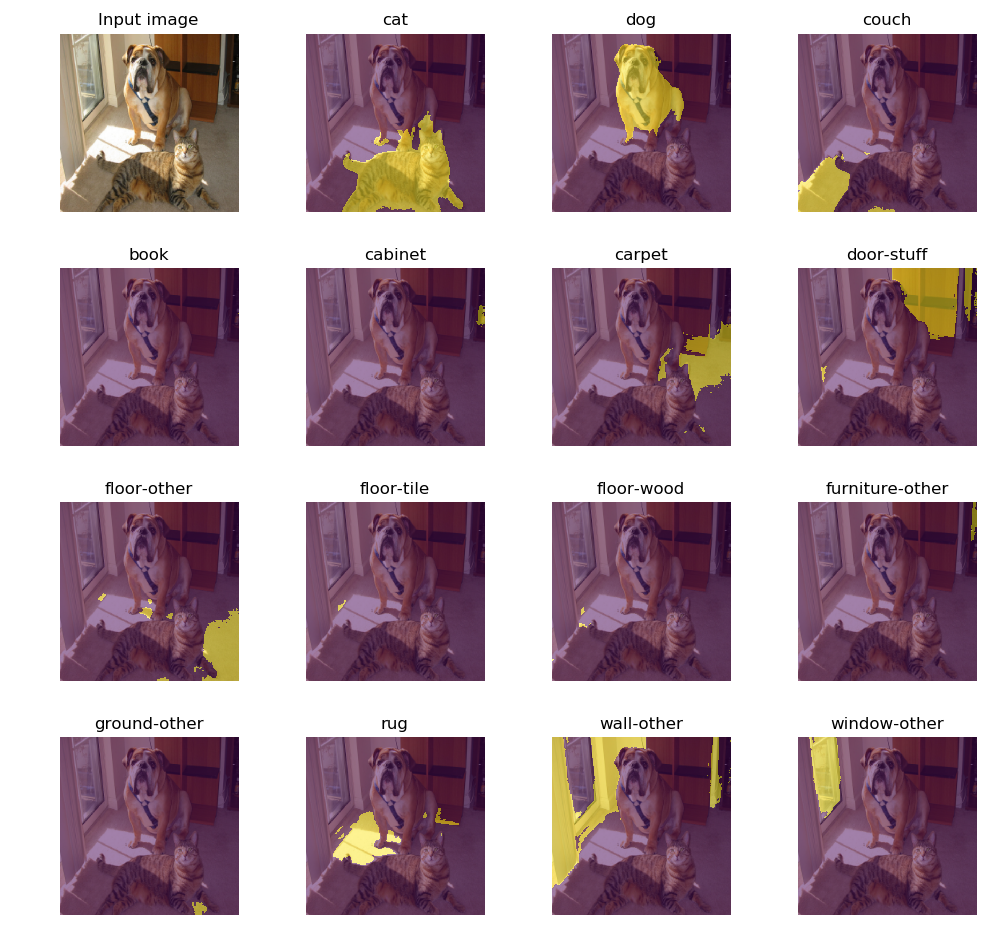

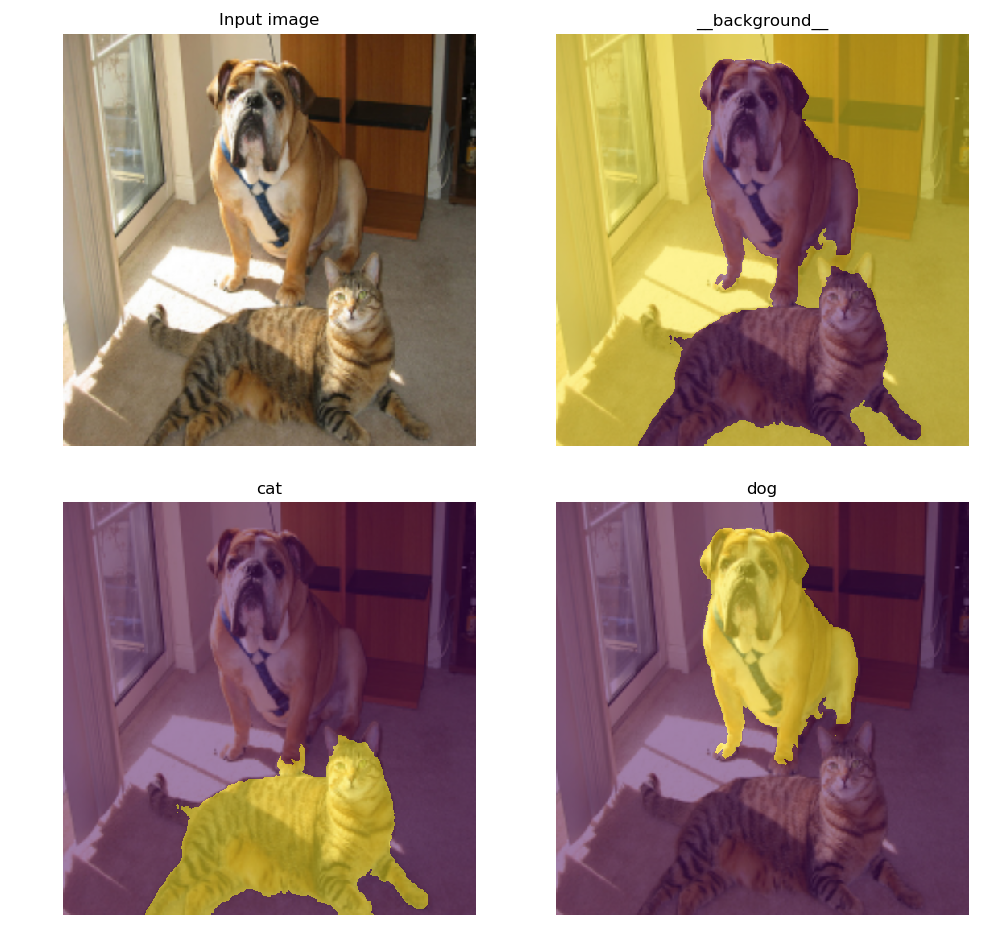

Usage: demo.py single [OPTIONS]

Inference from a single image

Options:

-c, --config-path FILENAME Dataset configuration file in YAML [required]

-m, --model-path PATH PyTorch model to be loaded [required]

-i, --image-path PATH Image to be processed [required]

--cuda / --cpu Enable CUDA if available [default: --cuda]

--crf CRF post-processing [default: False]

--help Show this message and exit.

The class of the pixel under the mouse cursor is shown in the terminal.

Usage: demo.py live [OPTIONS]

Inference from camera stream

Options:

-c, --config-path FILENAME Dataset configuration file in YAML [required]

-m, --model-path PATH PyTorch model to be loaded [required]

--cuda / --cpu Enable CUDA if available [default: --cuda]

--crf CRF post-processing [default: False]

--camera-id INTEGER Device ID [default: 0]

--help Show this message and exit.

Model setup with 3 lines.

import torch.hub

model = torch.hub.load("kazuto1011/deeplab-pytorch", "deeplabv2_resnet101", n_classes=182)

model.load_state_dict(torch.load("deeplabv2_resnet101_msc-cocostuff164k-100000.pth"))

- While the official code employs 1/16 bilinear interpolation (Interp layer) to downsample labels for the 0.5x input only, this codebase downsamples labels for both the 0.5x and 0.75x inputs with nearest interpolation (PIL.Image.resize, related issue).

- Bilinear interpolation on images and logits is performed with align_corners=False.
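The two interpolation notes above can be made concrete with a 1D sketch. It assumes the coordinate convention behind PyTorch's `align_corners` flag (pixel centers aligned when it is `False`, corner pixels aligned when it is `True`). The helper names are hypothetical, and the nearest rule shown is a generic center-sampling rule that may differ in detail from `PIL.Image.resize`.

```python
import math


def source_coord(dst_index, in_size, out_size, align_corners=False):
    """Map an output index to a (fractional) input coordinate."""
    if align_corners:
        # First and last samples of input and output coincide.
        return dst_index * (in_size - 1) / (out_size - 1) if out_size > 1 else 0.0
    # Samples are treated as unit cells whose centers are aligned.
    return (dst_index + 0.5) * in_size / out_size - 0.5


def resize_linear_1d(values, out_size, align_corners=False):
    """Linear interpolation of a 1D signal (the 1D analogue of bilinear)."""
    in_size = len(values)
    out = []
    for i in range(out_size):
        x = source_coord(i, in_size, out_size, align_corners)
        x = min(max(x, 0.0), in_size - 1)  # clamp to the valid range
        lo = int(x)
        hi = min(lo + 1, in_size - 1)
        frac = x - lo
        out.append(values[lo] * (1 - frac) + values[hi] * frac)
    return out


def resize_nearest_1d(labels, out_size):
    """Nearest-neighbor resize: every output is a copy of one input label."""
    in_size = len(labels)
    return [labels[min(math.floor((i + 0.5) * in_size / out_size), in_size - 1)]
            for i in range(out_size)]


signal = [0.0, 10.0]
half_pixel = resize_linear_1d(signal, 4, align_corners=False)
corner_aligned = resize_linear_1d(signal, 4, align_corners=True)

labels = [1, 1, 3, 3, 3, 7]
blended = resize_linear_1d(labels, 4)   # contains values that are not class indices
nearest = resize_nearest_1d(labels, 4)  # contains only original class indices
```

Linear interpolation of a label map can produce values such as 2.5 between classes 1 and 3, which do not correspond to any annotated class. This is why labels are resized with nearest interpolation while images and logits use bilinear interpolation.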

This codebase only supports DeepLab v2 training, which freezes the batch normalization layers, whereas the v3/v3+ protocols require training them. If you also want to train the batch normalization parameters on multiple GPUs in your projects, please install the extra library below.

pip install torch-encoding

Batch normalization layers in a model are automatically switched in libs/models/resnet.py.

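import torch.nn as nn

try:
    # Use synchronized batch normalization when torch-encoding is installed
    from encoding.nn import SyncBatchNorm

    _BATCH_NORM = SyncBatchNorm
except ImportError:
    # Otherwise fall back to the standard batch normalization layer
    _BATCH_NORM = nn.BatchNorm2d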

1. L.-C. Chen, G. Papandreou, I. Kokkinos, K. Murphy, A. L. Yuille. DeepLab: Semantic Image Segmentation with Deep Convolutional Nets, Atrous Convolution, and Fully Connected CRFs. IEEE TPAMI, 2018. Project / Code / arXiv paper

2. H. Caesar, J. Uijlings, V. Ferrari. COCO-Stuff: Thing and Stuff Classes in Context. In CVPR, 2018. Project / arXiv paper

3. M. Everingham, L. Van Gool, C. K. I. Williams, J. Winn, A. Zisserman. The PASCAL Visual Object Classes (VOC) Challenge. IJCV, 2010. Project / Paper