
📣 We are inviting collaborators and sponsors to train TANGO on larger datasets, such as AudioSet.

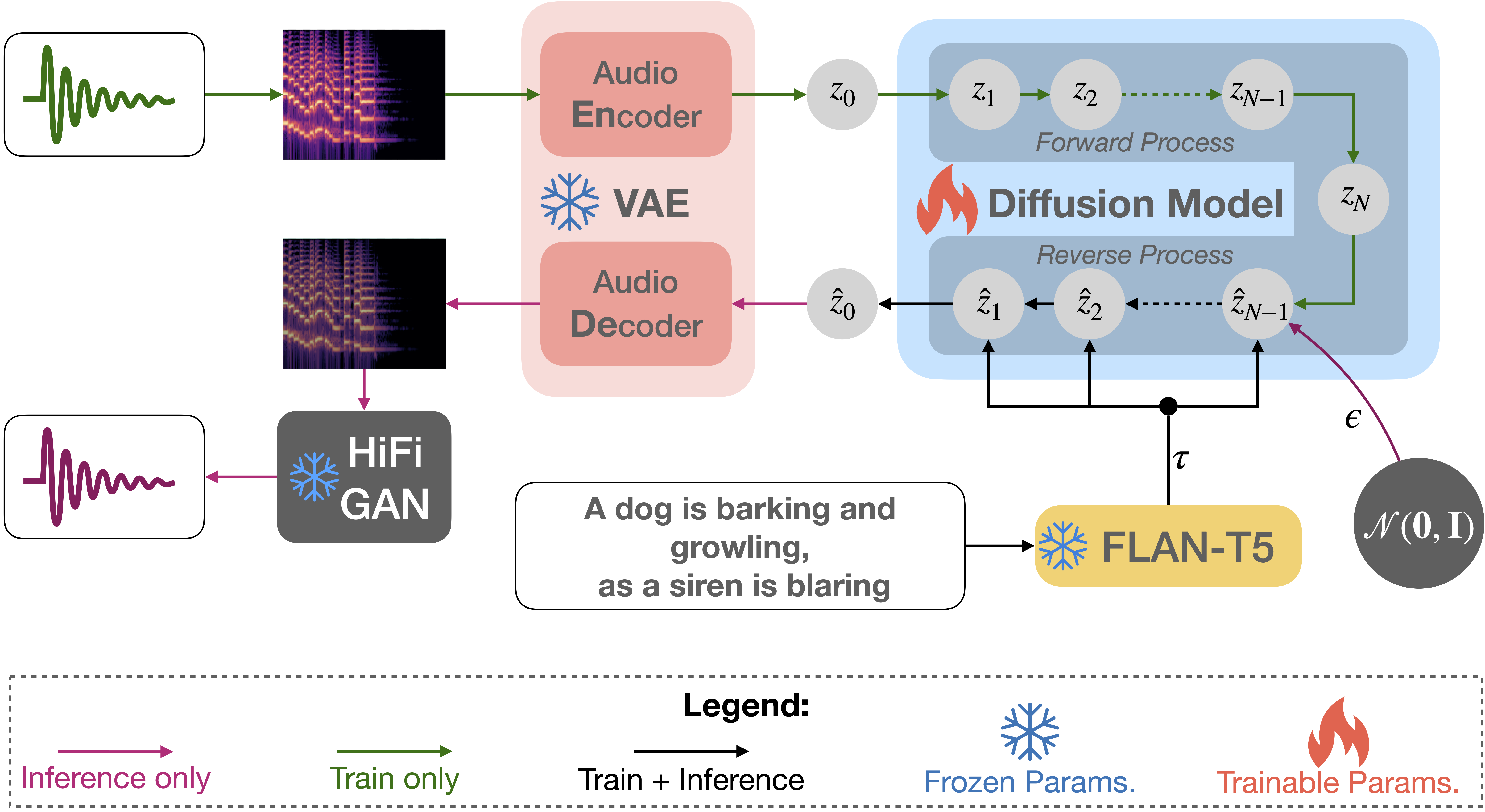

TANGO is a latent diffusion model (LDM) for text-to-audio (TTA) generation. TANGO can generate realistic audio, including human sounds, animal sounds, natural and artificial sounds, and sound effects, from textual prompts. We use the frozen instruction-tuned LLM Flan-T5 as the text encoder and train a UNet-based diffusion model for audio generation. We perform comparably to current state-of-the-art TTA models on both objective and subjective metrics, despite training the LDM on a dataset 63 times smaller. We release our model, training and inference code, and pre-trained checkpoints for the research community.

Download the TANGO model and generate audio from a text prompt:

```python
import IPython
import soundfile as sf
from tango import Tango

tango = Tango("declare-lab/tango")

prompt = "An audience cheering and clapping"
audio = tango.generate(prompt)
sf.write(f"{prompt}.wav", audio, samplerate=16000)
IPython.display.Audio(data=audio, rate=16000)
```

The model will be automatically downloaded and saved in cache. Subsequent runs will load the model directly from cache.

The generate function uses 100 steps by default to sample from the latent diffusion model. We recommend using 200 steps to generate higher-quality audio, at the cost of a longer run-time.

```python
prompt = "Rolling thunder with lightning strikes"
audio = tango.generate(prompt, steps=200)
IPython.display.Audio(data=audio, rate=16000)
```

Use the generate_for_batch function to generate multiple audio samples for a batch of text prompts:

```python
prompts = [
    "A car engine revving",
    "A dog barks and rustles with some clicking",
    "Water flowing and trickling"
]
audios = tango.generate_for_batch(prompts, samples=2)
```

This will generate two samples for each of the three text prompts.

More generated samples are shown here.

Our code is built on PyTorch 1.13.1+cu117. The requirements file pins torch==1.13.1, but you might need to install a torch build for a specific CUDA version depending on your GPU.
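To see whether the installed PyTorch build matches the 1.13.1 release mentioned above, a small check like the one below can help. This is a minimal sketch: parse_torch_version and check_torch_build are hypothetical helpers, not part of this repo; only torch.__version__, torch.version.cuda, and torch.cuda.is_available() come from PyTorch itself.

```python
from typing import Optional, Tuple


def parse_torch_version(version: str) -> Tuple[str, Optional[str]]:
    """Split a version string like "1.13.1+cu117" into ("1.13.1", "cu117").

    The part after "+" is the local build tag (e.g. the CUDA variant);
    it is None for builds that have no tag.
    """
    base, _, local = version.partition("+")
    return base, (local or None)


def check_torch_build(expected_base: str = "1.13.1") -> dict:
    """Report the installed torch build (hypothetical helper, not in this repo)."""
    import torch  # imported lazily so parse_torch_version works without torch

    base, local = parse_torch_version(torch.__version__)
    return {
        "version": base,
        "local_tag": local,
        "matches_expected": base == expected_base,
        "cuda_build": torch.version.cuda,  # None for CPU-only builds
        "cuda_available": torch.cuda.is_available(),
    }
```

With the build described above, check_torch_build() would report version 1.13.1 with the local tag cu117; whether CUDA is available depends on your machine.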

Install the packages listed in requirements.txt. You will also need to install the diffusers package from the directory provided in this repo:

```bash
git clone https://github.com/declare-lab/tango/
cd tango
pip install -r requirements.txt
cd diffusers
pip install -e .
```

On Linux, you might also need to install libsndfile1 for soundfile to work properly:

```bash
sudo apt-get install libsndfile1
```

Follow the instructions given in the AudioCaps repository to download the data. The audio locations and corresponding captions are provided in our data directory, and the `*.json` files are used for training and evaluation. Once you have downloaded your copy of the data, you should be able to map it to the file locations listed in our `data/*.json` files using the file ids.

Note that we cannot distribute the data because of copyright issues.

We use the accelerate package from Hugging Face for multi-GPU training. Run `accelerate config` from the terminal and set up your run configuration by answering the questions it asks.

You can now train TANGO on the AudioCaps dataset using:

```bash
accelerate launch train.py \
  --text_encoder_name="google/flan-t5-large" \
  --scheduler_name="stabilityai/stable-diffusion-2-1" \
  --unet_model_config="configs/diffusion_model_config.json" \
  --freeze_text_encoder --augment --snr_gamma 5
```

The `--augment` argument trains on augmented data, as reported in our paper. We recommend training for at least 40 epochs, which is the default in train.py.
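The training commands pass `--snr_gamma 5`. We assume this enables Min-SNR-γ loss weighting as used in the Hugging Face diffusers training examples, where each timestep's diffusion loss is scaled by min(SNR, γ) divided by SNR (ε-prediction) or by SNR + 1 (v-prediction); check train.py for the exact formula TANGO applies. The NumPy sketch below is illustrative, not the repo's code, and the scaled_linear schedule (β from 0.00085 to 0.012 over 1000 steps) is an assumption mirroring the Stable Diffusion scheduler defaults.

```python
import numpy as np


def scaled_linear_alphas_cumprod(num_steps: int = 1000,
                                 beta_start: float = 0.00085,
                                 beta_end: float = 0.012) -> np.ndarray:
    """Cumulative products of (1 - beta_t) for a "scaled_linear" beta schedule.

    The schedule values are an assumption, used only to produce realistic SNRs.
    """
    betas = np.linspace(beta_start ** 0.5, beta_end ** 0.5, num_steps) ** 2
    return np.cumprod(1.0 - betas)


def min_snr_weights(alphas_cumprod: np.ndarray, timesteps: np.ndarray,
                    gamma: float = 5.0,
                    prediction_type: str = "epsilon") -> np.ndarray:
    """Min-SNR-gamma loss weights for a batch of sampled timesteps."""
    alpha_bar = alphas_cumprod[timesteps]
    snr = alpha_bar / (1.0 - alpha_bar)  # signal-to-noise ratio of the noisy latent
    clipped = np.minimum(snr, gamma)     # cap the SNR at gamma
    if prediction_type == "epsilon":
        return clipped / snr
    if prediction_type == "v_prediction":
        return clipped / (snr + 1.0)
    raise ValueError(f"unsupported prediction_type: {prediction_type}")


def weighted_loss(per_sample_mse: np.ndarray, weights: np.ndarray) -> float:
    """Batch loss: each sample's MSE scaled by its timestep weight, then averaged."""
    return float(np.mean(weights * per_sample_mse))
```

With γ = 5, nearly noise-free timesteps (very high SNR) are strongly down-weighted, while noisy timesteps whose SNR is below 5 keep a weight of 1 under ε-prediction.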

To start training from our released checkpoint, use the `--hf_model` argument:

```bash
accelerate launch train.py \
  --hf_model "declare-lab/tango" \
  --unet_model_config="configs/diffusion_model_config.json" \
  --freeze_text_encoder --augment --snr_gamma 5
```

Check train.py and train.sh for the full list of arguments and how to use them.

Checkpoints from training are saved in the `saved/*/` directory.

To generate audio and run objective evaluation on the AudioCaps test set from a checkpoint:

```bash
CUDA_VISIBLE_DEVICES=0 python inference.py \
  --original_args="saved/*/summary.jsonl" \
  --model="saved/*/best/pytorch_model_2.bin"
```

Check inference.py and inference.sh for the full list of arguments and how to use them.

We use functionality from audioldm_eval for objective evaluation in inference.py. It requires the gold reference audio files and the generated audio files to have the same names. Create the directory `data/audiocaps_test_references/subset` and place the reference audio files there, named `output_0.wav`, `output_1.wav`, and so on, where each index is the line index of the corresponding instance in `data/test_audiocaps_subset.json`.
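The renaming step can be scripted. The sketch below assumes `data/test_audiocaps_subset.json` is in JSON Lines format (one record per line, matching the line indices mentioned above) and that each record stores the path to its audio file under a key named `location`; that key name is a hypothetical default, so check the actual field names in the file and pass `path_key` accordingly. `build_reference_dir` is a hypothetical helper, not part of this repo.

```python
import json
import shutil
from pathlib import Path


def build_reference_dir(test_jsonl, out_dir, path_key="location"):
    """Copy reference audio to out_dir/output_{i}.wav, i = line index in test_jsonl.

    Assumes test_jsonl is JSON Lines and that each record holds the path of a
    locally available audio file under `path_key` (hypothetical default key).
    Returns the list of written paths in line order.
    """
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    written = []
    with open(test_jsonl, encoding="utf-8") as f:
        for i, line in enumerate(f):
            record = json.loads(line)
            src = Path(record[path_key])
            dst = out / f"output_{i}.wav"  # name must match the generated file
            shutil.copyfile(src, dst)
            written.append(dst)
    return written
```

For example, `build_reference_dir("data/test_audiocaps_subset.json", "data/audiocaps_test_references/subset")` would produce the expected naming, provided the paths in the records point at your local copies of the audio.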

We use the term subset because some data instances originally released in AudioCaps have since been removed from YouTube and are no longer available. We therefore evaluated our models on all instances that were available as of 8 April 2023.

We use wandb to log training and inference results.

| Model | Datasets | Text | #Params | FD ↓ | KL ↓ | FAD ↓ | OVL ↑ | REL ↑ |
|---|---|---|---|---|---|---|---|---|
| Ground truth | − | − | − | − | − | − | 91.61 | 86.78 |
| DiffSound | AS+AC | ✓ | 400M | 47.68 | 2.52 | 7.75 | − | − |
| AudioGen | AS+AC+8 others | ✗ | 285M | − | 2.09 | 3.13 | − | − |
| AudioLDM-S | AC | ✗ | 181M | 29.48 | 1.97 | 2.43 | − | − |
| AudioLDM-L | AC | ✗ | 739M | 27.12 | 1.86 | 2.08 | − | − |
| AudioLDM-M-Full-FT‡ | AS+AC+2 others | ✗ | 416M | 26.12 | 1.26 | 2.57 | 79.85 | 76.84 |
| AudioLDM-L-Full‡ | AS+AC+2 others | ✗ | 739M | 32.46 | 1.76 | 4.18 | 78.63 | 62.69 |
| AudioLDM-L-Full-FT | AS+AC+2 others | ✗ | 739M | 23.31 | 1.59 | 1.96 | − | − |
| TANGO | AC | ✓ | 866M | 24.52 | 1.37 | 1.59 | 85.94 | 80.36 |
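FD and FAD in the table are both Fréchet distances: one Gaussian is fitted to embeddings of the reference audio and another to embeddings of the generated audio, and the distance between them is $\lVert\mu_r-\mu_g\rVert^2 + \operatorname{Tr}\big(\Sigma_r+\Sigma_g-2(\Sigma_r\Sigma_g)^{1/2}\big)$. The NumPy sketch below implements that formula on two arrays of precomputed embeddings. It is not the audioldm_eval code, and which embedding network feeds each column (commonly PANNs for FD and VGGish for FAD) is our assumption.

```python
import numpy as np


def frechet_distance(emb_ref: np.ndarray, emb_gen: np.ndarray) -> float:
    """Fréchet distance between Gaussians fitted to two (n_samples, dim) embedding sets."""
    mu_r, mu_g = emb_ref.mean(axis=0), emb_gen.mean(axis=0)
    sigma_r = np.cov(emb_ref, rowvar=False)
    sigma_g = np.cov(emb_gen, rowvar=False)
    # Tr((sigma_r @ sigma_g)^{1/2}) equals the sum of the square roots of the
    # eigenvalues of sigma_r @ sigma_g, which are real and non-negative for PSD
    # covariances; clipping guards against tiny negatives from rounding error.
    eigvals = np.linalg.eigvals(sigma_r @ sigma_g)
    tr_sqrt = np.sqrt(np.clip(eigvals.real, 0.0, None)).sum()
    diff = mu_r - mu_g
    return float(diff @ diff + np.trace(sigma_r) + np.trace(sigma_g) - 2.0 * tr_sqrt)
```

A lower value means the generated-audio embeddings are distributed more like the reference embeddings, which is why the table marks FD and FAD with ↓.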

Please consider citing the following article if you found our work useful:

```bibtex
@article{ghosal2023tango,
  title={Text-to-Audio Generation using Instruction Tuned LLM and Latent Diffusion Model},
  author={Ghosal, Deepanway and Majumder, Navonil and Mehrish, Ambuj and Poria, Soujanya},
  journal={arXiv preprint arXiv:2304.13731},
  year={2023}
}
```

TANGO cannot always finely control its generations through textual prompts, because it is trained only on the small AudioCaps dataset. For example, TANGO's generations for the prompts "Chopping tomatoes on a wooden table" and "Chopping potatoes on a metal table" are very similar, and "Chopping vegetables on a table" also produces similar audio samples. Training text-to-audio generation models on larger datasets is therefore needed for a model to learn how textual concepts compose and to capture more varied text-audio mappings. In the future, we plan to improve TANGO by training it on larger datasets and enhancing its compositional and controllable generation ability.

We borrow the code in audioldm and audioldm_eval from the AudioLDM repositories. We thank the AudioLDM team for open-sourcing their code.