DebateLLM is a library that encompasses a variety of debating protocols and prompting strategies, aimed at enhancing the accuracy of Large Language Models (LLMs) on Q&A datasets.

Our research (mostly using GPT-3.5) reveals that no single debate or prompting strategy consistently outperforms the others across all scenarios, so it is important to experiment with several approaches to find what works best for each dataset. Implementing each protocol from scratch is time-consuming, however, so we built and open-sourced DebateLLM for the research community. It enables researchers to test various implementations from the literature on their specific problems (medical or otherwise), potentially driving further advancements in the intelligent prompting of LLMs.

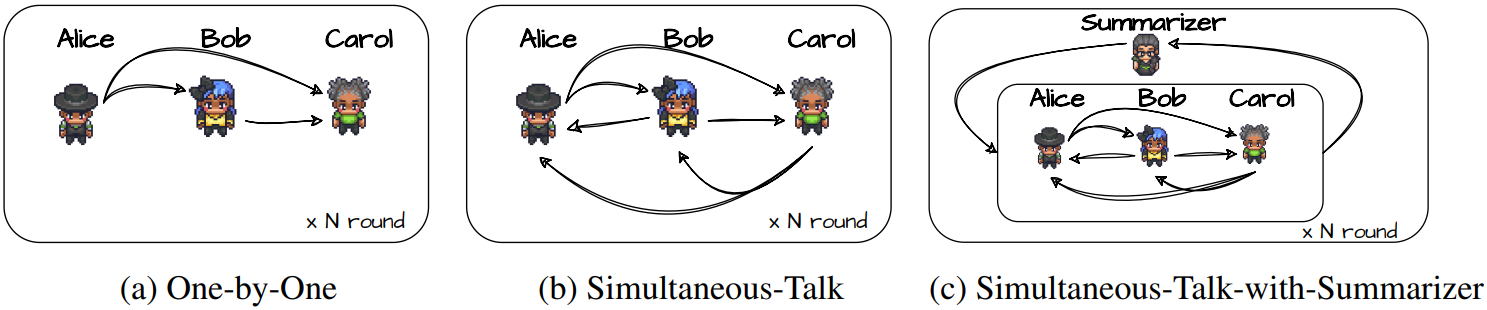

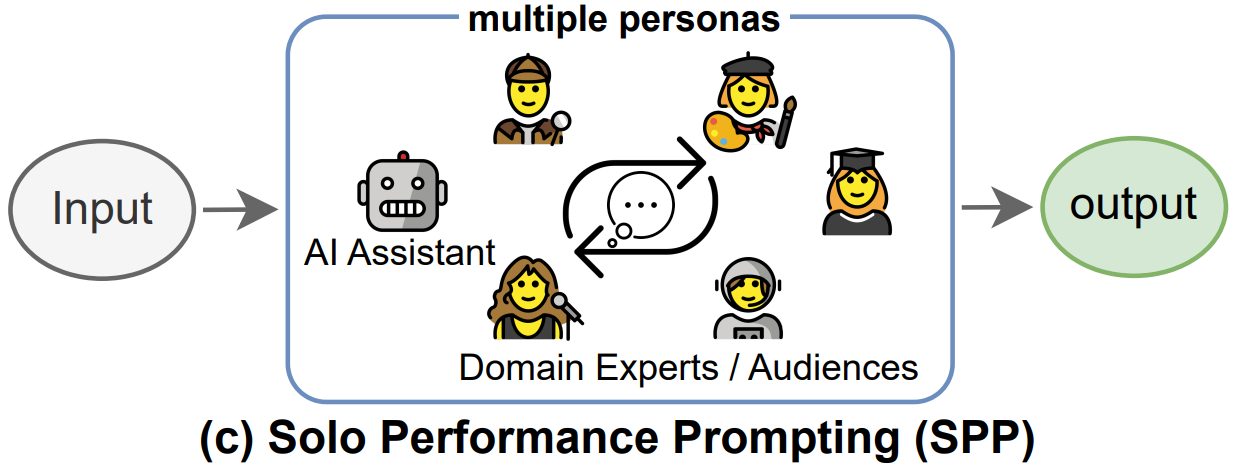

We have various system implementations:

- Society of Minds
- Medprompt
- Multi-Persona
- Ensemble Refinement
- ChatEval
- Solo Performance Prompting

To set up the DebateLLM environment, execute the following command:

    make build_venv

To run an experiment:

- Activate the Python virtual environment:

      source venv/bin/activate

- Execute the evaluation script:

      python ./experiments/evaluate.py

You can modify experiment parameters by using Hydra configs located in the `conf` folder. The main configuration file is found under `conf/config.yaml`. Changes at the dataset and system levels can be made by updating the configs in `conf/dataset` and `conf/system`.
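Hydra also accepts `key=value` overrides on the command line, which can be handy for one-off runs without editing the YAML files. The sketch below only assembles such a command; it assumes that `dataset` and `system` are config groups listed in the defaults of `conf/config.yaml`, and the option names `medqa` and `multi_persona` are hypothetical placeholders rather than names confirmed by this repository. Check the file names under `conf/dataset` and `conf/system` for the real options.

```python
import shlex

# Script path taken from the run instructions in this README.
EVALUATE_SCRIPT = "./experiments/evaluate.py"


def build_command(overrides: dict[str, str]) -> list[str]:
    """Return the argv list for one evaluation run with Hydra-style overrides."""
    # Hydra reads `key=value` tokens that follow the script name.
    args = [f"{key}={value}" for key, value in overrides.items()]
    return ["python", EVALUATE_SCRIPT, *args]


# Hypothetical option names: replace them with config files that exist
# under conf/dataset and conf/system.
command = build_command({"dataset": "medqa", "system": "multi_persona"})

# Print a shell-ready string that can be pasted into a terminal.
print(shlex.join(command))
```

If a key is not already present in the composed config, Hydra expects a leading `+` on the override (for example `+key=value`).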

To launch multiple experiments:

    python ./scripts/launch_experiments.py

To visualize the results with Neptune:

- Run the visualisation script:

      python ./scripts/visualise_results.py

- The output results will be saved to `./data/charts/`.

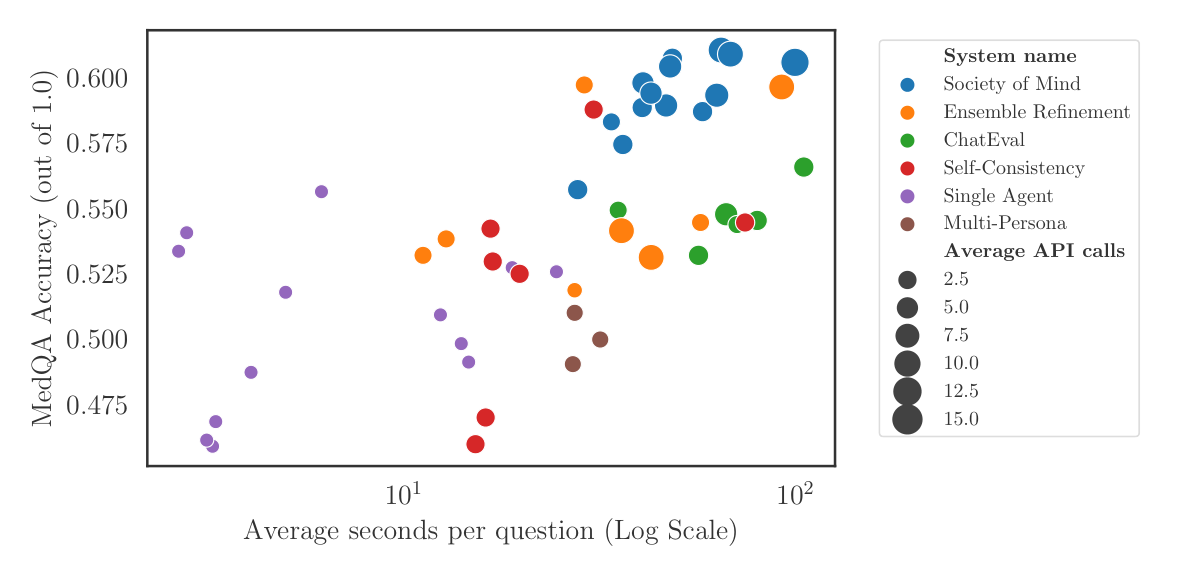

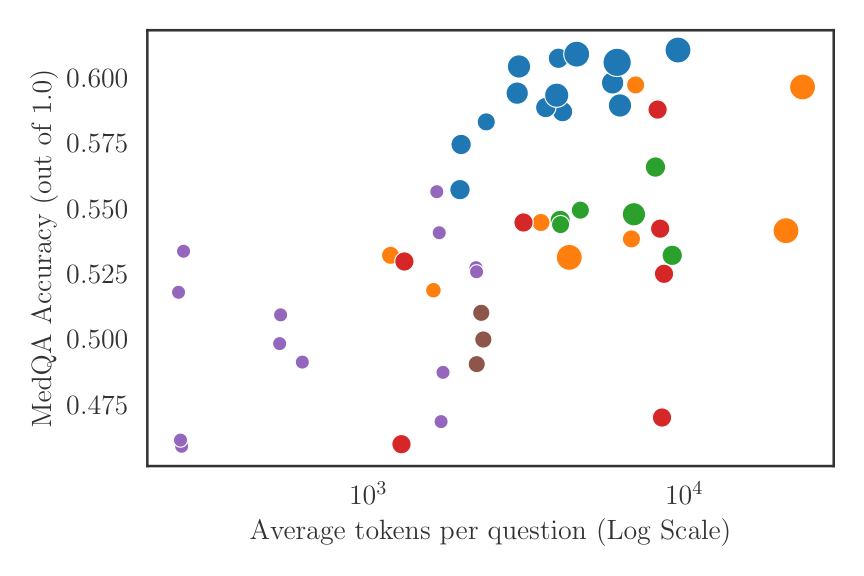

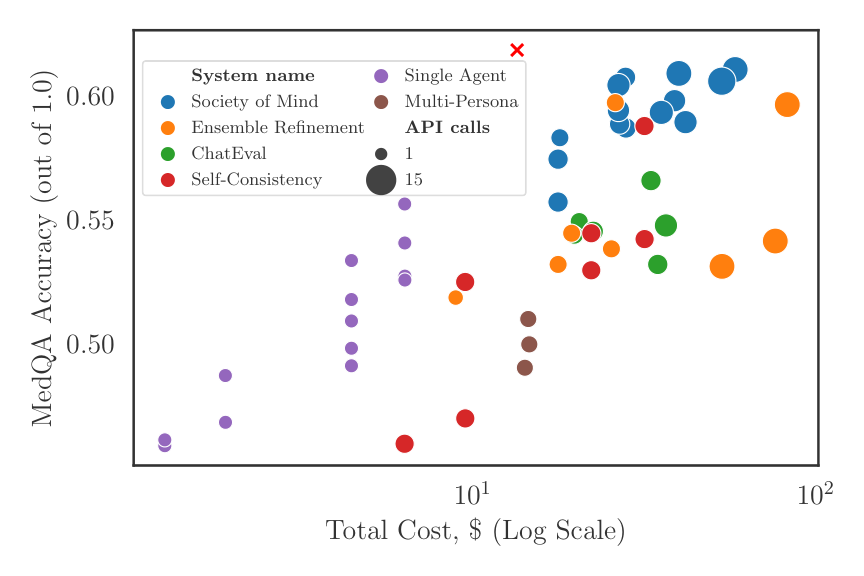

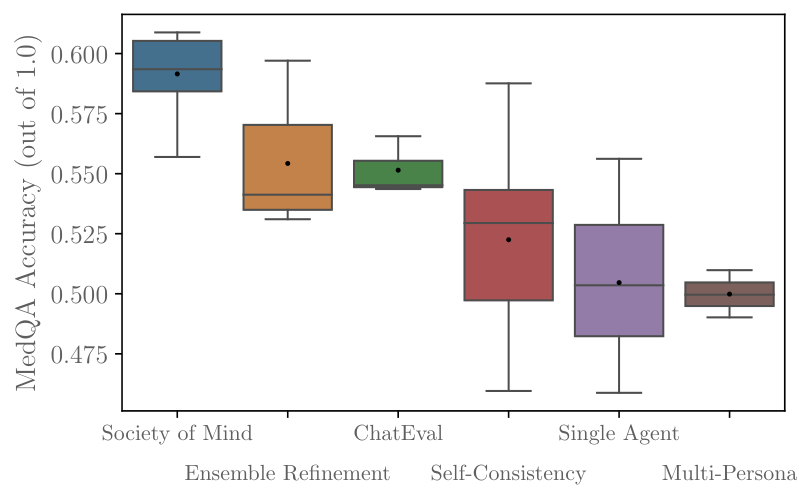

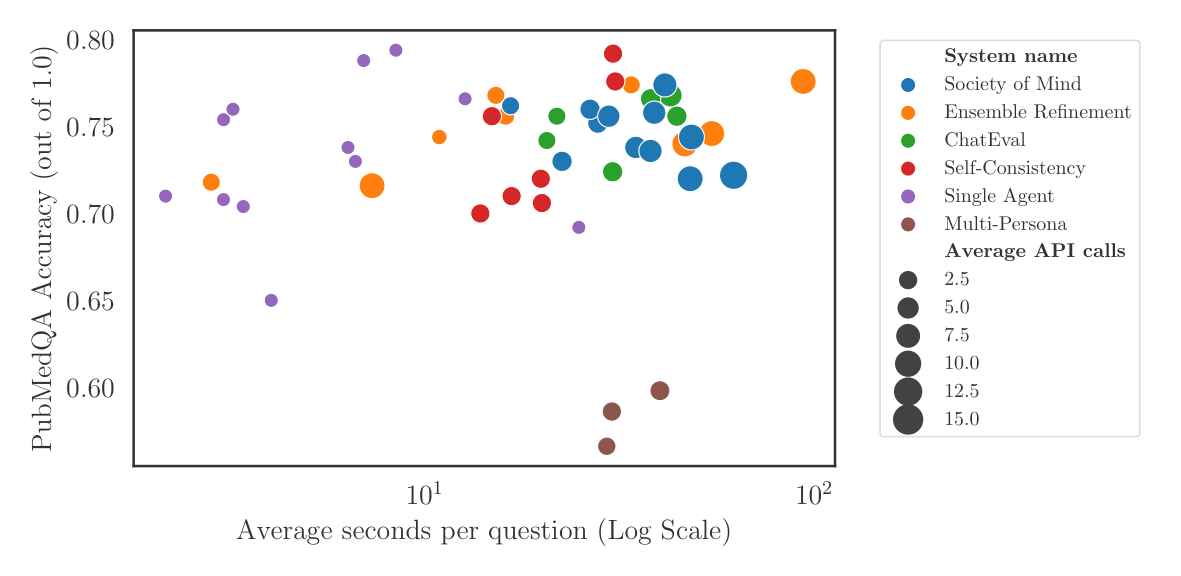

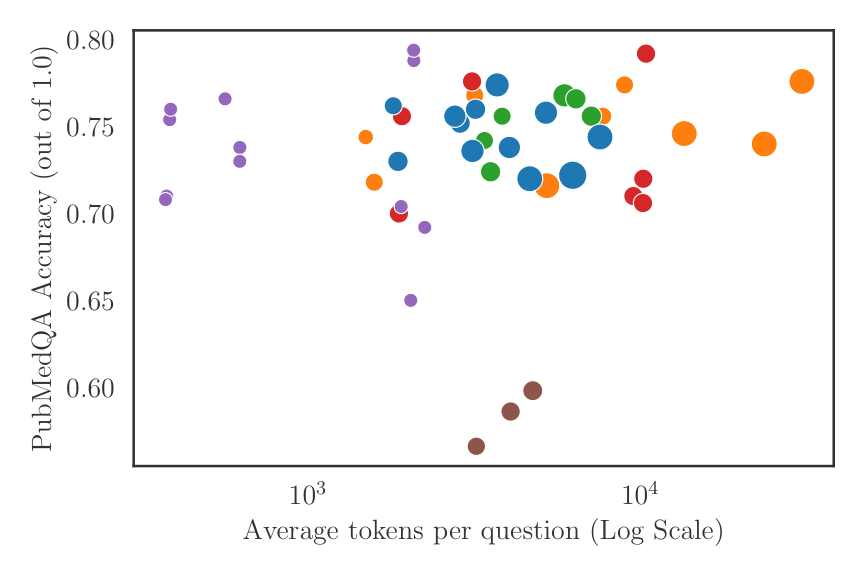

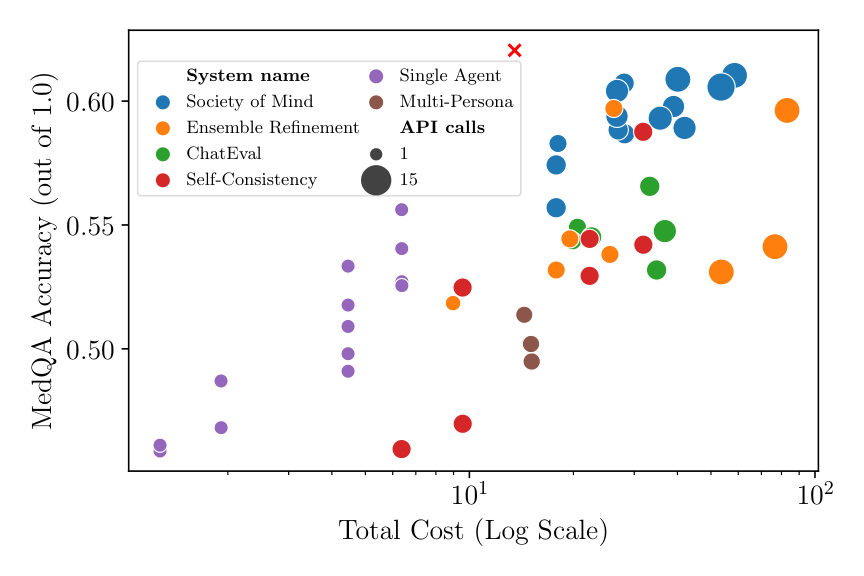

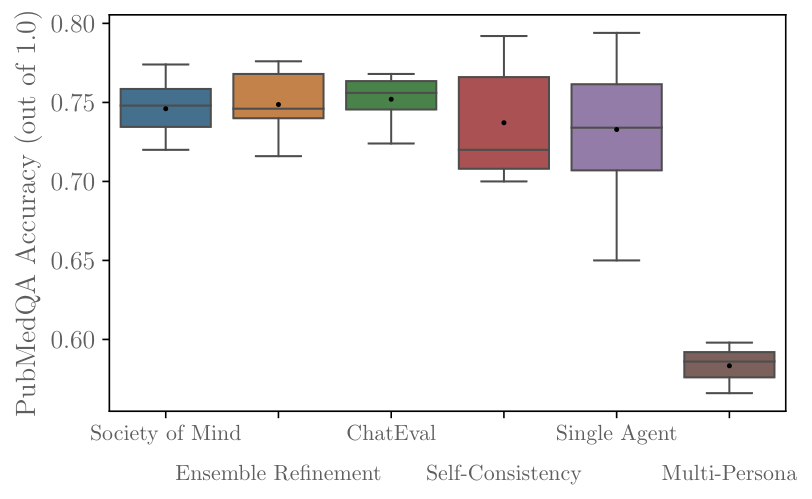

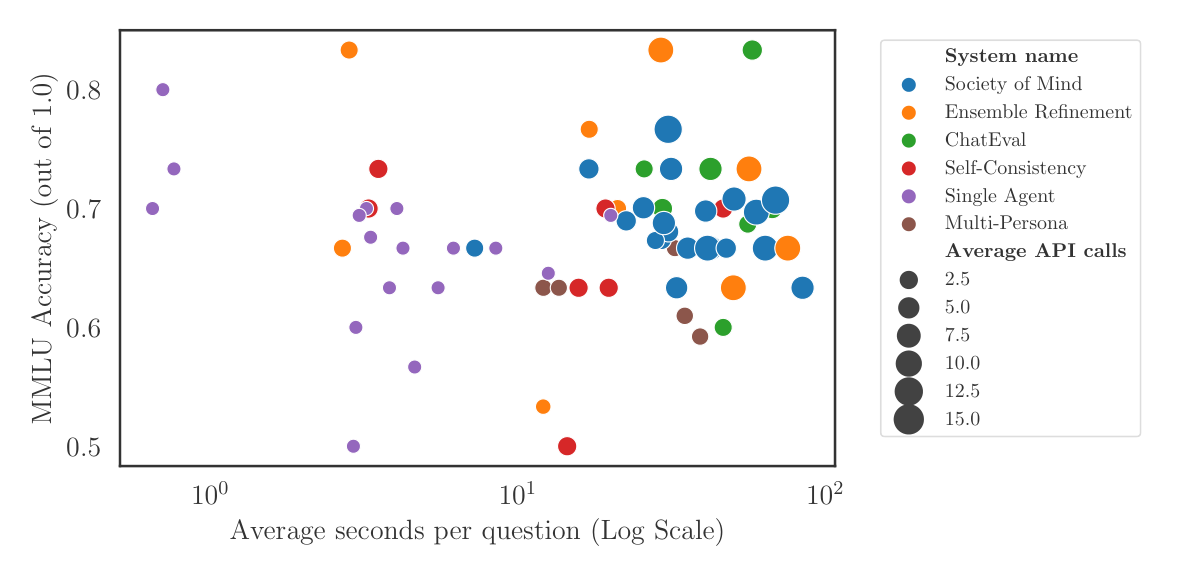

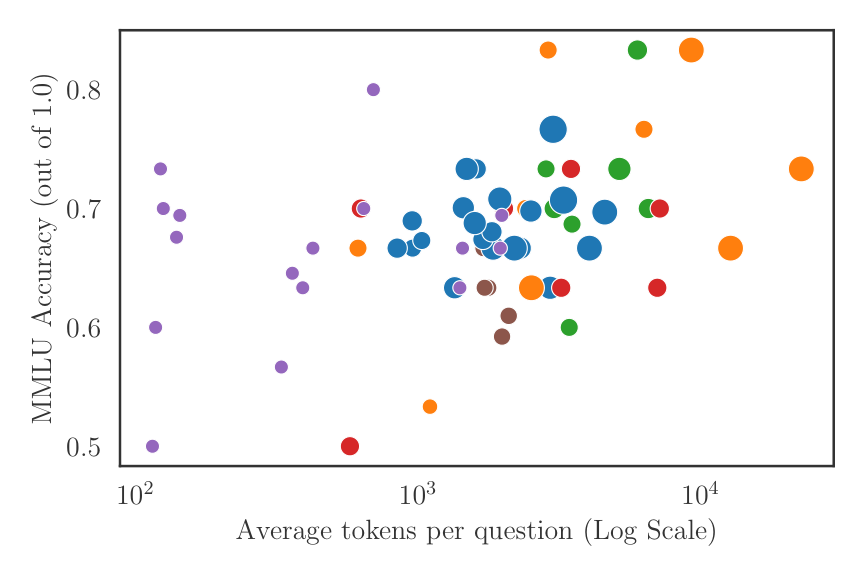

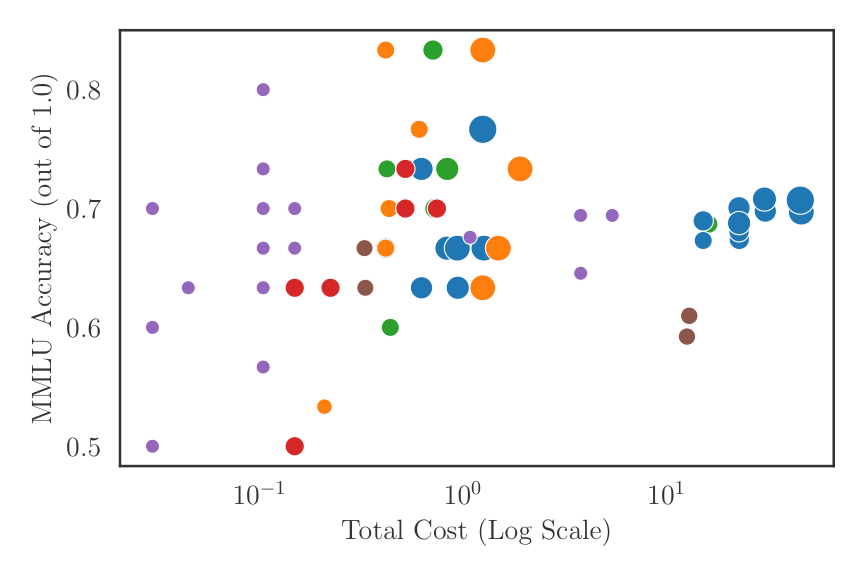

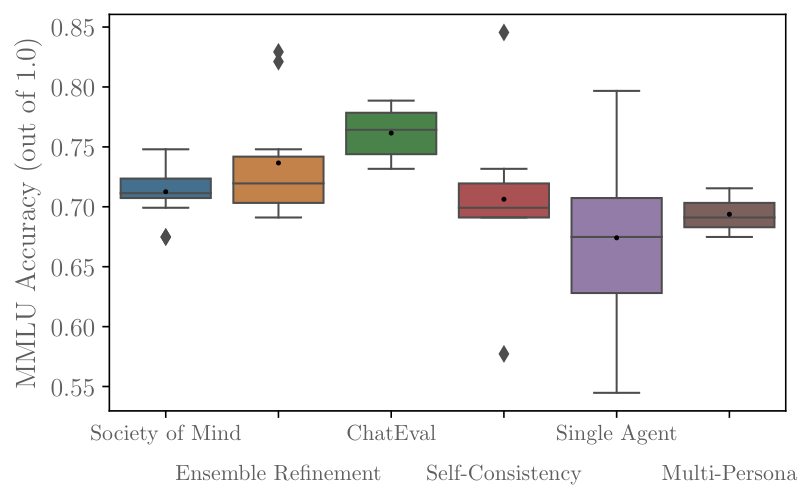

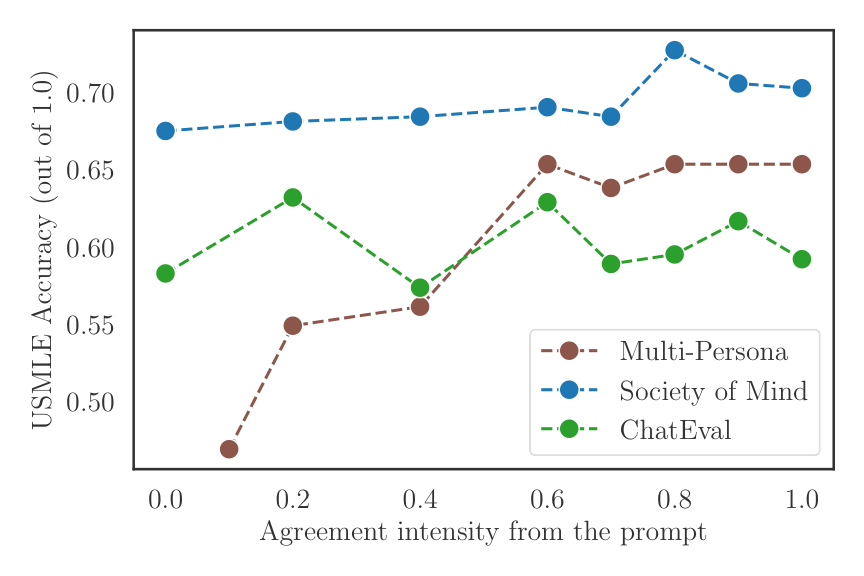

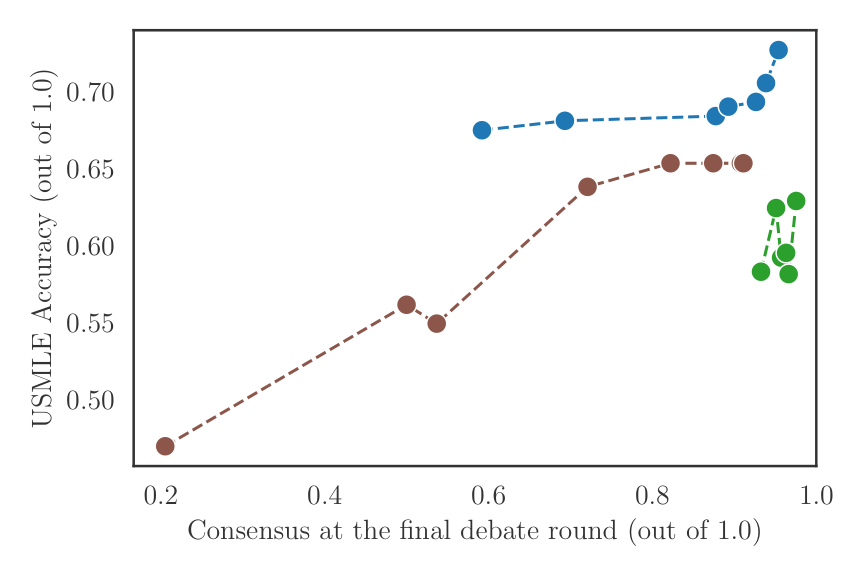

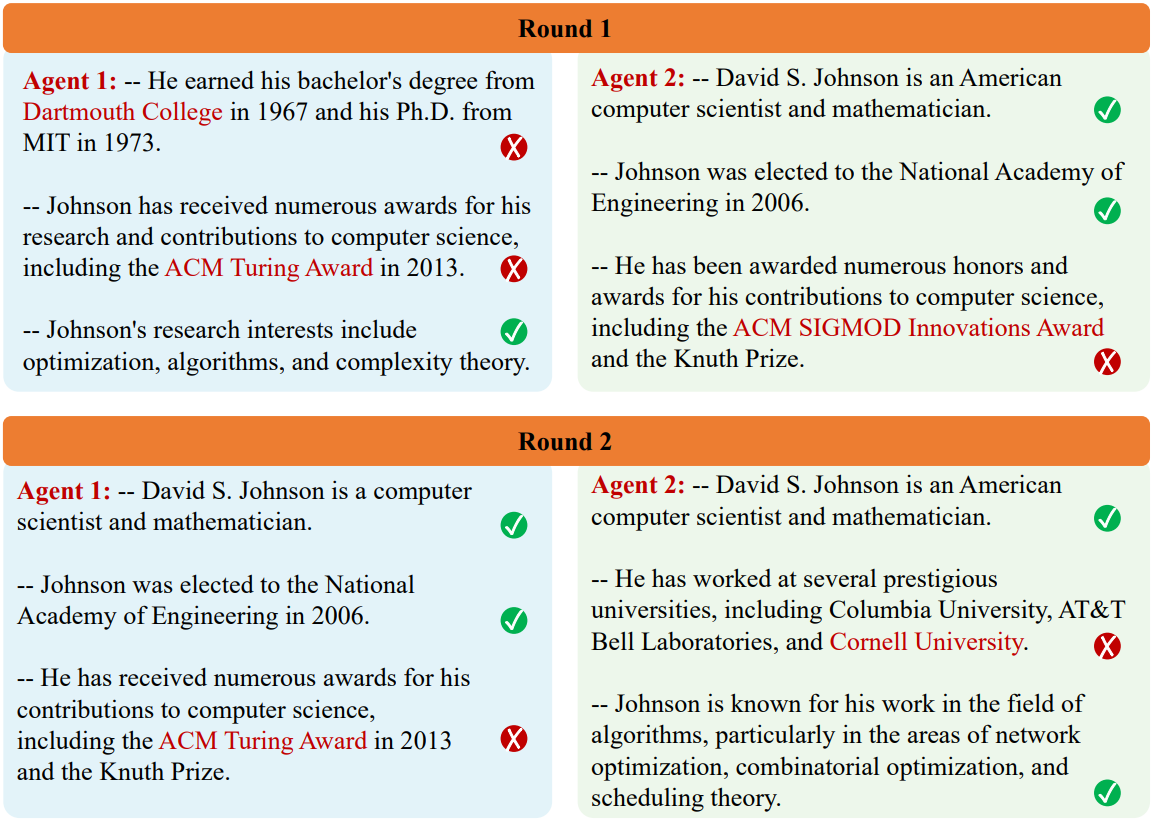

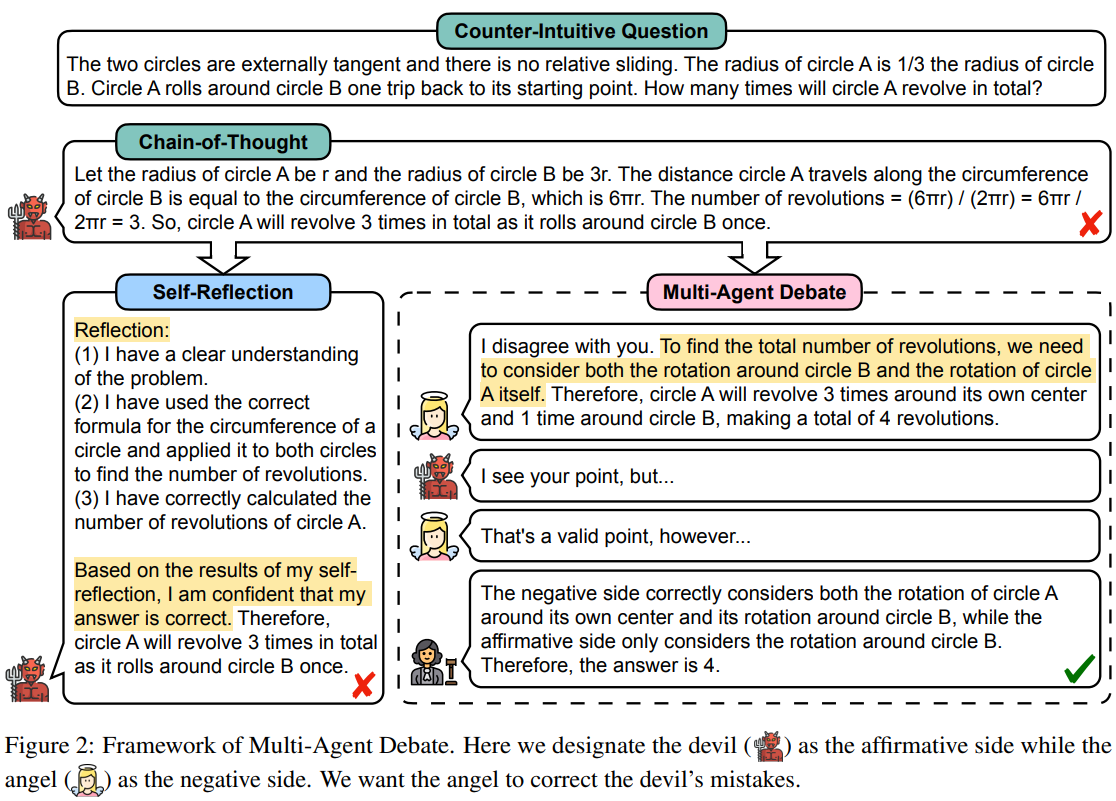

Our benchmarks showcase DebateLLM's performance on MedQA, PubMedQA, and MMLU datasets, focusing on accuracy versus cost, time efficiency, token economy, and agent agreement impact. These visualizations illustrate the balance between accuracy and computational cost, the speed and quality of responses, linguistic efficiency, and the effects of consensus strategies in medical Q&A contexts. Each dataset highlights the varied capabilities of DebateLLM's strategies.

Modulating the agreement intensity provides a substantial improvement in performance for various models. For Multi-Persona, there is an approximate 15% improvement, and for Society of Minds (SoM), an approximate 5% improvement on the USMLE dataset. The 90% agreement intensity prompts applied to Multi-Persona demonstrate a new high score on the MedQA dataset, highlighted in the MedQA dataset cost plot as a red cross.
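As a rough illustration of what modulating agreement intensity in a prompt could look like, here is a minimal sketch. The function names and the instruction wording are hypothetical and are not taken from DebateLLM's prompts; refer to the paper and the system configs for the prompts actually used.

```python
def agreement_instruction(intensity: float) -> str:
    """Build a hypothetical instruction telling an agent how often to agree."""
    if not 0.0 <= intensity <= 1.0:
        raise ValueError("intensity must be between 0 and 1")
    percent = round(intensity * 100)
    # Illustrative wording only, not the library's actual prompt text.
    return f"You should agree with the other agents {percent}% of the time."


def build_agent_prompt(question: str, other_answers: list[str], intensity: float) -> str:
    """Combine the question, the other agents' answers, and the agreement instruction."""
    answers = "\n".join(f"- {answer}" for answer in other_answers)
    return (
        f"Question: {question}\n"
        f"Answers from other agents:\n{answers}\n"
        f"{agreement_instruction(intensity)}"
    )


# Example with a 90% agreement intensity, the setting mentioned above.
prompt = build_agent_prompt(
    "Which vitamin deficiency causes scurvy?",
    ["Vitamin C", "Vitamin D"],
    0.9,
)
print(prompt)
```

Sweeping `intensity` over a range of values and re-running the evaluation is one way to look for the setting that works best for a given system and dataset.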

The benchmarks indicate the effectiveness of various strategies and models implemented within DebateLLM. For detailed analysis and discussion, refer to our paper.

Please read our contributing docs for details on how to submit pull requests, our Contributor License Agreement and community guidelines.

If you use DebateLLM in your work, please cite our paper:

    @article{smit2024mad,
      title={Should we be going MAD? A Look at Multi-Agent Debate Strategies for LLMs},
      author={Smit, Andries and Duckworth, Paul and Grinsztajn, Nathan and Barrett, Thomas D. and Pretorius, Arnu},
      journal={arXiv preprint arXiv:2311.17371},
      year={2024},
      url={https://arxiv.org/abs/2311.17371}
    }

Link to the paper: Benchmarking Multi-Agent Debate between Language Models for Medical Q&A.