Work carried out as part of the master's thesis "DeepRL-based Motion Planning for Indoor Mobile Robot Navigation" at the Institute of Systems and Robotics, University of Coimbra (ISR-UC).

| Module | Software/Hardware |
|---|---|
| Python IDE | PyCharm |
| Deep Learning library | TensorFlow + Keras |
| GPU | GeForce GTX 1060 |
| Interpreter | Python 3.8 |
| Packages | requirements.txt |

To set up PyCharm + Anaconda + GPU, consult the setup file here.

To install the required packages (requirements.txt), download the file into the project folder and run the following command in the project environment terminal:

pip install -r requirements.txt
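
After installation, it can help to confirm that every package listed in requirements.txt is present in the active environment. The sketch below is not part of the repository. It assumes simple requirement lines such as `name==version` or a bare `name`, and the helper names `parse_requirements` and `missing_packages` are hypothetical.

```python
import re
from importlib import metadata
from pathlib import Path


def parse_requirements(text):
    """Return package names from requirements-style text, skipping comments and options."""
    names = []
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop trailing comments
        if not line or line.startswith("-"):  # skip blanks and pip options such as -r
            continue
        # Keep only the distribution name (drop extras, version specifiers, markers).
        match = re.match(r"[A-Za-z0-9][A-Za-z0-9._-]*", line)
        if match:
            names.append(match.group(0))
    return names


def missing_packages(names):
    """Return the names that are not installed in the current environment."""
    missing = []
    for name in names:
        try:
            metadata.version(name)
        except metadata.PackageNotFoundError:
            missing.append(name)
    return missing


if __name__ == "__main__":
    req_file = Path("requirements.txt")
    if req_file.exists():
        absent = missing_packages(parse_requirements(req_file.read_text()))
        print("Missing packages:", absent or "none")
```

Running the script from the project folder prints the packages that still need to be installed, or `none` if the environment is complete.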

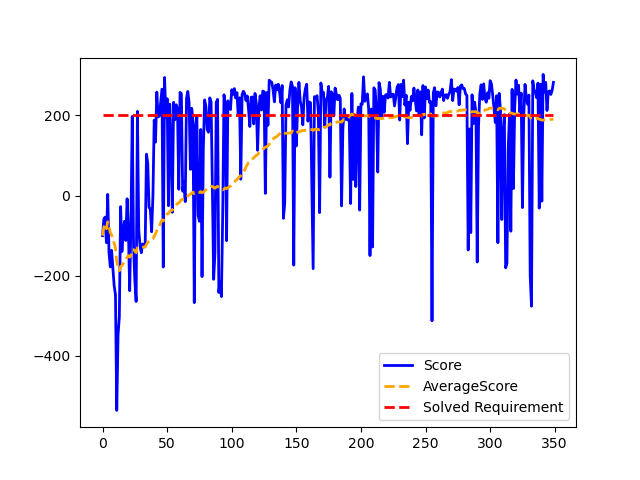

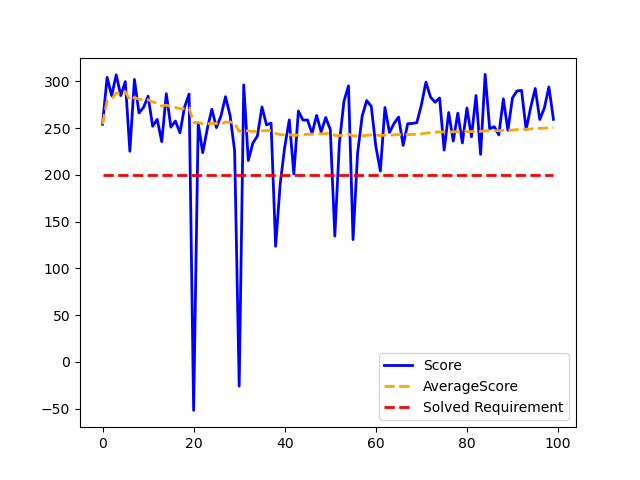

The training process generates a .txt file that tracks the network models (saved in 'tf' and .h5 formats) that met the environment's solved requirement. An overview image (graph) of the training procedure is also created.

To run several training procedures, change the .txt, .png, and directory names before each run. Otherwise, the information from previous training runs is overwritten and lost.
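
One way to avoid overwriting earlier results is to derive all three names from a single tag that is not yet in use. The following is a minimal sketch under that assumption. The functions `next_run_tag` and `run_paths`, the `run_<n>` naming scheme, and the flat output folder are hypothetical and are not taken from the repository scripts.

```python
from pathlib import Path


def next_run_tag(output_dir, prefix="run"):
    """Return the first '<prefix>_<n>' tag not used by any file or folder in output_dir."""
    output_dir = Path(output_dir)
    taken = set()
    if output_dir.is_dir():
        # Compare on the part before the first dot, so 'run_1.txt' and 'run_1/' both count.
        taken = {p.name.split(".")[0] for p in output_dir.iterdir()}
    n = 1
    while f"{prefix}_{n}" in taken:
        n += 1
    return f"{prefix}_{n}"


def run_paths(output_dir, tag):
    """Build the log, plot, and model-folder paths that share one run tag."""
    output_dir = Path(output_dir)
    return {
        "log": output_dir / f"{tag}.txt",
        "plot": output_dir / f"{tag}.png",
        "models": output_dir / tag,
    }
```

Calling `run_paths(folder, next_run_tag(folder))` before each training run gives a fresh set of names, so earlier logs, plots, and saved models stay untouched.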

To test a saved network model in .h5 format, a 5-episode training run is required first to initialize/build the keras.model network. The overwriting warning above therefore also applies in this situation.

Loading the saved model in 'tf' format is the recommended option. After testing finishes, an overview image (graph) of the training procedure is also generated.
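
The preference for the 'tf' format can be sketched as follows. This is an illustration rather than the repository's loading code. It assumes TensorFlow 2.x with its bundled Keras, that a 'tf' model is stored as a folder, and that the .h5 variant sits next to it as `<name>.h5`. The helper names `pick_model_path` and `load_for_testing` are hypothetical.

```python
from pathlib import Path


def pick_model_path(base):
    """Prefer the 'tf' model folder; fall back to '<base>.h5' if only that exists."""
    base = Path(base)
    if base.is_dir():
        return base, "tf"
    h5_file = base.with_name(base.name + ".h5")
    if h5_file.is_file():
        return h5_file, "h5"
    raise FileNotFoundError(f"No saved model found for {base}")


def load_for_testing(base):
    """Load a 'tf'-format model; .h5 files are reported instead of being loaded here."""
    path, fmt = pick_model_path(base)
    if fmt != "tf":
        # The .h5 route needs the network to be built first (see the note above).
        raise ValueError(f"{path} is an .h5 file; build the network before loading it")
    import tensorflow as tf  # imported lazily so the path check works without TensorFlow

    return tf.keras.models.load_model(str(path))
```

For example, `load_for_testing("saved_networks/dqn_model104")` would return the loaded model if that folder exists in 'tf' format.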

Actions:

0 - No action

1 - Fire left engine

2 - Fire main engine

3 - Fire right engine

States:

0 - Lander horizontal coordinate

1 - Lander vertical coordinate

2 - Lander horizontal speed

3 - Lander vertical speed

4 - Lander angle

5 - Lander angular speed

6 - Bool: 1 if first leg has contact, else 0

7 - Bool: 1 if second leg has contact, else 0
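
The action and state indices listed above can be given names in code, which makes agent logic easier to read than raw integers. The sketch below is illustrative only. The class and field names (`Action`, `LanderState`, `decode_state`) are hypothetical, and it assumes the observation arrives as a sequence of 8 numbers in the order shown.

```python
from enum import IntEnum
from typing import NamedTuple


class Action(IntEnum):
    """Discrete actions, numbered as in the list above."""
    NO_ACTION = 0
    FIRE_LEFT = 1
    FIRE_MAIN = 2
    FIRE_RIGHT = 3


class LanderState(NamedTuple):
    """The 8 state components, in the order listed above."""
    x: float
    y: float
    vx: float
    vy: float
    angle: float
    angular_speed: float
    first_leg_contact: bool
    second_leg_contact: bool


def decode_state(obs):
    """Convert a raw 8-element observation into a LanderState."""
    if len(obs) != 8:
        raise ValueError(f"expected 8 state values, got {len(obs)}")
    *motion, leg1, leg2 = obs
    return LanderState(*(float(v) for v in motion), bool(leg1), bool(leg2))
```

With this, `decode_state(obs).vy` reads the vertical speed, and `Action.FIRE_MAIN` can be passed wherever the integer 2 is expected.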

Rewards:

Moving from the top of the screen to the landing pad yields a scalar reward between 100 and 140

Negative reward if the lander moves away from the landing pad

If the lander crashes, a scalar reward of (-100) is given

If the lander comes to rest, a scalar reward of (100) is given

Each leg with ground contact corresponds to a scalar reward of (10)

Firing the main engine corresponds to a scalar reward of (-0.3) per frame

Firing the side engines corresponds to a scalar reward of (-0.03) per frame

Episode termination:

Lander crashes

Lander comes to rest

Episode length > 400
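
The termination conditions above can be sketched as an episode loop with a step cap. This is a sketch under assumptions, not the repository's training loop. It assumes an environment object with the classic Gym interface (`reset()` returns the state, `step(action)` returns `(state, reward, done, info)`), and it treats the 400-step limit as a hard cap. The function name `run_episode` and the `policy` callable are hypothetical.

```python
def run_episode(env, policy, max_steps=400):
    """Run one episode; stop when the environment ends it or after max_steps steps."""
    state = env.reset()
    total_reward = 0.0
    steps = 0
    done = False
    while not done and steps < max_steps:
        action = policy(state)  # e.g. greedy action from the Q-network
        state, reward, done, _info = env.step(action)
        total_reward += reward
        steps += 1
    # done is True for a crash or a landing at rest, False if the step cap was hit.
    return total_reward, steps, done
```

The returned `done` flag lets the caller tell an episode that ended through a crash or a landing apart from one cut off by the length limit.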

Solved Requirement:

Average reward of 200.0 over 100 consecutive trials
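
The solved requirement above amounts to a moving average over the last 100 episode rewards. A minimal sketch of that check follows. The class name `SolvedTracker` is hypothetical, and the choice to require a full window of 100 episodes before declaring the environment solved is an assumption.

```python
from collections import deque


class SolvedTracker:
    """Track episode rewards and test the moving-average solved requirement."""

    def __init__(self, target=200.0, window=100):
        self.target = target
        self.rewards = deque(maxlen=window)  # keeps only the most recent episodes

    def add(self, episode_reward):
        """Record one episode reward and return whether the requirement is now met."""
        self.rewards.append(float(episode_reward))
        return self.is_solved()

    def average(self):
        """Mean reward over the stored window (0.0 if nothing is stored yet)."""
        return sum(self.rewards) / len(self.rewards) if self.rewards else 0.0

    def is_solved(self):
        """True once the window is full and its mean reaches the target."""
        full = len(self.rewards) == self.rewards.maxlen
        return full and self.average() >= self.target
```

Calling `tracker.add(total_reward)` at the end of every episode returns `True` at the point where a model would qualify for saving.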

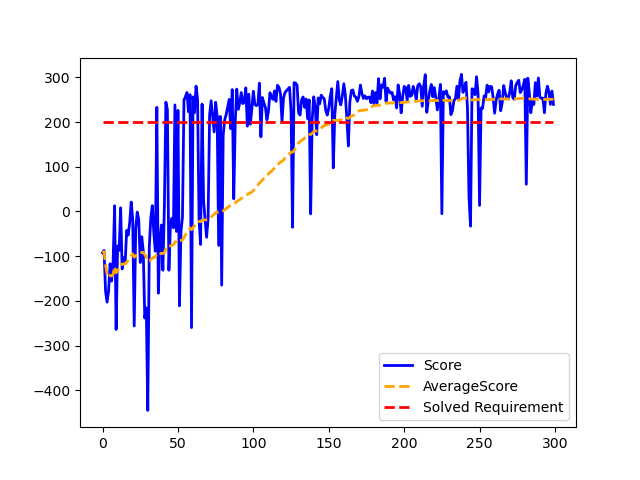

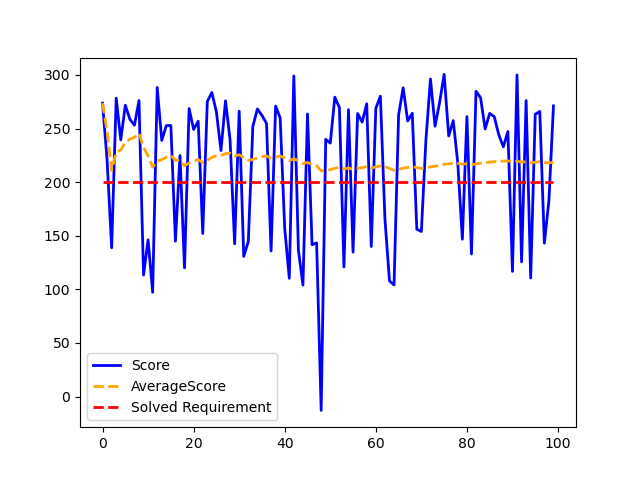

| Train | Test |
|---|---|

Network model used for testing: 'saved_networks/dqn_model104' ('tf' model, also available in .h5)

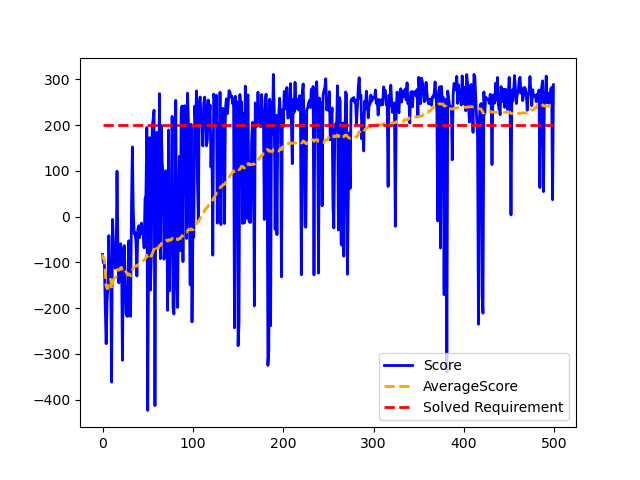

| Train | Test |
|---|---|

Network model used for testing: 'saved_networks/duelingdqn_model123' ('tf' model, also available in .h5)

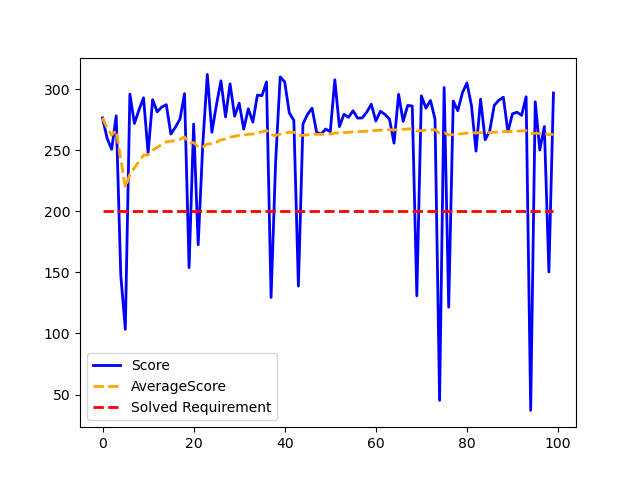

| Train | Test |
|---|---|

Network model used for testing: 'saved_networks/d3qn_model5' ('tf' model, also available in .h5)