postgresql, data engineering, ETL, start database schema, data modeling

A startup called Sparkify wants to analyze the data they've been collecting on songs and user activity on their new music streaming application. The analytics team is particularly interested in understanding what songs users are listening to. Currently, they don't have an easy way to query their data, which resides in a directory of JSON logs on user activity on the application, as well as a directory with JSON meta-data on the songs in their application.

They'd like a data engineer to create a Postgres database with tables designed to optimize queries on song play analysis. The role of this project is to create a database schema and ETL pipeline for this analysis.

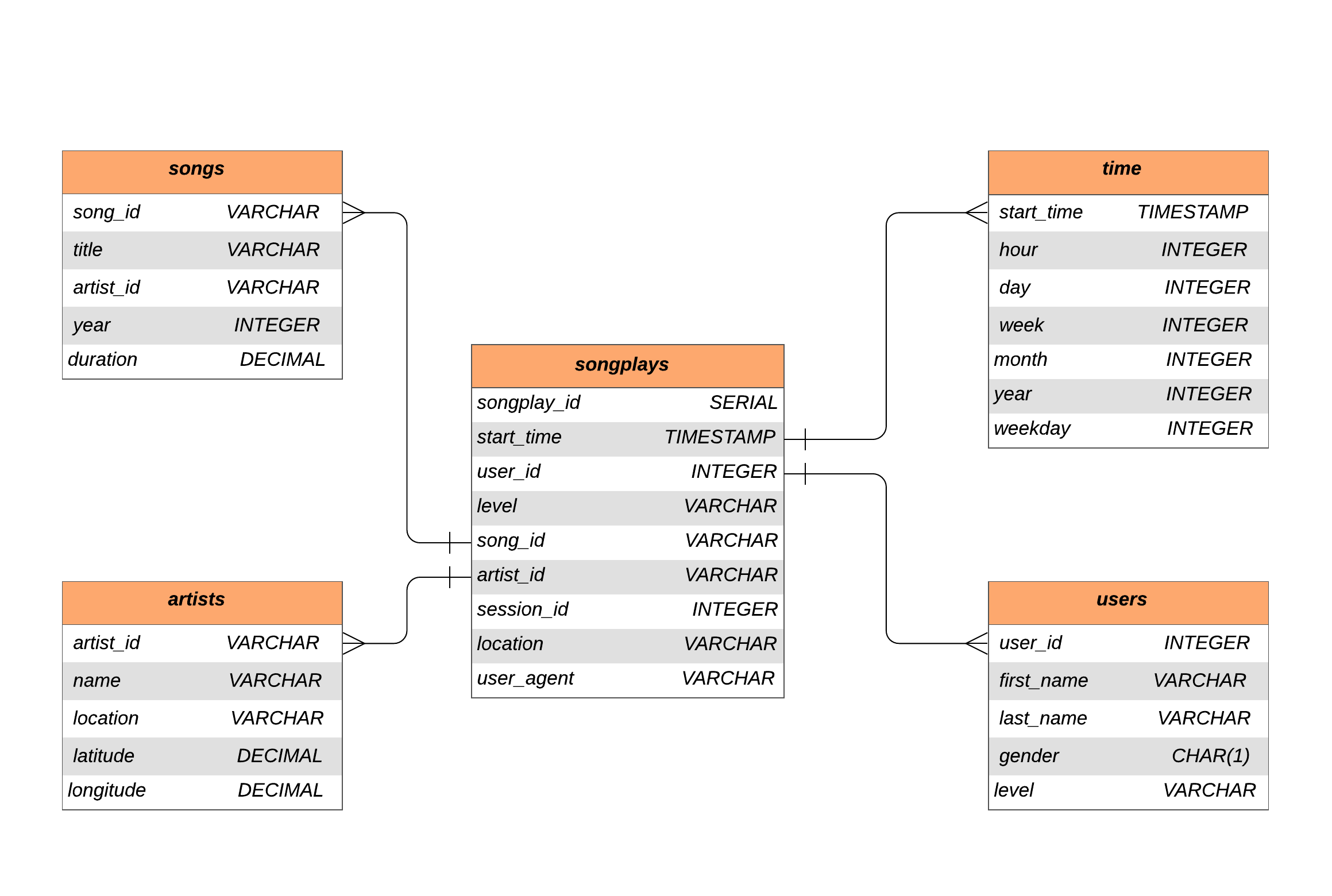

In this project, we will model the data with Postgres and build an ETL pipeline using Python. The fact and dimension tables for a star database schema for a particular analytic focus is defined, and an ETL pipeline that transfers data from files in two local directories into these tables in Postgres using Python and SQL was developed.

- python

- PostgreSQL

- iPython notebooks

You will not be able to run test.ipynb, etl.ipynb, or etl.py until you have run create_tables.py at least once to create the sparkifydb database, which these other files connect to. Always, close the connection to the database on exit.

Songs dataset is a subset of Million Song Dataset. Each file in the dataset is in JSON format and contains meta-data about a song and the artist of that song.

Sample Record :

{"num_songs": 1, "artist_id": "ARJIE2Y1187B994AB7", "artist_latitude": null, "artist_longitude": null, "artist_location": "", "artist_name": "Line Renaud", "song_id": "SOUPIRU12A6D4FA1E1", "title": "Der Kleine Dompfaff", "duration": 152.92036, "year": 0}

Logs dataset is generated by Event Simulator. These log files in JSON format simulate activity logs from a music streaming application based on specified configurations.

Sample Record :

{"artist": null, "auth": "Logged In", "firstName": "Walter", "gender": "M", "itemInSession": 0, "lastName": "Frye", "length": null, "level": "free", "location": "San Francisco-Oakland-Hayward, CA", "method": "GET","page": "Home", "registration": 1540919166796.0, "sessionId": 38, "song": null, "status": 200, "ts": 1541105830796, "userAgent": "\"Mozilla\/5.0 (Macintosh; Intel Mac OS X 10_9_4) AppleWebKit\/537.36 (KHTML, like Gecko) Chrome\/36.0.1985.143 Safari\/537.36\"", "userId": "39"}

The Star Database Schema used for data modeling in this ETL pipeline. There is one fact table containing all the metrics (facts) associated to each event (user actions), and four dimensions tables, containing associated information such as user name, artist name, song meta-data etc. This model enables to search the database schema with the minimum number of SQL JOINs possible and enable fast read queries. The amount of data we need to analyze is not big enough to require big data solutions or NoSQL databases.

An entity relationship diagram (ERD) of the data model is given below.

Files in this repository:

| File / Folder | Description |

|---|---|

| data | Folder at the root of the project, where all song and log data JSONS reside |

| images | Folder at the root of the project, where images are stored |

| sql_queries.py | Contains the SQL queries for data modeling and ETL |

| create_tables.py | Drops and creates tables. (Reset the tables) |

| test.ipynb | Exploring the database tables |

| etl.ipynb | Processes a file from song_data and log_data and loads the data into tables |

| etl.py | Processes all files from song_data and log_data and loads them into tables |

| README | Readme file |

Clone the repository into a local machine using

git clone https://github.com/AvinashBolleddula/Data-Modeling-with-PostgresThese are the prerequisites to run the program.

- python 3.8.3

- PostgreSQL

- psycopg2 python library

Follow the steps to extract and load the data into the data model.

-

Navigate to

Data Modeling with Postgresfolder -

Run

create_tables.pyto create/reset the tables bypython create_tables.py

-

Run ETL process and load data into database by

python etl.py

-

Check whether the data has been loaded into database by executing queries in

test.ipynb

Distributed under the MIT License. See LICENSE for more information.

Avinash B - avinash.bolleddula@gmail.com

Project Link: https://github.com/AvinashBolleddula/Data-Modeling-with-Postgres