- It's a `Spring Boot` microservice that processes a big file efficiently and stores it in a DB.

- The input is a big file of approximately 1 GB that we need to process and store.

- We can generate mock data from this website

- Currently we focus on a single file; later, if required, we can update the logic to support multiple files.

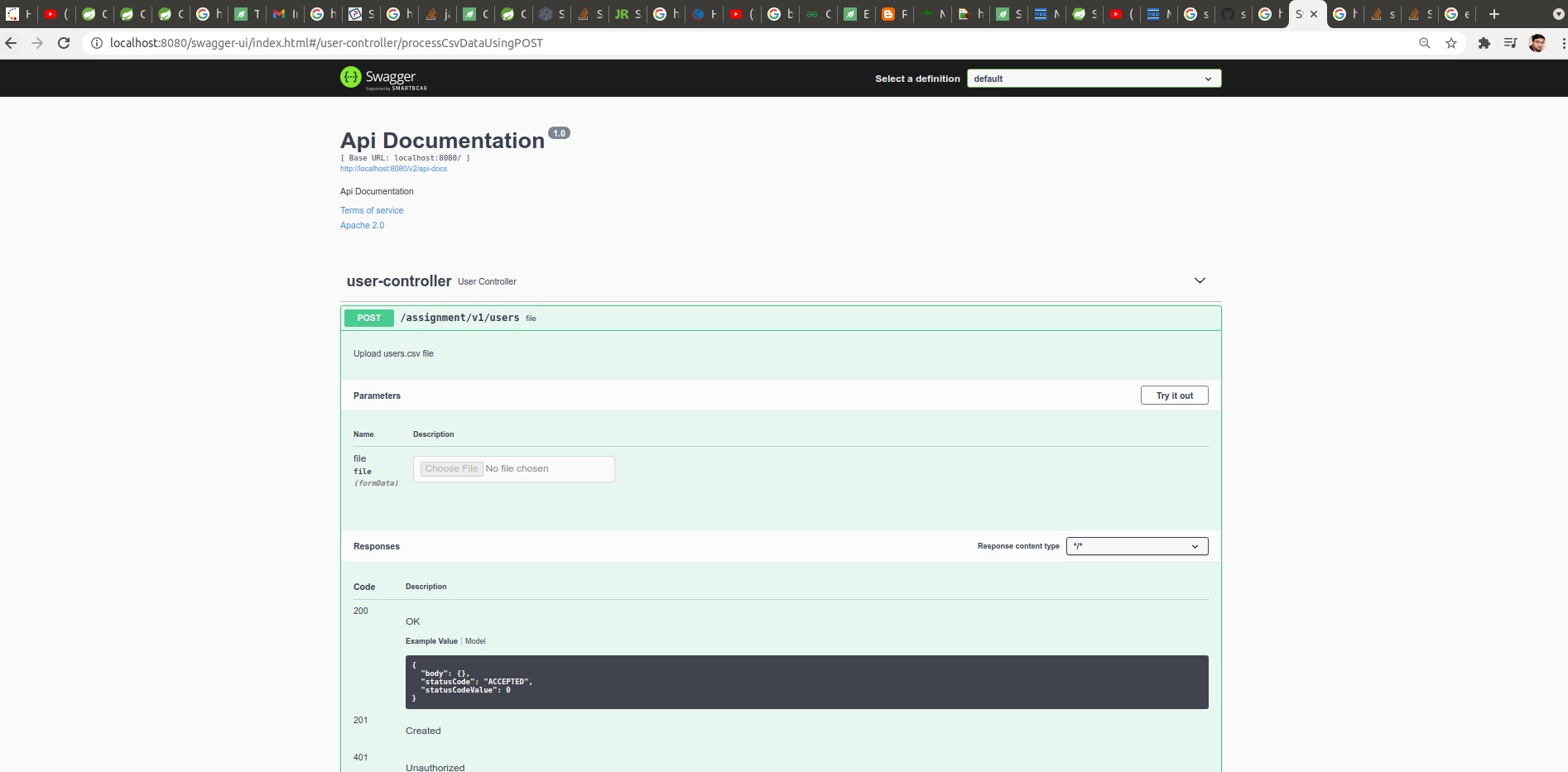

- We need to create a `Spring Boot` REST API with one endpoint that takes a file as a parameter.

- On the backend, we need to process the file via Spring Batch and store it in the DB.

- We should fine-tune Spring Batch to achieve good performance with the help of `TaskExecutor`.

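Spring Batch usually works chunk by chunk: it reads items, collects them up to a chunk size, and writes each chunk in one go. The sketch below shows that idea in plain Java only. It does not use the Spring Batch API, and `ChunkSketch`, `processInChunks`, and the chunk size of 2 are hypothetical names and values chosen for illustration.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;

/** Illustrative helper, not part of Spring Batch: groups items into fixed-size chunks. */
final class ChunkSketch {

    /** Feeds items to the writer in chunks of at most chunkSize; returns the number of chunks written. */
    static <T> int processInChunks(Iterable<T> items, int chunkSize, Consumer<List<T>> writer) {
        if (chunkSize <= 0) {
            throw new IllegalArgumentException("chunkSize must be positive");
        }
        List<T> buffer = new ArrayList<>(chunkSize);
        int chunks = 0;
        for (T item : items) {
            buffer.add(item);
            if (buffer.size() == chunkSize) {
                writer.accept(new ArrayList<>(buffer)); // hand over a copy, then reuse the buffer
                buffer.clear();
                chunks++;
            }
        }
        if (!buffer.isEmpty()) { // flush the final, partially filled chunk
            writer.accept(new ArrayList<>(buffer));
            chunks++;
        }
        return chunks;
    }

    public static void main(String[] args) {
        List<String> rows = List.of("Ali,Azhar,1", "Umaima,Azhar,5", "Muhammad,Zuhayr Azhar,3");
        int chunks = processInChunks(rows, 2,
                chunk -> System.out.println("writing " + chunk.size() + " rows"));
        System.out.println(chunks + " chunks written");
    }
}
```

In the real job the writer would be a batch insert into the DB, so a larger chunk size means fewer round trips at the cost of more memory per chunk.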
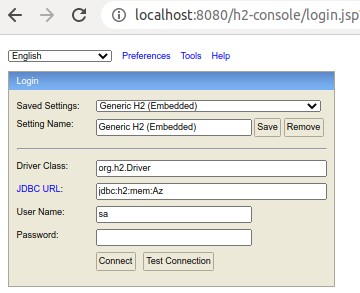

- We need to use an `H2` in-memory DB.

- We will consider `users.csv` with columns `[first_name, last_name, age]` to be uploaded.

- It's a Gradle project; simply run the command below to build and start it: `./gradlew clean build bootRun`

- Swagger is already integrated; please check this URL

| first_name | last_name | age |
|---|---|---|
| Ali | Azhar | 1 |
| Umaima | Azhar | 5 |
| Muhammad | Zuhayr Azhar | 3 |
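To make the row format concrete, here is a minimal plain-Java sketch that maps one `users.csv` line to an object. The `UserCsvSketch` and `User` names are hypothetical, and the parser assumes simple comma-separated values with no quoted fields or embedded commas; the project itself may map rows differently.

```java
import java.util.ArrayList;
import java.util.List;

/** Illustrative parser for users.csv rows; names are assumptions, not the project's classes. */
final class UserCsvSketch {

    /** One row of users.csv: first_name, last_name, age. */
    static final class User {
        final String firstName;
        final String lastName;
        final int age;

        User(String firstName, String lastName, int age) {
            this.firstName = firstName;
            this.lastName = lastName;
            this.age = age;
        }
    }

    /** Parses "first_name,last_name,age"; assumes no quoting and no commas inside values. */
    static User parseLine(String line) {
        String[] parts = line.split(",", -1); // -1 keeps trailing empty columns
        if (parts.length != 3) {
            throw new IllegalArgumentException(
                    "expected 3 columns but got " + parts.length + ": " + line);
        }
        return new User(parts[0].trim(), parts[1].trim(), Integer.parseInt(parts[2].trim()));
    }

    /** Parses all lines, skipping blank lines and the header row if present. */
    static List<User> parseAll(List<String> lines) {
        List<User> users = new ArrayList<>();
        for (String line : lines) {
            if (line.trim().isEmpty() || line.startsWith("first_name")) {
                continue;
            }
            users.add(parseLine(line));
        }
        return users;
    }

    public static void main(String[] args) {
        List<String> lines = List.of(
                "first_name,last_name,age",
                "Ali,Azhar,1",
                "Umaima,Azhar,5",
                "Muhammad,Zuhayr Azhar,3");
        for (User u : parseAll(lines)) {
            System.out.println(u.firstName + " " + u.lastName + " (" + u.age + ")");
        }
    }
}
```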

- Below are my Ubuntu machine details:

| Property | Value |
|---|---|
| Architecture | x86_64 |
| CPU op-mode(s) | 32-bit, 64-bit |
| Byte Order | Little Endian |
| CPU(s) | 12 |
| On-line CPU(s) list | 0-11 |
| Thread(s) per core | 2 |
| Core(s) per socket | 6 |
| Socket(s) | 1 |
| NUMA node(s) | 1 |
| Vendor ID | GenuineIntel |
| CPU family | 6 |
| Model | 158 |
| Model name | Intel(R) Core(TM) i7-8700 CPU @ 3.20GHz |
| Stepping | 10 |

- As shown above, my machine has 6 cores with 2 threads per core, so it can run 12 threads at once.

- So I have sized the `TaskExecutor` to match my machine.

```java
@Bean
public TaskExecutor taskExecutor() {
    ThreadPoolTaskExecutor executor = new ThreadPoolTaskExecutor();
    // 12 threads = 6 cores x 2 threads per core on the machine above
    executor.setCorePoolSize(12);
    executor.setMaxPoolSize(12);
    // Tasks wait in this queue while all 12 threads are busy
    executor.setQueueCapacity(200);
    executor.setThreadNamePrefix("userThread-");
    executor.initialize();
    return executor;
}
```
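The pool size of 12 comes from the CPU details: 1 socket x 6 cores x 2 threads per core. The plain-Java sketch below reproduces that calculation and runs chunks on a fixed-size pool from `java.util.concurrent`, which is a rough stand-in for what the `TaskExecutor` does for the batch step. `PoolSizingSketch` and its methods are hypothetical names, and counting rows is only a placeholder for the real per-chunk work.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

/** Illustrative only: sizes a fixed thread pool from CPU topology and fans chunks out to it. */
final class PoolSizingSketch {

    /** Logical CPUs = sockets x cores per socket x threads per core. */
    static int logicalCpus(int sockets, int coresPerSocket, int threadsPerCore) {
        return sockets * coresPerSocket * threadsPerCore;
    }

    /** Processes each chunk on the pool (here: counts its rows) and returns the total. */
    static long countRowsInParallel(List<List<String>> chunks, int poolSize) {
        ExecutorService pool = Executors.newFixedThreadPool(poolSize);
        try {
            List<Future<Integer>> results = new ArrayList<>();
            for (List<String> chunk : chunks) {
                Callable<Integer> task = chunk::size; // placeholder for real chunk processing
                results.add(pool.submit(task));
            }
            long total = 0;
            for (Future<Integer> result : results) {
                total += result.get(); // blocks until that chunk is done
            }
            return total;
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting for chunks", e);
        } catch (ExecutionException e) {
            throw new IllegalStateException("chunk processing failed", e.getCause());
        } finally {
            pool.shutdown();
        }
    }

    public static void main(String[] args) {
        int fromTable = logicalCpus(1, 6, 2);
        int fromJvm = Runtime.getRuntime().availableProcessors(); // what the JVM sees on this host
        System.out.println("from table: " + fromTable + ", from JVM: " + fromJvm);

        List<List<String>> chunks = List.of(
                List.of("Ali,Azhar,1", "Umaima,Azhar,5"),
                List.of("Muhammad,Zuhayr Azhar,3"));
        System.out.println("rows processed: " + countRowsInParallel(chunks, fromTable));
    }
}
```

Reading the count from `Runtime.getRuntime().availableProcessors()` instead of hard-coding 12 would let the same configuration adapt to other machines, though the best pool size also depends on how much time the job spends waiting on the DB.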

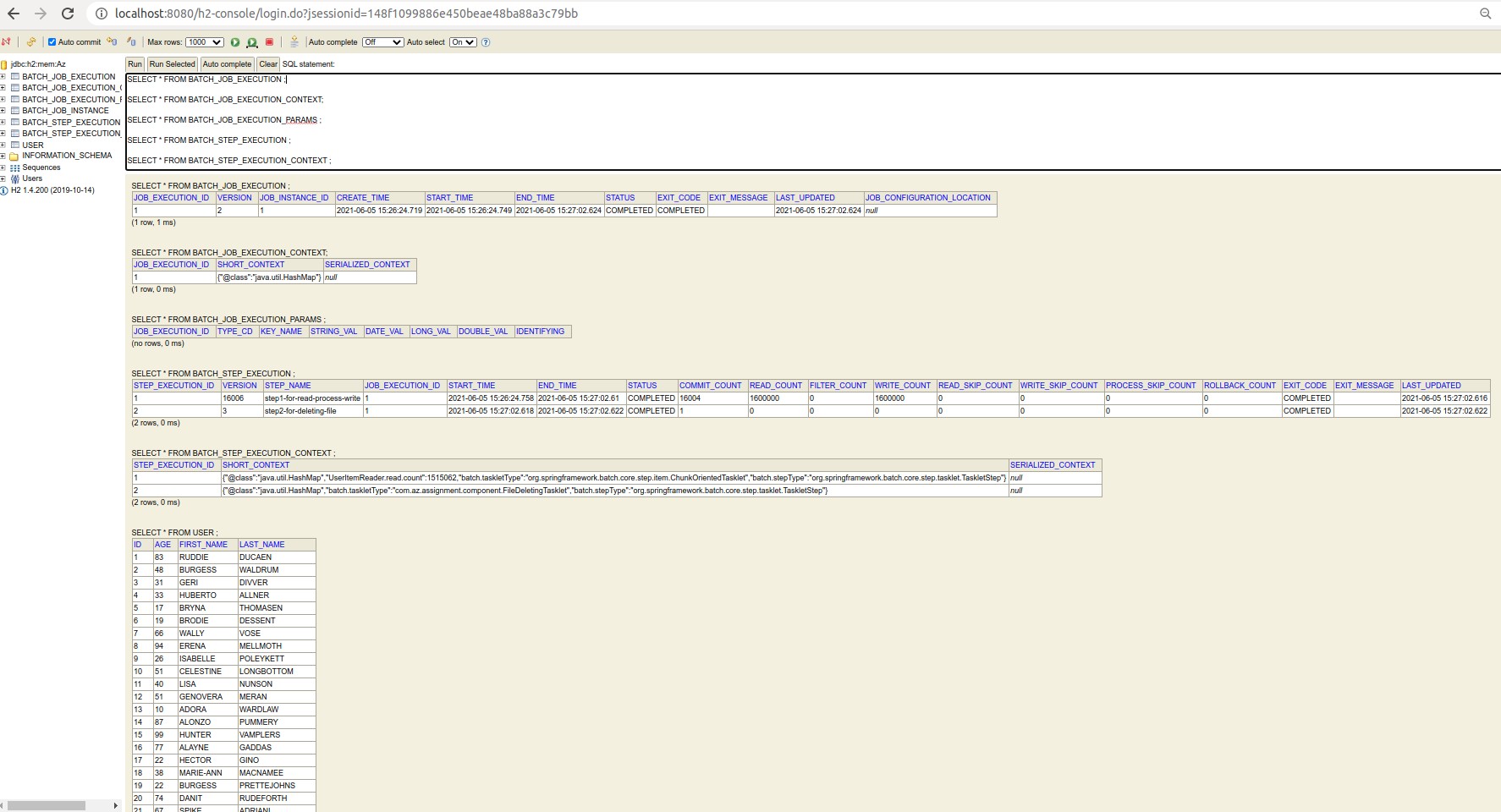

- We can also cross-verify the data in the DB.

- Please have a look at this URL

- Spring Batch creates extra tables to record job performance and execution details

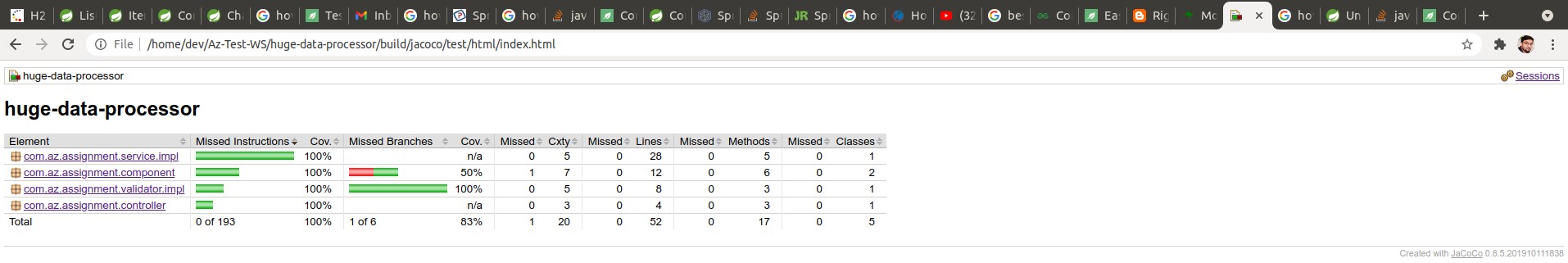

- For the unit test coverage report, check `huge-data-processor/build/jacoco/test/html/index.html` after the project build.

- A file of 16 lakh (1.6 million) records, 28 MB in size, was processed in 18 s.

- The current `csv` file has only three columns; with more columns, a file of 16 lakh records would be around 400 MB.

- I believe we can process 1 GB of data.
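As a rough back-of-the-envelope check, the measured run (1.6 million records, 28 MB, 18 s) works out to roughly 89,000 records per second. The sketch below also extrapolates to 1 GB, assuming 1 GB = 1024 MB and that processing time grows linearly with file size. That linearity is an assumption, not something measured here, and `ThroughputSketch` is a hypothetical name.

```java
/** Illustrative arithmetic only; the linear extrapolation is an assumption, not a measurement. */
final class ThroughputSketch {

    /** Records processed per second for a measured run. */
    static double recordsPerSecond(long records, double seconds) {
        return records / seconds;
    }

    /** Estimated seconds for targetMb, scaling the measured run linearly by file size. */
    static double estimatedSeconds(double targetMb, double measuredMb, double measuredSeconds) {
        return targetMb / measuredMb * measuredSeconds;
    }

    public static void main(String[] args) {
        long records = 1_600_000L;   // 16 lakh records
        double measuredMb = 28.0;    // measured file size
        double measuredSeconds = 18.0;

        System.out.printf("records/s: %.0f%n", recordsPerSecond(records, measuredSeconds));
        System.out.printf("MB/s: %.2f%n", measuredMb / measuredSeconds);
        System.out.printf("estimated seconds for 1 GB: %.0f%n",
                estimatedSeconds(1024.0, measuredMb, measuredSeconds));
    }
}
```

Under these assumptions a 1 GB file of the same shape would take about 11 minutes; wider rows or DB contention could change that noticeably.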

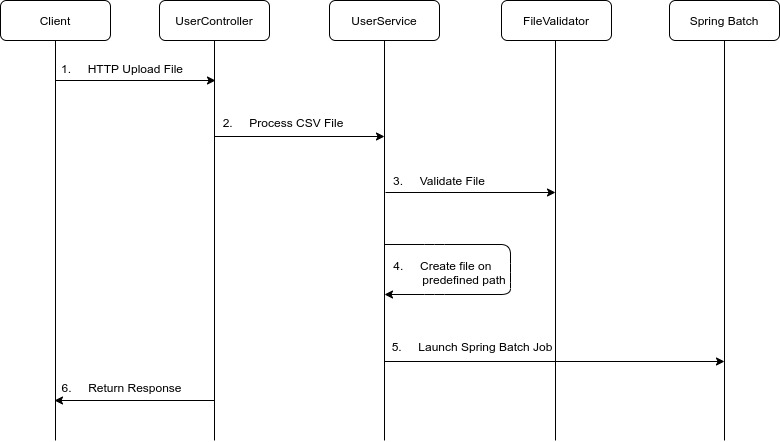

- Data process sequence diagram:

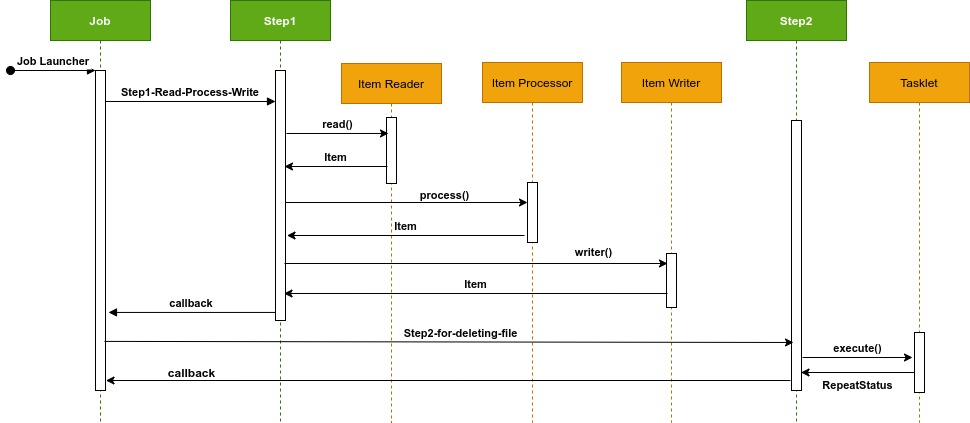

- Spring Batch Job sequence diagram: