Accelerating a model, deploying it to a suitable platform, and tuning deployment parameters so that compute resources are used efficiently and cost is reduced while meeting the desired performance SLA (e.g. latency, throughput) is vital for running machine learning services in production. This recipe aims to provide a one-stop experience for executing the complete process, from optimization to profiling, on Azure Machine Learning.

Azure Machine Learning Model Optimizer (preview) provides a fully managed experience that makes it easy to benchmark your model performance:

- Use the benchmarking tool of your choice.
- Easy-to-use CLI experience.
- Support for CI/CD MLOps pipelines to automate profiling.
- Thorough performance report containing latency percentiles and resource utilization metrics.

The Azure Machine Learning Model Optimizer currently consists of the following 6 tools:

- aml-olive-optimizer: An optimizer based on "Olive". "Olive" is a hardware-aware model optimization tool that composes industry-leading techniques across model compression, optimization, and compilation. For detailed info please refer to this link. IMPORTANT: OpenVINO-related engines are not supported because of an FFmpeg package vulnerability.
- aml-wrk-profiler: A profiler based on "wrk". "wrk" is a modern HTTP benchmarking tool capable of generating significant load when run on a single multi-core CPU. It combines a multithreaded design with scalable event notification systems such as epoll and kqueue. For detailed info please refer to this link.
- aml-wrk2-profiler: A profiler based on "wrk2". "wrk2" is "wrk" modified to produce a constant throughput load, and accurate latency details to the high 9s (i.e. can produce accuracy 99.9999% if run long enough). In addition to wrk's arguments, wrk2 takes a throughput argument (in total requests per second) via either the --rate or -R parameters (default is 1000). For detailed info please refer to this link.
- aml-labench-profiler: A profiler based on "LaBench". "LaBench" (for LAtency BENCHmark) is a tool that measures latency percentiles of HTTP GET or POST requests under very even and steady load. For detailed info please refer to this link.
- aml-online-endpoints-deployer: A deployer that deploys models as azureml online-endpoints. The deployer uses az cli and the ml extension for the deployment job. For detailed info regarding using az cli for deploying online-endpoints, please refer to this link.
- aml-online-endpoints-deleter: A deleter that deletes azureml online-endpoints and online-deployments. The deleter also uses az cli and the ml extension for the deletion job. For detailed info regarding using az cli for deleting online-endpoints, please refer to this link.

- Azure subscription. If you don't have an Azure subscription, sign up to try the free or paid version of Azure Machine Learning today.
- Azure CLI and ML extension. For more information, see Install, set up, and use the CLI (v2) (preview).

Please follow this example to get started with the Azure Machine Learning Model Optimizer experience.

You will need a compute to host the optimizer, run the optimization program and generate the final reports. We suggest using the same SKU type that you intend to deploy your model with.

az ml compute create --name $OPTIMIZER_COMPUTE_NAME --size $INFERENCE_SERVICE_COMPUTE_SIZE --identity-type SystemAssigned --type amlcompute

We provide two published optimizer images that wrap Olive: mcr.microsoft.com/azureml/aml-olive-optimizer:latest and mcr.microsoft.com/azureml/aml-olive-optimizer-gpu:latest.

Prepare an optimization configuration JSON file (required) and, as needed, a model folder and/or a code folder. Below is a sample configuration file. For detailed configuration definitions, please refer to Olive Optimizer Configuration. We also prepared some job templates that show how to create a job with a published image.

{
  "input_model": {
    "type": "PyTorchModel",
    "config": {
      "hf_config": {
        "model_name": "Intel/bert-base-uncased-mrpc",
        "task": "text-classification",
        "dataset": {
          "data_name": "glue",
          "subset": "mrpc",
          "split": "validation",
          "input_cols": ["sentence1", "sentence2"],
          "label_cols": ["label"],
          "batch_size": 1
        }
      },
      "io_config": {
        "input_names": ["input_ids", "attention_mask", "token_type_ids"],
        "input_shapes": [[1, 128], [1, 128], [1, 128]],
        "input_types": ["int64", "int64", "int64"],
        "output_names": ["output"],
        "dynamic_axes": {
          "input_ids": {"0": "batch_size", "1": "seq_length"},
          "attention_mask": {"0": "batch_size", "1": "seq_length"},
          "token_type_ids": {"0": "batch_size", "1": "seq_length"}
        }
      }
    }
  },
  "systems": {
    "local_system": {
      "type": "LocalSystem",
      "config": {
        "accelerators": ["CPU"]
      }
    }
  },
  "evaluators": {
    "common_evaluator": {
      "metrics": [
        {
          "name": "accuracy",
          "type": "accuracy",
          "sub_types": [
            {"name": "accuracy_score", "priority": 1, "goal": {"type": "max-degradation", "value": 0.01}}
          ]
        },
        {
          "name": "latency",
          "type": "latency",
          "sub_types": [
            {"name": "avg", "priority": 2, "goal": {"type": "percent-min-improvement", "value": 20}}
          ]
        }
      ]
    }
  },
  "passes": {
    "conversion": {
      "type": "OnnxConversion",
      "config": {
        "target_opset": 13
      }
    },
    "transformers_optimization": {
      "type": "OrtTransformersOptimization",
      "config": {
        "model_type": "bert",
        "num_heads": 12,
        "hidden_size": 768,
        "float16": false
      }
    },
    "perf_tuning": {
      "type": "OrtPerfTuning",
      "config": {
        "input_names": ["input_ids", "attention_mask", "token_type_ids"],
        "input_shapes": [[1, 128], [1, 128], [1, 128]],
        "input_types": ["int64", "int64", "int64"]
      }
    }
  },
  "engine": {
    "log_severity_level": 0,
    "search_strategy": {
      "execution_order": "joint",
      "search_algorithm": "tpe",
      "search_algorithm_config": {
        "num_samples": 3,
        "seed": 0
      }
    },
    "evaluator": "common_evaluator",
    "host": "local_system",
    "target": "local_system",
    "execution_providers": ["CPUExecutionProvider"],
    "clean_cache": true,
    "cache_dir": "cache"
  }
}

The optimization job uses the Olive configuration directly, with the following limitations:

- Systems Information: only LocalSystem is supported for now. If the config includes a system of type AzureML and/or Docker, the job will fail.
- Engine Information:
  - search_strategy: output_model_num is set to 1 if the config does not include it. If you want more than one best candidate model, set output_model_num in advance.
  - packaging_config: set to the default packaging config shown here; overriding it is not supported.
    "packaging_config": { "type": "Zipfile", "name": "OutputModels" },
  - plot_pareto_frontier: set to true, which means the Pareto frontier of the search results will be plotted.
  - output_dir: set to the job's default artifact output folder ./outputs. All default output files are put here. We suggest setting the outputs optimized_parameters and optimized_model in the job template.
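The limitations listed here can be checked locally before a job is submitted. The following is only a minimal sketch that mirrors the documented behavior; it is not the optimizer's actual code, and the helper name `apply_optimizer_limits` is hypothetical.

```python
import copy

# Value the optimizer is documented to force for packaging_config.
FORCED_PACKAGING = {"type": "Zipfile", "name": "OutputModels"}


def apply_optimizer_limits(config: dict) -> dict:
    """Return a copy of an Olive config adjusted per the documented limitations.

    Hypothetical helper for a local pre-flight check; the real job applies
    its own logic on the service side.
    """
    cfg = copy.deepcopy(config)

    # Only LocalSystem is supported; AzureML and Docker systems make the job fail.
    for name, system in cfg.get("systems", {}).items():
        if system.get("type") != "LocalSystem":
            raise ValueError(
                f"system '{name}' has unsupported type {system.get('type')!r}"
            )

    engine = cfg.setdefault("engine", {})

    # output_model_num defaults to 1 when the search strategy does not set it.
    strategy = engine.get("search_strategy")
    if isinstance(strategy, dict):
        strategy.setdefault("output_model_num", 1)

    # These three settings are always overridden by the job.
    engine["packaging_config"] = dict(FORCED_PACKAGING)
    engine["plot_pareto_frontier"] = True
    engine["output_dir"] = "./outputs"
    return cfg
```

Running such a check on the JSON file before `az ml job create` surfaces an unsupported system type early instead of after the job has been scheduled.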

Below is a template yaml file that defines an Olive optimization job. For detailed info regarding how to construct a command job yaml file, see AzureML Job Yaml Schema.

Template1:

$schema: https://azuremlschemas.azureedge.net/latest/commandJob.schema.json
command: >
  python -m aml_olive_optimizer
  --config_path ${{inputs.config}}
  --code ${{inputs.code_path}}
  --model_path ${{inputs.model}}
  --optimized_parameters_path ${{outputs.optimized_parameters}}
  --optimized_model_path ${{outputs.optimized_model}}
experiment_name: optimization-demo-job
name: $OPTIMIZER_JOB_NAME
tags:
  optimizationTool: olive
environment:
  # For acceleration on CPU:
  image: mcr.microsoft.com/azureml/aml-olive-optimizer:20230620.v1
  # For acceleration on GPU:
  # image: mcr.microsoft.com/azureml/aml-olive-optimizer-gpu:20230620.v1
compute: azureml:$OPTIMIZER_COMPUTE_NAME
inputs:
  config:
    type: uri_file
    path: ../resnet/resnet.json
  model:
    type: uri_folder
    path: ../resnet/models
  code_path:
    type: uri_folder
    path: ../resnet
outputs:
  optimized_parameters:
    type: uri_folder
  optimized_model:
    type: uri_folder

HINT: config_path and model_path are optional input parameters. You can use either or both of them.

Template2:

$schema: https://azuremlschemas.azureedge.net/latest/commandJob.schema.json
command: >
  python -m aml_olive_optimizer
  --config_path ${{inputs.config}}
  --code ${{inputs.code_path}}
  --optimized_parameters_path ${{outputs.optimized_parameters}}
  --optimized_model_path ${{outputs.optimized_model}}
experiment_name: optimization-demo-job
name: $OPTIMIZER_JOB_NAME
tags:
  optimizationTool: olive
environment:
  # For acceleration on CPU:
  image: mcr.microsoft.com/azureml/aml-olive-optimizer:20230620.v1
  # For acceleration on GPU:
  # image: mcr.microsoft.com/azureml/aml-olive-optimizer-gpu:20230620.v1
compute: azureml:$OPTIMIZER_COMPUTE_NAME
inputs:
  config:
    type: uri_file
    path: ../bert/bert.json
  code_path:
    type: uri_folder
    path: ../bert/code
outputs:
  optimized_parameters:
    type: uri_folder
  optimized_model:
    type: uri_folder

You may create this Olive optimizer job with the following command:

az ml job create --name $OPTIMIZER_JOB_NAME --file optimizer_job.yml

The Olive optimizer job generates the following output files in the job's default artifact output folder ./outputs:

- *_footprints.json: A dictionary of all the footprints generated during the optimization process.
- *_pareto_frontier_footprints.json: A dictionary of the footprints that are on the Pareto frontier, based on the metric goals you set in evaluators.metrics in the config.
- *_pareto_frontier_footprints_footprints_chart.html: The Pareto frontier points dumped to HTML.
- OutputModels.zip: A ZIP file that includes 3 folders: CandidateModels, SampleCode and ONNXRuntimePackages. For details, see Packaging Olive artifacts.
- OutputModels: The decompressed ZIP package.
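After downloading the job outputs, it can help to sort the files by kind before reading them. The sketch below only matches on the file-name patterns listed for the optimizer outputs; the function name, the returned labels, and the `_chart.html` suffix shortcut are assumptions made for illustration.

```python
from pathlib import PurePosixPath

# Suffix -> label, most specific suffix first so it wins over "_footprints.json".
# The labels are hypothetical names chosen for this sketch.
OUTPUT_KINDS = [
    ("_pareto_frontier_footprints.json", "pareto_frontier_footprints"),
    ("_footprints.json", "all_footprints"),
    ("_chart.html", "pareto_frontier_chart"),
]


def classify_output(path: str) -> str:
    """Classify one optimizer output file by its name."""
    name = PurePosixPath(path).name
    if name == "OutputModels.zip":
        return "packaged_models"
    for suffix, kind in OUTPUT_KINDS:
        if name.endswith(suffix):
            return kind
    return "other"
```

For example, a file named `outputs/run_pareto_frontier_footprints.json` (the `run` prefix is a placeholder) is labeled `pareto_frontier_footprints`, while `outputs/run_footprints.json` is labeled `all_footprints`.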

You may download the optimized_parameters file and optimized_model with the following command, they can be used as inputs of the online-endpoints deployer job.

az ml job download --name $OPTIMIZER_JOB_NAME --output-name optimized_parameters --download-path $OPTIMIZER_DOWNLOAD_FOLDER

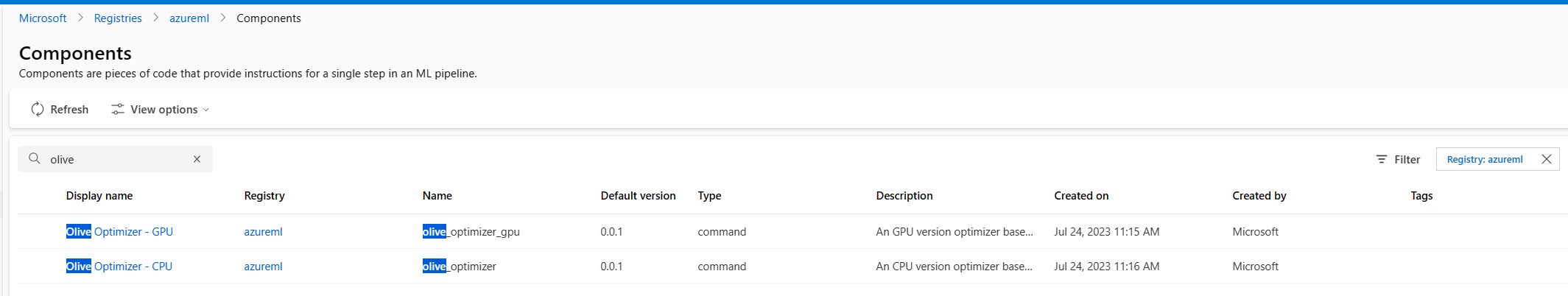

az ml job download --name $OPTIMIZER_JOB_NAME --output-name optimized_model --download-path $OPTIMIZER_DOWNLOAD_FOLDERWe also onboard olive components and user can use these components easily.

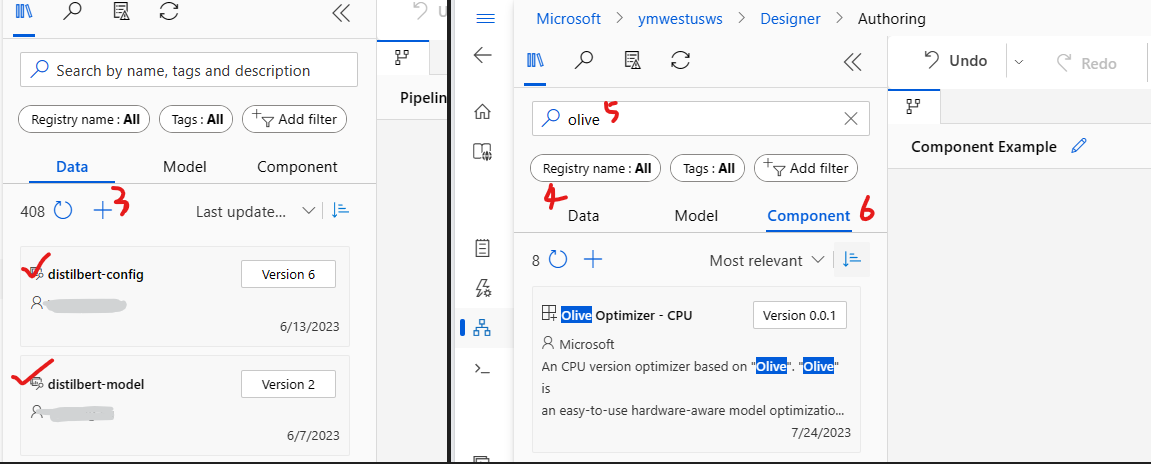

User can use this pre-defined and published components via UX and the input settings are same with Option1.

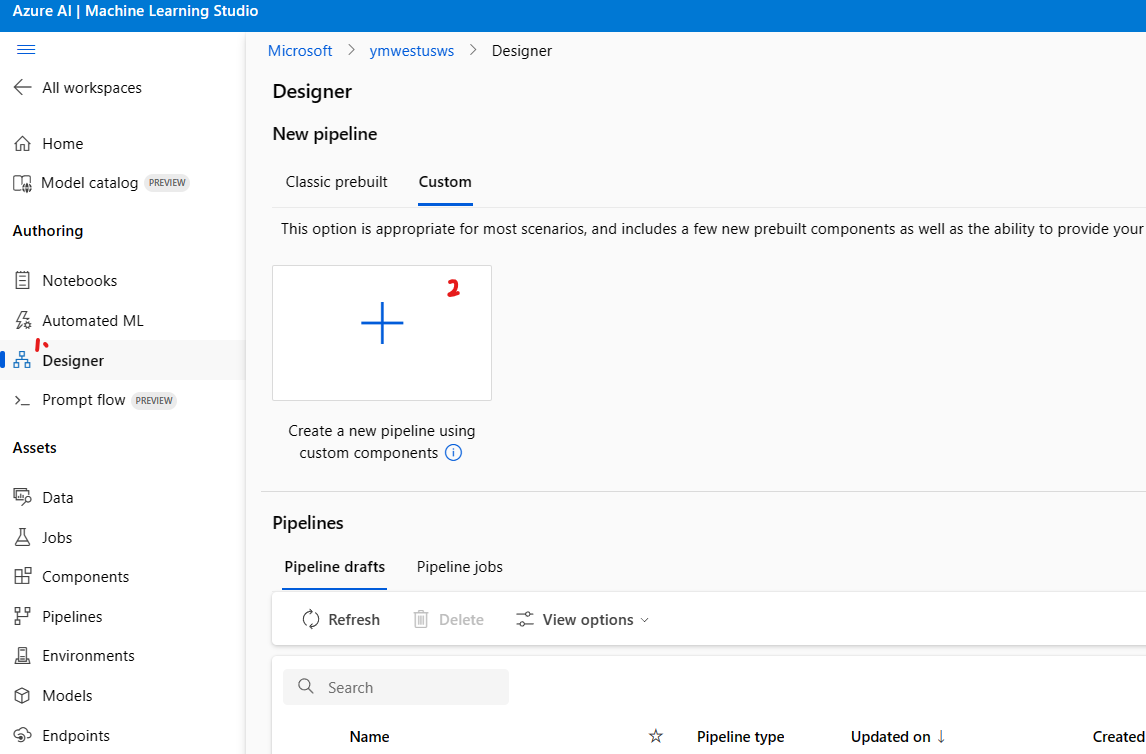

- Launch Azure Machine Learning Studio of your workspace

- Click

DesignerandCustombutton - Choose

Create a new pipeline using custom components - Click

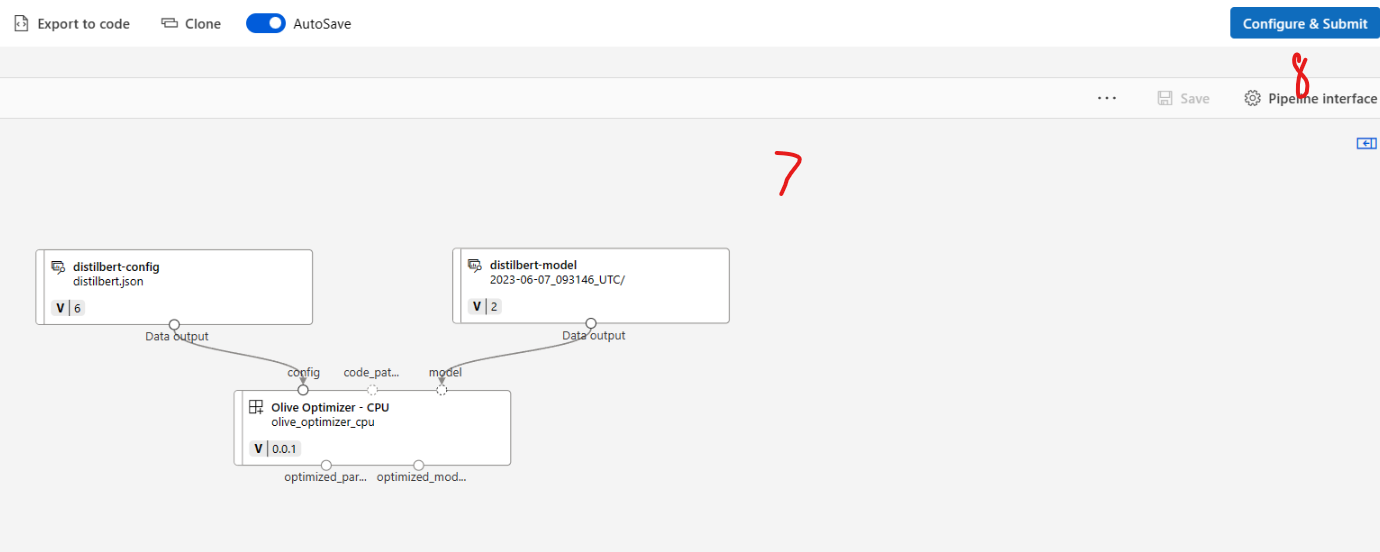

Datato register inputs - As example, We registered olive configuration and model folder as inputs to optimize distilbert model

- Search

oliveand clickComponent - You can use published components either

Olive Optimizer - CPUorOlive Optimizer - GPUwhich were created by Microsoft - Drag the inputs and components and construct all modules

- Click

Configure&Submitto choose the compute, input settings, output settings etc - Then you can run the pipeline job and get the result, you can also use the optimizer outputs in following steps such as profiling/deploy

- You will need a compute to host the deployer, run the online-endpoints deployment program and generate final reports. You may choose any SKU type for this job.

  az ml compute create --name $DEPLOYER_COMPUTE_NAME --size $DEPLOYER_COMPUTE_SIZE --identity-type SystemAssigned --type amlcompute

- Create the proper role assignment for accessing online endpoint resources. The compute needs to have the Contributor role on the machine learning workspace. For more information, see Assign Azure roles using Azure CLI.

  compute_info=`az ml compute show --name $DEPLOYER_COMPUTE_NAME --query '{"id": id, "identity_object_id": identity.principal_id}' -o json`
  workspace_resource_id=`echo $compute_info | jq -r '.id' | sed 's/\(.*\)\/computes\/.*/\1/'`
  identity_object_id=`echo $compute_info | jq -r '.identity_object_id'`
  az role assignment create --role Contributor --assignee-object-id $identity_object_id --assignee-principal-type ServicePrincipal --scope $workspace_resource_id
  if [[ $? -ne 0 ]]; then echo "Failed to create role assignment for compute $DEPLOYER_COMPUTE_NAME" && exit 1; fi
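The `sed` expression in the role-assignment commands derives the workspace resource ID by cutting everything from `/computes/` onward off the compute resource ID. As a rough illustration of that one step, here is the same transformation in Python; the function name is hypothetical and the sample ID used afterwards contains placeholder values only.

```python
import re


def workspace_id_from_compute_id(compute_id: str) -> str:
    """Return the workspace resource ID that contains the given compute.

    Mirrors the sed substitution used in the shell commands: a greedy match
    keeps everything before the last '/computes/' segment. Like sed, the
    input is returned unchanged when the pattern does not match.
    """
    match = re.match(r"(.*)/computes/.*", compute_id)
    return match.group(1) if match else compute_id
```

Given `/subscriptions/<sub>/resourceGroups/my-rg/providers/Microsoft.MachineLearningServices/workspaces/my-ws/computes/my-compute`, the function returns the same string up to and including `workspaces/my-ws`, which is the scope passed to `az role assignment create`.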

Prepare an online-endpoint configuration yaml file. Below is a sample configuration file. For detailed info regarding how to construct an online-endpoint yaml file, see AzureML Online-Endpoint Yaml Schema.

$schema: https://azuremlschemas.azureedge.net/latest/managedOnlineEndpoint.schema.json
name: $ENDPOINT_NAME
auth_mode: key

Prepare an online-deployment configuration yaml file. Below is a sample configuration file. For detailed info regarding how to construct an online-deployment yaml file, see AzureML Online-Deployment Yaml Schema.

$schema: https://azuremlschemas.azureedge.net/latest/managedOnlineDeployment.schema.json
name: $DEPLOYMENT_NAME
endpoint_name: $ENDPOINT_NAME
model:
  name: optimized-distilbert-model
  version: 1
  path: <% MODEL_FOLDER_PATH %>
code_configuration:
  code: <% CODE_FOLDER_PATH %>
  scoring_script: score.py
environment:
  conda_file: <% ENVIRONMENT_FOLDER_PATH %>/conda.yml
  image: mcr.microsoft.com/azureml/openmpi4.1.0-ubuntu20.04:latest
instance_type: $INFERENCE_SERVICE_COMPUTE_SIZE
instance_count: 1

Below is a sample yaml file that defines an online-endpoints deployer job. For detailed info regarding how to construct a command job yaml file, see AzureML Job Yaml Schema.

$schema: https://azuremlschemas.azureedge.net/latest/commandJob.schema.json
command:
  python -m aml_online_endpoints_deployer --endpoint_yaml_path ${{inputs.endpoint}} --deployment_yaml_path ${{inputs.deployment}} --model_folder_path ${{inputs.model}} --environment_folder_path ${{inputs.environment}} --code_folder_path ${{inputs.code}} --optimized_parameters_path ${{inputs.optimized_parameters}}
experiment_name: deployment-demo-jobs
environment:
  image: mcr.microsoft.com/azureml/aml-online-endpoints-deployer:20230306.1
name: $DEPLOYER_JOB_NAME
tags:
  endpoint: $ENDPOINT_NAME
  deployment: $DEPLOYMENT_NAME
compute: azureml:$DEPLOYER_COMPUTE_NAME
inputs:
  endpoint:
    type: uri_file
    path: endpoint.yml
  deployment:
    type: uri_file
    path: deployment.yml
  model:
    type: uri_folder
    path: ../distilbert_model/optimized_model
  environment:
    type: uri_folder
    path: ../distilbert_model/environment
  code:
    type: uri_folder
    path: ../distilbert_model/code
  optimized_parameters:
    type: uri_file
    path: optimized_parameters.json

You may create this online-endpoints deployer job with the following command:

az ml job create --name $DEPLOYER_JOB_NAME --file deployer_job.yml

The online-endpoints deployer job will generate 1 output file.

deployment_settings.json: This file contains the detailed online-deployment information.

You may download the deployment_settings.json file with the following command. This file can later be used as an input of the profiler job.

az ml job download --name $DEPLOYER_JOB_NAME --all --download-path $DEPLOYER_DOWNLOAD_FOLDER

- You will need a compute to host the profiler, run the profiling program and generate final reports. Please choose a compute SKU with proper network bandwidth (considering the inference request payload size and profiling traffic, we'd recommend Standard_F4s_v2) in the same region as the online endpoint or your model inference service.

  az ml compute create --name $PROFILER_COMPUTE_NAME --size $PROFILER_COMPUTE_SIZE --identity-type SystemAssigned --type amlcompute

- Create the proper role assignment for accessing online endpoint resources. The compute needs to have the Contributor role on the machine learning workspace. For more information, see Assign Azure roles using Azure CLI.

  compute_info=`az ml compute show --name $PROFILER_COMPUTE_NAME --query '{"id": id, "identity_object_id": identity.principal_id}' -o json`
  workspace_resource_id=`echo $compute_info | jq -r '.id' | sed 's/\(.*\)\/computes\/.*/\1/'`
  identity_object_id=`echo $compute_info | jq -r '.identity_object_id'`
  az role assignment create --role Contributor --assignee-object-id $identity_object_id --assignee-principal-type ServicePrincipal --scope $workspace_resource_id
  if [[ $? -ne 0 ]]; then echo "Failed to create role assignment for compute $PROFILER_COMPUTE_NAME" && exit 1; fi

Prepare a profiling configuration json file. Below is a sample configuration file. For detailed info about profiler job configs, see AzureML Wrk Profiler Configs, AzureML Wrk2 Profiler Configs and AzureML Labench Profiler Configs.

{
  "version": 1.0,
  "profiler_config": {
    "duration_sec": 300,
    "connections": 1
  }
}

Prepare a scoring target configuration json file. You may use the deployment_settings.json file from the deployer job outputs. Below is a sample configuration file. For detailed info about scoring target configurations, please refer to Scoring target configuration.

{
  "version": "1.0",
  "deployment_settings": {
    "subscription_id": "636d700c-4412-48fa-84be-452ac03d34a1",
    "resource_group": "model-profiler",
    "workspace_name": "profilervalidation",
    "endpoint_name": "distilbert-endpt",
    "deployment_name": "distilbert-dep",
    "sku": "Standard_F8s_v2",
    "location": "eastus",
    "instance_count": 1,
    "worker_count": 1,
    "max_concurrent_requests_per_instance": 1
  }
}

Below is a sample yaml file that defines a wrk profiling job. For detailed info regarding how to construct a command job yaml file, see AzureML Job Yaml Schema.

$schema: https://azuremlschemas.azureedge.net/latest/commandJob.schema.json
command: >
  python -m aml_wrk_profiler --config_path ${{inputs.config}} --scoring_target_path ${{inputs.scoring_target}} --payload_path ${{inputs.payload}}
experiment_name: profiling-demo-jobs
name: $PROFILER_JOB_NAME
environment:
  image: mcr.microsoft.com/azureml/aml-wrk-profiler:20230303.2
tags:
  deployment: $DEPLOYMENT_NAME
compute: azureml:$PROFILER_COMPUTE_NAME
inputs:
  config:
    type: uri_file
    path: config.json
  scoring_target:
    type: uri_file
    path: deployment_settings.json
  payload:
    type: uri_file
    path: payload.jsonl

You may create this profiling job with the following command:

az ml job create --file profiler_job.yml

The profiler job will generate 1 output file.

report.json: This file contains detailed profiling results.

You may download the report.json file with the following command.

az ml job download --name $PROFILER_JOB_NAME --all --download-path $PROFILER_DOWNLOAD_FOLDER

- You will need a compute to host the deleter, run the online-endpoints deletion program and generate final reports. You may choose any SKU type for this job, and you may also choose to reuse the compute for the online-endpoints deployer job.

  az ml compute create --name $DELETER_COMPUTE_NAME --size $DELETER_COMPUTE_SIZE --identity-type SystemAssigned --type amlcompute

- Create the proper role assignment for accessing online endpoint resources. The compute needs to have the Contributor role on the machine learning workspace. For more information, see Assign Azure roles using Azure CLI.

  compute_info=`az ml compute show --name $DELETER_COMPUTE_NAME --query '{"id": id, "identity_object_id": identity.principal_id}' -o json`
  workspace_resource_id=`echo $compute_info | jq -r '.id' | sed 's/\(.*\)\/computes\/.*/\1/'`
  identity_object_id=`echo $compute_info | jq -r '.identity_object_id'`
  az role assignment create --role Contributor --assignee-object-id $identity_object_id --assignee-principal-type ServicePrincipal --scope $workspace_resource_id
  if [[ $? -ne 0 ]]; then echo "Failed to create role assignment for compute $DELETER_COMPUTE_NAME" && exit 1; fi

Below is a sample yaml file that defines an online-endpoints deleter job. For detailed info regarding how to construct a command job yaml file, see AzureML Job Yaml Schema.

$schema: https://azuremlschemas.azureedge.net/latest/commandJob.schema.json
command:
  python -m aml_online_endpoints_deleter --config_path ${{inputs.config}} --delete_endpoint ${{inputs.delete_endpoint}}
experiment_name: deletion-demo-job
environment:
  image: profilervalidationacr.azurecr.io/aml-online-endpoints-deleter:20230401.80737331
name: $DELETER_JOB_NAME
tags:
  action: delete
  endpoint: $ENDPOINT_NAME
  deployment: $DEPLOYMENT_NAME
compute: azureml:$DELETER_COMPUTE_NAME
inputs:
  config:
    type: uri_file
    path: deployment_settings.json
  delete_endpoint: True

You may create this online-endpoints deleter job with the following command:

az ml job create --file deleter_job.yml

The deleter job won't generate any output files, but you may use the command below to download all std_out logs.

az ml job download --name $DELETER_JOB_NAME --all --download-path $DELETER_DOWNLOAD_FOLDER

Currently we support most Olive configs, with the following special settings and limitations:

Systems Information:

| Configuration | Definition | Example | Default Values |
|---|---|---|---|
| type | The type of the system. Only "LocalSystem" is supported for the Olive optimizer; "AzureML" and "Docker" are not supported. | "LocalSystem" | - |

Engine Information:

| Configuration | Definition | Example | Default Values |
|---|---|---|---|
| packaging_config | Olive artifacts packaging configurations. If not specified, Olive will not package artifacts | DON'T SET, WILL BE OVERRIDDEN BY DEFAULT VALUE | "packaging_config": { "type": "Zipfile", "name": "OutputModels" } |
| output_model_num | The number of output models from the engine based on metric priority. If you want more than one best candidate model, set "output_model_num" below "search_strategy" in advance. | 1 | 1 |
| plot_pareto_frontier | This decides whether to plot the Pareto frontier of the search results. | true | true |
| output_dir | The directory to store the output of the engine. Set to the job's default artifact output folder "./outputs" | DON'T SET, WILL BE OVERRIDDEN BY DEFAULT VALUE | "./outputs" |

Scoring target configuration:

| Configuration | Definition | Example | Default Values |
|---|---|---|---|
| subscription_id | [Optional] the subscription id of the online endpoint | ea4faa5b-5e44-4236-91f6-5483d5b17d14 | subscription id of the profiling job |
| resource_group | [Optional] the resource group of the online endpoint | my-rg | resource group of the profiling job |
| workspace_name | [Optional] the workspace name of the online endpoint | my-ws | workspace of the profiling job |
| endpoint_name | [Optional] the name of the online endpoint. Required, if users want to get resource usage metrics in the profiling reports, such as CpuUtilizationPercentage, CpuMemoryUtilizationPercentage, etc. If | my-endpoint | - |
| deployment_name | [Optional] the name of the online deployment. Required, if users want to get resource usage metrics in the profiling reports, such as CpuUtilizationPercentage, CpuMemoryUtilizationPercentage, etc. If | my-deployment | - |
| identity_access_token | [Optional] an optional aad token for retrieving endpoint scoring_uri, access_key, and resource usage metrics. This will not be necessary for the following scenario: It's recommended to assign appropriate permissions to the aml compute rather than providing this aad token, since the aad token might be expired during the profiling job | - | - |
| scoring_uri | [Optional] users are optional to provide this env var as instead of the If If both | https://my-inference-service-uri.com | - |
| scoring_headers | [Optional] users may use this env var to provide any headers necessary when invoking the profiling target. One Required field inside the scoring_headers dict is "Authorization". If If both | { "Content-Type": "application/json", "Authorization": "Bearer < auth_key >", "azureml-model-deployment": "< deployment_name >" } | - |
| sku | [Optional] used together with If | Standard_F2s_v2 | - |
| location | [Optional] used together with If | eastus2 | - |
| instance_count | [Optional] used together with If | 1 | - |
| worker_count | [Optional] the profiling tool would set the default traffic concurrency basing on If users choose to provide the concurrency setting specifically in the profiling_config.json file, then neither If concurrency is not provided specifically by the user, and the user did not provide Basic logic for setting the default traffic concurrency: if | 1 | - |
| max_concurrent_requests_per_instance | [Optional] the profiling tool would set the default traffic concurrency basing on If users choose to provide the concurrency setting specifically in the profiling_config.json file, then neither If concurrency is not provided specifically by the user, and the user did not provide Basic logic for setting the default traffic concurrency: if | 1 | - |
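The scoring_headers example in the table can be assembled programmatically when the key and deployment name come from elsewhere in your pipeline. This is a small sketch under that assumption; the function name and its arguments are hypothetical, and only the three header fields shown in the table example are used.

```python
import json


def build_scoring_headers(auth_key: str, deployment_name: str) -> dict:
    """Build the headers dict from the table example.

    "Authorization" is the one field the table marks as required; the other
    two are copied from the example as-is.
    """
    return {
        "Content-Type": "application/json",
        "Authorization": f"Bearer {auth_key}",
        "azureml-model-deployment": deployment_name,
    }


def scoring_headers_json(auth_key: str, deployment_name: str) -> str:
    """Serialize the headers so they can be placed in a JSON config file."""
    return json.dumps(build_scoring_headers(auth_key, deployment_name), indent=2)
```

Keeping the key out of the file until the job is created avoids committing a secret into a configuration checked into source control.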

AzureML Wrk Profiler Configs:

| Configuration | Definition | Example | Default Values |
|---|---|---|---|
| duration_sec | [Optional] duration in seconds for running the profiler | 600 | 300 |
| connections | [Optional] no. of connections for the profiler. The default value will be set to the value of max_concurrent_requests_per_instance. The value is [Required] if the online-endpoint/online-deployment info is not provided, otherwise an error will be thrown | 10 | - |
| threads | [Optional] no. of threads allocated for the profiler | 3 | 1 |
| payload | [Optional] users may use this param to provide a single string format payload data for invoking the scoring target. If inputs.payload is provided in the profiler_job.yml file, this env var will be ignored. | '{"data": [[1,2,3,4,5,6,7,8,9,10], [10,9,8,7,6,5,4,3,2,1]]}' | - |
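The rule for `connections` in the table (use the explicit value, otherwise fall back to max_concurrent_requests_per_instance, otherwise fail) can be sketched as follows. This illustrates the documented rule only and is not the profiler's implementation; the function name and argument shapes are assumptions.

```python
from typing import Optional


def resolve_connections(profiler_config: dict,
                        deployment_settings: Optional[dict]) -> int:
    """Pick the number of connections the way the table describes it.

    profiler_config: the "profiler_config" object of the profiling config.
    deployment_settings: the "deployment_settings" object of the scoring
    target config, or None when no online-deployment info is available.
    """
    explicit = profiler_config.get("connections")
    if explicit is not None:
        return int(explicit)

    # Default to the deployment's max_concurrent_requests_per_instance.
    fallback = (deployment_settings or {}).get(
        "max_concurrent_requests_per_instance"
    )
    if fallback is None:
        raise ValueError(
            "connections is required when no online-deployment info is provided"
        )
    return int(fallback)
```

With the sample files from this recipe, `resolve_connections({"duration_sec": 300, "connections": 1}, None)` returns the explicit value 1 without consulting the deployment settings.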

AzureML Wrk2 Profiler Configs:

| Configuration | Definition | Example | Default Values |
|---|---|---|---|
| duration_sec | [Optional] duration in seconds for running the profiler | 600 | 300 |
| connections | [Optional] no. of connections for the profiler. The default value will be set to the value of max_concurrent_requests_per_instance. The value is [Required] if the online-endpoint/online-deployment info is not provided, otherwise an error will be thrown | 10 | - |
| threads | [Optional] no. of threads allocated for the profiler | 3 | 1 |
| payload | [Optional] users may use this param to provide a single string format payload data for invoking the scoring target. If inputs.payload is provided in the profiler_job.yml file, this env var will be ignored. | '{"data": [[1,2,3,4,5,6,7,8,9,10], [10,9,8,7,6,5,4,3,2,1]]}' | - |
| target_rps | [Optional] target requests per second for the profiler | 100 | 50 |

AzureML Labench Profiler Configs:

| Configuration | Definition | Example | Default Values |
|---|---|---|---|
| duration_sec | [Optional] duration in seconds for running the profiler | 600 | 300 |
| clients | [Optional] no. of clients for the profiler. The default value will be set to the value of max_concurrent_requests_per_instance | 10 | - |
| timeout_sec | [Optional] timeout in seconds for each request | 20 | 10 |
| payload | [Optional] users may use this param to provide a single string format payload data for invoking the scoring target. If inputs.payload is provided in the profiler_job.yml file, this env var will be ignored. | '{"data": [[1,2,3,4,5,6,7,8,9,10], [10,9,8,7,6,5,4,3,2,1]]}' | - |
| target_rps | [Optional] target requests per second for the profiler | 100 | 50 |

This project welcomes contributions and suggestions. Most contributions require you to agree to a Contributor License Agreement (CLA) declaring that you have the right to, and actually do, grant us the rights to use your contribution. For details, visit https://cla.microsoft.com.

When you submit a pull request, a CLA-bot will automatically determine whether you need to provide a CLA and decorate the PR appropriately (e.g., label, comment). Simply follow the instructions provided by the bot. You will only need to do this once across all repositories using our CLA.

This project has adopted the Microsoft Open Source Code of Conduct. For more information see the Code of Conduct FAQ or contact opencode@microsoft.com with any additional questions or comments.

For any questions, bugs and requests of new features, please contact us at miroptprof@microsoft.com

Trademarks This project may contain trademarks or logos for projects, products, or services. Authorized use of Microsoft trademarks or logos is subject to and must follow Microsoft’s Trademark & Brand Guidelines. Use of Microsoft trademarks or logos in modified versions of this project must not cause confusion or imply Microsoft sponsorship. Any use of third-party trademarks or logos are subject to those third-party’s policies.