By B-O-W with the help of Graypit

Prerequisites

The tutorial assumes some basic familiarity with Kubernetes and kubectl but does not assume any pre-existing deployment.

It also assumes that you are familiar with the usual Terraform plan/apply workflow. If you're new to Terraform itself, refer first to the Getting Started tutorial.

For this tutorial, you will need:

- an AWS account

- the AWS CLI, installed and configured

- AWS IAM Authenticator

- the Kubernetes CLI, also known as kubectl
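Before starting, it can help to confirm that the command-line tools listed above are on your PATH. The following is a minimal sketch, not part of the original project: the helper name `missing_tools` is hypothetical, and the binary names (`aws`, `aws-iam-authenticator`, `kubectl`, `terraform`) are assumed to be the usual ones for these tools.

```shell
#!/usr/bin/env bash
# Print the name of every listed tool that is not found on PATH.
# (missing_tools is a hypothetical helper, not part of the project.)
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
}

# Assumed binary names for the prerequisites of this tutorial.
missing="$(missing_tools aws aws-iam-authenticator kubectl terraform)"
if [ -n "$missing" ]; then
  printf 'Missing tools:\n%s\n' "$missing"
else
  echo "All prerequisite tools were found."
fi
```

The check only reports what is missing and does not exit, so it is safe to run at any point.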

1. Create Kubernetes Cluster with Terraform

The configuration is organized across multiple files:

- versions.tf sets the Terraform version to at least 1.2. It also sets versions for the providers used by the configuration.

- variables.tf contains a region and a clustername variable that control where to create the EKS cluster.

- vpc.tf provisions a VPC, subnets, and availability zones using the AWS VPC Module. The module creates a new VPC for this tutorial so it doesn't impact your existing cloud environment and resources.

- security-groups.tf provisions the security groups the EKS cluster will use. These are dynamic security groups.

- eks-cluster.tf uses the AWS EKS Module to provision an EKS Cluster and other required resources, including Auto Scaling Groups, Security Groups, IAM Roles, and IAM Policies.

Open the eks-cluster.tf file to review the configuration. The worker_groups parameter will create two nodes in one node group (asg_desired_capacity = 2).

module "eks" {
  source          = "terraform-aws-modules/eks/aws"
  version         = "17.24.0"
  cluster_name    = local.cluster_name
  cluster_version = "1.20"
  subnets         = module.vpc.private_subnets

  vpc_id = module.vpc.vpc_id

  workers_group_defaults = {
    root_volume_type = "gp2"
  }

  worker_groups = [
    {
      name                          = "worker-group-1"
      instance_type                 = "t2.medium"
      additional_userdata           = "test-eks-aws"
      additional_security_group_ids = [aws_security_group.worker_group_mgmt_one.id]
      asg_desired_capacity          = 2
    },
  ]
}

data "aws_eks_cluster" "cluster" {
  name = module.eks.cluster_id
}

data "aws_eks_cluster_auth" "cluster" {
  name = module.eks.cluster_id
}

Now that you've provisioned your EKS cluster, you need to configure kubectl.

First, open the outputs.tf file to review the output values. You will use the region and cluster_name outputs to configure kubectl.

outputs.tf

output "cluster_id" {
  description = "EKS cluster ID"
  value       = module.eks.cluster_id
}

output "cluster_endpoint" {
  description = "Endpoint for EKS control plane"
  value       = module.eks.cluster_endpoint
}

output "cluster_security_group_id" {
  description = "Security group ids attached to the cluster control plane"
  value       = module.eks.cluster_security_group_id
}

output "region" {
  description = "AWS region"
  value       = var.region
}

output "cluster_name" {
  description = "Kubernetes Cluster Name"
  value       = local.cluster_name
}

Run the following command to retrieve the access credentials for your cluster and configure kubectl.

Note: this command works only after terraform apply has completed.

$ aws eks --region $(terraform output -raw region) update-kubeconfig \
    --name $(terraform output -raw cluster_name)

You can now use kubectl to manage your cluster and deploy Kubernetes configurations to it.
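Once kubectl is configured, a quick sanity check is to count the worker nodes that report Ready. With asg_desired_capacity = 2 you would expect the count to reach 2 eventually. The sketch below assumes the default column layout of `kubectl get nodes --no-headers` (name first, status second). The helper name `count_ready_nodes` and the sample node lines are made up for illustration.

```shell
#!/usr/bin/env bash
# Count nodes whose STATUS column is exactly "Ready".
# Reads the output of `kubectl get nodes --no-headers` on stdin.
# (count_ready_nodes is a hypothetical helper, not part of the project.)
count_ready_nodes() {
  awk '$2 == "Ready" { n++ } END { print n + 0 }'
}

# Against a live cluster (not run here):
#   kubectl get nodes --no-headers | count_ready_nodes

# Illustration with made-up node lines:
printf '%s\n' \
  'node-a   Ready      <none>   5m   v1.20.0' \
  'node-b   NotReady   <none>   1m   v1.20.0' | count_ready_nodes
```

Nodes that are cordoned show a combined status such as "Ready,SchedulingDisabled" and are deliberately not counted by this exact match.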

Change the repoURL link in argocd/kuber.yaml so that it points to your own repository:

source:
  repoURL: https://github.com/B-O-W/EKS-Ingress-conntroler-alb.git # Can point to either a Helm chart repo or a git repo.
  targetRevision: main # For Helm, this refers to the chart version.
  path: manifest/ # This has no meaning for Helm charts pulled directly from a Helm repo instead of git.

# Destination cluster and namespace to deploy the application
destination:
  server: https://kubernetes.default.svc
  namespace: github
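If you prefer not to edit the manifest by hand, the link can also be changed with a small script. This is only a sketch under stated assumptions: the helper name `set_repo_url` and the replacement URL are hypothetical, the file is assumed to contain a single `repoURL:` key, and any trailing comment on that line is dropped. URLs containing `|` or `&` would need escaping before being passed to sed.

```shell
#!/usr/bin/env bash
# Replace the value of every "repoURL:" key in a manifest, keeping its
# indentation. Uses a temp file instead of `sed -i` so it behaves the
# same with GNU and BSD sed.
# (set_repo_url is a hypothetical helper, not part of the project.)
set_repo_url() {
  manifest=$1
  new_url=$2
  tmp_file="$(mktemp)"
  sed "s|^\([[:space:]]*repoURL:\).*|\1 ${new_url}|" "$manifest" > "$tmp_file"
  cat "$tmp_file" > "$manifest"
  rm -f "$tmp_file"
}

# Demonstration on a throwaway copy; the new URL is a placeholder.
demo="$(mktemp)"
printf 'source:\n  repoURL: https://github.com/B-O-W/EKS-Ingress-conntroler-alb.git\n  targetRevision: main\n' > "$demo"
set_repo_url "$demo" "https://github.com/your-org/your-repo.git"
cat "$demo"
rm -f "$demo"
```

In the real project the call would be along the lines of `set_repo_url argocd/kuber.yaml <your repository URL>`.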

The whole workflow is automated by the following script:

#!/usr/bin/env bash
# Author: Mammadov Elbrus | 28.07.22 | Provision IaaC
set -euo pipefail # Stop on the first failed command or unset variable

# Main Functions:
function main() {
  provisionClusters
  loadKubeConfig
  deployArgoCD
}

function terraformProvision() { # Terraform commands
  terraform init -upgrade
  terraform plan
  terraform apply --auto-approve
}

function provisionClusters() { # Provision EKS Cluster
  terraformProvision
}

function loadKubeConfig() { # Load Kubernetes Config file
  aws eks --region "$(terraform output -raw region)" update-kubeconfig \
    --name "$(terraform output -raw cluster_name)"
}

function deployArgoCD() { # Deploy ArgoCd
  # Create the namespace only if it is missing, so reruns do not fail
  kubectl create namespace argocd --dry-run=client -o yaml | kubectl apply -f -
  kubectl apply -n argocd -f https://raw.githubusercontent.com/argoproj/argo-cd/stable/manifests/install.yaml
  # Expose the Argo CD UI through a load balancer
  kubectl patch svc argocd-server -n argocd -p '{"spec": {"type": "LoadBalancer"}}'
  kubectl apply -f argocd/
  # Print the external address of the UI (4th column of the service listing)
  kubectl get svc -n argocd | grep argocd-server | head -n 1 | awk '{print $4}'
  echo " Go to this link it's alb default user admin"
  # Print the decoded initial admin password, followed by its label
  kubectl -n argocd get secret argocd-initial-admin-secret -o jsonpath="{.data.password}" | base64 -d
  echo " It's password:"
}

main

The script does the following:

1. Runs the Terraform code

2. Loads the kubeconfig onto your local system

3. Deploys Argo CD with its dependencies and prints the credentials for the UI

User: admin

Password: the value the script prints when it finishes

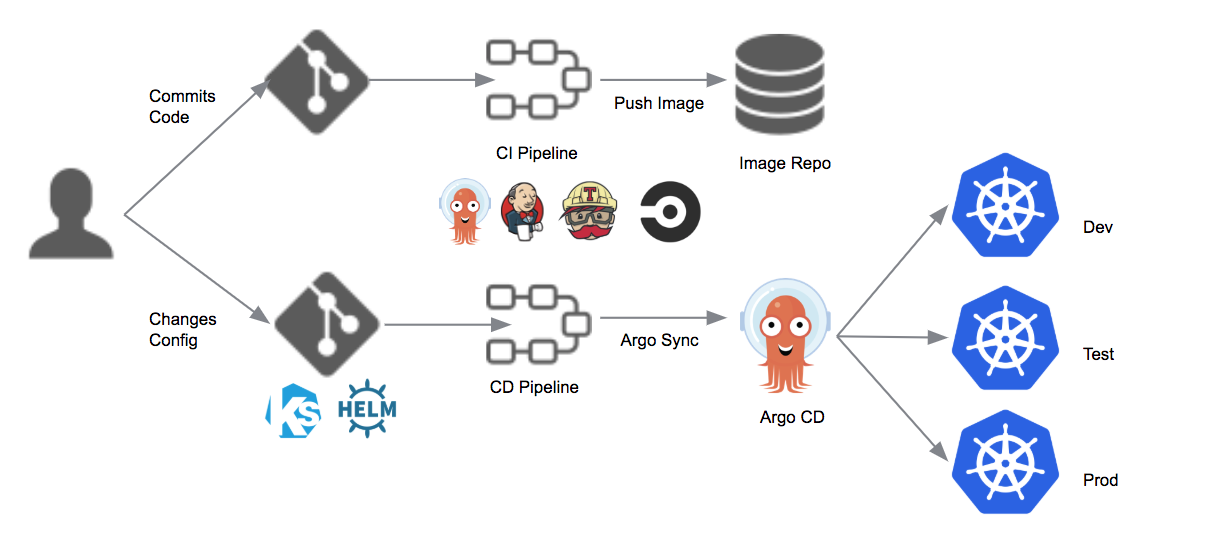

4. *Deploys SimpleApps with Argo CD (a very simple nginx app that echoes banana/apple)*

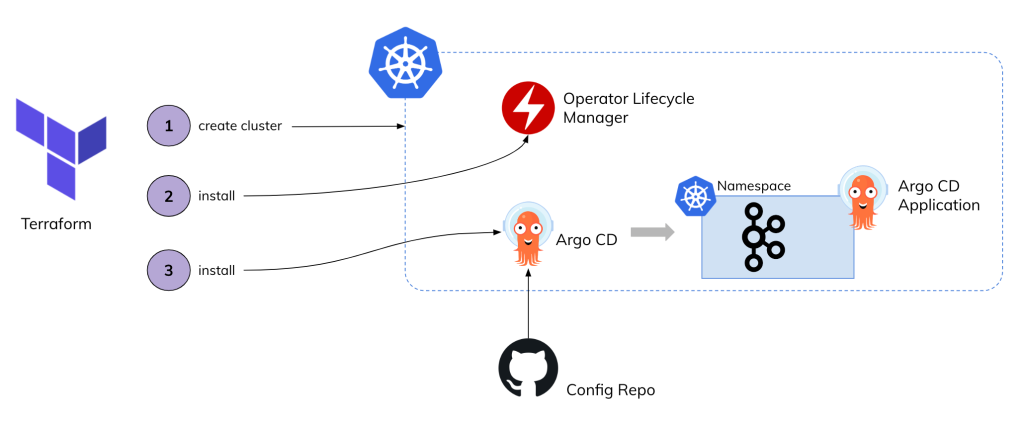
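A load balancer address is usually not assigned the moment a Service is switched to type LoadBalancer, so the address printed by the script can still be pending. One way to handle this is to poll until a hostname appears. The sketch below is an assumption about how that could look and is not taken from the project. `retry` and `lb_hostname_ready` are hypothetical helpers, and the attempt count and delay in the usage line are arbitrary.

```shell
#!/usr/bin/env bash
# Run a command up to <attempts> times, sleeping <delay> seconds between
# tries. Returns 0 on the first success, 1 if every attempt fails.
retry() {
  attempts=$1
  delay=$2
  shift 2
  i=1
  while [ "$i" -le "$attempts" ]; do
    if "$@"; then
      return 0
    fi
    i=$((i + 1))
    if [ "$i" -le "$attempts" ]; then
      sleep "$delay"
    fi
  done
  return 1
}

# Succeeds (and prints the hostname) once the argocd-server Service has
# a load balancer hostname. Defined here but not called.
lb_hostname_ready() {
  host="$(kubectl get svc argocd-server -n argocd \
    -o jsonpath='{.status.loadBalancer.ingress[0].hostname}' 2>/dev/null)"
  [ -n "$host" ] && echo "$host"
}

# Against a live cluster (not run here): up to 30 tries, 10 seconds apart
#   retry 30 10 lb_hostname_ready

# Self-contained demonstration with a command that always succeeds:
retry 3 0 true && echo "command succeeded"
```

The same `retry` helper could wrap the lookup of argocd-initial-admin-secret, which may also take a short while to appear after installation.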

## Terraform creates these dependencies

1. A new VPC named payrif-vpc (CIDR 10.0.0.0/16)

2. 3 private and public subnets for this VPC

3. 2 security groups for the EKS cluster

4. All of the code is in this project

5. If you want a load balancer for an app, create a Service for the app; a load balancer is then created automatically and attached to that Service/pod

P.S. Script output:

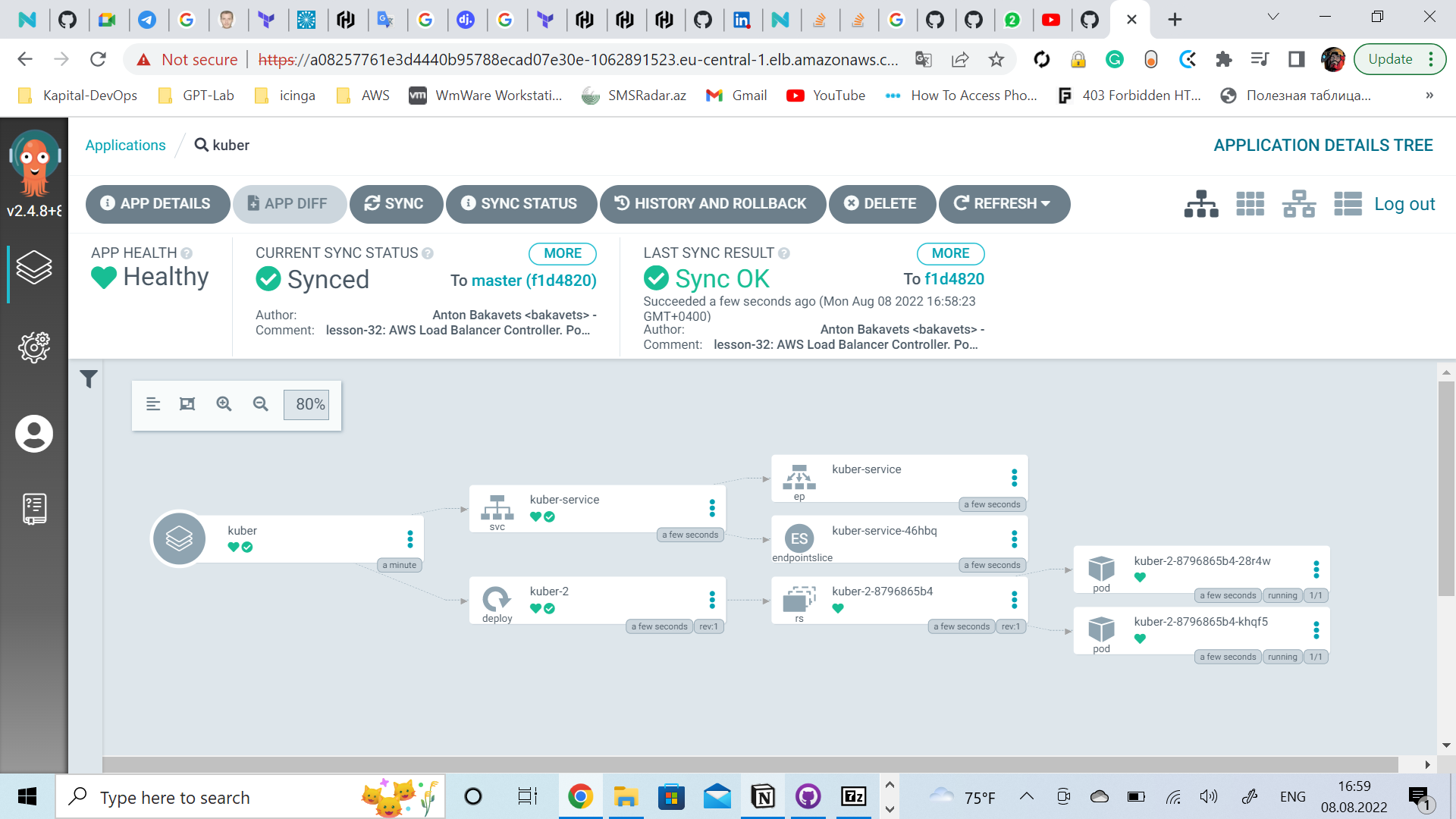

a08257761e3d4440b95788ecad07e30e-1062891523.eu-central-1.elb.amazonaws.com Go to this link it's alb default user admin

zz7G-xkTOuLawhPq It's password:

https://www.youtube.com/watch?v=KyaJX_litEM&ab_channel=BAKAVETS

Provision an EKS Cluster (AWS) | Terraform - HashiCorp Learn