Using Logstash to synchronize an Elasticsearch index with MySQL data

| Tag | Dockerfile | Image Size |
|---|---|---|
| 0.0.1 | Dockerfile |  |

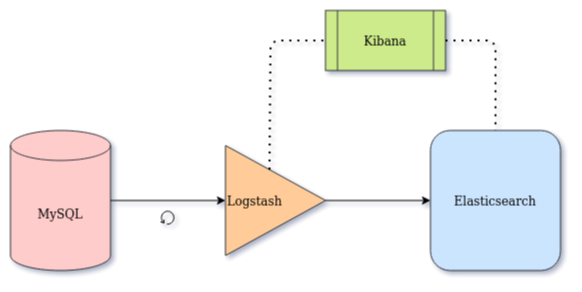

This project is a working example demonstrating how to use Logstash to link Elasticsearch to a MySQL database in order to:

- Build an Elasticsearch index from scratch

- Continuously monitor changes to the database records and replicate them to Elasticsearch (create, update, delete)

It uses:

- MySQL as the main database of a given business architecture (version 8.0.23)

- JDBC Connector/J (version 8.0.23)

- Elasticsearch as a text search engine (version 7.10.2)

- Logstash as a connector or data pipe from MySQL to Elasticsearch (version 7.10.2)

- Kibana as a monitoring, data visualization, and debugging tool (version 7.10.2)

This project is based on the sync-elasticsearch-mysql project by Redouane Achouri. More details are in this article: How to synchronize Elasticsearch with MySQL

This repo is a working prototype and runs as is; however, it is not suitable for a production environment. Please refer to the official documentation of each of the above technologies for instructions on how to go live in production.

In your local development environment, run the following commands in a terminal:

Note: Make sure Docker and Docker Compose are installed first.
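A small preflight check can confirm that both tools are on the PATH before you run the commands. This is a minimal sketch; the helper name `check_prereqs` is hypothetical and not part of this repo.

```shell
# Hypothetical helper (not part of this repo): report missing commands.
# Returns 0 when every named command is found on PATH, 1 otherwise.
check_prereqs() {
  missing=0
  for cmd in "$@"; do
    if ! command -v "$cmd" >/dev/null 2>&1; then
      echo "missing prerequisite: $cmd" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

For example, `check_prereqs docker docker-compose && echo "ready"` prints `ready` only when both commands are available.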

# Clone this project and cd into it

git clone https://github.com/BarisGece/sync-elasticsearch-mysql.git && cd sync-elasticsearch-mysql

# Start the whole architecture

docker-compose up --build # add -d for detached mode

# To keep an eye on the logs

docker-compose logs -f --tail 111 <service-name>

To start services separately or in a different order, you can run:

docker-compose up -d mysql

docker-compose up -d elasticsearch kibana

docker-compose up logstash

Deploy sync-elasticsearch-mysql in k8s cluster.

Please refer to the above article for testing steps.

- Elasticsearch Performance Tuning Practice at eBay

- Tune for search speed

- rally: Macrobenchmarking framework for Elasticsearch

- index.number_of_shards: The number of primary shards that an index should have. Defaults to 1. This setting can only be set at index creation time. It cannot be changed on a closed index.

  - For search operations, 20-25 GB is usually a good shard size - 2. Generic guidelines

  - Aim for 20 shards or fewer per GB of heap memory. The number of shards a node can hold is proportional to the nod

  - A shard size of 50GB is often quoted as a limit that has been seen to work for a variety of use-cases.

- index.number_of_replicas: The number of replicas each primary shard has. Defaults to 1.

- index.refresh_interval: How often to perform a refresh operation, which makes recent changes to the index visible to search. Defaults to 1s.

- index.search.idle.after: How long a shard can go without receiving a search or get request before it is considered search idle. Defaults to 30s.

- index.sort.field

- index.sort.order
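The shard guidelines and index settings listed above can be turned into simple arithmetic and a create-index request body. The sketch below is illustrative only: the function names, the example sizes (a 100 GB index, 25 GB per shard), and the index name `books` in the commented `curl` call are assumptions, not values taken from this repo.

```shell
# Sketch only: helper names and example sizes are hypothetical.

# Upper bound on shards per node, using the "20 shards per GB of heap" guideline.
max_shards_for_heap() {
  heap_gb=$1
  echo $(( heap_gb * 20 ))
}

# Primary shards needed to keep each shard at or below a target size (in GB).
# Uses integer arithmetic that rounds up; an index has at least one primary shard.
primary_shards_for_size() {
  index_gb=$1
  target_gb=$2
  count=$(( (index_gb + target_gb - 1) / target_gb ))
  if [ "$count" -lt 1 ]; then
    count=1
  fi
  echo "$count"
}

# Print a create-index request body with the settings described above.
# refresh_interval and search.idle.after are written with their default values.
index_settings_body() {
  cat <<EOF
{
  "settings": {
    "index.number_of_shards": $1,
    "index.number_of_replicas": $2,
    "index.refresh_interval": "1s",
    "index.search.idle.after": "30s"
  }
}
EOF
}

# Example: size a 100 GB index at 25 GB per shard, with one replica.
shards=$(primary_shards_for_size 100 25)
index_settings_body "$shards" 1

# Applying it needs a running cluster; host, port, and index name are assumptions:
# curl -X PUT "http://localhost:9200/books" \
#   -H 'Content-Type: application/json' \
#   -d "$(index_settings_body "$shards" 1)"
```

Because index.number_of_shards can only be set at index creation time, the body is meant for the request that creates the index; the other three settings can be changed later.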

- Inspired by How to keep Elasticsearch synchronized with a relational database using Logstash and JDBC. However, that article does not cover indexing from scratch or deleted records.

- Data used for this project is available in the Kaggle dataset Goodreads-books

- Logstash JDBC input plugin

- Logstash Mutate filter plugin

- Logstash Elasticsearch output plugin