Modelscope Hub | Paper | Demo

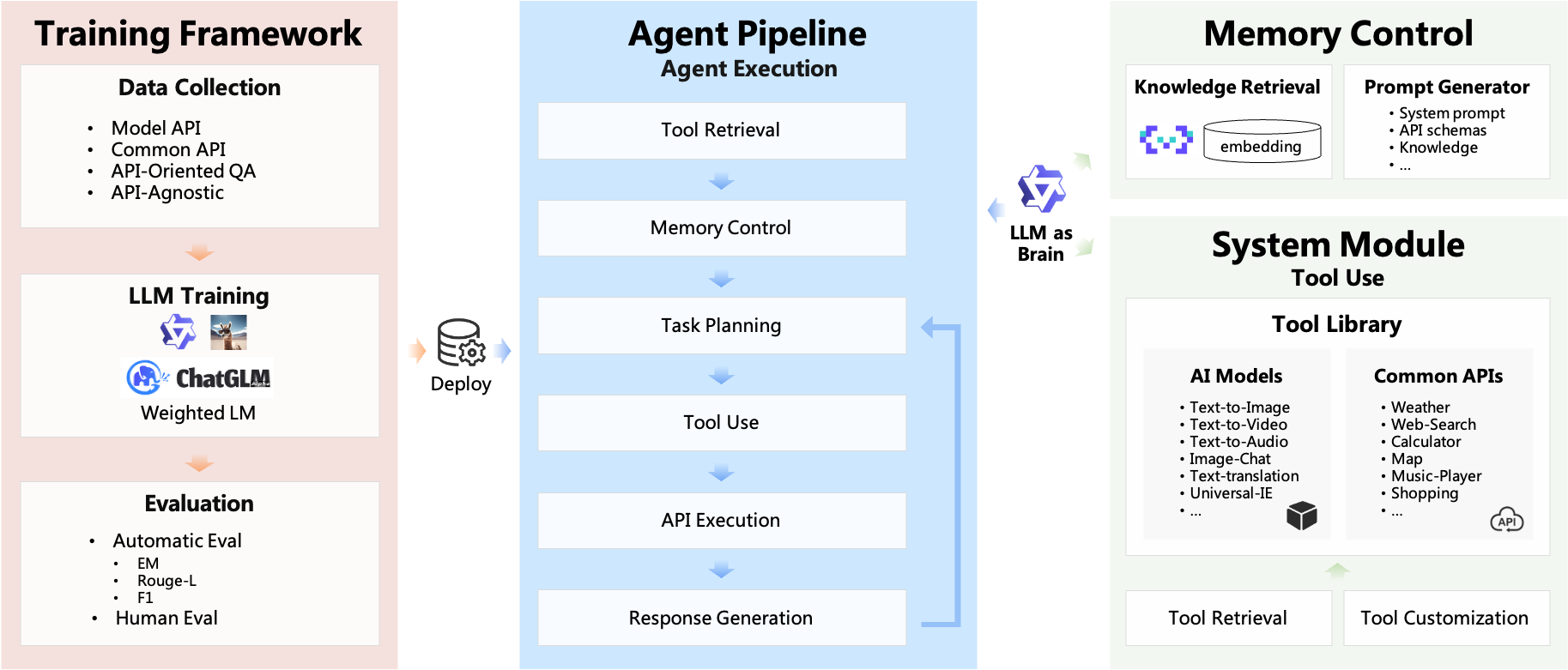

ModelScope-Agent is a general, customizable agent framework for real-world applications that uses open-source LLMs as controllers. It provides a user-friendly system library with the following features:

- Customizable and comprehensive framework: a customizable engine design spanning tool-use data collection, tool retrieval, tool registration, memory control, customized model training, and evaluation for practical real-world applications.

- Open-source LLMs as controllers: supports model training on multiple open-source LLMs from the ModelScope Community.

- Diversified and comprehensive APIs: enables seamless integration with both model APIs and common APIs in a unified way.

To equip LLMs with tool-use abilities, the framework covers the full pipeline: tool-use data collection, tool retrieval, tool registration, memory control, customized model training, and evaluation for practical real-world applications.

- Sep 20, 2023: ModelScope GPT offers a gradio-based version that can be run locally. Navigate to the demo/msgpt/ directory and execute bash run_msgpt.sh.

- Sep 4, 2023: Three demos, demo_qwen, demo_retrieval_agent and demo_register_tool, have been added, along with detailed tutorials.

- Sep 2, 2023: The preprint paper associated with this project was published.

- Aug 22, 2023: Support accessing various AI model APIs using ModelScope tokens.

- Aug 7, 2023: The initial version of the modelscope-agent repository was released.

Clone the repo and install the dependencies:

    git clone https://github.com/modelscope/modelscope-agent.git
    cd modelscope-agent && pip install -r requirements.txt

The ModelScope Notebook offers a free tier that lets ModelScope users run ModelScope-Agent with minimal setup; refer to ModelScope Notebook.

- Step 1: My Notebook (我的notebook) -> PAI-DSW -> GPU environment (GPU环境)

- Step 2: Download the [demo file](https://github.com/modelscope/modelscope-agent/blob/master/demo/demo_qwen_agent.ipynb) and upload it to the GPU environment.

- Step 3: Execute the demo notebook in order.

To use modelscope-agent, all you need to do is instantiate an AgentExecutor object and call run() to execute your task. For a faster agent implementation, please refer to demo_agent. An online demo is available on ModelScope.

    import os

    from modelscope.utils.config import Config
    from modelscope_agent.llm import LLMFactory
    from modelscope_agent.agent import AgentExecutor
    from modelscope_agent.prompt import MSPromptGenerator

    # Load the configs from file; see the examples in the config folder
    model_cfg_file = os.getenv('MODEL_CONFIG_FILE', 'config/cfg_model_template.json')
    model_cfg = Config.from_file(model_cfg_file)
    tool_cfg_file = os.getenv('TOOL_CONFIG_FILE', 'config/cfg_tool_template.json')
    tool_cfg = Config.from_file(tool_cfg_file)

    # Instantiate the LLM
    model_name = 'modelscope-agent-7b'
    llm = LLMFactory.build_llm(model_name, model_cfg)

    # Prompt generator
    prompt_generator = MSPromptGenerator()

    # Instantiate the agent
    agent = AgentExecutor(llm, tool_cfg, prompt_generator=prompt_generator)

- Single-step & Multi-step tool-use

# Single-step tool-use

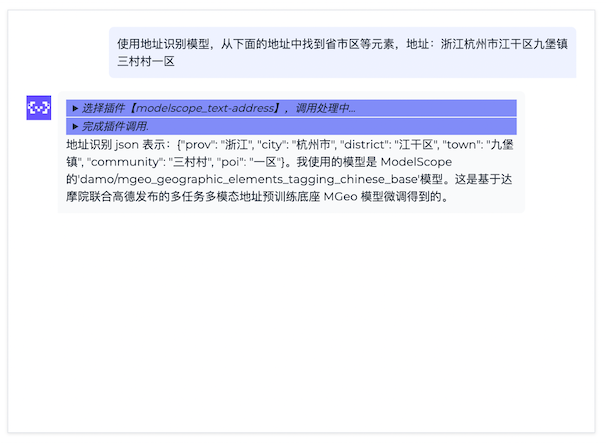

agent.run('使用地址识别模型,从下面的地址中找到省市区等元素,地址:浙江杭州市江干区九堡镇三村村一区', remote=True)

# Multi-step tool-use

agent.reset()

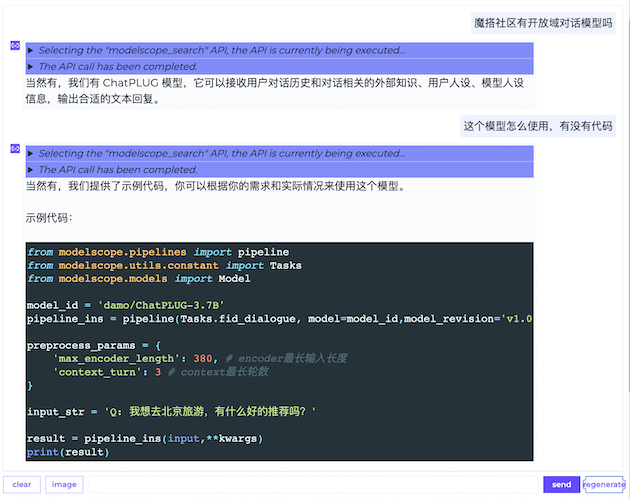

agent.run('写一篇关于Vision Pro VR眼镜的20字宣传文案,并用女声读出来,同时生成个视频看看', remote=True)- Multi-turn tool-use and knowledge-qa

# Multi-turn tool-use

agent.reset()

agent.run('写一个20字左右简短的小故事', remote=True)

agent.run('用女声念出来', remote=True)

agent.run('给这个故事配一张图', remote=True)An AgentExecutor object consists of the following components:

- LLM: A large language model that is responsible for processing your inputs and deciding which tools to call.

- tool_list: A list of the tools available to the agent.

- PromptGenerator: A module that integrates prompt_template, user_input, history, tool_list... into an efficient prompt.

- OutputParser: A module that parses the LLM response into the tools to be invoked and their corresponding parameters.

We provide default implementations of these components, but you can also customize them according to your requirements.
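To make the division of labor concrete, here is a toy, dependency-free sketch of how the four components could interact in a single step. Every name in it (`ToyLLM`, `TOOL_LIST`, `build_prompt`, `parse_output`, `run_once`) is hypothetical and is not the modelscope_agent API; only the `<|startofthink|>` / `<|endofthink|>` markers are borrowed from this project's training data format.

```python
import json
import re


def upper_tool(text: str) -> str:
    """A trivial 'tool' that upper-cases its input."""
    return text.upper()


# tool_list role: the tools the agent may call (hypothetical registry layout).
TOOL_LIST = {
    "upper": {"description": "Upper-case a piece of text", "call": upper_tool},
}


def build_prompt(user_input: str, history: list, tools: dict) -> str:
    """PromptGenerator role: merge tools, history and input into one prompt."""
    tool_lines = [f"- {name}: {spec['description']}" for name, spec in tools.items()]
    return "\n".join(["Tools:", *tool_lines, *history, f"User: {user_input}"])


class ToyLLM:
    """LLM role: a canned 'model' that always requests the 'upper' tool."""

    def generate(self, prompt: str) -> str:
        user_text = prompt.rsplit("User: ", 1)[-1]
        request = {"api_name": "upper", "parameters": {"text": user_text}}
        return "<|startofthink|>" + json.dumps(request) + "<|endofthink|>"


def parse_output(response: str):
    """OutputParser role: extract the tool name and its parameters."""
    match = re.search(r"<\|startofthink\|>(.*?)<\|endofthink\|>", response, re.S)
    if match is None:
        return None, {}
    request = json.loads(match.group(1))
    return request["api_name"], request["parameters"]


def run_once(llm, user_input: str, history: list) -> str:
    """One agent step: prompt -> LLM -> parse -> tool call -> update history."""
    prompt = build_prompt(user_input, history, TOOL_LIST)
    response = llm.generate(prompt)
    tool_name, params = parse_output(response)
    if tool_name is None:
        return response  # no tool requested; return the raw answer
    result = TOOL_LIST[tool_name]["call"](**params)
    history.append(f"User: {user_input}")
    history.append(f"Tool[{tool_name}]: {result}")
    return result
```

Calling `run_once(ToyLLM(), "hello", [])` walks through all four roles once. Swapping any one of them (a different prompt layout, a different parser) leaves the others untouched, which is the point of keeping the components separate.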

Some configuration values, such as user_token, are not supposed to be public, so we recommend using the dotenv package and a .env file to set them.

Concretely, we provide an .env.template file and the corresponding config files in our repo. You can customize the configuration by following the provided example and use your own .env file to supply the settings.
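As a rough illustration of the idea, the sketch below reads `KEY=VALUE` pairs from a file into `os.environ` using only the standard library. The helper `load_env_file` and the variable `DEMO_API_TOKEN` are made up for this example; in practice the python-dotenv package's `load_dotenv()` handles quoting and edge cases more thoroughly, and the variable names this project expects are the ones listed in its .env.template.

```python
import os
import tempfile


def load_env_file(path: str, override: bool = False) -> dict:
    """Hypothetical helper: read KEY=VALUE lines from `path` into os.environ."""
    loaded = {}
    with open(path, encoding="utf-8") as fh:
        for raw in fh:
            line = raw.strip()
            # Skip blank lines, comments, and anything that is not KEY=VALUE.
            if not line or line.startswith("#") or "=" not in line:
                continue
            key, value = line.split("=", 1)
            key, value = key.strip(), value.strip().strip("'\"")
            if override or key not in os.environ:
                os.environ[key] = value
            loaded[key] = value
    return loaded


# Demo with a throwaway file; DEMO_API_TOKEN is a made-up variable name.
with tempfile.NamedTemporaryFile("w", suffix=".env", delete=False) as tmp:
    tmp.write("# secrets stay out of version control\nDEMO_API_TOKEN='abc123'\n")
loaded = load_env_file(tmp.name, override=True)
os.remove(tmp.name)
```

Keeping secrets in an untracked .env file means the config files committed to the repo can reference them by name without ever containing the values.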

We offer plug-and-play LLMs that are easy to use. The specific model details are as follows:

- modelscope-agent-7b: modelscope-agent-7b is a core open-source model that drives the ModelScope-Agent framework. It can be directly downloaded for local use.

- modelscope-agent: A ModelScope-Agent service deployed on DashScope. No local GPU resources are required. Follow the steps below to apply for the use of modelscope-agent:

  - Apply to activate the DashScope service: go to Model Plaza (模型广场) -> Tongyi Qianwen open-source series (通义千问开源系列) and apply for a trial of Tongyi Qianwen 7B (通义千问7B). The free quota is 100,000 tokens.

  - Create an API-KEY in API-KEY Management (API-KEY管理), and configure it in the config/.env file.

The default LLM is ModelScope GPT, which is deployed on a remote server and requires a user token for requests.

If you want to use another LLM, you can inherit from the base class and implement generate() or stream_generate():

- generate(): directly returns the final response.

- stream_generate(): returns a generator of step responses; it can be used when you deploy your application in gradio.
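The shape of such a subclass might look like the sketch below. The `BaseLLM` class here is a local stand-in written for this example, not the framework's real base class, whose name and method signatures may differ; check the modelscope_agent.llm package before adapting it. Yielding one chunk per step in `stream_generate()` is also an assumption about what a "step response" is.

```python
from typing import Iterator


class BaseLLM:
    """Hypothetical stand-in for the framework's LLM base class."""

    def generate(self, prompt: str) -> str:
        raise NotImplementedError

    def stream_generate(self, prompt: str) -> Iterator[str]:
        raise NotImplementedError


class ReverseLLM(BaseLLM):
    """Toy model: 'answers' by reversing the word order of the prompt."""

    def generate(self, prompt: str) -> str:
        # Return the complete response in one piece.
        return " ".join(reversed(prompt.split()))

    def stream_generate(self, prompt: str) -> Iterator[str]:
        # Yield the response one chunk (here: one word) at a time, so a UI
        # can render partial output while generation is still in progress.
        for word in reversed(prompt.split()):
            yield word


llm = ReverseLLM()
full = llm.generate("tools call agents")
chunks = list(llm.stream_generate("tools call agents"))
```

A real implementation would replace the bodies with calls to your model or inference service while keeping the same two entry points.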

You can also use open-source LLMs from ModelScope or Hugging Face and run inference locally through the LLMFactory class. Moreover, you can fine-tune these models with your own datasets or load your custom weights.

    # local llm cfg
    import os

    from modelscope.utils.config import Config
    from modelscope_agent.llm import LLMFactory
    from modelscope_agent.agent import AgentExecutor

    model_name = 'modelscope-agent-7b'
    model_cfg = {
        'modelscope-agent-7b': {
            'type': 'modelscope',
            'model_id': 'damo/ModelScope-Agent-7B',
            'model_revision': 'v1.0.0',
            'use_raw_generation_config': True,
            'custom_chat': True
        }
    }

    tool_cfg_file = os.getenv('TOOL_CONFIG_FILE', 'config/cfg_tool_template.json')
    tool_cfg = Config.from_file(tool_cfg_file)

    # Build the LLM through the factory method, as in the quickstart example
    llm = LLMFactory.build_llm(model_name, model_cfg)
    agent = AgentExecutor(llm, tool_cfg)

We provide some default pipeline tools from multiple domains that are integrated with ModelScope.

You can also build custom tools by inheriting from the base tool class and defining the name, description, and parameters according to the pre-defined schema. Then implement _local_call() or _remote_call() according to your requirements. Examples of supported tools are provided below. For more details on tool registration, please refer to tool_doc or tool_demo.

- Text-to-Speech Tool

    from modelscope_agent.tools import ModelscopePipelineTool
    from modelscope.utils.constant import Tasks
    from modelscope_agent.output_wrapper import AudioWrapper


    class TexttoSpeechTool(ModelscopePipelineTool):
        default_model = 'damo/speech_sambert-hifigan_tts_zh-cn_16k'
        # Description (English): text-to-speech service that converts text into
        # natural, realistic speech; a male or female voice can be selected.
        description = '文本转语音服务,将文字转换为自然而逼真的语音,可配置男声/女声'
        name = 'modelscope_speech-generation'
        parameters: list = [{
            'name': 'input',
            'description': '要转成语音的文本',  # the text to convert to speech
            'required': True
        }, {
            'name': 'gender',
            'description': '用户身份',  # literally "user identity"; selects the voice
            'required': True
        }]
        task = Tasks.text_to_speech

        def _remote_parse_input(self, *args, **kwargs):
            # Default to the male voice when no gender is given.
            if 'gender' not in kwargs:
                kwargs['gender'] = 'man'
            voice = 'zhibei_emo' if kwargs['gender'] == 'man' else 'zhiyan_emo'
            kwargs['parameters'] = voice
            kwargs.pop('gender')
            return kwargs

        def _parse_output(self, origin_result, remote=True):
            audio = origin_result['output_wav']
            return {'result': AudioWrapper(audio)}

- Text-Address Tool

    from modelscope_agent.tools import ModelscopePipelineTool
    from modelscope.utils.constant import Tasks


    class TextAddressTool(ModelscopePipelineTool):
        default_model = 'damo/mgeo_geographic_elements_tagging_chinese_base'
        # Description (English): address parsing service for Chinese addresses;
        # identifies elements such as province, city, district, town, community,
        # road, street number, POI, building number, and room number.
        description = '地址解析服务,针对中文地址信息,识别出里面的元素,包括省、市、区、镇、社区、道路、路号、POI、楼栋号、户室号等'
        name = 'modelscope_text-address'
        parameters: list = [{
            'name': 'input',
            'description': '用户输入的地址信息',  # the address entered by the user
            'required': True
        }]
        task = Tasks.token_classification

        def _parse_output(self, origin_result, *args, **kwargs):
            # Map each recognized element type to its text span.
            final_result = {}
            for e in origin_result['output']:
                final_result[e['type']] = e['span']
            return final_result

Moreover, if the tool is a langchain tool, you can use our LangchainTool directly to wrap it and adapt it to the current framework.

from modelscope_agent.tools import LangchainTool

from langchain.tools import ShellTool, ReadFileTool

# wrap langchain tools

shell_tool = LangchainTool(ShellTool())

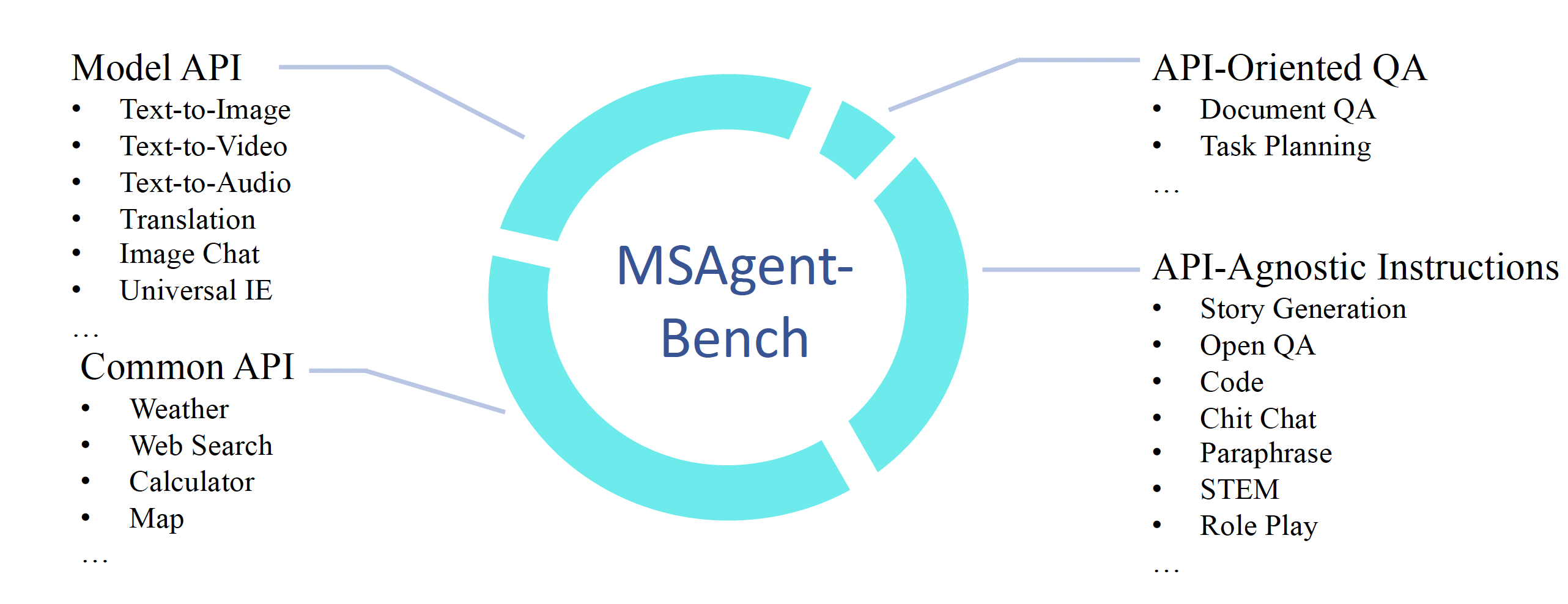

print(shell_tool(commands=["echo 'Hello World!'", "ls"]))We provide a training framework in the demo/tool_agent_finetune_swift, which mainly integrates the SWIFT training framework from ModelScope. Additionally, we release a large-scale tool instruction fine-tuning dataset MSAgent-Bench.

MSAgent-Bench is a comprehensive tool dataset encompassing 598k dialogues, including Common API, Model API, API-Oriented QA, and API-agnostic Instructions. You can download it directly from the dataset link or access it through the SDK:

    from modelscope.msdatasets import MsDataset

    ds = MsDataset.load('damo/MSAgent-Bench', split='train')
    one_ds = next(iter(ds))

The training samples are organized in the following format. The content between <|startofthink|> and <|endofthink|> represents the generated API request, and the content between <|startofexec|> and <|endofexec|> represents the API execution result.

    {
        "id":"MS_Agent_Bench_20",
        "conversations":[
            {
                "from":"system",
                "value": "你是达摩院xxxx"
            },
            {
                "from":"user",
                "value":"按照给定的schema抽取出下面文本对应的信息\nschema:{\"人物\": null, \"地理位置\": null, \"组织机构\": null}\n近日,美国政府宣布将对**1000多种商品加征关税,并威胁进一步加征关税。"
            },
            {
                "from":"assistant",
                "value":"<|startofthink|>```JSON\n{\"api_name\": \"modelscope_text-ie\", \"url\": \"http://9.32.64.200:5873/damo/nlp_structbert_siamese-uie_chinese-base\", \"parameters\": {\"text\": \"近日,美国政府宣布将对**1000多种商品加征关税,并威胁进一步加征关税。\", \"schema\": \"{\\\"人物\\\": null, \\\"地理位置\\\": null, \\\"组织机构\\\": null}\"}}\n```<|endofthink|>\n\n<|startofexec|>```JSON\n{\"人物\": [], \"地理位置\": [\"**\", \"美国\"], \"组织机构\": []}\n```<|endofexec|>\n信息抽取结果:{\"人物\": [], \"地理位置\": [\"**\", \"美国\"], \"组织机构\": []}。"
            }
        ]
    }

Execute the training script:

    cd demo/tool_agent_finetune_swift
    PYTHONPATH=./ bash scripts/train/run_qwen_ddp.sh
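When inspecting samples before training, it can help to split an assistant turn back into its parts. The sketch below does that for the marker layout described in the sample format: a fenced JSON payload between the think markers, another between the exec markers, and free text after them. The helper names (`strip_fence`, `split_assistant_turn`) and the shortened `sample` string are hypothetical and are not part of the dataset tooling; the sketch also assumes each fence carries a language tag such as JSON on its first line.

```python
import json
import re

THINK_RE = re.compile(r"<\|startofthink\|>(.*?)<\|endofthink\|>", re.S)
EXEC_RE = re.compile(r"<\|startofexec\|>(.*?)<\|endofexec\|>", re.S)
FENCE = "`" * 3  # the samples wrap JSON payloads in a Markdown-style fence


def strip_fence(block: str) -> str:
    """Remove a surrounding fence and its language-tag line, if present."""
    block = block.strip()
    if block.startswith(FENCE):
        block = block[len(FENCE):]
        # Drop the rest of the opening line (the language tag, e.g. "JSON").
        block = block.split("\n", 1)[1] if "\n" in block else ""
    if block.endswith(FENCE):
        block = block[:-len(FENCE)]
    return block.strip()


def split_assistant_turn(value: str) -> dict:
    """Split one assistant 'value' into API request, API result and reply."""
    think = THINK_RE.search(value)
    exec_ = EXEC_RE.search(value)
    return {
        "api_request": json.loads(strip_fence(think.group(1))) if think else None,
        "api_result": json.loads(strip_fence(exec_.group(1))) if exec_ else None,
        # Whatever remains after removing both marked spans is the reply text.
        "reply": EXEC_RE.sub("", THINK_RE.sub("", value)).strip(),
    }


# A shortened, made-up sample in the same layout as the dataset entries.
sample = (
    "<|startofthink|>" + FENCE + "JSON\n"
    '{"api_name": "demo_tool", "parameters": {"text": "hi"}}\n'
    + FENCE + "<|endofthink|>\n\n"
    "<|startofexec|>" + FENCE + "JSON\n"
    '{"ok": true}\n'
    + FENCE + "<|endofexec|>\nDone."
)
parsed = split_assistant_turn(sample)
```

Here `parsed["api_request"]` holds the tool call the model generated, `parsed["api_result"]` the execution result, and `parsed["reply"]` the final text shown to the user.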

If you would like to learn more about the practical details of Agent, you can refer to our articles and video tutorials:

We appreciate your enthusiasm in participating in our open-source ModelScope-Agent project. If you encounter any issues, please feel free to report them to us. If you have built a new Agent demo and are ready to share your work with us, please create a pull request at any time! If you need any further assistance, please contact us via email at contact@modelscope.cn or through our communication group!

If you found this work useful, consider giving this repository a star and citing our paper as follows:

    @misc{li2023modelscopeagent,
        title={ModelScope-Agent: Building Your Customizable Agent System with Open-source Large Language Models},
        author={Chenliang Li and Hehong Chen and Ming Yan and Weizhou Shen and Haiyang Xu and Zhikai Wu and Zhicheng Zhang and Wenmeng Zhou and Yingda Chen and Chen Cheng and Hongzhu Shi and Ji Zhang and Fei Huang and Jingren Zhou},
        year={2023},
        eprint={2309.00986},
        archivePrefix={arXiv},
        primaryClass={cs.CL}
    }