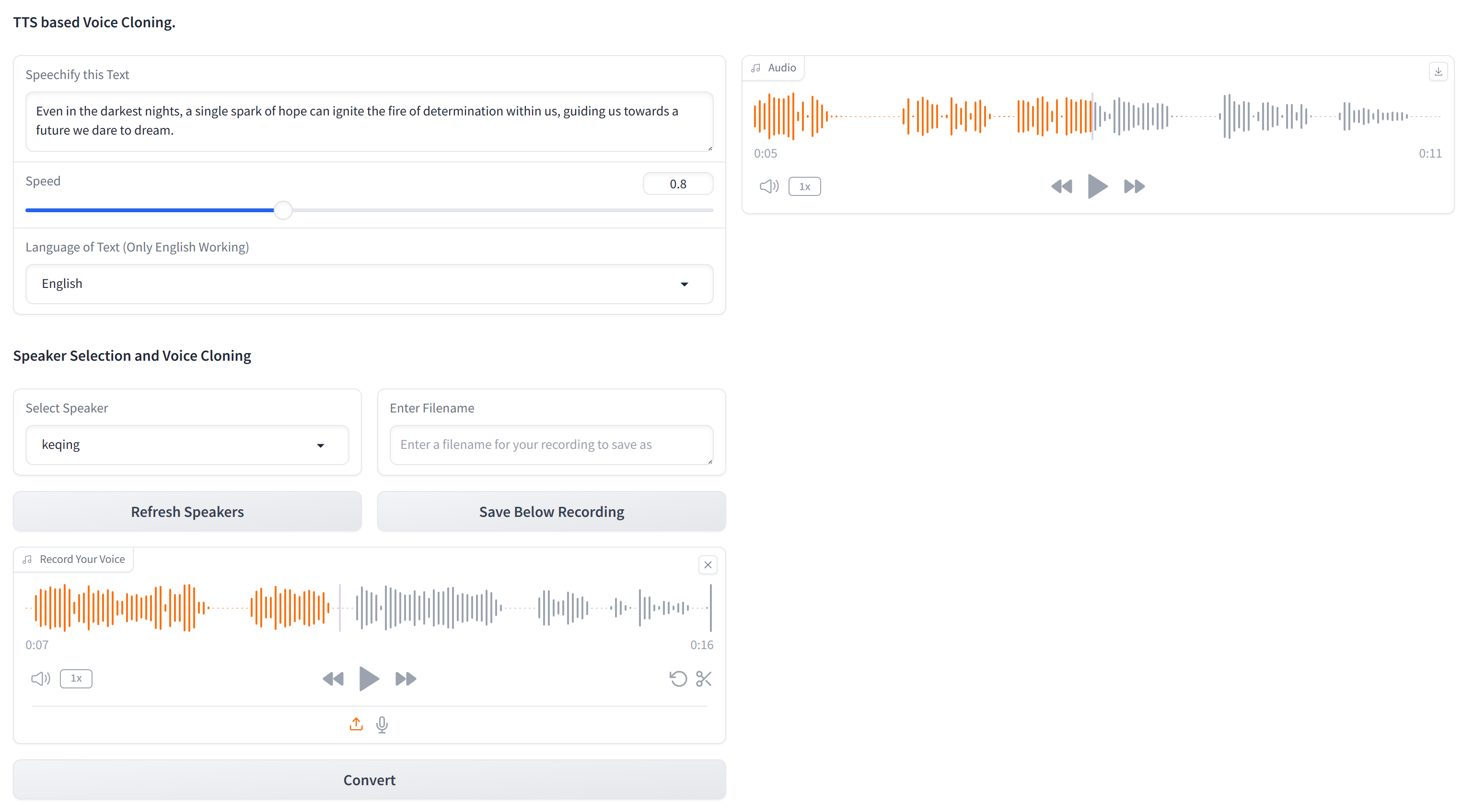

This repository contains the essential code for cloning any voice using just text and a 10-second audio sample of the target voice. XTTS-2-UI is simple to set up and use. Example Results 🔊

It works in 16 languages and has built-in voice recording and uploading. Note: Don't expect EL-level quality; it is not there yet.

The model used is tts_models/multilingual/multi-dataset/xtts_v2. For more details, refer to Hugging Face - XTTS-v2 and its specific version XTTS-v2 Version 2.0.2.
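For orientation, the model can also be driven directly from Python through the Coqui `TTS` package, without the UI. The following is a minimal sketch under stated assumptions, not the code this repository's apps use: the helper name `clone_voice` is hypothetical, and `"en"` is assumed to be the model's language code for English.

```python
from pathlib import Path

MODEL_NAME = "tts_models/multilingual/multi-dataset/xtts_v2"


def clone_voice(text: str, speaker_wav: str, out_path: str, language: str = "en") -> str:
    """Synthesize `text` in the voice of `speaker_wav` and write a WAV file.

    Hypothetical helper for illustration; it is not part of this repository.
    """
    if not text.strip():
        raise ValueError("text must not be empty")
    if not Path(speaker_wav).is_file():
        raise FileNotFoundError(f"speaker sample not found: {speaker_wav}")

    # Imported lazily so the cheap checks above run even without TTS installed.
    from TTS.api import TTS

    tts = TTS(MODEL_NAME)  # the model is downloaded on first use
    tts.tts_to_file(
        text=text,
        speaker_wav=speaker_wav,
        language=language,
        file_path=out_path,
    )
    return out_path
```

A call might look like `clone_voice("Hello there.", "targets/my_voice.wav", "output.wav")`, where `targets/my_voice.wav` is a placeholder file name.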

To set up this project, follow these steps in a terminal:

- Clone the Repository:

- Clone the repository to your local machine.

git clone https://github.com/pbanuru/xtts2-ui.git

cd xtts2-ui


- Create a Virtual Environment:

- Run the following command to create a Python virtual environment:

python -m venv venv

- Activate the virtual environment:

- Windows:

# cmd prompt

venv\Scripts\activate

or

# git bash

source venv/Scripts/activate

- Linux/Mac:

source venv/bin/activate


- Install PyTorch:

- If you have an NVIDIA CUDA-enabled GPU, choose the PyTorch installation command that matches your CUDA version:

- Before installing PyTorch, check your CUDA version by running:

nvcc --version

- For CUDA 12.1:

pip install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu121

- For CUDA 11.8:

pip install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu118


- If you don't have a CUDA-enabled GPU, follow the instructions on the PyTorch website to install the appropriate version of PyTorch for your system.


- Install Other Required Packages:

- Install direct dependencies:

pip install -r requirements.txt

- Upgrade the TTS package to the latest version:

pip install --upgrade TTS


After these steps, setup is complete and you can start using the project.
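If you want to confirm the environment before launching the app, a quick check along these lines may help. It is only a sketch: the function name `check_setup` is hypothetical, and it only reports whether a virtual environment is active, whether the `torch` and `TTS` packages can be found, and whether PyTorch sees a CUDA device.

```python
import importlib.util
import sys


def check_setup() -> dict:
    """Report the state of the environment (hypothetical helper)."""
    report = {
        # Inside a venv, sys.prefix points at the venv, not the base install.
        "in_virtualenv": sys.prefix != sys.base_prefix,
        "torch_installed": importlib.util.find_spec("torch") is not None,
        "tts_installed": importlib.util.find_spec("TTS") is not None,
        "cuda_available": False,
    }
    if report["torch_installed"]:
        try:
            import torch

            report["cuda_available"] = bool(torch.cuda.is_available())
        except Exception:
            # A broken PyTorch install is reported as "no CUDA" here.
            report["cuda_available"] = False
    return report


if __name__ == "__main__":
    for name, ok in check_setup().items():
        print(f"{name}: {ok}")
```

On a CPU-only machine, `cuda_available: False` is expected and not an error.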

Models will be downloaded automatically upon first use.

Download paths:

- MacOS:

/Users/USR/Library/Application Support/tts/tts_models--multilingual--multi-dataset--xtts_v2

- Windows:

C:\Users\YOUR-USER-ACCOUNT\AppData\Local\tts\tts_models--multilingual--multi-dataset--xtts_v2

- Linux:

/home/${USER}/.local/share/tts/tts_models--multilingual--multi-dataset--xtts_v2
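The three locations above follow one pattern per operating system, which can be sketched as a small function. The name `xtts_model_dir` is hypothetical, the directory layout is taken only from the paths listed above, and any environment-variable overrides the TTS library may honor are not modeled.

```python
from pathlib import PurePosixPath, PureWindowsPath

MODEL_DIR_NAME = "tts_models--multilingual--multi-dataset--xtts_v2"


def xtts_model_dir(system: str, home: str):
    """Return the expected model folder for a platform.system() value and a home directory."""
    if system == "Darwin":  # macOS
        return PurePosixPath(home) / "Library" / "Application Support" / "tts" / MODEL_DIR_NAME
    if system == "Windows":
        return PureWindowsPath(home) / "AppData" / "Local" / "tts" / MODEL_DIR_NAME
    if system == "Linux":
        return PurePosixPath(home) / ".local" / "share" / "tts" / MODEL_DIR_NAME
    raise ValueError(f"no known download path for system {system!r}")
```

For the current machine, `xtts_model_dir(platform.system(), str(Path.home()))` gives the folder to inspect or delete when you want to force a fresh download.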

To run the application:

python app.py

OR

streamlit run app2.py

Alternatively, you can run everything from the terminal: put your sample input texts in texts.json to generate multiple audio files with multiple speakers (you may need to adjust appTerminal.py).

python appTerminal.py
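To illustrate the idea of generating multiple audio files for multiple speakers, the sketch below pairs every text with every speaker sample and picks an output file for each pair. It is not the logic of appTerminal.py: the function name `plan_jobs` and the output naming are hypothetical, and texts.json is assumed here to be a plain JSON array of strings, which may differ from the file shipped in the repository.

```python
import json
from pathlib import Path
from typing import Dict, List


def plan_jobs(texts_file: str, targets_dir: str, out_dir: str) -> List[Dict[str, str]]:
    """Pair every text with every speaker WAV (hypothetical helper).

    Assumes `texts_file` holds a JSON array of strings.
    """
    texts = json.loads(Path(texts_file).read_text(encoding="utf-8"))
    speakers = sorted(Path(targets_dir).glob("*.wav"))

    jobs = []
    for index, text in enumerate(texts):
        for wav in speakers:
            jobs.append(
                {
                    "text": text,
                    "speaker_wav": str(wav),
                    # e.g. out/<speaker>_<text index>.wav
                    "file_path": str(Path(out_dir) / f"{wav.stem}_{index}.wav"),
                }
            )
    return jobs
```

Each job dictionary carries the arguments a synthesis call would need, so the planning step can be checked without loading the model.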

On initial use, you will need to agree to the terms:

[XTTS] Loading XTTS...

> tts_models/multilingual/multi-dataset/xtts_v2 has been updated, clearing model cache...

> You must agree to the terms of service to use this model.

| > Please see the terms of service at https://coqui.ai/cpml.txt

| > "I have read, understood and agreed to the Terms and Conditions." - [y/n]

| | >

If your model is re-downloading each run, please consult Issue 4723 on GitHub.

The dataset consists of a single folder named targets, pre-populated with several voices for testing purposes.

To add more voices without going through the GUI, create a 24 kHz WAV file of approximately 10 seconds and place it in the targets folder.
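Before dropping a file into targets, you can check its sample rate and length with Python's standard `wave` module. This is a sketch: the function name `check_voice_sample` is hypothetical, and the 6 to 15 second window is an arbitrary reading of "approximately 10 seconds", not a limit stated by the project.

```python
import wave


def check_voice_sample(path, expected_rate=24000, min_seconds=6.0, max_seconds=15.0):
    """Return a list of problems found in a PCM WAV file (empty list means OK)."""
    with wave.open(str(path), "rb") as wav:
        rate = wav.getframerate()
        seconds = wav.getnframes() / rate

    problems = []
    if rate != expected_rate:
        problems.append(f"sample rate is {rate} Hz, expected {expected_rate} Hz")
    if not min_seconds <= seconds <= max_seconds:
        problems.append(f"duration is {seconds:.1f} s, expected about 10 s")
    return problems
```

The `wave` module only reads uncompressed PCM WAV files, so other formats will raise `wave.Error` instead of returning a problem list.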

You can use yt-dlp to download a voice from YouTube for cloning:

yt-dlp -x --audio-format wav "https://www.youtube.com/watch?"

| Language | Audio Sample Link |
|---|---|
| English | |
| Russian | |
| Arabic | |

Supported languages: Arabic, Chinese, Czech, Dutch, English, French, German, Hungarian, Italian, Japanese (see setup), Korean, Polish, Portuguese, Russian, Spanish, Turkish
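When calling the model from code, languages are passed as short codes, not names. The mapping below is a sketch based on the codes listed on the XTTS-v2 model card as I understand them; treat the codes (in particular `zh-cn` for Chinese) as assumptions to verify, and note that `language_code` is a hypothetical helper.

```python
# Assumed name-to-code mapping for the 16 languages listed above.
XTTS_LANGUAGE_CODES = {
    "Arabic": "ar",
    "Chinese": "zh-cn",
    "Czech": "cs",
    "Dutch": "nl",
    "English": "en",
    "French": "fr",
    "German": "de",
    "Hungarian": "hu",
    "Italian": "it",
    "Japanese": "ja",
    "Korean": "ko",
    "Polish": "pl",
    "Portuguese": "pt",
    "Russian": "ru",
    "Spanish": "es",
    "Turkish": "tr",
}


def language_code(name: str) -> str:
    """Look up the code for a language name, ignoring case and outer spaces."""
    key = name.strip().title()
    if key not in XTTS_LANGUAGE_CODES:
        raise ValueError(f"unsupported language: {name!r}")
    return XTTS_LANGUAGE_CODES[key]
```

Failing early on an unknown name avoids loading the model only to have synthesis rejected later.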

If you would like to select Japanese as the target language, you must install a dictionary.

# Lite version

pip install fugashi[unidic-lite]

or for more serious processing:

# Full version

pip install fugashi[unidic]

python -m unidic download

More details here.
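To see which of the two dictionary options is present in the current environment, a check like the following can be used. It is a sketch: `japanese_support_status` is a hypothetical helper, and it only looks for the importable modules `fugashi`, `unidic_lite`, and `unidic`; it does not verify that the full dictionary data has actually been downloaded.

```python
import importlib.util


def japanese_support_status() -> str:
    """Describe which Japanese tokenizer pieces are importable."""
    if importlib.util.find_spec("fugashi") is None:
        return "fugashi is not installed"
    if importlib.util.find_spec("unidic_lite") is not None:
        return "fugashi with the lite dictionary"
    if importlib.util.find_spec("unidic") is not None:
        # The full package still needs `python -m unidic download` to fetch its data.
        return "fugashi with the full dictionary package"
    return "fugashi is installed but no dictionary package was found"


if __name__ == "__main__":
    print(japanese_support_status())
```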

- Heavily based on https://github.com/kanttouchthis/text_generation_webui_xtts/