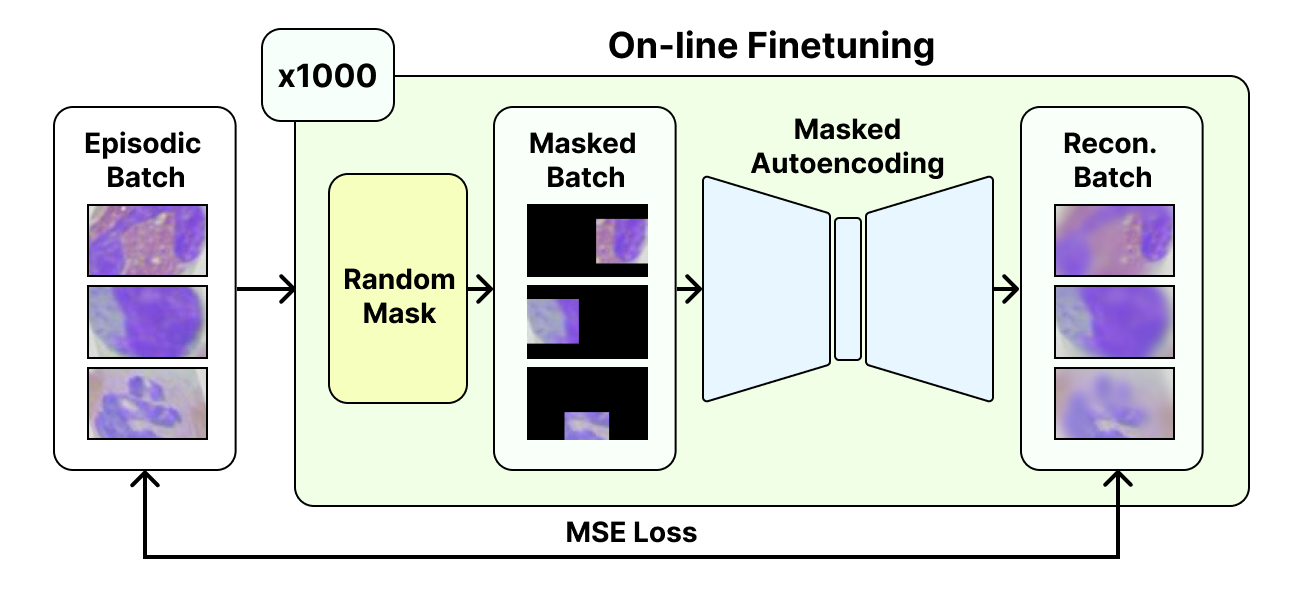

Fully Self-Supervised Masked Autoencoders for Out-of-domain Few-Shot Learning (FSS) is a novel technique that adapts a vision transformer (ViT) to new domains through an online self-supervised finetuning session.

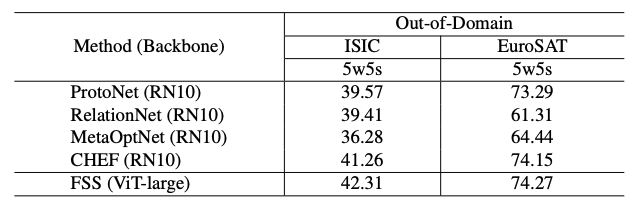

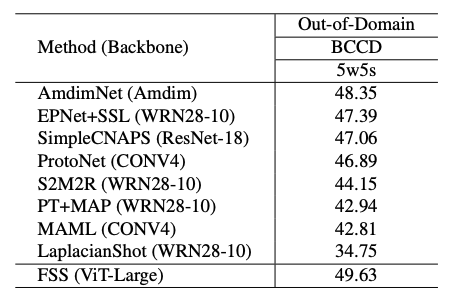

Test FSS on a range of out-of-domain datasets.

Install the requirements necessary for using FSS. A virtual environment (or similar) is recommended.

pip install -r requirements.txt

Datasets used in this work can be obtained at the following links:

After downloading all datasets, extract each dataset's folder into one shared directory. This directory serves as the root data directory.
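As a quick sanity check of this layout, the minimal sketch below reports which dataset folders are missing from the data root. The helper name `missing_datasets` and any folder names passed to it are hypothetical placeholders, not part of this repo; substitute the folder names of the datasets you actually downloaded.

```python
from pathlib import Path


def missing_datasets(data_root, expected_folders):
    """Return the expected dataset folders that are not present under data_root."""
    root = Path(data_root)
    # Each dataset is assumed to live in its own folder directly under the root.
    return [name for name in expected_folders if not (root / name).is_dir()]
```

For example, `missing_datasets("/path/to/data", ["dataset_a", "dataset_b"])` returns an empty list only when both (placeholder) folders exist under the data root.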

You can download MAE pretrained weights from Meta's MAE implementation.

To use the pretrained weights, create a fit_models folder at the root of this repo and place all pretrained weights in it.
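If you prefer to script this step, the following sketch creates the `fit_models` folder and lists whatever files it currently holds. The helper name `prepare_weights_dir` is a hypothetical illustration; only the `fit_models` folder name comes from this README.

```python
from pathlib import Path


def prepare_weights_dir(repo_root="."):
    """Create fit_models/ at the repo root if needed and list the files in it."""
    weights_dir = Path(repo_root) / "fit_models"
    # exist_ok=True makes the call safe to repeat.
    weights_dir.mkdir(exist_ok=True)
    # Return file names only, sorted for stable output.
    return sorted(p.name for p in weights_dir.iterdir() if p.is_file())
```

Calling `prepare_weights_dir()` from the root of the repo returns an empty list until you copy the downloaded weights into the folder.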

Run mae_test.sh. To adjust the parameters of a test run, edit the following values in the script: the number of shots, the finetuning iterations, the image size, the model type, the model weights path, and the path to the root data directory (where you placed the downloaded datasets earlier).

To see the full list of available options, run the following command:

python3 mae_test.py --help
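
The same help text can also be captured from Python, for instance to log the available options alongside a test run. This is a minimal sketch that assumes `mae_test.py` prints its help to standard output and exits successfully, as argparse-based scripts normally do; the helper name `get_help_text` is hypothetical.

```python
import subprocess
import sys


def get_help_text(script="mae_test.py"):
    """Run `<script> --help` with the current interpreter and return its output."""
    result = subprocess.run(
        [sys.executable, script, "--help"],
        capture_output=True,
        text=True,
        check=True,  # raise CalledProcessError if the script exits with an error
    )
    return result.stdout
```

Run it from the root of the repo, for example `print(get_help_text())`.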