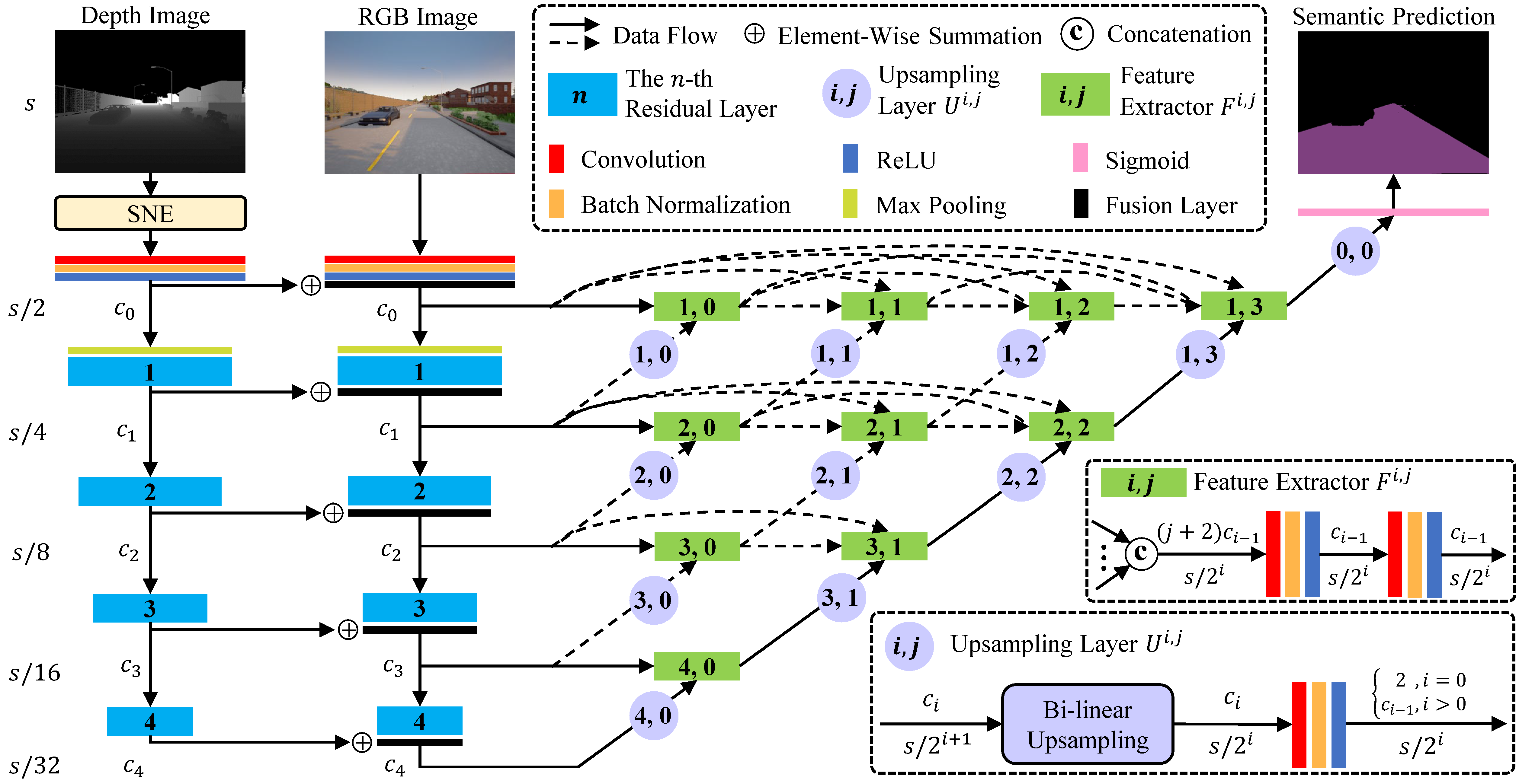

SNE-RoadSeg2 is based on the official PyTorch implementation of SNE-RoadSeg: Incorporating Surface Normal Information into Semantic Segmentation for Accurate Freespace Detection, accepted by ECCV 2020. This is their project page.

In this repo, we provide the training and testing setup for the KITTI Road Dataset. We tested our code with Python 3.7, CUDA 10.0, cuDNN 7 and PyTorch 1.1. We also provide a Dockerfile to build the Docker image we use.

Please set up the KITTI Road Dataset and the pretrained weights according to the following folder structure:

    SNE-RoadSeg
    |-- checkpoints
    |  |-- kitti
    |  |  |-- kitti_net_RoadSeg.pth
    |-- data
    |-- datasets
    |  |-- kitti
    |  |  |-- training
    |  |  |  |-- calib
    |  |  |  |-- depth_u16
    |  |  |  |-- gt_image_2
    |  |  |  |-- image_2
    |  |  |-- validation
    |  |  |  |-- calib
    |  |  |  |-- depth_u16
    |  |  |  |-- gt_image_2
    |  |  |  |-- image_2
    |  |  |-- testing
    |  |  |  |-- calib
    |  |  |  |-- depth_u16
    |  |  |  |-- image_2
    |-- examples
    ...

image_2, gt_image_2 and calib can be downloaded from the KITTI Road Dataset. We generated depth_u16 from the LiDAR data provided in the KITTI Road Dataset, and it can be downloaded from here. Note that depth_u16 is stored in uint16 format, and the real depth in meters can be obtained by double(depth_u16)/1000. The pretrained weights kitti_net_RoadSeg.pth for our SNE-RoadSeg-152 can be downloaded from here.
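The depth conversion above can be sketched in Python as follows. This is a minimal, dependency-free illustration rather than the loader used in this repo; the function name `depth_u16_to_meters` and the constant `DEPTH_SCALE` are hypothetical, and the input is assumed to be already decoded into rows of integers.

```python
from typing import List, Sequence

# Scale taken from the README: depth in meters = depth_u16 / 1000.
DEPTH_SCALE = 1000.0


def depth_u16_to_meters(depth_u16: Sequence[Sequence[int]]) -> List[List[float]]:
    """Convert rows of raw uint16 depth values into depth in meters."""
    meters = []
    for row in depth_u16:
        out_row = []
        for value in row:
            # Reject anything that cannot be a uint16 value.
            if not 0 <= value <= 65535:
                raise ValueError(f"not a uint16 depth value: {value}")
            out_row.append(value / DEPTH_SCALE)
        meters.append(out_row)
    return meters


if __name__ == "__main__":
    # A raw value of 12345 corresponds to 12.345 m.
    print(depth_u16_to_meters([[0, 1000], [12345, 65535]]))
```

With NumPy, the same conversion on an array is `depth_u16.astype(np.float64) / 1000.0`. When reading the PNG files, make sure the image library keeps the 16-bit values (for example, OpenCV's `cv2.IMREAD_ANYDEPTH` flag), since an 8-bit read would silently truncate the depth.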

We provide one example in examples. To run it, you only need to set up the checkpoints folder as mentioned above. Then, run the following script:

bash ./scripts/run_example.sh

and you will see normal.png, pred.png and prob_map.png in examples. normal.png is the normal estimation by our SNE; pred.png is the freespace prediction by our SNE-RoadSeg; and prob_map.png is the probability map predicted by our SNE-RoadSeg.

For KITTI submission, you need to set up the checkpoints and the datasets/kitti/testing folders as mentioned above. Then, run the following script:

bash ./scripts/test.sh

and you will get the prediction results in testresults. After that, you can follow the submission instructions to transform the prediction results into the BEV perspective for submission.

If everything works fine, you will get a MaxF score of 96.74 for URBAN. Note that these are our re-implemented weights, and the score is very close to the one reported in the paper (a MaxF score of 96.75 for URBAN).
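For readers unfamiliar with the metric, MaxF is the maximum F-measure obtained over all thresholds applied to the predicted probability map. The sketch below only illustrates the idea on flat lists of per-pixel probabilities and labels; the function name `max_f_score` and the 101-step threshold grid are assumptions for illustration, and the official KITTI score is computed by the benchmark in the BEV perspective, so this will not reproduce the numbers above.

```python
from typing import Sequence, Tuple


def max_f_score(
    probs: Sequence[float],
    labels: Sequence[int],
    num_thresholds: int = 101,
) -> Tuple[float, float]:
    """Return (best F-measure, threshold that first achieves it)."""
    best_f, best_t = 0.0, 0.0
    for i in range(num_thresholds):
        t = i / (num_thresholds - 1)  # evenly spaced thresholds in [0, 1]
        tp = fp = fn = 0
        for p, y in zip(probs, labels):
            predicted_road = p >= t
            if predicted_road and y:
                tp += 1
            elif predicted_road and not y:
                fp += 1
            elif not predicted_road and y:
                fn += 1
        denom = 2 * tp + fp + fn
        # F-measure = 2TP / (2TP + FP + FN); defined as 0 when there are no positives.
        f = 2 * tp / denom if denom else 0.0
        if f > best_f:
            best_f, best_t = f, t
    return best_f, best_t


if __name__ == "__main__":
    f, t = max_f_score([0.9, 0.8, 0.3, 0.1], [1, 1, 0, 0])
    print(f"MaxF = {100 * f:.2f} at threshold {t:.2f}")
```

The benchmark reports MaxF as a percentage, which is why the sketch multiplies by 100 when printing.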

For training, you need to set up the datasets/kitti folder as mentioned above. You can split the original training set into a new training set and a validation set as you like. Then, run the following script:
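One possible way to make the split is sketched below. It is not part of this repo: the helper names `split_names` and `move_validation_samples`, the 20% validation fraction and the fixed seed are all assumptions. It also assumes the usual KITTI Road file naming, where image_2 holds files such as `um_000000.png` and the matching ground truth carries an extra tag (for example `um_road_000000.png`); check this against your download before moving any files.

```python
import random
import shutil
from pathlib import Path

SUBFOLDERS = ("calib", "depth_u16", "gt_image_2", "image_2")


def split_names(names, val_fraction=0.2, seed=0):
    """Deterministically split sample names into (train, validation) lists."""
    names = sorted(names)
    random.Random(seed).shuffle(names)
    n_val = int(round(len(names) * val_fraction))
    return sorted(names[n_val:]), sorted(names[:n_val])


def move_validation_samples(kitti_root, val_fraction=0.2, seed=0):
    """Move a random subset of datasets/kitti/training into .../validation."""
    root = Path(kitti_root)
    train_dir, val_dir = root / "training", root / "validation"
    stems = [p.stem for p in (train_dir / "image_2").glob("*.png")]
    _, val_stems = split_names(stems, val_fraction, seed)
    for stem in val_stems:
        category, frame = stem.split("_", 1)  # e.g. "um", "000000"
        for sub in SUBFOLDERS:
            (val_dir / sub).mkdir(parents=True, exist_ok=True)
            # Matches "um_000000.*" as well as tagged names like "um_road_000000.*".
            for src in (train_dir / sub).glob(f"{category}_*{frame}.*"):
                shutil.move(str(src), str(val_dir / sub / src.name))
    return val_stems


if __name__ == "__main__":
    moved = move_validation_samples("datasets/kitti")
    print(f"moved {len(moved)} samples to validation")
```

Fixing the seed keeps the split reproducible, so validation scores from different training runs stay comparable.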

bash ./scripts/train.sh

and the weights will be saved in checkpoints, while the TensorBoard record containing the loss curves and the performance on the validation set will be saved in runs. Note that use-sne in train.sh controls whether our SNE model is used, and the default is True. If you delete it, our RoadSeg will take depth images as input, and you also need to delete use-sne in test.sh to avoid errors when testing.

Our code is inspired by pytorch-CycleGAN-and-pix2pix, and we thank Jun-Yan Zhu for their great work.