- [2025/5/23] TokenPacker is accepted by IJCV 🎉🎉🎉.

- [2024/10/22] We integrated the TokenPacker-HD framework with Osprey to achieve fine-grained, high-resolution pixel-level understanding with large performance gains. Please see the code in this branch for reference.

- [2024/7/25] We released the checkpoints; please check them out.

- [2024/7/3] We released the TokenPacker paper on arXiv.

- [2024/7/3] We released the training and inference code.

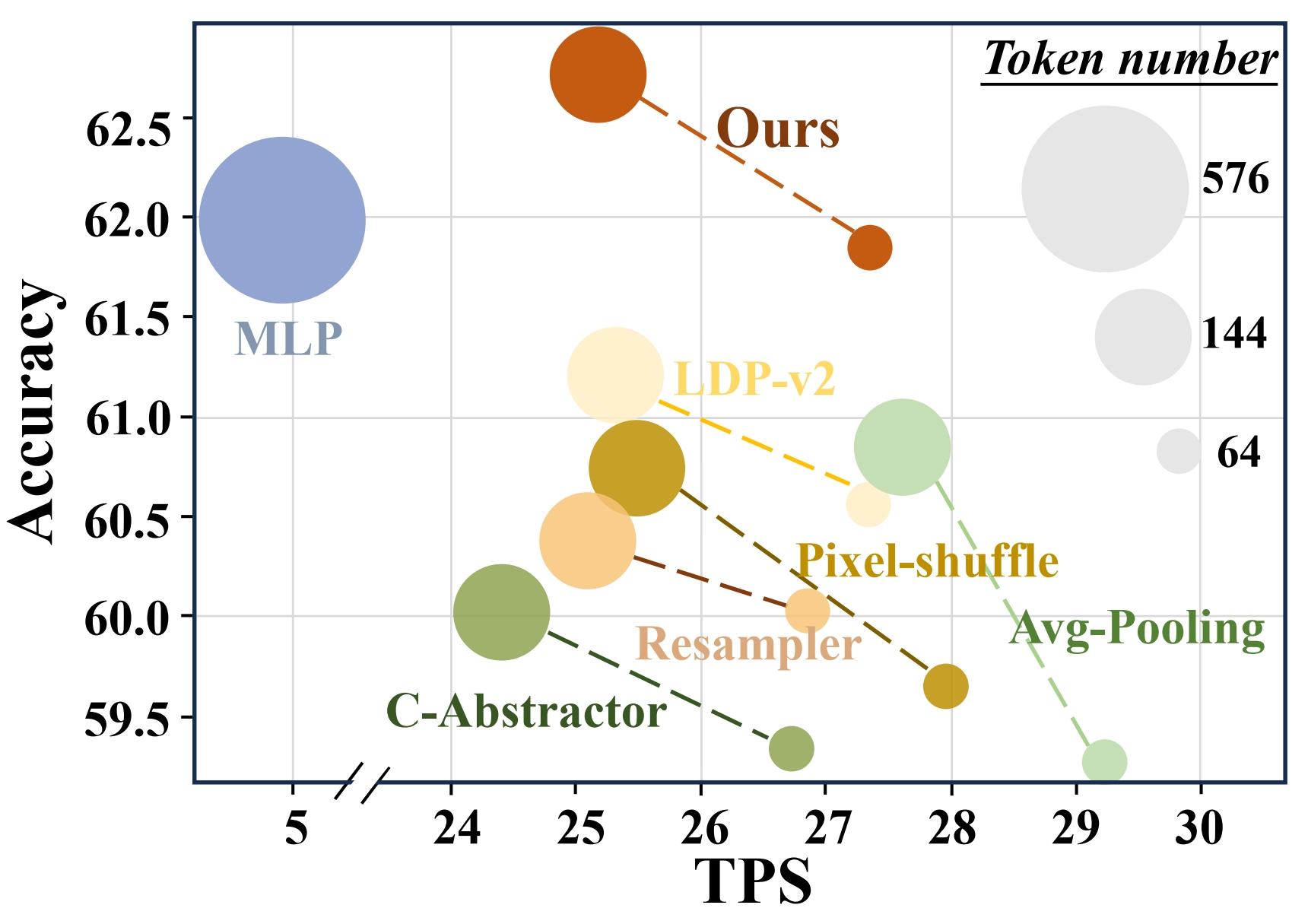

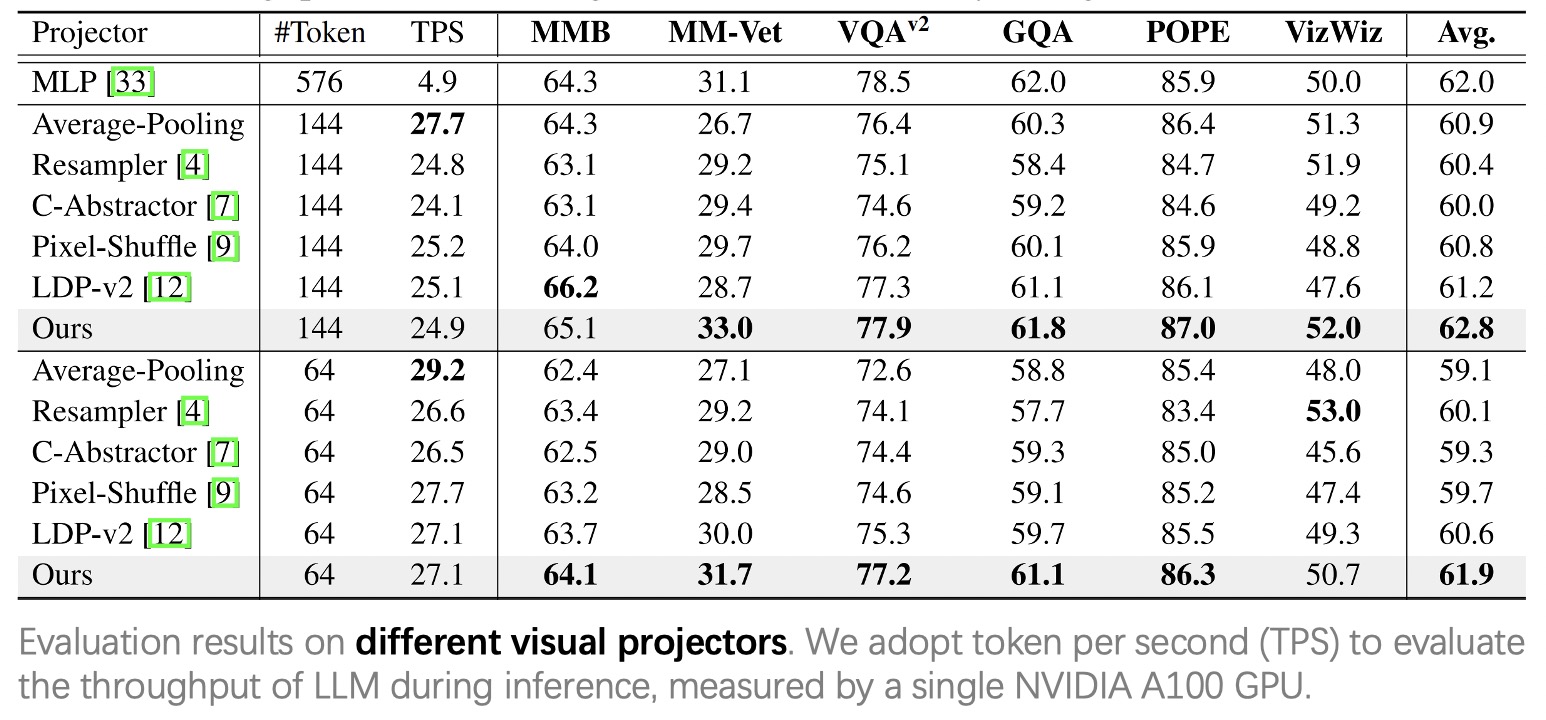

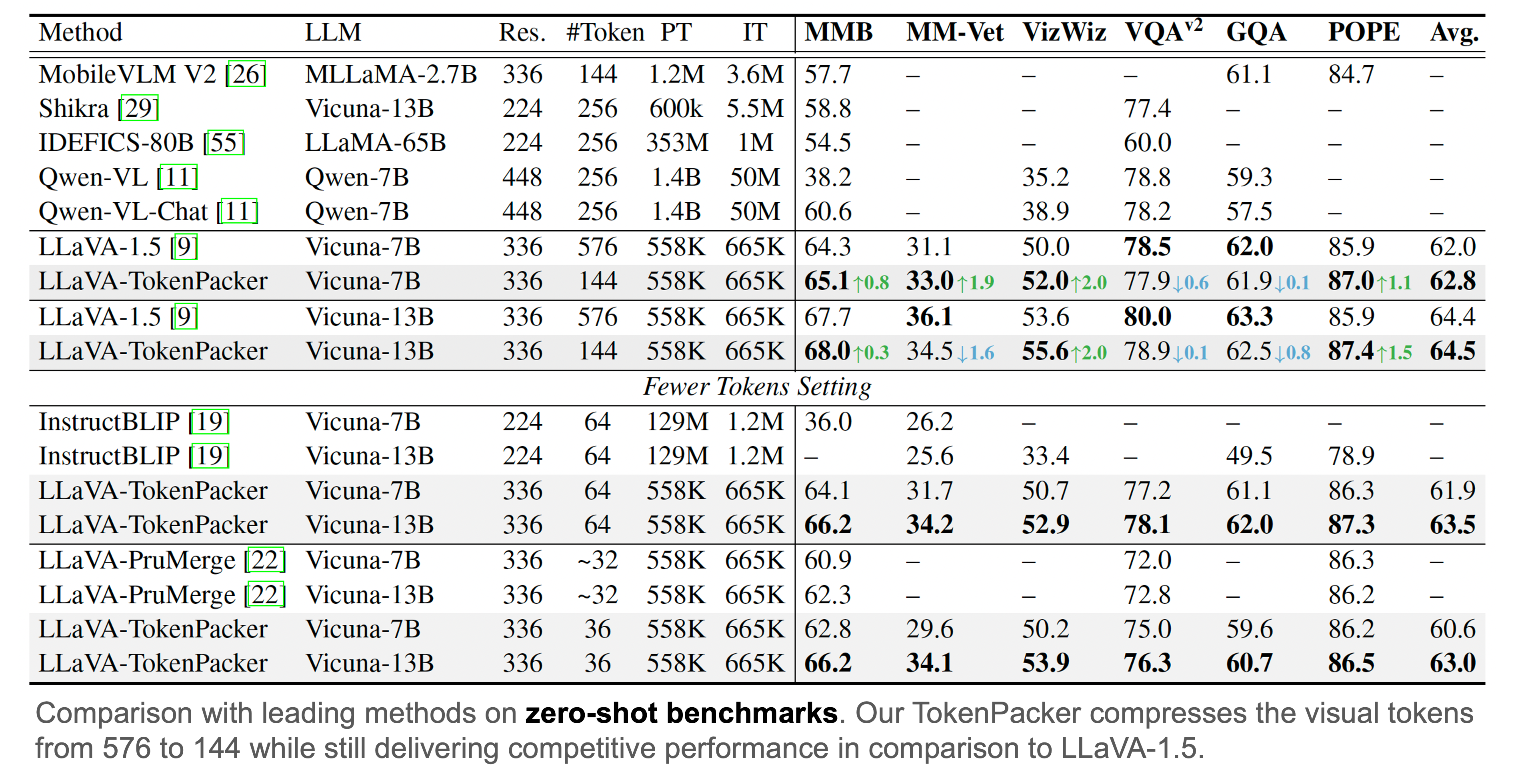

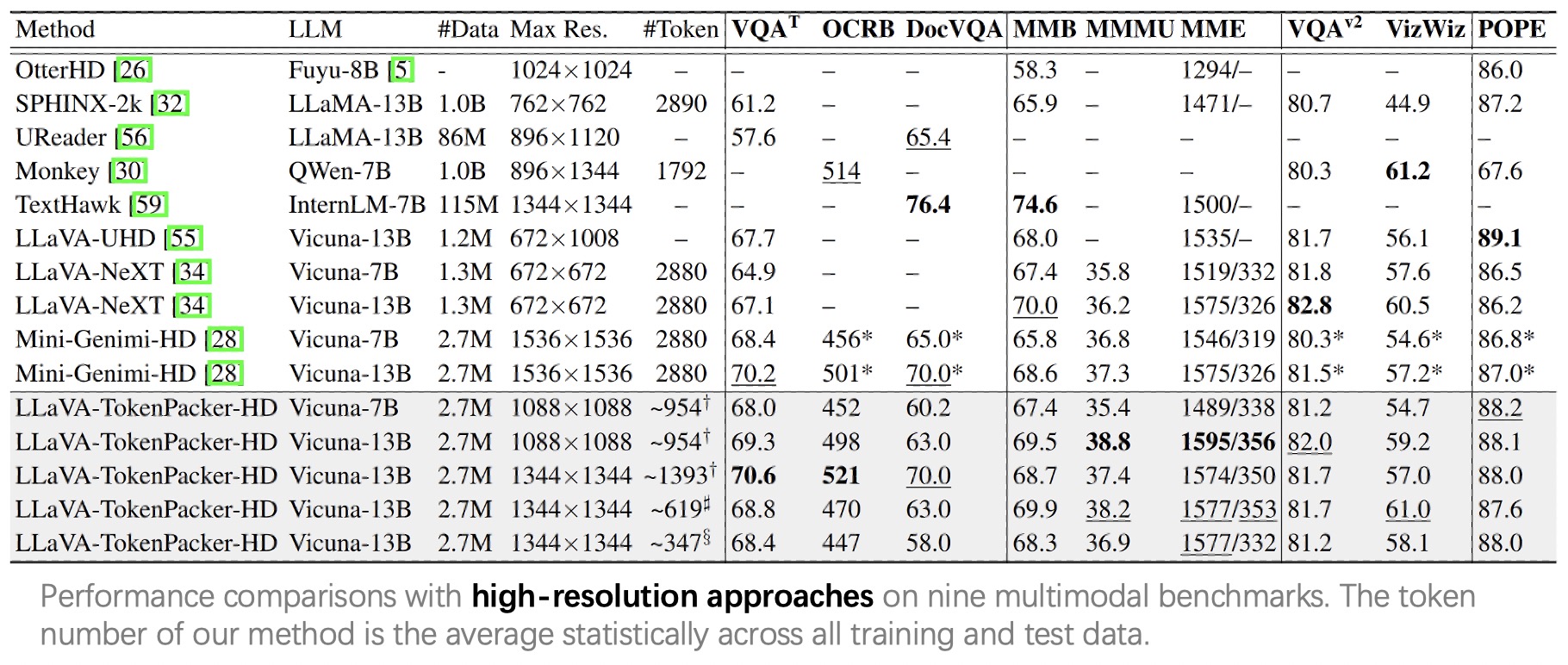

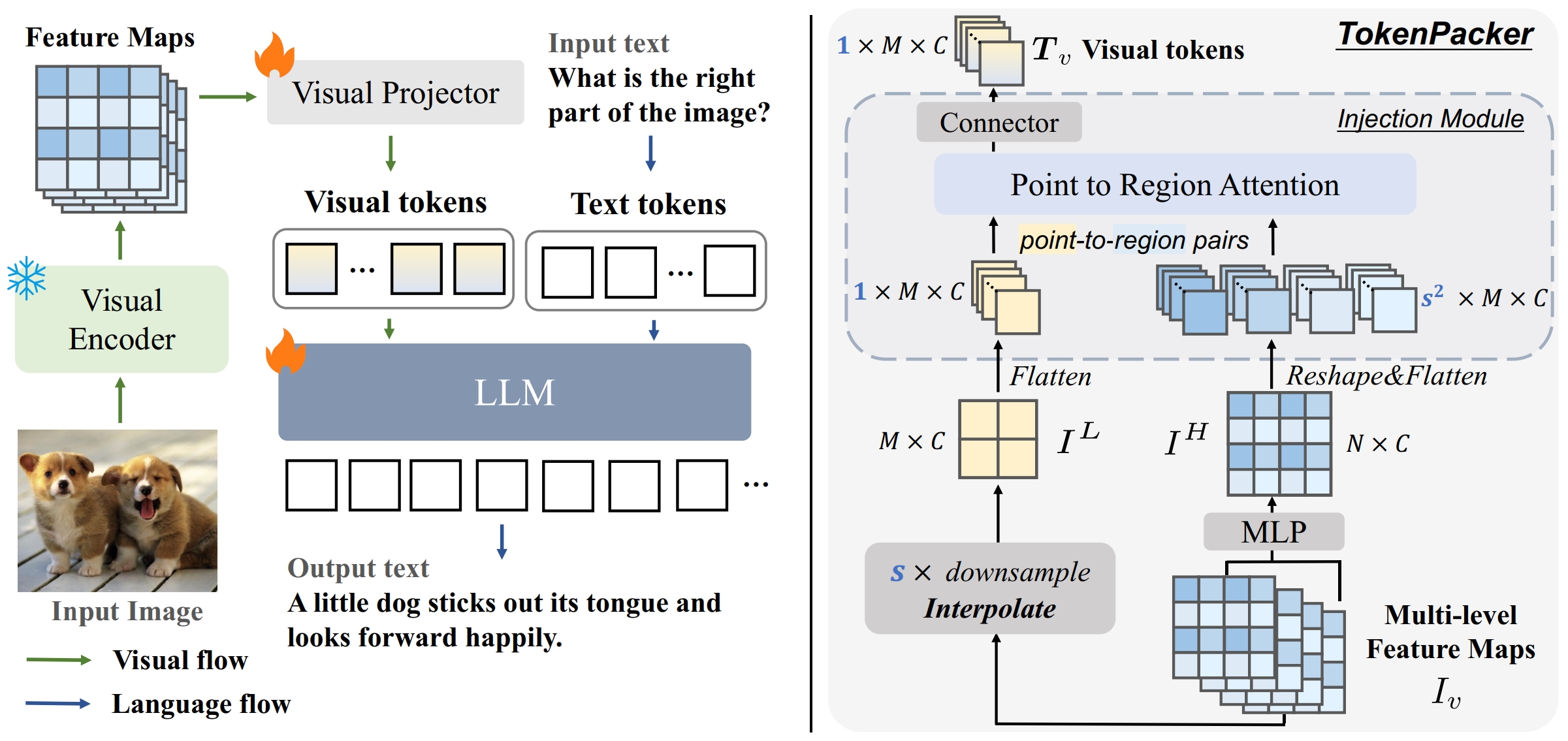

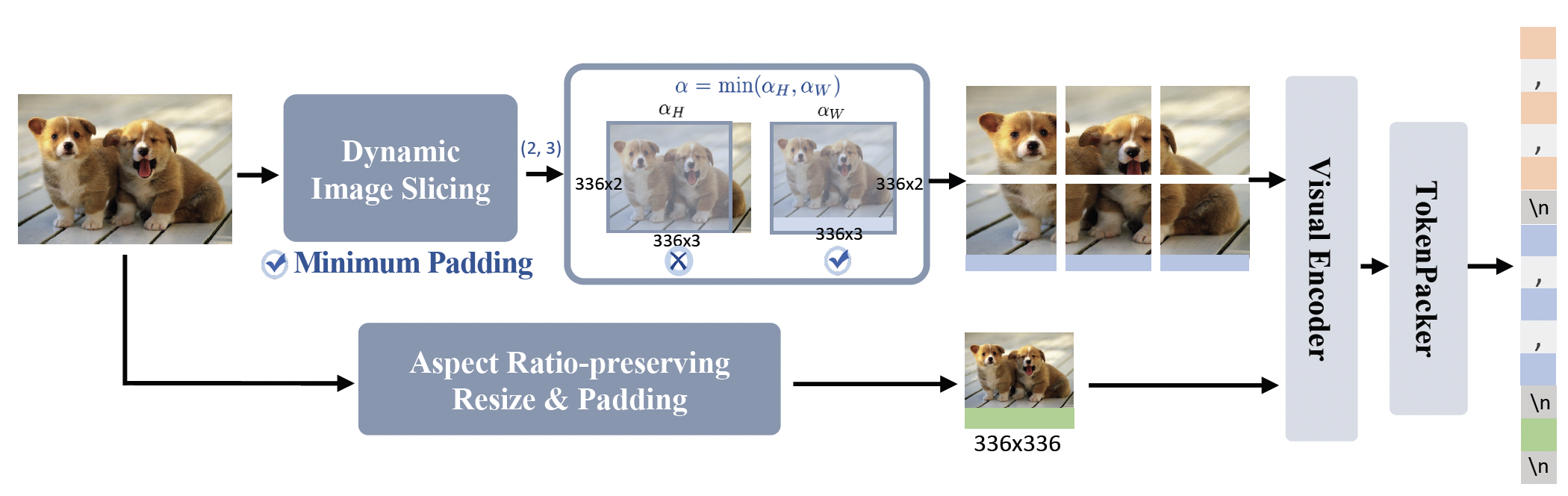

TokenPacker is a novel visual projector that adopts a coarse-to-fine scheme to inject enriched characteristics into the condensed visual tokens it generates. Using TokenPacker, we can compress the visual tokens by 75%∼89% while achieving comparable or even better performance across diverse benchmarks with significantly higher efficiency.

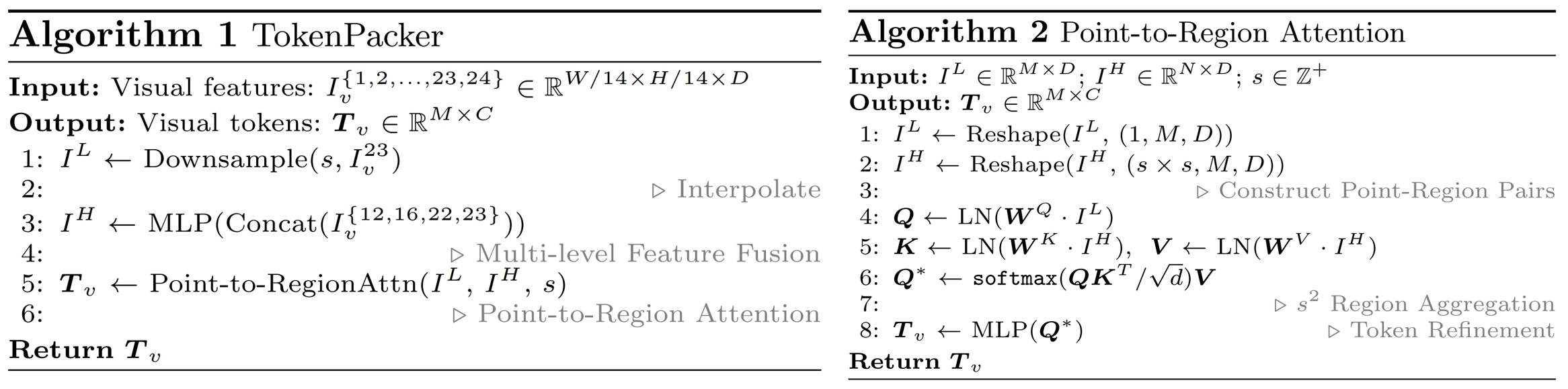

We provide pseudocode to showcase the detailed processing flow.

As a visual projector, TokenPacker is implemented by the class `TokenPacker`, which can be found in `multimodal_projector/builder.py`.
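To make the coarse-to-fine idea concrete, here is a minimal pure-Python sketch. It is not the code in `multimodal_projector/builder.py`: the function name `coarse_to_fine_pack`, the mean-pooled coarse query, the single unparameterized attention step over each region, and the residual sum are all illustrative assumptions, and the learned projections of a real projector are omitted.

```python
import math


def coarse_to_fine_pack(grid, scale):
    """Pack an H x W grid of feature vectors into (H/scale) x (W/scale) tokens.

    Hypothetical illustration: each output token starts as a coarse query
    (the mean of its scale x scale region) and is then enriched by attending
    to the fine-grained tokens of that same region.
    """
    height, width = len(grid), len(grid[0])
    assert height % scale == 0 and width % scale == 0, "grid must divide evenly"
    dim = len(grid[0][0])
    packed = []
    for top in range(0, height, scale):
        for left in range(0, width, scale):
            # Fine-grained tokens covered by this coarse position.
            region = [grid[top + dy][left + dx]
                      for dy in range(scale) for dx in range(scale)]
            # Coarse query: mean of the region (stand-in for downsampling).
            query = [sum(v[d] for v in region) / len(region) for d in range(dim)]
            # Scaled dot-product scores between the query and each fine token.
            scores = [sum(q * k for q, k in zip(query, v)) / math.sqrt(dim)
                      for v in region]
            # Numerically stable softmax over the region.
            peak = max(scores)
            weights = [math.exp(s - peak) for s in scores]
            total = sum(weights)
            weights = [w / total for w in weights]
            # Inject the attended fine-grained detail into the coarse query.
            injected = [sum(w * v[d] for w, v in zip(weights, region))
                        for d in range(dim)]
            packed.append([q + i for q, i in zip(query, injected)])
    return packed


# A 4x4 grid of 2-dimensional features packed with scale 2 yields 4 tokens.
toy_grid = [[[1.0, 2.0] for _ in range(4)] for _ in range(4)]
tokens = coarse_to_fine_pack(toy_grid, scale=2)
print(len(tokens))
```

With a scale of 2, every output token summarizes a 2x2 region, so the token count drops to one quarter of the input.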

To support efficient high-resolution image understanding, we further develop an effective image cropping method, TokenPacker-HD.

- Clone this repository and navigate to the TokenPacker folder

      git clone https://github.com/CircleRadon/TokenPacker.git
      cd TokenPacker

- Install packages

      conda create -n tokenpacker python=3.10 -y
      conda activate tokenpacker
      pip install --upgrade pip  # enable PEP 660 support
      pip install -e .

- Install additional packages for training cases

      pip install -e ".[train]"
      pip install flash-attn --no-build-isolation
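After installation, a quick sanity check can confirm that the environment looks as expected. The sketch below is only a convenience and not part of the repository: the helper name `check_environment` is hypothetical, and the default module list (`torch`, `flash_attn`) is an assumption about what the training setup needs.

```python
import importlib.util
import sys


def check_environment(required=("torch", "flash_attn")):
    """Report whether the interpreter and key modules match the install steps.

    `required` is an assumed list of top-level module names; adjust as needed.
    """
    # The conda command above pins Python 3.10.
    report = {"python_3_10": sys.version_info[:2] == (3, 10)}
    for name in required:
        # find_spec locates a top-level module without importing it.
        report[name] = importlib.util.find_spec(name) is not None
    return report


if __name__ == "__main__":
    for item, ok in check_environment().items():
        print(f"{item}: {'ok' if ok else 'missing or mismatched'}")
```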

To make a fair comparison, we use the same training data as in LLaVA-1.5, i.e., LLaVA-Pretrain-558K for stage 1, and Mix665k for stage 2.

- Stage 1: Image-Text Alignment Pre-training

      bash scripts/v1_5/pretrain.sh

- Stage 2: Visual Instruction Tuning

      bash scripts/v1_5/finetune.sh

Note: Use `--scale_factor` to control the compression ratio; supported values are [2, 3, 4].
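The effect of the scale factor on token count can be checked with a little arithmetic. The sketch below assumes a 336x336 input split into 14x14 patches (the CLIP ViT-L/14 setup used by LLaVA-1.5, which is not stated above) and that a scale factor of s keeps 1/s² of the tokens, which is consistent with the 1/4, 1/9, and 1/16 ratios in the model table. The function name `tokens_after_packing` is hypothetical.

```python
def tokens_after_packing(image_size=336, patch_size=14, scale_factor=2):
    """Return (full token count, packed token count, fraction of tokens removed).

    Assumes a square image, a square patch grid, and a 1/scale_factor**2
    compression ratio.
    """
    side = image_size // patch_size          # patches per side
    full = side * side                       # tokens before packing
    packed = full // (scale_factor ** 2)     # tokens after packing
    return full, packed, 1 - packed / full


for s in (2, 3, 4):
    full, packed, saved = tokens_after_packing(scale_factor=s)
    print(f"scale_factor={s}: {full} -> {packed} tokens ({saved:.1%} fewer)")
```

Under these assumptions a scale factor of 2 reduces 576 tokens to 144, the token number listed for the 336x336 models.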

To obtain competitive high-resolution performance, we use the 2.7M data organized by Mini-Gemini, i.e., 1.2M for stage 1 and 1.5M for stage 2.

- Stage 1: Image-Text Alignment Pre-training

      bash scripts/v1_5/pretrain_hd.sh

- Stage 2: Visual Instruction Tuning

      bash scripts/v1_5/finetune_hd.sh

Note:

- Use `--scale_factor` to control the compression ratio; supported values are [2, 3, 4].
- Use `--patch_num` to control the maximum number of patches an image is divided into; supported values are [9, 16, 25].
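To give a feel for what a maximum patch number constrains, the following sketch picks a crop grid for an image under a patch budget. It is a generic illustration, not the TokenPacker-HD cropping code: the function name `choose_grid` and the selection rule (closest aspect ratio, then most patches) are assumptions.

```python
import math


def choose_grid(width, height, max_patches):
    """Pick (cols, rows) with cols * rows <= max_patches.

    Hypothetical rule: prefer the grid whose aspect ratio is closest to the
    image's, breaking ties in favor of the grid with more patches.
    """
    target = width / height
    best_key, best_grid = None, None
    for rows in range(1, max_patches + 1):
        for cols in range(1, max_patches // rows + 1):
            # Log-ratio error treats "too wide" and "too tall" symmetrically.
            error = abs(math.log((cols / rows) / target))
            key = (error, -(cols * rows))
            if best_key is None or key < best_key:
                best_key, best_grid = key, (cols, rows)
    return best_grid


print(choose_grid(1344, 1344, 16))  # square image with a 16-patch budget
print(choose_grid(2000, 1000, 9))   # wide image with a 9-patch budget
```

A larger budget allows a finer grid and therefore more visual tokens per image, which is the trade-off the `--patch_num` setting exposes.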

| Model | Max Res. | Compression Ratio | Token Num. | Max Patch Num. | Training Data | Download |
|---|---|---|---|---|---|---|
| TokenPacker-7b | 336x336 | 1/4 | 144 | - | 558K+665K | checkpoints |
| TokenPacker-13b | 336x336 | 1/4 | 144 | - | 558K+665K | checkpoints |
| TokenPacker-HD-7b | 1088x1088 | 1/4 | ~954 | 9 | 1.2M+1.5M | checkpoints |
| TokenPacker-HD-13b | 1088x1088 | 1/4 | ~954 | 9 | 1.2M+1.5M | checkpoints |
| TokenPacker-HD-13b | 1344x1344 | 1/4 | ~1393 | 16 | 1.2M+1.5M | checkpoints |
| TokenPacker-HD-13b | 1344x1344 | 1/9 | ~619 | 16 | 1.2M+1.5M | checkpoints |
| TokenPacker-HD-13b | 1344x1344 | 1/16 | ~347 | 16 | 1.2M+1.5M | checkpoints |

Note:

- The `token number` of TokenPacker-HD is the `average` computed across all training and test data.
- The `558K+665K` training data follows LLaVA-1.5; the `1.2M+1.5M` training data follows Mini-Gemini.
- All models use Vicuna-7b/13b as the base LLM.

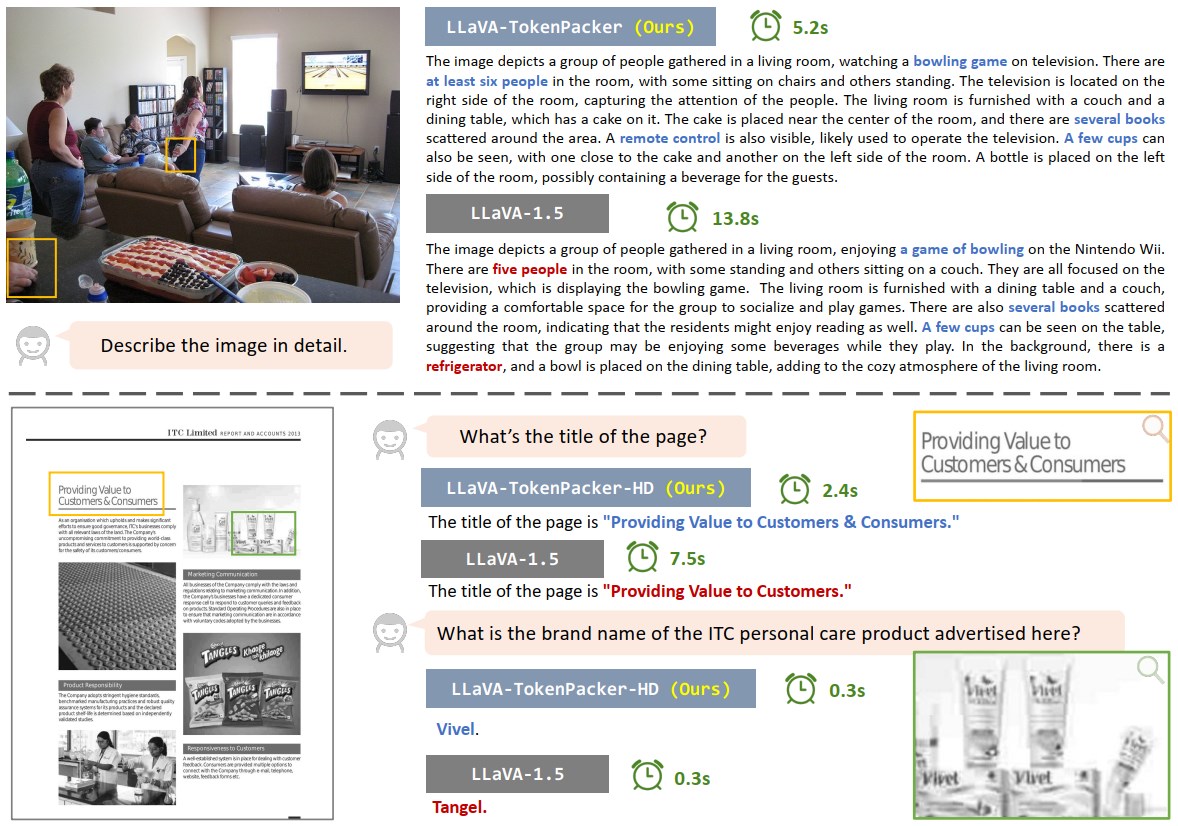

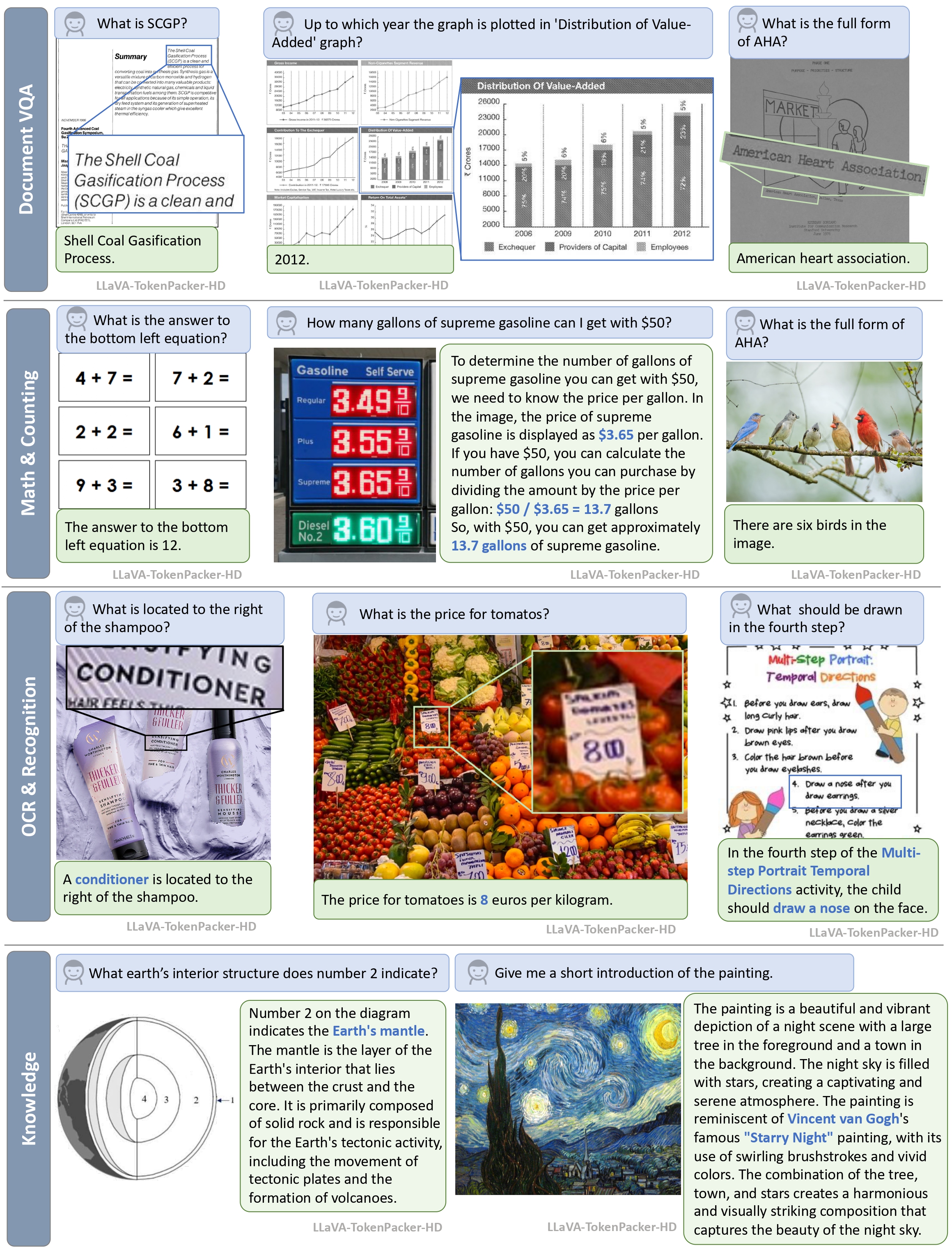

We provide some visual examples.

High-resolution image understanding.

- Release the training and inference code.

- Release all checkpoints.

- LLaVA-v1.5: the codebase we built upon.

- Mini-Gemini: the organized data we used for training the high-resolution method.

For more recent related work, please refer to the Awesome-Token-Compress repo.

    @misc{TokenPacker,
      title={TokenPacker: Efficient Visual Projector for Multimodal LLM},
      author={Wentong Li and Yuqian Yuan and Jian Liu and Dongqi Tang and Song Wang and Jianke Zhu and Lei Zhang},
      year={2024},
      eprint={2407.02392},
      archivePrefix={arXiv},
      primaryClass={cs.CV}
    }