This repository is the official implementation of OOTDiffusion.

🤩 Please give me a star if you find it interesting!

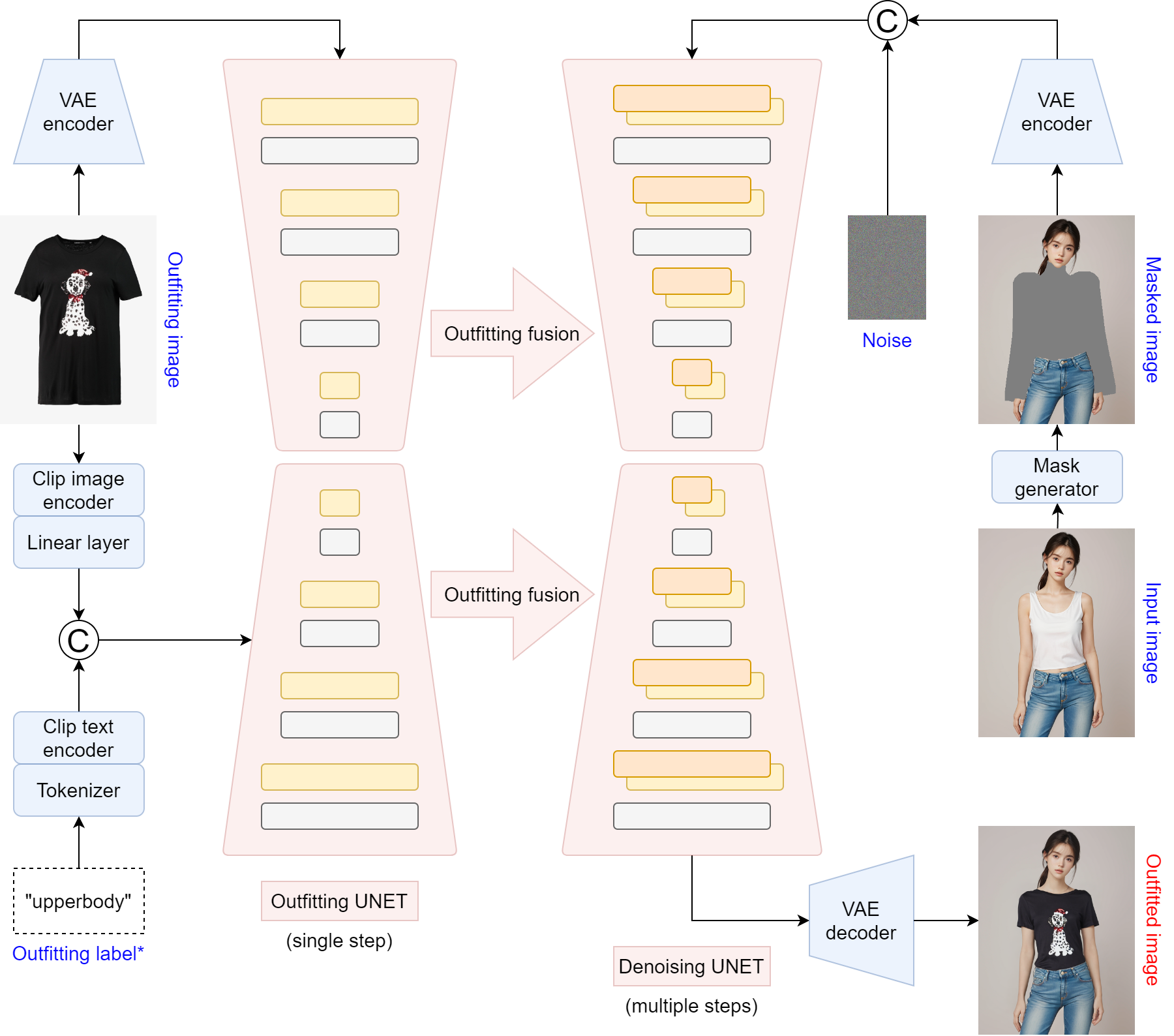

OOTDiffusion: Outfitting Fusion based Latent Diffusion for Controllable Virtual Try-on

Yuhao Xu, Tao Gu, Weifeng Chen, Chengcai Chen

Xiao-i Research

Our paper is coming soon!

🔥🔥 Our model checkpoints trained on VITON-HD (768 × 1024) have been released!

Checkpoints trained on Dress Code (768 × 1024) will be released soon. Thanks for your patience ❤

🤗 Hugging Face Link

We use the humanparsing and openpose checkpoints for preprocessing. Please refer to their guidance if you run into related environment issues.

Please download clip-vit-large-patch14 into the checkpoints folder.
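One way to fetch the CLIP weights is `snapshot_download` from `huggingface_hub`, which is normally installed as a dependency of `diffusers` and `transformers`. This is a minimal sketch under two assumptions not stated above: the Hub repo id is `openai/clip-vit-large-patch14`, and the code expects the weights at `checkpoints/clip-vit-large-patch14` relative to the repository root. The helper names are hypothetical.

```python
from pathlib import Path

# Assumption: Hugging Face Hub id of the CLIP checkpoint.
CLIP_REPO_ID = "openai/clip-vit-large-patch14"


def clip_target_dir(repo_root: str = ".") -> Path:
    """Return the folder the CLIP checkpoint is assumed to live in
    (checkpoints/clip-vit-large-patch14 under the repository root)."""
    return Path(repo_root) / "checkpoints" / "clip-vit-large-patch14"


def download_clip(repo_root: str = ".") -> Path:
    """Download every file of the CLIP repo into the checkpoints folder."""
    # Imported lazily so clip_target_dir works without huggingface_hub.
    from huggingface_hub import snapshot_download

    target = clip_target_dir(repo_root)
    target.mkdir(parents=True, exist_ok=True)
    snapshot_download(repo_id=CLIP_REPO_ID, local_dir=str(target))
    return target
```

Calling `download_clip()` from the repository root should leave the CLIP files in `checkpoints/clip-vit-large-patch14`; if the inference code looks elsewhere, pass a different `repo_root` or move the folder.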

- Clone the repository

git clone https://github.com/levihsu/OOTDiffusion

- Create a conda environment and install the required packages

conda create -n ootd python==3.10

conda activate ootd

pip install torch==2.0.1 torchvision==0.15.2 torchaudio==2.0.2 numpy==1.24.4 scipy==1.10.1 scikit-image==0.21.0 opencv-python==4.7.0.72 pillow==9.4.0 diffusers==0.24.0 transformers==4.36.2 accelerate==0.26.1 matplotlib==3.7.4 tqdm==4.64.1 gradio==4.16.0 config==0.5.1 einops==0.7.0 ninja==1.10.2

- Half-body model

cd OOTDiffusion/run

python run_ootd.py --model_path <model-image-path> --cloth_path <cloth-image-path> --scale 2.0 --sample 4

- Full-body model

The garment category (--category) must match the garment in the cloth image: 0 = upperbody; 1 = lowerbody; 2 = dress.
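The category code is simply an integer index into three garment types. As a minimal sketch, the hypothetical helper below (not part of the repository) maps the codes to names and assembles the full-body `run_ootd.py` argument list using only the flags documented in this README. It can reject an out-of-range code, but it cannot tell whether the code actually matches the garment in the image; that pairing is still up to you.

```python
from typing import List

# Category codes for the full-body (dc) model, as listed in this README.
CATEGORIES = {0: "upperbody", 1: "lowerbody", 2: "dress"}


def full_body_command(model_path: str, cloth_path: str, category: int,
                      scale: float = 2.0, sample: int = 4) -> List[str]:
    """Build the run_ootd.py argument list for the full-body (dc) model.

    Hypothetical helper: it only checks that `category` is one of the
    documented codes, not that it matches the garment in `cloth_path`.
    """
    if category not in CATEGORIES:
        raise ValueError(
            f"category must be one of {sorted(CATEGORIES)}, got {category}")
    return [
        "python", "run_ootd.py",
        "--model_path", model_path,
        "--cloth_path", cloth_path,
        "--model_type", "dc",
        "--category", str(category),
        "--scale", str(scale),
        "--sample", str(sample),
    ]
```

The returned list can be handed to `subprocess.run(cmd, cwd="OOTDiffusion/run", check=True)`; keeping the arguments as a list avoids shell quoting problems when image paths contain spaces.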

cd OOTDiffusion/run

python run_ootd.py --model_path <model-image-path> --cloth_path <cloth-image-path> --model_type dc --category 2 --scale 2.0 --sample 4

- Paper

- Gradio demo

- Inference code

- Model weights

- Training code