Research carried out as part of the master's thesis "DeepRL-based Motion Planning for Indoor Mobile Robot Navigation" at the Institute of Systems and Robotics - University of Coimbra (ISR-UC).

| Module | Software/Hardware |
|---|---|
| Python IDE | PyCharm |
| Deep Learning library | TensorFlow + Keras |
| GPU | GeForce GTX 1060 |
| Interpreter | Python 3.8 |
| Packages | requirements.txt |

To set up PyCharm + Anaconda + GPU, consult the setup file here.

To install the required packages (requirements.txt), download the file into the project folder and run the following command in the project environment terminal:

pip install -r requirements.txt
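After installation, it can help to confirm that every package listed in requirements.txt is actually present in the active environment. The following is a minimal sketch using only the standard library. The helper names `requirement_names` and `missing_packages` are illustrative and not part of the project, and the name parsing is deliberately simplistic (it ignores extras, environment markers, and URL requirements).

```python
import re
from importlib import metadata
from pathlib import Path

# Matches the leading distribution name of a requirement line,
# e.g. "numpy" in "numpy==1.19.5".
_NAME_RE = re.compile(r"^[A-Za-z0-9][A-Za-z0-9._-]*")


def requirement_names(text):
    """Return the package names found in the text of a requirements file."""
    names = []
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line or line.startswith("-"):  # skip blanks and pip options
            continue
        match = _NAME_RE.match(line)
        if match:
            names.append(match.group(0))
    return names


def missing_packages(names):
    """Return the names that are not installed in the current environment."""
    missing = []
    for name in names:
        try:
            metadata.version(name)
        except metadata.PackageNotFoundError:
            missing.append(name)
    return missing


if __name__ == "__main__":
    req_file = Path("requirements.txt")  # assumed to sit in the project folder
    if req_file.exists():
        names = requirement_names(req_file.read_text())
        print("Missing packages:", missing_packages(names))
```

An empty list means every listed package was found. Version constraints are not checked in this sketch.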

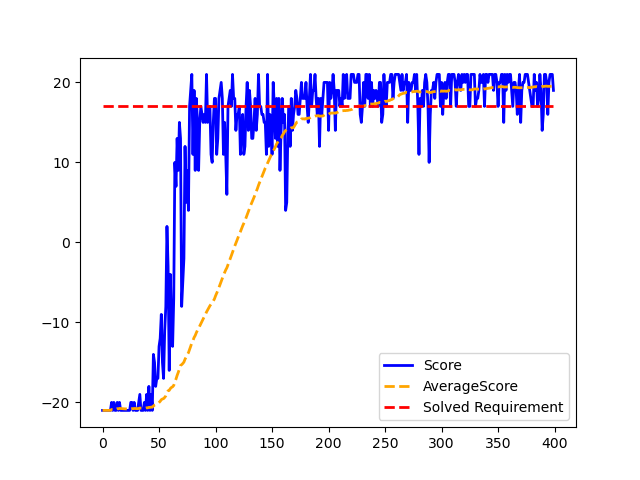

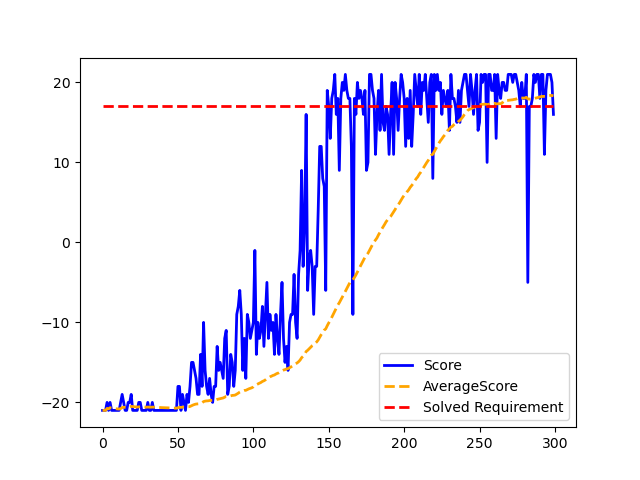

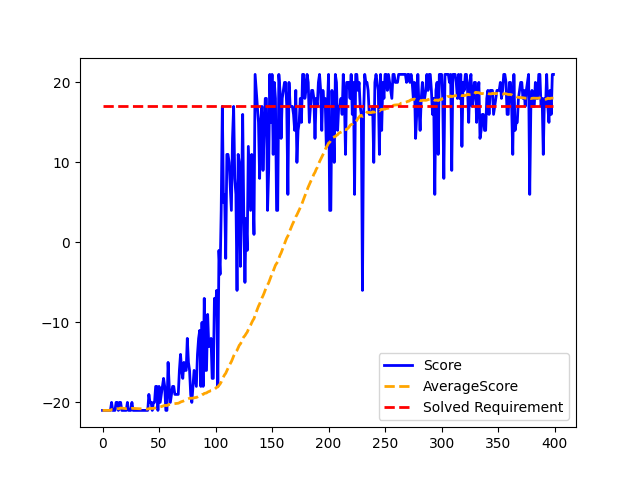

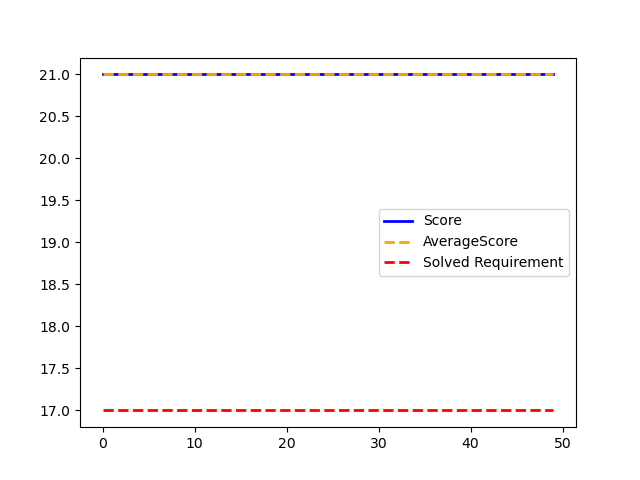

The training process generates a .txt file that tracks the network models (in 'tf' and .h5 formats) that met the environment's solved requirement. Additionally, an overview image (graph) of the training procedure is created.

To run several training procedures, the .txt, .png, and directory names must be changed between runs. Otherwise, the information from previous training runs will be overwritten and lost.
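One way to avoid overwriting earlier results is to give each run its own directory. The sketch below is an illustration only, not the project's code: the function `make_run_paths` and the file names `log.txt`, `overview.png`, and `models` are hypothetical placeholders for the .txt file, the .png graph, and the saved-model directory.

```python
from datetime import datetime
from pathlib import Path


def make_run_paths(base_dir, run_name=None):
    """Create a fresh directory for one training run and return its output paths.

    All file and directory names here are hypothetical placeholders.
    """
    if run_name is None:
        # Timestamped name, e.g. "run_20240131_154500".
        run_name = datetime.now().strftime("run_%Y%m%d_%H%M%S")
    run_dir = Path(base_dir) / run_name
    # exist_ok=False raises FileExistsError instead of silently reusing an old run.
    run_dir.mkdir(parents=True, exist_ok=False)
    return {
        "log": run_dir / "log.txt",
        "plot": run_dir / "overview.png",
        "models": run_dir / "models",
    }
```

Calling `make_run_paths("results")` before each training run would then yield distinct paths, and reusing an existing run name fails loudly rather than overwriting files.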

When testing the saved network models with the .h5 model, a 5-episode training run is required first to initialize/build the keras.model network. The warnings mentioned above therefore also apply in this case.

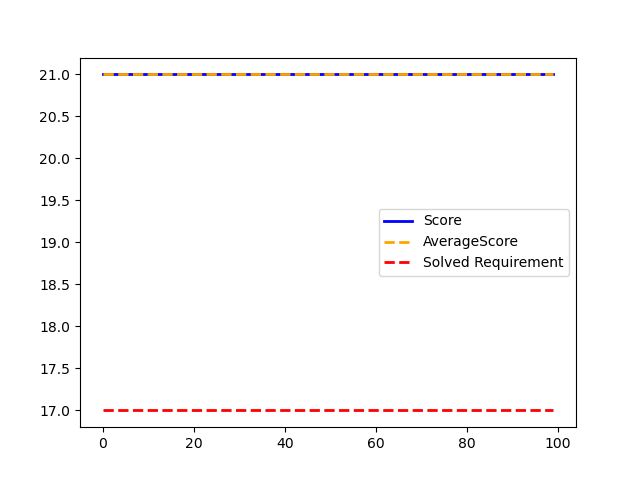

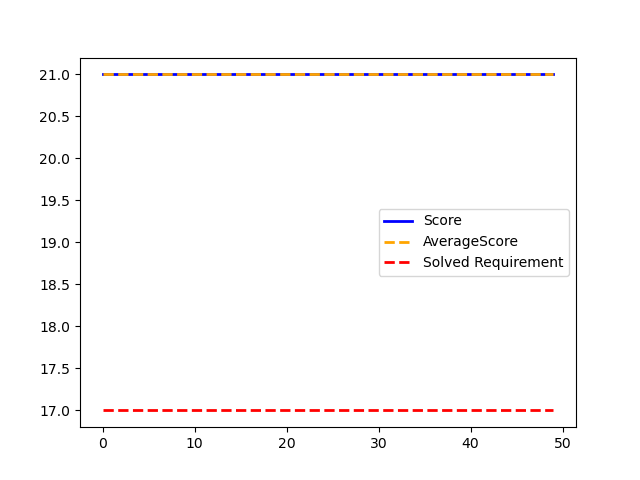

Loading the saved model in 'tf' format is the recommended option. After testing finishes, an overview image (graph) of the training procedure is also generated.

Actions:

0 - No action

1 - No action

2 - Racket moves up

3 - Racket moves down

4 - Racket moves up

5 - Racket moves down

Actions (2 & 4) and (3 & 5) - Same movement with different amplitudes
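The action list above can be written as a small lookup table, which makes the duplicated movements explicit. This is only a sketch: the movement labels (`"none"`, `"up"`, `"down"`) and the helper `actions_by_movement` are names chosen for illustration, and the amplitude difference between paired actions is not modelled.

```python
# Action indices as listed above; the movement labels are descriptive
# names chosen for this sketch.
ACTION_MOVEMENT = {
    0: "none",
    1: "none",
    2: "up",
    3: "down",
    4: "up",
    5: "down",
}


def actions_by_movement(mapping):
    """Group action indices by the movement they produce."""
    groups = {}
    for action, movement in sorted(mapping.items()):
        groups.setdefault(movement, []).append(action)
    return groups
```

Grouping the table this way shows three distinct movements behind the six action indices.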

States:

Stack of 4 cropped, grayscale (80, 80) images (6400 pixels each)
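Keeping the four most recent frames as one state can be sketched with a fixed-length queue. The class `FrameStack` below is a hypothetical helper, not the project's implementation. It treats frames as opaque objects (in practice they would be 80x80 arrays), it leaves out cropping and grayscale conversion because the exact crop bounds are not given here, and repeating the first frame on reset is an assumed convention.

```python
from collections import deque


class FrameStack:
    """Keep the most recent `size` preprocessed frames as one state."""

    def __init__(self, size=4):
        self.size = size
        self.frames = deque(maxlen=size)

    def reset(self, first_frame):
        """Start an episode by repeating the first frame (assumed convention)."""
        self.frames.clear()
        for _ in range(self.size):
            self.frames.append(first_frame)
        return self.state()

    def push(self, frame):
        """Add the newest frame; the oldest one is dropped automatically."""
        self.frames.append(frame)
        return self.state()

    def state(self):
        """Return the stacked frames, oldest first."""
        return list(self.frames)
```

Stacking consecutive frames gives the network access to motion information, such as the ball's direction, that a single image cannot convey.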

Rewards:

Scalar value (1) for a winning rally

Scalar value (-1) for a losing rally

Episode termination:

Player reaches a score of 21

Episode length > 400000
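The reward and termination rules above can be combined into a small bookkeeping helper. This is a sketch under stated assumptions: `EpisodeTracker` is a hypothetical class, the episode length is counted in environment steps, and the episode is assumed to end when either side reaches 21 points.

```python
WIN_SCORE = 21              # score that ends the episode, from the list above
MAX_EPISODE_STEPS = 400000  # step limit, from the list above


class EpisodeTracker:
    """Accumulate rally outcomes and decide when an episode is over."""

    def __init__(self):
        self.agent_score = 0
        self.opponent_score = 0
        self.steps = 0

    def update(self, reward):
        """Record one environment step; return True when the episode should end."""
        self.steps += 1
        if reward > 0:      # +1: the agent won the rally
            self.agent_score += 1
        elif reward < 0:    # -1: the agent lost the rally
            self.opponent_score += 1
        return self.done()

    def done(self):
        # Assumption: either side reaching WIN_SCORE ends the episode.
        reached_score = max(self.agent_score, self.opponent_score) >= WIN_SCORE
        return reached_score or self.steps > MAX_EPISODE_STEPS
```

In a training loop, `update` would be called once per step with the reward returned by the environment.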

Solved Requirement:

Average score of 17 over 100 consecutive trials
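The solved requirement amounts to a rolling mean over the last 100 episode scores. The sketch below shows one way to check it. `SolvedChecker` is a hypothetical name, and treating the threshold as "greater than or equal to 17" is an assumption.

```python
from collections import deque


class SolvedChecker:
    """Track episode scores and test the rolling-average requirement."""

    def __init__(self, target=17.0, window=100):
        self.target = target
        self.scores = deque(maxlen=window)  # keeps only the last `window` scores

    def add(self, episode_score):
        """Record a finished episode; return True once the requirement is met."""
        self.scores.append(episode_score)
        return self.is_solved()

    def average(self):
        """Mean of the stored scores, or NaN if no episode has finished yet."""
        return sum(self.scores) / len(self.scores) if self.scores else float("nan")

    def is_solved(self):
        # Require a full window so a few early lucky episodes do not count.
        full = len(self.scores) == self.scores.maxlen
        return full and self.average() >= self.target  # ">=" is an assumption
```

A training loop could call `add` after each episode and save the current model whenever it returns `True`.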

| Train | Test |
|---|---|

Network model used for testing: 'saved_networks/dqn_model10' ('tf' model, also available in .h5)

| Train | Test |
|---|---|

Network model used for testing: 'saved_networks/duelingdqn_model30' ('tf' model, also available in .h5)

| Train | Test |
|---|---|

Network model used for testing: 'saved_networks/d3qn_model50' ('tf' model, also available in .h5)