This is a PyTorch implementation of the LipForensics paper.

pip install -r requirements.txt

Note: we used Python version 3.8 to test this code.


Download the following datasets (you will be asked to fill out some forms before downloading):

- FaceForensics++ (FF++) / FaceShifter (c23)

- DeeperForensics

- CelebDF-v2

- DFDC (the test set of the full version, not the Preview)


Extract the frames (e.g. using code in the FaceForensics++ repo). The frame filenames should be as follows: 0000.png, 0001.png, ...
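Before moving on, it can help to check that each video's frames follow this naming scheme. The sketch below is a hypothetical helper, not part of the repo; it assumes frames are numbered contiguously from 0000.png with no gaps, which the naming above suggests but does not state explicitly.

```python
import re
from pathlib import Path

# Matches four-digit, zero-padded PNG names such as "0000.png" or "0102.png".
FRAME_NAME = re.compile(r"^\d{4}\.png$")


def frame_name(index: int) -> str:
    """Return the expected filename for the frame at `index` (0 -> '0000.png')."""
    return f"{index:04d}.png"


def check_frame_names(names):
    """Return a list of problems with one video's frame filenames.

    Hypothetical check: badly named files are reported individually, and the
    well-named frames must form the contiguous sequence 0000, 0001, ...
    """
    problems = [n for n in names if not FRAME_NAME.match(n)]
    numbered = sorted(int(n[:4]) for n in names if FRAME_NAME.match(n))
    if numbered != list(range(len(numbered))):
        problems.append("frames are not numbered 0000, 0001, ... without gaps")
    return problems


def check_frame_dir(video_dir):
    """Run check_frame_names on every file in a directory of extracted frames."""
    return check_frame_names([p.name for p in Path(video_dir).iterdir() if p.is_file()])
```

An empty list from `check_frame_dir("path/to/video")` means the directory looks consistent with the expected naming.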


Detect the faces and compute 68 facial landmarks for each frame. For example, RetinaFace (face detection) and FAN (landmark localisation) give good results.
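For reference, the sketch below lists the index ranges of the widely used iBUG 300-W 68-point scheme, assuming your landmark detector follows that ordering (FAN-based tools typically do). The mouth occupies indices 48-67, which is the region this method focuses on. `LANDMARK_GROUPS` and `mouth_points` are illustrative names, not part of the repo.

```python
# 0-based, end-exclusive index ranges of the iBUG 300-W 68-point landmark scheme.
LANDMARK_GROUPS = {
    "jaw": range(0, 17),
    "eyebrows": range(17, 27),
    "nose": range(27, 36),
    "eyes": range(36, 48),
    "outer_lip": range(48, 60),
    "inner_lip": range(60, 68),
}


def mouth_points(landmarks):
    """Return the 20 mouth landmarks (outer lip 48-59, inner lip 60-67)."""
    if len(landmarks) != 68:
        raise ValueError(f"expected 68 landmarks, got {len(landmarks)}")
    indices = [*LANDMARK_GROUPS["outer_lip"], *LANDMARK_GROUPS["inner_lip"]]
    return [landmarks[i] for i in indices]
```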


Place face frames and corresponding landmarks into the appropriate directories:

- For FaceForensics++, FaceShifter, and DeeperForensics, frames for a given video should be placed in `data/datasets/Forensics/{dataset_name}/{compression}/images/{video}`, where `dataset_name` is RealFF (real frames from FF++), Deepfakes, FaceSwap, Face2Face, NeuralTextures, FaceShifter, or DeeperForensics; `compression` is c0, c23, or c40, corresponding to no compression, low compression, and high compression, respectively; and `video` is the video name, numbered as follows: 000, 001, .... For example, frame 0102 of real video 067 at c23 compression is found in `data/datasets/Forensics/RealFF/c23/images/067/0102.png`.

- For CelebDF-v2, frames for a given video should be placed in `data/datasets/CelebDF/{dataset_name}/images/{video}`, where `dataset_name` is RealCelebDF, which should include all real videos from the test set, or FakeCelebDF, which should include all fake videos from the test set.

- For DFDC, frames for a given video should be placed in `data/datasets/DFDC/images` (both real and fake). The video names from the test set we used in our experiments are given in `data/datasets/DFDC/dfdc_all_vids.txt`.

The corresponding computed landmarks for each frame should be placed in `.npy` format in the directories obtained by replacing `images` with `landmarks` above (e.g. for video "000", the `.npy` files for each frame should be placed in `data/datasets/Forensics/RealFF/c23/landmarks/000`).
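The FaceForensics++/FaceShifter/DeeperForensics layout can be expressed as a small path builder, which is handy when writing your own extraction or landmark scripts. This is a hypothetical helper, not part of the repo, and it assumes each landmark file reuses the frame number with a `.npy` extension (e.g. `0102.npy`), which the layout above implies but does not spell out.

```python
from pathlib import PurePosixPath

# Values listed in this README for the Forensics directory tree.
FORENSICS_DATASETS = {"RealFF", "Deepfakes", "FaceSwap", "Face2Face",
                      "NeuralTextures", "FaceShifter", "DeeperForensics"}
COMPRESSIONS = {"c0", "c23", "c40"}


def forensics_path(dataset_name, compression, video, frame, kind="images"):
    """Hypothetical helper: path of one frame (kind="images") or its landmarks (kind="landmarks").

    Assumes a landmark file reuses the frame number with a .npy extension.
    """
    if dataset_name not in FORENSICS_DATASETS:
        raise ValueError(f"unknown dataset_name: {dataset_name}")
    if compression not in COMPRESSIONS:
        raise ValueError(f"unknown compression: {compression}")
    if kind not in ("images", "landmarks"):
        raise ValueError(f"kind must be 'images' or 'landmarks', got {kind}")
    suffix = ".png" if kind == "images" else ".npy"
    # Videos use three-digit names (000, 001, ...), frames four-digit names (0000, ...).
    return str(PurePosixPath("data/datasets/Forensics", dataset_name, compression,
                             kind, f"{video:03d}", f"{frame:04d}{suffix}"))
```

For example, `forensics_path("RealFF", "c23", 67, 102)` reproduces the example path given above for frame 0102 of real video 067.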


To crop the mouth region from each frame for all datasets, run

python preprocessing/crop_mouths.py --dataset all

This will write the mouth images into the corresponding `cropped_mouths` directory.
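To illustrate the general idea of a mouth crop, the sketch below computes a fixed-size box centred on the mean of the mouth landmarks (indices 48-67 in the iBUG 68-point order). It is a simplified illustration only: it is not what `preprocessing/crop_mouths.py` does internally (that script may, for instance, align faces or smooth landmarks over time), and the default `size=96` is a hypothetical choice.

```python
def mouth_crop_box(landmarks, width, height, size=96):
    """Return (left, top, right, bottom) of a size x size box centred on the mouth.

    `landmarks` holds 68 (x, y) pairs in iBUG order, so the mouth is indices 48-67.
    The box is shifted, not shrunk, so that it stays inside a width x height frame.
    """
    if width < size or height < size:
        raise ValueError("frame is smaller than the crop size")
    mouth = landmarks[48:68]
    # Centre of the crop: mean position of the 20 mouth landmarks.
    cx = sum(x for x, _ in mouth) / len(mouth)
    cy = sum(y for _, y in mouth) / len(mouth)
    half = size // 2
    # Clamp the top-left corner so the whole box lies inside the frame.
    left = min(max(round(cx) - half, 0), width - size)
    top = min(max(round(cy) - half, 0), height - size)
    return left, top, left + size, top + size
```

The returned tuple follows the (left, upper, right, lower) convention accepted by Pillow's `Image.crop`, so a frame could be cropped with `Image.open(path).crop(box)`.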

- Cross-dataset generalisation (Table 2 in the paper):


Download the pretrained model and place it into `models/weights`. This model was trained on FaceForensics++ (Deepfakes, FaceSwap, Face2Face, and NeuralTextures) and is the one used to obtain the LipForensics video-level AUC results in Table 2 of the paper, reproduced below:

| CelebDF-v2 | DFDC | FaceShifter | DeeperForensics |
|---|---|---|---|
| 82.4% | 73.5% | 97.1% | 97.6% |
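These are video-level AUC scores. The sketch below shows one common way to get them, assuming clip-level "fake" probabilities are averaged into a single score per video; that aggregation is an assumption here, and `evaluate.py` may do it differently. The AUC is computed as the probability that a randomly chosen fake video scores higher than a randomly chosen real one (ties count as half), which gives the same value as scikit-learn's `roc_auc_score` for binary labels.

```python
from collections import defaultdict
from statistics import mean


def video_scores(clip_predictions):
    """Average clip-level fake probabilities into one score per video.

    `clip_predictions` is an iterable of (video_id, score) pairs.
    """
    per_video = defaultdict(list)
    for video_id, score in clip_predictions:
        per_video[video_id].append(score)
    return {video_id: mean(scores) for video_id, scores in per_video.items()}


def roc_auc(labels, scores):
    """ROC AUC: probability that a fake (label 1) outscores a real (label 0) video.

    Ties count as half. O(n_fake * n_real), fine for a few thousand videos.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one real and one fake video")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))
```

Usage: compute `scores = video_scores(clips)`, then pass the per-video labels and scores in the same order, e.g. `roc_auc([labels[v] for v in scores], list(scores.values()))`.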

To evaluate on e.g. FaceShifter, run

python evaluate.py --dataset FaceShifter --weights_forgery ./models/weights/lipforensics_ff.pth


If you find this repo useful for your research, please consider citing the following:

@inproceedings{haliassos2021lips,

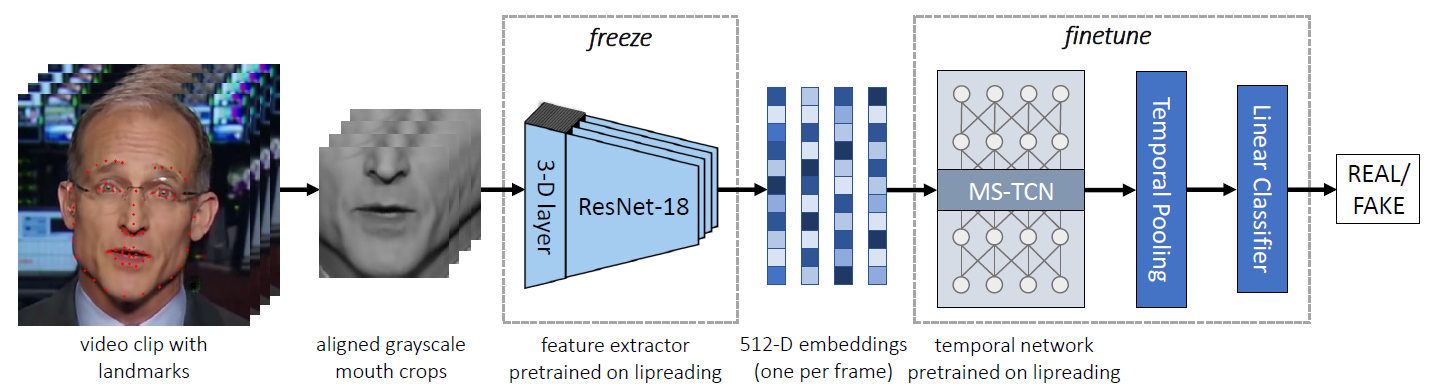

title={Lips Don't Lie: A Generalisable and Robust Approach To Face Forgery Detection},

author={Haliassos, Alexandros and Vougioukas, Konstantinos and Petridis, Stavros and Pantic, Maja},

booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},

pages={5039--5049},

year={2021}

}