Object detection on compressed images

Cameras installed on autonomous driving cars generate a large amount of high-quality data: about 9 GB per minute. Several petabytes are collected in a month, and storing this much data is costly for a company. Motivated by this problem, we investigate how far images can be compressed without losing object detection accuracy.
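As a rough check on these volumes, the short sketch below turns the 9 GB per minute rate into a monthly total. Only the recording rate comes from this README; the driving hours per day, days per month, and fleet size are hypothetical parameters chosen for illustration, and decimal units (1 TB = 1000 GB) are assumed.

```python
def monthly_volume_tb(rate_gb_per_min, hours_per_day, days_per_month, num_cars):
    """Estimate the data volume recorded per month, in terabytes.

    All arguments except the rate are illustrative assumptions,
    not figures reported by this project.
    """
    minutes_per_month = hours_per_day * 60 * days_per_month
    total_gb = rate_gb_per_min * minutes_per_month * num_cars
    return total_gb / 1000.0  # decimal units: 1 TB = 1000 GB


if __name__ == "__main__":
    # Hypothetical scenario: 8 hours of recording per day, 30 days, 20 cars.
    volume_tb = monthly_volume_tb(9, hours_per_day=8, days_per_month=30, num_cars=20)
    print(f"{volume_tb:.1f} TB per month ({volume_tb / 1000.0:.2f} PB)")
```

Under these assumed numbers a modest fleet already reaches the petabyte range within a month, which is consistent with the order of magnitude stated above.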

We apply transfer learning on a private dataset using the state-of-the-art SSD and Faster R-CNN networks pre-trained on the KITTI and COCO image datasets.

Below are sample object detection results from Faster R-CNN on JPEG images at 100%, 40%, and 5% quality.

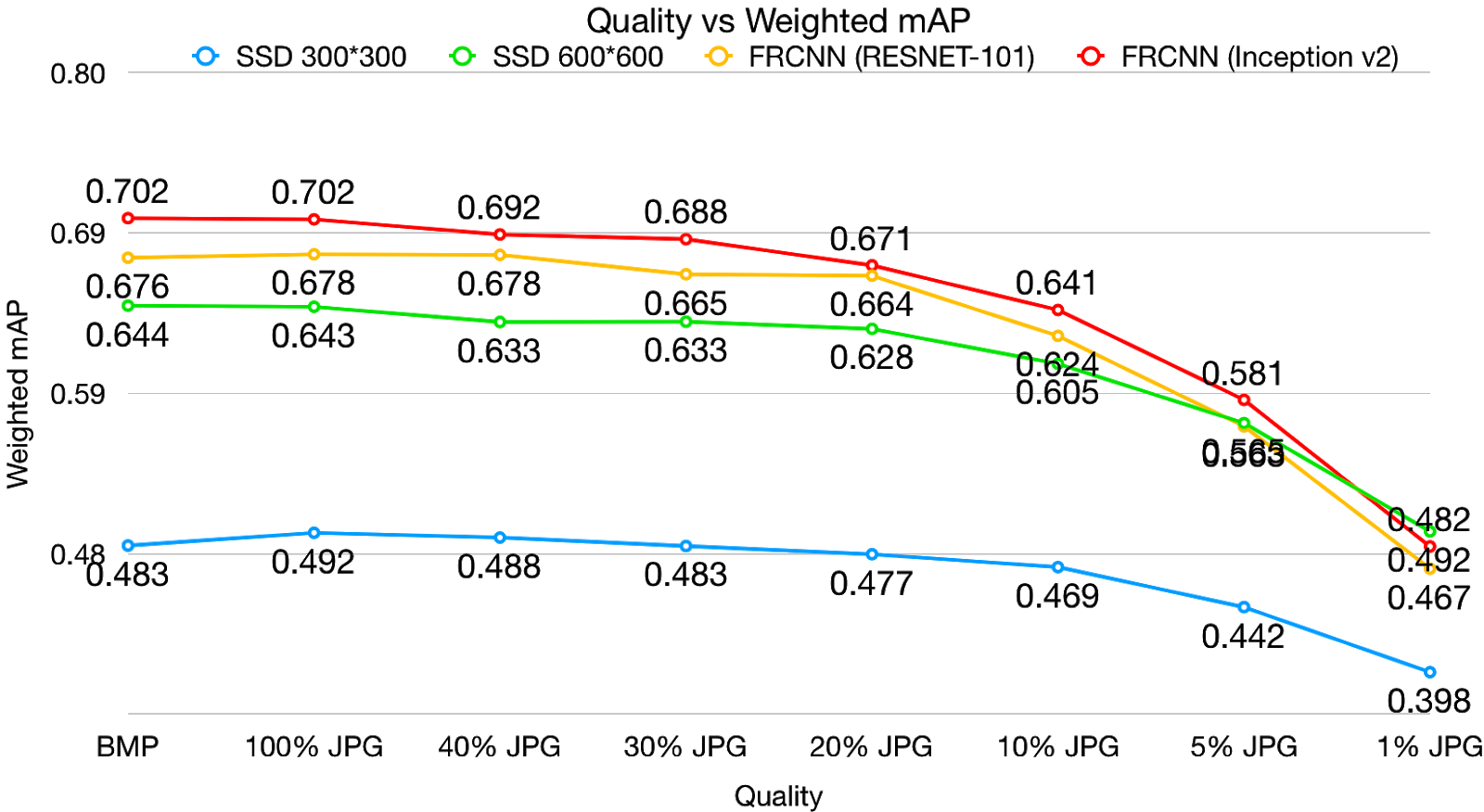

Overall object detection results are shown in the figure below.

The results demonstrate that running object detection on compressed images (down to 40% JPEG quality) does not cause a significant drop in accuracy, while storage requirements decrease substantially.
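The quality levels compared above can be reproduced by re-encoding an image as JPEG at different quality settings and comparing the resulting sizes. The sketch below is a minimal illustration that assumes the Pillow library is installed; the helper names `reencode_jpeg` and `size_by_quality` are hypothetical and are not part of this repository, and the project's own compression step may differ.

```python
import io

from PIL import Image


def reencode_jpeg(image, quality):
    """Return the bytes of `image` encoded as JPEG at the given quality (1-100)."""
    buffer = io.BytesIO()
    # JPEG has no alpha channel, so convert to RGB before saving.
    image.convert("RGB").save(buffer, format="JPEG", quality=quality)
    return buffer.getvalue()


def size_by_quality(image, qualities=(100, 40, 5)):
    """Map each JPEG quality setting to the encoded size in bytes."""
    return {q: len(reencode_jpeg(image, q)) for q in qualities}


if __name__ == "__main__":
    # A synthetic noise image stands in for a camera frame (assumption:
    # real frames would be loaded with Image.open on files under data/raw).
    frame = Image.effect_noise((256, 256), 50)
    for quality, size in size_by_quality(frame).items():
        print(f"quality={quality:3d}  size={size} bytes")
```

The re-encoded bytes can be written to disk or decoded again with `Image.open(io.BytesIO(data))` before being passed to a detector, so the same evaluation can be repeated at each quality level.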

    ├── LICENSE
    ├── README.md          <- The top-level README for developers using this project.
    ├── data
    │   ├── external       <- Data from third party sources.
    │   ├── interim        <- Intermediate data that has been transformed.
    │   ├── processed      <- The final, canonical data sets for modeling.
    │   └── raw            <- The original, immutable data dump.
    │
    ├── models             <- Trained and serialized models, model predictions, or model summaries
    │
    ├── notebooks          <- Jupyter notebooks. Naming convention is a number (for ordering),
    │                         the creator's initials, and a short `-` delimited description, e.g.
    │                         `1.0-jqp-initial-data-exploration`.
    │
    ├── reports            <- Generated analysis as HTML, PDF, LaTeX, etc.
    │   └── figures        <- Generated graphics and figures to be used in reporting
    │
    ├── requirements.txt   <- The requirements file for reproducing the analysis environment, e.g.
    │                         generated with `pip freeze > requirements.txt`
    │
    ├── setup.py           <- makes project pip installable (pip install -e .) so src can be imported
    ├── src                <- Source code for use in this project.
    │   ├── __init__.py    <- Makes src a Python module
    │   │
    │   ├── data           <- Scripts to download or generate data
    │   │   └── make_dataset.py
    │   │
    │   ├── features       <- Scripts to turn raw data into features for modeling
    │   │   └── build_features.py
    └── TensorFlow         <- TensorFlow pipeline for transfer learning

Follow the instructions in the README files in the TensorFlow folder to fine-tune the pre-trained models.

Project based on the cookiecutter data science project template. #cookiecutterdatascience